Essex Police suspend live facial recognition cameras after study reveals racial bias concerns

3 Sources

[1]

UK cops suspend live facial recog as study finds racial bias

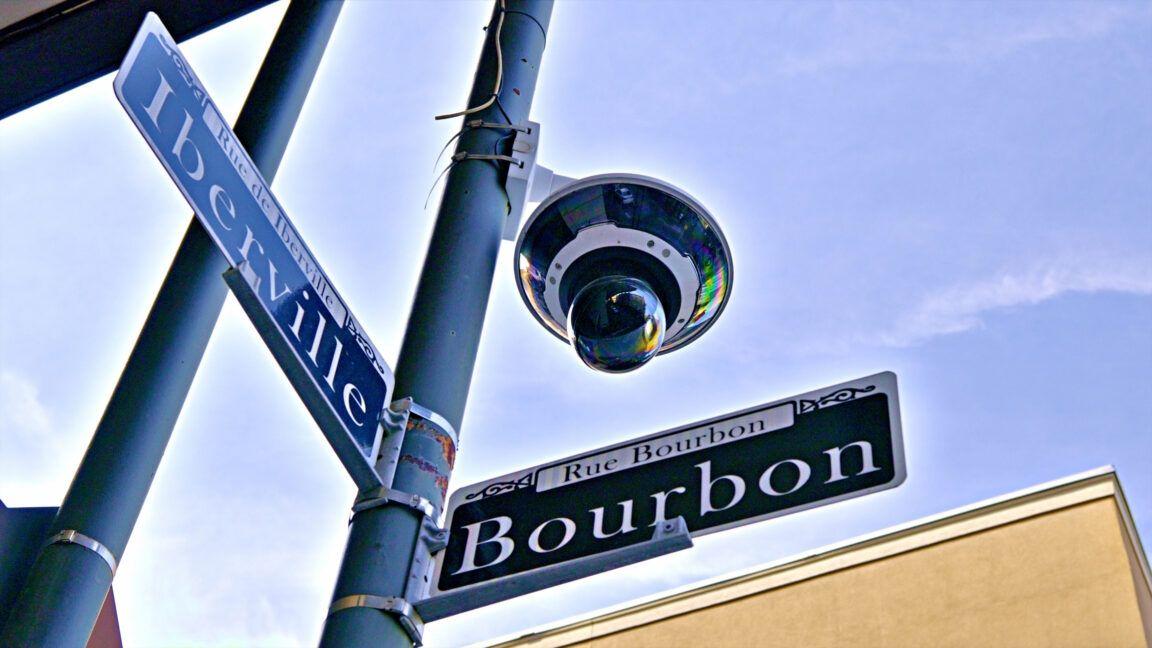

Cams statistically more likely to ID Black people, says new research

A UK police force has suspended its deployment of live facial recognition (LFR) technology after a study revealed it was statistically more likely to identify Black people on a watchlist database. Essex Police said it had paused use of the technology to update the system with the help of the algorithm software provider. Another similar study identified no bias, it said.

The report from Cambridge University researchers found the Essex police system was more likely to correctly identify men than women and was statistically significantly more likely to correctly identify Black participants than participants from other ethnic groups.

Police forces can use LFR to identify people on a pre-configured watchlist, usually made up of criminals, people of interest, or missing vulnerable individuals.

The study [PDF] used 188 volunteers to act as members of the public in a controlled field experiment during a real police deployment. Because the researchers knew exactly who was present, it was possible to measure both correct and missed identifications. It found that at the "current operational setting" used by Essex Police, the system correctly identified around half of the people on the watchlist who passed the cameras and that incorrect identifications were "extremely rare."

"Of the six false positive identifications observed in this test, four involved Black individuals. Given that observations of Black subjects constituted 536/2,251 (23.8 per cent) of the sample, the observed imbalance is unlikely to be due to chance alone but this could reflect the limited number of false positive events rather than a true systematic effect," it said. The finding should be treated as suggestive rather than conclusive, it added.

A spokesperson for Essex Police said that as part of a commitment to its Public Sector Equality Duty, it had commissioned two independent studies which were completed by academia.

"The first of these indicated there was a potential bias in the positive identification rate, while the second suggested there was no statistical relevant bias in the results.

"Based on the fact there was potential bias, the force decided to pause deployments while we worked with the algorithm software provider to review the results and seek to update the software."

The force added: "We then sought further academic assessment. As a result of this work, we have revised our policies and procedures and are now confident that we can start deploying this important technology as part of policing operations to trace and arrest wanted criminals. We will continue to monitor all results to ensure there is no risk of bias against any one section of the community."

Earlier this year, the British government decided the police in England and Wales should increase their use of live facial recognition (LFR) and artificial intelligence (AI) under wide-ranging government plans to reform law enforcement. In a white paper [PDF], the Home Office launched plans to fund 40 more LFR-equipped vans in addition to ten already in use. It said they would be used in "town centers and high crime hotspots" with the government planning to spend more than £26 million on a national facial recognition system and £11.6 million on LFR capabilities.

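As a rough check on the false positive figures quoted in source [1], the sketch below runs a one-sided binomial test: if each of the six false positives were equally likely to fall on any observation, how often would four or more involve Black subjects, who made up 536 of 2,251 observations? This is an illustrative calculation, not the method used in the Cambridge report. It assumes the observations are independent, which repeated passes by the same volunteers may violate, and the function name `binomial_upper_tail` is hypothetical.

```python
from math import comb


def binomial_upper_tail(k: int, n: int, p: float) -> float:
    """Return P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Figures quoted from the report: 536 of 2,251 observations were of Black
# subjects, and 4 of the 6 false positives involved Black individuals.
black_share = 536 / 2251

# Assumption: false positives are independent draws from the observations.
p_value = binomial_upper_tail(4, 6, black_share)

print(f"Share of observations: {black_share:.1%}")
print(f"P(4 or more of 6 by chance): {p_value:.3f}")
```

Under these assumptions the tail probability comes out at a little over 3 per cent. That fits the report's wording that the imbalance is unlikely to be due to chance alone, while six events remain too few to support a firm conclusion.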
[2]

Essex Police tweaks 'biased' facial recognition software

A police force has paused the use of live facial recognition (LFR) cameras after a study found it was statistically more likely to identify black people than other ethnic groups. Essex Police has used the technology since summer 2024, but the study identified "a potential bias in the positive identification rate". The force said that following updates to its algorithm and software, it was confident that LFR cameras could be deployed again. But campaign group Big Brother Watch said the technology was "authoritarian, inaccurate and ineffective in equal measure".

Essex Police said it commissioned two independent studies into its use of LFR - carried out by the University of Cambridge - with one of them indicating the potential bias. In that study, 188 volunteers acted as members of the public in a controlled field experiment during a real police deployment, with the system correctly identifying about half the people on the watchlist who passed the cameras. But the report said the system was more likely to correctly identify men than women, and "it was statistically significantly more likely to correctly identify Black participants than participants from other ethnic groups".

The second study - which analysed more than 40 deployments of LFR technology between August 2024 and February 2025 - found it had scanned approximately 1.3 million faces in public spaces. It said that officers intervened 123 times to speak to people and carried out 48 arrests - roughly one for every 27,000 faces scanned - and there was one confirmed mistaken intervention.

A spokesperson for Essex Police said it "decided to pause deployments while we worked with the algorithm software provider to review the results and seek to update the software". They added: "As a result of this work, we have revised our policies and procedures and are now confident that we can start deploying this important technology as part of policing operations to trace and arrest wanted criminals.

"We will continue to monitor all results to ensure there is no risk of bias against any one section of the community."

The BBC understands that Essex Police procured its own LFR system. The Home Office declined to comment.

The government announced in January it would increase the number of LFR vans across the country from 10 to 50, with the home secretary saying she made "no apology" for rolling the technology out to all police forces. "The [Metropolitan Police] has been using it for some time now. They've made 1,700 arrests off the back of using live facial recognition," Shabana Mahmood told the BBC.

But Jake Hurfurt, from Big Brother Watch, said it had warned that the use of LFR could "put the rights of thousands of people at risk". "LFR as a tool of general mass surveillance has no place in a democracy like Britain, but if police are going to use it the very least the public can expect is that it doesn't racially discriminate against people," he said. "AI surveillance that is experimental, untested, inaccurate or potentially biased has no place on our streets."

[3]

Essex Police pauses use of live facial recognition cameras due to racial bias concerns

The technology is seen by the government as a way of using AI to get dangerous individuals off the streets - but there are concerns over privacy and data retention.

Essex Police has paused the use of live facial recognition cameras (LFR) after a study found they identified more black people than other ethnic groups. The cameras are mounted on vans and designed to identify people on watchlists if they pass by. Thirteen forces were using it by the end of last year and the home secretary said in January that the number of LFR vans would increase from 10 to 50. However, Essex Police said it had paused use of LFR after "potential bias in the positive identification rate" - although it now believes the issue has been corrected by updating the algorithm.

Nearly 200 people were recruited by University of Cambridge researchers to test LFR during one of the force's deployments. They found it correctly identified around half of those on the watchlist, and that it was "extremely rare" for someone to be flagged up if they weren't on the list. But the study found it was "statistically significantly more likely" to correctly identify black people than other ethnicities. It was also "more likely" to spot men than women. Researchers said it raised "questions about fairness that require continued monitoring".

Essex Police told Sky News the study was one of two it commissioned - and the second suggested no bias - but that it had paused deployments to work "with the algorithm software provider" to update the system. It said "further academic assessment" had been done and it believed the system was ready to hit the streets again. "We have revised our policies and procedures and are now confident that we can start deploying this important technology as part of policing operations to trace and arrest wanted criminals," said a statement. "We will continue to monitor all results to ensure there is no risk of bias against any one section of the community".

'Real risk of unfairness'

Beyond concerns about identifying some groups more than others, the study also examined how LFR had worked so far for Essex Police. It said about 1.3 million faces had been scanned from August 2024 to February 2025, with 48 arrests, about one for every 27,000 faces. There was only one mistaken intervention. Researchers said different LFR systems and conditions could produce different results and that more testing is needed "to build a fuller understanding of the technology's performance".

As the government looks to ramp up use of the technology, questions over privacy and the huge number of images taken remain a key concern. The study said "proportionality, transparency and oversight" were vital in deciding when to use LFR, and the Information Commissioner's Office (ICO) is also scrutinising the technology. "All forces should also be conducting routine testing for bias and discriminatory outcomes - whether arising from technology design, training data, or watchlist composition," said the ICO. "Without this, there is a real risk of unfairness."

The Home Office said a person's image is "immediately and automatically" deleted if it does not match the watchlist and all deployments are "targeted, intelligence-led, time-bound, and geographically limited". It said more than 1,300 people suspected of serious crimes - including rape, domestic abuse and GBH - had been arrested in London thanks to LFR between January 2024 and September 2025.
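The deployment figures reported above can be turned into rates with simple arithmetic. The sketch below uses the approximate 1.3 million faces, 48 arrests and one mistaken intervention quoted in the coverage, plus the 123 interventions reported in source [2]. The variable names are illustrative, and because the face count is itself an approximation the results are only indicative.

```python
# Figures as reported for August 2024 to February 2025 (face count is approximate).
faces_scanned = 1_300_000
interventions = 123
arrests = 48
mistaken_interventions = 1

# How many faces were scanned for each arrest and each intervention.
faces_per_arrest = faces_scanned / arrests
faces_per_intervention = faces_scanned / interventions

# What share of interventions ended in an arrest, and what share were mistaken.
arrest_share = arrests / interventions
mistaken_share = mistaken_interventions / interventions

print(f"Faces per arrest:        {faces_per_arrest:,.0f}")
print(f"Faces per intervention:  {faces_per_intervention:,.0f}")
print(f"Interventions -> arrest: {arrest_share:.1%}")
print(f"Mistaken interventions:  {mistaken_share:.1%}")
```

The first ratio reproduces the "about one for every 27,000 faces" figure. The per-intervention shares are not stated in the coverage and follow only from dividing the reported counts.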

Essex Police has temporarily halted its live facial recognition technology after a Cambridge University study found it was statistically more likely to identify Black people than other ethnic groups. The force has worked with its algorithm software provider to update the system and now plans to resume deployments, even as the UK government pushes to expand LFR use from 10 to 50 vans nationwide.

Essex Police Halt Live Facial Recognition Amid Bias Findings

Essex Police has suspended deployment of live facial recognition cameras after a Cambridge University study found a potential racial bias in the system's positive identification rate [1]. The research found the facial recognition system was statistically significantly more likely to correctly identify Black participants than participants from other ethnic groups, raising questions about fairness in AI technologies in policing [2]. The force, which began using live facial recognition technology in summer 2024, commissioned two independent studies as part of its Public Sector Equality Duty obligations [1].

Source: BBC

Cambridge University Study Reveals Identification Rate Disparities

The Cambridge University study used 188 volunteers to act as members of the public in a controlled field experiment conducted during an actual police deployment [1]. Researchers found that at current operational settings, the facial recognition software correctly identified approximately half of the people on the watchlist who passed the cameras [2]. Incorrect identifications were extremely rare, but their distribution was uneven: of six false positive identifications, four involved Black individuals, although Black subjects accounted for only 536 of 2,251 observations, or 23.8 per cent of the sample [1]. The report cautioned that, with so few false positive events, this finding should be treated as suggestive rather than conclusive [1]. The system was also more likely to correctly identify men than women, pointing to more than one dimension of potential bias [3].

Algorithm Update and Software Provider Collaboration

Following the findings, Essex Police worked directly with its algorithm software provider to review results and implement updates to address the identified bias [1]. The force noted that while one study indicated potential bias in the positive identification rate, a second study analyzing more than 40 deployments between August 2024 and February 2025 suggested no statistically relevant bias [2]. During this period, the system scanned approximately 1.3 million faces in public spaces, leading to 123 police interventions and 48 arrests, roughly one arrest for every 27,000 faces scanned [2]. There was one confirmed mistaken intervention. After revising policies and procedures, Essex Police expressed confidence in resuming deployments of live facial recognition as part of operations to trace and arrest wanted criminals, while committing to continued monitoring to ensure no bias against any section of the community [1].

Government Plans to Expand LFR Despite Privacy Concerns

The suspension comes as the British government pushes to dramatically expand the use of live facial recognition across England and Wales. Earlier this year, the Home Office announced plans to increase LFR-equipped vans from 10 to 50, with deployments planned for town centers and high crime hotspots [1]. The government committed more than £26 million to a national facial recognition system and £11.6 million specifically for LFR capabilities [1]. Home Secretary Shabana Mahmood defended the expansion, noting that the Metropolitan Police had made 1,700 arrests using the technology [2]. By the end of last year, 13 forces were using live facial recognition technology. The Home Office maintains that images are immediately and automatically deleted if they don't match the watchlist, and that all deployments are targeted, intelligence-led, time-bound, and geographically limited.

Source: Sky News

Civil Liberties Groups Challenge Biased AI Surveillance

Campaign group Big Brother Watch strongly criticized the technology, calling it "authoritarian, inaccurate and ineffective in equal measure" [2]. Jake Hurfurt from the organization said that LFR as a tool of general mass surveillance has no place in a democracy like Britain, and that if police use it, the least the public can expect is that it does not racially discriminate [2]. The group argued that AI surveillance that is experimental, untested, inaccurate, or potentially biased has no place on British streets [2]. The Information Commissioner's Office has also weighed in, stating that all forces should conduct routine testing for bias and discriminatory outcomes arising from technology design, training data, or watchlist composition, and warning that without such testing there is a real risk of unfairness. The study itself said that proportionality, transparency, and oversight are vital in deciding when to deploy the technology. Researchers noted that different systems and conditions could produce different results and called for more testing to build a fuller understanding of the technology's performance.

Summarized by

Navi
