Facial recognition jails Tennessee grandmother for 6 months as AI misidentification cases mount

2 Sources

[1]

Facial recognition is jailing the wrong people, but police keep using it anyway -- Tennessee grandmother latest victim of AI-driven misidentification

Angela Lipps spent nearly six months behind bars after AI software misidentified her. She's at least the ninth American it's happened to. A Tennessee grandmother spent nearly six months in jail after police in Fargo, North Dakota, used facial recognition software to identify her as the primary suspect in a bank fraud case, according to reporting by WDAY News. The problem was that Angela Lipps, 50, had never been to North Dakota, and bank records confirmed she was more than 1,200 miles away at the time of the alleged crimes. Her case is the latest in a documented pattern of wrongful arrests driven by facial recognition technology deployed without adequate investigative follow-up. Fargo police were investigating a series of bank fraud incidents in April and May last year, in which a woman used a fake U.S. Army ID to withdraw tens of thousands of dollars. Detectives ran surveillance footage through facial recognition software, which returned a match to Lipps. A detective then compared her Tennessee driver's license and social media images to the suspect and concluded that she was the perpetrator based on facial features, body type, and hair. Nobody from the department contacted Lipps before U.S. Marshals arrested her at gunpoint on July 14 while she was babysitting four children. Lipps sat in a Tennessee county jail for 108 days before North Dakota officers collected her. Her attorney, Jay Greenwood, immediately requested her bank records, and when Fargo police finally met with Greenwood and Lipps on December 19, five months after her arrest, the records showed she had been buying cigarettes and depositing Social Security checks in Tennessee at the time police placed her in Fargo. The case was dismissed on Christmas Eve, but the damage had already been done; she had no money, no coat, and no way home, and subsequently lost her house, her car, and her dog. 
It's not unusual

Shockingly, this is just the latest in a series of structural failures that have led to innocent people being prosecuted for crimes they didn't commit. A January 2025 Washington Post investigation documented at least eight instances of Americans wrongfully arrested after police found a possible facial recognition technology (FRT) match, and in every case, investigators skipped fundamental steps like checking alibis and comparing physical descriptions that would have cleared the suspect before arrest. The facial recognition vendors themselves, such as Clearview AI, even attach explicit caveats to their systems. Clearview requires agencies to acknowledge that results "are indicative and not definitive" and that officers must conduct further research before acting on them. According to an April 2024 ACLU submission to the U.S. Commission on Civil Rights, in at least five of seven wrongful arrest cases, police had received explicit warnings that FRT results don't constitute probable cause but made arrests anyway. Robert Williams, whose 2020 wrongful arrest in Detroit was the first publicly reported FRT false-positive case, reached a landmark settlement with the city in June 2024 that now requires independent corroborating evidence before any FRT match can be used to seek an arrest warrant. However, only 15 states had enacted any FRT legislation covering law enforcement at the start of 2025, and North Dakota is not among them. As for Lipps, she is now back home in Tennessee, awaiting an apology from the Fargo Police Department that hasn't yet come.

[2]

Grandmother spent six months in jail after AI facial recognition misidentified her

Facial recognition systems aren't always accurate, and the consequences of these mistakes can be severe. One of the worst instances of AI getting things wrong is the case of Angela Lipps, a Tennessee grandmother who spent six months in jail after the technology linked her to a North Dakota bank fraud investigation. A team of US Marshals arrested 50-year-old Lipps in Tennessee at gunpoint last July while she was babysitting four young children, writes InForum. She was booked into the county jail in Tennessee as a fugitive from justice from North Dakota. But Lipps had never been to North Dakota in her life, and bank records later confirmed she was more than 1,200 miles away at the time of the alleged crime. Lipps remained in a Tennessee jail for nearly four months without bail while awaiting extradition. She was charged with four counts of unauthorized use of personal identifying information and four counts of theft. Fargo police records obtained by WDAY News show that the arrest was the result of an investigation into bank fraud cases in April and May 2025. Detectives reportedly reviewed surveillance video of a woman using a fake US Army ID to withdraw tens of thousands of dollars. By using facial recognition software, the detectives identified the woman as Lipps. One of the investigators compared Lipps' Tennessee driver's license and social media images to the suspect. The conclusion was that she appeared to match the real criminal based on facial features, body type and hairstyle. Lipps said no one from the Fargo police department contacted her before the arrest. She was held in a Tennessee county jail for 108 days before being transported to North Dakota.
Lipps' attorney, Jay Greenwood, requested her bank records, which proved she had been buying cigarettes and depositing Social Security checks in Tennessee at the time police said the fraud occurred in Fargo. Lipps was released from jail on Christmas Eve. She was stranded in Fargo with no money, coat, or way to get home. Local defense attorneys gave Lipps money to pay for a hotel room and food on Christmas Eve and Christmas Day. A local non-profit, the F5 Project, helped her return to Tennessee. As she could not pay her bills from jail, Lipps has lost her home, her car and even her dog. She said no one from the Fargo police department has even apologized for what happened. This is far from an isolated case. There have been several instances of Americans wrongfully arrested as a result of facial recognition incorrectly identifying them and detectives failing to check alibis or carry out further confirmation checks. In 2020, Robert Williams was arrested after his driver's license photo was flagged as a likely match to a man seen stealing designer watches from a store in 2018. During questioning, Williams was told he was being arrested based solely on the results of a facial recognition lineup. Williams was innocent, the city of Detroit later agreed to pay him $300,000, and Detroit stopped making arrests based only on facial recognition software results. AI doesn't just misidentify people. In October, a high school student was swarmed by police after an AI thought the Doritos bag he was holding was a gun.

Angela Lipps spent nearly six months behind bars after facial recognition software wrongly identified her in a North Dakota bank fraud case. She was arrested at gunpoint while babysitting, held without bail, and later cleared when bank records proved she was 1,200 miles away. This marks at least the ninth documented case of wrongful arrest caused by AI misidentification, revealing a troubling pattern in which law enforcement skips basic verification steps despite explicit warnings from AI vendors.

Facial Recognition Software Leads to Six-Month Wrongful Incarceration

Angela Lipps, a 50-year-old Tennessee grandmother, endured nearly six months behind bars after facial recognition technology incorrectly linked her to a bank fraud investigation in Fargo, North Dakota [1]. U.S. Marshals arrested her at gunpoint on July 14 while she was babysitting four children, charging her with four counts of unauthorized use of personal identifying information and four counts of theft [2]. The Angela Lipps case represents at least the ninth documented instance of wrongful arrest stemming from AI misidentification, exposing critical failures in how law enforcement deploys these systems.

Source: TechSpot

The Fargo Police Department was investigating a series of incidents from April and May where a woman used a fake U.S. Army ID to withdraw tens of thousands of dollars from banks [1]. Detectives ran surveillance footage through facial recognition software, which returned a match to Lipps. A detective then compared her Tennessee driver's license photo and social media images to the suspect, concluding she was the perpetrator based on facial features, body type, and hair [1]. Critically, no one from the department contacted Lipps before her arrest.

Bank Records Prove Innocence After Months of Wrongful Incarceration

Lipps spent 108 days in a Tennessee county jail without bail before North Dakota officers transported her for the bank fraud investigation [1]. Her attorney, Jay Greenwood, immediately requested bank records that would prove decisive. When Fargo police finally met with Greenwood and Lipps on December 19, five months after her arrest, the records showed she had been buying cigarettes and depositing Social Security checks in Tennessee at the time police placed her in Fargo, more than 1,200 miles away [1][2].

Source: Tom's Hardware

The case was dismissed on Christmas Eve, but the damage proved catastrophic. Lipps was released in Fargo with no money, no coat, and no way home [1]. Local defense attorneys provided funds for a hotel room and food on Christmas Eve and Christmas Day, while the F5 Project, a local non-profit, helped her return to Tennessee [2]. Unable to pay bills from jail, she lost her house, her car, and even her dog. The Fargo Police Department has yet to issue an apology.

Pattern of Police Use of Facial Recognition Without Proper Verification

A January 2025 Washington Post investigation documented at least eight instances of Americans experiencing wrongful arrest after police found a possible facial recognition match. In every case, investigators skipped fundamental steps like checking alibis and comparing physical descriptions that would have cleared suspects before arrest. This pattern of inaccurate AI identification reveals a systemic problem where law enforcement treats AI results as definitive rather than investigative leads requiring corroborating evidence.

Vendors like Clearview AI attach explicit caveats to their systems, requiring agencies to acknowledge that results "are indicative and not definitive" and that officers must conduct further research before acting. According to an April 2024 ACLU submission to the U.S. Commission on Civil Rights, in at least five of seven wrongful arrest cases, police had received explicit warnings that facial recognition results don't constitute probable cause but made arrests anyway.

Limited Legal Protections and the Robert Williams Precedent

Robert Williams, whose 2020 wrongful arrest in Detroit was the first publicly reported case of a facial recognition false-positive, reached a landmark settlement with the city in June 2024. Williams was arrested after his driver's license photo was flagged as a likely match to a man stealing designer watches in 2018, and during questioning was told the arrest was based solely on facial recognition results [2]. Detroit later agreed to pay him $300,000, and the city now requires independent corroborating evidence before any facial recognition match can be used to seek an arrest warrant.

However, only 15 states had enacted any facial recognition technology (FRT) laws covering law enforcement at the start of 2025, and North Dakota is not among them. This legislative gap leaves millions of Americans vulnerable to similar experiences. As facial recognition technology becomes more widespread in law enforcement operations, the absence of mandatory verification protocols and accountability measures raises urgent questions about civil liberties and due process. Lipps, wrongfully jailed for nearly six months, still awaits an apology that hasn't come, and her case underscores the human cost of deploying AI systems without adequate safeguards or investigative rigor.

Summarized by

Navi

[1]

Related Stories

Police Facial Recognition Use Raises Concerns Over Transparency and Accuracy

07 Oct 2024•Technology

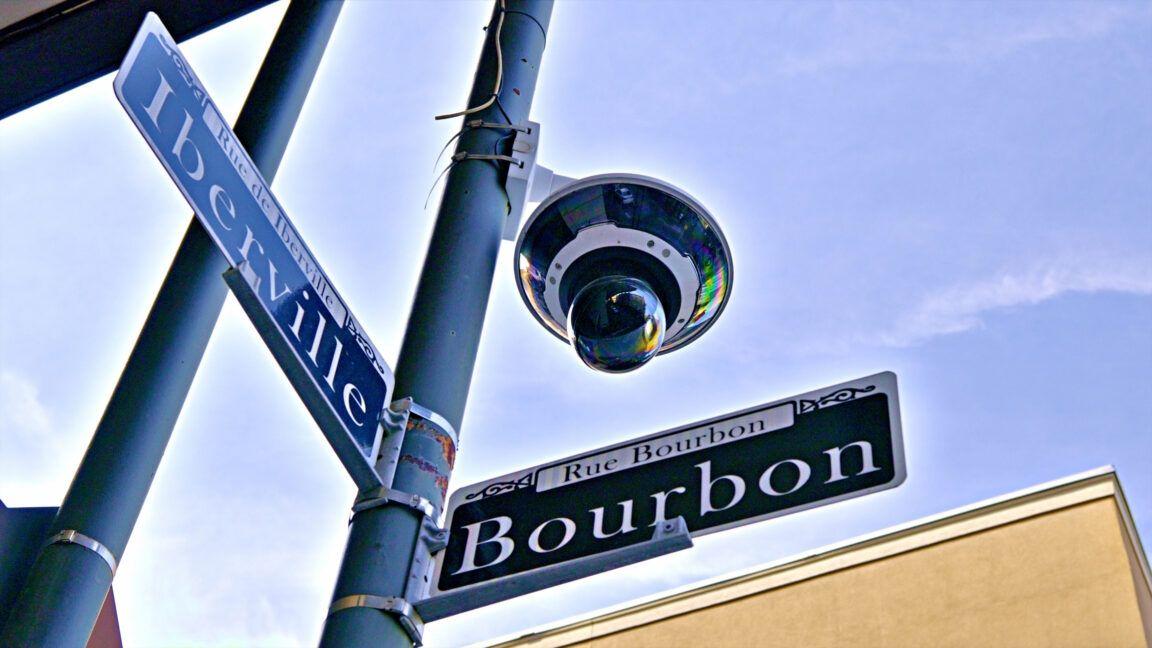

New Orleans Police Pause Controversial AI-Powered Facial Recognition Program Amid Privacy Concerns

20 May 2025•Technology

Arizona Police Deploy AI-Generated Sketches to Identify Suspects, Raising Bias Concerns

10 Dec 2025•Technology

Recent Highlights

1

Google Maps unveils Ask Maps with Gemini AI and 3D Immersive Navigation in biggest update

Technology

2

AI chatbots help plan violent attacks as safety guardrails fail, new investigation reveals

Technology

3

OpenAI secures $110 billion funding round as questions swirl around AI bubble and profitability

Business and Economy