Google Lens Introduces Voice-Activated Video Search and Enhanced Shopping Features

13 Sources

[1]

Google Lens Now Lets You Ask Questions With Only Your Voice

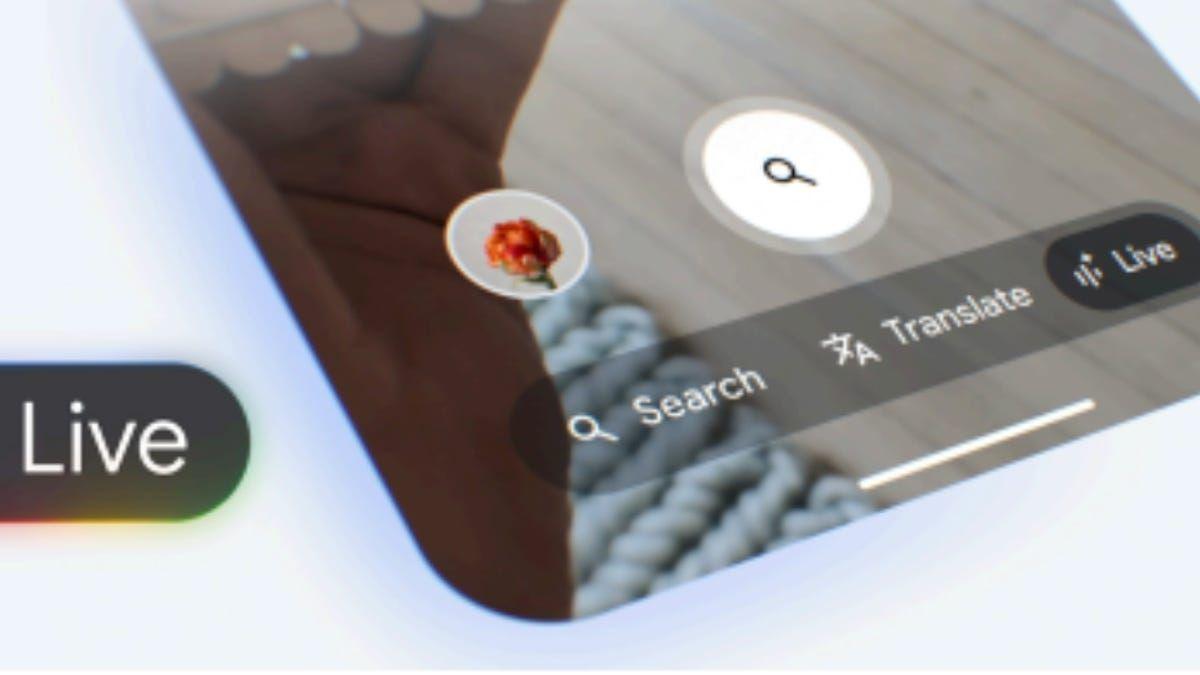

Google Lens now lets you ask questions about the world using only your voice following its latest update, which adds a bundle of new AI capabilities. Users can now point their camera at whatever interests them, hold down their phone's shutter button, and ask the app a question -- just as you would ask a close friend sitting next to you. You'll then be given AI-generated replies, alongside links to learn more, generated using Google's Gemini AI platform. You can check it out in the Google app now for English queries. Google Lens also added new "video understanding" capabilities, which it first previewed at Google I/O in May. This means you can take videos using Lens and have the app answer questions about any moving objects you see. For example, you can point your camera at a school of fish or a flock of birds, and Google Lens will give you an AI-generated overview that explains why they are swimming or flying together. This video option is available globally in the Android and iOS Google app for Search Labs users enrolled in the "AI Overviews and more" experiment. One of Google Lens' most popular real-world use cases until now has been retail therapy; people can use the app to find visually similar matches to items of clothing. For example, users can scan an image of a designer handbag from a magazine or TV series and find a much cheaper lookalike alternative elsewhere. According to Google, this feature is now receiving a hefty upgrade. Starting this week, users will get a more detailed results page that shows additional key information about the item of clothing they are looking for, including reviews, price info across different retailers, and where to buy. This year has seen Google release plenty of other innovative AI-search products, like its "Circle to Search" function. If something jumps out as interesting during a smartphone scrolling session, you can search for it by just highlighting or circling it with your finger on supported phones.

[2]

Google Lens Will Now Let You Search Using Your Voice

The voice search feature currently supports English language queries. Google Lens was recently updated with a short video feature that will allow users to upload a video and get a response to their queries via AI Overviews. However, this is not the only new update coming to the visual lookup feature. The Mountain View-based tech giant recently revealed that Google Lens would also be updated with a voice search feature. Meanwhile, shopping for similar products using the tool will also be easier with a significant update to how the results are shown to users. The tech giant explains that the voice search feature is similar to the short video feature, but it also works with photos. Users can point their camera towards anything they want to run a search for, hold the shutter button, and speak their query to have it answered by the AI. The voice input feature is currently available globally in the Google app for Android and iOS. However, at the moment it only supports English queries, according to Google. Alongside the voice search capability, the company is also making improvements to the shopping experience with Lens. When an image of a product is captured using the tool, it can find the exact same product and similar products listed online on various e-commerce websites. But now, Google is changing the results page to show more helpful content about the searched product. Google says users will now see key information about the product searched for, including reviews, price info across retailers and where to buy. The Google Lens feature now uses advanced AI models and the company's Shopping Graph to sort through a catalogue of 45 billion products to find the right information. Google Lens received a significant upgrade with the short video-based search functionality that was rolled out last week. With this feature, users can long press the shutter icon to record a roughly 20-second-long video which can then be processed by Gemini AI that powers the AI Overviews.
Google said that this feature will make it easier to capture an action or a moving object and ask queries about it.

[3]

You Can Now Search Google Via Video Thanks to New Lens Feature

Google Lens will now let people search the internet via video as well as still images thanks to a set of new features just announced. Video search lets users point their camera at an object, record it, ask a question about it, and the app will bring up search results. Google Lens is a separate app on Android while iOS users can access it via the Google app. The features use a customized version of the Gemini AI model which uses computer vision to identify what is in the video and has the ability to answer pertinent questions. "Let's say you want to learn more about some interesting fish," Lou Wang, director of product management for Lens, explains to the press via TechCrunch. "Lens will produce an overview that explains why they're swimming in a circle, along with more resources and helpful information." Users wanting to gain access to Lens' new video search feature must sign up for Google's Search Labs program and opt into the "AI Overviews and more" experimental features in Labs. While in the Google app, users can hold down the smartphone camera shutter button which will activate Lens' video capture mode. With the video recording, the user can ask a question and Lens will link out to an answer supplied by AI Overviews, the AI-generated summaries that have started appearing at the top of Google search results. Wang explains that the AI model powering Lens determines which frames from the video are interesting and which frames will help answer the questions being asked by the person. "All this comes from an observation of how people are trying to use things like Lens right now," Wang says. "If you lower the barrier of asking these questions and helping people satisfy their curiosity, people are going to pick this up pretty naturally." The ability to ask a question about still images has also been rolled out. Users can now take a photo by holding down the shutter button and ask a question which will return a relevant search result.
Another update to Lens is e-commerce-specific functionality. Now when Lens recognizes a product it will display more detailed information about it, such as how much it costs and any relevant deals, reviews, and stock. It is limited to select countries and specific shopping categories like electronics, toys, and beauty. "Let's say you saw a backpack, and you like it," Wang says. "You can use Lens to identify that product and you'll be able to instantly see details you might be wondering about."

[4]

Google Lens can now answer questions about videos

Google is upgrading its visual search app, Lens, with the ability to answer near-real-time questions about your surroundings. English-speaking Android and iOS users with the Google app installed can now start capturing a video via Lens, and ask questions about objects of interest in the video. Lou Wang, director of product management for Lens, said the feature uses a "customized" Gemini model to make sense of the video and pertinent questions. Gemini is Google's family of AI models, and powers a number of products across the company's portfolio. "Let's say you want to learn more about some interesting fish," Wang said in a press briefing. "[Lens will] produce an overview that explains why they're swimming in a circle, along with more resources and helpful information." To access Lens' new video analysis feature, you must sign up for Google's Search Labs program, as well as opt-in to the "AI Overviews and more" experimental features in Labs. In the Google app, holding your smartphone's shutter button activates Lens' video-capturing mode. Ask a question while recording a video, and Lens will link out to an answer supplied by AI Overviews, the feature in Google Search that uses AI to summarize information from around the web. According to Wang, Lens uses AI to determine which frames in a video are most "interesting" and salient -- and above all, relevant to the question being asked -- and uses these to "ground" the answer from AI Overviews. "All this comes from an observation of how people are trying to use things like Lens right now," Wang said. "If you lower the barrier of asking these questions and helping people satisfy their curiosity, people are going to pick this up pretty naturally." The launch of video for Lens comes on the heels of a similar feature Meta previewed last month for its AR glasses, Ray-Ban Meta. 
Meta plans to bring real-time AI video capabilities to the glasses, letting wearers ask questions about what's around them (e.g., "What type of flower is this?"). OpenAI has also teased a feature that lets its Advanced Voice Mode tool understand videos. Eventually, Advanced Voice Mode -- a premium ChatGPT feature -- will be able to analyze videos in real time and take context into account as it answers you. Google has beaten both companies to the punch, it seems -- minus the fact that Lens is asynchronous (you can't chat with it in real time), and assuming that the video feature works as advertised. We weren't shown a live demo during the press briefing, and Google has a history of overpromising when it comes to its AI's capabilities. Aside from video analysis, Lens can also now search with images and text in one go. English-speaking users, including those not enrolled in Labs, can launch the Google app and hold the shutter button to take a photo, then ask a question by speaking out loud. Finally, Lens is getting new e-commerce-specific functionality. Starting today, when Lens on Android or iOS recognizes a product, it'll display information about it, including the price and deals, brand, reviews, and stock. Product ID works on uploaded and newly-snapped photos (but not videos) and it is limited to select countries and certain shopping categories, including electronics, toys and beauty, for now. "Let's say you saw a backpack, and you like it," Wang said. "You can use Lens to identify that product and you'll be able to instantly see details you might be wondering about." There's an advertising component to this, too. The results page for Lens-identified products will also show "relevant" shopping ads with options and prices, Google says. Why stick ads in Lens? Because roughly 4 billion Lens searches each month are related to shopping, per Google. For a tech giant whose lifeblood is advertising, it's simply too lucrative an opportunity to pass up.

[5]

Google Lens Can Now Record a Video and Answer Queries Based on It

Google Lens is getting a significant update and will now support video recording. Users can use this feature to capture videos about objects and places they would like to run a quick web search about. The feature was first unveiled at Google I/O earlier this year, and it uses the capabilities of the company's in-house artificial intelligence model, Gemini. The feature is also integrated with AI Overviews, so it might not be available in the regions where the AI-powered search experience is not available. Gadgets 360 staff members spotted the feature within the Google Lens interface. Earlier, Google Lens would only let users capture an image and offer tools such as translation, image search, and homework, which can look up a mathematical problem and find a solution for it online. While the feature was useful for quickly translating street signs in a foreign language and looking up the name of a flower, it also had limitations. Users could not search for a moving object or run a detailed query about something. For instance, you could not snap a picture of a table and ask about the type of wood used for it. However, at Google I/O, the Mountain View-based tech giant unveiled a solution for it in the form of short video recording capability. Google Lens with video now allows users to record a roughly 20-second-long video and add a verbal prompt for the video. Once the recording is done, Google Lens uses Gemini-powered AI Overviews to run a search for it using the prompt spoken in the video. AI Overviews then processes the data using computer vision and provides a response. In our testing, the feature was able to accurately identify moving objects and describe their colour and shape, as well as the material used for them. This feature is currently rolling out to all users who have access to Google Lens and live in a region where AI Overviews is available. Using the feature is simple. Just open the Google Lens interface, and in the Search mode, long press the capture icon.
The interface automatically begins recording a video. The user, at this point, can say what they want to know about the video. Once done, Google Lens automatically opens Search within the Google app and AI Overviews begins generating a response. The response usually shows up within two to three seconds.

[6]

New Google Lens update lets you ask questions in video form

Key Takeaways: Google Lens now allows users to record a short video with a voice or text query for quick answers. Results are generated through Google's AI Overviews feature in Search, and accuracy can vary sometimes. This new feature can be accessed through the Google app or from the dedicated Lens app in the Play Store. Pixel users can also trigger Lens via the persistent search bar on the home screen.

Google Lens is a handy tool for getting quick answers about practically anything and everything you see, as well as real-time text translation, all accessible in a couple of taps. During this year's I/O dev conference, Google talked about a new Lens feature that would let users record and upload a short video while asking a relevant question about an item. This feature was then spotted in development within the Google app for Android back in June. Nearly four months have passed since then, and Google has finally flipped the switch on this minor but useful feature. Writing on his Telegram channel, Android expert and AP contributor Mishaal Rahman revealed that the ability to record a short video with a voice or text-based query is now live in Google Lens. There aren't too many changes to the functionality since we last saw it in action in June, with users simply required to open Google Lens, tap Search with your camera, and long press the camera shutter button (magnifying glass) to start recording. You can speak as the video is recorded or type in the text post-recording. Although this arrangement isn't ideal, it appears to be the fastest way to get info on something around you. As Rahman notes in his post, results are generated through Google's AI Overviews, provided it's supported in your location.

Not quite perfect, but it gets the job done

The feature appears to be rolling out widely, so I could try it on my Android smartphone. However, accuracy is still not a hundred percent, and it can get some things slightly off. For instance, I pulled up an Apple iPhone 11 and asked Lens what phone it was, only to be told by AI Overviews that it was the iPhone XR. However, recording a slightly longer video with all angles covered achieved the desired result. Similarly, Rahman also points out that the smartwatch in his video is the OnePlus Watch 2, while Lens appears to think it's the Watch 2R. But this could be down to the fact that very little of it was shown in the video, he added. Regardless of these little quirks, there's no denying that this is a handy tool to get quick answers about things or places in your surroundings. There's currently no way to ask Lens about a recorded video from your phone's media gallery. If you own a Pixel device running the stock launcher, you will find a Google Lens icon in the persistent search bar at the bottom of the home screen. It's accessible via the Google app's search bar, too, while you can also get the Google Lens app from the Play Store app to uncomplicate things a little.

[7]

Google Lens streamlines voice input and tests video search

According to Google, "Lens queries are now one of the fastest growing query types on Search" with 20 billion visual searches every month. Google Lens is now rolling out the voice and video search capabilities announced at I/O 2024. Google Lens already lets you take a picture and then add a specific text query if the general results don't suffice. Voice input for Lens streamlines this process by letting you long-press and speak your question. Lens captures the frame and prompts you to "Speak now to ask about this image." After letting go of the shutter, the transcribed question and image are submitted at the same time to generate the AI Overview. Google Lens voice input is live globally for English queries. Video understanding takes this a step further by letting you ask "questions about the moving objects that you see." Per Google, its systems "will make sense of the video and your question together to produce an AI Overview, along with helpful resources from across the web." Available by joining the "AI Overviews and more" experiment in Search Labs, long-pressing records up to 20 seconds and prompts you to "Speak now to ask about this video." This is available for English queries in Google Lens on Android and iOS. Meanwhile, Lens (and Circle to Search) shopping queries that identify a product will now include reviews, pricing, retail availability, current deals, and other key information, as well as "relevant shopping ads." Google is rolling out this new experience today for Android and iOS devices in select countries, starting with top holiday shopping categories like toys, electronics and beauty. Finally, Google says the Lens-adjacent Circle to Search is now available on over 150 million Android devices.

[8]

Google Lens now lets you search with video

If you can't capture what you want to search for with just a picture, Google Lens will now let you take a video -- and even use your voice to ask about what you're seeing. The feature will surface an AI Overview and search results based on the video's contents and your question. It's rolling out in Search Labs on Android and iOS today. Google first previewed using video to search at I/O in May. As an example, Google says someone curious about the fish they're seeing at an aquarium can hold up their phone to the exhibit, open the Google Lens app, and then hold down the shutter button. Once Lens starts recording, they can say their question: "Why are they swimming together?" Google Lens then uses the Gemini AI model to provide a response.

[9]

You can now ask Google Lens questions about your photos and videos

Google demoed a new video search feature for Google Lens at I/O this year that can answer questions about recorded clips. The feature recently rolled out to some users, and Google has now officially announced wider availability along with a couple of other nifty upgrades for Lens, Search, and AI Overviews. With the new video search feature, you can use Google Lens to capture a video with your phone while asking a question about moving objects in the frame. Google says that the feature will "make sense of the video and your question together to produce an AI Overview, along with helpful resources from across the web." The ability to ask questions won't be limited to video content, and voice search will also be available when you take a photo with Google Lens.

[10]

Google Lens Now Supports Video Search and Voice Input

Google today shared several updates to the Google Lens search feature that's available in the Google app for iOS. Google is adding support for asking questions about videos, so that users can get information about moving objects. For example, if you're at an aquarium and want to learn more, you can use Google Lens to capture a short video of a fish and get details about it. According to Google, Lens can interpret the video and the question, using the information to provide an AI Overview with additional web links to learn more. The video feature can be used by opening Lens in the Google app and holding down the shutter button to record a video while also asking a question out loud. Google has also added support for asking a voice-based question when taking an image with Lens. Users can point the camera, hold the shutter button, and ask a question. For those who use Google Lens to find visually similar images for shopping purposes, Google says results will be "dramatically more helpful" with information about the product you want to buy, including price info across retailers and where to get it. In addition to these Google Lens changes, Google is rolling out search results pages organized by AI in the United States. AI results will show first for recipes and meal inspiration on mobile devices, with research results showing relevant results organized by different recipe options, ingredients, and more. A new look for AI Overviews is rolling out, with the updated design showing prominent links to supporting webpages within the text of an AI Overview. Google says that this layout better drives traffic to supporting websites. Google is also adding ads to AI Overviews, and the company says that people find ads in AI Overviews helpful because "they can quickly connect with relevant businesses, products and services." The updated Google Lens features are rolling out to iOS devices starting today, as are the search changes.

[11]

See it, record it, search it: Google Lens video search feature starts rolling out

Users can record a short video and ask questions about it; Google Lens will provide relevant search results, including AI-generated responses in supported regions. Google seems to have started rolling out the new video search feature, allowing users to find video content based on clips they capture. The feature aims to enhance the way users discover and interact with their surroundings. Google announced the feature at I/O 2024, and earlier this month, Android Authority activated and demoed it, showcasing its capabilities. Now, our contributor, Mishaal Rahman, is reporting that the feature is appearing for more users. Essentially, you can now fire up Google Lens and record a short video of something that you want to know more about. You'll be able to ask questions about the recorded clip and Google will show you relevant search results about the subject in the video. In regions where AI Overviews are supported, users will see an AI-generated response. To use the new Google Lens video search feature, first open Google Lens and hold down the shutter button. During this time, you can start vocally asking your question about the subject in the video. With this rollout, Google Lens is yet again making information discovery easier and bridging the gap between still images and dynamic video content.

[12]

Google Lens video search starting to roll out now -- this is a huge upgrade

It appears that Google is slowly rolling out the Search with Video feature for Google Lens that it originally announced during the Google I/O event. Google has massively improved Google Lens since its original release in 2017. For instance, there's the recent addition of generative artificial intelligence which shows a full AI-generated response to uploaded images. Then there's Google Lens' integration into the hugely popular Circle to Search, which is also constantly adding new features. Now a recent post on X says that the Search with Video feature has started to roll out to Android devices. The post comes from Mishaal Rahman, via Android Authority, who states that he was able to get the new search with video feature to work on his device. Sadly, I was not able to get it to work on my Galaxy Z Fold 5. Search with Video allows users to record a short clip on Google Lens and ask questions about the content, with Lens offering search results based on the questions. If users live in an area where AI Overviews are available, then the answers will be AI-generated. The feature is easy to find. All users need to do is enter Google Lens and then hold down the shutter button. This allows your phone to record video and gives you time to ask questions. In theory, this should add more to the average user's arsenal when trying to find answers to a question where a picture just isn't enough. For instance, you could request information on a fault for a product that only happens when it is running. The addition of video isn't the only update that Google has added to Google Lens. Only recently we saw the implementation of Search With Voice. Interestingly, this feature is also accessed by holding down the shutter button, so it might mean that searching with a still image becomes less common. Meanwhile, Apple fans shouldn't despair as Apple Intelligence promises to be able to turn their iPhone 16's camera into a visual search engine as well.
Google is constantly working to improve and integrate its features into some of the best Android phones and it is always appreciated. Let us know if you were able to activate the feature on your Android device.

[13]

You can sign up to try the new Google Lens video search right now

Key Takeaways: Google Lens now supports video search for Search Labs participants. You can record video and ask questions, but accuracy of answers may vary. Google Lens product manager says Google's different visual search products are distinct because people use them for different things.

Back in June, we got our first glimpse of upcoming Google Lens functionality that lets you search not only using photos, but also videos. Then, earlier this week, some users got access to that new option with very little fanfare -- Android sleuth Mishaal Rahman broke the news on his Telegram channel, and some of us here at AP found that we could use Lens for video search shortly thereafter. Today, Google's finally officially announcing the feature. While Google's announcement doesn't contain much in the way of new info about video search itself, it does reveal how to access it: video search in Google Lens is currently a Search Labs exclusive feature. If you're curious to try video search out but you're not already enrolled, it's easy to get in.

New multimodal Lens features are official

For users enrolled in Search Labs' AI Overviews and more experiment, searching with video in Lens is very simple: tap the Lens icon in the Google search bar, point your camera at what you want to ask about, and press and hold the shutter button. As you're recording, you can ask questions about the subject of the video. Google will try to parse the video and your question about it, then deliver an AI-powered summary of the answer. The process is surprisingly snappy, but it's not always fully accurate; in Mishaal's example, the watch he scanned into Lens was actually a OnePlus Watch 2, but Lens identified it as a OnePlus Watch 2R. That's an easy enough mistake to make, though; the two watches are very similar. If you're not a Search Labs participant, pressing and holding the shutter button won't record a video, but will instead snap a photo, then record audio while your finger is held down so you can ask questions out loud. The end result is similar: you'll get an AI-generated answer to your question.

How to sign up for Search Labs

To get in on Lens's new video capabilities, you'll need to sign up for Search Labs, then opt into the AI Overviews and more experiment. The new Lens video option is available in the Google app on Android and iOS.

Google demoed multimodal functionality in Gemini at I/O

Google showed off some Gemini-powered multimodal video capabilities at I/O earlier this year. While this new Lens feature is much more limited in scope than the wide-ranging Project Astra demos I got to try, it still feels like Google's visual search efforts -- across Lens, Circle to Search, and Gemini -- have a lot of overlap. I asked Lens product manager Harsh Kharbanda about this apparent redundancy earlier this week, and he said that Google's various visual search products are distinct largely because of the different ways people tend to use them. "People use these visual search products in different ways, and they have sort of slightly different mental models," Kharbanda told me. "So for (Lens), generally people ask questions about things in front of them. A plant that's dying, a coin that they found in the house, and they're like, hey how much is it worth, and so forth. For Circle to Search, almost all questions are about things on your screen. An Instagram influencer is sporting a new backpack and you're like, hey, like I want to know more about that." "And in fact, in Gemini, a lot of the questions are more about creative collaboration. You know, like, what other artwork can my toddler do?" Kharbanda continued. "And so I think at this point, the way users are using these products is distinct enough that it makes sense to people to actually have these different entry points and different products across their journey and their phone."

Google has rolled out significant updates to its Lens app, including voice-activated video search capabilities and improved shopping features, leveraging AI technology to enhance user experience and product information retrieval.

Voice-Activated Video Search

Google has introduced a groundbreaking feature to its Lens app, allowing users to search the internet using video and voice commands. This new capability enables users to point their camera at an object, record a short video, and ask questions about it verbally [1]. The app then processes the query using Google's Gemini AI platform to provide AI-generated replies and relevant links [2].

AI-Powered Video Understanding

The update includes "video understanding" capabilities, which allow users to take videos of moving objects and receive AI-generated overviews explaining the observed phenomena. For instance, users can capture footage of fish swimming in circles and receive an explanation for this behavior [3].

Enhanced Shopping Experience

Google Lens has also received a significant upgrade to its shopping features. When users scan an image of a product, the app now provides a more detailed results page, including key information such as reviews, price comparisons across different retailers, and purchase options [1]. This enhancement is powered by advanced AI models and Google's Shopping Graph, which catalogs over 45 billion products [2].

Technology Behind the Features

The new features are powered by a customized version of Google's Gemini AI model, which utilizes computer vision to identify objects in videos and images [4]. The AI determines which video frames are most relevant to the user's question and uses these to ground the answers provided by AI Overviews [4].

Availability and Access

The voice search feature is available globally in the Google app for Android and iOS, currently supporting English queries [2]. To access the video search feature, users must sign up for Google's Search Labs program and opt into the "AI Overviews and more" experimental features [3].

Implications for E-commerce

The new shopping-related features in Google Lens have significant implications for e-commerce. With approximately 4 billion Lens searches per month related to shopping, Google is capitalizing on this trend by integrating relevant shopping ads within the results page for Lens-identified products [4].

Future of Visual Search

These updates to Google Lens represent a significant step forward in visual search technology. By combining video capture, voice commands, and AI-powered analysis, Google is lowering the barrier for users to satisfy their curiosity about the world around them [5]. As this technology continues to evolve, it may reshape how people interact with and learn about their environment through their smartphones.

References

Summarized by

Navi

[1]

[2]

[4]
