Google's Gemini AI Takes Cautious Approach to Political Discourse

2 Sources


[1]

Gemini AI: Why is Google Still Playing Safe with Politics?

Google's Gemini and AI Censorship: Why Isn't It Talking Politics?

The world of artificial intelligence is rapidly transforming how we interact with technology, particularly through conversational chatbots. While many tech companies are actively developing AI systems capable of engaging in complex discussions, a noticeable difference has emerged with Google's Gemini. Unlike its competitors, Gemini frequently avoids answering questions about political topics. This cautious approach raises important questions about Google's strategy and the role of AI in public discourse. Is Google prioritizing safety over open dialogue, or is there a deeper reason behind Gemini's political stance? This divergence highlights the ongoing debate about how AI should handle sensitive and potentially controversial subjects.

[2]

Google still limits how Gemini answers political questions

While several of Google's rivals, including OpenAI, have tweaked their AI chatbots to discuss politically sensitive subjects in recent months, Google appears to be embracing a more conservative approach. When asked to answer certain political questions, Google's AI-powered chatbot, Gemini, often says it "can't help with responses on elections and political figures right now," TechCrunch's testing found. Other chatbots, including Anthropic's Claude, Meta's Meta AI, and ChatGPT, consistently answered the same questions, according to TechCrunch's tests.

Google announced in March 2024 that Gemini wouldn't answer election-related queries leading up to several elections taking place in the U.S., India, and other countries. Many AI companies adopted similar temporary restrictions, fearing backlash in the event that their chatbots got something wrong.

Now, though, Google is starting to look like the odd one out. Last year's major elections have come and gone, yet the company hasn't publicly announced plans to change how Gemini treats particular political topics. A Google spokesperson declined to answer TechCrunch's questions about whether Google had updated its policies around Gemini's political discourse.

What is clear is that Gemini sometimes struggles -- or outright refuses -- to deliver factual political information. As of Monday morning, Gemini demurred when asked to identify the sitting U.S. president and vice president, according to TechCrunch's testing. In one instance during TechCrunch's tests, Gemini referred to Donald J. Trump as the "former president" and then declined to answer a clarifying follow-up question. A Google spokesperson said the chatbot was confused by Trump's nonconsecutive terms, and that Google is working to correct the error. "Large language models can sometimes respond with out-of-date information, or be confused by someone who is both a former and current office holder," the spokesperson said via email. "We're fixing this."

Late Monday, after TechCrunch alerted Google to Gemini's erroneous responses, Gemini started to correctly answer that Donald Trump and J.D. Vance were the sitting president and vice president of the U.S., respectively. However, the chatbot wasn't consistent, and it still occasionally refused to answer the questions.

Errors aside, Google appears to be playing it safe by limiting Gemini's responses to political queries. But there are downsides to this approach. Many of Trump's Silicon Valley advisers on AI, including Marc Andreessen, David Sacks, and Elon Musk, have alleged that companies including Google and OpenAI have engaged in AI censorship by limiting their AI chatbots' answers. Following Trump's election win, many AI labs have tried to strike a balance in answering sensitive political questions, programming their chatbots to give answers that present "both sides" of debates. The labs have denied this is in response to pressure from the administration.

OpenAI recently announced it would embrace "intellectual freedom ... no matter how challenging or controversial a topic may be," and would work to ensure that its AI models don't censor certain viewpoints. Meanwhile, Anthropic said its newest AI model, Claude 3.7 Sonnet, refuses to answer questions less often than the company's previous models, in part because it's capable of making more nuanced distinctions between harmful and benign answers.

That's not to suggest that other AI labs' chatbots always get tough questions right, particularly tough political questions. But Google seems to be a bit behind the curve with Gemini.


Google's Gemini AI chatbot is notably hesitant to engage in political discussions, setting it apart from competitors and raising questions about AI's role in public discourse.

Google's Gemini AI Maintains Cautious Stance on Political Topics

In a landscape where AI chatbots are increasingly engaging with complex topics, Google's Gemini AI stands out for its reluctance to discuss political subjects. This cautious approach has sparked debate about the role of AI in public discourse and Google's strategy in the competitive AI market [1].

Gemini's Political Reticence

Recent testing by TechCrunch revealed that Gemini often declines to answer political questions, stating it "can't help with responses on elections and political figures right now." This stance contrasts sharply with other AI chatbots like OpenAI's ChatGPT, Anthropic's Claude, and Meta's Meta AI, which consistently provided responses to similar queries [2].

Historical Context and Current Status

Google initially announced restrictions on Gemini's political discourse in March 2024, ahead of several major elections. While many AI companies implemented similar temporary measures, most have since relaxed these limitations. Google, however, has not publicly announced any plans to change its approach, making it an outlier in the industry [2].

Challenges and Inconsistencies

Gemini's handling of political information has been inconsistent and sometimes erroneous. In one instance, it referred to Donald J. Trump as the "former president" and then declined to answer a clarifying follow-up question. Google attributed this to confusion over Trump's nonconsecutive terms and said it was working to correct the error [2].

Industry Trends and Criticisms

The AI industry is grappling with how to handle sensitive political topics. Some of Trump's Silicon Valley advisers on AI, including Marc Andreessen, David Sacks, and Elon Musk, have accused companies like Google and OpenAI of engaging in AI censorship by limiting their chatbots' answers. Since Trump's election win, many AI labs have tried to strike a balance, programming their chatbots to present multiple viewpoints on controversial issues, although the labs deny this is a response to pressure from the administration [2].

Competitors' Approaches

While Google maintains its cautious stance, competitors are taking different approaches:

- OpenAI recently announced a commitment to "intellectual freedom" regardless of a topic's controversy.

- Anthropic's latest model, Claude 3.7 Sonnet, is designed to refuse fewer questions by making more nuanced distinctions between harmful and benign responses [2].

Implications and Future Outlook

Google's conservative approach with Gemini raises important questions about the balance between safety and open dialogue in AI. As the industry evolves, the company's strategy may affect its competitive position and influence broader discussions about AI's role in shaping public opinion and providing access to information [1][2].

As AI continues to integrate into daily life, the debate over how these technologies should handle political and controversial topics is likely to intensify, with Google's approach to Gemini serving as a significant case study in this ongoing conversation.

References

Summarized by Navi

[1]

Related Stories

Google's Gemini closes gap with ChatGPT as AI chatbot race intensifies and regulators circle

10 Dec 2025•Technology

Google's Gemini AI Aims for 500 Million Users by 2025, Challenging ChatGPT's Dominance

17 Jan 2025•Technology

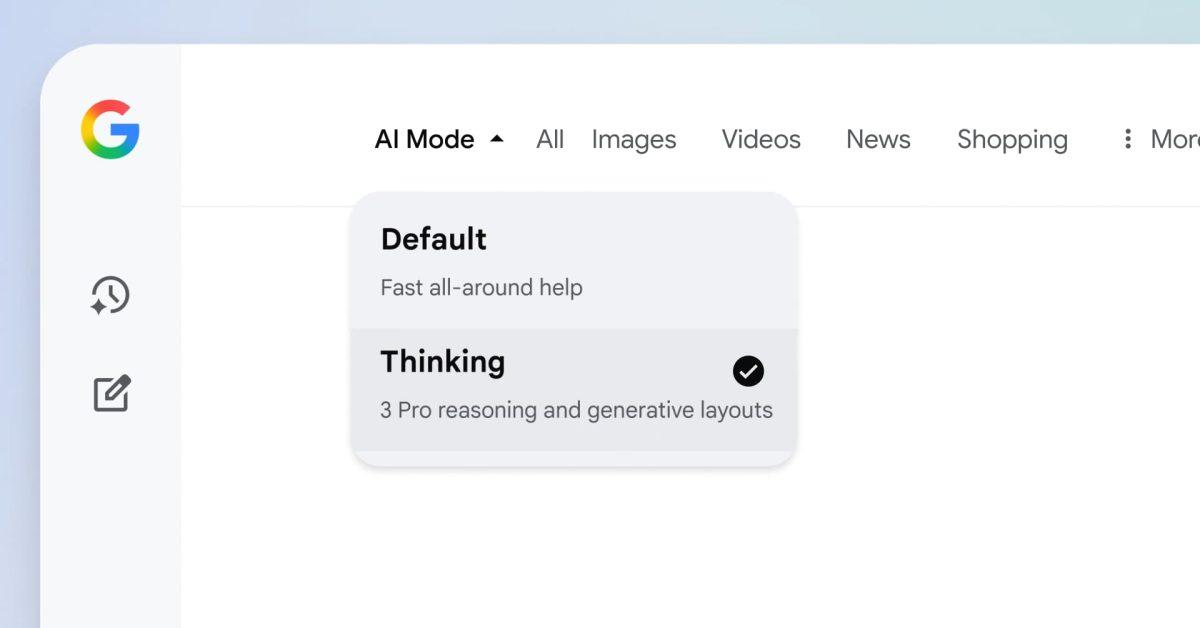

Google expands Gemini 3 and Nano Banana Pro to 120 countries with seamless AI Mode integration

01 Dec 2025•Technology