Meta unveils four MTIA chip generations to power AI inference and reduce Nvidia dependence

7 Sources

7 Sources

[1]

Meta's new MTIA lineup joins hyperscalers' unified push for dedicated inferencing chips -- companies diversify AI chips in effort to diversify from sole reliance on Nvidia

Google, AWS, Microsoft, and Meta have all independently reached the same conclusion. Meta announced four successive generations of its custom Meta Training and Inference Accelerator (MTIA) chips on March 11: The MTIA 300, 400, 450, and 500, all scheduled for deployment over the next two years. Meta described the chips as progressively optimized for AI inference workloads on the premise that HBM memory bandwidth is the binding constraint on inference. Coming two weeks after Meta disclosed a long-term AI infrastructure with AMD, the announcement puts Meta alongside Google, AWS, and Microsoft, each of which has spent the last few years building and scaling custom silicon programs for AI accelerated workloads. Will this emerging class of chips put a dent in Nvidia's stranglehold on the AI chip industry? An inference case against GPUs In a technical blog post published alongside the announcement, Meta described HBM's bandwidth as the most important factor affecting AI inference performance, adding that mainstream chips, built for large-scale pre-training, are then applied less cost-effectively to inference workloads. "We doubled HBM bandwidth from MTIA 400 to 450, making it much higher than that of existing leading commercial products," it reads. The MTIA 500 then increases HBM bandwidth again by an additional 50% compared with the MTIA 450. Both chips are optimized primarily for AI inference but can be applied to other workloads, including training as a secondary use case. The MTIA 300 is already in production for ranking and recommendations training. Meanwhile, the MTIA 400 -- which features a 72-accelerator scale-up domain and performance -- has completed lab testing and is on the path to data center deployment. The 450 and 500 are scheduled for mass deployment in early 2027 and later in 2027, respectively. Across the full 300-to-500 progression, HBM bandwidth increases 4.5 times and compute FLOPs increase 25 times, with the MTIA 450's HBM bandwidth exceeding that of existing leading commercial products, while the MTIA 500 adds another 50% on top, along with up to 80% more HBM capacity. According to Meta, the chips use a modular chiplet architecture that allows the MTIA 400, 450, and 500 to share the same chassis, rack, and network infrastructure. That compatibility means each new chip generation drops into the existing physical footprint without requiring new data center buildouts, the mechanism Meta cited for its roughly six-month development cadence, well faster than the industry's typical one-to-two year cycle. "More importantly, we have deployed hundreds of thousands of MTIA chips in production, onboarded numerous internal production models, and tested MTIA with large language models (LLMs) like Llama." Google, AWS, and Microsoft Google announced Ironwood, its seventh-generation TPU, at Google Cloud Next in April 2025; the company described it as the first TPU purpose-built for inference and the beginning of an "age of inference," distinct from the training-first era that preceded it. Ironwood delivers 192 GB of HBM3E per chip at 7.37 TB/s of memory bandwidth, per Google's published specifications, and scales to configurations of up to 9,216 AI accelerators. Then, in December at re:Invent, AWS announced Trainium3, a 3nm chip with 144 GB HBM3E per chip at 4.9 TB/s bandwidth, with a single Trainium3 UltraServer connecting 144 chips. AWS has also maintained a separate Inferentia product line -- a chip dedicated exclusively to inference -- since 2019. Meanwhile, Microsoft introduced its Maia 200 for inference workloads built on TSMC 3nm, which it called its "most efficient inference system." Broadcom is what's connecting the dots across many of these programs, having had a hand in building both Google's TPUs (as the company's silicon integrator) and Meta's MTIA family. Meta described the MTIA chips as being developed "in close partnership with" Broadcom, and said that the company "has remained and will continue" to be a key partner of Meta's AI infrastructure strategy. Broadcom also notably secured an agreement back in October to help OpenAI build 10 GW of custom ASICs, with deployments beginning as early as this year. If nothing else, the role that Broadcom now plays across competing hyperscaler programs reflects both how capital-intensive custom silicon development is and how consistent the underlying architectural requirements have become. This convergence continues with software stacks, with Meta building MTIA natively on PyTorch, vLLM, and Triton. Google also added TPU support for vLLM in beta, and AWS runs its Neuron SDK across PyTorch, TensorFlow, and JAX. These shared inference-serving frameworks ultimately determine how easily production workloads can port between chips, and portability is what will make the economics of switching from CUDA-locked Nvidia silicon as the default GPU credible at scale. Nvidia retains training None of this changes Nvidia's position in large-scale pre-training. Frontier model development still overwhelmingly runs on high-end GPU clusters, and Nvidia's Blackwell is the current standard for that workload. Meta itself operates large Nvidia GPU clusters alongside MTIA deployments, and its February 2026 AMD agreement adds further GPU capacity to a portfolio that already spans multiple silicon vendors. Instead, what we're seeing is workload segmentation, whereby custom silicon takes high-volume, predictable inference workloads and GPUs retain training. MTIA 450 and 500 are designed to cover AI inference production through 2027, while Google, AWS, and Microsoft have each made equivalent commitments on their own timelines. At the point where inference represents the bulk of AI compute cycles, hyperscalers appear to have collectively decided that paying a premium for GPUs to run those workloads is no longer financially sound.

[2]

Meta Preparing to Deploy Four New Homegrown Chips to Handle AI

Meta will continue buying chips from other companies, including Nvidia Corp. and Advanced Micro Devices Inc., as it pursues a dual approach of buying traditional hardware and investing in custom silicon for specialized tasks. Meta Platforms Inc. plans to deploy four new generations of its in-house artificial intelligence chips by the end of 2027 as the company turns to custom silicon to help power its rapidly expanding AI workloads. Meta on Wednesday announced plans for the new chips - MTIA 300, MTIA 400, MTIA 450 and MTIA 500 - as a part of an effort to diversify its hardware sources, reduce reliance on outside chipmakers and bring down costs amid a fast-moving and expensive AI race. Meta will continue buying chips from other companies as well, and recently announced deals to spend billions on AI hardware from Nvidia Corp. and Advanced Micro Devices Inc. The MTIA 300 is already in production for content ranking and recommendations training, the company said, and MTIA 400, also known as Iris, has completed lab testing and is moving toward deployment. The MTIA 450 and MTIA 500 chips -- code-named Arke and Astrid, respectfully -- are scheduled for mass deployment in 2027. Yee Jiun Song, Meta's vice president of engineering, said that the products are being built in parallel, with the MTIA 450 model expected to arrive early in the year and the MTIA 500 slated six months later. "If we look at overall AI development, I think even in the last two or three months things have accelerated at a pace that has kind of blown everyone's minds," Song said. "Silicon programs have to keep up with that evolution of workloads, so we're constantly looking at our road maps and making sure we're building what we think will be the most useful products." Meta is spending aggressively to build competitive AI models and products, which has led to unprecedented demand for computing power. Meta has turned to Nvidia and AMD to power some of its AI efforts, but has also worked to build out its bench of talent focused on chip design in hopes of developing its own products. Last year, after Chief Executive Officer Mark Zuckerberg grew impatient with the company's in-house progress, Meta tried to acquire South Korean chip startup FuriosaAI. After FuriosaAI rejected an $800 million offer, Meta instead acquired Santa Clara, California-based startup Rivos Inc., along with more than 400 of its employees. Get the Tech Newsletter bundle. Get the Tech Newsletter bundle. Get the Tech Newsletter bundle. Bloomberg's subscriber-only tech newsletters, and full access to all the articles they feature. Bloomberg's subscriber-only tech newsletters, and full access to all the articles they feature. Bloomberg's subscriber-only tech newsletters, and full access to all the articles they feature. Bloomberg may send me offers and promotions. Plus Signed UpPlus Sign UpPlus Sign Up By submitting my information, I agree to the Privacy Policy and Terms of Service. The additional headcount has helped Meta's in-house chips team, known as the Meta Training and Inference Accelerator, pursue several projects at once. MTIA is focused on building more efficient computing architecture for the company's internal needs, which range from ranking and recommendations systems used to determine what content appears on users' Instagram feeds to large-scale generative AI inference, where a trained model is used to generate text or images in response to a prompt. While Meta executives have emphasized the benefits of the company building its own chips, it's also one of the biggest buyers of graphics processing units, or GPUs, used for training and running AI models. Its recent agreements with Nvidia and AMD are worth tens of billions of dollars each, and mean Meta has locked in gigawatts of AI capacity over the coming years. The strategy reflects the company's dual approach of buying more traditional hardware from industry partners while continuing to invest in custom silicon for tasks that are more specialized to Meta's platforms. "We're not building for the general market, so our chips don't need to be as general purpose," Song said. "We can cut out things that we don't need, which really allows us to drive down cost." Still, the economics of chipmaking are challenging. Taking a product from the design phase to manufacturing by a third party - usually Taiwan Semiconductor Manufacturing Co. - can cost billions of dollars and take precious time. Song said it typically takes his team two years to go from design to production. Custom chips usually only pay off at scale and with high utilization rates. Last month, the Information reported that Meta had scrapped its most advanced chip focused on training AI models, known by the code name Olympus, after struggling with its design, shifting instead to focus on a less complicated version. A Meta spokesperson declined to comment on the reporting, but said that the company regularly evaluates and evolves its silicon road map and learns from product deployments. Meta Chief Financial Officer Susan Li said at a conference hosted by Morgan Stanley earlier this month that the company still aims to develop processors that can train AI models.

[3]

Meta reveals four new MTIA chips built for AI inference -- to be released on a six-month cadence

The chiplet-based accelerators are designed to run AI inference more efficiently than GPUs optimized for training workloads. Meta today announced four successive generations of its in-house Meta Training and Inference Accelerator (MTIA) chips, all developed in partnership with Broadcom and scheduled for deployment within the next two years. "We've developed a competitive strategy for MTIA by prioritizing rapid, iterative development, reads Meta's press release, along with an inference-first focus and frictionless adoption by building natively on industry standards. The four new chips are MTIA 300, 400, 450, and 500. MTIA 300 is already in production for ranking and recommendations training, while 400 is currently in lab testing ahead of data center deployment. MTIA 450 and 500 are targeted at AI inference and are scheduled for mass deployment in early 2027 and later in 2027, respectively. According to Meta's technical blog, from MTIA 300 through to MTIA 500, HBM bandwidth increases 4.5 times, and compute FLOPs increase 25 times. Meta says MTIA 450 doubles the HBM bandwidth of MTIA 400, describing it as "much higher than that of existing leading commercial products," or, in other words, Nvidia's H100 and H200. MTIA 500 then adds another 50% HBM bandwidth on top of 450, along with up to 80% more HBM capacity. Indeed, it's HBM bandwidth and not raw FLOPs that are the main bottleneck during the decode phase of transformer inference, and mainstream GPUs are architected to maximize FLOPs for large-scale pre-training. This means they carry a cost and power overhead that Meta says is unnecessary for inference workloads. Meta's approach also includes hardware acceleration for FlashAttention and mixture-of-experts feed-forward network computation, plus custom low-precision data types co-designed for inference. MTIA 450 supports MX4, delivering six times the MX4 FLOPs of FP16/BF16, with mixed low-precision computation that avoids the software overhead of data type conversion. In terms of eventual deployment, MTIA 400, 450, and 500 will all use the same chassis, rack, and network infrastructure, meaning each new chip generation drops into the existing physical footprint for easy interchange. It's this modularity, Meta says, that's behind MTIA's roughly six-month chip cadence, which itself is much faster than the industry's typical one-to-two year cycle. The software stack runs natively on PyTorch, vLLM, and Triton, with support for torch.compile and torch.export so that production models can be deployed simultaneously on both GPUs and MTIA without MTIA-specific rewrites. Meta said it has already deployed hundreds of thousands of MTIA chips across its apps for inference on organic content and ads. All this comes just two weeks after Meta disclosed a long-term, $100 billion AI infrastructure agreement with AMD, suggesting that there's a broader effort at play to reduce dependence on Nvidia across different parts of Meta's AI stack while keeping MTIA at the core of inference workloads. Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[4]

Meta rolls out in-house AI chips weeks after massive Nvidia, AMD deals

The specialized silicon is part of the Meta Training and Inference Accelerator, or MTIA, family of chips, which it publicly revealed for the first time in 2023 before unveiling a second-generation version in 2024. Meta Vice President of Engineering Yee Jiun Song told CNBC that by designing custom chips, which are then manufactured by Taiwan Semiconductor, the social media giant can squeeze more price per performance across its data center fleet rather than relying on only vendors. "This also provides us with, with more diversity in terms of silicon supply, and insulates us from price changes to some extent," Song said. "This is a little bit more leverage." The first new chip, MTIA 300, was deployed a few weeks ago and is intended to help train smaller AI models that underpin Meta's core ranking and recommendation tasks, Song said. These kinds of tasks include showing people relevant content and online ads within the company's family of apps like Facebook and Instagram. The upcoming chips -- MTIA 400, MTIA 450 and MTIA 500 -- are intended for more cutting-edge generative AI-related inference tasks like creating images and videos based on people's written prompts. The chips will not be used for training giant large language models, Song said.

[5]

Meta's Race to Scale AI Chips for Billions: Four Chips in Two Years

Seamless model onboarding: MTIA supports both eager and graph modes. In graph mode, it integrates directly with PyTorch 2.0's compilation pipeline. Developers use familiar tools -- torch.compile and torch.export -- to capture and optimize model graphs. No MTIA-specific rewrites are required to enable models. This portability enables our production models to be deployed simultaneously on both GPUs and MTIA. Compilers: Beneath the PyTorch frontend, MTIA-specific compilers translate high-level graph representations into highly optimized device code. The graph compiler is built on Torch FX IR and TorchInductor. The kernel compiler and lower-level backends are based on Triton, MLIR, and LLVM, enhanced and optimized for MTIA. We improved and tailored TorchInductor's Triton code generations and kernel fusion for MTIA, and introduced MTIA-aware MLIR dialects and Triton DSL extensions. These extensions can be used optionally for performance-critical kernels. The compiler stack has autotuning capabilities that automatically optimize workloads using multiple compilation strategies. Kernel authoring: MTIA supports compiler-driven kernel generation and fusion, enables both auto-generated and user-driven manual kernel authoring using Triton and C++, and provides kernel auto-tuning and optimizations. Furthermore, we have built agentic AI systems to automate kernel generation; see our papers on TritorX and KernelEvolve for details. Communication and transport: MTIA's communication library, Hoot Collective Communications Library (HCCL), is similar to GPU communication libraries but offers several differentiators. It leverages the MTIA chips' built-in network chiplets for efficient communication, offloads collective operations to dedicated message engines, and uses near-memory compute to accelerate reduction-heavy collectives. HCCL also supports fusing compute and collective kernels to minimize latency. Finally, its transport stack is optimized for low-latency transactions and offloads the entire data path to reduce host-stack runtime overhead. Runtime and firmware: The MTIA runtime manages device memory, kernel scheduling, and execution coordination across multiple devices. It supports both eager and graph execution modes. Additionally, it orchestrates compute and collective operations in an Inductor-native, eager-style graph mode. This approach enables compute and communication to be captured and scheduled together, providing a GPU-like experience with minimal overhead. The runtime interfaces with a Rust-based user-space driver, rather than a traditional in-kernel Linux driver. The firmware is written in bare-metal Rust, delivering low latency and high performance, with built-in memory and thread safety. vLLM support : vLLM's plugin architecture allows easy integration with MTIA. Our MTIA plugin replaces important operators, such as FlashAttention and fused LayerNorm, with MTIA-specific kernels. Graph-mode execution is supported via a custom torch.compile backend. MTIA inherits and benefits from vLLM's features such as prefill-decode disaggregation and continuous batching. Production tools: To reliably operate hundreds of thousands of MTIA chips in production, MTIA offers production-grade monitoring, profiling, and debugging tools comparable to those available for mainstream GPUs, while providing unique capabilities such as full-stack, at-scale observability across both host and device, spanning software, firmware, and hardware. Its debugger enables fine-grained control, including breakpoints and coordinated stepping at the PE level.

[6]

Meta debuts internally-developed AI chips for inference workloads - SiliconANGLE

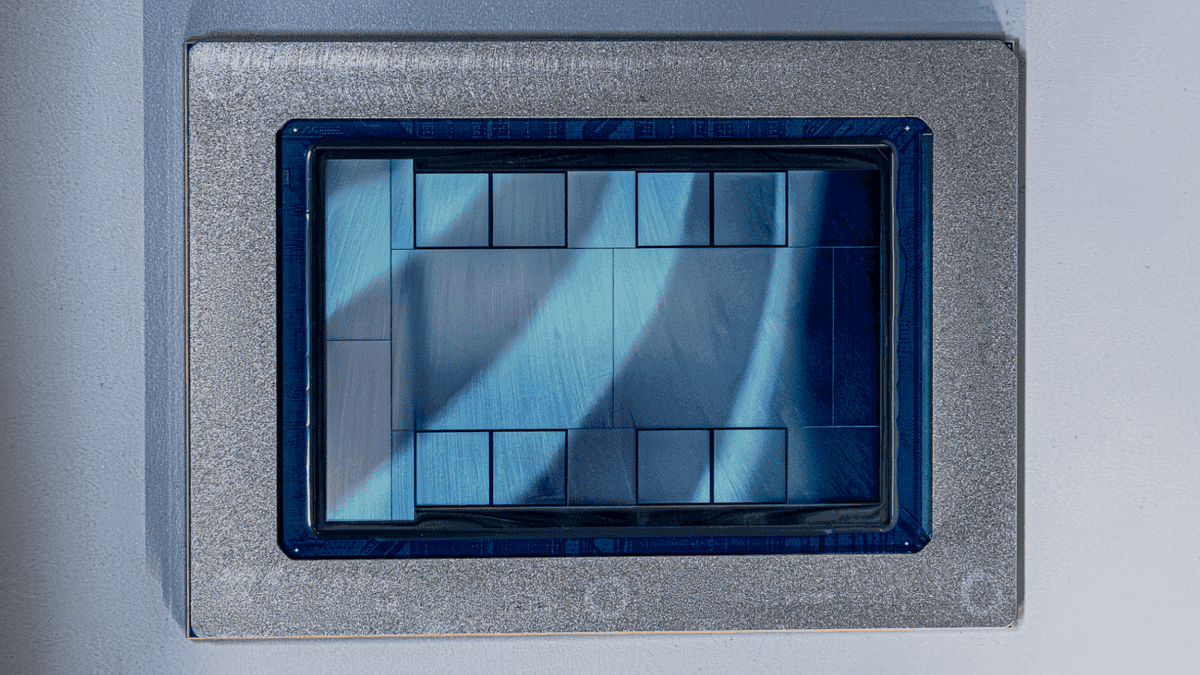

Meta debuts internally-developed AI chips for inference workloads Meta Platforms Inc. today revealed that it has designed 4 custom chips to power its internal artificial intelligence workloads. The company last provided an update about its processor development efforts in 2024. In April of that year, it revealed a custom AI accelerator with an energy footprint of 90 watts. The most advanced of the 4 accelerators that Meta debuted today has a thermal design point of 1,700 watts. The custom chip that the company revealed in April 2024, the MTIA 200, was designed solely to run ranking and recommendation models. Those are neural networks that Meta uses to determine what posts and ads to display in users' feeds. The first new chip that it unveiled today, the MTIA 300, is focused on the same use cases. It can provide 1.2 petaflops of performance when processing data in the MX8 format and features 216 gigabytes of HBM memory. "MTIA 300 comprises one compute chiplet, two network chiplets, and several HBM stacks," a group of Meta engineers wrote in a blog post today. "Each compute chiplet comprises a grid of processing elements (PEs), with some redundant PEs to improve yield." The MTIA 300 is the only one of the 4 newly revealed chips that Meta has already deployed in production. The 3 other processors support a broader range of use cases. Besides ranking and recommendation workloads, they can also run generative AI software such as large language models. The most advanced chip in the lineup, the MTIA 500, can provide 10 petaflops of performance when processing MX8 data. It also supports a more efficient data format called MX4. The latter technology reduces the number of bytes that AI models must analyze to answer prompts, which speeds up processing. The MTIA 500 carries out calculations using four logic chiplets. The modules are surrounded by multiple stacks of HBM memory that can together store up to 516 gigabytes of data, or twice as much as the MTIA 300. Rounding out the processor's list of components is a so-called SoC chiplet that is responsible for moving information to and from the host server. The MTIA 500 is expected to enter production in 2027 alongside a similar, but less advanced chip called the MTIA 450. Both processors are optimized for generative AI inference workloads. They include circuits that are designed to accelerate specific, hardware-intensive elements of the inference workflow such as FlashAttention. That's a popular implementation of the attention mechanism with which LLMs analyze input data. "At the system level, MTIA 400, 450, and 500 all utilize the same chassis, rack, and network infrastructure," the Meta engineers wrote. "Therefore, each new chip generation can be dropped into the same physical footprint, accelerating the transition from silicon to production deployment. Our modular, reusable designs also minimize the resources needed to develop and deploy multiple chip generations." Meta uses custom compilers to optimize AI models for its MTIA chips. Another custom software module, the Hoot Collective Communications Library, manages the flow of data between the processors. It carries out certain calculations using transistors that are located near memory cells, which reduces data travel times and thereby boosts performance.

[7]

Why is Meta building its own AI chips, and at what cost?

The timing is awkward, even by Silicon Valley standards. Last week, reports emerged that Meta had quietly killed its most ambitious AI training chip, codenamed Olympus. According to The Information, Meta scrapped the chip after struggling with its design, pivoting instead to a less complicated approach. Meta declined to comment. Then suddenly the company announced four new generations of its homegrown MTIA chips - 300, 400, 450, and 500 - and said nothing about any of it. Also read: Elon Musk's crazy idea to turn Grok into an AI agent for your PC The four chips it announced are all inference-focused, designed to run AI models cheaply at scale, not to train them. That's a meaningful distinction. Training is where Nvidia's stranglehold on the industry is strongest, and it's the arena Meta just quietly retreated from. The official rationale for building custom silicon is straightforward enough. Meta's stated goal is to diversify its hardware sources, reduce reliance on outside chipmakers, and bring down costs amid a fast-moving and expensive AI race. Its MTIA inference chip reportedly reduced total cost of ownership by 40 to 44 percent across recommendation systems for Facebook and Instagram, real savings at a company serving billions of users daily. When you're running AI at that scale, shaving small margins off for each inference request adds up astronomically. Also read: Anthropic Institute wants to warn us on how AI is bad for human civilization But the "at what cost" question has a literal answer that complicates the independence narrative. In January 2026, Meta announced a capital expenditure budget of between $115 billion and $135 billion for the year which is nearly double the previous year's $72.2 billion, with the majority allocated to chips. And most of that is going straight to the companies Meta says it wants to depend on less. Within ten days in February, Meta signed a multi-year strategic partnership with Nvidia to deploy millions of Blackwell and next-generation GPUs, followed by a chip agreement with AMD worth between $60 billion and $100 billion. There's also the question of what "homegrown" actually means here. Meta's MTIA chips are developed in close partnership with Broadcom - the same company that co-designs Google's TPUs. The press release calls them Meta's chips. The reality is considerably more collaborative. Meta's chip program has a history of setbacks. It scrapped an earlier inference chip after it underperformed in small-scale testing, and pivoted in 2022 to billions of dollars' worth of Nvidia GPUs. Olympus is the just the latest addition to that pattern. Meta's own Chief Product Officer once described the company's chip journey as "a walk, crawl, run situation." Right now, it still looks like a crawl - an expensive, ambitious, necessary one, but a crawl nonetheless.

Share

Share

Copy Link

Meta announced four successive generations of its custom MTIA chips on March 11, scheduled for deployment through 2027. The MTIA 300, 400, 450, and 500 are optimized for AI inference workloads, with HBM bandwidth increasing 4.5 times across the lineup. Meta has already deployed hundreds of thousands of MTIA chips in production, joining Google, AWS, and Microsoft in building custom silicon to diversify from sole reliance on Nvidia.

Meta Accelerates Custom Silicon Development with Four MTIA Chip Generations

Meta announced four successive generations of its custom Meta Training and Inference Accelerator (MTIA) chips on March 11, marking an aggressive push into in-house AI chips designed to handle the company's rapidly expanding AI inference workloads

1

. The MTIA 300, 400, 450, and 500 are all scheduled for deployment over the next two years, with Meta describing the chips as progressively optimized for AI inference on the premise that HBM memory bandwidth is the binding constraint on inference performance1

.

Source: Meta AI

The MTIA 300 is already in production for content ranking and recommendations training, while the MTIA 400—also known as Iris—has completed lab testing and is moving toward data center deployment

2

. The MTIA 450 and MTIA 500 chips, code-named Arke and Astrid respectively, are scheduled for mass deployment in early 2027 and later in 20272

. According to Yee Jiun Song, Meta's vice president of engineering, the products are being built in parallel on a roughly six-month cadence, much faster than the industry's typical one-to-two year cycle3

.HBM Bandwidth Emerges as Critical Performance Driver for AI Inference Workloads

Meta doubled HBM bandwidth from MTIA 400 to 450, making it "much higher than that of existing leading commercial products," a reference to Nvidia's H100 and H200 chips

3

. The MTIA 500 then increases HBM bandwidth by an additional 50% compared with the MTIA 450, along with up to 80% more HBM capacity1

. Across the full 300-to-500 progression, HBM bandwidth increases 4.5 times and compute FLOPs increase 25 times3

.

Source: Tom's Hardware

In a technical blog post, Meta described HBM's bandwidth as the most important factor affecting AI inference performance, adding that mainstream chips built for large-scale pre-training are applied less cost-effectively to AI inference workloads

1

. The chips use a modular chiplet architecture that allows the MTIA 400, 450, and 500 to share the same chassis, rack, and network infrastructure, meaning each new chip generation drops into the existing physical footprint without requiring new data center buildouts1

.Strategic Move to Reduce Reliance on Nvidia While Maintaining Dual Hardware Approach

The announcement comes just two weeks after Meta disclosed a long-term, $100 billion AI infrastructure agreement with AMD, suggesting a broader effort to reduce reliance on Nvidia across different parts of Meta's AI stack

3

. However, Meta will continue buying chips from other companies, including Nvidia and AMD, as it pursues a dual approach of buying traditional hardware and investing in custom silicon development for specialized tasks2

.

Source: Bloomberg

Song told CNBC that by designing custom chips, which are then manufactured by Taiwan Semiconductor, Meta can squeeze more price per performance across its data center fleet rather than relying only on vendors

4

. "This also provides us with more diversity in terms of silicon supply, and insulates us from price changes to some extent," Song said. "This is a little bit more leverage"4

. Meta has already deployed hundreds of thousands of MTIA chips in production, onboarded numerous internal production models, and tested MTIA with large language models like Llama1

.Related Stories

Hyperscalers Converge on Inference-Optimized Custom Silicon and Shared Software Stack

Meta's announcement puts the company alongside Google, AWS, and Microsoft, each of which has spent the last few years building and scaling custom silicon programs for AI accelerators

1

. Google announced Ironwood, its seventh-generation TPU purpose-built for inference, at Google Cloud Next in April 2025, delivering 192 GB of HBM3E per chip at 7.37 TB/s of memory bandwidth1

. AWS announced Trainium3, a 3nm chip with 144 GB HBM3E per chip at 4.9 TB/s bandwidth, while Microsoft introduced its Maia 200 for inference workloads built on TSMC 3nm1

.Broadcom is connecting the dots across many of these programs, having had a hand in building both Google's TPUs and Meta's MTIA family

1

. Meta described the MTIA chips as being developed "in close partnership with" Broadcom, and said that the company "has remained and will continue" to be a key partner of Meta's AI infrastructure strategy1

. The software stack runs natively on PyTorch, vLLM, and Triton, with support for torch.compile and torch.export so that production models can be deployed simultaneously on both GPUs and MTIA without MTIA-specific rewrites3

. MTIA supports both eager and graph modes, integrating directly with PyTorch 2.0's compilation pipeline, with compilers built on Torch FX IR, TorchInductor, MLIR, and LLVM5

.References

Summarized by

Navi

[3]

Related Stories

Recent Highlights

1

AI Models Lie and Deceive to Protect Other AI Models From Deletion, Study Reveals

Science and Research

2

AI chatbots validate you too much, making you less kind to others, Stanford study reveals

Science and Research

3

Judge blocks Pentagon from blacklisting Anthropic over AI safety guardrails dispute

Policy and Regulation