Microsoft releases three in-house AI models in direct challenge to OpenAI partnership

16 Sources

[1]

Microsoft takes on AI rivals with three new foundational models | TechCrunch

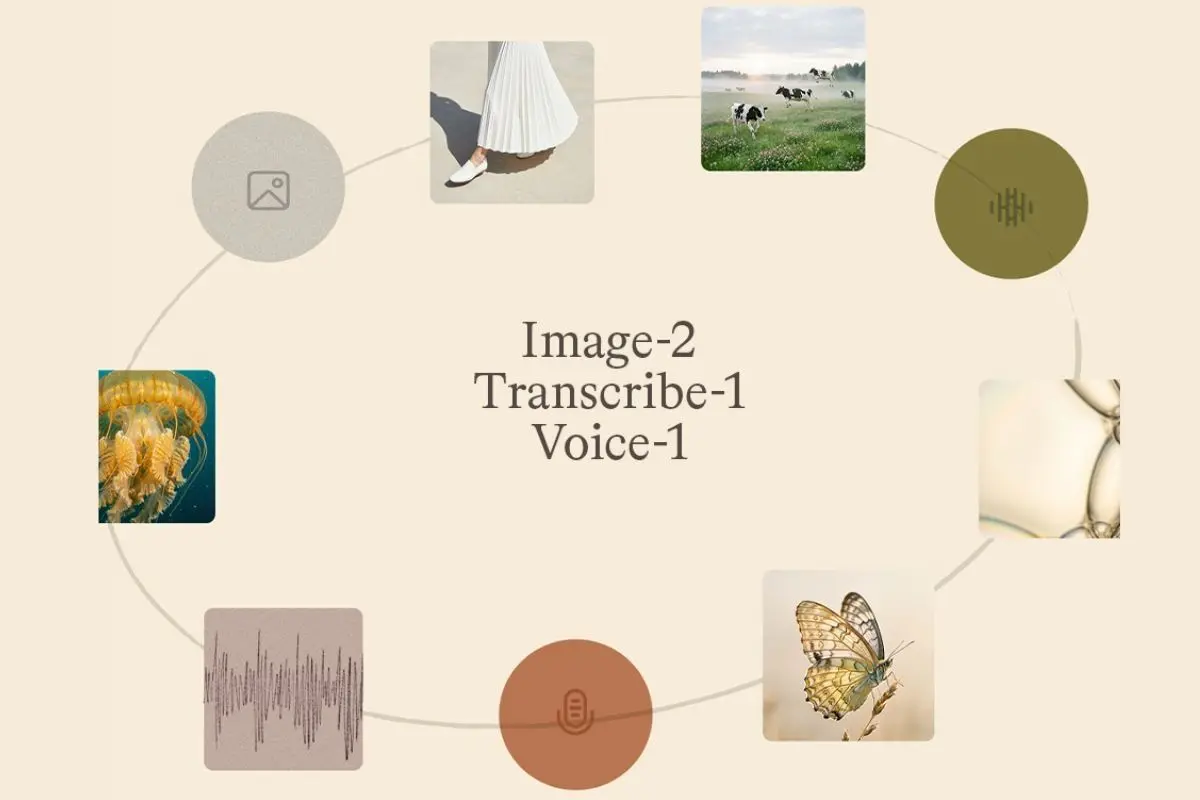

Microsoft AI, the tech giant's research lab, announced the release of three foundational AI models on Thursday that can generate text, voice, and images. The release signals Microsoft's continued push to build out its own stack of multimodal AI models -- and compete with rival AI labs -- even though it remains tied to OpenAI. MAI-Transcribe-1 transcribes speech across 25 different languages into text and is 2.5 times faster than Microsoft's Azure Fast offering, according to a company press release. MAI-Voice-1 is an audio-generating model. This voice model allows users to generate 60 seconds of audio in one second and to create a custom voice. MAI-Image-2 is an image-generating model. It was originally released on March 19 on MAI Playground, new software for testing large language models. Now, all three models are being released on Microsoft Foundry, and the transcription and voice models are available in MAI Playground as well. The models were developed by Microsoft's MAI Superintelligence team, an AI research team led by Mustafa Suleyman, the CEO of Microsoft AI, that was formed and announced in November 2025. "At Microsoft AI, we're building Humanist AI. We have a distinct view when creating our AI models -- putting humans at the center, optimizing for how people actually communicate, training for practical use," Suleyman wrote in a blog post. "You'll see more models from us soon in Foundry and directly in Microsoft products and experiences." In an increasingly crowded LLM market, MAI hopes a selling point for these models is that they are cheaper than those from Google and OpenAI, the company wrote in the blog post. MAI-Transcribe-1 starts at $0.36 per hour. MAI-Voice-1 starts at $22 per 1 million characters, and MAI-Image-2 starts at $5 per 1 million tokens for text input and $33 per 1 million tokens for image output. 
Despite releasing its own models, Suleyman reaffirmed Microsoft's commitment to its partnership with OpenAI in an interview with VentureBeat -- although a recent renegotiation of that partnership allowed Microsoft to truly pursue this superintelligence research, Suleyman told The Verge. Microsoft has invested more than $13 billion into the AI research lab and hosts its models in its various products through a multi-year partnership. Microsoft takes the same stance with chips; it both produces its own and buys from outside players as well.

[2]

Microsoft's New AI Models Go Beyond Just Text

Microsoft is doubling down on AI models that aren't large language models. The company announced on Thursday that it is releasing three new models: brand new models for voice and text transcription and the second generation of its in-house image model. The voice and text transcription models are the first of their kind from Microsoft. The transcription model can transcribe recordings into text in 25 different languages. It's built for video captioning, meeting transcription and voice agents. The voice model can create audio recordings up to 60 seconds long. The company says its second-generation image model has a faster generation speed and more lifelike depictions, improving on its previous model. They're available now in Microsoft's Foundry and MAI Playground, with future plans to bring MAI-Image-2 to Bing and PowerPoint. These new models are a clear sign that Microsoft is looking to expand its offerings across the AI market. Microsoft's Copilot is one of the most popular chatbots for businesses, especially those that already use Microsoft's Office 365 suite and Azure cloud service. Aside from the now-outdated original image model, Microsoft has primarily focused on text-based models, trying to distinguish itself among its many competitors as a secure, enterprise-friendly option. Its newest AI tools, Copilot Cowork and Copilot Health, are proof of that. However, these new models are a reminder that Microsoft, as a legacy tech company, has the cash and compute to burn on these kinds of "side quests" that even billion-dollar start-ups like OpenAI can't always afford. Last week, OpenAI confirmed that it will be discontinuing its Sora AI video app, saying it will refocus on core activities. The AI industry in 2026 has been aiming to prove its tools are useful in the workplace, especially with Anthropic's Claude Code leapfrogging the competition. 
Generative media, like the models that power AI image and video generation, require a lot of compute and energy to run, which could be spent elsewhere. Google, as another legacy tech company with billions of its budget allocated to AI research, indicated this week that it won't be giving up on generative media but will be trying to make models more cost- and energy-efficient, as with its new Veo 3.1 Lite video model.

[3]

Microsoft shivs OpenAI with new AI models for speech, images

Microsoft on Thursday unveiled public preview versions of three home-baked machine learning models focused on speech recognition, speech synthesis, and image generation. The release makes the Windows biz look more like a direct competitor to OpenAI than an investor - Redmond held an OpenAI stake valued at about $135 billion as of last October. The models include: MAI-Transcribe-1, a speech recognition model that delivers "enterprise-grade accuracy across 25 languages at approximately 50 percent lower GPU cost than leading alternatives"; MAI-Voice-1, a speech generation model that can supposedly produce 60 seconds of audio in less than a second on a single GPU; and MAI-Image-2, a text-to-image model, to compound the despair of digital artists. OpenAI just happens to offer its own speech recognition, speech generation, and text-to-image models. Microsoft's models are available through Foundry (formerly Azure AI Studio), a platform to develop AI agents and applications. Naomi Moneypenny, who leads the Microsoft Azure AI Foundry Models product team, talked up the model arrivals in a blog post. "These are the same models already powering our own products such as Copilot, Bing, PowerPoint, and Azure Speech, and now they're available exclusively on Foundry for developers to use," she wrote. The models look well-suited for common enterprise use cases, such as designing customer support agents that can recognize speech and generate a response. Moneypenny suggests the models would also be useful to provide captioning for large events and meetings, for media subtitling and archiving, for education and training, and for gathering customer and market insights from focus groups, for example. Microsoft is already consuming its own dog food here - Copilot's Audio Expressions runs on MAI-Voice-1 while Copilot's Voice Mode transcription service uses MAI-Transcribe-1. Developers can try these two models via Azure Speech. 
When Microsoft announced that it had renegotiated its agreement with OpenAI, the Windows biz indicated that the partnership would continue at least to 2032 - a scenario that assumes no AI market implosion. But it also highlighted areas of competition. "Microsoft can now independently pursue AGI [artificial general intelligence] alone or in partnership with third parties," the company said at the time. That statement on its own frees Microsoft to go its own way on AI under the guise of AGI research. Microsoft has some incentive to hedge its bets. Its OpenAI ties showed strain back in January when Microsoft investors signaled dissatisfaction with the company's exposure to OpenAI's considerable spending. The AI hype-leader is burning cash and is expected to lose $14 billion this year, according to internal projections published by The Information. An internal effort to streamline its focus on enterprise customers is reportedly underway, and it killed its token-incinerating but not particularly useful video generator, Sora 2, late last month. Two weeks ago, Microsoft CEO Satya Nadella announced leadership changes affecting the company's Copilot products and superintelligence effort. Jacob Andreou was tapped to lead the company's Copilot experience as EVP across Microsoft consumer and commercial products, reporting directly to Nadella. Copilot now focuses on four areas: Copilot experience, Copilot platform, Microsoft 365 apps, and AI models. Presumably, Andreou's AI model remit isn't simply checking in with OpenAI to see what models are available. And if Microsoft's model ambitions weren't obvious enough, Nadella said Mustafa Suleyman will continue to steer Microsoft's AI research - entirely unnecessary if your ambition is to remain dependent on OpenAI.

[4]

Microsoft launches three in-house AI models in direct challenge to OpenAI

Six months after renegotiating the contract that once barred it from independently pursuing frontier AI, Microsoft has released three in-house models that directly challenge the partner it spent $13 billion cultivating. MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 are now available in Microsoft Foundry, and they do not carry OpenAI's name anywhere on the label. The models are the first publicly released output of the MAI Superintelligence team that Mustafa Suleyman, CEO of Microsoft AI, formed in November 2025 with a stated mission of pursuing what the company calls "humanist superintelligence." In a March internal memo first reported by Business Insider, Suleyman wrote that he intended to focus all of his energy on superintelligence and deliver world-class models for Microsoft over the next five years. That ambition now has its first tangible evidence. MAI-Transcribe-1 is, on paper, the most immediately disruptive of the three. The speech-to-text model claims the lowest word error rate across 25 languages on the FLEURS benchmark, averaging 3.8 per cent, and Microsoft says it outperforms OpenAI's Whisper-large-v3 on all 25 languages, Google's Gemini 3.1 Flash on 22 of 25, and ElevenLabs' Scribe v2 on 15 of 25. It runs 2.5 times faster than Microsoft's previous Azure Fast transcription service and is priced at $0.36 per hour of audio. Perhaps most revealing is the team that built it: just 10 people. MAI-Voice-1 completes the audio loop. The text-to-speech model generates 60 seconds of natural-sounding audio in under one second on a single GPU and supports custom voice creation from a few seconds of sample audio. Combined with MAI-Transcribe-1 and a large language model of the customer's choosing, it forms a complete voice pipeline that runs entirely on Microsoft infrastructure without any dependency on OpenAI's technology. 
MAI-Image-2, the oldest of the three, had already debuted at number three on the Arena.ai text-to-image leaderboard in March, placing it behind only Google's Gemini 3.1 Flash and OpenAI's GPT Image 1.5. The model was developed in collaboration with photographers, designers, and visual storytellers, and WPP, one of the world's largest marketing groups, is among the first enterprise partners building with it at scale. The strategic context matters more than the benchmarks. Until the September 2025 renegotiation, Microsoft's original partnership agreement with OpenAI contractually prevented the company from independently pursuing general AI development. The revised memorandum of understanding changed that calculus fundamentally. Microsoft retained licensing rights to everything OpenAI builds through 2032, gained $250 billion in new Azure cloud business commitments, and crucially won the freedom to build competing models. Suleyman acknowledged the pivot directly: the contract renegotiation, he said, enabled Microsoft to independently pursue its own superintelligence. The timing is deliberate. Jacob Andreou, formerly a senior vice-president at Snap, took over as executive vice-president of Copilot on 17 March, freeing Suleyman from day-to-day product responsibilities. The MAI models landed barely two weeks later. Microsoft also hired Ali Farhadi, the former chief executive of the Allen Institute for AI, for Suleyman's superintelligence team in March, a recruitment signal that the ambitions extend well beyond transcription and image generation. For OpenAI, the development creates an awkward dynamic. Microsoft remains its single largest investor and its primary cloud infrastructure provider, and the two companies continue to share a platform in Foundry, which hosts both OpenAI and Microsoft models. 
But OpenAI's own push into commercial monetisation is accelerating in parallel, and the relationship is beginning to resemble two companies orbiting the same market with overlapping products rather than a partnership with a clear division of labour. OpenAI's $110 billion raise in February, backed by SoftBank, Nvidia, and Amazon, valued the company independently of Microsoft at a level that makes the original partnership framing increasingly anachronistic. The broader AI model market is fragmenting along similar lines. Anthropic's $30 billion raise at a $380 billion valuation established it as a credible third force in enterprise AI, with run-rate revenue of $14 billion. Google continues to iterate rapidly on Gemini. The era in which OpenAI was the only game in town for frontier AI capabilities, and Microsoft was content to be its exclusive distribution channel, is definitively over. Microsoft Foundry, the platform formerly known as Azure AI Foundry and before that Azure AI Studio (the second rebrand in twelve months), now serves developers at more than 80,000 enterprises including 80 per cent of Fortune 500 companies. That distribution advantage is what makes the MAI model family strategically significant: Microsoft does not need to beat OpenAI on every benchmark to shift enterprise spending toward in-house models. It needs to be competitive enough that customers choose the integrated option over the third-party alternative, a dynamic that the past year of AI industry consolidation has made increasingly plausible. Suleyman has said it will take another year or two before the superintelligence team produces frontier-class language models. What landed this week is the foundation: a multimodal toolkit that gives Microsoft its own voice, ears, and eyes independent of OpenAI. The $13 billion partnership is not ending. But the premise on which it was built, that Microsoft needed OpenAI to compete in AI, is being quietly dismantled one model release at a time.

[5]

Microsoft releases new AI models to expand further beyond OpenAI

Microsoft is expanding its roster of in-house AI models, releasing a new speech-to-text system and making two existing models broadly available to developers for the first time. The moves by Microsoft AI (MAI) are part of a broader effort by the company to expand its proprietary AI capabilities beyond its partnership with OpenAI, giving Microsoft more control over its own destiny in the competition against Google, Amazon, and others. Microsoft announced MAI-Transcribe-1 on Thursday, a speech-to-text model that it says is the most accurate currently available. The company also released its existing voice and image generation models, known as MAI-Voice-1 and MAI-Image-2, for broad commercial use. It's Microsoft's first major model release since a March reorganization, announced by CEO Satya Nadella, in which Microsoft AI CEO Mustafa Suleyman shifted away from day-to-day Copilot oversight to focus on frontier model development and superintelligence. Suleyman told The Verge that the transcription model runs at "half the GPU cost of the other state-of-the-art models." He told VentureBeat that the model was built by a team of just 10 people, and that Microsoft plans to eventually build a frontier large language model to be "completely independent" if needed. Microsoft also recently hired former Allen Institute for AI CEO Ali Farhadi and other top AI researchers from the Seattle-based institute to further bolster Suleyman's team, as GeekWire reported last week. MAI-Transcribe-1 is designed to handle noisy real-world conditions such as call centers and conference rooms, and Microsoft says it is testing integrations with Copilot and Teams. Microsoft says it offers the best price-performance of any large cloud provider, competing directly with OpenAI's Whisper and Google's Gemini on the FLEURS benchmark. In a blog post, Suleyman called the model "not just the most accurate but also lightning fast." 
MAI-Voice-1 generates natural-sounding speech and now lets developers create custom voices from short snippets of sample audio. MAI-Image-2 ranks in the top three on the Arena.ai image generation leaderboard and is rolling out in Bing and PowerPoint. All three are available on the Microsoft Foundry developer AI platform and MAI Playground.

[6]

Microsoft takes on Google and OpenAI with its own AI models

Microsoft just shipped its own AI models, and they're coming for OpenAI and Google. The company has publicly released three proprietary models: MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2. The models are available via the Microsoft Foundry platform and the MAI Playground. So, what can Microsoft's AI models actually do? The trio covers a variety of use cases: listening, speaking, and seeing. MAI-Transcribe-1, for instance, handles speech-to-text across 25 languages and is 2.5 times faster than Microsoft's own Azure Fast offering. It's worth mentioning that the audio model was built by a team of 10 people. MAI-Voice-1 can produce 60 seconds of natural-sounding audio in just a second. It also supports creating custom voices from just a short audio clip. MAI-Image-2, meanwhile, has already secured a position in the top three on the Arena.ai image generation leaderboard. Rollouts are currently underway in Bing and PowerPoint. None of this has happened overnight, though. Until October 2025, the company was contractually restricted from building its own frontier AI by none other than OpenAI. Both companies signed a deal in 2019 that gave Microsoft a license to OpenAI's models in exchange for facilitating OpenAI's cloud infrastructure. However, the deal also barred Microsoft from making its own AI models. Once that changed, Microsoft released its own AI models, the ones that quietly powered Copilot and Teams behind the scenes. The models are available for any developer on Foundry to build with. Is Microsoft ready to cut ties with OpenAI? Not yet. Mustafa Suleyman, the CEO of Microsoft AI, has reaffirmed the company's commitment to its OpenAI partnership, even as those models signal a parallel strategy taking shape. The pricing is quite sharp as well. All three models are priced below the comparable offerings from Amazon and Google. If these models perform well, the MAI family could quietly become the backbone of Microsoft's entire AI product portfolio.

[7]

Microsoft releases foundational AI models targeting enterprises

Microsoft wants to offer the 'most complete AI and app agent factory'. Microsoft has released three new AI foundational models, created in-house, in a move that places the company in direct competition with enterprise AI rivals, despite its deep ties with OpenAI. The new foundational models target three of the most commercially viable modalities: text, voice and images. The models are already powering Microsoft's products, including Copilot, Bing, and Azure Speech, the company said, and will be available in a preview via the Microsoft Foundry and MAI Playground. With this, Microsoft is furthering its goals of delivering "the most complete AI and app agent factory", it said. The $2.7trn company already offers several AI-embedded apps and platform services. Its Copilot Studio lets users build agents, while the Foundry services offer a place to train and scale models. Meanwhile, a recently announced Copilot integration with Anthropic's Claude Cowork is meant to target the growing demand for autonomous agents. 'MAI-Transcribe-1' is a first-generation speech recognition model expected to deliver "enterprise-grade accuracy" across 25 languages at around 50pc lower GPU costs than its alternatives. The model shows less than 4pc average 'word error rate', while GPT-Transcribe is at 4.2pc and Gemini 3.1 Flash is at 4.9pc. 'MAI-Voice-1' is a speech generation model that, according to Microsoft, can produce 60 seconds of expressive audio in under one second on a single GPU. Together, the two models are meant to deliver an audio AI stack capable of assisting in call-centre workflows and other voice-driven interfaces, such as providing live captioning, automatic subtitling and converting interactions into structured data for research. Microsoft's second-generation image model, the 'MAI-Image-2', is expected to offer artists a way to "explore" different visual directions. 
The model is created in "close collaboration" with artists, the company said, and is meant to help enterprises create branding and communication material. MAI-Image-2 debuted at the third spot in the Arena.ai leaderboard for image model families. It is now in the fifth rank. Microsoft backed OpenAI in its recent $122bn funding round alongside the likes of Amazon, Nvidia and SoftBank. Late last year, the company announced a $10bn investment plan for a data centre in Portugal. The company announced plans to spend more than $37bn just this past quarter.

[8]

Microsoft launches new high-speed voice and image models - SiliconANGLE

Microsoft Corp. today introduced a trio of artificial intelligence models optimized to process images and audio. The algorithms are available through Microsoft Foundry, an Azure service that developers can use to build AI applications. The tech giant has also started rolling out the models to a number of other products. The first new algorithm, MAI-Image-2, can generate images with a resolution of up to 1024 by 1024 pixels based on user instructions. Each prompt may contain up to 32,000 tokens worth of text. Under the hood, MAI-Image-2 turns instructions into images using 10 billion to 50 billion non-embedding parameters. Non-embedding parameters are model components that focus on generating content rather than preliminary data preparation tasks. Microsoft says that MAI-Image-2 is at least twice as fast as its previous-generation image generator. The second new model that debuted today, MAI-Transcribe-1, also brings significant speed improvements. It can transcribe speech 2.5 times faster than Microsoft's earlier models. MAI-Transcribe-1's other selling point is its accuracy. Microsoft tested the model's mean word error rate, a measure of transcript quality, across 25 languages. MAI-Transcribe-1 logged an error rate of 3.9%, which put it ahead of Gemini 3.1 Flash and OpenAI Group PBC's GPT-Transcribe. One contributor to the model's accuracy is that it includes features for filtering environmental noise. On launch, MAI-Transcribe-1 supports batch transcription. That means the model can only process pre-prepared files such as audiobooks. According to Microsoft, a future update will add the ability to transcribe real-time audio streams. The company is also working on a so-called diarization feature that can split the text of a transcript into speaker-specific segments. The third model that Microsoft introduced today is called MAI-Voice-1. As the name suggests, it's optimized to generate synthetic speech based on user-provided scripts. 
Customers can choose from one of several built-in AI voices or use their own voice. Microsoft says all three models are competitively priced. MAI-Image-2 is priced at $5 per 1 million input tokens and $33 per 1 million output tokens. MAI-Transcribe-1 costs $0.36 per hour of transcribed speech, while MAI-Voice-1 starts at $22 per 1 million characters. The models are available through not only Microsoft Foundry but also several other services. Microsoft is currently in the process of rolling out MAI-Image-2 to Bing and PowerPoint, while MAI-Voice-1 is accessible in an audio creation tool called Copilot Audio Expressions.
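To make the quoted list prices concrete, here is a minimal back-of-the-envelope sketch in Python. The rates come from the figures reported above; the function names and the sample workloads are hypothetical, and real bills may differ (preview pricing can change, and tiers or minimums are not described in the coverage).

```python
# Starting prices as quoted in the coverage above (USD). Assumption: flat
# rates with no tiers, minimums, or rounding rules.
TRANSCRIBE_USD_PER_HOUR = 0.36
VOICE_USD_PER_MILLION_CHARS = 22.0
IMAGE_USD_PER_MILLION_INPUT_TOKENS = 5.0
IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0


def transcribe_cost(audio_hours: float) -> float:
    """Estimated MAI-Transcribe-1 cost for a given number of audio hours."""
    return audio_hours * TRANSCRIBE_USD_PER_HOUR


def voice_cost(characters: int) -> float:
    """Estimated MAI-Voice-1 cost for a given number of input characters."""
    return characters / 1_000_000 * VOICE_USD_PER_MILLION_CHARS


def image_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated MAI-Image-2 cost from text-input and image-output tokens."""
    return (
        input_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_INPUT_TOKENS
        + output_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_OUTPUT_TOKENS
    )


if __name__ == "__main__":
    # Hypothetical workloads, chosen only for illustration.
    print(f"1,000 hours of audio: ${transcribe_cost(1000):.2f}")
    print(f"500,000 characters of speech: ${voice_cost(500_000):.2f}")
    print(f"2M input + 1M output image tokens: ${image_cost(2_000_000, 1_000_000):.2f}")
```

At these rates, transcription is billed by audio duration, speech by text length, and images by tokens, so the three costs scale with different properties of a workload.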

[9]

Microsoft launches MAI-Transcribe-1 for speech recognition in 25 languages

Microsoft has launched "MAI-Transcribe-1," an AI model designed for accurate speech-to-text transcription across 25 widely spoken languages. This model is intended for applications such as meetings, closed captioning, and dictation. MAI-Transcribe-1 will be available on Microsoft Foundry alongside two other models: MAI-Voice-1 and MAI-Image-2. Microsoft stated that this launch will enable customers to evaluate and build with these models across transcription, voice, and image generation. MAI-Voice-1 features hyper-realistic speech generation and allows for the creation of custom brand voices from just one minute of audio. Meanwhile, MAI-Image-2 specializes in text-to-image generation, excelling in natural lighting, accurate skin tones, and clarity of in-image text. Microsoft has expressed a desire to reduce reliance on OpenAI by developing its own AI models, following criticisms of limitations in OpenAI's GPT-4 technology. The company is restructuring its Copilot division into four pillars for better management: Copilot experience, Copilot platform, Microsoft 365 apps, and AI models. Jacob Andreou, a former Snap executive, will lead Copilot experiences, while Mustafa Suleyman, Microsoft's AI CEO, will focus on in-house developments. Salesforce CEO Marc Benioff predicted Microsoft would move away from OpenAI technology amid discontinuations like OpenAI's Stargate project. Microsoft's shift to in-house model development reflects a strategic response to ongoing challenges and market demands. Suleyman acknowledged that current in-house models would still be a secondary option compared to OpenAI's more advanced solutions. With the launch of MAI-Transcribe-1, Microsoft aims to broaden its capabilities and offer businesses enhanced tools for productivity and communication in the evolving AI ecosystem.

[10]

Microsoft's Three New AI Models Said to Rival OpenAI and Google

Voice-1 can generate realistic speech with an emotional range

Microsoft released three specialised artificial intelligence (AI) models on Thursday, focusing on image generation, voice generation, and speech-to-text transcription. The Redmond-based tech giant claims that these models outperform specialised models from rival companies, such as Google, OpenAI, and others. The models, MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, are also said to focus on fast generation and competitive pricing. These are currently available via the Microsoft Foundry, and they are also being rolled out to various consumer products.

Microsoft Brings Three New AI Models

In a newsroom post, the tech giant introduced the three new AI models. All of them are currently available via Microsoft Foundry and the MAI Playground. The biggest highlight is the MAI-Transcribe-1, which the company claims delivers state-of-the-art (SOTA) speech-to-text transcription across the 25 most used languages. The claims are based on Microsoft's internal testing on the FLEURS benchmark. It is said to outperform Gemini 3.1 Flash and GPT-Transcribe in error rate. Additionally, the company says Foundry users will find it to be the "best price-performance of any large cloud provider." Coming to MAI-Voice-1, the model is said to generate "natural, realistic speech, rich with nuance, emotional range, and expression." The model is also said to deliver consistent speech and voice identity during long-form content generation. Inside Foundry, the model will also allow users to create a custom voice with a few seconds of audio. Microsoft claims that this process is safe and secure. It is said to generate 60 seconds of audio in a single second. Notably, the AI model will also power Copilot Audio Expressions and Copilot Podcasts. Finally, the MAI-Image-2 model builds on the capabilities of its predecessor and is said to deliver improved output quality at a faster speed. 
Microsoft revealed that the model was created in collaboration with photographers, designers, and visual storytellers, and it focuses on natural lighting, accurate textures, and clear in-image text. Notably, WPP is among the first enterprise partners to have adopted the AI model. The model, similar to the other two, will be available via the Microsoft Foundry and the MAI Playground. Additionally, it is also rolling out to Copilot, Bing, and PowerPoint.

[11]

Microsoft launches 3 AI models for transcription, image, and speech generation - The Economic Times

Microsoft on Thursday announced three new models from its Microsoft AI (MAI) model family for transcription, image, and speech generation. This includes MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, as Microsoft aims to expand its push into multimodal artificial intelligence (AI) capabilities for developers. Starting today, the models are available on Microsoft Foundry and the MAI Playground. Formerly Azure AI Studio, Foundry is a unified AI platform to build, customise, and scale generative AI (GenAI) applications and agents. Meanwhile, Playground is its public testing environment where users can experiment with features and provide feedback. "Consistent with our commitment to safe and responsible AI, these MAI models were developed, tested, and rigorously red-teamed. Through Microsoft Foundry, developers get built-in guardrails, governance, and enterprise-grade controls designed to support safe, compliant deployment at scale," wrote Mustafa Suleyman in a blog post. Suleyman leads the AI division at Microsoft. MAI-Transcribe-1 is a speech-to-text model that can support transcription across the 25 most widely used languages, including Hindi. According to Microsoft, the model achieves a lower mean word error rate (WER) than even Google's Gemini 3.1 Flash and OpenAI's GPT-Transcribe. WER evaluates the accuracy of Automatic Speech Recognition (ASR) systems by measuring the percentage of words a model gets wrong. The model offers batch transcription speeds up to 2.5 times faster than Microsoft's existing Azure Fast offering. The starting price of the model is $0.36 per hour. Meanwhile, using MAI-Voice-1, developers will be able to create custom voices with a few seconds of input audio. The model can generate up to 60 seconds of audio in one second, with pricing starting at $22 per one million characters. Finally, MAI-Image-2, Microsoft's latest image generation model, introduced only in the MAI Playground last month, is now broadly accessible via Foundry. 
The model delivers at least twice the generation speed compared to earlier versions, based on production data, while maintaining output quality. Pricing starts at $5 per one million text tokens and $33 per one million image tokens. The models are also being integrated into Microsoft products, including Copilot, Bing, and PowerPoint, with enterprise adoption already underway.
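The word error rate mentioned in this report is conventionally computed as the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. The Python sketch below illustrates that standard definition only; it is not Microsoft's evaluation pipeline, and it assumes plain whitespace tokenization with no text normalization (benchmark harnesses typically normalize case and punctuation first). The function name is hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count.

    Assumption: words are split on whitespace and compared exactly.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (initially none) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn the first i reference words into nothing
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,                      # delete a reference word
                    curr[j - 1] + 1,                  # insert a hypothesis word
                    prev[j - 1] + substitution_cost,  # match or substitute
                )
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    reference = "the cat sat on the mat"
    hypothesis = "the cat sat on a mat"
    print(f"WER: {word_error_rate(reference, hypothesis):.3f}")
```

Because insertions count as errors, WER can exceed 100% when a transcript contains many spurious words, which is one reason it is reported as a rate rather than a simple share of words "gotten wrong".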

[12]

Microsoft Enters Next AI Phase with Three New Foundational Models

The newly introduced models include MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, each focused on different aspects of multimodal AI capabilities. MAI-Transcribe-1 is designed for speech-to-text conversion across multiple languages and is claimed to be significantly faster than Microsoft's existing Azure transcription systems. MAI-Voice-1 focuses on generating synthetic audio, including the ability to produce up to a minute of audio in near real time and support custom voice creation. MAI-Image-2 is positioned as a generative model for visual and multimedia content creation.

[13]

Microsoft rolls out MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2 in Foundry public preview

Microsoft has announced three new AI models -- MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 -- in public preview through its AI development platform Microsoft Foundry. The update is part of Microsoft's broader approach to building a unified AI and application agent platform that provides developers with access to models, infrastructure, and tools for building scalable AI systems. The same models are already used across Microsoft products such as Copilot, Bing, PowerPoint, and Azure Speech, and are now available for developers exclusively through Foundry. The MAI model family consists of three multimodal AI models designed to support speech, voice, and image workflows. Together, these models form a first-party AI stack that enables developers to build applications involving real-time transcription, voice interactions, and image generation within a single ecosystem. All three models are available in public preview via Microsoft Foundry. MAI-Transcribe-1 is a speech recognition model designed for enterprise transcription workloads. It supports up to 25 languages and is built to handle varied accents and real-world audio conditions. The model delivers competitive transcription accuracy while reducing computational requirements, achieving approximately 50% lower GPU cost compared to leading alternatives when benchmarked. This efficiency supports scalable deployment across large systems with predictable cost behavior. It is suitable for applications such as real-time transcription, call center analytics, voice input systems, and audio processing pipelines. The model is also used internally in Microsoft's Copilot features, including voice mode and dictation capabilities. MAI-Voice-1 is a speech synthesis model focused on producing natural and expressive audio with low latency. It can generate up to 60 seconds of audio in under one second on a single GPU, enabling fast response times in voice-based applications. 
The model supports a wide range of use cases including conversational agents, voice assistants, and audio content generation. It produces speech output that is designed to sound natural and expressive across different scenarios. MAI-Voice-1 is integrated into Microsoft's ecosystem, supporting features such as Copilot audio experiences and podcast-style outputs. Developers can also use Azure Speech's Personal Voice feature to create custom voices from short audio samples, subject to a responsible AI approval process. MAI-Image-2 is Microsoft's text-to-image generation model designed for creating high-quality visuals from text prompts. It focuses on photorealistic outputs, improved text rendering within images, and better handling of complex layouts and scenes. The model has been trained with input from designers, photographers, and visual storytellers. It debuted at #3 on the Arena.ai leaderboard for image model families, indicating strong performance among comparable systems. MAI-Image-2 can generate detailed and structured visuals suitable for design concepts, marketing materials, product visualization, and internal communications. It is also used within Microsoft products such as Copilot, Bing Image Creator, and PowerPoint, and is adopted by enterprise partners including WPP for creative workflows. Developers can begin experimenting in the Playground and deploy these models into production environments through Foundry while following Microsoft's responsible AI guidelines for features such as voice cloning.

[14]

Microsoft Seeks Self Sufficiency in AI Race, Launches Three Foundational Models

The three models aim to transcribe audio, hold spoken conversations and create images, and offer users cheaper options than OpenAI and Google.

Microsoft cocked a snook at its Big Tech and startup AI rivals by releasing three new foundational models that it trained internally, continuing on the path to self-sufficiency in an era where circular deals are the norm. The three models, MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2, generate text, voice and images respectively.

In a blog post, Mustafa Suleyman, the CEO of Microsoft AI, said, "You'll see more models from us soon in Foundry and directly in Microsoft products and experiences." "At Microsoft AI, we're building Humanist AI. We have a distinct view when creating our AI models -- putting humans at the centre, optimizing for how people actually communicate, training for practical use," Suleyman said. The MAI Superintelligence team, an AI research group led by Suleyman, was formed and announced in November 2025.

"Consistent with our commitment to safe and responsible AI, these MAI models were developed, tested, and rigorously red-teamed. Through Microsoft Foundry, developers get built-in guardrails, governance, and enterprise-grade controls designed to support safe, compliant deployment at scale," he said.

Microsoft first launched its own AI models in September last year, coinciding with OpenAI's launch of GPT-5. The first two models, MAI-Voice-1 and MAI-1-Preview, were tested on Copilot Labs. The first is a speech model capable of generating a minute of audio in under a second; the second "offers a glimpse of future offerings under Copilot."

What has changed for Microsoft in recent times?
With the latest launch, Microsoft appears well on its way to building its own stack of multimodal AI models and competing with rival AI labs, whether Big Tech rivals like Google with Gemini or younger players such as OpenAI, Perplexity and Anthropic. MAI-Transcribe-1 transcribes speech across 25 languages into text and is claimed to be 2.5 times faster than Microsoft's Azure Fast. MAI-Voice-1 is an audio-generating model that produces 60 seconds of audio in one second and lets users create a custom voice. The MAI-Image-2 image-generating model was originally released on MAI Playground in March. Now all three models are being released on Microsoft Foundry, and the transcription and voice models are available in MAI Playground as well.

The company said MAI-Image-2 is being used for creative work by WPP, one of the world's largest marketing and communications groups. "MAI-Image-2 is a genuine game-changer. It's a platform that not only responds to the intricate nuance of creative direction, but deeply respects the sheer craft involved in generating real-world, campaign-ready images," said Rob Reilly, Global Chief Creative Officer, WPP. "WPP has some of the best creative talent in the world and MAI-Image-2 is making them even better."

And yet, Microsoft wants to retain the bridges it built with OpenAI

It is notable that Microsoft has decided to go it alone in an increasingly crowded LLM market and now hopes to sell its latest offerings at lower prices than Google and OpenAI. MAI-Transcribe-1 starts at $0.36 per hour. MAI-Voice-1 starts at $22 per 1 million characters, and MAI-Image-2 starts at $5 for 1 million tokens for text input and $33 for 1 million tokens for image output.
However, despite the three new offerings, Suleyman sought to assuage fears of a break-up with OpenAI. He told VentureBeat in an interview that the company remains committed to its partnership with OpenAI, though after a recent renegotiation Microsoft is free to pursue its own superintelligence research. The company has invested over $13 billion in the AI research lab.

[15]

Microsoft Releases AI Models for Transcription, Voice and Image Generation

Microsoft unveiled three new artificial intelligence models offering speech-to-text transcription as well as voice and image generation. The software giant said Thursday it's working to deploy the models to power its consumer and commercial products, and they are now available for its Foundry customers. One of the new models, MAI-Transcribe-1, offers speech-to-text transcription across 25 languages. The model transcribes more than two times faster than Microsoft's existing Azure Fast offering, the company said. The company's MAI-Voice-1 offering, meanwhile, aims to generate natural, realistic speech. Foundry users will also be able to create their own custom voice using a few seconds of audio. Microsoft's image generation model, MAI-Image-2, is already in use across some enterprise partners including marketing and communications firm WPP, Microsoft said. The model allows users to generate images quickly with natural lighting, accurate skin tones and textures, the company said. Microsoft has faced stumbling blocks in the race for dominance in AI. The company's Copilot chatbot, a product central to its AI strategy, hasn't won over users as a clear ChatGPT alternative, and Wall Street has grown concerned that growth in its most important business unit, the Azure cloud-computing business, is slowing. Microsoft continues to double down on its AI efforts, with plans to invest billions globally in AI computing as demand booms.

[16]

Microsoft unveils three new AI models for speech and imaging: What they can do

The new models include MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2.

Microsoft has introduced three new AI models that can generate text, voice and images. The new models, MAI-Transcribe-1, MAI-Voice-1 and MAI-Image-2, are now available through Microsoft Foundry and MAI Playground. The tech giant says these models focus on speed, accuracy and affordability.

MAI-Transcribe-1 is designed to convert speech into text. It supports transcription in the 25 most-used languages and is designed to perform well even in noisy, real-world environments. Microsoft says MAI-Transcribe-1 can process batch transcription about 2.5 times faster than the company's existing Azure Fast offering. MAI-Transcribe-1 is 'not just the most accurate, but also lightning fast,' the company said.

The second model, MAI-Voice-1, is designed to generate realistic AI voices. Microsoft says it can produce 'natural, realistic speech, rich with nuance, emotional range and expression that preserves speaker identity even across long-form content.' A key feature of MAI-Voice-1 is the ability for developers to create a custom AI voice using only a few seconds of recorded audio. Also, Microsoft claims this model can generate 60 seconds of audio in just one second.

The third model, MAI-Image-2, focuses on image generation. The model is said to offer at least twice the image generation speed compared to previous systems. The MAI-Image-2 AI model has already started rolling out in Bing and PowerPoint. 'MAI-Image-2 was created with photographers, designers, and visual storytellers that demand natural lighting, accurate skin tones and texture, and clear in-image text for diagrams, layouts, and graphics,' the tech giant said.

MAI-Transcribe-1 starts at $0.36 per hour, while MAI-Voice-1 starts at $22 per 1M characters. MAI-Image-2 starts at $5 per 1M tokens for text input and $33 per 1M tokens for image output.
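To make the quoted starting prices concrete, the following is a rough sketch of how a monthly bill might be estimated from them. The function name, parameter names, and the example workload are hypothetical, and the figures are only the "starts at" prices reported above; actual Foundry billing may include tiers or charges not covered here.

```python
from decimal import Decimal

# Starting list prices in USD as quoted in the article (assumed flat rates).
TRANSCRIBE_PER_HOUR = Decimal("0.36")        # MAI-Transcribe-1, per hour of audio
VOICE_PER_M_CHARS = Decimal("22")            # MAI-Voice-1, per 1M characters
IMAGE_TEXT_IN_PER_M_TOKENS = Decimal("5")    # MAI-Image-2, per 1M text input tokens
IMAGE_OUT_PER_M_TOKENS = Decimal("33")       # MAI-Image-2, per 1M image output tokens
MILLION = Decimal(1_000_000)


def estimate_cost(audio_hours=0, tts_chars=0,
                  image_text_tokens=0, image_output_tokens=0):
    """Return an estimated cost in USD for a hypothetical workload."""
    # Convert via str() so float inputs such as 1.5 stay exact in Decimal.
    transcribe = TRANSCRIBE_PER_HOUR * Decimal(str(audio_hours))
    voice = VOICE_PER_M_CHARS * Decimal(str(tts_chars)) / MILLION
    image_in = IMAGE_TEXT_IN_PER_M_TOKENS * Decimal(str(image_text_tokens)) / MILLION
    image_out = IMAGE_OUT_PER_M_TOKENS * Decimal(str(image_output_tokens)) / MILLION
    return transcribe + voice + image_in + image_out


if __name__ == "__main__":
    # Hypothetical workload: 10 hours of audio, 500k characters of speech,
    # 1M text input tokens and 2M image output tokens.
    total = estimate_cost(audio_hours=10, tts_chars=500_000,
                          image_text_tokens=1_000_000,
                          image_output_tokens=2_000_000)
    print(f"Estimated cost: ${total}")
```

`Decimal` is used instead of floats so that small per-unit prices do not pick up binary rounding noise when summed.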

Microsoft unveiled three foundational AI models—MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2—marking its first major independent release since renegotiating its OpenAI partnership. The models handle speech-to-text transcription, voice generation, and image creation, positioning Microsoft as a direct competitor to its $13 billion investment partner while expanding its proprietary capabilities in the crowded AI market.

Microsoft Launches In-House AI Models After OpenAI Contract Renegotiation

Microsoft has released three foundational AI models that generate text, voice, and images, signaling a strategic shift toward independence from its longstanding OpenAI partnership [1]. The announcement marks the first publicly released output from the MAI Superintelligence team, formed in November 2025 under Mustafa Suleyman, CEO of Microsoft AI [4]. Six months after renegotiating a contract that previously barred independent frontier AI development, Microsoft now competes directly with the partner it spent $13 billion cultivating [4].

The three models (MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2) are available through Microsoft Foundry and MAI Playground, with future plans to integrate MAI-Image-2 into Bing and PowerPoint. These in-house AI models do not carry OpenAI's name anywhere on the label, representing a clear departure from Microsoft's previous reliance on its partner's technology [4].

MAI-Transcribe-1 Delivers Enterprise-Grade Speech Recognition

The speech-to-text model claims the lowest word error rate across 25 languages on the FLEURS benchmark, averaging 3.8 percent [4]. Microsoft reports that MAI-Transcribe-1 outperforms OpenAI's Whisper-large-v3 on all 25 languages, Google's Gemini 3.1 Flash on 22 of 25, and ElevenLabs' Scribe v2 on 15 of 25 [4]. The model runs 2.5 times faster than Microsoft's Azure Fast offering and operates at approximately 50 percent lower GPU cost than leading alternatives [3].

Pricing starts at $0.36 per hour of audio, positioning it competitively on price [1]. Suleyman told The Verge that the transcription model runs at "half the GPU cost of the other state-of-the-art models" and was built by a team of just 10 people [5]. The model handles noisy real-world conditions such as call centers and conference rooms, with Microsoft testing integrations with Copilot and Teams [5].

Voice and Image Models Complete Multimodal AI Stack

MAI-Voice-1 generates 60 seconds of natural-sounding audio in under one second on a single GPU and supports custom voice creation from a few seconds of sample audio [4]. The text-to-speech model is priced at $22 per 1 million characters [1]. Combined with MAI-Transcribe-1 and a large language model of the customer's choosing, it forms a complete voice pipeline that runs entirely on Microsoft infrastructure without any dependency on OpenAI's technology [4].

MAI-Image-2, originally released on MAI Playground on March 19, debuted at number three on the Arena.ai text-to-image leaderboard, placing behind only Google's Gemini 3.1 Flash and OpenAI's GPT Image 1.5 [4]. The model was developed in collaboration with photographers, designers, and visual storytellers, with WPP, one of the world's largest marketing groups, among the first enterprise partners building with it at scale [4]. Pricing starts at $5 for 1 million tokens for text input and $33 for 1 million tokens for image output [1].

Strategic Shift Follows Partnership Renegotiation

Until the September 2025 renegotiation, Microsoft's original partnership agreement with OpenAI contractually prevented the company from independently pursuing general AI development [4]. The revised memorandum of understanding changed that calculus fundamentally: Microsoft retained licensing rights to everything OpenAI builds through 2032, gained $250 billion in new Azure cloud business commitments, and crucially won the freedom to build competing models [4].

Suleyman acknowledged the pivot directly, stating that the contract renegotiation enabled Microsoft to independently pursue its own superintelligence [4]. In a March internal memo first reported by Business Insider, Suleyman wrote that he intended to focus all of his energy on superintelligence and deliver world-class models for Microsoft over the next five years [4]. He told VentureBeat that Microsoft plans to eventually build a frontier large language model to be "completely independent" if needed [5].

Microsoft Positions Models as Cost-Effective Alternative

In an increasingly crowded market, Microsoft hopes a selling point for these models is that they are cheaper than those from Google and OpenAI [1]. "At Microsoft AI, we're building Humanist AI. We have a distinct view when creating our AI models -- putting humans at the center, optimizing for how people actually communicate, training for practical use," Suleyman wrote in a blog post [1].

Naomi Moneypenny, who leads the Microsoft Azure AI Foundry Models product team, noted that "these are the same models already powering our own products such as Copilot, Bing, PowerPoint, and Azure Speech" [3]. Copilot's Audio Expressions runs on MAI-Voice-1 while Copilot's Voice Mode transcription service uses MAI-Transcribe-1 [3].

Microsoft Foundry, the platform formerly known as Azure AI Foundry and before that Azure AI Studio, now serves developers at more than 80,000 enterprises including 80 percent of Fortune 500 companies [4]. That distribution advantage makes the MAI model family strategically significant: Microsoft does not need to beat OpenAI on every benchmark to shift enterprise spending [4]. The company also recently hired former Allen Institute for AI CEO Ali Farhadi and other top AI researchers to further bolster Suleyman's team [5].
