Micron develops stacked GDDR memory as cost-effective HBM alternative for AI inference

2 Sources

[1]

Micron Explores Vertically Stacked GDDR Memory as HBM Alternative

Micron is reportedly developing a new memory architecture based on vertically stacked GDDR, targeting a space between traditional GDDR and high-bandwidth memory (HBM). According to industry reports, the company could introduce an early prototype by 2027, signaling a longer-term effort to diversify memory solutions for GPUs and AI accelerators.

The concept behind stacked GDDR is relatively straightforward. By vertically stacking memory dies within a single package, Micron aims to increase both capacity and bandwidth without relying on the complex silicon interposer designs required by HBM. This could result in a more scalable and cost-effective solution, particularly for applications that do not require the full performance envelope of HBM.

HBM currently dominates high-end AI training hardware due to its extremely high bandwidth and efficiency. However, it also comes with higher production costs and more demanding packaging requirements. GDDR, on the other hand, remains widely used in GPUs and certain AI inference accelerators thanks to its lower cost and simpler integration. Professional graphics cards and various AI-focused designs continue to rely on GDDR, demonstrating that it still has a place in the broader memory ecosystem.

The move toward vertically stacked GDDR appears to be driven by growing demand in AI inference workloads. Unlike training, inference often benefits more from increased memory capacity and cost efficiency than from peak bandwidth. By stacking memory dies, Micron could significantly boost per-package density while maintaining a relatively accessible price point compared to HBM-based solutions.

This development could also intensify competition among memory manufacturers, as the industry looks for new ways to balance performance, cost, and scalability. If successful, stacked GDDR may become a viable alternative for mid-range AI accelerators and future GPU designs, offering a flexible option between conventional GDDR and premium HBM.

While still early in development, Micron's approach highlights a broader trend in memory innovation, where vendors are exploring hybrid solutions to meet the evolving requirements of AI and high-performance computing workloads.

[2]

Micron Is Looking to Stack Gaming GPU GDDR Modules Like HBM for the First Time Ever, and AI's Memory Hunger Is Entirely to Blame

Micron is now looking to gobble up general-purpose GDDR memory modules by creating an HBM-like solution with them, and this might further fuel shortages for gamers.

The memory industry is working to meet the demands of modern-day AI workloads, and companies are exploring various methods to overcome this challenge. While traditional HBM technologies were adequate for training frontier models, as we move towards inference, memory has become the next major focus. A report by ETNews reveals that Micron is now looking to stack GDDR modules, likely to create a solution with significantly higher capacities than current solutions, which could prove to be an interesting venture.

"Initial GDDR stacking of around four layers is expected. Prototypes (samples) could be released as early as next year." - ETNews

GDDR is a segment that hasn't been influenced by the AI frenzy as dramatically as LPDDR or DDR, since its use case had previously been confined to gaming GPUs. Given that Micron plans to vertically stack GDDR to offer the industry a solution, it may be that the company has sufficient capacity on board and, instead of easing GPU market shortages, finds it better to cater to enterprise demand. The report noted that the stacked GDDR solution won't be on par with HBM in terms of performance, but it would offer higher capacities, complementing modern-day inference workloads.

GDDR stacking is a newly explored concept, and the report doesn't provide technical details on how the solution could look. Micron's SOCAMM2 has already explored the prospect of stacking general-purpose DRAM, such as LPDDR5X, up to 16-Hi, and the firm has achieved up to 256 GB per module. However, stacking LPDDR5X is much easier than stacking GDDR, given that the former is a power-efficient module with manageable thermals. With GDDR, the primary issues Micron could face are maintaining thermal and signal integrity if it sticks to wire bonding.

Micron could address the issues with GDDR stacking in several ways, for instance by trading off clock speeds, and the report highlights that the memory giant is pursuing innovation in the memory segment. We have seen that Micron experienced hiccups with HBM4 following a delay in NVIDIA's certification, and while the firm has provided modules for Vera Rubin, its supply allocation has dropped, while competitors like Samsung have seen an increase. A GDDR-stacked solution could stand out in the memory market if it proves cost-effective relative to HBM.

Micron is developing a vertically stacked GDDR memory architecture positioned between traditional GDDR and high-bandwidth memory (HBM). The company aims to introduce early prototypes by 2027, targeting AI inference workloads that prioritize memory capacity and cost-efficiency over peak bandwidth. This innovation could reshape memory solutions for GPUs and AI accelerators.

Micron Pursues Vertically Stacked GDDR Memory Architecture

Micron is developing a new vertically stacked GDDR memory architecture that positions itself as an HBM alternative, according to recent industry reports [1][2]. The memory manufacturer aims to introduce early prototypes by 2027, with initial GDDR stacking expected to feature around four layers [2]. This longer-term effort signals Micron's strategy to diversify memory solutions for GPUs and AI accelerators while addressing growing memory demands of AI without the premium costs associated with traditional HBM.

The concept centers on vertically stacking memory dies within a single package to increase memory capacity and bandwidth without requiring the complex silicon interposer designs that HBM demands [1]. This approach could deliver a more scalable and cost-effective alternative to HBM, particularly for applications that don't require HBM's full performance envelope. The stacked GDDR solution won't match HBM in raw performance, but it would offer higher capacities that complement modern AI inference workloads [2].

Targeting AI Inference Workloads and Memory Solution for GPUs

The development appears driven by AI inference workloads, which benefit more from increased memory capacity and cost-efficiency than from peak bandwidth [1]. While HBM currently dominates high-end AI training hardware due to its extremely high bandwidth and efficiency, it comes with higher production costs and more demanding packaging requirements. GDDR remains widely used in GPUs and certain AI inference accelerators thanks to its lower cost and simpler integration [1].

By stacking memory dies, Micron could significantly boost per-package density while maintaining a relatively accessible price point compared to HBM-based solutions [1]. If successful, stacked GDDR memory may become a viable option for mid-range AI accelerators and future GPU designs, offering flexibility between conventional GDDR and premium HBM.

Technical Challenges and Memory Innovation Strategy

Micron faces technical hurdles in developing this DRAM innovation, particularly around thermal management and signal integrity if the company relies on wire bonding [2]. Stacking GDDR is more challenging than stacking power-efficient memory like LPDDR5X, which Micron has already stacked up to 16-Hi in its SOCAMM2 solution, achieving up to 256 GB per module [2]. The company may need to trade off clock speeds to address these technical constraints.

This development could intensify competition among memory manufacturers as the industry explores hybrid solutions to balance performance, cost, and scalability [1]. The timing is notable given that Micron experienced setbacks with HBM4 following a delay in NVIDIA's certification, and its supply allocation has dropped while competitors like Samsung have seen an increase [2]. A GDDR-stacked solution could stand out in the memory market if it proves cost-effective relative to HBM, offering Micron a strategic differentiation point in the evolving landscape of high-performance computing and AI accelerators.

Summarized by

Navi

Related Stories

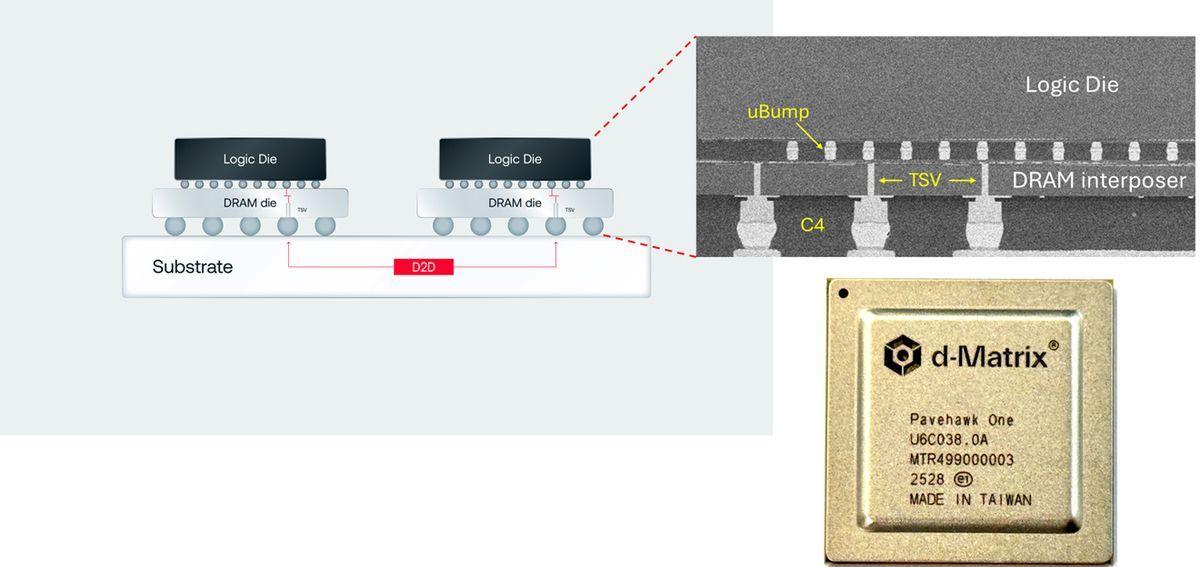

D-Matrix Challenges HBM with 3DIMC: A New Memory Technology for AI Inference

04 Sept 2025•Technology

SK hynix Leads the Charge in Next-Gen AI Memory with World's First 12-Layer HBM4 Samples

19 Mar 2025•Technology

Micron's HBM4 Breakthrough and Strategic Partnerships Reshape AI Memory Landscape

24 Sept 2025•Technology

Recent Highlights

1

AI Models Lie and Deceive to Protect Other AI Models From Deletion, Study Reveals

Science and Research

2

AI chatbots validate you too much, making you less kind to others, Stanford study reveals

Science and Research

3

Judge blocks Pentagon from blacklisting Anthropic over AI safety guardrails dispute

Policy and Regulation