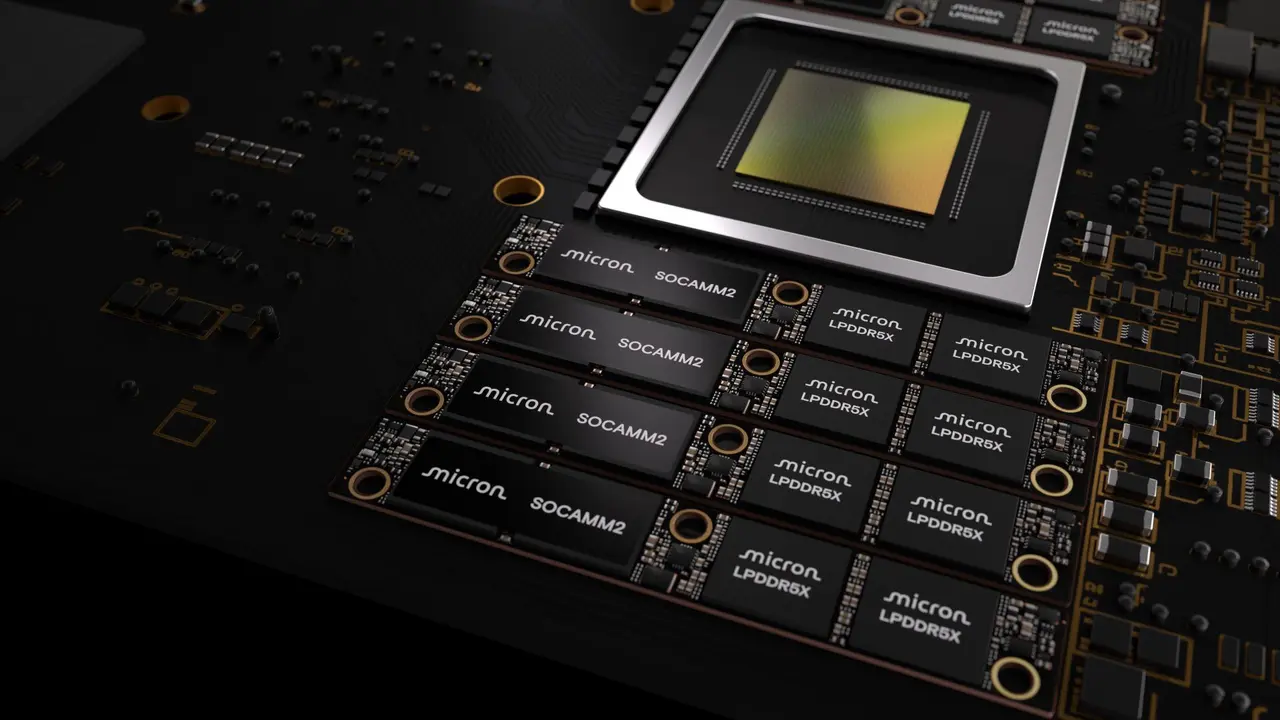

Micron ships 256GB SOCAMM2 modules to customers, bringing 2TB AI memory capacity per CPU

5 Sources

5 Sources

[1]

Micron sampling first 256GB SOCAMM2 memory packages to customers -- 2TB of RAM per CPU is now in reach of datacenter players

Most of the conversation about speed in AI datacenters revolves around the accelerators themselves, discussing tokens per second and the like. However, the battle for AI performance is fought on multiple fronts, and one of them is in memory capacity and power efficiency. Today, Micron unveiled what look to be the industry's first 256 GB SOCAMM2 units, a sizable step up from the last-best 192 GB modules released just six months ago. The company says it's now shipping samples to customers, who will most definitely be happy at the notion of having 2TB of memory wired to each CPU. For just one major example, the typical Nvidia NVL72 rack can now carry 72 TB of RAM for its 36 CPUs. The 33% improved density over previous-generation SOCAMM2 is excellent news on its own, but that's not the only advantage of this form factor. The new modules ought to offer 66% better power efficiency compared to bog-standard RDIMMs, and they're compatible with increasingly popular (and necessary) liquid cooling for AI servers. According to Micron, the new sticks are the first to employ its 32 Gb (4 GB) LPDDR5X monolithic dies, where "monolithic" means all the memory and relevant circuitry are part of a single die. Given the target market for these large SOCAMM2s, the firm touts the real-world performance improvements beyond just density and power efficiency. Having this much RAM available to a single processor lets AI models use much larger context windows. Consequently, it helps reduce the all-important TTFT (Time To First Token), meaning bots start answering your questions quicker. In an AI future where context is literally everything, every gigabyte of memory closer to the xPUs in a system matters, and Micron's advancement today will doubtless be found in massive AI server installations worldwide as companies allocate hundreds of billions of dollars of capex in the race toward AI supremacy. The SOCAMM2 form factor is the result of a partnership between Nvidia and memory makers Micron, Samsung, and SK hynix. The SOCAMM standard was originally designed by Nvidia, but the accelerator mogul reportedly had trouble getting the modules to operate without overheating on high-density servers. CEO Jensen Huang wisely teamed up with the folks who make computer memory for a living, resulting in SOCAMM2s with growing density and lower power consumption. Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[2]

Micron squeezes 64 32GB LPDDR5x chips into one module

Total capacity exceeds the previous module generation by a third * Micron introduces dense 256GB LPDDR5x module aimed squarely at AI servers * Eight SOCAMM2 modules can push server memory capacity to a massive 2TB * AI inference workloads increasingly shift performance bottlenecks toward system memory capacity Large language models (LLMs) and modern inference pipelines increasingly demand enormous memory pools, forcing hardware vendors to rethink server memory architecture. Micron has now introduced a 256GB SOCAMM2 memory module intended for data center systems where capacity, bandwidth, and power efficiency all influence overall performance. The module relies on 64 monolithic 32GB LPDDR5x chips, forming a dense LPDRAM package that addresses the growing memory footprint required by contemporary AI workloads. Scaling memory capacity for AI server platforms The module also increases the maximum memory available per processor configuration. When eight of these SOCAMM2 modules are installed in an eight-channel server CPU, the total capacity can reach 2TB of LPDRAM. That figure exceeds the previous 192GB module generation by roughly one-third, allowing systems to accommodate larger context windows and more demanding inference tasks. Micron describes the SOCAMM2 design as more efficient than conventional server memory modules. "Micron's 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC," said Raj Narasimhan, senior vice president and general manager of Micron's Cloud Memory Business Unit. "Our continued leadership in low-power memory solutions for data center applications has uniquely positioned us to be the first to deliver a 32Gb monolithic LPDRAM die, helping drive industry adoption of more power-efficient, high-capacity system architectures." The company says the new LPDRAM module consumes roughly one-third the power of comparable RDIMMs while occupying only one-third of the physical footprint. Its reduced energy demand and smaller module size allow higher rack density inside large data center deployments, while lower memory power reduces the thermal load and infrastructure costs. The SOCAMM2 architecture also follows a modular design intended to simplify maintenance and future expansion. The format supports liquid-cooled server systems and can accommodate additional capacity as memory requirements grow alongside model complexity and dataset scale. Micron states the 256GB SOCAMM2 module can influence certain inference operations under unified memory architectures. The company reports more than a 2.3 x improvement in time-to-first-token during long-context inference when the module is used for key value cache offloading. In standalone CPU workloads, the LPDRAM configuration reportedly delivers over 3 x better performance per watt compared with mainstream server memory modules. The company's LPDRAM portfolio spans components from 8GB to 64GB and SOCAMM2 modules ranging from 48GB to 256GB, and customer samples of the 256GB SOCAMM2 module are already shipping. Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button! And of course you can also follow TechRadar on TikTok for news, reviews, unboxings in video form, and get regular updates from us on WhatsApp too.

[3]

Micron ships world's first 256GB LPDRAM SOCAMM2 memory module

TL;DR: Micron introduces the world's first 256GB LPDRAM SOCAMM2 module with a monolithic 32Gb LPDDR5X die, offering one-third the power consumption and a smaller footprint. Designed for AI and data centers, it delivers 2.3x faster performance and improved efficiency, advancing high-capacity, low-power memory solutions. With one-third of the power consumption and a smaller footprint, Micron has unveiled a memory world first, a monolithic 32Gb LPDDR5X die powering a high-capacity 256GB LPDRAM SOCAMM2 module. And with 2TB of LPDRAM per 8-channel CPU, Micron says you're looking at a 2.3x speed increase in the all-important 'time to first token' metric. Yes, this impressive memory module is designed to serve the AI and Data Center markets, targeting LLM inference and workloads where memory capacity, bandwidth, efficiency, and latency influence performance and scalability. SOCAMM2 presents an ideal solution, as its smaller footprint and lower power consumption make it more attractive than traditional server memory like RDIMMs. Micron says that it has been collaborating with NVIDIA in the development of sophisticated memory for AI, which has led to the world's first 256GB LPDRAM SOCAMM2 module. Although GPU VRAM is critical for AI, fast system or server memory is right there, as KV cache offloading moves key/value data from GPU memory to lower-cost solutions like this. "Micron's 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC," said Raj Narasimhan, senior vice president and general manager of Micron's Cloud Memory Business Unit. "Today's announcement highlights Micron's technology and packaging advancements to deliver the highest-capacity, lowest-power modular memory solution with the smallest footprint in the industry. Our continued leadership in low-power memory solutions for data center applications has uniquely positioned us to be the first to deliver a 32Gb monolithic LPDRAM die, helping drive industry adoption of more power-efficient, high-capacity system architectures."

[4]

Micron Ships Out the "World's First" 256GB SOCAMM2 Modules Targeted Toward the Agentic AI Frenzy

Micron's latest breakthrough in the memory industry is the debut of the more capable SOCAMM2 memory modules, featuring leading capacity and power efficiency. With the 'applications' layer of AI, the memory bottleneck is growing as workloads continue to scale, which is why DRAM manufacturers have paid special attention to advancements being made with HBM and other AI-specific memory products. In Micron's latest announcement, the firm has set a "new benchmark" with SOCAMM2 memory modules, as they ramp up the per-module capacity to 256 GB, marking a massive leap from the previous 192 GB threshold. According to the company, SOCAMM2 will be integrated into modern-day AI infrastructure equipment and help address memory constraints. Micron's achievements in delivering massive memory capacity and bandwidth using less power than traditional server memory with 256GB SOCAMM2 is enabling the next generation of AI CPUs. - Ian Finder, Head of Product, Data Center CPUs at NVIDIA With the latest SOCAMM2 iteration, Micron has increased the capacity of a single LPDRAM monolithic die to 32 GB. With the 256 GB model, 2 TB of LPDRAM per 8-channel CPU is provided, enabling AI servers to process long-context windows with ease. Micron also says that with the new SOCAMM2 solution, the TTFT has increased by 2.3 times for long-context inference, significantly helping complement agentic workloads, where standalone CPU applications are a key focus. SOCAMM2 is a solution developed in cooperation with NVIDIA, and in a previous post, we discussed how Vera Rubin will be one of the first AI infrastructure offerings to utilize the new memory standard. In the AI world, memory has become a significant asset in workloads where latency and context are important, but at the same time, SOCAMM2 is one of the products that would take up a decent portion of the DRAM supply, likely eating into allocations for general-purpose products like GDDR7. Micron says that 256GB SOCAMM2 samples have already been shipped to customers, and that the solution will be on showcase at this year's GTC 2026.

[5]

Micron Technology, Inc. Sets New Benchmark with the World's First High-Capacity 256Gb LPDRAM SOCAMM2 for Data Center Infrastructure

Micron Technology, Inc. extended its leadership in low-power server memory by shipping customer samples of the industry?s highest-capacity LPDRAM module ? 256GB SOCAMM2. Enabled by the industry's first monolithic 32Gb LPDDR5X design, this milestone represents a transformational step forward for AI data centers, delivering low-power memory capacity that can unlock new system architectures. The convergence of AI training, inference, agentic AI and general-purpose compute are driving more demanding memory requirements and reshaping data center system architectures. Modern AI workloads drive large model parameters, expansive context windows and persistent key value (KV) caches, while core compute continues to scale in data intensity, concurrency and memory footprint. Across these workloads, memory capacity, bandwidth efficiency, latency and power efficiency have become primary system level constraints, directly influencing performance, scalability and total cost of ownership. LPDRAM?s unique combination of these attributes position it as a cornerstone solution for both AI and core compute servers in increasingly power and thermally constrained data center environments. Micron is collaborating with NVIDIA to co-design sophisticated memory for the needs of advanced AI infrastructure. Micron?s 256GB SOCAMM2 delivers higher memory capacity, substantially lower power consumption and faster performance for a variety of AI and general-purpose computing workloads. With one-third more capacity than the prior highest capacity 192GB SOCAMM2, 256GB SOCAMM2 provides 2TB of LPDRAM per 8-channel CPU for larger context windows and complex inference workloads. SOCAMM2 consumes one-third of the power compared with equivalent RDIMMs, while using only one-third of the footprint, improving rack density and reducing the total cost of ownership. In unified memory architectures, 256GB SOCAMM2 improves time to first token by more than 2.3 times for long context, real-time LLM inference when used for KV cache offload compared to currently available solutions. In standalone CPU applications, LPDRAM delivers more than 3 times better performance per watt than mainstream memory modules for high-performance computing workloads. The modular SOCAMM2 design improves serviceability, supports liquid-cooled server architectures and enables future capacity expansion as AI and core compute memory requirements continue to grow. Micron continues to play a leading role in the JEDEC SOCAMM2 specification definition and maintains deep technical collaborations with system designers to drive industry-wide improvements in power efficiency and performance for next-generation data center platforms. Micron is now shipping customer samples of its 256GB SOCAMM2 and offers the industry?s broadest data center LPDRAM portfolio, spanning 8GB to 64GB components and 48GB to 256GB SOCAMM2 modules. One-third of the power consumption calculated based on watts of power used by one 128GB, 128-bit bus width SOCAMM2 module compared to two 64GB, 64-bit bus width DDR5 RDIMMs. One-third footprint calculation compares SOCAMM2 area (14x90mm) versus a standard server RDIMM. Results are based on Micron internal testing of real-time inference with Llama3 70B model (with FP16 quantization) using 500K context length and 16 concurrent users. The projected TTFT latency improvement is based on a latency of 0.12s for 2TB LPDRAM per CPU vs. 0.28s for 1.5TB LPDRAM per CPU. Micron internal testing measuring Pot3D solar physics HPC code performance on identical capacities of LPDDR5X and DDR5.

Share

Share

Copy Link

Micron has begun shipping samples of the industry's first 256GB LPDRAM SOCAMM2 memory module to customers, marking a 33% capacity increase over previous 192GB modules. The high-density memory module enables AI servers to reach 2TB of RAM per 8-channel CPU while consuming one-third the power of traditional RDIMMs and occupying just one-third of the footprint.

Micron Ships Industry's First 256GB SOCAMM2 to Transform AI Data Centers

Micron has begun shipping customer samples of what it claims is the industry's first 256GB LPDRAM SOCAMM2 memory module, a development that positions AI data centers to dramatically expand their memory capacity while reducing power consumption

1

. The new high-density memory module represents a 33% capacity increase over the previous generation's 192GB modules released just six months ago, enabling AI servers to reach 2TB of LPDRAM per 8-channel CPU2

. This advancement addresses a critical bottleneck as large language models and inference workloads increasingly demand enormous memory pools to handle expanding context windows and complex AI tasks.Monolithic 32Gb LPDDR5X Dies Power Unprecedented Density

The 256GB SOCAMM2 module achieves its capacity through Micron's industry-first monolithic 32Gb LPDDR5X dies, where all memory and relevant circuitry are integrated into a single die

3

. Each module packs 64 of these 32GB chips into a compact form factor that consumes approximately one-third the power of comparable RDIMMs while occupying only one-third of the physical footprint5

. This power efficiency translates directly into reduced thermal load and infrastructure costs for data center operators deploying hundreds of billions of dollars in AI infrastructure. The smaller footprint also improves rack density, allowing more computing power to fit within existing data center space constraints.

Source: Wccftech

NVIDIA Collaboration Drives AI Memory Innovation

Micron developed the 256GB SOCAMM2 in collaboration with NVIDIA, building on the SOCAMM2 standard that emerged from a partnership between NVIDIA and memory manufacturers Micron, Samsung, and SK hynix

1

. The original SOCAMM standard was designed by NVIDIA, but the company faced challenges with overheating on high-density servers, prompting collaboration with memory specialists to create the improved SOCAMM2 format. Ian Finder, Head of Product for Data Center CPUs at NVIDIA, noted that "Micron's achievements in delivering massive memory capacity and bandwidth using less power than traditional server memory with 256GB SOCAMM2 is enabling the next generation of AI CPUs"4

. The modular SOCAMM2 design supports liquid-cooled server architectures and enables future capacity expansion as AI memory requirements continue to grow.Related Stories

Performance Gains Target Time To First Token and Agentic AI

The increased memory capacity directly impacts AI performance metrics that matter most to users. Micron reports that the 256GB SOCAMM2 improves Time To First Token (TTFT) by more than 2.3 times for long-context, real-time LLM inference when used for key value cache offloading compared to currently available solutions

5

. This improvement was demonstrated in internal testing using the Llama3 70B model with FP16 quantization, 500K context length, and 16 concurrent users. In standalone CPU applications focused on High-Performance Computing (HPC) workloads, LPDRAM delivers more than 3 times better performance per watt than mainstream memory modules. The solution particularly benefits agentic AI workloads where standalone CPU applications play a key role in processing complex, multi-step tasks that require sustained access to large context windows4

.Datacenter Deployment and Industry Adoption

For typical NVIDIA NVL72 rack configurations, the new modules enable deployment of 72TB of RAM across 36 CPUs, a substantial increase that allows AI systems to accommodate larger model parameters and more demanding inference tasks

1

. Raj Narasimhan, senior vice president and general manager of Micron's Cloud Memory Business Unit, emphasized that "Micron's 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC"3

. Micron continues to play a leading role in the JEDEC SOCAMM2 specification definition and maintains deep technical collaborations with system designers to drive industry-wide improvements. The company now offers the industry's broadest data center LPDRAM portfolio, spanning 8GB to 64GB components and 48GB to 256GB SOCAMM2 modules, with the 256GB version set to be showcased at GTC 20264

. As AI workloads continue to scale and memory becomes an increasingly critical constraint on system performance and scalability, these high-capacity modules address the convergence of AI training, inference, and general-purpose compute that is reshaping data center system architectures.References

Summarized by

Navi

[1]

[4]

Related Stories

Nvidia Collaborates with Major Memory Makers on New SOCAMM Format for AI Servers

24 Mar 2025•Technology

SK hynix 256 GB DDR5 module slashes Intel Xeon power consumption by 18%, saving data centers millions

19 Dec 2025•Technology

Micron Leads Memory Innovation with World's First 1γ LPDDR5X for AI-Driven Smartphones

05 Jun 2025•Technology

Recent Highlights

1

Anthropic's Claude AI can now control your computer to complete tasks autonomously

Technology

2

Nvidia's Jensen Huang calls OpenClaw the next ChatGPT, sending Chinese AI stocks soaring

Technology

3

Elon Musk unveils $20 billion Terafab chip manufacturing project to produce terawatt of computing

Technology