Microsoft's MAI-Image-2 cracks top three on Arena.ai leaderboard behind Google and OpenAI

5 Sources

[1]

Microsoft's MAI-Image-2 enters the top three AI image generators

The second version of Microsoft's in-house image model lands at #3 on Arena.ai's leaderboard, behind only Google and OpenAI, and begins rolling out across Copilot and Bing Image Creator today.

A year ago, Microsoft was generating images for Bing and Copilot almost entirely with OpenAI's models. On Thursday, the company's in-house team announced MAI-Image-2, a second-generation image model that has debuted at number three on the Arena.ai text-to-image leaderboard, placing Microsoft's own technology directly behind Google's Gemini 3.1 Flash and OpenAI's GPT Image 1.5.

The announcement comes from the Microsoft AI Superintelligence team, the internal research group that Mustafa Suleyman formed in November 2025 and now leads full-time following a leadership reorganisation at Microsoft announced just two days ago. Suleyman stepped back from his broader CEO role at Microsoft AI on Monday to focus exclusively on that team and its frontier model ambitions. MAI-Image-2 is the first model to arrive publicly since that shift.

MAI-Image-1, the predecessor, launched in October 2025 and debuted in the top ten on LMArena, the same crowd-sourced preference leaderboard, then known by a slightly different name. At the time, it was Microsoft's first image generation model developed entirely in-house, and the company integrated it into Bing Image Creator and Copilot alongside DALL-E 3 and GPT-4o.

MAI-Image-2 extends that trajectory: it was built with input from photographers, designers, and visual storytellers, and it focuses on three areas where creatives said the gap was largest. The first is photorealism: natural light, accurate skin tones, and environments with physical texture and wear. Microsoft says the model is designed to reduce the post-production work that currently sits between generation and usable output. The second is in-image text: MAI-Image-2 is built to handle readable lettering within scenes, from signage to infographics to typographic layouts, a category where many image models still struggle to produce consistent, accurate characters. The third is detailed scene generation: dense compositions, surreal concepts, cinematic framing, and the kind of imaginative work where precise prompting and high fidelity matter most.

Access is rolling out through multiple channels. The MAI Playground, Microsoft's public model testing environment at playground.microsoft.ai, has the model available now. MAI-Image-2 is also beginning to roll out across Copilot and Bing Image Creator. Enterprise customers can access the model via API today, and Microsoft says API access will open to any developer through Microsoft Foundry "soon", though no specific date has been given for that broader availability. A commercial application form is available for organisations interested in large-scale image generation use.

The announcement also notes that the team's next-generation GB200 compute cluster is now operational, a reference to NVIDIA's Blackwell-architecture hardware. No details were provided on cluster scale. The infrastructure claim appears to be positioning context for the models the superintelligence team plans to release next, rather than a technically verifiable specification.

The pace is notable. Microsoft announced its first in-house voice model (MAI-Voice-1) and its first text model preview (MAI-1-preview) in August 2025. MAI-Image-1 followed in October. Now, five months later, the second image generation model is placing in the top three on the most widely cited crowd-sourced image leaderboard in the field. That cadence suggests the superintelligence team is moving at a different pace from Microsoft's historically slower consumer product cycles, and doing so with hardware and infrastructure it increasingly owns rather than rents from OpenAI.

[2]

Microsoft's new image AI just cracked top 3 on a major leaderboard

MAI-Image-2 is now available through Copilot, Bing Image Creator, and MAI Playground, offering advanced capabilities for creative professionals.

On Thursday, Microsoft unveiled a new AI model in a Microsoft AI blog post. It's called MAI-Image-2, and it's primarily built for generating images from text-based prompts -- with a twist. According to Microsoft, MAI-Image-2 is best used to generate photorealistic images with elements like "natural light, accurate skin tones, environments that feel lived-in." The AI model is purpose-built for "creative work," and Microsoft explains: "For MAI-Image-2 we spoke with photographers, designers, and visual storytellers who made it clear where we could make the biggest difference for everyday creative work."

In addition to photorealistic creations, MAI-Image-2 can also consistently generate text within its images, making it a strong option for infographics, slides, diagrams, and more. MAI-Image-2 is also suitable for "surreal concepts, ornate compositions, and ambitious worlds."

Microsoft says MAI-Image-2 has pushed MAI up the rankings: it now ranks as the third-best text-to-image lab on the Arena.ai leaderboard. MAI-Image-2 is currently rolling out on both Copilot and Bing Image Creator. You can experiment with the latest MAI models via the MAI Playground (and share feedback with the developers).

[3]

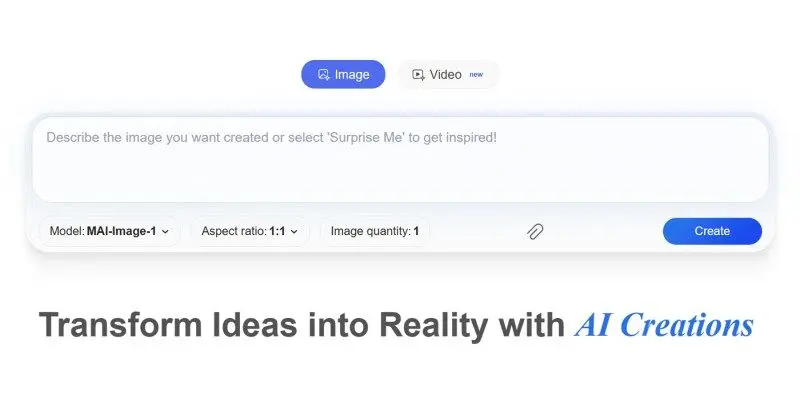

Microsoft Launches MAI-Image-2 Text-to-Image Model -- And It's Better Than Expected - Decrypt

Strict filters, usage caps, and missing features currently limit real-world usefulness, however.

Microsoft has been quietly building its own image generator. Announced Thursday by the company's AI Superintelligence team, MAI-Image-2 has already landed at #3 on the Arena.ai leaderboard -- behind only the models from Google and OpenAI -- making Microsoft a legitimate player in a space it had previously outsourced to its partners.

That last part is worth sitting with. Microsoft has been paying OpenAI billions to power Copilot and Bing Image Creator. Building a competing image model in-house is an interesting business move.

MAI-Image-2 is available now in the MAI Playground, with a gradual rollout to Copilot and Bing Image Creator underway. API access is currently limited to select enterprise customers, with broader availability on Microsoft Foundry coming soon.

The team says it built the model by talking directly to photographers, designers, and visual storytellers. Three things came out of those conversations: improved photorealism, more reliable in-image text generation, and stronger capacity for detailed, imaginative scene construction. Whether or not that process translated into a genuinely useful tool is a different question.

The first thing you notice when you open the MAI Playground is how understated it is. The interface is minimal and clean, visually somewhere between Claude and Hume, with none of the maximalist dashboard energy you get from Midjourney or the chatbot experience you get from Gemini.

The images themselves are genuinely pretty strong. Photorealism is a real strength here -- the model has a solid grasp of natural light, surface texture, and spatial relationships. It doesn't quite hit the level of Google's Nano Banana Pro, which still rules the leaderboard for a reason, but in some realism tests it comes surprisingly close. Better prompting likely pushes it further; our initial results improved noticeably as we dialed in our descriptions.

Even complex, unrealistic scenes with parameters that defied logic were properly handled by the model, beating other models in details like body proportions, limb position, depth, and spatial positioning. For example, an image of a dog riding a bike in the middle of the ocean is arguably the most accurate one we've produced in zero-shot tests.

Text generation is a legitimate highlight. MAI-Image-2 handled complex typography with far more consistency than we expected -- large blocks of text in images, posters, signage -- without the typical garbling you see from most models. We even pushed it toward multilingual text: It managed to generate some hanzi Chinese characters, though the accuracy wasn't perfect. Still, the fact that it tried and got partway there is notable.

The model understands artistic style well, shifting between photographic realism, graphic design aesthetics, and illustrated styles without much friction. It reads prompts carefully, including stylistic instructions, and delivers something coherent on the other end. For a broad range of visual tasks, it's versatile.

Now for the harder truths. MAI-Image-2 is aggressively filtered -- more so than Google Imagen, and more so than OpenAI's DALL-E. We ran our usual test of a cartoon drawing of a spider chasing a woman, and got a flat refusal. Again, that's a drawing -- of a spider. The content moderation here is tuned to a level that will frustrate anyone doing creative work in gray areas, horror illustration, or anything that reads as remotely tense.

The usage limits are equally restrictive. Each generation triggers a 30-second cooldown. After 15 images, you're locked out for 24 hours. For casual experimentation, that's manageable. For any kind of production workflow, it's a dealbreaker in the native UI.

There's also only one aspect ratio: 1:1. No landscape, no portrait, no custom ratios. In 2026, that's a significant limitation -- particularly for social media content, which is precisely where Microsoft presumably wants this embedded in Copilot.

And speaking of Copilot: MAI-Image-2 isn't there yet. The rollout is happening, but as of today, the product you'd actually want it in doesn't have it.

One more missing piece: This is purely a text-to-image tool. No image-to-image, no inpainting, no outpainting, no reference image support. For users expecting anything close to Firefly or Midjourney's editing capabilities, this will feel half-finished.

MAI-Image-2 performs better than its leaderboard ranking suggests. In our hands-on tests, it beat GPT-Image on image quality and text rendering, which is interesting given that GPT-Image sits above it on Arena.ai's leaderboard. Benchmark positions don't always tell the full story.

The strategic logic behind building this is clear. Microsoft has been licensing OpenAI's image models for Copilot while simultaneously funding OpenAI's biggest competitor, Anthropic. Having a capable in-house model reduces dependency, cuts costs at scale, and gives Microsoft something to iterate on without asking for permission. From that angle, MAI-Image-2 doesn't need to beat Nano Banana. It just needs to be good enough -- and it is.

The problem is the product constraints. The generation caps, the strict content policy, the 1:1-only output, and the missing editing features are the kinds of limitations that put a ceiling on real-world utility. A model this capable deserves infrastructure that matches it.

MAI-Image-2 is a strong technical foundation hamstrung by conservative product decisions. Once Microsoft loosens the restrictions, this becomes a serious contender. Right now, it's a promising preview of what Microsoft's image stack could actually become.

[4]

Microsoft launches MAI-Image-2: here's all you need to know

Microsoft launched its second text-to-image model, MAI-Image-2, on Thursday. It is aimed at improving creative workflows, with a focus on photorealism, reliable text rendering, and detailed scene generation. According to the company, the model produces images with natural lighting, accurate skin tones, and realistic environments, reducing the need for post-production work.

A key upgrade the company claims is the model's ability to generate consistent in-image text, enabling use cases such as infographics, slides, posters, and diagrams with greater accuracy. This addresses a longstanding limitation in image generation models, where text often appears distorted.

The company claims the model is among the global top three 'family' models on the Arena.ai leaderboard. As of March 19, of the 51 models Arena.ai keeps track of, Microsoft's MAI-Image-2 is ranked fifth. Gemini's three models -- 3.1 Flash, 3 Pro Image 2K, and 3 Pro Image -- dominated the top five, with OpenAI's GPT-image-1.5 ranked second. Arena.ai is a crowdsourced platform that ranks large language models (LLMs) and other AI models based on user preferences.

MAI-Image-2 also targets complex and imaginative outputs, supporting cinematic compositions and surreal visuals. The model was developed with inputs from photographers, designers, and visual storytellers to better align with practical creative needs.

The model is now available for preview through the MAI Playground, where users can test features and provide feedback. The Playground is the public testing environment for Microsoft's in-house AI models. The company said the system will gradually roll out across its ecosystem, including Microsoft Copilot and Bing Image Creator. API access is currently limited to select enterprise customers such as WPP, with broader developer availability expected soon via Microsoft Foundry.

Microsoft said further updates are planned as it expands its next-generation AI infrastructure and model capabilities. "It's shipping soon in Copilot and Bing Image Creator, as well as Microsoft Foundry... stay tuned for new releases and come join us on our Superintelligence mission," Microsoft AI CEO Mustafa Suleyman said in a post on X.

The launch comes as competition intensifies in generative AI, particularly in image generation, where companies are racing to improve realism, controllability, and production-ready outputs.

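The "top three" claim and the fifth-place model ranking are not necessarily in conflict: one counts labs, the other counts individual models. The short Python sketch below illustrates that reading. It is only an illustration under stated assumptions: the list reproduces the top five as reported for March 19, but the exact order of the three Gemini models is assumed (only GPT-image-1.5 in second and MAI-Image-2 in fifth are stated explicitly), and ranking a lab by its best-placed model is our guess at how a lab-level position could be derived, not Arena.ai's documented method.

```python
# Illustrative top-five snapshot based on the figures reported above.
# Assumption: only GPT-image-1.5 (2nd) and MAI-Image-2 (5th) have explicitly
# stated positions; the order of the three Gemini models is assumed.
model_ranking = [
    ("Gemini 3.1 Flash", "Google"),
    ("GPT-image-1.5", "OpenAI"),
    ("Gemini 3 Pro Image 2K", "Google"),
    ("Gemini 3 Pro Image", "Google"),
    ("MAI-Image-2", "Microsoft"),
]


def model_position(models, target):
    """Return the 1-based position of a model in a best-first list."""
    for position, (name, _lab) in enumerate(models, start=1):
        if name == target:
            return position
    raise ValueError(f"{target} is not in the ranking")


def lab_ranking(models):
    """Rank labs by their best-placed model (hypothetical aggregation rule)."""
    labs = []
    for _name, lab in models:  # the list is already ordered best-first
        if lab not in labs:
            labs.append(lab)
    return {lab: position for position, lab in enumerate(labs, start=1)}


print(model_position(model_ranking, "MAI-Image-2"))  # 5
print(lab_ranking(model_ranking)["Microsoft"])       # 3
```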
[5]

Microsoft unveils MAI Image 2 with better photorealism and text generation: How to use it

The company is also rolling out MAI Image 2 in Copilot and Bing Image Creator.

Microsoft has announced a new AI image generation model called MAI Image 2, which is said to create more realistic images and generate clearer text within visuals. According to the tech giant, the development of MAI Image 2 involved feedback from people working in creative fields. The company spoke with photographers, designers, and visual storytellers to understand where existing AI tools fall short in everyday creative work.

One of the biggest upgrades in MAI Image 2 is enhanced photorealism. Microsoft says the model focuses on elements such as natural lighting, accurate skin tones, and realistic environments. The goal is to make images look as if they exist in the real world rather than appearing artificial. This could help creatives spend less time correcting details in post-production.

Another improvement comes in the way the model handles text within images. 'From poster type to the sign in the background of a scene, text can be a key part of imagery. MAI-Image-2 enables consistent creation of infographics, slides, diagrams, and more, with little lost between direction and creation,' the company said.

The model is also designed to create complex and visually rich scenes. Microsoft says MAI Image 2 can handle surreal ideas, cinematic visuals, and detailed compositions more effectively.

Microsoft has made a preview version of the model available through the MAI Playground. The company is also rolling out MAI Image 2 in Copilot and Bing Image Creator. API access is already available to select customers such as WPP that need large-scale image generation.

Microsoft unveiled MAI-Image-2, its second-generation AI image generation model, which debuted at #3 on Arena.ai's text-to-image leaderboard. The model excels at photorealism, accurate text rendering, and complex scene generation. It's now rolling out across Microsoft Copilot, Bing Image Creator, and MAI Playground, marking Microsoft's shift from relying on OpenAI's models to building competitive in-house technology.

Microsoft Claims Third Place with MAI-Image-2

Microsoft announced MAI-Image-2 on Thursday, a second-generation text-to-image model that landed at #3 on the Arena.ai leaderboard, positioning the company directly behind Google's Gemini 3.1 Flash and OpenAI's GPT Image 1.5 [1]. The announcement comes from Microsoft's AI Superintelligence team, the internal research group that Mustafa Suleyman formed in November 2025 and now leads full-time following a leadership reorganization announced earlier this week [1]. Just a year ago, Microsoft was generating images for Bing and Microsoft Copilot almost entirely with OpenAI's models, making this in-house achievement particularly significant for the company's AI ambitions [1].

Built with Input from Creative Professionals

The development of MAI-Image-2 involved direct feedback from photographers, designers, and visual storytellers who identified three critical areas where existing AI image generation tools fall short in everyday creative work [2]. The first priority is photorealism, with the model designed to produce images featuring natural lighting, accurate skin tones, and environments with physical texture and wear [1]. Microsoft says the model reduces the post-production work that currently sits between generation and usable output, helping creative professionals spend less time correcting details [5].

Accurate Text Rendering Addresses Industry Pain Point

The second major improvement tackles in-image text generation, an area where many AI image generation models still struggle to produce consistent, accurate characters [1]. MAI-Image-2 handles readable lettering within scenes, from signage to infographics to typographic layouts, enabling use cases such as slides, posters, and diagrams with greater accuracy [4]. In hands-on testing, the model demonstrated legitimate strength in text generation, handling complex typography with far more consistency than expected, including attempts at multilingual text such as Chinese hanzi characters [3].

Complex Scene Generation for Ambitious Visual Work

The third focus area is detailed scene generation, where MAI-Image-2 targets dense compositions, surreal concepts, cinematic framing, and imaginative work requiring precise prompting and high fidelity [1]. The model understands artistic style well, shifting between photographic realism, graphic design aesthetics, and illustrated styles without friction [3]. In testing, complex scene generation with unrealistic parameters was properly handled, with the model excelling at details like body proportions, limb position, depth, and spatial positioning [3].

Availability Across Multiple Channels

MAI-Image-2 is now available through the MAI Playground, Microsoft's public model testing environment at playground.microsoft.ai [1]. The model is also beginning to roll out across Microsoft Copilot and Bing Image Creator, though the deployment is gradual [3]. Enterprise customers including WPP can access the model via API today, with broader developer availability expected soon through Microsoft Foundry, though no specific date has been provided [4].

Limitations and Real-World Constraints

Despite its leaderboard performance, MAI-Image-2 faces practical limitations that may frustrate users in production workflows. The model implements aggressive content filtering, more restrictive than Google Imagen or OpenAI's DALL-E, which could limit creative professionals working in certain visual genres [3]. Usage caps are equally restrictive, with each generation triggering a 30-second cooldown and a 15-image limit before a 24-hour lockout [3]. The model currently supports only a 1:1 aspect ratio, with no landscape, portrait, or custom ratios, a significant limitation for social media content in 2026 [3]. Additionally, MAI-Image-2 is purely a text-to-image model with no image-to-image, inpainting, or outpainting capabilities [3].

Strategic Shift from OpenAI Dependency

The launch represents a notable strategic move for Microsoft, which has been paying OpenAI billions to power its image generation services [3]. Building a competing in-house model reduces dependency and cuts costs at scale, particularly as Microsoft simultaneously funds Anthropic, OpenAI's biggest competitor [3]. The pace of development is striking: Microsoft announced its first in-house voice model and text model preview in August 2025, followed by MAI-Image-1 in October, and now MAI-Image-2 just five months later [1]. This cadence suggests the superintelligence team is moving at a different pace from Microsoft's historically slower consumer product cycles, using hardware and infrastructure it increasingly owns rather than rents from OpenAI [1]. The team's next-generation GB200 compute cluster, based on NVIDIA's Blackwell architecture, is now operational, positioning Microsoft for future model releases [1].

Summarized by Navi
