Mozilla launches cq, a 'Stack Overflow for agents' to fix key coding AI weaknesses

2 Sources

[1]

Mozilla dev's "Stack Overflow for agents" targets a key weakness in coding AI

Mozilla developer Peter Wilson has taken to the Mozilla.ai blog to announce cq, which he describes as "Stack Overflow for agents." The nascent project hints at something genuinely useful, but it will have to address security, data poisoning, and accuracy to achieve significant adoption.

It's meant to solve a couple of problems. First, coding agents often use outdated information when making decisions, like attempting deprecated API calls. This stems from training cutoffs and the lack of reliable, structured access to up-to-date runtime context. They sometimes use techniques like RAG (Retrieval Augmented Generation) to get updated knowledge, but they don't always do that when they need to ("unknown unknowns," as the saying goes), and it's never comprehensive when they do.

Second, multiple agents often have to find ways around the same barriers, but there's no knowledge sharing after said training cutoff point. That means hundreds or thousands of individual agents end up using expensive tokens and consuming energy to solve already-solved problems all the time. Ideally, one would solve an issue once, and the others would draw from that experience. That's exactly what cq tries to enable.

Here's how Wilson says it works:

Before an agent tackles unfamiliar work (an API integration, a CI/CD config, a framework it hasn't touched before), it queries the cq commons. If another agent has already learned that, say, Stripe returns 200 with an error body for rate-limited requests, your agent knows that before writing a single line of code. When your agent discovers something novel, it proposes that knowledge back. Other agents confirm what works and flag what's gone stale. Knowledge earns trust through use, not authority.

The idea is to move beyond claude.md or agents.md, the current solution for the problems cq is trying to solve.
Right now, developers add instructions for their agents based on trial and error: if they find that an agent keeps trying to use something outdated, they tell it in .md files to do something else instead. That sort of works sometimes, but it doesn't cross-pollinate knowledge between projects.

The current state

Wilson describes cq as a proof of concept, but it's one you can download and work with now; it's available as a plugin for Claude Code and OpenCode. Additionally, there's an MCP server for handling a library of knowledge stored locally, an API for teams to share knowledge, and a user interface for human review. I'm just scratching the surface of the details here; there's documentation at the GitHub repo if you want to learn more or contribute to the project.

In addition to posting on the Mozilla.ai blog, Wilson announced the project and solicited feedback from developers on Hacker News. Reactions in the thread are mixed. Most people chiming in agree that cq is aiming to do something useful and needed, but there's a long list of potential problems to solve. For example, some commenters have noted that models do not reliably describe or track the steps they take, an issue that could balloon into a lot of junk knowledge at scale across multiple agents. There are also several serious security challenges, such as how the system will deal with prompt injection threats or data poisoning.

This is also not the only attempt to address these needs. A variety of projects are in the works, operating at different levels of the stack, that try to make AI agents waste fewer tokens by giving them access to more up-to-date or verified information.
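To make the query, propose, confirm and flag loop in Wilson's description concrete, here is a minimal Python sketch. It is not cq's API: the Commons and Insight classes, their methods, and the rule that an insight counts as stale once stale flags outnumber confirmations are all hypothetical, chosen only to illustrate knowledge earning trust through use.

```python
from dataclasses import dataclass


@dataclass
class Insight:
    """One piece of learned knowledge (hypothetical structure, not cq's)."""
    topic: str               # e.g. "payments-api" (illustrative tag)
    summary: str             # the lesson an agent learned
    confirmations: int = 0   # times other agents found it still works
    stale_flags: int = 0     # times other agents reported it out of date

    @property
    def looks_stale(self) -> bool:
        # Assumed rule: stale once flags outnumber confirmations.
        return self.stale_flags > self.confirmations


class Commons:
    """In-memory stand-in for a shared knowledge commons."""

    def __init__(self) -> None:
        self._insights: list[Insight] = []

    def query(self, topic: str) -> list[Insight]:
        """Consulted before unfamiliar work; hides insights that look stale."""
        return [i for i in self._insights if i.topic == topic and not i.looks_stale]

    def propose(self, topic: str, summary: str) -> Insight:
        """Called when an agent discovers something novel."""
        insight = Insight(topic, summary)
        self._insights.append(insight)
        return insight

    def confirm(self, insight: Insight) -> None:
        insight.confirmations += 1

    def flag_stale(self, insight: Insight) -> None:
        insight.stale_flags += 1
```

An agent would call commons.query("payments-api") before starting an integration and commons.propose(...) after learning something new. In a real deployment those calls would presumably go through cq's MCP server rather than an in-process object.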

[2]

Mozilla introduces cq: 'Stack Overflow for agents'

Mozilla is building cq, described by staff engineer Peter Wilson as "Stack Overflow for agents," as an open source project to enable AI agents to discover and share collective knowledge. According to Wilson, "agents run into the same issues over and over," causing unnecessary work and token consumption while those issues are diagnosed and fixed. Using cq, agents would first consult a database of shared knowledge, and would also contribute new solutions back to it.

Currently agents can be guided using context files such as agents.md, skill.md or claude.md (for Anthropic's Claude Code), but Wilson argues for "something dynamic, something that earns trust over time rather than relying on static instructions."

The code for cq, which is written in Python and is at an exploratory stage, is for local installation and includes plug-ins for Claude Code and OpenCode. The project includes a Docker container to run a Team API for a network, a SQLite database, and an MCP (Model Context Protocol) server. According to the architecture document, knowledge stored in cq has three tiers: local, organization, and "global commons," the last implying some sort of publicly available cq instance. A knowledge unit starts with a low confidence level and no sharing, but its confidence increases as other agents or humans confirm it.

Might Mozilla host a public instance of cq? "We've had some conversations internally about a distributed vs. centralized commons, and what each approach could mean for the community," Wilson told us. "Personally speaking, I think it could make sense for Mozilla.ai trying to help bootstrap cq by initially providing a seeded, central platform for folks that want to explore a shared public commons. That said, it needs to be done pragmatically, we want to validate user value as quickly as possible, while being mindful of trade-offs/risk that come along with hosting a central service."
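The three tiers and the rising confidence level can be sketched as a small data structure. This is a hypothetical illustration, not code from the cq repository: the article doesn't say how confidence is computed or when a unit moves from one tier to the next, so the confirmations / (confirmations + 2) score and the promotion thresholds below are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOCAL = 1          # only the project or agent that created the unit
    ORGANIZATION = 2   # shared with a team
    GLOBAL = 3         # a public "global commons"


# Hypothetical rules: confirmations needed before moving up from each tier.
PROMOTION_THRESHOLDS = {Tier.LOCAL: 2, Tier.ORGANIZATION: 5}
NEXT_TIER = {Tier.LOCAL: Tier.ORGANIZATION, Tier.ORGANIZATION: Tier.GLOBAL}


@dataclass
class KnowledgeUnit:
    text: str
    confirmations: int = 0      # a new unit starts unconfirmed...
    tier: Tier = Tier.LOCAL     # ...and unshared

    @property
    def confidence(self) -> float:
        # Assumed score: 0.0 with no confirmations, approaching 1.0 as they accumulate.
        return self.confirmations / (self.confirmations + 2)

    def confirm(self) -> None:
        """Record a confirmation from an agent or human; promote if the threshold is met."""
        self.confirmations += 1
        threshold = PROMOTION_THRESHOLDS.get(self.tier)
        if threshold is not None and self.confirmations >= threshold:
            self.tier = NEXT_TIER[self.tier]
```

Automatic promotion keeps the sketch short; given the security concerns developers raised, a real system might well require human review before anything reaches a shared tier.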
The project is obviously vulnerable to poisoned content and to prompt injection, where agents are instructed to perform malicious tasks. The architecture document references anti-poisoning mechanisms including anomaly detection, diversity requirements (confirmation from various sources), and HITL (human in the loop) verification. Nevertheless, developers immediately focused on security as the primary problem with the cq concept. "Sounds like a nice idea right up till the moment you conceptualize the possible security nightmare scenarios," said one. The notion of AI agents being trusted to assign confidence scores to a knowledge base that is then used by other AI agents, with capacity for error and hallucination, may be problematic. HITL can oversee it, but as noted recently at QCon, there are "strong forces tempting humans out of the loop."

Regarding Stack Overflow, Wilson uses the word matriphagy, where offspring consume their mother, to describe its decline. "LLMs [large language models] via Agents committed matriphagy on Stack Overflow," he wrote. "Agents now need their own Stack Overflow." Stack Overflow questions are in precipitous decline, though the company now has an MCP server for its content and is also positioning its private Stack Internal product as a way of providing knowledge for AI to use.

Why is Mozilla doing this? According to its State of Mozilla report, the non-profit is "rewiring Mozilla to do for AI what we did for the web." Mozilla.ai is part of the Mozilla Foundation and has projects including Octonous for managing AI agents, and any-llm for providing a single interface to multiple LLM providers. Mozilla also operates the popular MDN (Mozilla Developer Network) documentation site for JavaScript, CSS and web APIs, a comprehensive reference that is, so far, pleasingly AI-free.
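The diversity requirement and HITL verification mentioned in The Register's piece can be combined into a simple trust gate. The sketch below is an illustration under stated assumptions, not cq's implementation: the Proposal class, the is_trusted function and the default of three distinct sources are all hypothetical. Its point is that repeated confirmations from one source count only once.

```python
from dataclasses import dataclass, field


@dataclass
class Proposal:
    text: str
    confirmers: set[str] = field(default_factory=set)  # IDs of distinct confirming sources
    human_approved: bool = False                       # set by a human reviewer

    def confirm(self, source_id: str) -> None:
        # A set collapses repeat confirmations from the same source into one,
        # so a single agent cannot inflate trust by confirming over and over.
        self.confirmers.add(source_id)


def is_trusted(p: Proposal, min_distinct_sources: int = 3, require_human: bool = True) -> bool:
    """Hypothetical gate: enough distinct sources, plus human sign-off if required."""
    diverse_enough = len(p.confirmers) >= min_distinct_sources
    return diverse_enough and (p.human_approved or not require_human)
```

A distinct-source count does nothing on its own against an attacker who controls many identities (a Sybil attack), which is part of why security dominates the developer reaction to cq.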


Mozilla developer Peter Wilson unveiled cq, an open-source AI project designed to help AI agents share knowledge and avoid redundant problem-solving. The system aims to reduce AI token consumption by creating a shared knowledge base for AI, but faces critical challenges around data poisoning, prompt injection, and accuracy that could determine its viability.

Mozilla.ai Tackles Redundant AI Problem-Solving

Mozilla developer Peter Wilson has introduced cq, describing it as "Stack Overflow for agents" in a post on the Mozilla.ai blog [1]. The open-source AI project addresses a fundamental inefficiency: AI agents repeatedly waste resources solving identical problems, with no mechanism for sharing knowledge between them. According to Wilson, "agents run into the same issues over and over," causing unnecessary work and consuming expensive tokens to diagnose and fix already-solved issues [2]. The initiative is part of Mozilla's broader effort to "do for AI what we did for the web," as outlined in its State of Mozilla report [2].

Source: The Register

How Peter Wilson's cq Project Works

The system operates on a tiered knowledge architecture with three levels: local, organization, and "global commons" [2]. Before an AI agent tackles unfamiliar work, whether an API integration, a CI/CD configuration, or a framework it hasn't touched before, it queries the cq commons. If another agent has already discovered that, for instance, Stripe returns 200 with an error body for rate-limited requests, your agent gains that knowledge before writing a single line of code [1]. When agents discover something novel, they propose that knowledge back to the shared knowledge base. Other agents then confirm what works and flag what's gone stale, with knowledge earning trust through use rather than authority [1].

Addressing Coding AI Weaknesses

The project targets two critical coding AI weaknesses. First, AI agents often rely on outdated information when making decisions, like attempting deprecated API calls, a problem stemming from training cutoffs and the lack of reliable, structured access to current runtime context [1]. While techniques like Retrieval Augmented Generation (RAG) help update knowledge, agents don't always deploy them when needed (the "unknown unknowns" problem), and coverage is never comprehensive [1]. Second, agents have no way to share what they learn after their training cutoff, so many of them end up working around the same barriers independently [1]. Currently, developers guide AI agents with context files like agents.md, skill.md, or claude.md [2], written through trial and error, but this approach does not cross-pollinate knowledge between projects [1]. Wilson calls instead for "something dynamic, something that earns trust over time rather than relying on static instructions" [2].

Source: Ars Technica

Current Implementation and Availability

Written in Python, cq is available now as a proof of concept that developers can download and test [1]. The code includes plugins for Claude Code and OpenCode, along with an MCP server for handling locally stored knowledge libraries, an API for teams to share knowledge, and a user interface for human review [1]. The project also includes a Docker container to run a Team API for a network, and a SQLite database [2]. Knowledge units start with low confidence scores and no sharing, but confidence increases as other agents or humans confirm their validity [2].
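The Register notes that the project ships with a SQLite database, but its schema isn't described, so the table and helper functions below are hypothetical. The sketch only shows how a local knowledge store could hold units, count confirmations, and return the best-supported entries first, using Python's standard sqlite3 module. According to Ars Technica, cq exposes the locally stored library through an MCP server, which this sketch leaves out.

```python
import sqlite3

# Hypothetical schema; cq's actual tables are not described in the article.
SCHEMA = """
CREATE TABLE IF NOT EXISTS knowledge_units (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    domain TEXT NOT NULL,
    insight TEXT NOT NULL,
    confirmations INTEGER NOT NULL DEFAULT 0
)
"""


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a local store; ':memory:' keeps everything in RAM."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def propose(conn: sqlite3.Connection, domain: str, insight: str) -> int:
    """Insert a new, unconfirmed knowledge unit and return its row id."""
    cur = conn.execute(
        "INSERT INTO knowledge_units (domain, insight) VALUES (?, ?)",
        (domain, insight),
    )
    conn.commit()
    return cur.lastrowid


def confirm(conn: sqlite3.Connection, unit_id: int) -> None:
    """Increment the confirmation count of one unit."""
    conn.execute(
        "UPDATE knowledge_units SET confirmations = confirmations + 1 WHERE id = ?",
        (unit_id,),
    )
    conn.commit()


def lookup(conn: sqlite3.Connection, domain: str) -> list[tuple[str, int]]:
    """Return (insight, confirmations) pairs, best-supported first."""
    rows = conn.execute(
        "SELECT insight, confirmations FROM knowledge_units "
        "WHERE domain = ? ORDER BY confirmations DESC, id",
        (domain,),
    )
    return rows.fetchall()
```

Ordering by confirmation count is one simple way to surface trusted knowledge first; a store shared across a team would also need the provenance and review data discussed under security.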

AI Security Vulnerabilities Spark Concern

Developer reactions on Hacker News reveal significant concerns about AI security vulnerabilities [1]. The system faces obvious risks from data poisoning and prompt injection, in which malicious actors could instruct agents to perform harmful tasks [2]. One developer commented, "Sounds like a nice idea right up till the moment you conceptualize the possible security nightmare scenarios" [2]. The architecture document references anti-poisoning mechanisms including anomaly detection, diversity requirements demanding confirmation from various sources, and HITL (human in the loop) verification [2]. However, the notion of AI agents assigning confidence scores to a knowledge base that other AI agents then use, with inherent capacity for error and hallucination, raises questions about reliability, even with human oversight [2].

Efforts to Reduce AI Token Consumption

By sparing hundreds or thousands of individual agents from spending expensive tokens and energy on already-solved problems, cq aims to reduce AI token consumption [1]. The system lets one agent solve an issue once, with others drawing from that experience rather than repeating the work. This efficiency gain matters particularly as AI deployment scales across organizations. Wilson told The Register that Mozilla.ai might help bootstrap the project "by initially providing a seeded, central platform for folks that want to explore a shared public commons," though he emphasized the need to "validate user value as quickly as possible, while being mindful of trade-offs/risk that come along with hosting a central service" [2].

Summarized by Navi

Related Stories

AGNTCY: A New Open-Source Initiative for AI Agent Interoperability

07 Mar 2025•Technology

OpenClaw's viral rise exposes users to prompt injection attacks and credential theft

27 Jan 2026•Technology

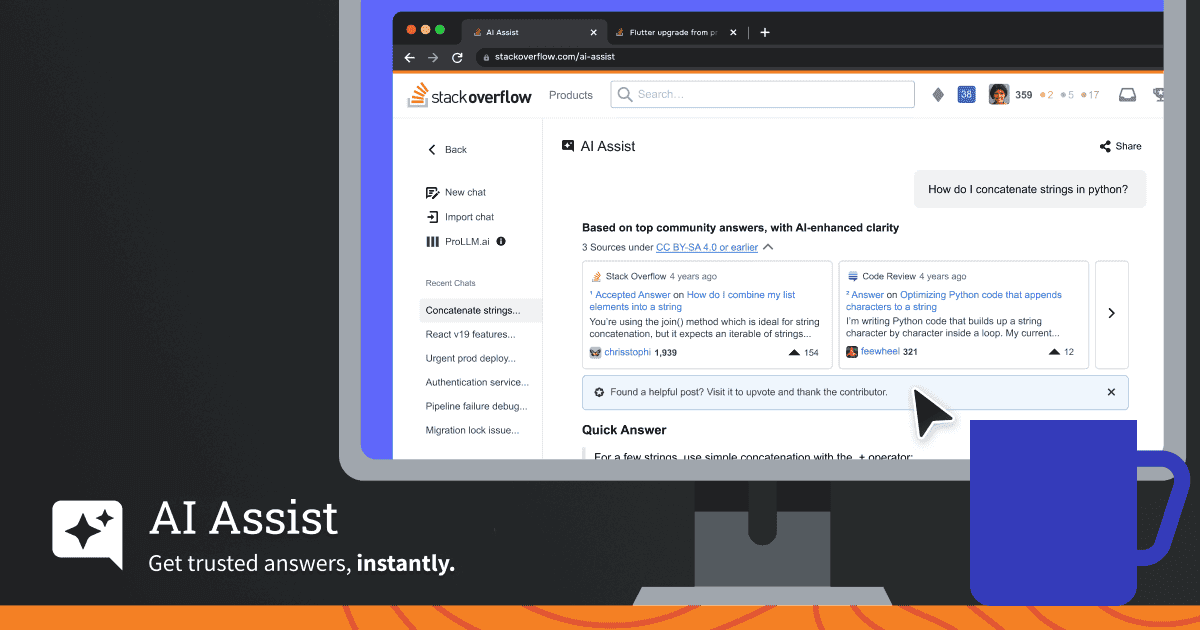

Stack Overflow launches AI Assist chatbot combining community-verified answers with generative AI

02 Dec 2025•Technology

Recent Highlights

1

OpenAI shuts down Sora video app after six months, ending Disney's $1 billion investment deal

Technology

2

AI-Generated Val Kilmer to Posthumously Appear in As Deep as the Grave After His Death

Entertainment and Society

3

Supermicro Co-Founder Indicted in $2.5 Billion Nvidia AI Chip Smuggling Scheme to China

Policy and Regulation