NVIDIA and Emerald AI Launch Power-Flexible Factories to Solve Grid Bottleneck for AI

4 Sources


[1]

Efficiency at Scale: NVIDIA, Energy Leaders Accelerating Power‑Flexible AI Factories to Fortify the Grid

Driving intelligence per watt and AI for energy advancements, Emerald AI, AES, Constellation, Invenergy, NextEra Energy, Nscale Energy & Power, Vistra, Maximo, TerraPower, Adaptive Construction Solutions, GE Vernova, Schneider Electric and Vertiv showed what's possible at CERAWeek. CERAWeek, dubbed the Davos of energy, is where policymakers, producers, technologists and financiers gather to discuss how the world powers itself next.

At the conference last week, NVIDIA and Emerald AI unveiled a new way forward: treating AI factories not as static power loads but as flexible, intelligent grid assets. This collaboration unifies accelerated computing, AI factory reference architectures and real-time energy orchestration, helping large AI deployments connect to the grid faster, operate more efficiently and fortify system reliability.

Built on the NVIDIA Vera Rubin DSX AI Factory reference design and Emerald AI's Conductor platform, the approach brings together compute, power networking and control in a single architecture. The result is an AI factory that can generate high-value AI tokens while dynamically responding to grid conditions: flexing when needed, supporting reliability and reducing the need to overbuild infrastructure for peak demand.

AES, Constellation, Invenergy, NextEra Energy, Nscale Energy & Power and Vistra are working to build the energy generation capacity needed to meet rapidly growing power demand. The companies plan to collaborate on optimized generation strategies to support AI factories built on the NVIDIA and Emerald AI architecture, including hybrid projects that use co-located power to accelerate time to power while delivering value to the broader grid. By pairing large AI loads with flexible operations, new generation resources and intelligent controls, this approach strengthens grid reliability. It's an important milestone in grid resilience, supported by an ecosystem for advanced AI factories.
This new computing infrastructure paradigm, described by NVIDIA founder and CEO Jensen Huang as a five-layer AI cake, has energy as its foundational layer.

Driving Improvements in Tokens Per Second Per Watt

Power constraints are reshaping AI data centers, making energy efficiency, or performance per watt (specifically tokens per second per watt), the defining metric of modern computing infrastructure. By prioritizing computational efficiency, organizations can lower operating costs, maximize revenue and create a resilient digital infrastructure for businesses and consumers across America and worldwide.

"Power is a concern, but it's not the only concern," Huang said on a recent Lex Fridman podcast. "That's the reason why we're pushing so hard on extreme codesign, so that we can improve the tokens per second per watt orders of magnitude every single year."

NVIDIA has a long history of driving performance and energy efficiency. From the NVIDIA Kepler GPU in 2012 to the NVIDIA Vera Rubin platform this year, the number of tokens generated within the same power budget has increased by more than 1 million times. It takes industry collaboration across the five-layer AI cake, from energy to chips, infrastructure, models and applications, to make this happen.

Robotics, Digital Twins and AI Upskilling Drive Energy Advances

At the event, NVIDIA ecosystem partners showcased how AI, simulation and workforce innovation are accelerating the energy infrastructure needed to support the intelligence era. Announcements from Maximo, TerraPower and Adaptive Construction Solutions exemplify how AI is compressing timelines across construction, power generation and talent development.

Maximo, a solar robotics company incubated at AES, announced the completion of a 100-megawatt robotic solar installation at AES' Bellefield site.
Using AI-driven robotics developed with NVIDIA accelerated computing, NVIDIA Omniverse libraries and the NVIDIA Isaac Sim framework, Maximo demonstrated that autonomous installations can now operate reliably at utility scale. The approach improves installation speed, safety and consistency, helping close the gap between rising electricity demand and construction capacity.

TerraPower, working with SoftServe, previewed an NVIDIA Omniverse-powered digital twin platform designed to dramatically shorten advanced nuclear plant siting and design timelines. By applying AI and simulation to early-stage engineering, the platform reduces design cycles from years to months, accelerating deployment of TerraPower's Natrium energy plants while improving design and grid integration.

Adaptive Construction Solutions announced a national registered apprenticeship initiative, in collaboration with NVIDIA, to help build the skilled workforce required for AI factories and energy infrastructure. The program aims to scale training for critical trades, expanding access to high-demand careers while supporting the rapid buildout of AI-driven power systems.

These efforts show how AI, digital twins and workforce innovation are converging to deliver faster, more resilient energy infrastructure.

Coming Together on Scaling AI Factories for Grid Reliability

GE Vernova, Schneider Electric and Vertiv highlighted how digital twins, validated reference designs and converged infrastructure are becoming essential to scaling AI factories as reliable grid participants. The announcements address the "power-to-rack" challenge: designing AI infrastructure as an integrated energy and compute system from day one.

GE Vernova outlined how high-fidelity digital twins aligned with the NVIDIA Omniverse DSX Blueprint enable utilities and developers to simulate grid behavior, substations and AI factory loads together before deployment.
Such system-level modeling helps validate interconnection strategies, reduce risk and accelerate time to power in constrained grid environments.

Schneider Electric announced new validated NVIDIA Vera Rubin reference designs and lifecycle digital twin architectures developed with AVEVA. By simulating power, cooling and controls in Omniverse, Schneider enables operators to optimize performance per watt, validate designs before buildout and operate AI factories more efficiently and predictably at scale.

Vertiv outlined converged, simulation-ready physical infrastructure built on repeatable power and cooling building blocks. Integrated with the Vera Rubin DSX reference design, Vertiv's approach reduces design and deployment complexity while supporting faster, more confident scaling of AI factories.

Together, these industry efforts provide a digital path forward, including the validated architectures and physical infrastructure needed to turn AI factories into flexible, grid-aware assets for efficiently powering the world. Learn more about how NVIDIA and its partners are advancing energy solutions with AI and high-performance computing.

[2]

AI doesn't need more power, it needs a smarter energy grid

Portland General Electric's partnership demonstrates that hundreds of megawatts can be accelerated years ahead through latent capacity activation.

As nations compete to power the AI revolution, a counterintuitive strategy is emerging: the infrastructure bottleneck constraining technological leadership is being solved not through copper and construction, but through code. What's known as flexible grid optimization could double effective capacity faster than any building programme, with profound implications for competitiveness and climate goals.

When Satya Nadella acknowledged that Microsoft has GPU clusters sitting idle, depreciating assets waiting for power that may not arrive for years, he crystallized the defining constraint of the AI era. Sam Altman's assessment that OpenAI requires a gigawatt of power daily, roughly 20 times what the entire United States added in new generation capacity last year, reveals the scale of misalignment between AI ambitions and energy reality. This is no longer simply a utility planning issue. It's a question of national competitiveness and human prosperity.

The conventional narrative frames this as an infrastructure deficit requiring massive capital deployment and decade-long construction timelines. Yet Stanford researchers studying grid utilization patterns across advanced economies have identified a paradox that reframes the entire challenge: these sophisticated electricity systems operate at approximately 30% utilization, with two-thirds of installed capacity sitting idle most hours. The grid reaches capacity constraints for perhaps 100 hours annually, under rare peak conditions. The rest of the time, vast capacity goes unused.

The world is witnessing fundamentally different approaches to solving the AI power challenge, each reflecting distinct institutional capabilities and strategic priorities. China deployed nearly 550 GW of new power capacity last year while the United States added 53 GW.
This rapid deployment model leverages state coordination, centralized decision-making and the ability to fast-track major infrastructure projects. For an economy building out its grid to serve expanding urban populations and industrial growth, this infrastructure-first approach makes strategic sense.

Advanced Western economies face a different context. Their mature grids already serve developed populations, but patterns of use reveal massive untapped potential. Managing flexibility for less than 100 hours annually could unlock 100 GW of effective capacity nationwide, doubling the grid without doubling the infrastructure.

The critical insight is recognizing which strategy aligns with institutional strengths and infrastructure realities. For countries with advanced grid coordination capabilities, market structures that price flexibility and regulatory frameworks that enable rapid innovation, the pathway of intelligent optimization offers compelling advantages. These include deployment timelines measured in months rather than decades, dramatically lower capital requirements and natural alignment with decarbonization goals.

This shift means using AI to make the grid itself more intelligent, rather than relying only on making AI workloads flexible. It's a challenge of optimization, rather than scarcity. Power systems evolved around rigid patterns, sized for worst-case peak hours that occur less than 0.1% of the time, leaving massive installed capacity underused the rest of the time. The opportunity lies not in changing when data centres consume power, but in deploying AI to orchestrate every component of the system in real time. GridCARE's partnership with AI infrastructure developers and Portland General Electric demonstrates the concept at scale.
By deploying predictive AI models that forecast renewable output and demand hours in advance, coordinating batteries and backup systems strategically, and dynamically managing loads across the grid, PGE has accelerated hundreds of megawatts of computing capacity years ahead of original timelines, without building new generation or transmission. The approach combines proven technologies into one AI-native orchestrated system. All of these technologies exist today; the innovation is using AI to integrate and deploy them at scale.

People fear that data centres will drive up electricity rates. The reality is precisely the opposite. The fixed costs of electricity infrastructure (transmission networks, distribution systems, substations) exist whether they're operating at 30% or 90% utilization. When data centres consume power during off-peak periods and low-demand hours, those fixed costs spread across more kilowatt-hours, lowering average rates for all customers rather than raising them. Recent analysis by GridCARE shows that a 1 GW data centre utilizing spare grid capacity can reduce rates for the average consumer by as much as 5%, or $100/year. By turning data centres into flexible partners that absorb renewable surpluses and share grid expenses, utilities can expand capacity while reducing bills.

The principle extends beyond data centres. As transportation and buildings electrify, the same coordination capabilities that optimize AI workloads can manage vehicle charging and heat pump operation. A grid optimized for one source of flexible demand becomes more valuable for all flexible loads.

The fastest, cheapest, and cleanest megawatt is the one you don't need to build. This is the infrastructure challenge that will determine who leads the AI era. Whoever solves the power puzzle first will shape the next decade of technological development and economic competitiveness.
Doubling the grid in five years through code rather than primarily through construction isn't merely possible. It may represent the highest-leverage strategy available for mature economies seeking to maintain technological leadership while accelerating climate goals. Abundance doesn't start with more resources. It starts with using what we already have intelligently. That's an opportunity that could reshape how we power AI - as well as how we think about infrastructure, abundance and the relationship between technological progress and resource constraints.

[3]

Emerald AI and Nvidia aim to offer the fast pass for data center grid connects, partnering with power producers and raising new funds | Fortune

While working at a major renewable energy developer, Varun Sivaram realized that the boom in AI and data centers was outpacing the construction of new power generation, even as wait times for grid interconnections grew longer. "I realized we couldn't build our way out of this. We needed intelligent demand," Sivaram told Fortune.

In a bid to address this need, Sivaram founded a software company called Emerald AI to develop grid flexibility for data centers (essentially, reducing power consumption at times of peak load demand on the grid during the hottest or coldest days each year) without harming the AI operations. In addition to heightened energy efficiency, the goal is to speed up the time for AI factories and their power generation to connect to the grid while maintaining "the five nines," the industry term for 99.999% reliability.

Call it the Disney FastPass approach (now known as Lightning Lane) for quickly moving ahead in the grid queue. "We call it flexible-load fast track," Sivaram said, correcting the Disney reference with a laugh.

Emerald AI's pitch quickly won financial backing and support from Nvidia, which has helped to fast-track the company's growth and the deployment of the AI software. "An AI for AI," he said.

On March 31, Emerald AI announced the completion of a $25 million strategic funding round with Nvidia's NVentures, Eaton, GE Vernova, Radical Ventures, Salesforce, Samsung, Siemens, and more, including IQT, the venture capital arm of the CIA and other U.S. intelligence agencies. The round was led by Energy Impact Partners. That brings total funding to $68 million in the 16 months since Emerald's founding.

Last week, Emerald and Nvidia partnered with leading U.S. power producers, including AES, Constellation Energy, Invenergy, NextEra Energy, and Vistra.
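The "five nines" target is stricter than it may sound. Treating availability as a simple fraction of a 365-day year (a simplification that ignores how outages are actually measured and reported), 99.999% leaves roughly five minutes of downtime per year:

$$
(1 - 0.99999) \times 365 \times 24 \times 60\ \text{min}
= 10^{-5} \times 525{,}600\ \text{min}
\approx 5.26\ \text{min per year}
$$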
Later this year, once a series of pilots prove successful, Emerald and Nvidia will open the first power-flexible, commercial AI factory, Nvidia's 96-megawatt Vera Rubin AI Factory Research Center, in Virginia.

"The advent of the AI revolution meant that this idea should face prime time because, suddenly, AI factories don't have enough power," Sivaram said. "Historically, the data centers had no problem getting power. They've been less than 5% of the grid, but now they're headed toward 25% of the American power supply over the course of a decade."

As Constellation CEO Joe Dominguez said, "We don't have a supply problem; we have a peak problem." Emerald's "grid-friendly AI factories" aim to solve that problem.

While Emerald's software aims to fast-track AI factories, it was Nvidia's early support that fast-tracked Emerald. "We're just excited for the opportunity to commercialize this and push it out there in a bigger way," said Marc Spieler, Nvidia senior managing director for global energy. "The pilots have been highly successful. We believe this will unlock the potential for getting more AI factories onto the grid faster, utilizing more of the untapped electrons on the grid."

Their longer-term goal is for power-flexible AI factories to unlock up to 100 gigawatts of extra grid capacity from the existing U.S. power grid thanks to increased efficiencies. For context, 100 gigawatts can power roughly 75 million homes.

A grid interconnection study can take years of regulatory reviews, but if developers can offer power flexibility at peak demand times, they may get almost immediate grid hookups, Spieler told Fortune. "Our goal is to have as much connected to the grid as possible and not go behind the meter, not being islanded, by being flexible," he said. "You can really think of it as highly reactive, demand response at scale."

Nvidia was happy to support Emerald's potential, and Emerald was far from the only NVentures investment announced March 31.
ThinkLabs, whose AI is focused on compressing power grid studies from years to minutes, announced a $28 million Series A financing round, also led by Energy Impact Partners. "We're an ecosystem company. We go to market through partners. It doesn't matter if they're a Fortune 100, or Fortune 10 company, or an AI startup," Spieler added. "If somebody has the right idea and is able to execute, we're going to get behind them and fill the gap."

Eight years ago, Sivaram wrote the book "Taming the Sun: Innovations to Harness Solar Energy and Power the Planet." In it, he documented Microsoft's work moving workloads between multiple locations to "chase" more clean energy. Google later worked to move more computational work overnight to utilize wind power at its strongest.

"I thought, 'Wouldn't it be nice if, instead of trying to move electrons to where the bits are, if bits could move to where the electrons are?' Or the bits could be virtually controllable: slowed down or paused," Sivaram said.

From that idea came the Emerald Conductor platform to "orchestrate" onsite energy resources alongside computational flexibility so projects can connect faster and support the power grid.

"We found that there is inherent flexibility that we can tap into because some AI workloads can be delayed a little bit, and the customers are OK with that," he said. "Some AI workloads can be shifted from one location to another with latency that is acceptable for customers.

"And there may be resources on the site of a data center, such as a [storage] battery or a [backup] generator, that we can also recruit. Emerald AI finds ways to recruit all these different flexibility levers to provide back to the grid a very precise response," Sivaram added.

Through tests and pilots, customers' critical tasks continued to function without degradation, he said. "They kept chugging along at 100% performance."
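Sivaram's "flexibility levers" (delaying some workloads, shifting others to another location, and recruiting on-site batteries or backup generators) can be illustrated with a toy allocator that splits a grid operator's requested reduction across them. This is a minimal sketch under stated assumptions, not Emerald's Conductor logic: the `Lever` class, the `allocate_reduction` function, the capacities, and the fixed priority order are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Lever:
    """One hypothetical source of flexibility at an AI factory site."""
    name: str
    capacity_mw: float  # largest cut in grid draw this lever can deliver right now


def allocate_reduction(requested_mw: float, levers: list[Lever]) -> dict[str, float]:
    """Fill a requested cut in grid draw from levers in the order given.

    Greedy by priority: each lever contributes as much as it can before the
    next one is used. Raises ValueError if all levers together fall short.
    """
    if requested_mw < 0:
        raise ValueError("requested reduction must be non-negative")
    plan: dict[str, float] = {}
    remaining = requested_mw
    for lever in levers:
        take = min(lever.capacity_mw, remaining)
        plan[lever.name] = take
        remaining -= take
    if remaining > 1e-9:
        raise ValueError(f"levers fall short by {remaining:.2f} MW")
    return plan


# Hypothetical site, listed in an assumed priority order: storage first,
# then deferrable jobs, then geographic shifting, with the backup generator last.
site = [
    Lever("onsite_battery", 10.0),
    Lever("defer_flexible_jobs", 15.0),
    Lever("shift_jobs_to_other_site", 8.0),
    Lever("backup_generator", 20.0),
]

if __name__ == "__main__":
    # A grid operator asks the site to cut 30 MW during a peak event.
    print(allocate_reduction(30.0, site))
```

Here the 30 MW request is met by 10 MW from the battery, 15 MW from deferred jobs and 5 MW from shifting, leaving the generator untouched. A greedy priority list keeps the sketch short; a real orchestrator would presumably also weigh battery state of charge, job deadlines, latency limits and generator emissions, none of which is modeled here.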

[4]

Behind The AI Build-Out: The Energy Constraint Reshaping Where Billions In Infrastructure Capital Land

Growing Demands of AI Power Costs
Challenges to New and Existing Infrastructure
Potential in Distributed Power Generation

When balancing data center site selection against power availability, a distributed power generation approach may help minimize data center energy costs. A combination of behind-the-meter power and traditional grid connection could address power consumption without overburdening members of the community. While developers are likely focused on construction for now, distributed generation is a consideration for the future.

An Uncertain Future for AI Power Demands

The data reflected by test facilities and commercial sites in the coming years may determine the kinds of energy demands expected of AI technology moving forward. Currently, it is difficult to discern exactly how many data centers will be constructed and what their average energy demand will be. Without this context, expectations of AI energy demands are largely speculative.

Certainly, AI power demand is placing new expectations on power grids and affecting surrounding communities. As energy becomes a constraint for these developing data centers, the infrastructure necessary to support them will have to be constructed. The open questions are what shape that infrastructure will take and exactly how much demand it must meet; neither has a clear answer yet.

Image Credit: FreePik

This post was authored by an external contributor and does not represent Benzinga's opinions and has not been edited for content. The information contained above is provided for informational and educational purposes only, and nothing contained herein should be construed as investment advice. Benzinga does not make any recommendation to not sell any security or any representation about the financial condition of any company. Investing involves risk and your investment may lose value. Past performance gives no indication of future results.
These statements do not constitute and cannot replace investment advice.


NVIDIA and Emerald AI are transforming AI factories from static power loads into flexible grid assets, addressing the infrastructure bottleneck constraining AI deployment. With major energy producers like Constellation Energy, NextEra Energy, and Vistra collaborating on the initiative, the approach could unlock up to 100 gigawatts of extra capacity from the existing U.S. grid without massive construction projects.

AI Power Demand Meets Grid Reality

The AI revolution faces an unexpected constraint: not computing power, but electricity infrastructure. Sam Altman has said that OpenAI requires a gigawatt of power daily, roughly 20 times what the United States added in new generation capacity last year, and Microsoft CEO Satya Nadella has acknowledged GPU clusters sitting idle while they wait for power that may not arrive for years. Together, these remarks make the scale of misalignment between AI ambitions and energy reality stark [2]. This infrastructure bottleneck is reshaping where billions in infrastructure capital land and threatening national competitiveness in the AI era [4].

Source: Benzinga

At CERAWeek, dubbed the Davos of energy, NVIDIA and Emerald AI unveiled a solution that treats AI factories not as static power loads but as flexible, intelligent grid assets [1]. Built on the NVIDIA Vera Rubin DSX AI Factory reference design and Emerald AI's Conductor platform, this approach unifies accelerated computing, AI factory reference architectures, and real-time energy orchestration [1].

Grid Flexibility Over Construction

Stanford researchers studying grid utilization patterns across advanced economies identified a paradox: sophisticated electricity systems operate at approximately 30% utilization, with two-thirds of installed capacity sitting idle most hours [2]. The grid reaches capacity constraints for perhaps 100 hours annually, under rare peak conditions; the rest of the time, vast grid capacity goes unused.

Emerald AI's software provides grid flexibility for data centers by reducing power consumption at times of peak load demand without harming AI operations [3]. As Constellation CEO Joe Dominguez put it, "We don't have a supply problem; we have a peak problem" [3]. Emerald AI founder Varun Sivaram, who previously worked at a major renewable energy developer, realized "we couldn't build our way out of this. We needed intelligent demand" [3].

The NVIDIA and Emerald AI architecture yields an AI factory that can generate high-value AI tokens while dynamically responding to grid conditions: flexing when needed, supporting grid resilience, and reducing the need to overbuild infrastructure for peak demand [1]. Portland General Electric's partnership with GridCARE shows that hundreds of megawatts of computing capacity can be brought online years ahead of schedule by activating latent grid capacity [2].
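The "peak problem" can be made concrete with a simplified model: if a new load agrees to switch off during the few tightest hours, the binding constraint moves from the single worst hour to the next-tightest hour it still runs in. The sketch below assumes a single capacity limit, a known hourly load profile, and instant, complete curtailment; the function names and the ten-hour profile are hypothetical and are not Stanford, GridCARE, or Emerald data.

```python
def load_factor(hourly_load_mw: list[float], capacity_mw: float) -> float:
    """Average load divided by installed capacity (the 'utilization' figure)."""
    return sum(hourly_load_mw) / (len(hourly_load_mw) * capacity_mw)


def flexible_headroom_mw(
    hourly_load_mw: list[float], capacity_mw: float, curtail_hours: int
) -> float:
    """Largest constant new load that never pushes the system over capacity,
    if that load may switch off entirely in up to `curtail_hours` hours.

    Simplified model: one capacity limit, perfect foresight of the load
    profile, and instant, complete curtailment.
    """
    if not 0 <= curtail_hours < len(hourly_load_mw):
        raise ValueError("curtail_hours must leave at least one operating hour")
    # Spare capacity in each hour, tightest hours first.
    headroom = sorted(capacity_mw - load for load in hourly_load_mw)
    # Skipping the `curtail_hours` tightest hours, the next-tightest hour binds.
    return max(0.0, headroom[curtail_hours])


# Toy 10-hour profile (made-up numbers) with a sharp two-hour peak.
profile = [30.0, 25.0, 20.0, 35.0, 40.0, 30.0, 95.0, 90.0, 45.0, 30.0]

if __name__ == "__main__":
    print(load_factor(profile, 100.0))
    print(flexible_headroom_mw(profile, 100.0, 0))  # firm load, no curtailment
    print(flexible_headroom_mw(profile, 100.0, 2))  # may curtail in 2 hours
```

On the toy profile the load factor is 0.44, yet only 5 MW of new firm load fits; allowing curtailment in the two peak hours raises that to 55 MW. On a full 8,760-hour year, `curtail_hours=100` would mean curtailing in about 1.1% of hours.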

Energy Efficiency as Competitive Advantage

Power constraints are reshaping data centers, making energy efficiency, specifically tokens per second per watt, the defining metric of modern computing infrastructure [1]. NVIDIA founder and CEO Jensen Huang explained on a recent Lex Fridman podcast: "Power is a concern, but it's not the only concern. That's the reason why we're pushing so hard on extreme codesign, so that we can improve the tokens per second per watt orders of magnitude every single year" [1].

From the NVIDIA Kepler GPU in 2012 to the NVIDIA Vera Rubin platform this year, the number of tokens generated within the same power budget has increased by more than 1 million times [1]. This new computing infrastructure paradigm, described by Huang as a five-layer AI cake, has energy as its foundational layer [1].
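Tokens per second per watt is a throughput-per-power ratio, and because a watt is one joule per second it reduces to tokens per joule. The sketch below computes it for a hypothetical run and converts the "more than 1 million times" gain into an implied constant yearly multiplier, assuming "this year" means 2026 (a 14-year span from 2012); the text does not state the year, and the run's numbers are made up.

```python
def tokens_per_second_per_watt(tokens: int, seconds: float, avg_power_w: float) -> float:
    """Throughput per watt. Since 1 W = 1 J/s, this equals tokens per joule."""
    if seconds <= 0 or avg_power_w <= 0:
        raise ValueError("seconds and avg_power_w must be positive")
    return tokens / seconds / avg_power_w


def implied_annual_gain(total_gain: float, years: float) -> float:
    """Constant yearly multiplier that compounds to `total_gain` over `years`."""
    return total_gain ** (1.0 / years)


if __name__ == "__main__":
    # Hypothetical run: 1.2 million tokens in 60 s at an average draw of 1 kW.
    print(tokens_per_second_per_watt(1_200_000, 60.0, 1_000.0))  # 20.0
    # ">1 million times" since 2012, assuming "this year" is 2026 (14 years).
    print(round(implied_annual_gain(1e6, 14), 2))
```

Under that assumption the improvement works out to roughly 2.68x per year, compounding to about a million-fold over 14 years; a different span would change the rate.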

Fast-Tracking Grid Interconnections

Emerald AI announced completion of a $25 million strategic funding round with NVIDIA's NVentures, Eaton, GE Vernova, Radical Ventures, Salesforce, Samsung, Siemens, and others, including IQT, the venture capital arm of the CIA and other U.S. intelligence agencies [3]. The round was led by Energy Impact Partners, bringing total funding to $68 million in the 16 months since Emerald's founding [3].

Emerald and NVIDIA partnered with leading U.S. power producers, including AES, Constellation Energy, Invenergy, NextEra Energy, and Vistra [3]. These companies plan to collaborate on optimized generation strategies to support AI factories built on the NVIDIA and Emerald AI architecture, including hybrid projects that use co-located power to accelerate time to power while delivering value to the broader grid [1].

A grid interconnection study can take years of regulatory reviews, but offering power flexibility at peak demand times may let developers get almost immediate grid hookups [3]. Marc Spieler, NVIDIA senior managing director for global energy, described it as "highly reactive, demand response at scale" [3]. Later this year, once pilots prove successful, Emerald and NVIDIA will open the first power-flexible, commercial AI factory: NVIDIA's 96-megawatt Vera Rubin AI Factory Research Center in Virginia [3].

Grid Optimization Through AI

GridCARE's approach combines proven technologies into one AI-native orchestrated system. Its partnership with AI infrastructure developers and Portland General Electric demonstrates the concept at scale: predictive AI models forecast renewable output and demand hours in advance, batteries and backup systems are coordinated strategically, and loads are managed dynamically across the grid [2]. Recent analysis by GridCARE shows that a 1 GW data center utilizing spare grid capacity can reduce rates for the average consumer by as much as 5%, or $100 per year [2].
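The fixed-cost argument is arithmetic: when network costs do not change, spreading them over more kilowatt-hours lowers the average rate. The sketch uses made-up figures, not GridCARE's model, and assumes the added consumption fits in spare capacity and triggers no new fixed investment, which is the condition the argument rests on. The hypothetical `implied_bill` helper also shows that "5%, or $100 per year" implies an average annual bill of about $2,000.

```python
def average_rate(fixed_cost: float, variable_cost_per_kwh: float, kwh_sold: float) -> float:
    """Average $/kWh when a fixed annual network cost is spread over all kWh sold."""
    return fixed_cost / kwh_sold + variable_cost_per_kwh


def implied_bill(savings_dollars: float, reduction_pct: float) -> float:
    """Annual bill implied by 'a savings of X dollars equals a Y% reduction'."""
    return savings_dollars * 100 / reduction_pct


if __name__ == "__main__":
    # Hypothetical utility: $1B/yr fixed network cost, $0.05/kWh energy cost.
    before = average_rate(1.0e9, 0.05, 1.0e10)   # 10 TWh sold per year
    # New load adds 2.5 TWh of off-peak sales and, by assumption, no new fixed cost.
    after = average_rate(1.0e9, 0.05, 1.25e10)
    print(round(before, 4), round(after, 4))
    # "5%, or $100 per year" implies an average bill of about $2,000 per year.
    print(implied_bill(100, 5))
```

In the toy case the average rate drops from $0.15 to $0.13 per kWh. If the new load instead required new substations or transmission, `fixed_cost` would rise too and the result could reverse.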

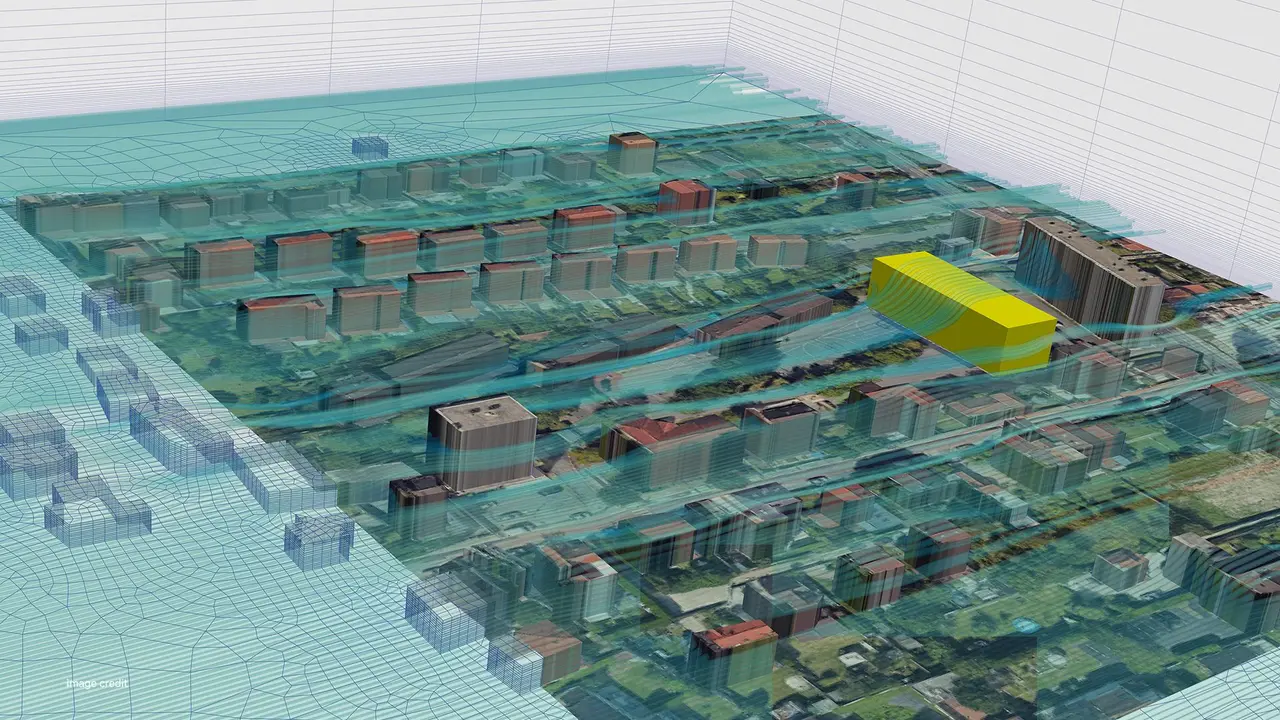

Source: NVIDIA

Emerald and NVIDIA's longer-term goal is for power-flexible AI factories to unlock up to 100 gigawatts of extra grid capacity from the existing U.S. power grid through increased efficiencies, enough to power roughly 75 million homes [3]. A complementary view holds that the shift means using AI to make the energy grid itself more intelligent, rather than relying only on making AI workloads flexible [2].
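The homes comparison implies an average draw per home. Dividing capacity by homes, and treating that capacity as if it were delivered continuously all year (a simplifying assumption):

$$
\frac{100 \times 10^{9}\ \text{W}}{75 \times 10^{6}\ \text{homes}} \approx 1{,}333\ \text{W per home},
\qquad
\tfrac{4}{3}\ \text{kW} \times 8{,}760\ \text{h} = 11{,}680\ \text{kWh per home per year}
$$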

Accelerating Energy Infrastructure

NVIDIA ecosystem partners showcased at CERAWeek how AI, simulation, and workforce innovation are accelerating the energy infrastructure needed to support the intelligence era [1]. Maximo, a solar robotics company incubated at AES, announced completion of a 100-megawatt robotic solar installation at AES' Bellefield site using AI-driven robotics developed with NVIDIA accelerated computing, NVIDIA Omniverse libraries, and the NVIDIA Isaac Sim framework [1].

TerraPower, working with SoftServe, previewed an NVIDIA Omniverse-powered digital twin platform designed to dramatically shorten advanced nuclear plant siting and design timelines, reducing design cycles from years to months [1]. Adaptive Construction Solutions announced a national registered apprenticeship initiative in collaboration with NVIDIA to build the skilled workforce required for AI factories and energy infrastructure [1].

As data centers head from less than 5% to 25% of the American power supply over a decade [3], distributed power generation approaches combining behind-the-meter power and traditional grid connection could address power consumption without overburdening communities [4]. The data reflected by test facilities and commercial sites in coming years may determine the kinds of energy demands expected of AI technology moving forward [4].
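Sivaram's projection can be restated as an annual growth rate. Taking the 5% starting share and the ten-year horizon at face value (since the starting share is "less than 5%", the true rate would be somewhat higher):

$$
\left(\frac{25\%}{5\%}\right)^{1/10} - 1 = 5^{1/10} - 1 \approx 0.175
$$

That is about 17.5% per year growth in data centers' share of supply. If total U.S. supply also grows over the decade, data center consumption itself would have to grow faster than its share.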

Summarized by Navi
