Intel's Neural Texture Compression slashes VRAM use by 18x as Nvidia shows 6.5GB reduced to 970MB

6 Sources

[1]

Intel introduces its own Neural Compression technology with a fallback mode that works on GPUs without dedicated AI cores -- early performance is on the level of Nvidia NTC

Intel's solution, in its most aggressive setting, provides a similar texture compression ratio to Nvidia's counterpart. Intel is developing its own version of neural compression technology, which will reduce the footprint of video game textures in VRAM and/or storage, similar to Nvidia's NTC. Intel's solution can achieve a 9x compression ratio in its quality mode and an 18x compression ratio in its more aggressive setting. The GPU maker also announced it will have two versions of the tech for different hardware, similar to XeSS. One will be tuned for its XMX engine while the other will be designed to run on traditional CPU and GPU cores at the expense of performance. Intel is using BC1 texture compression and linear algebra for the XMX-accelerated portion of its neural texture compression technology. The scheme is built around a "feature pyramid" of four BC1-compressed textures with MIP chains. Compared to traditional compression, Intel's neural compression uses neural network weights to compress textures with minimal loss of image quality. An encoder is responsible for encoding the textures, and a decoder is responsible for the decompression stage. By contrast, the fallback mode uses an FMA (fused multiply-add) implementation that runs slower than its linear algebra counterpart. Intel noted four ways developers can deploy its texture compression, aimed at accelerating install times, saving disk space, or saving VRAM. The first is aimed at saving space on a server and reducing download sizes: textures are compressed beforehand and uploaded to a server, and the client then downloads them and decompresses them to local storage. The next three revolve around gameplay itself: streaming in textures as the game loads, streaming textures during gameplay, and loading textures on the fly without holding them in VRAM (the last is likely aimed at low-VRAM GPUs).
Intel's compression tech has two modes of operation: a variant A mode that runs at higher quality and a variant B mode that sacrifices quality for higher compression. Intel claims variant A can take the first two 4096 x 4096, 64MB textures in a feature pyramid and compress them down to 10.7 MB each while retaining the 4K texture size. The remaining two 4K by 4K feature pyramid textures are reduced to half their resolution and compressed down to 2.7 MB each. With variant B, the textures are compressed more aggressively. The first texture in a feature pyramid is compressed down to 10.7 MB while retaining its resolution, the second texture is reduced to half its normal resolution and compressed down to 2.7 MB, and the third texture is reduced to quarter resolution and compressed to 0.68 MB. The last texture is reduced to one-eighth of its resolution and compressed down to 0.17 MB. In its own testing, Intel compared variant A and variant B, both built on BC1, against an industry-standard configuration of three BC1 textures plus one BC3 texture. Variant A achieved over a 9x compression ratio and variant B an 18x compression ratio, whereas the industry-standard configuration managed only 4.8x. Using variant B, Intel's new texture compression tech achieves almost the same compression ratios as Nvidia's own neural texture compression. It remains to be seen whether Nvidia's or Intel's solution provides better quality, but Intel is the only one of the three major Western GPU manufacturers to have a solution that works on graphics cards besides its own (for now).
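The sizes and ratios quoted above can be roughly reproduced with a few lines of arithmetic. The sketch below is an illustration, not Intel's method: it assumes the baseline is four uncompressed 64 MB textures without MIP chains, that each compressed size includes a full MIP chain (a 4/3 factor), and that MB means 1024 x 1024 bytes. These assumptions are not stated in the article; they are chosen because they match the published figures.

```python
MIB = 1024 * 1024

def block_compressed_mb(side, bytes_per_texel, mip_chain=True):
    """Size in MB of a square block-compressed texture."""
    base = side * side * bytes_per_texel
    # A full MIP chain adds 1/4 + 1/16 + ..., which converges to a 4/3 factor.
    return base * (4 / 3 if mip_chain else 1) / MIB

BC1 = 0.5  # BC1 stores each 4x4 block in 8 bytes
BC3 = 1.0  # BC3 stores each 4x4 block in 16 bytes

# Assumed baseline: four 4096x4096 textures at 4 bytes per texel (64 MB each).
uncompressed = 4 * (4096 * 4096 * 4) / MIB

# Variant A: two full-resolution and two half-resolution BC1 textures.
variant_a = 2 * block_compressed_mb(4096, BC1) + 2 * block_compressed_mb(2048, BC1)
# Variant B: full, half, quarter and one-eighth resolution BC1 textures.
variant_b = sum(block_compressed_mb(4096 >> i, BC1) for i in range(4))
# Conventional set: three BC1 textures plus one BC3 texture.
standard = 3 * block_compressed_mb(4096, BC1) + block_compressed_mb(4096, BC3)

for name, size in [("3xBC1 + 1xBC3", standard),
                   ("Variant A", variant_a),
                   ("Variant B", variant_b)]:
    print(f"{name}: {size:.1f} MB, {uncompressed / size:.1f}x")
```

Under these assumptions the script gives about 4.8x for the conventional set, 9.6x for variant A and 18.1x for variant B, in line with the reported "4.8x", "over 9x" and "18x". The per-texture sizes land within rounding of the quoted ones (the quarter-resolution texture computes to about 0.67 MB against the quoted 0.68 MB).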

[2]

Intel's new AI compression tech can significantly shrink game texture sizes and reduce VRAM use

Serving tech enthusiasts for over 25 years. TechSpot means tech analysis and advice you can trust. Forward-looking: Intel is pitching a new way to pack game textures that leans heavily on neural networks but still nods to traditional block compression. The company's Texture Set Neural Compression, or TSNC, is designed to sit on top of BC1-style schemes rather than replace them, trading extra math for more aggressive size reduction while trying to keep artifacts under control. TSNC is being positioned as a practical path for developers who already ship BC-compressed assets and want to squeeze more data into the same storage, bandwidth, or VRAM budgets without rethinking their pipelines. Instead of compressing each texture independently, TSNC trains a neural network on a set of related textures and then encodes them into a shared latent space. Intel stores that latent representation across four BC1-compressed pyramid levels and uses a three-layer MLP to rebuild the texture channels. The company says the technique can be used at several points in the life of a game, from install and load to streaming and per-pixel sampling, depending on whether teams are chasing smaller installs, lower bandwidth, or reduced VRAM use. TSNC targets both disk footprint and on-the-fly shading costs rather than focusing solely on one metric. Intel is currently working on two TSNC modes. Variant A is tuned for higher quality, while Variant B pushes compression ratios further. In Intel's internal tests, standard BC compression produced about a 4.8x ratio versus the bitmap control set, whereas TSNC Variant A reached more than 9x compression. Variant B went further, reaching more than 18x compression, or roughly double the ratio of Variant A and nearly four times that of standard block compression in that comparison. Those numbers come with the usual caveats about content and settings, but they illustrate the headroom Intel sees from treating a whole texture set as one optimization problem.
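To make the decode step described above more concrete, here is a minimal sketch of a three-layer MLP that turns latent values sampled from four pyramid levels into texture channels. Only the four levels and the three layers come from the article. The latent width per level, the hidden width, the output channel count, the ReLU activation and the random weights are all hypothetical placeholders, and none of this is Intel's actual decoder or API.

```python
import random

LEVELS = 4      # four BC1-compressed latent pyramid levels (from the article)
LATENT_CH = 3   # assumption: three values (RGB) sampled per level
HIDDEN = 16     # hypothetical hidden-layer width
OUT_CH = 9      # hypothetical output: albedo RGB + normal XYZ + ARM

def make_layer(n_in, n_out, rng):
    """Random weights stand in for a network trained on a texture set."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def dense(x, layer, relu):
    """One fully connected layer written as explicit multiply-adds."""
    weights, biases = layer
    out = []
    for row, bias in zip(weights, biases):
        acc = bias
        for w, v in zip(row, x):
            acc += w * v  # multiply-add (not actually fused in Python)
        out.append(max(acc, 0.0) if relu else acc)
    return out

def decode_texel(latents, layers):
    """Concatenate the per-level latents and run them through the MLP."""
    x = [v for level in latents for v in level]
    for i, layer in enumerate(layers):
        x = dense(x, layer, relu=(i < len(layers) - 1))
    return x

rng = random.Random(0)
layers = [make_layer(LEVELS * LATENT_CH, HIDDEN, rng),
          make_layer(HIDDEN, HIDDEN, rng),
          make_layer(HIDDEN, OUT_CH, rng)]
latents = [[rng.random() for _ in range(LATENT_CH)] for _ in range(LEVELS)]
channels = decode_texel(latents, layers)
print(len(channels), "channels decoded for one texel")
```

The inner loop is a chain of multiply-adds, which is why a generic fallback can be expressed with fused multiply-add instructions, while hardware with matrix units can evaluate the same layers as matrix products.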
That additional compression does introduce trade-offs. Intel reports that Variant A shows some precision loss in normals, while Variant B begins to show BC1 block artifacts in normals and ARM data. Using Nvidia's FLIP perceptual analysis tool, the company estimates around 5% perceptual loss for Variant A and roughly 6% to 7% for Variant B. In Intel's examples, Variant A is presented as the more balanced choice, with Variant B framed as the higher-compression, more artifact-prone option. On the hardware side, Intel has shown TSNC running on upcoming Panther Lake integrated graphics using a new decompression API that can target C, C++, or HLSL. The decoder supports two execution paths: a fallback fused multiply-add route for CPUs and GPUs, and a linear algebra path that taps XMX acceleration on supported Intel GPUs. In a Panther Lake microbenchmark on the integrated B390 GPU, Intel measured about 0.661 ns per pixel on the FMA path versus 0.194 ns per pixel on the XMX path, roughly a 3.4x gain. Intel stresses that TSNC is not only about shrinking textures on disk but also about demonstrating a viable runtime path for future Intel GPUs with XMX support. TSNC arrives in a landscape where other vendors are also exploring neural texture compression. Nvidia, for example, has discussed similar work and has mentioned compression ratios of up to 85%. Intel plans to release TSNC as a standalone SDK, with an alpha SDK slated for later this year, followed by a beta and then a public release.
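For a sense of scale, the per-pixel figures from the Panther Lake microbenchmark can be converted into per-frame costs. This is a rough illustration that assumes exactly one decode per screen pixel, which is a simplification; real workloads may decode more or fewer texels per pixel. The function name is made up for the example.

```python
FMA_NS_PER_PIXEL = 0.661  # fallback path, as reported by Intel
XMX_NS_PER_PIXEL = 0.194  # XMX path, as reported by Intel

def decode_ms(width, height, ns_per_pixel):
    """Milliseconds to decode one value per pixel (simplifying assumption)."""
    return width * height * ns_per_pixel / 1e6

speedup = FMA_NS_PER_PIXEL / XMX_NS_PER_PIXEL
print(f"XMX speedup over FMA: {speedup:.1f}x")

for label, (w, h) in {"1080p": (1920, 1080), "4K": (3840, 2160)}.items():
    fma = decode_ms(w, h, FMA_NS_PER_PIXEL)
    xmx = decode_ms(w, h, XMX_NS_PER_PIXEL)
    print(f"{label}: FMA {fma:.2f} ms, XMX {xmx:.2f} ms")
```

Under that assumption, a 1080p frame costs about 0.40 ms on the XMX path and about 1.37 ms on the FMA path, and a 4K frame about 1.61 ms versus 5.48 ms.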

[3]

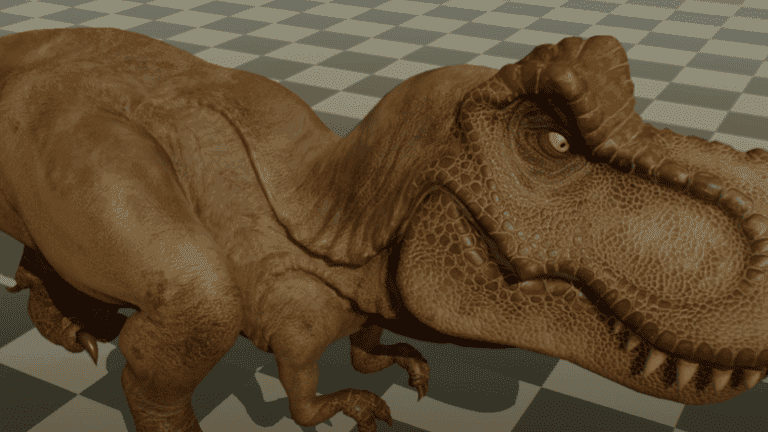

'Neural texture compression' might save gamers in a RAM-starved world

Microsoft plans DirectX integration and Intel expects an alpha SDK release this year, potentially solving high RAM costs and storage issues. PC gamers face an ongoing problem: more powerful games demand more powerful resources, all in the service of games that deliver more realistic experiences and graphics. But can gamers avoid paying outrageous sums of money to keep up? Texture compression might be an answer, shrinking down the size of games as well as allowing them to fit into the limited video memory of older, cheaper cards. Both Nvidia and Intel are working on ideas, which could be available on new and existing hardware in the coming months. All told, it's an exciting potential solution to the ongoing scarcity of RAM and video RAM, which is driving up prices (including those of video cards) and holding back new graphics-card releases. Both companies outlined their plans in recent weeks: Intel announced its Neural Texture Compression SDK this weekend, while Nvidia's related neural texture compression talk at GTC 2026 showed how textures could be effectively compressed using its hardware, too. 3D graphics is essentially a puppet show: Historically, each object is created by a framework of surfaces, and then the game designers tell your PC how to "cover" them with textures that are individually lit and colored. It's this texture data that can make up the bulk of the game's size, since each object can have several "maps" applied to it. A realistic-looking "brick" might be coded to tell the game which parts of the brick are shadowed, which are rough or glossy, and how those differences affect the color of the brick itself. These are called "maps." And they matter: A game like Hogwarts Legacy might require 58GB of data; the "High Definition Texture Pack" can require an additional 18.3GB. Loading textures in and out of memory can also cause stuttering in games, so reducing the size of those textures can improve the way the game plays, too.
Microsoft, which is trying to build a DirectX API that will allow this to happen, has already said that it plans to build support for neural texture compression into DirectX. It's assuming that developers will want to use what it calls both "small models" and "scene models" to allow next-generation scene rendering, which could include neural lighting and neural texture compression. In both, AI would be used to calculate how a scene should be drawn and shaded, rather than the hardware performing all of these calculations directly.

Two ways of shrinking game data

Intel engineers showed their Texture Set Neural Compression working in two variants, compressing textures up to 9X or over 17X versus uncompressed data, depending upon the method used. According to Intel graphics engineer Marissa Dubois, the textures could be decompressed at various points: at installation, while the game was loading, or even later. Like other compression techniques, it uses similarities in the texture map data to try to reduce the data size. Intel's presentation noted that there is a bit of what it calls "perceptual error": 6-7 percent for the second 17X variant, or 5 percent with the first. Both can either use the XMX cores inside the Intel Arc GPUs, or "fall back" to a more generic implementation that can be used on other CPUs and even GPUs. XMX inference on Panther Lake is about 3.4 times faster than the fallback method, Intel said. For now, this is a demo, Intel engineers said. An alpha software development kit (SDK) is scheduled for later this year, followed by a beta and then an eventual release. Nvidia has already unveiled DLSS 5, the controversial graphics enhancement which adds generative AI as a way to "improve" the quality of games, though the value of DLSS 5's "improvements" is very much in doubt. By contrast, Nvidia's Neural Texture Compression is explicitly deterministic, which means that it always reconstructs the same texture that the developer designed.
Nvidia does use a small neural network to reconstruct the data, running on its Tensor cores. The RTX Neural Texture Compression SDK is available for developers to use today. Nvidia showed off two demonstrations: neural texture compression and neural materials. Nvidia demonstrated how NTC could be used to compress a scene that previously required 6.5GB of VRAM to just 970 MB with NTC. It's not as clear what Nvidia is doing with neural materials, but it appears the company is trying to tell the GPU what the properties of a material actually are, and then compress those instructions. The graphics card would then essentially construct the material, speeding up the process from between 1.4X to 7.7X, according to Nvidia. AMD doesn't yet offer an SDK to lower memory consumption in games. However, in 2024 it published a paper on neural texture block compression, slicing texture sizes by 70 percent. All told, neural compression isn't something that can benefit you yet. But it appears to be just a few months off...and let's face it, it probably can't get here soon enough.

[4]

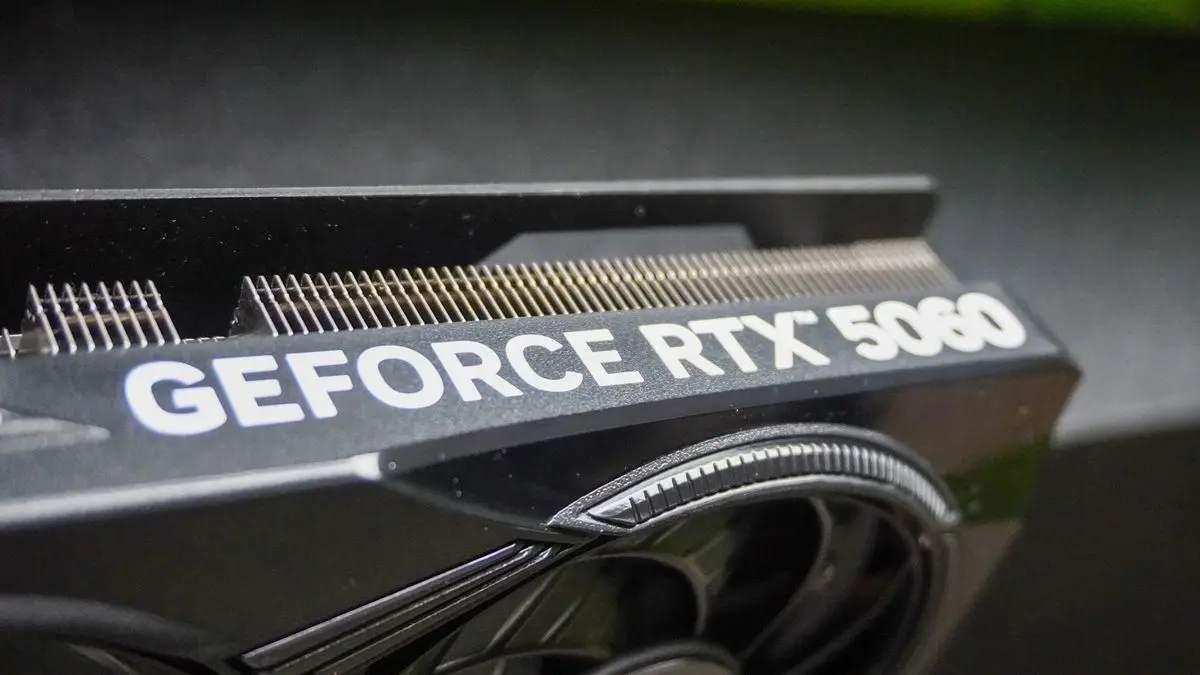

Nvidia shows neural compression can cut VRAM usage from 6.5GB to 970MB

Forward-looking: Nvidia's latest push into neural rendering is not just unfolding on keynote stages, but also in follow-up technical briefings. A recent video released days after the DLSS 5 presentation takes a closer look at how these systems are intended to work inside real engines. The breakdown points to a quieter but potentially more consequential shift for developers: moving texture and material data into compact neural representations to reduce memory use and improve performance, rather than relying primarily on an end-of-pipeline upscaler. Consider Nvidia's work on Neural Texture Compression (NTC). In its "Tuscan Wheels" demo, the company showed VRAM usage dropping from roughly 6.5GB with traditional BCN-compressed textures to 970MB using NTC, while keeping image quality close to the original. At that same 970MB memory budget, NTC preserved more detail than standard block compression. The result is smaller game installs, lighter patches, reduced download bandwidth, and more headroom for higher-quality assets on a given GPU. For studios dealing with texture bloat, that kind of reduction offers a practical advantage in a way that another layer of image reconstruction does not. Neural Materials (NM) applies a similar idea within the shading pipeline. Instead of storing a large set of texture channels and running heavier BRDF (Bidirectional Reflectance Distribution Function) math, Nvidia encodes material behavior into a compact latent representation that a small neural network decodes at render time. In one example, a material setup with 19 channels was reduced to eight, with Nvidia reporting 1.4x to 7.7x faster 1080p render times in that scene.
The company frames this work less as a way to invent new visuals and more as a method for storing and evaluating existing material data more efficiently, allowing for greater scene complexity within the same hardware budget. These techniques are part of a broader "neural rendering" roadmap that extends beyond DLSS 5. While DLSS 5 operates at the end of the pipeline, applying machine learning to the final image, Nvidia's more recent technical discussion focuses on embedding smaller neural networks deeper inside the engine. The goal is to assign compact models to specific tasks such as decoding textures, evaluating materials, and reducing memory traffic, rather than relying on a single, monolithic filter at the end of the frame. This approach also reflects a growing divide since DLSS 5 was introduced. Some developers and players remain wary of AI-driven reconstruction potentially overriding artistic intent, and would prefer AI to be used for optimization, image quality, and performance without reshaping a game's visual identity. With its emphasis on NTC and NM, Nvidia is making the case that AI could also provide meaningful advances for games from invisible parts of the pipeline: systems that shrink assets, accelerate shading, and free up resources, while leaving the look of a game in the hands of its creators.
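As a quick check on the "Tuscan Wheels" numbers, the sketch below converts the two VRAM figures into a ratio and a percentage. It assumes decimal units (6.5GB taken as 6,500MB), which the article does not specify. Note that the starting point here is already block-compressed, so the result is a gain over BCn rather than over uncompressed textures.

```python
BCN_MB = 6500  # "roughly 6.5GB" with BCn textures; decimal units assumed
NTC_MB = 970   # same scene using NTC

ratio = BCN_MB / NTC_MB
reduction_pct = (1 - NTC_MB / BCN_MB) * 100

print(f"NTC vs BCn: {ratio:.1f}x smaller, {reduction_pct:.0f}% less VRAM")
```

With these inputs the scene is about 6.7x smaller, or roughly 85% less VRAM than the block-compressed version.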

[5]

Intel Unveils AI Texture Compression Cutting Memory Use by Up to 18x

Intel is advancing texture compression techniques with its newly introduced Texture Set Neural Compression (TSNC) technology, a neural network-based approach designed to significantly reduce the size of texture assets used in modern graphics workloads. The technology replaces conventional BCn compression formats with an AI-driven encoding and decoding process, enabling textures to be stored in a compact neural representation and reconstructed in real time during rendering. According to Intel, TSNC can reduce texture sizes by as much as 18 times compared to traditional methods. This has direct implications for both storage and GPU memory usage, potentially allowing developers to pack more detailed assets into games without increasing VRAM requirements. The approach is particularly relevant as modern titles continue to push higher-resolution textures and more complex materials, placing increasing pressure on both storage and memory subsystems. The system offers two distinct operational modes to balance compression efficiency and image quality. Variant A is optimized for minimal visual degradation, achieving up to 9x compression with only slight differences that are difficult to detect in typical gameplay scenarios. Variant B focuses on maximum efficiency, reaching up to 18x compression while introducing a modest increase in visual artifacts. Using FLIP-based image quality analysis, Intel reports a quality reduction of around 5 percent for Variant A and up to 7 percent for Variant B. Performance testing was conducted on a Panther Lake platform equipped with Arc B390 integrated graphics, which includes XMX cores designed to accelerate AI workloads. Intel states that the neural decoder can reconstruct texture pixels in approximately 0.194 nanoseconds per pixel, effectively eliminating any noticeable latency during rendering. This level of performance suggests that TSNC can be integrated into real-time applications without impacting frame delivery or responsiveness.
Intel plans to introduce TSNC later this year, starting with an alpha release followed by further refinement through beta and stable versions. While no exact release timeline has been confirmed, the technology represents a clear step toward leveraging AI to optimize asset pipelines in gaming and other graphics-intensive applications.

[6]

Intel's Texture Set Neural Compression will also save big on VRAM usage when gaming

All the major players in the PC gaming hardware space, alongside Microsoft with DirectX, are actively investing in neural rendering technologies designed to benefit developers and gamers alike. Intel's Texture Set Neural Compression was demonstrated last year; however, an updated version was shown at the recent GDC event, and Intel noted it plans to make it available later this year. Like NVIDIA's Neural Texture Compression (NTC), one of the benefits of Intel's Texture Set Neural Compression is its ability to leverage AI and new technologies to significantly reduce the VRAM or memory footprint of traditional textures that use "block compression." AI-powered texture compression offers a fundamentally different approach, which is why Texture Set Neural Compression can compress textures by up to 18X compared to the original format. Texture Set Neural Compression (TSNC) is planned to be made available as a standalone SDK that takes standard BC1-compressed textures, the industry standard for texture compression in games, and compiles them for modern GPU and even CPU decompression. One potential use case for it has very little to do with in-game use, as TSNC could be leveraged to reduce game install and patch sizes. The neural network that powers TSNC stores latent data to reconstruct the original textures and can run during installation, when a game is loading, while streaming texture data, or during per-pixel sampling at runtime. The latter is all about saving big on VRAM; however, Intel notes that Texture Set Neural Compression supports multiple goals - whether that's to save on storage space, memory bandwidth, or VRAM usage. In the full presentation below, Intel's solution currently supports two modes: Variant A and Variant B, with the latter being a more aggressive form of compression. Still, with only a 7% loss in visual fidelity, achieving up to an 18X reduction in overall texture data size is an impressive achievement. 
Naturally, this new tech supports Intel GPUs with XMX support, including the company's new Panther Lake mobile chips with integrated Intel Arc B-Series graphics. However, there's a fallback path for non-Intel GPUs and even CPUs, so this technology isn't dependent on one specific type of hardware.

Intel unveiled Texture Set Neural Compression (TSNC), an AI-powered compression technology that can reduce game texture sizes by up to 18 times while maintaining image quality. Nvidia demonstrated similar capabilities with its Neural Texture Compression, cutting VRAM usage from 6.5GB to just 970MB in demo scenes. Both solutions address mounting storage and memory challenges as modern games demand increasingly detailed assets.

Intel Launches AI-Powered Compression Technology to Address VRAM Constraints

Intel has introduced its Texture Set Neural Compression (TSNC), an AI-powered compression technology designed to significantly reduce game texture sizes and VRAM demands [1]. The technology offers two operational modes: Variant A achieves over 9x compression ratios while maintaining high image quality, and Variant B pushes compression ratios beyond 18x with modest visual trade-offs [2]. This Neural Texture Compression approach replaces conventional block compression formats with an AI-driven encoding and decoding process that stores textures in a compact neural representation [5]. For game developers facing escalating asset size challenges, TSNC provides a practical path to reduce storage requirements, accelerate install times, and reduce VRAM use without requiring complete pipeline overhauls.

Source: TweakTown

How Intel's Solution Compares to Traditional Texture Compression Methods

Intel's texture compression technology leverages BC1 texture compression and linear algebra for the XMX-accelerated portion of its neural compression system [1]. Instead of compressing each texture independently, TSNC trains a neural network on related textures and encodes them into a shared latent space stored across four BC1-compressed pyramid levels [2]. In Intel's testing, standard BC compression produced approximately a 4.8x ratio, while TSNC Variant A reached more than 9x compression and Variant B exceeded 18x compression [2]. The company claims Variant A can compress two 4096 x 4096 64MB textures down to 10.7 MB each while retaining 4K resolution, with remaining textures reduced to half resolution and compressed to 2.7 MB [1].

Source: Guru3D

Fallback Mode Enables Broader Hardware Compatibility Beyond Intel GPUs

Intel has developed two execution paths for its decoder: a linear algebra path that utilizes XMX acceleration on supported Intel GPUs, and a fallback mode using a fused multiply-add implementation that runs on traditional CPU and GPU cores [1]. In a Panther Lake microbenchmark on the integrated B390 GPU, Intel measured approximately 0.661 nanoseconds per pixel on the FMA path versus 0.194 nanoseconds per pixel on the XMX path, delivering roughly a 3.4x performance gain [2]. This dual-path approach makes Intel the only major Western GPU manufacturer offering a neural compression solution that works on graphics cards beyond its own hardware [1]. The flexibility could prove critical for widespread adoption among game developers seeking to optimize game assets across diverse hardware configurations.

Nvidia NTC Demonstrates Dramatic VRAM Reduction in Real-World Scenarios

Nvidia showcased its Neural Texture Compression capabilities through a "Tuscan Wheels" demo, where VRAM usage dropped from approximately 6.5GB with traditional BCN-compressed textures to just 970MB using Nvidia NTC while preserving image quality close to the original [4]. The company's RTX Neural Texture Compression SDK is already available for developers to use today [3]. Nvidia uses a small neural network running on its Tensor cores to reconstruct texture data deterministically, ensuring developers retain full control over visual output [3]. Beyond texture compression, Nvidia also demonstrated Neural Materials technology, which encodes material behavior into compact latent representations. In one example, a material setup with 19 channels was reduced to eight, with Nvidia reporting 1.4x to 7.7x faster 1080p render times [4].

Source: PCWorld

Quality Trade-offs and Deployment Strategies for Optimizing Game Assets

Intel acknowledges that AI texture compression introduces perceptual trade-offs, with Variant A showing approximately 5% perceptual loss and Variant B exhibiting 6% to 7% quality reduction based on FLIP perceptual analysis [2]. Variant A shows some precision loss in normals, while Variant B begins to display BC1 block artifacts in normals and ARM data [2]. Intel outlined four deployment strategies for developers: compressing textures before uploading to servers to reduce download sizes, streaming textures during game loading, streaming during gameplay, and loading textures on the fly without holding them in VRAM, the last being particularly beneficial for low-VRAM GPUs [1]. These flexible deployment options allow developers to target specific bottlenecks, whether related to storage, bandwidth, or memory constraints.

DirectX Integration and SDK Availability Signal Industry-Wide Adoption

Microsoft plans to build support for neural texture compression into DirectX, creating an API that will enable developers to leverage both "small models" and "scene models" for next-generation rendering [3]. Intel plans to release TSNC as a standalone SDK, with an alpha version scheduled for later this year, followed by beta testing and eventual public release [2]. The technology can be integrated at various points in a game's lifecycle, from installation and loading to streaming and per-pixel sampling, depending on whether teams prioritize smaller installs, lower bandwidth, or reduced VRAM demands [2]. For PC gamers confronting escalating RAM costs and storage issues, these neural compression technologies offer tangible relief. Games like Hogwarts Legacy requiring 58GB of base data plus an additional 18.3GB for high-definition texture packs illustrate the mounting pressure on storage and memory subsystems [3]. By enabling more detailed assets within existing hardware budgets, neural compression could extend the viable lifespan of older graphics cards while reducing the financial burden of keeping pace with increasingly demanding titles.

Summarized by

Navi
