NVIDIA and Telecom Giants Deploy AI Grid to Transform Networks Into Distributed Inference Platforms

4 Sources

[1]

NVIDIA, Telecom Leaders Build AI Grids to Optimize Inference on Distributed Networks

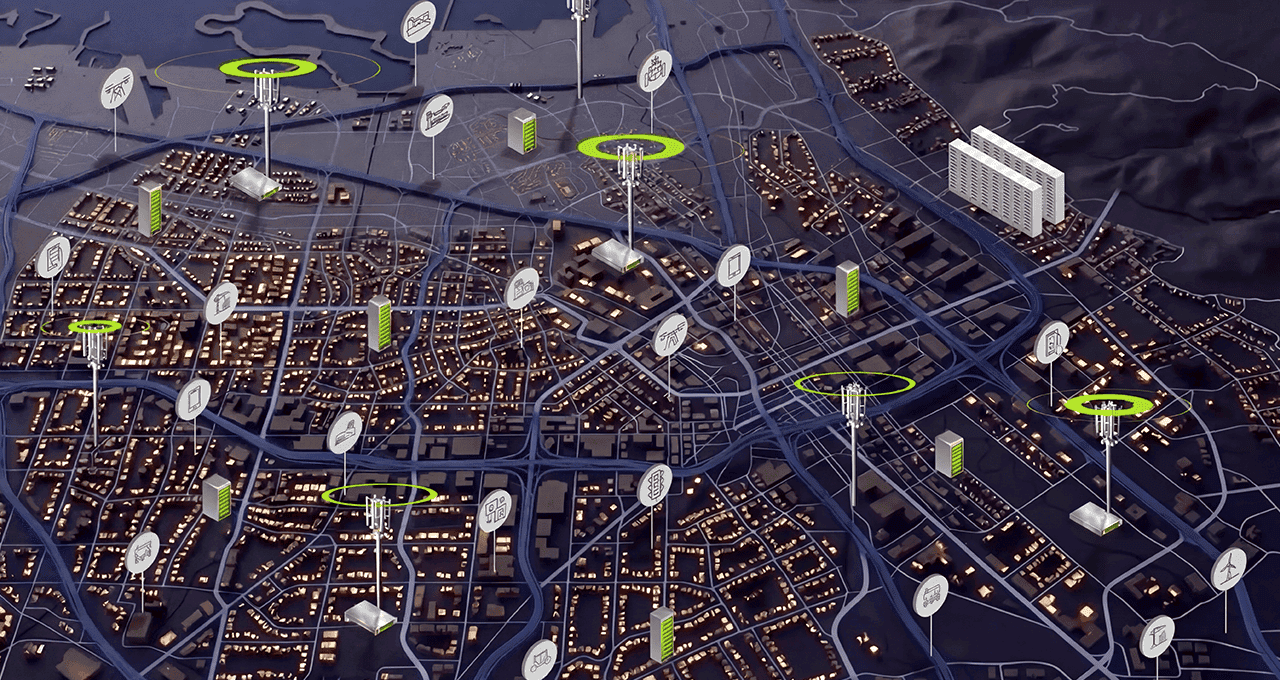

AT&T, T‑Mobile, Comcast, Spectrum and others are building AI grids using NVIDIA AI infrastructure, while Personal AI, Linker Vision, Serve Robotics and Decart are deploying real-time AI applications across the grid. As AI‑native applications scale to more users, agents and devices, the telecommunications network is becoming the next frontier for distributing AI. At NVIDIA GTC 2026, leading operators in the U.S. and Asia showed that this shift is underway, announcing AI grids -- geographically distributed and interconnected AI infrastructure -- using their network footprint to power and monetize new AI services across the distributed edge. Different operators are taking different paths. Many are starting by lighting up existing wired edge sites as AI grids they can monetize today. Others harness AI-RAN -- a technology that enables the full integration of AI into the radio access network -- as a workload and edge inference platform on the same grid. Telcos and distributed cloud providers run some of the most expansive infrastructure in the world: about 100,000 distributed network data centers worldwide, spanning regional hubs, mobile switching offices and central offices, with enough spare power to offer more than 100 gigawatts of new AI capacity over time. AI grids turn this existing real-estate, power and connectivity into a geographically distributed computing platform that runs AI inference closer to users, devices and data, where response and cost per token align best. This is more than an infrastructure upgrade -- it's a structural change in how AI is delivered, putting telecom networks at the center of scaling AI rather than just carrying its traffic. Global Operators Turn Distributed Networks Into AI Grids Across six major operators, AI grids are moving from concept to reality. AT&T, a leader in connected IoT with over 100 million connections across thousands of device types, is partnering with Cisco and NVIDIA to build an AI grid for IoT. 
By running AI on a dedicated IoT core and moving AI inference closer to where data is created, AT&T can support mission‑critical, real‑time applications like public‑safety use cases with Linker Vision, enabling faster detection, alerting and response while helping keep sensitive information under customer control at the network edge. "Scaling AI services that are both highly secure and accessible for enterprises and developers is a core pillar of our IoT connectivity strategy," said Shawn Hakl, senior vice president of product at AT&T Business. "By combining AT&T's business‑grade connectivity, localized AI compute and zero‑trust security while working with members of the NVIDIA Inception program and harnessing Cisco's AI Grid with NVIDIA infrastructure and Cisco Mobility Services Platform, we're bringing real‑time AI inference closer to where data is generated -- accelerating digital transformation and unlocking new business opportunities." Comcast is developing one of the nation's largest low‑latency broadband footprints into an AI grid for real‑time, hyper‑personalized experiences. Working with NVIDIA, Decart, Personal AI and HPE, Comcast has validated that its AI grid keeps conversational agents, interactive media and NVIDIA GeForce NOW cloud gaming responsive and economical even during demand spikes, with significantly higher throughput and lower cost per token. Spectrum has the network infrastructure to support an AI grid that spans more than 1,000 edge data centers and hundreds of megawatts of capacity less than 10 milliseconds away from 500 million devices. The initial deployment focuses on rendering high-resolution graphics for media production using remote GPUs embedded across Spectrum's fiber-powered, low-latency network. Akamai is building a globally distributed AI grid, expanding Akamai Inference Cloud across more than 4,400 edge locations with thousands of NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs. 
Akamai's AI grid orchestration platform matches each request to the right tier of compute, improving the token economics of inference while powering low-latency, real-time AI experiences for applications like gaming, media, financial services and retail.

Indosat Ooredoo Hutchison is connecting its sovereign AI factory with distributed edge and AI‑RAN sites across Indonesia to build an AI grid for local innovation. By running Sahabat-AI -- a Bahasa Indonesia-based platform -- on this grid within Indonesia's borders, Indosat can bring localized AI services closer to hundreds of millions of Indonesians across thousands of islands, giving local developers and startups a sovereign platform to build AI applications that are fast, culturally relevant and compliant by design.

T‑Mobile is working with NVIDIA to explore edge AI applications using NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, demonstrating how distributed network locations could support emerging AI-RAN and edge inference use cases. Developers including Linker Vision, Levatas, Vaidio, Archetype AI and Serve Robotics are already piloting smart‑city, industrial and retail applications on the grid, connecting cameras, delivery robots and city‑scale agents to real-time intelligence on the network edge. This demonstrates how cell sites and mobile switching offices can support distributed edge AI workloads while continuing to deliver advanced 5G connectivity.

New AI‑Native Services Put Telecom AI Grids to Work

AI grids are becoming foundational to a new class of AI‑native applications -- real‑time, hyper‑personalized, concurrent and token-intensive.

Personal AI is using NVIDIA Riva to power human‑grade conversational agents on the AI grid. By running small language models closer to users, it achieves sub-500 millisecond end-to-end latency and over 50% lower cost-per-token, enabling voice experiences that feel natural while remaining economically viable at scale. 
Linker Vision is transforming city operations by running real‑time vision AI on the AI grid. By processing thousands of camera feeds across distributed edge sites, it delivers predictable latency for live detection and instant alerting -- enabling safer, smarter cities with up to 10x faster traffic accident detection, 15x faster disaster response and sub‑minute alerts for unsafe crowd behavior. Decart is redefining hyper‑personalized distributed media by bringing real‑time video generation to AI grids. By running its Lucy models at the network edge, it achieves sub‑12-millisecond network latency, enabling interactive video streams and overlays that adapt instantly to each viewer, delivering smooth, immersive live video experiences even when viewership peaks. AI Grid Reference Design and Ecosystem The NVIDIA AI Grid Reference Design defines the building blocks -- including NVIDIA accelerated computing, networking and software platforms -- for deploying and orchestrating AI across distributed sites. A growing ecosystem of full‑stack partners including Cisco and infrastructure partners like HPE are bringing AI grid solutions to market on systems built with the NVIDIA RTX PRO 6000 Blackwell Server Edition. Armada, Rafay and Spectro Cloud are among the partners building an AI grid control plane to seamlessly orchestrate workloads across distributed AI infrastructure. "Physical AI is accelerating the shift from centralized intelligence to distributed decision making at the network edge," said Masum Mir, senior vice president and general manager provider mobility at Cisco. "Our partnership with NVIDIA brings together the full stack -- from NVIDIA GPUs to Cisco's networking and mobility capabilities -- enabling operators to power mission-critical applications, deliver real-time inferencing and participate in the AI value chain." 
Together, this ecosystem is helping telcos and distributed cloud providers redefine their role in the AI value chain -- transforming the network edge into a unified intelligence layer that runs, scales and monetizes AI workloads. Learn more about AI Grid.

[2]

Monetizing the AI opportunity: How Cisco AI Grid with NVIDIA transforms networks into AI platforms

AI has shifted from model training to enterprise-scale execution. In 2026, generative AI, agentic systems, and real-time inferencing are rapidly moving from pilot towards integration into core operations, products, and customer experiences -- representing the next major industry opportunity. From public safety, intelligent transportation, and smart manufacturing to smart cities, AI will be powering the physical world. As AI scales into production across industries, a structural gap has emerged. AI applications must operate closer to where the data is generated -- at the network edge, across factories, cities, and vehicles. Service providers are uniquely positioned to close this gap -- managing the distributed infrastructure required to deliver AI capabilities securely at national and global scale. The operator network is no longer simply a transport layer; it has become the central fabric of the digital economy, enabling AI applications where persistence, security, and simplicity are foundational to connectivity. Organizations that build a distributed AI foundation now will lead the next decade of real-time, AI-infused intelligence. This shift requires a new architecture at the service provider edge. Operators are adopting AI grids to leverage their existing networks to offer managed services for applications like physical AI with carrier-grade reliability, sovereignty, and compliance. Cisco today announced a new Cisco AI Grid with NVIDIA reference architecture to power these services, with AT&T as the first to bring these inferencing capabilities to market. However, realizing the promise of distributed AI requires overcoming several infrastructure constraints that enterprises increasingly need service providers to address. First, predictability. 
Real-time AI applications -- robotics, autonomous systems, video analytics, and public safety -- require millisecond precision, which means AI applications must run locally or in close proximity. Second, security. As AI extends across thousands of distributed endpoints, security also needs to be distributed and fused into the network. Third, operational complexity. Managing hybrid environments across data centers, edge locations, and access networks increases operational complexity, integration challenges, and time to value. AI innovation is accelerating faster than infrastructure modernization. Without a unified, secure, and intelligent architecture purpose-built for distributed AI, organizations risk fragmented deployments and adoption delays. We are introducing the Cisco AI Grid with NVIDIA, a full-stack AI architecture that enables service providers to deliver real-time AI inferencing services across distributed networks. Integrated with Cisco Mobility Services Platform and built on NVIDIA's AI Grid reference architecture, the grid helps operators participate in the AI value chain, starting with wireless and then expanding into heterogeneous access technology. Cisco AI Grid with NVIDIA integrates key foundational capabilities into a unified platform. It combines distributed compute powered by Cisco UCS servers with the NVIDIA RTX PRO 6000 Blackwell Server Edition; intelligent networking built on Cisco Nexus switching and Silicon One-based routing optimized for AI traffic; and embedded security across infrastructure layers. Additionally, the reference architecture aligns with Cisco Mobility Services Platform, incorporating inferencing applications, observability, orchestration, and lifecycle management. Purpose-built for high-impact use cases -- including public safety, video intelligence, and transportation -- the Cisco AI Grid with NVIDIA becomes a monetizable platform for service providers, while delivering predictability and operational simplicity to enterprises. 
"The AI grid is the next major opportunity for telecom operators as they turn the network into a distributed AI platform. Together, Cisco AI Grid with NVIDIA accelerated computing and their Mobility Services Platform give telcos a full-stack path to turn secure, real-time AI inference at the network edge into highly monetizable, AI-native enterprise services," said Chris Penrose, Global Head of Business Development for Telco, NVIDIA. The Cisco platform approach transforms AI from an integration challenge into a scalable business model. Instead of building distributed AI environments from scratch, organizations leverage pre-validated designs and a unified operations framework integrated with Cisco Mobility Services Platform. Operators can deliver AI inferencing services directly from edge locations to connected endpoints, supporting low-latency applications and precision across autonomous vehicles, robotics, IoT, and industrial automation. This reduces risk, standardizes deployment, and enables predictable scale across compute, networking, and security. Rather than remaining connectivity providers, service providers become active participants in the AI economy -- monetizing the network, delivering AI as a service, enabling ecosystem innovation, and capturing new revenue streams without building entirely new platforms. Cisco Mobility Services Platform operates one of the world's largest global IoT platforms, supporting 293 million mobile IoT subscribers -- including 130 million connected vehicles -- across leading service providers and more than 31,000 enterprises worldwide. The platform processes 2 petabytes of data and 123 million API calls daily, providing the scale and operational foundation for mission-critical services. Cisco AI Grid with NVIDIA builds on this foundation, extending Cisco Mobility Services Platform to enable service providers, developers, and ecosystem partners to deliver distributed AI inferencing services at the network edge. 
Leading operators are already capturing this opportunity. AT&T is bringing new edge AI inferencing capabilities to enterprises and developers, built on its dedicated IoT core powered by Cisco AI Grid with NVIDIA. This enables organizations to run AI inference closer to where data is generated -- enabling real-time decision-making across distributed environments. Through this integration, AT&T combines business-grade connectivity, advanced network features, localized AI compute, and zero-trust security to support mission-critical use cases -- the first successful deployment being near real-time AI inferencing for public safety at AT&T's Discovery District. "Scaling AI services that are both highly secure and accessible for enterprises and developers is a core pillar of our IoT connectivity strategy," said Shawn Hakl, SVP, Head of Product, AT&T Business. "By combining AT&T's business-grade connectivity, localized AI compute, and zero-trust security while working with members of the NVIDIA Inception program and harnessing Cisco AI Grid with NVIDIA infrastructure and Cisco Mobility Services Platform, we're bringing real-time AI inference closer to where data is generated -- accelerating digital transformation and unlocking new business opportunities." SoftBank Corp. is advancing its Telco AI Cloud vision to build social infrastructure for the AI era by leveraging its telecommunications foundation. Technologies such as Cisco AI Grid with NVIDIA highlight how distributed AI inferencing can be enabled at the network edge. By bringing AI capabilities closer to where data is generated, SoftBank supports real-time industrial AI for robotics, autonomous transportation, and public safety -- unlocking new growth and reinforcing its evolution into an AI platform provider. "Cisco AI Grid with NVIDIA is a key step toward enabling distributed, real-time AI at the edge. 
At SoftBank, our Telco AI Cloud vision integrates AI computing with nationwide telecom infrastructure to deliver scalable, low-latency AI services. This aligns with the shift toward AI-native telecom platforms, and we look forward to exploring how these technologies can accelerate the industry's transformation," said Ryuji Wakikawa, Vice President and Head of the Research Institute of Advanced Technology at SoftBank Corp. Infrastructure creates potential -- applications create value. Cisco is fostering a growing ecosystem of independent software vendors (ISVs) and developers building AI-powered solutions on Cisco AI Grid. This ecosystem forms a virtuous cycle: operators gain differentiated services, ISVs gain scalable infrastructure, and enterprises gain AI capabilities without complex integration hassle. Partners such as Linker Vision demonstrate the impact, delivering AI-powered video inferencing for smart cities, retail analytics, and industrial automation. By leveraging secure AI Grid within mobile operator networks, applications process data at the edge with millisecond latency, scale across thousands of endpoints, and deliver real-time insights. The AI infrastructure opportunity is massive and time-sensitive. Early movers will secure ecosystem partnerships, build durable customer relationships, and monetize edge assets ahead of competitors. Late adopters risk being confined to commoditized connectivity while others capture the AI value layer. Cisco AI Grid with NVIDIA provides a secure, modular, and pre-validated path forward. It streamlines implementation, brings operational consistency across distributed environments, and ensures infrastructure keeps pace with rapidly growing AI workloads. Additionally, it delivers predictable outcomes through programmable service access, reliable application performance, and trusted data governance. The AI era is here. The question is not whether to build distributed AI infrastructure -- but how fast. 
With a secure AI Grid from Cisco, organizations can move decisively, monetize intelligently, and lead confidently. The infrastructure powering the next decade of AI starts now -- and it starts with Cisco. Contact us for more information.

[3]

Akamai Launches AI Grid Intelligent Orchestration for Distributed Inference Across 4,400 Edge Locations

Akamai Inference Cloud is the industry's first global-scale implementation of NVIDIA AI Grid, intelligently routing AI workloads across its edge, regional, and core footprint to balance latency, cost, and performance Akamai Technologies today reached a major milestone in the evolution of artificial intelligence, unveiling the first global-scale implementation of NVIDIA® AI Grid reference design. By integrating NVIDIA AI infrastructure into Akamai's infrastructure, and leveraging intelligent workload orchestration across its network, Akamai intends to move the industry beyond isolated AI factories toward a unified, distributed grid for AI inference. The move marks a significant step in the evolution of Akamai's Inference Cloud, introduced late last year. As the first to operationalize the AI Grid, Akamai is rolling out thousands of NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, providing a platform to enable enterprises to run agentic and physical AI with the responsiveness of local compute and the scale of the global web. "AI factories have been purpose-built for training and frontier model workloads -- and centralized infrastructure will continue to deliver the best tokenomics for those use cases," said Adam Karon, Chief Operating Officer and General Manager, Cloud Technology Group, Akamai. "But real-time video, physical AI, and highly concurrent personalized experiences demand inference at the point of contact, not a round trip to a centralized cluster. Our AI Grid intelligent orchestration gives AI factories a way to scale inference outward -- leveraging the same distributed architecture that revolutionized content delivery to route AI workloads across 4,400 locations, at the right cost, at the right time." The Architecture of 'Tokenomics' At the heart of the AI Grid is an intelligent orchestrator that acts as a real-time broker for AI requests. 
Applying Akamai's expertise in application performance optimization to AI, this workload-aware control plane optimizes "tokenomics" by radically improving cost per token, time-to-first-token, and throughput. A major differentiator for Akamai is the ability for customers to access fine-tuned or sparsified models through its enormous global edge footprint, which offers a massive cost and performance advantage for the long tail of AI workloads. For example: * Cost Efficiency at Scale: Enterprises can dramatically reduce inference costs by matching workloads to the right compute tier automatically. The orchestrator applies techniques like semantic caching and intelligent routing to direct requests to right-sized resources, reserving premium GPU cycles for the workloads that demand them. Underpinning this is Akamai Cloud, built on open-source infrastructure with generous egress allowances to support data-intensive AI operations at scale. * Real-Time Responsiveness: Gaming studios can deliver AI-driven NPC interactions that maintain player immersion in milliseconds. Financial institutions can execute personalized fraud detection and marketing recommendations in the moment between login and first screen. Broadcasters can transcode and dub content in real time for global audiences. These outcomes are powered by Akamai's globally distributed edge network with over 4,400 locations with integrated caching, serverless edge compute, and high-performance connectivity that processes requests at the point of user contact, bypassing the round-trip lag of origin dependent clouds. * Production-Grade AI at the Core: Large language models, continuous post-training, and multi-modal inference workloads require sustained, high-density compute that only dedicated infrastructure can deliver. 
Akamai's multi-thousand GPU clusters, powered by NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, provide the concentrated horsepower for the heaviest AI workloads, complementing the distributed edge with centralized scale. The Continuum of Compute: From Core to Far-Edge Built on NVIDIA AI Enterprise and leveraging NVIDIA Blackwell architecture and NVIDIA BlueField DPUs for hardware-accelerated networking and security, Akamai is able to manage complex SLAs across edge and core locations: * The Edge (4,400+ locations): Delivers rapid response times for physical AI and autonomous agents. It will leverage semantic caching and serverless capabilities like Akamai Functions (WebAssembly-based compute) and EdgeWorkers to deliver model affinity and stable performance at the point of user contact. * Akamai Cloud IaaS and Dedicated GPU Clusters: Core public cloud infrastructure enables portability and cost savings for massive-scale workloads, while pods powered by NVIDIA RTX PRO 6000 Blackwell GPUs enable heavy-duty post-training and multi-modal inference. "New AI-native applications demand predictable latency and better cost efficiency at planetary scale," said Chris Penrose, Global VP - Business Development - Telco at NVIDIA. "By operationalizing the NVIDIA AI Grid, Akamai is building the connective tissue for generative, agentic, and physical AI, moving intelligence directly to the data to unlock the next wave of real-time applications." Powering the Next Wave of Real-Time AI Akamai is already seeing strong, early adoption for Akamai Inference Cloud across compute-intensive, latency-sensitive industries: * Gaming: Studios are deploying sub-50-millisecond inference for AI-driven NPCs and real-time player interactions. * Financial Services: Banks rely on the grid for hyper-personalized marketing and rapid recommendations in the critical moments when customers log in. 
* Media and Video: Broadcasters use the distributed network for AI-powered transcoding and real-time dubbing. * Retail and Commerce: Retailers are adopting the network for in-store AI applications and associate productivity tools at the point of sale. Driven by enterprise demand, the platform has also been validated by major technology providers, including a $200 million, four-year service agreement for a multi-thousand GPU cluster in a data center purpose-built for enterprise AI infrastructure at the metro edge. Scaling AI Factories from Centralized to Distributed The first wave of AI infrastructure was defined by massive GPU clusters in a handful of centralized locations, optimized for training. But as inference becomes the dominant workload and businesses across every industry focus on building AI agents, that centralized model faces the same scaling constraints that earlier generations of internet infrastructure encountered with media delivery, online gaming, financial transactions, and complex microservices applications. Akamai is solving each of those challenges through the same fundamental approach: distributed networking, intelligent orchestration, and purpose-built systems that bring content and context together as close as possible to the digital touchpoint. The result has been improved user experiences and stronger ROI for the enterprises that adopted the model. Akamai Inference Cloud applies that same proven architecture to AI factories, enabling the next wave of scaling and growth by distributing dense compute from core to edge. For enterprises, this means the ability to deploy AI agents that are context-aware and adaptive in their responsiveness. For the industry, it represents a blueprint for how AI factories evolve from isolated installations into a globally distributed utility. Availability Akamai Inference Cloud is available today for qualified enterprise customers. 
Organizations can learn more and request access at https://www.akamai.com/products/akamai-inference-cloud-platform. Akamai representatives will be available for demonstrations and meetings throughout NVIDIA GTC 2026 at the San Jose Convention Center, Booth 621 March 16-19, 2026.
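
As an illustration of the tier-matching idea in this release (routing each request to a right-sized compute tier within its latency budget), here is a minimal sketch. It is not Akamai's or NVIDIA's implementation: the `Tier` fields, the `route` function and every number in `TIERS` are hypothetical values chosen only to make the example run.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tier:
    """A hypothetical compute tier; all fields are illustrative assumptions."""
    name: str
    latency_ms: float          # assumed typical round-trip latency to the user
    cost_per_1k_tokens: float  # assumed relative cost of serving tokens
    max_model_params_b: float  # largest model (billions of parameters) it can host


# Made-up numbers for illustration; none come from the release.
TIERS = (
    Tier("edge", latency_ms=10.0, cost_per_1k_tokens=0.20, max_model_params_b=8.0),
    Tier("regional", latency_ms=40.0, cost_per_1k_tokens=0.12, max_model_params_b=70.0),
    Tier("core", latency_ms=120.0, cost_per_1k_tokens=0.08, max_model_params_b=400.0),
)


def route(latency_budget_ms: float, model_params_b: float,
          tiers: Sequence[Tier] = TIERS) -> Optional[Tier]:
    """Return the cheapest tier that meets the latency budget and fits the model.

    Returns None when no tier satisfies both constraints.
    """
    eligible = [
        t for t in tiers
        if t.latency_ms <= latency_budget_ms
        and t.max_model_params_b >= model_params_b
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda t: t.cost_per_1k_tokens)
```

Under these assumed numbers, a small model with a tight 15 ms budget can only be served from the edge, while the same model with a 50 ms budget goes to the cheaper regional tier. A production orchestrator would also weigh live load, caching and SLAs, which this sketch leaves out.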

[4]

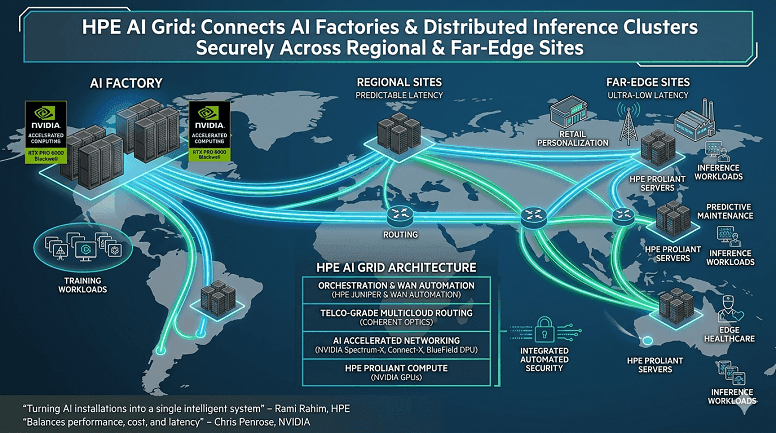

HPE transforms distributed AI factories into intelligent AI grid powered by NVIDIA

New solution has critical application for AI services and use cases that rely on low-latency, real-time connectivity, including retail personalization, predictive maintenance in manufacturing, localized edge inference in healthcare, and carrier-grade AI services HPE today announced the HPE AI Grid, an end-to-end solution built on the NVIDIA reference architecture to securely connect AI factories and distributed inference clusters across regional and far‑edge sites. The HPE AI Grid enables service providers to deploy and operate thousands of distributed inference sites, turning AI installations into a single intelligent system. AI‑native applications require predictable, low‑latency, distributed infrastructure. The HPE AI Grid solution, part of NVIDIA AI Computing by HPE portfolio, delivers predictable, ultra‑low latency performance at scale for real‑time AI services, zero‑touch provisioning, and automated security with integrated orchestration. "We're redefining how AI is delivered by moving intelligence to where data and users live and making the network the dependable fabric for real-time experiences," said Rami Rahim, executive vice president, president and general manager, Networking, HPE. "HPE AI Grid with NVIDIA gives service providers a secure, scalable way to operate distributed inference as a single system -- delivering predictable, ultra-low latency performance so customers can innovate faster, reduce risk, and create new services." "An AI Grid unifies geographically distributed AI clusters to place AI workloads where they run best -- balancing performance, cost, and latency across AI factories, regional sites, and the edge," said Chris Penrose, global vice president, Telco, NVIDIA. "Together with HPE, we're bringing that vision to life by combining NVIDIA's accelerated computing and networking with HPE's telco‑grade multicloud routing and edge infrastructure to create a single, intelligent fabric for distributed inference." 
HPE delivers end-to-end AI grid solution that speeds time to value for deployment

The HPE AI Grid aligns with NVIDIA AI Grid reference architecture to provide a unified hardware and software stack for service providers. The HPE AI Grid is differentiated by HPE's ability to offer full-stack AI servers and AI networks. The HPE AI Grid includes:

* HPE Juniper's telco-grade multicloud routing and coherent optics for predictable long-haul and metro connectivity; cloud-native and multi-tenant security; firewalls; WAN automation; and orchestration to deliver zero-touch deployment and lifecycle operations
* HPE ProLiant Compute edge and rack servers with NVIDIA accelerated computing, including NVIDIA RTX PRO 6000 Blackwell GPUs, as well as NVIDIA BlueField DPUs, Spectrum-X Ethernet switches, Connect-X SuperNICs, and AI blueprints for rapid AI inference

HPE AI Grid creates new opportunities for service providers

Service provider use cases -- from retail personalization and predictive maintenance to edge healthcare and carrier‑grade AI services -- demand predictable, ultra‑low latency connectivity. HPE AI Grid lets operators convert existing sites with power and connectivity into RAN‑ready AI grids, enabling distributed inference and new services at scale. As part of advancing its AI grid strategy, Comcast announced today new AI field trials on its highly distributed network for real-time edge AI inferencing to unlock faster, more responsive experiences for the next wave of AI applications. The initial trials addressed several use cases, including leveraging HPE ProLiant servers running small language models from Personal AI, part of HPE's Unleash AI partner program, on NVIDIA GPUs to deliver AI-powered "front desk" services for small businesses. 
Industry Reactions to HPE AI Grid with NVIDIA "HPE and NVIDIA have been strategic partners in building TELUS' Sovereign AI Factory, Canada's fastest and most powerful supercomputer, which is enabling researchers, businesses, and institutions to innovate at scale," said Nazim Benhadid, Executive Vice-president and Chief Technology Officer, TELUS. "As TELUS looks to bring AI closer to customers, advance AI-powered network optimization and deliver faster service, HPE AI Grid powered by NVIDIA is a solution we are interested in exploring further as we continue our transformational AI journey." "Our customers increasingly expect millisecond responsiveness, low-latency connectivity and comprehensive security to support their applications and services," said Neil McRae, CTIO at CityFibre. "We're exploring how AI Grid from HPE, based on NVIDIA's reference architecture, could support distributed AI inferencing and bring intelligence closer to users and data. By leveraging our fiber network assets, we see potential to combine high-performance connectivity with intelligent services for customers." HPE Financial Services accelerates AI-ready networking and distributed AI infrastructure To further accelerate adoption of AI‑ready networks and distributed AI infrastructure, HPE Financial Services is also extending its 0% financing on networking AIOps software including HPE Juniper Networking Mist, and its financing providing the equivalent of 10% cash savings on AI‑ready networking leases.

Major telecom operators including AT&T, Comcast, T-Mobile, and Spectrum are partnering with NVIDIA to build AI grids that transform their existing network infrastructure into distributed AI inference platforms. Using NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs across thousands of edge locations, these AI grids enable real-time AI services closer to users while improving cost efficiency and latency for applications ranging from IoT to cloud gaming.

Major Telecom Operators Build AI Grid Infrastructure With NVIDIA

Telecommunications networks are undergoing a structural transformation as leading operators partner with NVIDIA to deploy AI grids that convert existing infrastructure into distributed AI inference platforms. AT&T, T-Mobile, Comcast, Spectrum, and Asian operators announced at NVIDIA GTC 2026 that they're building geographically distributed and interconnected AI infrastructure using NVIDIA technology to power and monetize real-time AI services across the network edge [1].

The shift addresses a critical infrastructure gap as AI applications scale to more users, agents, and devices. Telecom operators and distributed cloud providers manage approximately 100,000 distributed network data centers worldwide, spanning regional hubs, mobile switching offices, and central offices. These facilities hold enough spare power to offer more than 100 gigawatts of new AI capacity over time, according to NVIDIA [1]. By running AI inference closer to where data is generated, AI grids deliver better response times and improved cost per token compared to centralized data centers.

Cisco and HPE Deploy Full-Stack Solutions for Distributed AI Inference

Cisco announced Cisco AI Grid with NVIDIA, a reference architecture that transforms networks into AI platforms for service providers. Built on Cisco Mobility Services Platform and integrated with Cisco UCS servers featuring NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, the solution combines distributed compute, intelligent networking through Cisco Nexus switching and Silicon One-based routing, and embedded security [2]. AT&T became the first operator to bring these inferencing capabilities to market, partnering with Cisco and NVIDIA to build an AI grid for IoT applications.

"Scaling AI services that are both highly secure and accessible for enterprises and developers is a core pillar of our IoT connectivity strategy," said Shawn Hakl, senior vice president of product at AT&T Business. The company manages over 100 million IoT connections across thousands of device types and is positioning AI inferencing at the network edge to support mission-critical applications like public safety use cases with Linker Vision [1].

HPE introduced the HPE AI Grid, an end-to-end solution aligned with NVIDIA's reference architecture to connect AI factories and distributed inference clusters across regional and far-edge sites. The solution includes HPE Juniper's telco-grade multicloud routing, coherent optics for predictable connectivity, and HPE ProLiant Compute servers with NVIDIA accelerated computing including Blackwell GPUs and BlueField DPUs [4]. Comcast announced AI field trials using HPE ProLiant servers running small language models from Personal AI on NVIDIA GPUs to deliver AI-powered services for small businesses.

Akamai Launches Global-Scale Intelligent AI Grid Across 4,400 Edge Locations

Akamai Technologies reached a milestone by unveiling the first global-scale implementation of the NVIDIA AI Grid reference design, expanding Akamai Inference Cloud across more than 4,400 edge locations with thousands of NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs. The intelligent AI grid uses workload orchestration to route AI workloads across edge, regional, and core infrastructure, optimizing what Akamai calls "tokenomics" by improving cost per token, time-to-first-token, and throughput.

"AI factories have been purpose-built for training and frontier model workloads, but real-time video, physical AI, and highly concurrent personalized experiences demand inference at the point of contact," said Adam Karon, Chief Operating Officer at Akamai. The platform's intelligent orchestrator acts as a real-time broker for AI requests, applying semantic caching and intelligent routing to direct workloads to right-sized resources.
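The brokering idea can be illustrated with a small Python sketch. It is not Akamai's implementation: the `Tier` fields, the latency and cost figures, and the `route` and `PromptCache` names are all hypothetical. It also swaps true semantic caching (which would match prompts by meaning, typically through embeddings) for a much simpler normalized-text cache. The routing rule shown is one plausible policy: pick the cheapest tier that still meets a request's latency budget and has spare capacity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    latency_ms: float          # assumed round-trip latency to the user
    cost_per_1k_tokens: float  # assumed serving cost, arbitrary units
    free_slots: int            # assumed spare concurrent capacity


def route(tiers, latency_budget_ms):
    """Return the cheapest tier with spare capacity that meets the budget, or None."""
    eligible = [
        t for t in tiers
        if t.free_slots > 0 and t.latency_ms <= latency_budget_ms
    ]
    return min(eligible, key=lambda t: t.cost_per_1k_tokens, default=None)


class PromptCache:
    """Toy stand-in for semantic caching: matches prompts after
    lowercasing and collapsing whitespace, not by meaning."""

    def __init__(self):
        self._store = {}

    @staticmethod
    def _key(prompt):
        return " ".join(prompt.lower().split())

    def get(self, prompt):
        return self._store.get(self._key(prompt))

    def put(self, prompt, response):
        self._store[self._key(prompt)] = response


# Hypothetical tiers; the numbers are for illustration only.
TIERS = [
    Tier("edge", latency_ms=8.0, cost_per_1k_tokens=0.30, free_slots=2),
    Tier("regional", latency_ms=25.0, cost_per_1k_tokens=0.20, free_slots=10),
    Tier("core", latency_ms=80.0, cost_per_1k_tokens=0.10, free_slots=100),
]
```

Under these assumed numbers, a request with a 10 ms budget can only be served at the edge, while a batch-style request that tolerates 100 ms falls through to the cheaper core tier. A cache hit would skip routing altogether.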

The architecture enables gaming studios to deliver AI-driven NPC interactions in milliseconds, financial institutions to execute personalized fraud detection, and broadcasters to transcode and dub content in real time. Built on NVIDIA AI Enterprise and leveraging NVIDIA Blackwell architecture with BlueField DPUs for hardware-accelerated networking and security, Akamai manages complex service-level agreements across edge and core locations.

Spectrum and Regional Operators Deploy Low Latency AI Infrastructure

Spectrum is building an AI grid spanning more than 1,000 edge data centers with hundreds of megawatts of capacity positioned less than 10 milliseconds away from 500 million devices. The initial deployment focuses on rendering high-resolution graphics for media production using remote GPUs embedded across Spectrum's fiber-powered, low-latency network [1].

Comcast is developing one of the nation's largest low-latency broadband footprints into an intelligent AI grid for real-time, hyper-personalized experiences. Working with NVIDIA, Decart, Personal AI, and HPE, Comcast validated that its AI grid keeps conversational agents, interactive media, and NVIDIA GeForce NOW cloud gaming responsive and economical during demand spikes, delivering significantly higher throughput and lower cost per token [1].

Indosat Ooredoo Hutchison is connecting its sovereign AI factory with distributed edge and AI-RAN sites across Indonesia to build an intelligent AI grid for local innovation. By running Sahabat-AI, a Bahasa Indonesia-based platform, within Indonesia's borders, Indosat aims to bring localized AI services closer to hundreds of millions of Indonesians across thousands of islands [1].

Industry Implications for AI Inferencing at the Network Edge

Chris Penrose, Global Head of Business Development for Telco at NVIDIA, emphasized that the AI grid represents "the next major opportunity for telecom operators as they turn the network into a distributed AI platform." The shift transforms networks into AI platforms rather than simply transport layers for AI traffic [2].

Service providers are positioned to address three critical infrastructure constraints: predictability for real-time AI applications like robotics and video analytics that require millisecond precision; security distributed across thousands of endpoints; and operational complexity in managing hybrid environments. The unified architecture enables operators to deliver AI inferencing services directly from edge locations to connected endpoints, supporting low latency AI applications across autonomous vehicles, robotics, IoT, and industrial automation [2].

T-Mobile is exploring edge AI applications using NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs to demonstrate how distributed networks could support emerging AI-RAN and edge inference use cases. As AI-native applications proliferate across industries from public safety and intelligent transportation to smart manufacturing and smart cities, the distributed AI inference model positions telecom networks at the center of scaling AI workloads rather than merely carrying traffic.

Summarized by Navi
