Photonic Startups Attract Major Investments as AI Data Centers Seek Efficiency Boost

5 Sources

[1]

Why VCs are suddenly hot on photonics startups

Lightmatter announced it had raised $400 million in a venture capital deal led by T. Rowe Price that values the seven-year-old company at $4.4 billion. And Xscape Photonics announced it had closed a $44 million investment round led by IAG Capital, with the venture capital arm of network equipment maker Cisco and Nvidia among its other investors. No valuation figures were announced as part of either Xscape's or Oriole Networks' fundraises, both of which were Series A rounds.
The reason photonics is suddenly in vogue has to do with a series of challenges tech companies are encountering as they seek to build ever larger data centers stuffed with hundreds of thousands of specialized chips -- in most cases, graphics processing units (or GPUs) -- used for training and running AI applications. Conventional networking and switching equipment, which primarily uses copper wiring through which electricity is passed to convey information, is itself becoming a bottleneck to how quickly and easily large AI models can be trained. In other cases, fiberoptics are used, but with only a few colors of light traveling in a single cable, which also constrains how much information can be transmitted. AI models based on neural networks must shuttle a lot of data continuously back and forth through the entire network. But moving all this data between GPUs, including those that might be located in distant server racks, depends on wiring pathways and the capacity of switching equipment to send data zipping to the right place. The way many large AI supercomputing clusters are wired, data traveling from one computer chip to another located elsewhere in the cluster might have to make as many as nine hops through different network switches before it reaches its destination, George Zervas, Oriole Networks' cofounder and chief technology officer, said.
The larger the AI model and the more server racks involved, the more likely it is that this roadway of wiring will become congested, similar to how traffic jams delay commuters. For the largest AI models, 90% of their training time can consist of waiting for data in transit across the supercomputing cluster as opposed to the time it actually takes the chips to run the necessary computations. Conventional networking equipment, which uses electricity to transmit data, also contributes significantly to the energy requirements of data centers, both by directly consuming power, and because the copper wiring dissipates heat, meaning more energy is required to cool the data center. In some data centers, the networking equipment alone can account for 20% of the facility's overall energy consumption. Depending on what energy source is used to power the data center, this electrical demand can result in a colossal carbon footprint. Meanwhile, many data centers require vast quantities of water to help cool the racks of chips used to run AI applications. Cloud computing companies are anticipating power needs for future AI data centers that are driving them to extreme lengths to secure enough energy. Google, Amazon, and Microsoft have all struck deals that would see nuclear reactors dedicated solely to powering their data centers. Meanwhile, OpenAI had briefed the U.S. government on a plan to possibly construct multiple data centers that would each consume five gigawatts of power annually, more than the entire city of Miami currently does. Photonics potentially solves all of these challenges. Using fiberoptics to transmit data in the form of light instead of electricity makes it possible to connect more of the chips in a supercomputing cluster directly to one another, reducing or eliminating the need for switching equipment. Photonics also uses far less electricity to transmit data than electronics and photonic signals produce no heat in transit. 
Different photonic companies have different ideas about how to use the technology to revamp data centers. Lightmatter is creating a product called Passage that is a light-conducting surface onto which multiple AI chips could be mounted, allowing photonic data transmission between any of the chips on that Passage surface without the need for cabled connections or copper wiring. Fiberoptic cabling would then be used to connect multiple Passage products in a single server rack and for the connections between racks. Xscape envisions using photonic equipment and cabling that can transmit and detect hundreds of different colors of light through a single cable, vastly increasing the amount of data that could flow through the network at any one time. But Oriole Networks may have the most sweeping vision, using photonics to connect every AI chip in a supercomputing cluster to every other chip in the entire cluster. This could result in training times for the largest AI models -- such as OpenAI's GPT-4 -- that are up to 10 to 100 times faster, Oriole Networks said. It can also mean networks can be trained using a fraction of the power that today's AI supercomputing clusters consume. To accomplish this, Oriole envisions not just new photonic communication equipment but also new software to help program the network, and a new hardware device that can act as the "brain" for the entire network, determining which packets of information will need to be sent between which chips at exactly what moment. "It's completely radical," Oriole CEO James Regan said. "There's no electrical packet switching in the network at all."
Oriole Networks was spun out from University College London in 2023, but it relies on technology that its founders, in particular Zervas, pioneered over the past two decades. In addition to Zervas, who is a veteran photonics researcher, UCL PhD student Alessandro Ottino and post-doctoral fellow Joshua Benjamin, who is an expert in designing communication networks, cofounded the company. They brought on Regan, an experienced entrepreneur who helped create a previous photonics company, as CEO. The company currently employs 30 people. It raised an initial Seed funding round of $13 million in March from a group of investors that includes the venture capital arm of XTX Markets, which operates one of the largest GPU clusters in Europe. UCL Technology Fund, XTX Ventures, Clean Growth Fund, and Dorilton Ventures also all participated in both the Seed round and the most recent Series A investment. Regan said that Oriole is using other companies to manufacture the photonic equipment it is designing, which will enable the company to keep its capital requirements lower than would otherwise be the case and enable the company to move faster. He said it aims to have initial equipment with potential customers to test in 2025. The company has held discussions with most of the "hyperscale" cloud service providers as well as a number of semiconductor companies manufacturing GPUs and AI chips. Ian Hogarth, the partner at Plural who led the Series A investment, said that he was drawn to Oriole Networks because it represented "a paradigm shift" rather than an incremental approach to making AI data centers more energy and resource efficient. Hogarth, who is also the chair of the U.K.'s AI Safety Institute, said he was impressed by the "raw ambition and speed that [Oriole's] founders have brought to the problem." He said the company fit in with other investments Plural has made into companies helping to combat climate change. Finally, he said he felt it was important for Europe "to have really hard assets when it comes to the evolution of the compute stack, and to not squander the opportunity to translate brilliant inventions from European universities, UK universities, into iconic companies."
Of course, there's been hype about photonics before, and it hasn't always panned out. During the first internet boom of the late 1990s and early 2000s, there was also great excitement about the possibility of photonics to become the primary backbone for the internet, including for switching equipment. Venture capitalists back then also poured money into the sector. But most of those investments failed to pan out because of a lack of maturity in the photonics industry. Parts were difficult and expensive to manufacture and had higher failure rates than semiconductors and more conventional electronic switching equipment. Then, when the dot com bubble burst, it largely took the photonics boom down with it. Regan says that things are different today. The ecosystem of companies making photonic integrated circuits and photonic equipment is more robust than it was and the technology far more reliable, he said. A decade ago, a company like Oriole Networks would have had to manufacture much of the equipment it wants to produce itself -- a much more capital intensive and risky proposition. Today, there is a reliable supply chain of contract manufacturers that can execute designs developed by Oriole, he said.

[2]

Lightmatter's $400M round has AI hyperscalers hyped for photonic datacenters | TechCrunch

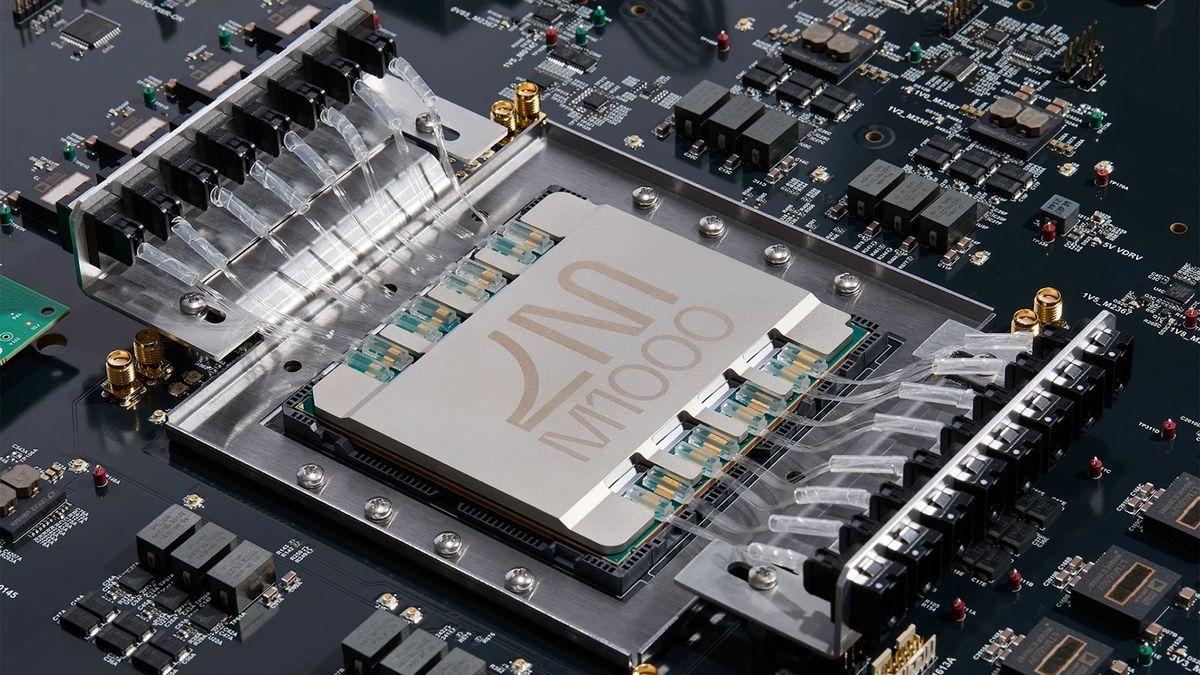

Photonic computing startup Lightmatter has raised $400 million to blow one of modern datacenters' bottlenecks wide open. The company's optical interconnect layer allows hundreds of GPUs to work synchronously, streamlining the costly and complex job of training and running AI models. The growth of AI and its correspondingly immense compute requirements have supercharged the datacenter industry, but it's not as simple as plugging in another thousand GPUs. As high performance computing experts have known for years, it doesn't matter how fast each node of your supercomputer is if those nodes are idle half the time waiting for data to come in. The interconnect layer or layers are really what turn racks of CPUs and GPUs into effectively one giant machine -- so it follows that the faster the interconnect, the faster the datacenter. And it is looking like Lightmatter builds the fastest interconnect layer by a long shot, by using the photonic chips it's been developing since 2018. "Hyperscalers know if they want a computer with a million nodes, they can't do it with Cisco switches. Once you leave the rack, you go from high density interconnect to basically a cup on a string," Nick Harris, CEO and founder of the company, told TechCrunch. The state of the art, he said, is NVLink and particularly the NVL72 platform, which puts 72 Nvidia Blackwell units wired together in a rack, capable of a maximum of 1.4 exaFLOPs at FP4 precision. But no rack is an island, and all that compute has to be squeezed out through 7 terabits of "scale up" networking. Sounds like a lot, and it is, but the inability to network these units faster to each other and to other racks is one of the main barriers to improving performance.
"For a million GPUs, you need multiple layers of switches, and that adds a huge latency burden," said Harris. "You have to go from electrical to optical to electrical to optical... the amount of power you use and the amount of time you wait is huge. And it gets dramatically worse in bigger clusters." So what's Lightmatter bringing to the table? Fiber. Lots and lots of fiber, routed through a purely optical interface. With up to 1.6 terabits per fiber (using multiple colors), and up to 256 fibers per chip... well, let's just say that 72 GPUs at 7 terabits starts to sound positively quaint. "Photonics is coming way faster than people thought -- people have been struggling to get it working for years, but we're there," said Harris. "After seven years of absolutely murderous grind," he added. The photonic interconnect currently available from Lightmatter does 30 terabits, while the on-rack optical wiring is capable of letting 1,024 GPUs work synchronously in their own specially designed racks. In case you're wondering, the two numbers don't increase by similar factors because a lot of what would need to be networked to another rack can be done on-rack in a thousand-GPU cluster. (And anyway, 100 terabit is on its way.) The market for this is huge, Harris pointed out, with every major datacenter company from Microsoft to Amazon to newer entrants like xAI and OpenAI showing an endless appetite for compute. "They're linking together buildings! I wonder how long they can keep it up," he said. Many of these hyperscalers are already customers, though Harris wouldn't name any. "Think of Lightmatter a little like a foundry, like TSMC," he said. "We don't pick favorites or attach our name to other people's brands. We provide a roadmap and a platform for them -- just helping grow the pie." But, he added coyly, "you don't quadruple your valuation without leveraging this tech," perhaps an allusion to OpenAI's recent funding round valuing the company at $157 billion, but the remark could just as easily be about his own company.
This $400 million D round values it at $4.4 billion, a similar multiple of its mid-2023 valuation that "makes us by far the largest photonics company. So that's cool!" said Harris. The round was led by T. Rowe Price Associates, with participation from existing investors Fidelity Management and Research Company and GV. What's next? In addition to interconnect, the company is developing new substrates for chips so that they can perform even more intimate, if you will, networking tasks using light. Harris speculated that, apart from interconnect, power per chip is going to be the big differentiator going forward. "In ten years you'll have wafer-scale chips from everybody -- there's just no other way to improve the performance per chip," he said. Cerebras is of course already working on this, though whether they are able to capture the true value of that advance at this stage of the technology is an open question. But for Harris, seeing the chip industry coming up against a wall, he plans to be ready and waiting with the next step. "Ten years from now, interconnect is Moore's Law," he said.
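As a rough check on the fiber figures Harris quotes above, the per-fiber and per-chip numbers can be multiplied out. This is only a back-of-envelope sketch: it assumes every fiber runs at the quoted maximum at once, and the comparison with the 7-terabit rack figure is loose, since one number is per chip and the other is per rack. The constant and function names are illustrative, not Lightmatter's.

```python
# Back-of-envelope aggregate bandwidth from the figures quoted in the article.
# Assumption: all fibers on a chip run at the quoted per-fiber maximum together.

TBPS_PER_FIBER = 1.6       # "up to 1.6 terabits per fiber"
FIBERS_PER_CHIP = 256      # "up to 256 fibers per chip"
NVL72_SCALE_UP_TBPS = 7.0  # "7 terabits of 'scale up' networking"


def peak_chip_bandwidth_tbps(tbps_per_fiber: float, fibers: int) -> float:
    """Theoretical upper bound on per-chip optical bandwidth, in terabits."""
    return tbps_per_fiber * fibers


peak = peak_chip_bandwidth_tbps(TBPS_PER_FIBER, FIBERS_PER_CHIP)
ratio = peak / NVL72_SCALE_UP_TBPS

print(f"Theoretical peak per chip: {peak:.1f} terabits")
print(f"Ratio to the quoted rack figure: {ratio:.1f}x")
```

The theoretical ceiling comes out well above the 30 terabits the article says the currently available interconnect delivers, which fits the "up to" wording in the quoted figures.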

[3]

Lightmatter raises $400M at $4.4B valuation for next-gen photonic data center networking - SiliconANGLE

Lightmatter Inc., a startup building photonic supercomputing products, today announced it has raised $400 million in a late-stage round, raising the company's valuation to $4.4 billion. The Series D round was led by T. Rowe Price Associates and joined by existing investors, including Fidelity Management & Research Co. and GV, the venture capital arm of Alphabet Inc. The new round brings the total raised by the company to $850 million to date. Lightmatter builds full-stack photonic technologies, including networking interconnect for how computer chips communicate and trade data. The company looks to provide higher bandwidth, lower latency connections between chips using optical fiber connections as data centers scale to tackle larger artificial intelligence and high-performance computing workloads. In less than a decade, graphics processing units have gotten more than 1,000 times faster in terms of operations per second. They have outstripped interconnect networking in terms of sheer bandwidth, Lightmatter Chief Executive Nick Harris told SiliconANGLE in an interview. Modern-day AI large language models have become so large and complex that they sometimes require thousands or tens of thousands of GPUs working in concert, and they spend part of their lifetime idle waiting for data. "If you're one of these GPUs, your life is like: OK, I'm waiting for data from memory, crunch, crunch, crunch, OK, waiting for data from another GPU, crunch, crunch, crunch, and just sitting there," said Harris. "Only 30% of the time you're doing calculations. So, you've got this Ferrari engine, it's in Manhattan, and there's stoplights everywhere." As frontier AI models expand, the training clusters needed to handle them cannot keep up, Harris said. It's not the chips that are the bottleneck - it's their ability to communicate with one another.
To solve this problem, Lightmatter developed Passage, a next-generation optical networking solution that interposes between data center chips with ultra-dense optical fibers. This can bring bandwidth up to 100 terabits per second, or around 100x current data center interconnect speeds. In a traditional data center, GPUs interconnect through a large number of networked switches that form a layered hierarchy to connect GPUs together, Harris explained. This generates a great deal of latency because for one GPU to reach another, it must go through several switches first. Much of that idle time GPUs spend is waiting for the data to flow through the network. "What we're able to do is flatten that hierarchy," said Harris. "So instead of six or seven layers of switches, you've got two, and each GPU can connect to thousands of GPUs." Harris also added that Passage saves power. Sustaining one link between two layers of switches can require up to 300 watts, he explained, but with Lightmatter's solution it's only about 50 watts, a sixfold power reduction. With this financing, Harris said Lightmatter will be preparing Passage for mass deployment in partner data centers and scaling up the company's team to build on the photonics technology stack. "We're innovating across the stack, with photonics," said Harris. "What we see going forward is that the future is all about interconnect. There's a limit to how big computer chips can be. It's wafer-scale, 300 millimeters squared in diameter, so 12 inches. When you get to that point, and I think it's 10 years away, the only thing that matters is the links between the chips."
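Two of the figures Harris gives in this interview lend themselves to a quick sanity check: the 30% compute share and the 300-watt versus 50-watt link power. The sketch below uses a simple Amdahl-style model, which is an assumption of this example rather than anything Lightmatter has published: compute and waiting are treated as non-overlapping, and all waiting is treated as shrinkable by a faster interconnect (the quote also mentions waiting on memory, which an interconnect would not necessarily fix). Function names are illustrative.

```python
def overall_speedup(compute_fraction: float, comm_speedup: float) -> float:
    """Amdahl-style estimate: only the waiting share of runtime shrinks."""
    if not 0.0 < compute_fraction <= 1.0:
        raise ValueError("compute_fraction must be in (0, 1]")
    if comm_speedup <= 0:
        raise ValueError("comm_speedup must be positive")
    waiting_fraction = 1.0 - compute_fraction
    return 1.0 / (compute_fraction + waiting_fraction / comm_speedup)


def max_speedup(compute_fraction: float) -> float:
    """Ceiling on the speedup if waiting time dropped to zero."""
    return 1.0 / compute_fraction


COMPUTE_FRACTION = 0.30  # "Only 30% of the time you're doing calculations"

for factor in (2, 10, 100):
    gain = overall_speedup(COMPUTE_FRACTION, factor)
    print(f"Interconnect {factor}x faster -> job about {gain:.2f}x faster")

print(f"Ceiling with zero waiting: {max_speedup(COMPUTE_FRACTION):.2f}x")

# Per-link power figures quoted by Harris: up to 300 W today, about 50 W with Passage.
power_ratio = 300 / 50
print(f"Per-link power reduction: {power_ratio:.0f}x")
```

Under these assumptions, a GPU that computes 30% of the time can get at most a little over three times faster from interconnect improvements alone, so larger gains would have to come from other changes as well.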

[4]

Photonic startup Lightmatter raises $400 million amid AI datacenter boom, eyes IPO next

Oct 16 - Lightmatter, a Mountain View, California-headquartered startup building photonic tech in datacenter networking chips, said on Wednesday it has raised $400 million in a Series D funding round at a valuation of $4.4 billion. New investor T. Rowe Price led the funding round, with participation from existing backers including Fidelity and Alphabet's GV. The latest funding came less than a year since the last raise, bringing total funding since inception to $850 million as the company tries to capitalize on the datacenter boom and need for more efficient infrastructure in the artificial intelligence (AI) race set off by ChatGPT. Lightmatter said its fresh capital will be used to manufacture and deploy its photonic chips in partner data centers, as well as expanding its 200-person team across the U.S. and Canada. The funding follows the appointment of former Nvidia executive Simona Jankowski as CFO, and could pave the way for a potential public offering. "This is probably our last private funding round," said co-founder and CEO Nick Harris. "We've booked very large deals. And that is the reason that this level of funding is possible. We see a future where most of the high performance computing and AI chips very soon are going to be based on Lightmatter tech." The company, which develops methods for computer chips to communicate and perform calculations using silicon photonics, said it sees huge demand from cloud providers and AI companies, but declined to name its customers. While the concept of using light in computing isn't new and offers potential for faster, more energy-efficient processes, creating the necessary components has historically been challenging. Founded in 2017, Lightmatter says its proprietary technology enables photonics chips to move data, increasing AI cluster bandwidth and performance while reducing power consumption.
Its first large clusters are expected to be running next year. Reporting by Krystal Hu; editing by Elaine Hardcastle.

[5]

Photonic Startup Lightmatter Raises $400M Amid Data Center Frenzy; Hits $4.4B Valuation

Lightmatter, a startup that uses light to link chips together and to do calculations for the deep learning necessary for AI, locked up a $400 million Series D led by new investor T. Rowe Price at a $4.4 billion valuation. The new round nearly quadruples its previous valuation of $1.2 billion in December after a $155 million raise led by GV -- which along with Fidelity Management and Research Co. also participated in the new round. As Big Tech pours hundreds of billions of dollars into new AI data centers, Lightmatter is trying to solve the problems around energy consumption and scalability of those new centers. The company's tech uses silicon photonics that can speed up processes while also using less power. While the idea of using light in computing isn't new, creating the components has historically been challenging. The new Series D is Lightmatter's largest to date, but the Boston-based company is not new to big raises. In May 2023, the startup locked up a $154 million raise before extending that round in December. Founded in 2017, Lightmatter has raised $850 million, per the company. Co-founder and CEO Nick Harris told Reuters, "This is probably our last private funding round." Lightmatter's was not the only large raise by a photonic startup this week. Xscape Photonics -- a New York-based startup also using photonics technology to address the energy, performance and scalability challenges of AI data centers -- raised a $44 million Series A led by IAG Capital Partners and with investment from the likes of Cisco Investments and Nvidia.

Lightmatter raises $400 million in Series D funding, while other photonic startups like Oriole Networks and Xscape Photonics also secure significant investments. The surge in funding highlights the growing importance of photonics in addressing AI data center challenges.

Photonic Startups Attract Major Investments

In a significant development for the AI industry, several photonic startups have secured substantial funding, highlighting the growing importance of photonics in addressing the challenges faced by AI data centers. Lightmatter, a leader in this space, has raised an impressive $400 million in a Series D funding round, valuing the company at $4.4 billion [1][2][3].

The Rise of Photonics in AI Infrastructure

The sudden interest in photonics is driven by the increasing demands placed on data centers by large AI models. Conventional networking equipment, which primarily uses copper wiring and electricity to transmit data, is becoming a bottleneck in AI model training and operation [1]. Photonics technology, which uses light to transmit data, offers solutions to several critical challenges:

- Increased speed and efficiency in data transmission

- Reduced energy consumption and heat generation

- Improved scalability for large AI clusters

Lightmatter's Breakthrough Technology

Lightmatter's flagship product, Passage, is an optical interconnect layer that allows hundreds of GPUs to work synchronously [2]. This technology can potentially increase the bandwidth between GPUs to 100 terabits per second, a significant improvement over current data center interconnect speeds [3].

Nick Harris, CEO of Lightmatter, explains the impact: "If you're one of these GPUs, your life is like: OK, I'm waiting for data from memory, crunch, crunch, crunch, OK, waiting for data from another GPU, crunch, crunch, crunch, and just sitting there. Only 30% of the time you're doing calculations." [3]

Other Players in the Photonics Field

While Lightmatter has garnered significant attention, other startups are also making waves in the photonics space:

- Oriole Networks: Raised $22 million in a Series A round, focusing on connecting every AI chip in a supercomputing cluster directly to every other chip [1].

- Xscape Photonics: Secured $44 million in a Series A round, with investments from Cisco and Nvidia [1][5].

Impact on AI Data Centers

The adoption of photonics technology could have far-reaching implications for AI data centers:

- Training for the largest AI models could run up to 10 to 100 times faster, according to Oriole Networks [1].

- Significant reduction in power consumption, addressing concerns about the energy demands of AI infrastructure [1][3].

- Potential to flatten the hierarchy of networked switches in data centers, reducing latency [3].

Future Outlook

The substantial investments in photonics startups signal a potential shift in the AI infrastructure landscape. Nick Harris of Lightmatter predicts, "Ten years from now, interconnect is Moore's Law," suggesting that the future of computing advancement lies in improving data transmission between chips rather than increasing chip size [2].

As these technologies move towards deployment in partner data centers, the AI industry may see a significant boost in performance and efficiency. With Lightmatter hinting at a potential IPO in the future, the photonics sector is poised for continued growth and innovation [4].

Summarized by Navi
