SambaNova raises $350M, partners with Intel to deploy SN50 chip claiming 5x speed over Nvidia B200

12 Sources

[1]

SambaNova introduces new AI accelerator, partners with Intel to deploy Xeon CPUs for inferencing and agentic workloads -- SambaNova claims SN50 chip is three times more efficient than Nvidia B200

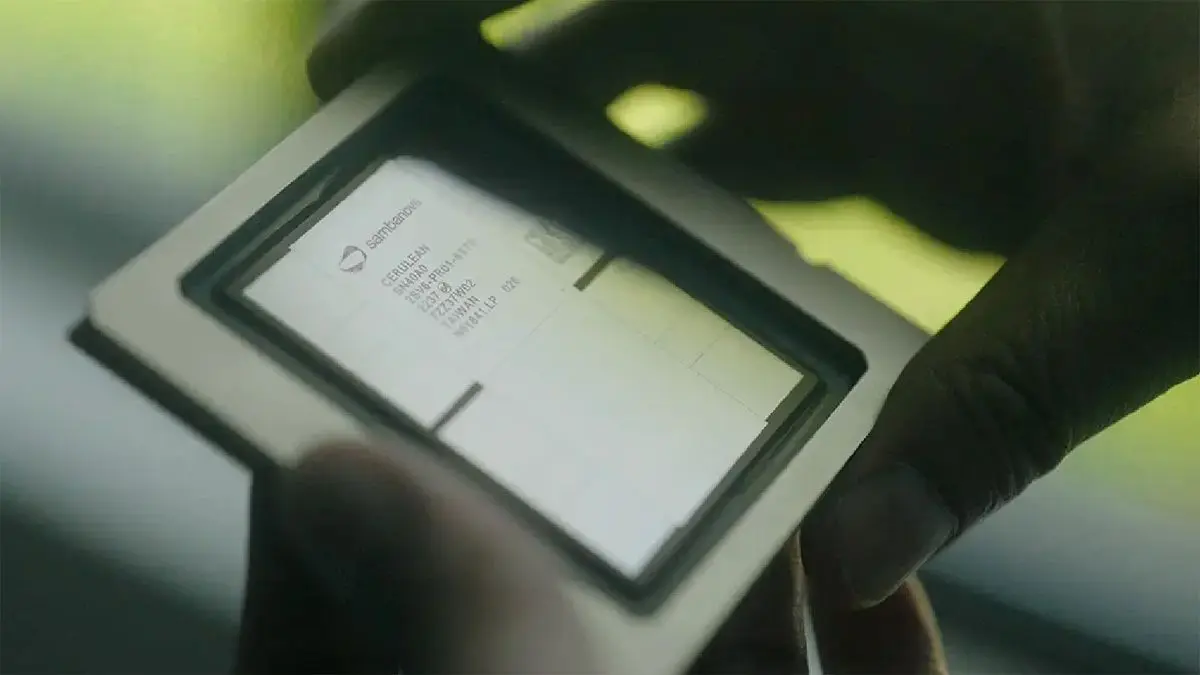

This week, Intel and SambaNova entered a multi-year strategic collaboration aimed at building large-scale AI inference infrastructure around Intel Xeon platforms and SambaNova AI accelerators. In addition, SambaNova introduced its SN50 AI processor for agentic inference, and inked a deal with SoftBank to deploy it at the latter's data centers. SambaNova says its SN50 AI accelerator platform is around five times faster and three times more efficient than Nvidia's B200. Additionally, the company has signed a pact with Intel to offer Xeon-based AI systems to enterprises and governments.

"AI is no longer a contest to build the biggest model," said Rodrigo Liang, co‑founder and CEO of SambaNova. "With the SN50 and our deep collaboration with Intel, the real race is about who can light up entire data centers with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud."

SN50: Purpose-built for inference

Seeing AI inference as potentially a larger market than AI training, SambaNova developed its SN50 accelerator for inference workloads, rather than training, focusing primarily on low latency for real-time applications such as voice assistants, memory and network bandwidth, and power consumption, rather than on pure compute performance. The dual-chiplet processor is based on SambaNova's Reconfigurable Data Unit (RDU) architecture and features a three-tier memory subsystem -- SRAM, HBM, and DDR5 -- designed to keep multiple models resident (for rapid hot-swapping), along with mechanisms to optimize memory usage, which is handy for both memory utilization and power consumption. The company says that the new SN50 processor delivers five times more compute per accelerator and four times the networking bandwidth compared to its predecessor.
In addition, the company claims that the SN50 accelerator offers five times more compute than 'competing' offerings, without disclosing which offerings it compares the SN50 to (presumably the B200, but we may be wrong). The company also boasts a threefold lower cost of ownership for inference compared to GPU-based systems.

In line with the latest industry trends, SambaNova positions the SN50 RDU mainly as an ingredient of its SambaRack SN50 rack-scale solution rather than a separate processor, which is how these AI accelerators will be primarily marketed. Each 20 kW SambaRack SN50 packs 16 SN50 RDU processors, and 16 racks can interconnect up to 256 accelerators using a multi-terabyte-per-second fabric. The company stresses that at 20 kW per rack, the SambaRack SN50 operates within existing data center power envelopes and relies on air cooling, eliminating the need for liquid cooling or data center modifications.

A cluster of 256 SN50 accelerators is designed for extremely large models, including configurations exceeding 10 trillion parameters and context windows of more than 10 million tokens. SambaNova positions this capability as essential for reasoning-heavy and multi-model agentic AI workloads, which demand both scale and responsiveness.

On the performance side, SambaNova cites results from SemiAnalysis's InferenceX benchmark. When running with FP8 precision, a Llama 3.3 70B model with 1K input and 1K output tokens reportedly achieves 895 tokens per second per user on the SN50, compared to 184 tokens per second per user on Nvidia's B200. Across a range of configurations, throughput per RDU is presented as significantly higher than per GPU, with an average advantage of approximately 3X when latency constraints are applied across Llama 70B, GPT-OSS 120B, and DeepSeek 671B, which translates to improved cost-per-token economics.

The SambaRack SN50 systems will be available in the second half of 2026; pricing is unknown.
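The headline numbers in this article can be sanity-checked with a few lines of arithmetic. The sketch below uses only figures quoted above (SambaNova's own claims, not independent measurements); the function names are illustrative, and the per-accelerator power figure assumes rack power is split evenly across the 16 RDUs, which ignores CPUs, fans, and networking.

```python
# Figures quoted in the article (vendor claims citing the InferenceX benchmark).
SN50_TOKENS_PER_SEC_PER_USER = 895   # Llama 3.3 70B, FP8, 1K input / 1K output
B200_TOKENS_PER_SEC_PER_USER = 184

RACK_POWER_KW = 20    # per SambaRack SN50
RDUS_PER_RACK = 16
MAX_RACKS = 16


def speedup(new: float, baseline: float) -> float:
    """Return how many times faster `new` is than `baseline`."""
    return new / baseline


def cluster_size(rdus_per_rack: int, racks: int) -> int:
    """Total accelerators in a multi-rack cluster."""
    return rdus_per_rack * racks


def power_per_rdu_kw(rack_power_kw: float, rdus_per_rack: int) -> float:
    """Rack power divided evenly across accelerators (a simplifying assumption)."""
    return rack_power_kw / rdus_per_rack


if __name__ == "__main__":
    ratio = speedup(SN50_TOKENS_PER_SEC_PER_USER, B200_TOKENS_PER_SEC_PER_USER)
    total = cluster_size(RDUS_PER_RACK, MAX_RACKS)
    per_rdu = power_per_rdu_kw(RACK_POWER_KW, RDUS_PER_RACK)
    print(f"Per-user generation speedup: {ratio:.2f}x")
    print(f"Accelerators in a full cluster: {total}")
    print(f"Rack power budget per RDU: {per_rdu:.2f} kW")
    print(f"Full-cluster power budget: {RACK_POWER_KW * MAX_RACKS} kW")
```

The quoted benchmark pair works out to roughly 4.9x, which is consistent with the "around five times faster" claim for that single configuration; the roughly 3X average across models is a separate, latency-constrained figure.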
The pact with Intel

One of the key parts of this week's SambaNova announcements is arguably its pact with Intel. Under the terms of the deal, the companies will offer rack-scale solutions for AI workloads based on Intel Xeon processors and SambaNova AI accelerators for several years. SambaNova does not disclose which CPU powers its SambaRack SN50, but it looks like future racks from the company will be Xeon-based.

The joint effort between Intel and SambaNova targets select applications and types of customers, such as AI inference solutions for AI-native companies, model providers, as well as enterprises and government organizations worldwide. The latter two are Intel's traditional customers, which makes us wonder whether this is a coordinated go-to-market strategy through Intel's enterprise and cloud channels for SambaNova's production-ready inference systems, or a less harmonized approach. In any case, an x86 Intel Xeon CPU, along with Intel networking technologies, will certainly make SambaRacks more appealing to enterprises and governments.

Notably, Intel emphasized that this agreement with SambaNova complements its existing GPU roadmap rather than replacing it, so the company will continue offering its own GPUs for inference and eventually for training.

Perhaps the most controversial part of the announcement is that Intel Capital is also participating in SambaNova's Series E financing round. SambaNova is chaired by Lip-Bu Tan, who also happens to be the chief executive of Intel. While it is common for companies of Intel's scale to make strategic investments in their smaller ecosystem partners, taking a stake in a business chaired by the investor's own top executive is far from routine.

The deal with SoftBank

A less sensitive, but no less interesting part of the SambaNova announcement is its pact with SoftBank. SoftBank will be the first to roll out SN50 in its next-generation AI data centers in Japan.
These data centers will use the accelerator to drive low-latency inference for sovereign and enterprise customers across Asia-Pacific, running open-source and proprietary frontier models with strict performance requirements. The move expands SoftBank's existing partnership with SambaNova, which already operates SambaCloud in the region. The new clusters based on the SN50 will be standard for SoftBank's new data centers, which effectively makes SambaNova the core inference platform for SoftBank's sovereign AI programs and upcoming large-scale agentic deployments in the region.

"With SN50, we are building an AI inference fabric for Japan that can serve our customers and partners with the speed, resiliency and sovereignty they expect from SoftBank," said Hironobu Tamba, Vice President and Head of the Data Platform Strategy Division of the Technology Unit at SoftBank Corp. "By standardizing on SN50, we gain the ability to deliver world‑class AI services on our own terms -- with the performance of the best GPU clusters, but with far better economics and control."

$350 million in Series E funding

Last but not least, SambaNova has secured $350 million in strategic Series E funding to expand manufacturing and cloud capacity from such investors as Vista Equity Partners, Cambium Capital, Intel Capital, and Battery Ventures. All of this positions SambaNova in the AI inferencing market. While training workloads demand the best and fastest processors, inferencing introduces a different set of requirements, and the Intel partnership is set to benefit the company, especially as agentic AI inferencing workloads demand fast CPUs, in this case from Intel.

[2]

Intel-Backed SambaNova Raises Cash, Touts SoftBank Chip Contract

Intel will combine SambaNova's offerings with its own chips in products for data center customers as part of a "multiyear collaboration", with the goal of serving customers until its own AI accelerators are ready. Artificial intelligence chip startup SambaNova Systems Inc. raised $350 million in a new funding round and said that customers such as SoftBank Group Corp. are preparing to use its latest technology in data centers. As part of the fundraising announcement, SambaNova introduced a chip called SN50 that's designed to run as much as five times faster than rival processors. The startup is working with investor Intel Corp. to speed adoption of the technology, which will ship to customers later this year. SambaNova is trying to carve out a piece of the massive market for AI computing -- an area currently dominated by Nvidia Corp. There's growing interest in alternatives to Nvidia's AI accelerators, the chips that help develop and run artificial intelligence models, especially if they can offer a cost advantage. "Customers are asking for more choice and more efficient ways to scale AI," Kevork Kechichian, general manager of Intel's data center group, said in a statement. As part of a "multiyear collaboration," Intel will combine SambaNova's offerings with its own chips in products for data center customers. SoftBank will be the first customer to deploy the SN50 platform, using it to power AI computing in Japan, SambaNova said. The Japanese company already uses older SambaNova products. The Series E funding round was led by Vista Equity Partners and Cambium Capital, with Battery Ventures, Mayfield Capital and other new investors participating. The financing was oversubscribed, with "strong participation" from Intel Capital, the chipmaker's venture arm, SambaNova said. Intel Chief Executive Officer Lip-Bu Tan also serves as chairman of SambaNova, and the latest fundraising followed stalled talks about the chip giant acquiring the startup. 
Intel said it took steps to make sure it wouldn't face questions about conflicts of interest during the discussions. "Lip-Bu Tan recused himself from this process and Kevork Kechichian was the executive sponsor," the company said in an emailed statement. The San Jose, California-based startup declined to give its valuation in the deal. A person familiar with the matter said it was above $2 billion. That would still be well below SambaNova's valuation in 2021, when a round led by SoftBank Group Corp.'s Vision Fund 2 put the business's worth at around $5 billion. For Intel, the combination of its processors and SambaNova's offerings will help serve customers until its own AI accelerators are ready. The interim arrangement is meant to keep its technology relevant during an explosive build-out of AI capacity.

[3]

SambaNova raises $350M with Intel backing

Upstart's 5th-gen RDU aims to undercut Nvidia's B200 on speed and cost AI infrastructure company SambaNova has raised $350 million to advance its dataflow architecture, which it pitches as an alternative to GPU-based AI systems. Some of the money came from Intel Capital, scotching rumors Chipzilla wanted to buy SambaNova. Other participants in this funding round include Vista Equity, Cambium Capital, and several other VC funds that expect strong returns when SambaNova brings its latest generation of reconfigurable dataflow units (RDUs) to market. Intel will get especially close to the upstart with a "multi-year" collaboration that aims to provide customers an alternative to GPUs for generative AI deployments. Naturally, that means SambaNova's new RDUs will use Xeon CPUs, but, beyond that, the alliance will include hardware-software co-design. "We've got a product that's very competitive. They've got scale; they've got capital; they've got customers that we can collaborate on," SambaNova CEO Rodrigo Liang told El Reg. Intel is not just off the pace in the generative AI arena - the giant has arguably missed the boat entirely following repeated missteps with its datacenter GPU and Gaudi product lines. "As we evolve and expand our AI engagements from edge to cloud, we're addressing these needs in multiple ways to remain a key player in the ecosystem and protect and grow market share," Kevork Kechichian, EVP of Intel's Datacenter Group, said in a statement. SambaNova expects to ship its SN50 accelerators later this year with Japan's SoftBank already signed up as one of the startup's first customers. The new chip represents a significant upgrade over SambaNova's 2024-vintage SN40L. The company says the SN50 will deliver a 2.5x higher 16-bit floating-point performance and 5x higher perf at FP8. That works out to 1.6 and 3.2 petaFLOPS respectively. 
SambaNova says its signature three-tier memory hierarchy, which allows it to swap between models in a fraction of a second and efficiently offload key-value caches, remains largely unchanged. Each RDU features 432 MB of on-chip SRAM, 64 GB of HBM2E memory good for 1.8 TB/s of bandwidth, and between 256 GB and 2 TB of DDR5 memory. Flexibility on the latter point will no doubt win SambaNova some points considering the skyrocketing price of memory. HBM2E might seem like an odd choice given its age, but Liang is keen to ensure his company can ship product at a time of rising memory prices. "From a cost perspective, it's important to make sure that we don't get into a supply chain fight," he said. While a big improvement over its predecessor, the SN50 doesn't look all that impressive on paper, at least compared to modern GPUs. It'll deliver about 64 percent of the dense FP8 compute, a third of the HBM capacity, and less than a quarter of the memory bandwidth of Nvidia's nearly two-year-old Blackwell generation. However, it's important to remember that "peak" advertised FLOPS and bandwidth aren't the same thing as achievable FLOPS or bandwidth. SambaNova argues that its dataflow architecture, which aims to reduce data movement overheads by overlapping computation and communication, allows it to use fewer, less powerful accelerators. In the case of the SN50, SambaNova boasts it can deliver up to five times higher per-user generation speed compared to Nvidia's B200. SambaNova's claims would be hard to swallow if it weren't already one of the highest performing inference providers. According to Artificial Analysis, SambaNova's SN40L accelerators are able to serve up LLMs like the 230 billion parameter MiniMax M2 model at up to 378 tokens per second, more than a hundred tokens per second faster than the next closest GPU-based inference provider. Having said that, GPU-based inference platforms are catching up as Nvidia's NVL72 racks see wider adoption. 
SambaNova's performance also varies from model to model, so it is not a clear leader in all scenarios. We should also note that Nvidia seems to have gotten the memo on dataflow, having acquihired Groq's engineering team and licensed its architecture late last year. While SambaNova says it doesn't need ultra-dense racks to be competitive, the company has designed its new architecture to scale. For the SN50, a single inference worker can now scale across up to 256 accelerators, more than 3.5x the number found in Nvidia's NVL72 rack. But with just 16 air-cooled RDUs and 15-30 kW per rack, SambaNova isn't packaging its chips nearly as densely. This larger scale-up domain is aided by faster interconnects. SambaNova tells us it equipped each RDU with 2.2 TB/s of bidirectional chip-to-chip bandwidth via a switched fabric. Inference performance isn't SambaNova's only shtick. The large pool of DDR5 memory available to each accelerator enables SambaNova to quickly move customer models and key-value caches - essentially the model's short-term memory - in and out of memory in a matter of milliseconds. "As we move into the world of agents, one of the things that you're starting to see is the customization of these models is causing these racks to run really inefficiently," Liang said. "Everybody wants their own models, but they don't use their own models to the same level that a shared model would be used." In other words, when everyone is accessing a common model, it's relatively easy to maintain high utilization, but when everyone is running their own model, this becomes much more difficult for service providers to manage. "The economics for every player today are not as good as they need to be for scale," Liang said. "What we spent the better part of 2025 doing was actually getting the product to the point where, per rack, we had the right economics for inference so that service providers could actually make a profit serving tokens." 
Having accomplished this, Liang reckons SambaNova's focus moving forward will be on selling infrastructure rather than following companies like Groq down the path of building a dedicated inference cloud. ®
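The spec comparisons in this article are stated as ratios, so the baselines they imply can be back-calculated. The following is a minimal sketch using only numbers quoted above; the class and function names are illustrative, and the implied SN40L and Blackwell figures are derived from the article's ratios rather than taken from any vendor datasheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceleratorSpecs:
    fp16_pflops: float
    fp8_pflops: float
    hbm_gb: float
    hbm_tb_per_s: float


# SN50 figures as quoted in the article.
SN50 = AcceleratorSpecs(fp16_pflops=1.6, fp8_pflops=3.2, hbm_gb=64, hbm_tb_per_s=1.8)


def implied_baseline(value: float, ratio: float) -> float:
    """Back out the baseline from `value = ratio * baseline`."""
    return value / ratio


def scale_up_ratio(domain_a: int, domain_b: int) -> float:
    """How many times larger one scale-up domain is than another."""
    return domain_a / domain_b


if __name__ == "__main__":
    # "2.5x higher 16-bit" and "5x higher perf at FP8" versus the SN40L.
    print(f"Implied SN40L FP16: {implied_baseline(SN50.fp16_pflops, 2.5):.2f} PFLOPS")
    print(f"Implied SN40L FP8:  {implied_baseline(SN50.fp8_pflops, 5.0):.2f} PFLOPS")
    # "about 64 percent of the dense FP8 compute" of Blackwell.
    print(f"Implied Blackwell dense FP8: {implied_baseline(SN50.fp8_pflops, 0.64):.1f} PFLOPS")
    # "a third of the HBM capacity".
    print(f"Implied Blackwell HBM: {implied_baseline(SN50.hbm_gb, 1 / 3):.0f} GB")
    # "less than a quarter of the memory bandwidth" gives a lower bound.
    print(f"Implied Blackwell bandwidth: > {implied_baseline(SN50.hbm_tb_per_s, 0.25):.1f} TB/s")
    # 256-accelerator scale-up domain versus the 72 GPUs in an NVL72 rack.
    print(f"Scale-up domain ratio: {scale_up_ratio(256, 72):.2f}x")
```

Both generational ratios imply the same 0.64 petaFLOPS baseline for the SN40L, and 256 divided by 72 is about 3.56, matching the article's "more than 3.5x".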

[4]

AI chip startup SambaNova raises $350 million in Vista-led round, signs Intel partnership

Feb 24 (Reuters) - SambaNova Systems said on Tuesday it has raised $350 million in a new funding round and struck a partnership with Intel as it seeks to capitalise on surging demand for inference chips used in artificial intelligence applications. Inference chips, which run AI models and power real-time decisions, have attracted intense investor interest following a wave of dealmaking around rivals to Nvidia, as AI companies seek faster and more efficient hardware. Private equity firm Vista Equity Partners and Cambium Capital led the round, which also included Intel's (INTC.O) investment arm, Intel Capital, SambaNova said on Tuesday, confirming a Reuters exclusive reported earlier this month. The proceeds will fund expansion of SambaNova's new SN50 AI chip, scale its SambaCloud platform and deepen enterprise software integrations. SoftBank Corp (9434.T) will be the first customer to deploy the SN50 chip within its AI data centres in Japan. SambaNova and Intel also signed a multi-year agreement to deliver cost-effective AI inference solutions for AI-native companies, complementing Intel's existing data centre GPU commitments. The investment marks a rare foray by Vista beyond its traditional focus on enterprise software. The funding comes after acquisition talks between SambaNova and Intel stalled. Intel, whose chief executive Lip-Bu Tan also serves as SambaNova's executive chairman, had previously discussed acquiring the startup for roughly $1.6 billion, including debt, Reuters has reported. Reporting by Juby Babu in Mexico City; Editing by Tasim Zahid

[5]

Intel partners with AI chip startup SambaNova after acquisition talks reportedly failed

In addition to running Intel, Lip-Bu Tan is chairman of artificial intelligence chipmaker SambaNova, which he first invested in eight years ago. Now Intel is pumping money into the startup as it tries to take on industry leader Nvidia. SambaNova, a maker of chips for running generative AI models, has agreed to adopt Intel server chips and graphics cards in a multiyear collaboration, according to a Tuesday release. Intel is also participating in a $350 million funding round, after initially investing in SambaNova in 2019. For years, Nvidia's graphics processing units have been the silicon of choice for AI model companies like Anthropic and OpenAI, which kickstarted the AI boom with the launch of ChatGPT in late 2022. While Nvidia has been the leading beneficiary of the AI craze and is now the world's most valuable publicly traded company, Intel's revenue has declined for four straight years. Intel is now assembling its own graphics card, Tan said at a Cisco event earlier this month. The stock is up 75% in the past year, largely thanks to massive investments from the U.S. government and Nvidia. But to become a real player in the AI chip market, Intel needs help. The company previously looked at buying SambaNova for $1.6 billion but talks fell apart, Bloomberg reported in January. SambaNova counts Hugging Face, Meta and major AI labs as customers. With the new partnership, Intel and SambaNova will work together on sales and marketing to boost adoption. "We're not doing all this overnight," Rodrigo Liang, SambaNova's co-founder and CEO, told CNBC in an interview. "It's not like we're showing up tomorrow with all these things ready, but it's something that we are doing some good planning work to make sure that we're actually working this out, and then we're delivering more streamlined solutions." Intel and SambaNova declined to comment on whether the two companies discussed an acquisition. Tan became SambaNova's chairman in 2017. 
Walden International, the venture firm he started in 1987, placed an early bet on the startup, alongside Google's venture arm. Tan recused himself from discussions about the collaboration, an Intel spokesperson said. SambaNova is touting a new chip called the SN50 that Liang said delivers higher performance than the GPUs in Nvidia's B200 systems, based on Blackwell, and provides more computing power for the same price. The company says customers can connect up to 256 of the processors together. SoftBank, a leading investor in OpenAI, will deploy the SN50 and is an existing SambaNova customer, the startup said. Liang said SambaNova is well aware of Nvidia's dominance, but he sees room for other players. "I think we have to be realistic about the fact that Nvidia is pervasive today, as far as kind of what people like to use, but there are new technologies that are coming out that are going to run those really, really efficiently," he said. "And if you route that traffic in a heterogeneous environment, like most people are starting to do, you know you're going to get much better value out of the infrastructure." SambaNova wants to expand its own cloud for running AI models and is looking to sell clusters that companies can run in their data centers. SambaNova's other investors include private equity firm Vista Equity Partners, Battery Ventures, Cambium Capital, Qatar Investment Authority, Seligman Ventures and T. Rowe Price.

[6]

SambaNova bags $350m, unveils deals with Intel, SoftBank

SoftBank will be the first to deploy the new SN50 chips, while Intel is partnering with SambaNova to rollout its Intel-powered AI cloud. Intel-backed Nvidia rival SambaNova has raised $350m in a Series E round led by Vista Equity Partners and Cambium Capital, with strong participation from Intel Capital. Reuters was first to report the planned raise earlier this month. While details of the investment were not disclosed, sources had told the publication that Intel Capital's contribution would be around $100m, with potential commitments of up to $150m. Other participants in the round included Gulf Development, Assam Ventures, Battery Ventures, Atlantic Bridge, GV and BlackRock. According to SambaNova, proceeds from the raise will be used to expand the production of the company's newly introduced SN50 chip, touted to deliver "the best tokens per watt". The raise will also be used to scale 'SambaCloud' and deepen enterprise software integrations. SoftBank will be the first to deploy SN50 within its AI data centres in Japan powering inference services for government and enterprise customers across the Asia-Pacific. Intel has close ties with the 2017-founded SambaNova, with CEO Lip-Bu Tan serving as chairperson on SambaNova's board. Alongside the raise, the two companies have also jointly announced a multi-year collaboration to deliver cost-efficient AI inference solutions for AI companies, model providers, enterprises and governments worldwide. As part of the collaboration, Intel is making a strategic investment in SambaNova to accelerate the rollout of an Intel-powered AI cloud. "AI is no longer a contest to build the biggest model," said Rodrigo Liang, co‑founder and CEO of SambaNova. "With the SN50 and our deep collaboration with Intel, the real race is about who can light up entire data centres with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud." 
The company positions itself as a rising competitor looking to take some of Nvidia's gigantic share in the AI chips market. Liang, who previously worked as an executive at cloud provider Oracle, commented in 2024 that Nvidia "lost some of its sheen" and that "rivals are biting at its heels". Kevork Kechichian, the executive vice-president and general manager for Intel's data centre group said: "Customers are asking for more choice and more efficient ways to scale AI." "By combining Intel's leadership in compute, networking, and memory with SambaNova's full-stack AI systems and inference cloud platform, we are delivering a compelling option for organizations looking for GPU alternatives to deploy advanced AI at scale." Other companies are also looking for alternatives to Nvidia. Meta, yesterday (24 February) said that it would buy billions of dollars' worth of AMD's chips to develop AI tech and power new data centres. The deal could see Meta taking a stake of up to 10pc in AMD. Earlier this month, Cerebras Systems, which also positions itself as a rival to Nvidia, raised $1bn in a Series H round led by Tiger Global with participation from AMD. While in January, Cerebras and its early-backer OpenAI announced a partnership to deploy 750MW of Cerebras's wafer-scale systems to make OpenAI's chatbots faster. Positron, another Nvidia competitor that offers energy-efficient AI chips for inference, raised $230m from Arm Holdings and the Qatar Investment Authority in recent weeks, taking its valuation above $1bn.

[7]

SambaNova steps up its challenge to Nvidia with new chip, $350M funding and a powerful ally in Intel - SiliconANGLE

Chipmaker SambaNova Systems Inc. unveiled its most advanced artificial intelligence processor today as it closed on a bumper $350 million late-stage round of funding from Vista Equity Partners, Cambium Capital and others. Alongside the funding, SambaNova said it's going to collaborate with Intel Corp. on the development of new, high-performance and cost-effective systems for AI inference. It's intended to give enterprises an alternative to the graphics processing units that power most workloads today. SambaNova's $350 million Series E round saw "strong participation" from Intel's investment arm, Intel Capital. A host of other investors joined in too, including Assam Ventures, Battery Ventures, Gulf Development Public Company Limited, Mayfield Capital, QIA, Saudi First Data, Seligman Ventures, T. Rowe Price, &E, 8Square, Atlantic Bridge, BlackRock, GV, Nepenthe, Nuri Capital and Redline Capital. SambaNova has positioned itself as a rival to Nvidia. It develops high-performance computer chips that lend themselves to AI model training and inference. Its chips can be accessed via the cloud or deployed on-premises in its own hardware appliances. One of its biggest selling points is power efficiency: SambaNova claims that its chips can generate more tokens per kilowatt hour than comparable processors from rivals. The new SN50 chip announced today promises to improve inference performance dramatically. According to SambaNova, it delivers five times more compute and four times greater networking bandwidth than its previous-generation SN40 chipset. Customers will be able to link up to 256 accelerators over a blazing fast, multi-terabit-per-second interconnect, enabling them to support much bigger and longer-context AI models with greater throughput and responsiveness, without escalating their compute costs, the company said.
SambaNova said the SN50 differs wildly from Nvidia's GPUs. Technically speaking, it's a Reconfigurable Dataflow Unit or RDU, essentially a specialized AI accelerator chip that's more similar to Google LLC's tensor processing units or Amazon Web Services Inc.'s Trainium chips. It's designed for high-performance training and inference of massive, trillion-parameter large language models. The SN50 is based on a three-tier memory architecture that can support AI models with up to 10 trillion parameters and context lengths of 10 million tokens, enabling deeper reasoning and more intelligent autonomous systems than previously possible. It also claims a lower cost per token, thanks to its resident multimodel memory and agentic caching capabilities that optimize power efficiency. The SN50 is targeted at applications such as AI voice assistants that demand ultra-low latency to run in real time. It will be able to power thousands of simultaneous sessions, the company said. "AI is no longer a contest to build the biggest model," said SambaNova co-founder and Chief Executive Rodrigo Liang. "The real race is about who can light up entire data centers with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud." Liang previously appeared on SiliconANGLE Media's livestreaming studio theCUBE, where he spoke in depth about the company's RDU architecture and why it excels at AI inference. Liang said SambaNova can already light up data centers, but in order to continue doing so in the future, it has decided to work much more closely with Intel. The collaboration sees Intel invest in the startup to accelerate the deployment of a new, Intel-powered "AI cloud" that's based on the existing SambaNova Cloud platform. Intel will enhance SambaNova Cloud with its Xeon central processing units to help create a more efficient infrastructure that's optimized for multimodal large language models.
Intel's Xeon CPUs excel at general-purpose operations and managing system operations, while the SN50 is optimized for the rapid processing of large datasets and performing complex calculations. Combining them in a single cloud would allow more efficient task distribution, improving latency, throughput and the overall performance of AI workloads. Intel said it will be able to accelerate SambaNova's cloud expansion in other ways too, by providing reference architectures and deployment blueprints, and through its partnerships with software vendors and systems integrators. Once it's ready, Intel and SambaNova plan to co-market and co-sell the new platform by leveraging Intel's existing relationships with enterprises and its partner channels. The partnership holds a lot of promise for both companies. SambaNova can benefit from Intel's global reach and manufacturing base to scale its AI processors, while Intel is getting the chance to finally make its mark on a market that has largely passed it by. Until now, Intel has been unable to compete with Nvidia and other chipmakers, such as Advanced Micro Devices Inc., in the AI industry. SambaNova's powerful SN50 chips, coupled with Intel's Xeon processors, can potentially change that story. Constellation Research analyst Holger Mueller said it's still possible for Intel, with the help of SambaNova, to make a splash in the AI chip market. "Nvidia gets all of the attention and has most of the market share, but AI models don't actually care about who makes the chip they're running on," he said. "They care about performance. If SambaNova and Intel's inference platform is competitive, the biggest challenge will be to show companies that's the case and convince them to use it, instead of Nvidia's GPUs." It's thought that the companies have been planning this collaboration for some time. Indeed, reports emerged in December that Intel was even considering buying SambaNova outright. 
Bloomberg said the company was mulling an offer in the region of $1.6 billion. It's not known if Intel actually tabled such an offer, but it seems unlikely SambaNova would have agreed, for the amount was only a third of what it was valued at following its previous funding round in 2021. Kevork Kechichian, executive vice president and general manager of Intel's data center group, said there's a fantastic opportunity in the AI data center market. "Customers want more choice and more efficient ways to scale AI," he said. "By combining Intel's leadership in compute, networking and memory with SambaNova's full-stack AI systems and inference cloud platform, we are delivering a compelling option for organizations looking for GPU alternatives."

[8]

Intel strikes deal with a chip startup its CEO invested in

Intel, which has lagged in making chips for artificial intelligence, is turning for help to a startup that its CEO helps lead as an investor and chair. The ailing Silicon Valley chip company has struck a multiyear technical partnership with SambaNova Systems, a 9-year-old semiconductor company that is aiming to challenge the market leader, Nvidia. Intel and SambaNova will work on developing a cloud AI service run by SambaNova, among other things, the companies said Tuesday. Intel said it would also contribute an undisclosed amount to a $350 million funding round in SambaNova, which it announced along with a new line of AI chips. Intel had previously discussed buying SambaNova. The collaboration may attract attention for how Intel manages the multiple roles of Lip-Bu Tan, its CEO since March. Tan, a prolific venture capitalist, was among SambaNova's early investors as well as its chair. Some former Intel executives have expressed surprise that the company's directors did not seek to limit Tan from profiting from Intel acquisitions or other deals in the sector. But Intel said Tan's connections were a valuable asset and chose to restrict his involvement in negotiating transactions affecting his financial interests. In the SambaNova case, Intel said, Tan recused himself; talks were overseen by Kevork Kechichian, who joined Intel in September as an executive vice president in charge of its data center group. "We have well-established governance procedures to ensure decisions are made in Intel's best interests," a company spokesperson said. Nvidia's success in AI chips has spawned many startups, which have taken years to gain traction. Lately, some big companies have taken steps to reduce their dependence on Nvidia. OpenAI, a longtime Nvidia customer, announced a deal last month to also use chips from Cerebras, a prominent startup. With their collaboration, Intel and SambaNova hope to tap into that trend. 
"The intent is to shape the next generation of heterogeneous AI data centers," the Intel spokesperson said. (The New York Times has sued OpenAI and its partner, Microsoft, over copyright infringement claims. Both companies have denied wrongdoing.) SambaNova, which has raised $1.4 billion including the latest infusion, has had to adjust its business model multiple times. Like Nvidia, SambaNova offers AI services and sells entire systems -- typically in racks larger than a refrigerator -- instead of just chips. In most cases, those systems come with standard microprocessors from Advanced Micro Devices. Intel Xeon chips will now be part of those offerings under the new relationship. SambaNova and Intel would not discuss prior takeover talks. Rodrigo Liang, SambaNova's CEO, said sales and investor interest had been so strong lately that raising more funding as an independent company made the most sense. "It was very quickly obvious to all of us that that was the best path for the company," he said.

[9]

Intel Misses Another 'AI Opportunity' With SambaNova as Acquisition Talks Die Down, Settling for Xeon CPU Collaboration Instead

Intel's SambaNova acquisition was seen as a way to spearhead the company's AI strategy in inference, but it appears Team Blue has settled for much less. When we talk about Intel, the company is one of the only compute providers that hasn't managed to capitalize on the AI frenzy at all, and this has been a persistent problem for several quarters. Intel's former CEO, Pat Gelsinger, has acknowledged the lack of intent in AI, and it appears that missing out on the SambaNova opportunity is another example. Based on Intel's recent blog post, the firm is entering into an agreement with SambaNova for "Xeon-based" infrastructure, along with offering an inference solution that combines Intel and SambaNova components. This collaboration complements Intel's existing data center GPU commitments and does not alter its path forward to competing in AI. The company continues to invest across GPU IP, architecture, products, software, systems and strengthen its roadmap as part of its edge-to-cloud AI engagements. Together, Intel and SambaNova aim to help shape the next generation of heterogeneous AI data centers, integrating Intel Xeon processors, Intel GPUs, Intel networking and storage, and SambaNova systems -- to unlock a multi-billion-dollar inference market opportunity. The idea behind SambaNova is simple. The company has specialized processing engines called the Reconfigurable Data Unit (RDU), which map entire neural network graphs directly into hardware, similar to what Etched or Taalas AI is doing. SambaNova recently unveiled its SN50 AI chip, revealing that it has achieved 3x lower costs than GPUs in agentic AI workloads, along with 5x more compute per accelerator than the previous generation. For Intel, the plan was to acquire SambaNova and speed up the time to market for an inference-focused option, but it seems the direction is different. 
SambaNova is also raising $350M in its Series E round, backed by the likes of SoftBank and Intel Capital, and, interestingly, Lip-Bu Tan is already an early SambaNova investor through his Walden International portfolio. So Tan is now tied to SambaNova on two fronts, through his own venture firm and through Intel, which suggests the CEO is confident in the company's ability to capitalize on the inference hype. But now that an acquisition is ruled out, what could be next? We expect that Intel might integrate SambaNova's RDUs for specific workloads, similar to what NVIDIA intends to do with Groq. Team Blue has already hinted at this in its latest blog post, and the company plans to build "heterogeneous" AI data centers with Intel GPUs, CPUs, and SambaNova systems. The timing of such a solution matters all the more, considering that Intel has already missed the training boom, and losing in inference would be a costly mistake.

[10]

Intel Inks 'Multiyear' AI Inference Deal With SambaNova After Acquisition Talks End

With Intel's venture capital arm backing SambaNova Systems' new $350 million funding round, the AI chip startup plans to tap into Intel's 'global enterprise, cloud and partner channels' to drive sales of joint offerings for 'cloud-scale AI inference.' Intel plans to tap into its "enterprise, cloud and partner channels" for a new "multiyear strategic collaboration" it has entered with AI chip startup SambaNova Systems after acquisition talks between the two companies recently ended. SambaNova announced the partnership Tuesday alongside a $350 million Series E funding round, which it said received "strong participation" from Intel Capital, and the unveiling of its next-generation SN50 AI chip, which it said can outperform rival products. The funding round was led by private equity firms Vista Equity Partners and Cambium Capital. [Related: Exclusive: Intel Taps Ex-Arm, HPE Exec For Data Center Systems Post Amid AI Reorg] Calling the SN50 the "most efficient chip for agentic AI," the San Jose, Calif.-based startup said that the chip, set to ship later this year, is up to five times faster than competitive chips and can run agentic AI workloads at three times lower costs than GPUs. "AI is no longer a contest to build the biggest model," Rodrigo Liang, co-founder and CEO of SambaNova, said in a statement. "With the SN50 and our deep collaboration with Intel, the real race is about who can light up entire data centers with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud." SambaNova made the funding and partnership announcements after Bloomberg reported last month that discussions for Intel to acquire the startup stalled. The publication first reported on acquisition talks between the two companies last October. The startup's spokesperson said the acquisition deal is "not in discussion at this stage." An Intel spokesperson declined to comment on the matter. 
The Intel representative said that the company's strategic partnership with SambaNova is meant to complement its AI infrastructure strategy, which spans from Xeon CPUs to GPUs. Last year, the semiconductor giant committed to a data center GPU road map with an annual release cadence before hiring a new GPU chief architect in January. "Customers are asking for more choice and more efficient ways to scale AI," Kevork Kechichian, the head of Intel's Data Center Group, said in a statement. "By combining Intel's leadership in compute, networking and memory with SambaNova's full-stack AI systems and inference cloud platform, we are delivering a compelling option for organizations looking for GPU alternatives to deploy advanced AI at scale." Intel CEO Lip-Bu Tan has served as chairman of SambaNova's board since it was founded in 2017, according to his LinkedIn profile. His investment firms, Celesta Capital and Walden International, have been longtime investors. While SambaNova's spokesperson declined to say if the firms participated in the Series E funding round or currently hold stakes in the startup, the representative called them "long-standing investors." SambaNova said the funding round along with the new Intel partnership to "deliver cloud-scale AI inference," which it called a multibillion-dollar market opportunity, will help with the SN50's production ramp and distribution. The multiyear collaboration between SambaNova and Intel will focus on the delivery of "high-performance, cost-efficient AI inference solutions for AI-native companies, model providers, enterprises and government organizations around the world." This will involve the expansion of SambaNova's vertically integrated AI cloud platform using Intel's Xeon CPUs, which it said will be "supported by reference architectures, deployment blueprints and partnerships with systems integrators and software vendors." 
The startup said the combination of its systems with Intel's CPUs, accelerators and networking technologies will power "scalable, production-ready inference for reasoning, code generation, multimodal applications and agentic workflows." The two companies plan to engage in co-selling and co-marketing activities for these offerings, with Intel expected to tap into its "global enterprise, cloud and partner channels to accelerate adoption across the AI ecosystem." SambaNova said the first SN50 customer is Japanese investment giant SoftBank Group, which plans to integrate the chip into next-generation AI data centers in Japan. In addition to owning large stakes in Intel and rival Arm, SoftBank last year acquired Ampere Computing, which designs Arm-compatible CPUs, and AI chip startup Graphcore the year before as part of a new AI infrastructure push. SambaNova said that the SN50 uses its Reconfigurable Data Unit (RDU) architecture to enable "ultra-low latency" for "real-time responsiveness" in applications like voice assistants and "power thousands of simultaneous AI sessions with consistent high performance." The startup has previously said that one strength of the RDU architecture is its ability to combine multiple operations into a single kernel call, eliminating additional overhead associated with launching multiple kernel calls and accelerating performance as a result. It also said that the SN50 enables "higher hardware utilization" to lower the cost of generating tokens and improve return on investment for AI inference. In addition, SambaNova said that the SN50 uses three tiers of memory -- SRAM, HBM and DDR -- to offer "breakthrough model capacity" enabling the ability to run models with more than 10 trillion parameters and over 10 million context lengths. This three-tier memory architecture is optimized by the chip's "resident multi-model memory and agentic caching" to cut infrastructure costs for enterprise-scale AI deployments, according to the startup. 
While the startup didn't provide more details about the SN50's three-tier memory architecture, it has explained how each memory tier is used for its previous-generation SN40L chip: DDR provides capacity for hosting hundreds of models and the ability to quickly switch them out on a single socket, HBM "holds the currently running model and caches others," and distributed SRAM "enables high operational intensity through spatial kernel fusion and bank-level parallelism." The SambaNova spokesperson said its competitive claims about performance and total cost of ownership are "based on internal benchmarking of SN50 against widely deployed, current-generation GPU systems running large language models." While the performance boost claim is based on "end-to-end throughput gains on latency-sensitive inference workloads," the claim around lower costs is based on "system-level modeling across representative production deployments, incorporating hardware, power, cooling, networking, and sustained utilization," the representative added.
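The division of labor between the DDR and HBM tiers described above can be pictured with a small sketch. It is a toy model only: the class name `TieredModelStore`, the model names and sizes, and the least-recently-used eviction policy are all assumptions made for illustration, since SambaNova has not said how its caching decides which models stay in HBM.

```python
from collections import OrderedDict


class TieredModelStore:
    """Toy model of two memory tiers: every model lives in DDR, a few are cached in HBM.

    Hypothetical illustration; the LRU eviction policy is an assumption,
    not SambaNova's documented behavior.
    """

    def __init__(self, hbm_capacity_gb, ddr_models):
        self.hbm_capacity_gb = hbm_capacity_gb
        self.ddr = dict(ddr_models)   # model name -> size in GB (capacity tier)
        self.hbm = OrderedDict()      # cached models, least recently used first

    def activate(self, name):
        """Make `name` the running model. Returns True if it was already in HBM."""
        if name in self.hbm:
            self.hbm.move_to_end(name)  # mark as most recently used
            return True
        size = self.ddr[name]
        if size > self.hbm_capacity_gb:
            raise ValueError(f"{name} does not fit in HBM")
        # Evict least recently used models until the new one fits.
        while sum(self.hbm.values()) + size > self.hbm_capacity_gb:
            self.hbm.popitem(last=False)
        self.hbm[name] = size         # copy from DDR into HBM
        return False


if __name__ == "__main__":
    # Made-up model sizes; 64 GB is used here only as an example HBM capacity.
    store = TieredModelStore(64, {"chat": 30, "code": 30, "vision": 30})
    for model in ["chat", "code", "chat", "vision"]:
        hit = store.activate(model)
        print(model, "HBM hit" if hit else "loaded from DDR", list(store.hbm))
```

In this sketch a switch to a model already cached in HBM costs nothing, while a miss triggers a copy from the larger DDR tier, which is the behavior the "hot-swapping" language in the coverage implies.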

[11]

Intel : SambaNova Planning Multi-Year Collaboration for Xeon-Based AI Inference

As AI workloads become more diverse and complex, organizations are looking for different solutions for different needs. This is driving demand for more heterogeneous infrastructure, built on diverse compute, memory, networking and a consistent software foundation, to support inference at scale across the data center. Today, SambaNova and Intel announced a planned multi-year strategic collaboration to deliver high-performance, cost-efficient AI inference solutions for AI-native companies, model providers, enterprises and government organizations worldwide, built around Intel® Xeon® based infrastructure. Intel Capital is also participating in SambaNova's Series E financing round. For customers with AI workloads well-suited to SambaNova's approach, the combination of Intel CPUs and SambaNova's AI platform can provide a compelling rack-level inference option as Intel's GPU-based solutions come online. This collaboration complements Intel's existing data center GPU commitments and does not alter its path forward to competing in AI. The company continues to invest across GPU IP, architecture, products, software, systems and strengthen its roadmap as part of its edge-to-cloud AI engagements.

[12]

AI chip startup SambaNova raises $350 million in Vista-led round, signs Intel partnership

Feb 24 (Reuters) - SambaNova Systems said on Tuesday it has raised $350 million in a new funding round and struck a partnership with Intel as it seeks to capitalise on surging demand for inference chips used in artificial intelligence applications. Inference chips, which run AI models and power real-time decisions, have attracted intense investor interest following a wave of dealmaking around rivals to Nvidia, as AI companies seek faster and more efficient hardware. Private equity firm Vista Equity Partners and Cambium Capital led the round, which also included Intel's investment arm, Intel Capital, SambaNova said on Tuesday, confirming a Reuters exclusive reported earlier this month. The proceeds will fund expansion of SambaNova's new SN50 AI chip, scale its SambaCloud platform and deepen enterprise software integrations. SoftBank Corp will be the first customer to deploy the SN50 chip within its AI data centres in Japan. SambaNova and Intel also signed a multi-year agreement to deliver cost-effective AI inference solutions for AI-native companies, complementing Intel's existing data centre GPU commitments. The investment marks a rare foray by Vista beyond its traditional focus on enterprise software. The funding comes after acquisition talks between SambaNova and Intel stalled. Intel, whose chief executive Lip-Bu Tan also serves as SambaNova's executive chairman, had previously discussed acquiring the startup for roughly $1.6 billion, including debt, Reuters has reported. (Reporting by Juby Babu in Mexico City; Editing by Tasim Zahid)

SambaNova secured $350 million in Series E funding led by Vista Equity Partners and announced a multi-year strategic collaboration with Intel to deploy AI inference solutions. The startup unveiled its SN50 AI chip, designed specifically for inference workloads, claiming it delivers five times faster performance and three times better efficiency than Nvidia's B200. SoftBank will be the first customer to deploy the technology in its Japanese data centers.

SambaNova Secures $350 Million Funding and Unveils SN50 AI Chip

SambaNova Systems has raised $350 million in a Series E funding round led by Vista Equity Partners and Cambium Capital, with significant participation from Intel Capital, marking a rare move by Vista beyond its traditional enterprise software focus [2][4]. The funding round was oversubscribed, with Battery Ventures, Mayfield Capital, and other new investors participating, valuing the company above $2 billion, still below its 2021 valuation of around $5 billion [2]. The proceeds will fund expansion of the new SN50 AI chip, scale its SambaCloud platform, and deepen enterprise software integrations [4].

Intel Partnership Aims to Challenge Nvidia's Dominance

SambaNova announced a multi-year strategic collaboration with Intel to build large-scale AI inference infrastructure around Intel Xeon platforms and SambaNova AI accelerators [1]. The Intel partnership will combine SambaNova's offerings with Intel's own chips in products for data center customers, serving as an interim solution until Intel's own AI accelerators are ready [2]. Intel CEO Lip-Bu Tan, who also serves as chairman of SambaNova after first investing in the startup eight years ago, recused himself from partnership discussions to avoid conflicts of interest [2][5]. This collaboration comes after acquisition talks between Intel and SambaNova stalled, with Intel initially discussing acquiring the startup for roughly $1.6 billion, including debt [4].

New AI Accelerator Built Specifically for Agentic Inference Workloads

The SN50 AI chip represents a fundamental shift in focus toward inference rather than training, targeting real-time applications such as voice assistants with emphasis on low latency, memory bandwidth, and power consumption [1]. The dual-chiplet processor is based on SambaNova's Reconfigurable Data Unit architecture and features a three-tier memory subsystem (SRAM, HBM, and DDR5) designed to keep multiple models resident for rapid hot-swapping [1]. Each RDU features 432 MB of on-chip SRAM, 64 GB of HBM2E memory good for 1.8 TB/s of bandwidth, and between 256 GB and 2 TB of DDR5 memory [3]. The new chip delivers 2.5x higher 16-bit floating-point performance and 5x higher performance at FP8, working out to 1.6 and 3.2 petaFLOPS respectively [3].

Performance Claims Position SN50 Against Nvidia B200

SambaNova claims the SN50 accelerator offers five times more compute than competing offerings, specifically positioning it against the Nvidia B200, with three times better efficiency and a threefold lower cost of ownership for inference compared to GPU-based systems [1]. According to SemiAnalysis's InferenceX benchmark results, when running with FP8 precision, a Llama 3.3 70B model with 1K input and 1K output tokens achieves 895 tokens per second per user on the SN50, compared to 184 tokens per second per user on Nvidia's B200 [1]. Rodrigo Liang, co-founder and CEO of SambaNova, stated that "AI is no longer a contest to build the biggest model," emphasizing that "the real race is about who can light up entire data centers with AI agents that answer instantly, never stall, and do it at a cost that turns AI from an experiment into the most profitable engine in the cloud" [1].
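The "around five times faster" headline can be checked against the per-user throughput figures quoted for the Llama 3.3 70B test. The short calculation below uses only the 895 and 184 tokens-per-second numbers from the benchmark as reported; the function names are illustrative, and the time-to-completion figure assumes throughput stays constant over a 1,000-token response, which is a simplification.

```python
def speedup(candidate_tps, baseline_tps):
    """Ratio of per-user decode throughput (tokens per second)."""
    return candidate_tps / baseline_tps


def seconds_for_tokens(tokens, tps):
    """Time to stream `tokens` output tokens at a constant rate (simplifying assumption)."""
    return tokens / tps


SN50_TPS = 895.0   # tokens/s per user, as reported for the SN50
B200_TPS = 184.0   # tokens/s per user, as reported for Nvidia's B200

if __name__ == "__main__":
    print(f"speedup: {speedup(SN50_TPS, B200_TPS):.2f}x")                  # speedup: 4.86x
    print(f"SN50, 1,000 tokens: {seconds_for_tokens(1000, SN50_TPS):.2f} s")
    print(f"B200, 1,000 tokens: {seconds_for_tokens(1000, B200_TPS):.2f} s")
```

The ratio comes to roughly 4.9x, consistent with the rounded "five times" claim for this single configuration; it says nothing about other models, batch sizes, or precisions.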

Rack-Scale AI Solutions Target Enterprise and Government Markets

SambaNova positions the SN50 primarily as part of its SambaRack SN50 rack-scale solution rather than a standalone processor [1]. Each 20 kW SambaRack SN50 packs 16 SN50 RDU processors, and 16 racks can interconnect up to 256 accelerators using a multi-terabyte-per-second fabric, with each RDU equipped with 2.2 TB/s of bidirectional chip-to-chip bandwidth via a switched fabric [1][3]. The company emphasizes that at 20 kW per rack, the system operates within existing data center power envelopes and relies on air cooling, eliminating the need for liquid cooling or data center modifications [1]. A cluster of 256 SN50 accelerators is designed for extremely large models, including configurations exceeding 10 trillion parameters and context windows of more than 10 million tokens, positioning this capability as essential for reasoning-heavy and multi-model agentic AI workloads [1].

SoftBank as First Customer Signals Market Validation

SoftBank will be the first customer to deploy the SN50 platform, using it to power AI computing in Japan within its AI data centers [2][4]. The Japanese company already uses older SambaNova products, demonstrating continued confidence in the technology [2]. SambaNova expects to ship its SN50 accelerators later this year, with full SambaRack SN50 systems available in the second half of 2026, though pricing remains undisclosed [1][3]. The joint effort between Intel and SambaNova targets AI inference solutions for AI-native companies, model providers, as well as enterprises and government organizations worldwide, leveraging Intel's traditional customer relationships [1]. SambaNova's dataflow architecture, which aims to reduce data movement overheads by overlapping computation and communication, already positions it as one of the highest performing inference providers, with its SN40L accelerators able to serve models like the 230 billion parameter MiniMax M2 at up to 378 tokens per second [3]. The company counts Hugging Face, Meta, and major AI labs as customers, and through hardware-software co-design with Intel, aims to provide cost-effective alternatives in the AI chip market dominated by GPUs [3][5]. Kevork Kechichian, general manager of Intel's data center group, noted that "customers are asking for more choice and more efficient ways to scale AI," highlighting the growing demand for alternatives in generative AI deployments [2].
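The hardware figures quoted in this summary (1.6 and 3.2 petaFLOPS per chip, 64 GB of HBM per RDU, 16 RDUs per 20 kW rack, 16 racks per cluster) can be cross-checked with some quick arithmetic. The sketch below is a back-of-the-envelope calculation, not vendor data: it assumes the 2.5x and 5x multipliers are measured against the previous-generation chip, and the per-RDU power number is simply rack power divided by chip count, so it includes everything else in the rack.

```python
# Figures as quoted in the coverage.
FP16_PFLOPS = 1.6        # per SN50, 16-bit floating point
FP8_PFLOPS = 3.2         # per SN50, FP8
FP16_GAIN = 2.5          # stated generational gain at 16-bit
FP8_GAIN = 5.0           # stated generational gain at FP8
HBM_GB_PER_RDU = 64
RDUS_PER_RACK = 16
RACK_POWER_KW = 20.0
RACKS_PER_CLUSTER = 16


def implied_baseline_pflops():
    """Predecessor throughput implied by the quoted gains (assumes same baseline chip)."""
    return FP16_PFLOPS / FP16_GAIN, FP8_PFLOPS / FP8_GAIN


def cluster_totals():
    """Aggregate numbers for a full 16-rack cluster."""
    rdus = RDUS_PER_RACK * RACKS_PER_CLUSTER
    return {
        "rdus": rdus,
        "power_kw": RACK_POWER_KW * RACKS_PER_CLUSTER,
        "kw_per_rdu": RACK_POWER_KW / RDUS_PER_RACK,  # rack-level share, not chip TDP
        "fp8_pflops": rdus * FP8_PFLOPS,
        "hbm_tb": rdus * HBM_GB_PER_RDU / 1000,
    }


if __name__ == "__main__":
    print(implied_baseline_pflops())
    for key, value in cluster_totals().items():
        print(f"{key}: {value}")
```

Both multipliers point back to the same baseline of about 0.64 petaFLOPS, and 16 racks of 16 chips do add up to the 256 accelerators cited, drawing about 320 kW in total.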

Summarized by Navi
