Intel and SambaNova unveil heterogeneous AI inference platform to challenge Nvidia's dominance

3 Sources

[1]

Intel and SambaNova team up on heterogeneous AI inference platform -- different hardware performs different workloads

Inference platform can take advantage of Intel Xeon 6 CPUs, SambaNova SN50 RDUs, and Nvidia GPUs

Intel and SambaNova on Wednesday announced their joint production-ready heterogeneous inference architecture that relies on AI accelerators or GPUs for prefill, SambaNova SN50 reconfigurable dataflow units (RDUs) for decode, and Xeon 6 processors for agentic tools and system orchestration. The platform is designed to address as broad a set of workloads as possible to siphon some of the market share away from Nvidia and other emerging players.

The heterogeneous inference platform by Intel and SambaNova separates inference into distinct stages handled by different silicon: it uses AI GPUs or AI accelerators for ingesting long prompts and building key-value caches; SambaNova's SN50 RDU for decoding and generating tokens; and Xeon 6 processors for running agent-related operations (e.g., compiling and executing code and validating outputs) as well as coordinating and distributing workloads across hardware.

Splitting prefill, decode, and token generation stages is similar to Nvidia's approach to its Rubin platform, which is based on the Rubin CPX and heavy-duty Rubin GPU with HBM4 memory -- with the obvious difference that the Rubin CPX is not coming to market. But, more importantly for Intel, the new platform will rely on its Xeon 6 processors -- not on competing offerings.

The solution is scheduled to be available in the second half of 2026 to enterprises, cloud operators, and sovereign AI programs seeking scalable in-house inference platforms in general, and platforms for coding agents and other agentic workloads in particular.

According to SambaNova's internal data, Xeon 6 achieves over 50% faster LLVM compilation compared to Arm-based server CPUs, and delivers up to 70% higher performance in vector database workloads relative to competing x86 processors -- namely, AMD EPYC. These gains are intended to shorten end-to-end development cycles for coding agents and similar applications, the two companies claim.

Perhaps the biggest advantage of the joint production-ready heterogeneous inference architecture is that SambaNova SN50 and Xeon-based servers are drop-in compatible with data centers that can handle 30kW -- which is the vast majority of enterprise data centers.

"The data center software ecosystem is built on x86, and it runs on Xeon -- providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale," said Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group (DCG) at Intel Corporation. "Workloads of the future will require a heterogeneous mix of computing, and this collaboration with SambaNova delivers a cost‑efficient, high‑performance inference architecture designed to meet customer needs at scale -- powered by Xeon 6."

[2]

'The CPU is the system's executive layer': Intel joins SambaNova as both face existential threat from Nvidia's Groq-powered inference

* GPUs handle prefill operations by converting prompts into key-value caches
* SambaNova RDUs generate tokens at high throughput and low latency
* Intel Xeon 6 processors manage workload distribution and execute compiled code

Intel and SambaNova Systems have introduced a joint hardware blueprint combining GPUs, SambaNova RDUs, and Intel Xeon 6 processors for large-scale inference workloads. The system assigns GPUs to prefill operations, RDUs to decoding, and Xeon CPUs to execution and orchestration tasks across agent-driven environments.

"Agentic AI is moving into production -- and the winning pattern we're seeing is GPUs to start the job, Intel Xeon 6 to run it, and SambaNova RDUs to finish it fast," said Rodrigo Liang, CEO and co-founder of SambaNova Systems.

CPU is the execution and control layer

This design is scheduled to be available in the second half of 2026 for enterprises, cloud providers, and sovereign deployments. The architecture places Intel Xeon 6 processors at the center of system control, where they manage workload distribution, execute code, and coordinate tool interactions. This includes handling compilation, validating outputs, and maintaining communication between simultaneous processes.

"When thousands of simultaneous coding agents are generating tool calls, retrieval requests, code builds, and encrypted inter-agent messages, the CPU is not a background component -- it is the system's executive and action layer," said Harry Ault, CRO of SambaNova. The statement defines the CPU as the primary layer responsible for system behavior rather than a supporting component.

According to SambaNova, Xeon 6 delivers more than 50% faster LLVM compilation times compared with Arm-based server CPUs. It also delivers up to 70% faster vector database performance compared with other x86-based systems. These figures relate to execution speed within coding and retrieval workflows.

In this configuration, GPUs process the prefill stage by converting prompts into key-value caches. SambaNova RDUs operate as the decoding layer, generating tokens at high throughput and low latency. Xeon 6 processors function as both host CPUs and execution engines, managing system-level operations and running compiled workloads.

"Production inference is moving toward heterogeneous hardware -- no single chip type is optimal for every stage of an agentic workflow," said Banghua Zhu, co-founder and CTO at RadixArk. He added that combining RDUs with Xeon CPUs allows systems to maintain compatibility with existing software environments.

The system is designed to run inside existing air-cooled data centers without requiring new builds. According to the companies, this allows scaling of inference workloads without additional strain on water and energy resources.

As Nvidia and Groq continue to focus on improving inference throughput and latency, this announcement adds a layer of competition. It offers an alternative approach that distributes workloads across multiple hardware layers rather than relying on a single processing model.

[3]

Intel-SambaNova Collaboration Is One Answer to NVIDIA's Groq Partnership, After It Became Clear GPUs Alone Can't Dominate Inference

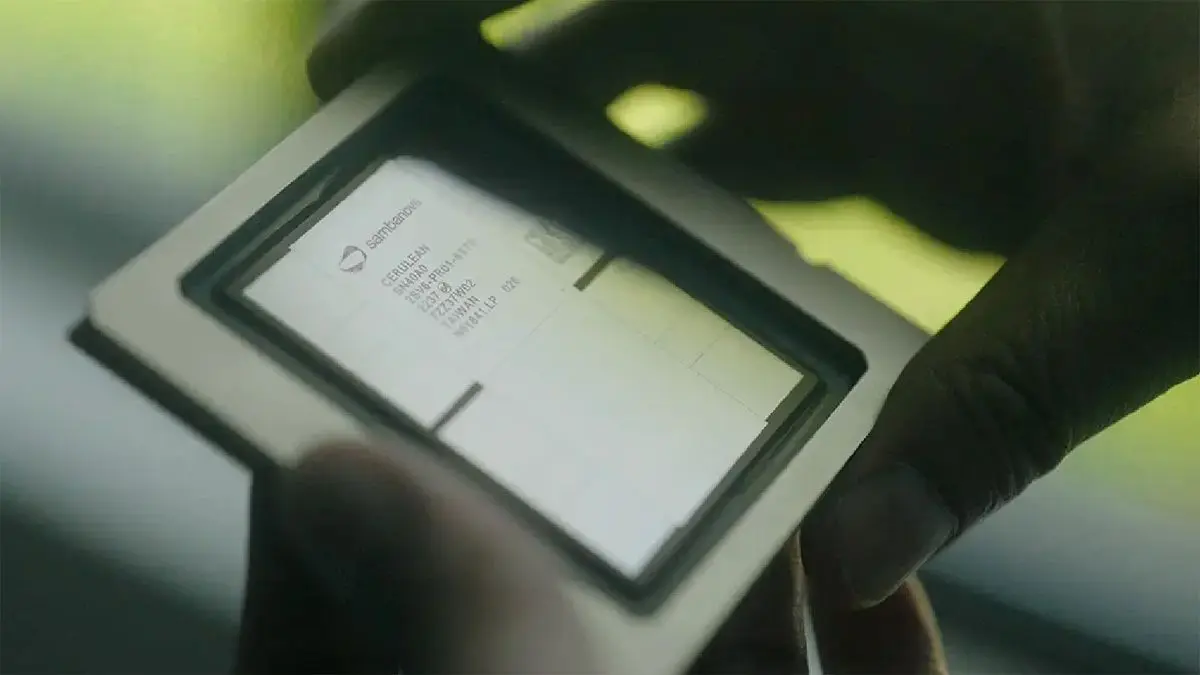

Inference is the next area of focus for compute providers, and after the NVIDIA-Groq partnership, the AI industry has realized it needs far more than just GPUs. This has led to a new pair emerging: Intel and SambaNova.

At this year's GTC, we saw NVIDIA talking about disaggregated inference, and how it has become important for them as a manufacturer to shift from their 'GPU-only' mentality and instead bring a relatively newer form of compute unit into the infrastructure race. With the Groq licensing agreement, we saw the SRAM-based LPUs debut in Rubin's LPX racks, and now Intel and SambaNova have decided to experiment with something similar, unveiling a new "inference architecture" featuring SambaNova's RDUs with Intel's Xeon 6 CPUs.

SambaNova today announced the next phase of its collaboration with Intel: a heterogeneous hardware solution that combines GPUs for prefill, Intel® Xeon® 6 processors as both host and "action" CPUs, and SambaNova RDUs for decode to deliver premium inference for the most demanding Agentic AI applications.
- SambaNova

This arrangement aims to target RDUs for decode workloads, with GPUs handling prefill work and Xeon 6 CPUs handling tasks such as orchestration and general-purpose work. The Intel-SambaNova partnership doesn't lock in a specific hyperscaler for the GPU option, meaning you could integrate ASICs in this configuration as well, though SambaNova didn't go into much detail about GPU-specific performance. SambaNova will integrate their SN50 units, which we'll discuss in a bit, and, along with this, the firm says they found Xeon 6 CPUs ideal for "end‑to‑end coding agent workflows" compared to ARM options.

Let's talk about the SN50 chip. The solution, revealed in early 2026, features the company's fifth-gen RDU units, with a combination of DRAM, SRAM, and HBM onboard. The SN50 features 2TB of DDR5 memory, along with 64 GB HBM3 and 520 MB SRAM, and, as you may have guessed by now, the idea of having such a memory architecture onboard is to provide minimal latency, high throughput, and sheer capacity. The SN50 is probably the only accelerator to feature such a memory layout, and according to the manufacturer, the DRAM + SRAM + HBM combo creates 'agentic caching'.

On a more general level, the primary difference between Intel's approach with SambaNova and NVIDIA's is that the former focuses more on a 'safer' bet, given that it doesn't need to provide a hefty underlying infrastructure for disaggregated inference. For hyperscalers looking for a more modular rack-scale offering that targets the "prefill + decode" breakdown, the Intel-SambaNova option is a decent bet. We were expecting Intel to go much deeper with RDU integration, but it seems, for now, it might be limited to just the Xeon CPU as the host option.

Intel's CEO has participated in SambaNova's latest funding round, and Lip-Bu is also an early investor in the company. There were plans to acquire them as well, but they were reportedly halted after a board disagreement, which is why Intel has settled on being a funding participant.


Intel and SambaNova announced a production-ready heterogeneous architecture that splits AI inference workloads across different hardware types. GPUs handle prefill operations, SambaNova SN50 RDUs manage decode and token generation, and Intel Xeon 6 CPUs orchestrate the system and execute agentic AI applications. The platform launches in the second half of 2026 as an alternative to Nvidia's approach.

Intel and SambaNova Partner on Disaggregated AI Inference Platform

Intel and SambaNova Systems have unveiled a production-ready heterogeneous AI inference architecture designed to challenge Nvidia's market dominance by distributing large-scale AI inference workloads across specialized hardware components [1][2]. The collaboration marks a significant shift in how AI inference hardware is deployed, moving away from GPU-only solutions toward a multi-component approach that assigns specific tasks to the most suitable processing units. This strategic partnership comes as the industry recognizes that no single chip type can optimally handle every stage of complex agentic AI workflows [2].
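The division of labor in the announcement (GPUs or AI accelerators for prefill, SN50 RDUs for decode, Xeon 6 CPUs for orchestration and tool execution) can be pictured as a routing table. The following is only an illustrative sketch: the `Stage` enum, the `STAGE_TO_HARDWARE` table, and the `plan_request` function are hypothetical names invented here, and nothing in it reflects an actual Intel or SambaNova software interface.

```python
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"          # ingest the prompt, build the key-value cache
    DECODE = "decode"            # generate output tokens
    TOOL_EXEC = "tool_exec"      # compile/run code, validate outputs
    ORCHESTRATE = "orchestrate"  # coordinate and distribute work


# Hardware class per stage, as described in the sources.
# The mapping structure itself is an assumption made for illustration.
STAGE_TO_HARDWARE = {
    Stage.PREFILL: "GPU or AI accelerator",
    Stage.DECODE: "SambaNova SN50 RDU",
    Stage.TOOL_EXEC: "Intel Xeon 6 CPU",
    Stage.ORCHESTRATE: "Intel Xeon 6 CPU",
}


def plan_request(needs_tool_call: bool) -> list[tuple[Stage, str]]:
    """Return ordered (stage, hardware) steps for one hypothetical agent request."""
    stages = [Stage.ORCHESTRATE, Stage.PREFILL, Stage.DECODE]
    if needs_tool_call:
        # Agentic requests add a CPU-side step for running tools or code.
        stages.append(Stage.TOOL_EXEC)
    return [(stage, STAGE_TO_HARDWARE[stage]) for stage in stages]


if __name__ == "__main__":
    for stage, hardware in plan_request(needs_tool_call=True):
        print(f"{stage.value:12s} -> {hardware}")
```

A real deployment would also have to move the key-value cache from the prefill device to the decode device; the sources do not describe how that transfer works, so it is left out of the sketch.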

Source: Wccftech

The platform splits inference into distinct stages: AI accelerators or GPUs handle prefill operations by converting prompts into key-value caches, SambaNova SN50 RDUs manage decode and token generation at high throughput and low latency, while Intel Xeon 6 CPUs serve as the system's executive layer for orchestration and workload distribution [1]. According to Rodrigo Liang, CEO of SambaNova Systems, "Agentic AI is moving into production -- and the winning pattern we're seeing is GPUs to start the job, Intel Xeon 6 to run it, and SambaNova RDUs to finish it fast" [2].

SambaNova SN50 RDUs Feature Unique Memory Architecture

The SambaNova SN50, revealed in early 2026, represents the fifth generation of the company's reconfigurable dataflow units and combines 2TB of DDR5 memory, 64GB of HBM3, and 520MB of SRAM [3]. This hybrid memory architecture creates what SambaNova calls "agentic caching," designed to deliver minimal latency, high throughput, and substantial capacity for demanding inference tasks [3]. The SN50 is reportedly the only AI accelerator to feature such a diverse memory layout, positioning it as a specialized solution for decode operations in the heterogeneous architecture [3].

Intel Xeon 6 CPUs Positioned as Control and Execution Layer

Intel Xeon 6 CPUs play a central role in the heterogeneous AI inference architecture, functioning not as background components but as the primary execution and control layer [2]. Harry Ault, CRO of SambaNova, emphasized this positioning: "When thousands of simultaneous coding agents are generating tool calls, retrieval requests, code builds, and encrypted inter-agent messages, the CPU is not a background component -- it is the system's executive and action layer" [2]. According to SambaNova's internal benchmarks, Xeon 6 achieves over 50% faster LLVM compilation compared to Arm-based server CPUs and delivers up to 70% higher performance in vector database workloads relative to competing x86 processors, specifically AMD EPYC [1]. These performance gains are intended to accelerate end-to-end development cycles for coding agents and similar agentic AI applications [1].

Alternative to Nvidia Emerges as Competition Intensifies

The Intel-SambaNova partnership directly responds to Nvidia's recent moves in the AI inference hardware market, particularly its Groq licensing agreement and the Rubin platform architecture [3]. While Nvidia's Rubin platform combines the Rubin CPX with heavy-duty Rubin GPUs featuring HBM4 memory, the Intel-SambaNova approach offers enterprises and cloud operators a more modular alternative that doesn't require extensive infrastructure changes [3]. A significant advantage is that SambaNova SN50 and Xeon-based servers are drop-in compatible with data centers that can handle 30kW, which covers the vast majority of enterprise data centers [1]. This compatibility allows organizations to scale inference workloads within existing air-cooled facilities without additional strain on water and energy resources [2].

Source: Tom's Hardware

Platform Targets Enterprises and Sovereign AI Programs

The heterogeneous architecture is scheduled for availability in the second half of 2026, targeting enterprises, cloud operators, and sovereign AI programs seeking scalable inference platforms for coding agents and other agentic workloads [1]. Kevork Kechichian, Executive Vice President and General Manager of Intel's Data Center Group, stated: "The data center software ecosystem is built on x86, and it runs on Xeon -- providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale" [1]. The partnership doesn't lock organizations into specific GPU vendors, allowing integration of various AI accelerators alongside the SambaNova RDUs and Intel CPUs [3]. This flexibility could appeal to organizations seeking to optimize their existing hardware investments while adopting new inference capabilities. Intel's CEO has participated in SambaNova's latest funding round, and there were reportedly discussions about acquisition that were halted after board disagreements, leading Intel to settle on being a funding participant instead [3].

Source: TechRadar

Summarized by Navi
