Stanford's Merlin AI delivers consistent diagnoses across hospitals using 3D CT scans

2 Sources

[1]

Radiology AI makes consistent diagnoses using 3D images from different health centres

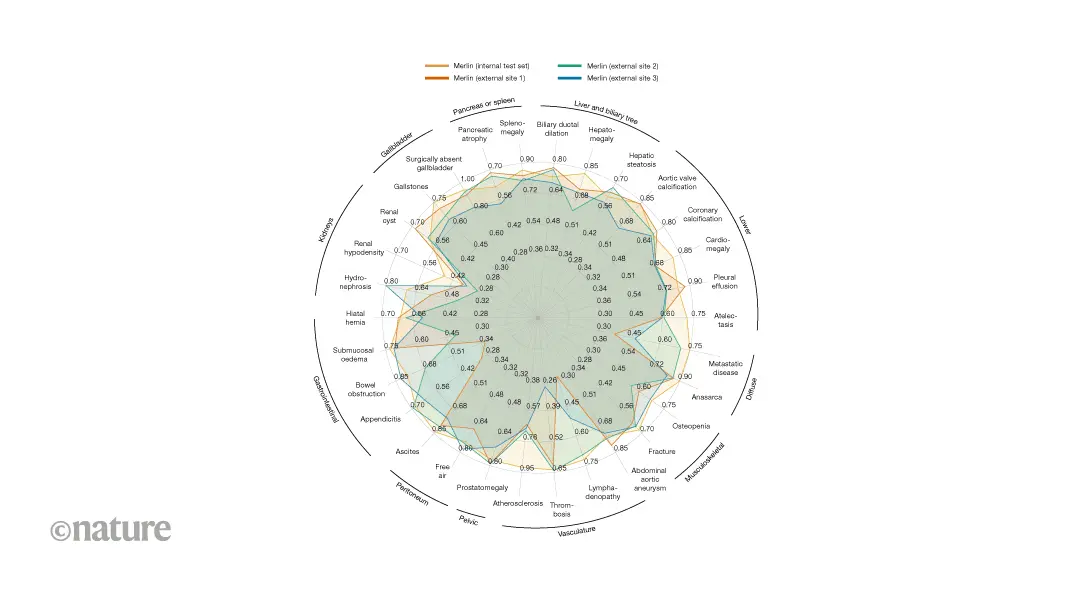

Study design: retrospective multi-centre evaluation of clinical computed-tomography images. Analysis: comparison of the ability of Merlin and other vision-language models to classify diseases and predict outcomes from abdominal scans across hospital sites. Conclusion: Merlin is a high-performing radiology model across diagnostic tasks and sites.

Roughly 30 million abdominal computed-tomography (CT) scans are performed every year in the United States. In addition to a growing shortage of radiologists, a rising demand for imaging has increased radiologists' interpretation workload and therefore the risk of suboptimal care (see go.nature.com/4axzfgw). This imbalance has driven the development of artificial-intelligence tools for radiology, which now account for around 75% of all AI-enabled medical devices approved by the US Food and Drug Administration (FDA; see go.nature.com/4amujrj). Despite this progress, many FDA-approved radiology AI models lack multi-site evaluation and might underperform when used outside their training data, potentially limiting clinical adoption.

Over the past few years, multimodal vision-language radiology models, trained on large-scale joint sets of imaging data and radiology reports, have demonstrated strong performance across various tasks, including disease classification and report generation. However, for these tools to be adopted clinically, they must generalize across diverse data distributions. To address the generalizability concerns of multimodal radiology models, we developed Merlin, a vision-language model that learns from volumetric (3D) abdominal CT scans, radiology reports and data from electronic health records. Merlin demonstrated consistent performance beyond its training distribution across three external hospital sites (outside the sources of the training data), highlighting its potential for clinical adoption. We have made the vision-language data set of 25,494 CT scans and radiology reports open source to spur further research.

To test Merlin's generalizability, we assessed its accuracy at retrospectively classifying 30 abdominal abnormalities using 37,855 CT scans obtained at three external hospital sites, without fine-tuning the model (zero-shot). We compared Merlin with other vision-language models (Fig. 1) that had been fine-tuned using Merlin's multimodal data set to isolate effects of the differences in vision-encoder architecture, rather than in training data. Across all sites, Merlin consistently outperformed the second-best alternative architecture baseline, achieving a 19.7% average improvement in classification accuracy. Merlin outperformed the next-best vision-language model at the three hospital sites by 34.4%, 15.7% and 8.9%. These findings indicate that Merlin is robust to variation in patient demographics, imaging protocols and radiologists' reporting practices.

We attribute Merlin's strong zero-shot performance across hospital sites to its full 3D image encoder and large-scale multimodal pre-training. These findings provide a path towards radiology AI systems that have robust performance across sites, supporting broader clinical adoption. Merlin demonstrates that multimodal training using radiology reports and data from electronic health records is a promising approach for developing robust models. Merlin achieves strong off-the-shelf performance across several health systems and sites. However, clinical deployment will require prospective validation and evaluation on other tasks (such as radiology-report generation and disease prediction) across external data distributions. By fully open-sourcing the model and data set, we invite the community to reproduce and extend our findings using other data sets, using our experiments as a foundation.

-- Ashwin Kumar and Akshay S. Chaudhari are at Stanford University, Stanford, California, USA.

[2]

Researchers develop versatile machine learning tool to automate complex clinical diagnostics

National Institutes of Health (NIH)
Mar 4 2026

A research team funded by the National Institutes of Health (NIH) has developed a versatile machine learning model that could one day greatly expand what medical scans can tell us about disease. Scientists used their tool, named Merlin, to assess 3D abdominal computed tomography (CT) scans, accomplishing tasks as simple as identifying anatomical features and as complex as predicting disease onset years in advance. Despite being developed as a general-purpose CT model, Merlin surpassed a gauntlet of similar automated tools in tasks they were specifically built to handle.

The team trained their model on a unique set of patient CT scans linked to radiology reports and medical diagnosis codes collected from the Stanford University School of Medicine. The researchers note that it is the largest collection of abdominal CT data to date.

"Rich datasets like this are necessary to push the limits of what artificial intelligence models can accomplish in medicine. This work exemplifies how meticulously crafted training data can enable remarkable insights that significantly streamline workflows and assist in clinical decision-making," said Bruce Tromberg, Ph.D., Director of NIH's National Institute of Biomedical Imaging and Bioengineering (NIBIB).

CT is a common form of medical imaging, often performed in the early stage of medical evaluations. To obtain a diagnosis, a radiologist must interpret the results and, oftentimes, additional tests and clinical assessments are needed too. At baseline, this process is lengthy and only becomes more cumbersome when accounting for the growing shortage of physicians in the United States.

"With Merlin, you could potentially go beyond traditional radiology and jump straight from imaging to a possible diagnosis. And that's just one potential use," said co-first author Louis Blankemeier, Ph.D., who conducted this work while a graduate student at Stanford University.
Merlin represents a new class of models, commonly referred to as foundation models, that are trained using large-scale, unlabeled datasets, which span many kinds of information. In the new work, the researchers tested Merlin across six broad categories of activities, spanning more than 750 individual tasks that entailed diagnostics, prognostics, and quality assessment.

To prepare Merlin for the wide breadth of tasks, the researchers initially trained it on their clinical data trove, which paired more than 15,000 3D abdominal CT scans with their radiology reports and nearly one million diagnostic codes. Using this information as study material, Merlin learned about relationships between visual and written data. The researchers then quizzed Merlin on more than 50,000 previously unseen abdominal CT scans, each coming from one of four different hospitals, to learn how closely their model could match the human-produced conclusions associated with each scan.

"Merlin tackled some tasks, such as predicting diagnosis codes, head-on, while other more complicated tasks, such as drafting radiology reports from scratch or identifying and outlining organs in a 3D space, called for additional training," said co-first author Ashwin Kumar, a graduate student at Stanford University. The team also deployed state-of-the-art models, specializing in each task type, to serve as points of comparison.

On average across 692 different diagnostic codes, Merlin successfully predicted which of two scans was more likely to be associated with a particular code over 81% of the time, outperforming several variants of two other models. For a subset of 102 codes, Merlin's performance rose to 90%.

In another category, the team pushed Merlin to predict the onset of chronic diseases, such as diabetes, osteoporosis, and heart disease, in healthy patients based solely on CT scans. The study authors found that, when comparing scans from different subjects, Merlin could identify patients who were at higher risk of developing a particular disease in the next five years 75% of the time, versus 68% for the other model. These findings hint that the model can detect key features in scans that may be lost to human eyes, suggesting that the tool could help identify new biomarkers for disease, Blankemeier explained.

The researchers ramped up the difficulty further by challenging Merlin to interpret CT scans of the chest, a body part completely absent from its CT study material. Merlin's unique ability to identify generalizable features of disease allowed it to perform as well as or better than models trained exclusively on chest scans. Despite being a jack-of-all-trades, Merlin exceeded or matched the specialist models across all tasks. The authors attribute Merlin's magic touch to its architecture and training data, which allowed it to process complex 3D scans and build associations between visual and written information.

The researchers have high hopes that their approach could soon leverage precedent to obtain regulatory approval for simpler tasks, but they also plan to refine Merlin to better handle more complicated challenges, such as report writing. While the tool is powerful out of the box, they encourage users to fine-tune the model with their own data to address their specific needs.

"Our model and the data will provide the community a robust backbone to build upon," said senior author Akshay Chaudhari, Ph.D., a professor of radiology and biomedical data science at Stanford University. "From here, the sky's the limit."
This research was supported by NIBIB through grants R01EB002524 and P41EB027060, by the Medical Imaging and Data Resource Center (MIDRC) under contract 75N92020C00021, by the National Heart, Lung, and Blood Institute (NHLBI) through grants R01HL167974 and R01HL169345, and by the National Institute of Arthritis and Musculoskeletal and Skin Diseases (NIAMS) through grants R01AR077604 and R01AR079431.

National Institutes of Health (NIH)

Journal reference: Blankemeier, L., et al. (2026). Merlin: a computed tomography vision-language foundation model and dataset. Nature. DOI: 10.1038/s41586-026-10181-8. https://www.nature.com/articles/s41586-026-10181-8

Stanford researchers developed Merlin AI, a vision-language model that analyzes 3D abdominal CT scans. Trained on more than 15,000 scans and nearly one million diagnostic codes, the NIH-funded tool picked which of two scans was more likely to carry a given diagnosis code over 81% of the time, averaged across 692 codes. It matched or exceeded specialized models across more than 750 tasks and performed consistently across multiple hospital sites, offering a potential response to the growing radiologist shortage.

Merlin AI Tackles Radiologist Shortage with Consistent Performance

Stanford University researchers have developed Merlin AI, a vision-language AI model designed to analyze 3D abdominal CT scans with remarkable diagnostic consistency across different healthcare settings [1]. The NIH-funded machine learning tool addresses a critical need in radiology, where roughly 30 million abdominal CT scans are performed annually in the United States amid a growing physician shortage [1]. This imbalance has increased radiologists' workload and the risk of suboptimal care, driving development of radiology AI solutions that now account for approximately 75% of all AI-enabled medical devices approved by the US Food and Drug Administration [1].

Source: News-Medical

Foundation Model Trained on Unprecedented Clinical Dataset

Merlin represents a new class of foundation model, trained on what the researchers describe as the largest collection of abdominal CT data to date [2]. The team at Stanford University School of Medicine trained the model on more than 15,000 3D abdominal CT scans paired with radiology reports and nearly one million diagnostic codes from electronic health records [2]. This multimodal pre-training approach allowed Merlin to learn relationships between visual imaging data and written clinical information. The researchers have made their vision-language data set of 25,494 CT scans and radiology reports open source to accelerate further research [1].

Exceptional Zero-Shot Performance Across Multiple Hospital Sites

To test Merlin's generalizability, researchers assessed its accuracy at classifying 30 abdominal abnormalities using 37,855 CT scans obtained from three external hospital sites without fine-tuning the model, a setting known as zero-shot evaluation [1]. Across all sites, Merlin consistently outperformed the second-best alternative architecture baseline, achieving a 19.7% average improvement in classification accuracy [1]. At individual hospital sites, Merlin outperformed the next-best vision-language model by 34.4%, 15.7%, and 8.9%, demonstrating robustness to variations in patient demographics, imaging protocols, and radiologists' reporting practices [1].
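Zero-shot classification with a vision-language model is commonly done by comparing a scan's embedding with text embeddings of prompts that describe a finding as present or absent. Neither source describes how Merlin scores findings, so the Python sketch below only illustrates that general technique. The function names and the toy vectors are hypothetical; in practice the embeddings would come from a model's image and text encoders.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


def zero_shot_finding(scan_embedding, present_prompt_embedding, absent_prompt_embedding):
    """Return (is_present, margin) for one finding.

    The finding is called present when the scan embedding is closer to the
    'finding present' prompt than to the 'finding absent' prompt.
    No task-specific training is involved, hence 'zero-shot'.
    """
    sim_present = cosine_similarity(scan_embedding, present_prompt_embedding)
    sim_absent = cosine_similarity(scan_embedding, absent_prompt_embedding)
    margin = sim_present - sim_absent
    return margin > 0, margin


# Toy 3-dimensional stand-ins for real encoder outputs (hypothetical values).
scan = [1.0, 0.0, 0.0]
present_prompt = [0.9, 0.1, 0.0]
absent_prompt = [0.0, 1.0, 0.0]

is_present, margin = zero_shot_finding(scan, present_prompt, absent_prompt)
print(is_present, round(margin, 3))
```

Because the decision depends only on relative similarity, the same function can be reused for any finding that can be phrased as a pair of prompts, which is what lets this style of model be evaluated at new hospital sites without retraining.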

Source: Nature

Merlin AI Excels at Diverse Diagnostic and Prognostic Tasks

The researchers tested Merlin across six broad categories spanning more than 750 individual tasks that included diagnostics, prognostics, and quality assessment [2]. On average across 692 different diagnostic codes, Merlin successfully predicted which of two scans was more likely to be associated with a particular code over 81% of the time, outperforming several variants of two other models [2]. For a subset of 102 codes, performance rose to 90% [2]. Co-first author Louis Blankemeier, Ph.D., explained that "with Merlin, you could potentially go beyond traditional radiology and jump straight from imaging to a possible diagnosis" [2].
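The "which of two scans" figure reads like a pairwise ranking score: over every pair made of one scan that carries the code and one that does not, count how often the model scores the positive scan higher. This quantity is mathematically the same as the area under the ROC curve, although the assumption that the study computed it exactly this way is ours. A minimal Python sketch, with a hypothetical function name and made-up scores:

```python
from itertools import product


def pairwise_ranking_accuracy(scores, labels):
    """Fraction of (positive, negative) pairs ranked correctly.

    scores: model scores, higher meaning 'more likely to carry the code'.
    labels: 1 if the scan carries the code, 0 otherwise.
    Ties count as half a correct pair, matching the usual AUROC convention.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative scan")

    correct = 0.0
    for pos, neg in product(positives, negatives):
        if pos > neg:
            correct += 1.0
        elif pos == neg:
            correct += 0.5
    return correct / (len(positives) * len(negatives))


# Made-up scores for five scans; the first three carry the code.
scores = [0.9, 0.8, 0.3, 0.6, 0.2]
labels = [1, 1, 1, 0, 0]
print(round(pairwise_ranking_accuracy(scores, labels), 3))  # 5 of 6 pairs correct -> 0.833
```

A score of 0.5 corresponds to random guessing on this measure, so 81% and 90% should be read against that baseline and not against 0%.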

Early Disease Diagnosis and Biomarker Discovery Potential

Merlin was also able to predict the onset of chronic diseases such as diabetes, osteoporosis, and heart disease in healthy patients based solely on CT scans [2]. When comparing scans from different subjects, Merlin could identify patients at higher risk of developing a particular disease in the next five years 75% of the time, versus 68% for the comparison model [2]. These findings suggest the model can detect key features in scans that may be lost to human eyes, potentially helping identify new biomarkers for disease [2].

Path Toward Clinical Adoption and Future Applications

Bruce Tromberg, Ph.D., Director of NIH's National Institute of Biomedical Imaging and Bioengineering, noted that "rich datasets like this are necessary to push the limits of what artificial intelligence models can accomplish in medicine" [2]. The researchers attribute Merlin's strong performance to its full 3D image encoder and large-scale multimodal training approach [1]. Co-first author Ashwin Kumar and senior author Akshay S. Chaudhari acknowledge that clinical deployment will require prospective validation and evaluation on additional tasks such as radiology-report generation across external data distributions [1]. The tool's ability to automate complex clinical diagnostics could significantly streamline clinical workflows, and its performance on chest CT scans, despite having no chest imaging in its training data, suggests even broader applications may be possible [2].
