AI Threatens Academic Integrity as Online Courses Face Credibility Crisis

2 Sources

[1]

How AI is challenging the credibility of some online courses

Brock University provides funding as a member of The Conversation CA.

Distance learning far precedes the digital age. Before online courses, people relied on print materials (and later radio and other technologies) to support formal education when the teacher and learner were physically separated.

Today, there are varied ways of supporting distance learning with digital communication. With "asynchronous" online courses, teaching does not occur live. Students access course materials on the learning management system and complete assignments at their own pace. This allows flexibility across time zones and work schedules and affords accessible learning.

Nevertheless, some researchers have raised concerns regarding the quality and student outcomes associated with asynchronous online courses. As well, generative artificial intelligence (GenAI) has exposed fundamental challenges to this mode of delivery.

While GenAI poses serious challenges to academic integrity in many formats of learning, including synchronous online and in-person learning, asynchronous courses face the most acute risk. Without real-time interaction or time constraints, students can use AI undetected while instructors never observe their thinking processes.

Compromised learning models

Asynchronous courses have long relied on conventional assessments: discussion board posts, written reflections, essay assignments and pre-recorded videos. These models of asynchronous assessment are now compromised. Distinguishing AI-generated content from human-written text has become increasingly difficult.

Text discussions and reflections present the highest substitution risk. GenAI can generate personalized reflective posts and discussion replies rapidly, complete with a polished academic tone. An instructor may spend hours responding to these contributions yet gain little evidence about who actually learned and produced the material.

AI agents like ChatGPT's Atlas browser can now navigate course sites, consume materials and complete some assignments with minimal student intervention -- if any.

In written assignments, requiring precise citations from assigned course materials may seem like a safeguard. However, AI-enhanced tools can easily meet such requirements. This approach provides false security and fails to address underlying problems, including authorship with integrity in an AI world.

Students can be asked to provide time-stamped drafts, version history and checkpoints to document their process. But these can be easily fabricated -- while instructors become overloaded with policing rather than focusing on students' learning and progress. AI-generated infographics and videos are also becoming hard to distinguish from human-made ones.

Detectors, remote proctoring not solutions

AI detectors cannot solve the problem. Research suggests detection tools produce false positive rates far higher than advertised, with disproportionate harm to neurodivergent and second-language learners. Several universities now explicitly advise against using detection software as evidence of academic misconduct.

Remote proctoring is intrusive and raises serious ethical, equity, privacy and reliability concerns. Students requiring accommodations, whether for disabilities, inadequate technology or lack of private space, must be granted leniency that undermines the system's purpose, rendering it unsustainable while diverting instructors away from their educational mission.

These mounting challenges are neither hypothetical nor distant. Without meaningful intervention, institutions risk credentialing students who have not demonstrably engaged with course content, thereby undermining the integrity of academic credentials.

Two less-than-ideal strategies

Genuine protection against AI substitution requires approaches that fundamentally alter how instructors deliver asynchronous courses. Two strategies that somewhat meet this threshold are:

1) Short oral examinations can be scheduled for major assignments or throughout a term. While not without limitations, these conversations verify authorship and assess the depth of understanding.

2) Experiential learning components with external verification: Students can apply course concepts to real-world settings and include brief attestations from workplace supervisors, community partners or other external stakeholders in their capstone assignments that will be graded by course instructors. Combined with short oral examinations, this approach would deter offloading all learning to AI and augment asynchronous coursework with practical components.

However, assessment strategies alone cannot solve the authenticity crisis.

Rethinking program design

The Community of Inquiry framework, a tool for conceptualizing online learning, identifies three essential elements for effective online learning: social presence (students engage authentically), cognitive presence (students construct understanding through inquiry) and teaching presence (instructors facilitate learning).

GenAI threatens all three of these elements: it can simulate social engagement through generated posts, substitute for cognitive work and force instructors to focus on policing rather than teaching. Institutions must evaluate whether their asynchronous programs can maintain these elements given GenAI capabilities.

Confronting an uncomfortable reality

Institutions and educators must be honest about limitations. Few strategies provide genuine protection against AI substitution; most merely create friction that determined students can overcome. The recommended approaches named above require synchronous elements or external verification that fundamentally alter asynchronous delivery. Implementation of these imperfect solutions requires genuine institutional commitment, resources and policy support.

Institutions now face a choice: invest substantially in what is required to restore some degree of assessment authenticity or acknowledge that asynchronous programs (as currently structured) cannot credibly assure learning outcomes. Band-aid solutions and deflection of responsibility to instructors will only deepen the credentialing crisis.

In the absence of robust institutional efforts, asynchronous programs risk becoming credential mills in all but name. The question is not whether institutions can afford to act, but whether they can afford not to.

[2]

How AI is challenging the credibility of some online courses

This article is republished from The Conversation under a Creative Commons license.

Generative AI is undermining the credibility of asynchronous online courses by enabling students to complete assignments without genuine learning. Traditional assessment methods are becoming obsolete as AI can generate discussion posts, essays, and even navigate course materials autonomously.

The Growing Threat to Online Education

Generative artificial intelligence is fundamentally challenging the credibility of asynchronous online courses, creating an unprecedented crisis in academic integrity. Unlike traditional distance learning that relied on print materials and radio, today's digital education faces a sophisticated threat that can seamlessly replicate human academic work [1].

Source: The Conversation

Asynchronous online courses, which allow students to access materials and complete assignments at their own pace without real-time interaction, are particularly vulnerable to AI exploitation. Without time constraints or direct instructor observation, students can utilize AI tools undetected while instructors remain unaware of their actual thinking processes [2].

Traditional Assessment Methods Under Siege

Conventional assessment methods that have long supported asynchronous learning are now fundamentally compromised. Discussion board posts, written reflections, essay assignments, and pre-recorded videos can all be generated by AI with increasing sophistication. Text discussions and reflections present the highest substitution risk, as generative AI can rapidly produce personalized reflective posts complete with polished academic tone

1

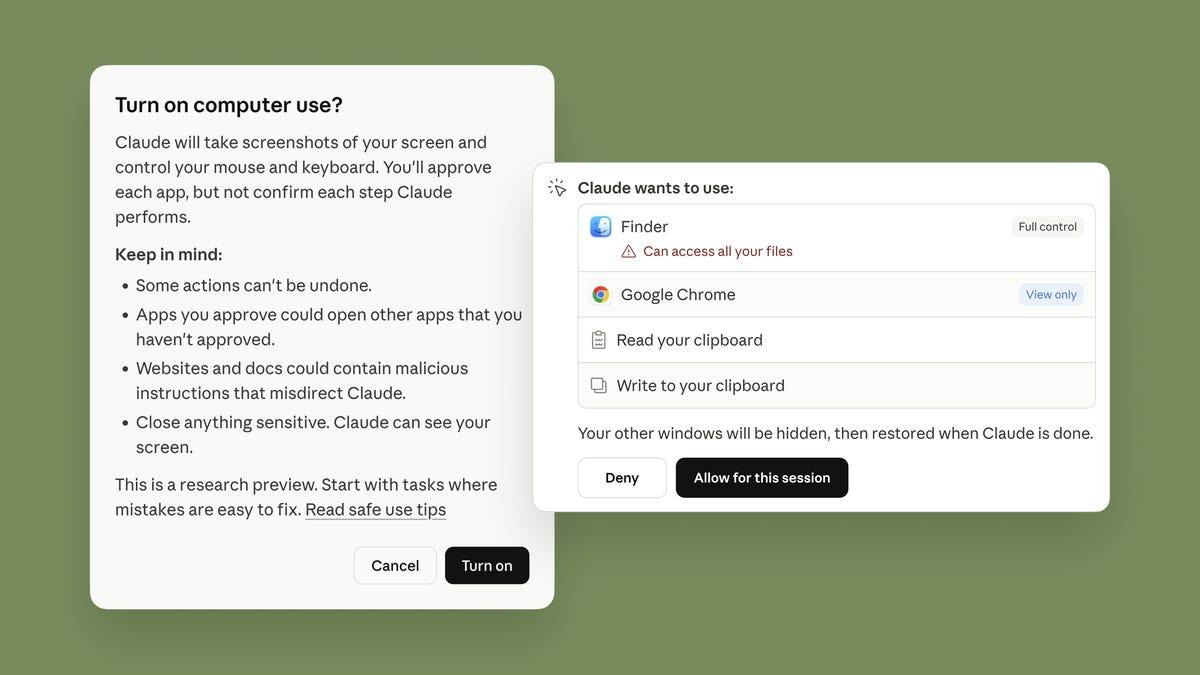

.The situation has become more alarming with the emergence of AI agents like ChatGPT's Atlas browser, which can navigate course sites, consume materials, and complete assignments with minimal or no student intervention. Even requiring precise citations from course materials provides false security, as AI-enhanced tools can easily meet such requirements while failing to address underlying authorship integrity issues

2

.Failed Solutions and False Securities

Institutions attempting to combat this crisis through AI detectors and remote proctoring are discovering these solutions create more problems than they solve. Research indicates that detection tools produce false positive rates significantly higher than advertised, with disproportionate harm to neurodivergent and second-language learners. Several universities now explicitly advise against using detection software as evidence of academic misconduct [1].

Remote proctoring presents equally problematic challenges, raising serious ethical, equity, privacy, and reliability concerns. Students requiring accommodations for disabilities, inadequate technology, or lack of private space must be granted leniency that undermines the system's purpose, rendering it unsustainable while diverting instructors from their educational mission [2].

Emerging Strategies for Academic Integrity

Educational institutions are exploring two primary strategies to combat AI substitution, though both present limitations. Short oral examinations scheduled for major assignments or throughout terms can verify authorship and assess understanding depth through direct conversation. While not perfect, these interactions provide evidence of genuine student engagement with course material [1].

Experiential learning components with external verification represent another approach, requiring students to apply course concepts in real-world settings with attestations from workplace supervisors, community partners, or other external stakeholders. Combined with oral examinations, this strategy could deter complete reliance on AI while augmenting asynchronous coursework with practical components [2].

Institutional Credibility at Stake

The challenges facing online education are neither hypothetical nor distant. Without meaningful intervention, institutions risk credentialing students who have not demonstrably engaged with course content, thereby undermining the integrity of academic credentials. This crisis threatens the fundamental value proposition of higher education and could erode public trust in online learning credentials.

Source: Phys.org

Summarized by Navi
