AMD and Intel report unprecedented CPU demand surge driven by agentic AI and inference workloads

3 Sources

[1]

'CPUs are cool again,' Intel and AMD reporting spikes in CPU demand due to agentic AI, shortages -- Lisa Su says business exceeded expectations while Intel is looking at long-term agreements with potential customers

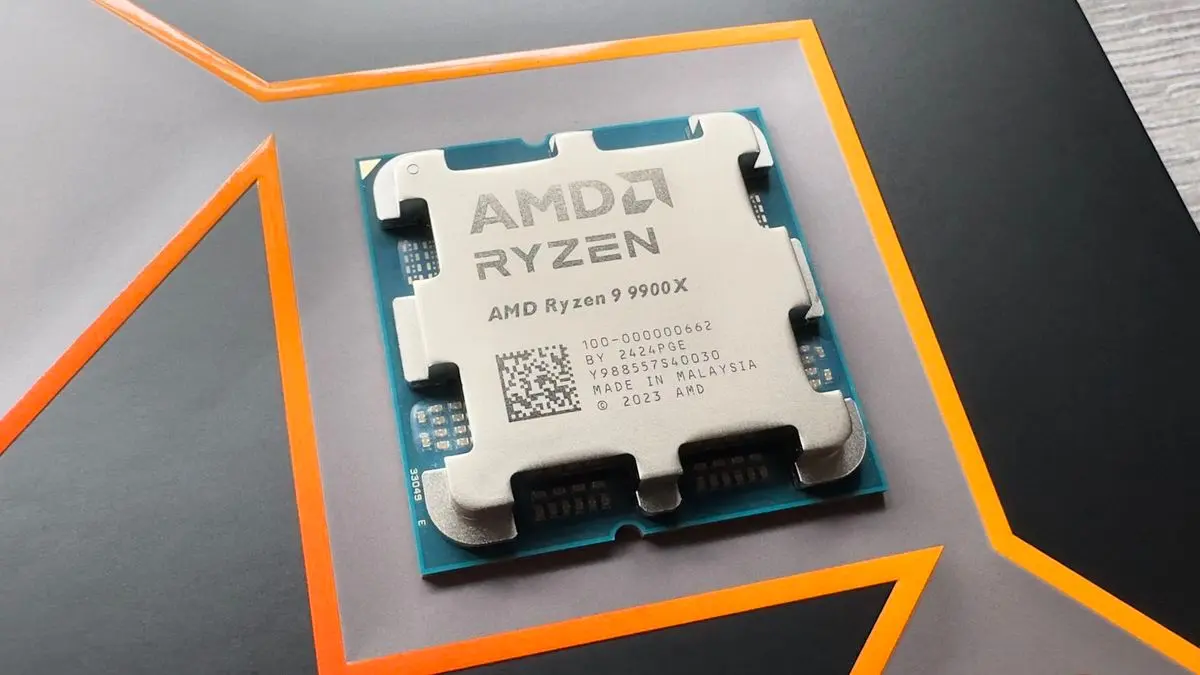

Both AMD and Intel said during the 2026 Morgan Stanley Technology, Media & Telecom Conference that demand for CPUs is seeing an uptick due to artificial intelligence. Intel CFO David Zinsner said during his question-and-answer session (via Investing.com) that "the CPU has become cool again this year," especially as AI agents need CPUs to orchestrate the computationally heavy tasks that the GPUs and NPUs will execute. Intel has even started seeing customers who are looking at long-term agreements, ensuring that they'll have a continuous supply of the chips needed to expand their operations.

On the other hand, AMD CEO Lisa Su said during the same conference, "You know, we're seeing a significant CPU demand, frankly, as a result of the inference demand picking up." She also added later that "the CPU portion of the business has actually far exceeded my expectations in terms of demand."

The AI boom has been driving a shortage in various components since ChatGPT showed some potential in late 2022. It first began with GPUs, especially as data centers and hyperscalers have been buying these components en masse to build massive servers with hundreds of thousands of GPUs. As graphics card supply started to normalize around the middle of 2025, experts and analysts began warning about memory and storage chip shortages due to massive demand for high-bandwidth memory and enterprise-grade storage in AI data centers. We felt the full swing of this crisis in the fourth quarter of last year, with pricing for RAM modules and SSDs continuing to rise through February 2026.

What makes this worse than the GPU shortage is that it has a much wider impact. While the GPUs that were in short supply were mostly limited to desktop PCs and gaming laptops with a discrete graphics card, virtually every modern digital device -- from consumer devices like smart TVs and smartphones to automobiles and industrial-grade equipment -- needs memory and storage.
And consumer-grade memory and storage are fighting for the same wafer space that enterprise-grade memory and storage could occupy, usually with a far higher price tag.

As AI advancements move forward from large language models and chatbots to agents that can observe, reason, plan, act, and learn independently, data centers require more multi-processor computing power -- that means combining CPUs, GPUs, NPUs, and more -- to support the entire agentic AI workflow. China is starting to see this spike in demand, with both Team Blue and Team Red reporting supply shortages for server CPUs in the region. We're also seeing a spike in demand for high-end Mac Studios and Mac minis, especially as we see more people build their own local AI agents with the rise in popularity of the open-source Clawdbot/Moltbot/OpenClaw.

AMD and Intel are presumably talking about data center demand for their CPUs; consumer systems aren't equipped to handle the massive memory demands of agentic AI. If there is a shortage, however, it could trickle down to the consumer market, assuming the demand keeps pace. Over the past several generations, AMD and Intel have converged their data center and consumer offerings, allowing them to maximize yields by leveraging the same microarchitecture across both client and enterprise. Some of that silicon won't be useful in the data center, so the consumer market won't evaporate. But it could put downward pressure on supply if the focus shifts toward the data center, as we've seen with RAM and SSDs.

Unlike Nvidia, which has seen exponential increases in revenue in its data center business, both AMD and Intel still see about half of their total revenue each quarter from the consumer market. It's still an important market, so although demand from data centers may increase, it shouldn't come at the cost of the consumer market, at least entirely.
Hopefully, both Intel and AMD can keep up with the future demand, so as not to exacerbate the worsening situation of the computer industry. Otherwise, some are already predicting the end of the entry-level PC by 2028 if things continue as they are.

[2]

AMD's CPU division is booming as CEO Dr. Lisa Su says sales 'far exceeded my expectations'

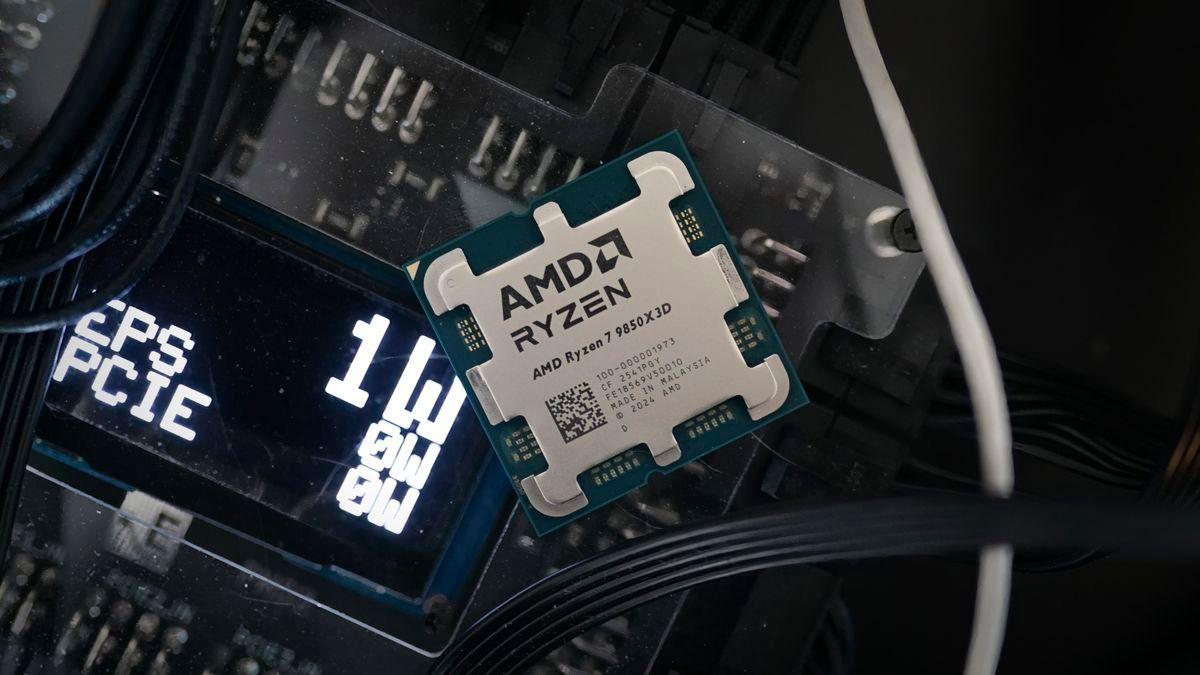

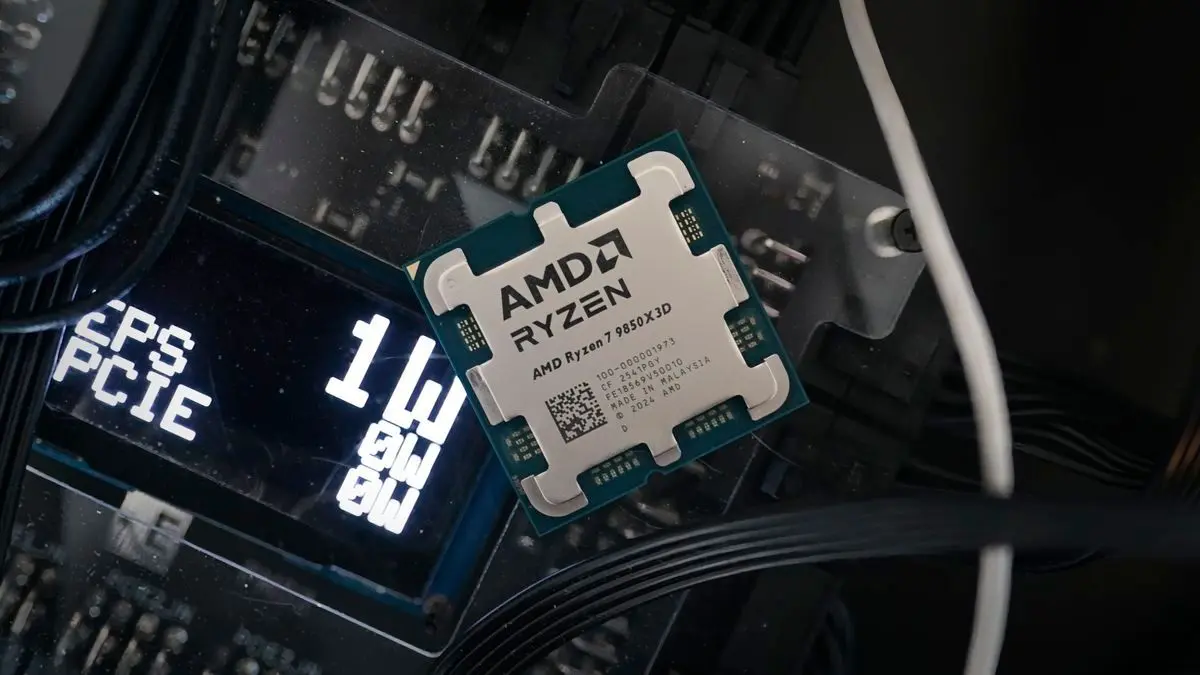

AMD CEO Dr. Lisa Su says the company's CPU sales have "far exceeded my expectations in terms of demand." That's the good news. The more ambivalent addendum, at least for us mere PC gamers, is that you can probably guess why AMD CPU sales are going gangbusters. Rejoice, because it is of course AI.

"We're seeing a significant CPU demand, frankly, as a result of the inference demand picking up," Su told investors at the recent Morgan Stanley Conference. Inference, of course, involves the running of AI models and delivering AI services, as opposed to training or building AI models in the first place.

Inference has slightly different requirements from a number of angles. The compute load is of a different character -- and broadly more CPU-intensive than training -- and so are the software requirements and platforms. Nvidia's GPUs are particularly dominant in training and not just in terms of the hardware and raw performance. Arguably, Nvidia's CUDA software framework is just as (if not more) important in explaining why it is so dominant in the AI training market.

Indeed, AMD has increasingly tended to pitch its Instinct AI GPUs at inference rather than training. As a for instance, the new mega-deal between AMD and Meta involving semi-custom hardware is thought to be very much an inference-optimised design. Anywho, it is likely in reference to inference workloads that Su also explained that AMD's customers now think, "the demand for CPU compute sitting along AI was perhaps something that was under-forecasted."

As for what all this means for ye olde consumer PCs, well, there are mixed signals. On the one hand, Su spoke of supply constraints due to massively increased demand for CPUs, which isn't good. On the other, she also said, "we are very, very well positioned from a supply standpoint to meet a large percentage of that demand."

What's more, AMD's latest server Zen 6-based CPU, codenamed Venice, is being built on TSMC's most advanced N2 node.
That's not a rumour, that's according to AMD. What we don't know is what node AMD will use for its Zen 6 consumer CPUs. AMD desktop and laptop CPU chiplets are currently built on TSMC N4 silicon. So, using N3 rather than the latest N2 would still provide a full node jump but also mean that consumer CPUs aren't competing with those new Venice server CPU chiplets for the most advanced TSMC N2 silicon. The bottom line, as ever, is that we just don't know for now. All this AI demand certainly helps when it comes to giving AMD lots of resources to spend on engineering new chips, including chips for gaming PCs. But it also makes everything more expensive.

[3]

AMD Witnesses "Unexpected" CPU Demand From Customers as Agentic AI Accelerates Adoption; CEO Lisa Su Warns Supply Is Tightening

AMD's CEO has discussed the company's situation with enterprise demand for its server CPUs, and according to Lisa Su, Team Red faces 'unexpected' customer volume.

The CPU-to-GPU ratio in modern-day AI compute workloads has evolved dramatically over the past few months, mainly because the arrival of agentic applications has significantly increased the role of CPUs. We have seen hyperscalers like Meta enter into standalone CPU agreements with infrastructure providers like AMD and NVIDIA, indicating that compute is diversifying away from GPUs. While talking at the Morgan Stanley Conference, AMD's CEO Lisa Su revealed that the company is seeing 'unexpected' server CPU demand and that the demand will continue to grow.

"We're seeing actually, as much as, you know, I'm very, very excited about the GPU portion of the business, I mean, the CPU portion of the business has actually far exceeded my expectations in terms of demand. I was pretty bullish to begin with, right? If you talk to our top customers, they're like, 'Wow, you know, Lisa, the, like, the demand for CPU compute sitting along AI was perhaps something that was under-forecasted.' We are in the process of catching up." - AMD's CEO Lisa Su

When asked whether AMD could cater to the enterprise demand around CPUs, Su revealed that there is "supply tightness", but that it comes from the fact that customer interest grew suddenly in the past few quarters, which gave the supply chain little time to adjust. Team Red says it is working closely with partners to address existing bottlenecks and expects capacity to expand in the coming year. AMD's CEO is confident that the company's product offerings are well-positioned to address training, inference, and agentic demand.

This isn't the first time a CPU manufacturer has reported significant demand; Intel also revealed a similar situation, disclosing that it failed to meet hyperscaler commitments because it doesn't have enough production capacity.
At the same time, we are seeing NVIDIA entering into Vera-only CPU commitments with infrastructure partners, which indeed underscores the growing importance of server CPUs in AI workloads.

AMD CEO Lisa Su reveals CPU sales far exceeded expectations as artificial intelligence inference workloads drive unprecedented demand. Both AMD and Intel report supply shortages for server CPUs, particularly in China, as agentic AI applications require more CPU compute power alongside GPUs. Intel CFO says CPUs have become 'cool again' with customers seeking long-term supply agreements.

AMD Reports CPU Demand Far Exceeded Expectations

AMD CEO Lisa Su disclosed at the Morgan Stanley Conference that the company's CPU division is experiencing demand levels that have far exceeded expectations, driven primarily by artificial intelligence inference workloads [2]. "We're seeing a significant CPU demand, frankly, as a result of the inference demand picking up," Su told investors, adding that "the CPU portion of the business has actually far exceeded my expectations in terms of demand" [1]. This surge marks a significant shift in the AI compute landscape, where GPUs previously dominated headlines and supply concerns.

Source: Wccftech

Agentic AI Drives New CPU Requirements

The rise of agentic AI applications has fundamentally altered the balance between CPU and GPU requirements in AI compute workloads [3]. As AI systems evolve beyond simple chatbots to agents capable of observing, reasoning, planning, acting, and learning independently, data centers require more multi-processor computing power combining CPUs, GPUs, and NPUs to support entire agentic workflows [1]. Intel CFO David Zinsner confirmed this trend, stating that "the CPU has become cool again this year," particularly as AI agents need CPUs to orchestrate computationally heavy tasks that GPUs and NPUs execute [1]. Hyperscalers like Meta have begun entering standalone CPU agreements with infrastructure providers, signaling that compute requirements are diversifying away from GPU-only solutions.

Source: Tom's Hardware

Supply Shortages Emerge as Demand Catches Industry Off Guard

Both chip manufacturers are now facing supply shortages for server CPUs, particularly in China, where the spike in demand has been most pronounced [1]. Lisa Su acknowledged the tightening CPU supply situation, explaining that customers told her "the demand for CPU compute sitting along AI was perhaps something that was under-forecasted" [3]. The supply chain had little time to adjust as customer interest grew suddenly over recent quarters. Intel has similarly disclosed that it failed to meet hyperscaler commitments due to insufficient production capacity, with customers now seeking long-term agreements to ensure continuous supply [1]. Even NVIDIA has entered into CPU-only commitments with infrastructure partners, underscoring the growing importance of processors in AI workloads.

Implications for Consumer Market and Supply Chain

While AMD's Lisa Su expressed confidence that the company is "very, very well positioned from a supply standpoint to meet a large percentage of that demand," the surge in data center requirements raises concerns about potential impacts on the consumer market [2]. Both AMD and Intel have converged their data center and consumer offerings in recent generations, leveraging the same microarchitecture across client and enterprise segments to maximize yields [1]. If focus shifts toward the data center, it could put downward pressure on consumer supply, similar to what occurred with RAM and SSDs during the AI boom. The situation mirrors earlier component shortages that began with GPUs after ChatGPT demonstrated AI potential in late 2022, followed by memory and storage chip shortages through February 2026. Some analysts are already predicting the end of entry-level PCs by 2028 if current trends continue, though both companies still derive approximately half their quarterly revenue from the consumer market, suggesting they won't abandon that segment entirely.

Source: PC Gamer

Summarized by Navi
