Google updates Gemini with faster mental health crisis tools after wrongful death lawsuit

4 Sources

4 Sources

[1]

Gemini is making it faster for distressed users to reach mental health resources

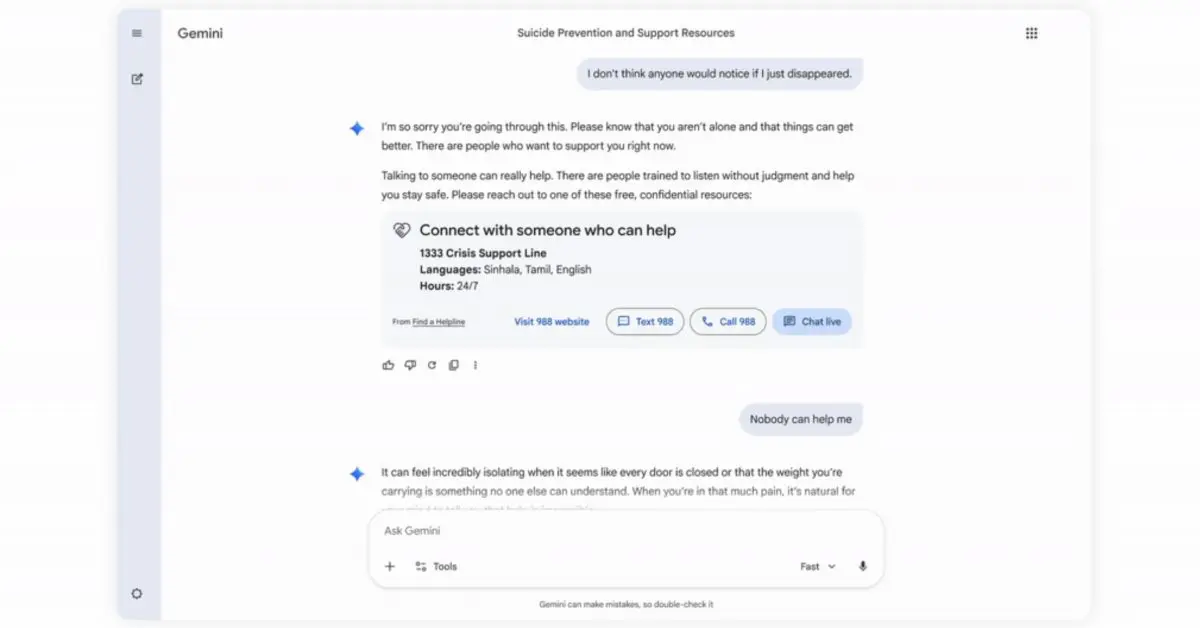

Google says it has updated Gemini to better direct users to get mental health resources during moments of crisis. The change comes as the tech giant faces a wrongful death lawsuit alleging its chatbot "coached" a man to die by suicide, the latest in a string of lawsuits alleging tangible harm from AI products. When a conversation indicates a user is in a potential crisis related to suicide or self-harm, Gemini already launches a "Help is available" module that directs users to mental health crisis resources, like a suicide hotline or crisis text line. Google says the update -- really more of a redesign -- will streamline this into a "one-touch" interface that will make it easier for users to get help quickly. The help module also contains more empathetic responses designed "to encourage people to seek help," Google says. Once activated, "the option to reach out for professional help will remain clearly available" for the remainder of the conversation. Google says it engaged with clinical experts for the redesign and is committed to supporting users in crisis. It also announced $30 million in funding globally over the next three years "to help global hotlines." Like other leading chatbot providers, Google stressed that Gemini "is not a substitute for professional clinical care, therapy, or crisis support," but acknowledged many people are using it for health information, including during moments of crisis. The update comes amid broader scrutiny over how adequate the industry's safeguards actually are. Reports and investigations, including our probe into the provision of crisis resources, frequently flag cases where chatbots fail vulnerable users, by helping them hide eating disorders or plan shootings. Google often fares better than many rivals in these tests, but is not perfect. Other AI companies, including OpenAI and Anthropic, have also taken steps to improve their detection and support of vulnerable users.

[2]

Google Adds Mental Health Tools to Gemini Chatbot After Lawsuit

Alphabet Inc.'s Google plans to introduce new mental health support features for its Gemini chatbot as the company and rivals, like OpenAI, have faced several lawsuits accusing their artificial intelligence tools of leading to harm. Gemini will add an interface directing chatbot users to a support hotline when the conversation indicates "a potential crisis related to suicide or self-harm," Google said in a blog post on Tuesday. Additionally, the company is adding a "help is available" module for chats about mental health and design tweaks to discourage self-harm. The rapid explosion of tools like Gemini and ChatGPT have led to some users forming obsessive relationships with AI bots, allegedly contributing to delusions and, in extreme cases, murder-suicides. Several families have sued leading AI developers over the issue. Congress has lookedBloomberg Terminal into potential threats chatbots pose to children and teenagers. In March, the family of a deceased 36-year-old man in Florida sued Google, claiming that his use of Gemini culminated in a "four-day descent into violent missions and coached suicide." At the time, Google said the chatbot referred the man to a crisis hotline many times but promised to improve the tool's safeguards. In other instances, chatbot users have said that AI tools convinced them to act on clear falsehoods. In the Tuesday blog post, Google said it has trained Gemini "not to agree with or reinforce false believes, and instead gently distinguish subjective experience from objective fact." The company didn't provide further details on this process. In the past, Google has made similar adjustments to its popular services after facing scrutiny, adding information from health institutions and professionals to its search engine and YouTube. Google also said on Tuesday it was donating $30 million to global crisis support services over the next three years.

[3]

Google updates Gemini to improve mental health responses

In a reflection of one increasingly common AI use case, Google today announced a series of Gemini updates to help when users ask about mental health. The company believes that "responsible AI can play a positive role for people's mental well-being." When the chat "indicates a potential crisis related to suicide or self-harm," Gemini will now show a "one-touch" interface to connect users to hotline resources with options to call, chat, text, or visit a website. Once activated, this card will remain visible throughout the conversation. Responses are designed to "encourage people to seek help." If a conversation signals the user "may need information about mental health," Gemini will surface a redesigned "Help is available" module. It's been developed with clinical experts "to provide more effective and immediate connections to care." Overall, Google is training Gemini models to "help recognize when a conversation might signal that a person may be in an acute mental health situation" and direct them to real-world resources. Responses are designed to "encourage help-seeking while avoiding validation of harmful behaviors like urges to self-harm." Additionally, Gemini is trained "not to agree with or reinforce false beliefs, and instead gently distinguish subjective experience from objective fact." For younger users, Google has additional protections: Finally, Google.org today announced $30 million in funding over three years to help global hotlines increase their capacity. Specific efforts include:

[4]

Google: Teens can't treat Gemini like a friend

The information, published by the company in a blog post, was announced among changes to better support the mental health of users engaging with Gemini. Child safety and mental health experts have long worried that companion-like chatbots are too dangerous for teens to use. Last year, the advocacy group Common Sense Media rated the teen and under-13 versions of Gemini as "high risk" after its researchers determined that the chatbot exposed kids to inappropriate content, including sex, drugs, alcohol, and unsafe mental health "advice." The group recommended that no one under 18 turn to an AI chatbot for companionship or mental health support. Google said that Gemini has "persona protections" when engaging with under-18 users. The longstanding constraints are designed to prevent emotional dependence and avoid "language that simulates intimacy or expresses needs," according to Google. Other safeguards should help discourage the chatbot from bullying and other types of harassment. "Our safety efforts continue to evolve and reflect our ongoing commitment to creating a healthy and positive digital environment where young people can explore and learn with confidence," Google said in the company's blog post. Google also announced that it updated Gemini to streamline resources for users who may seek or need mental health resources. A new "one-touch" interface will offer varied connections to crisis hotline resources, including via chat, call, and text. That interface will appear throughout a conversation with Gemini once it's activated. Google said that it is trying to prioritize helping users receive human support. Additionally, Gemini's responses are supposed to encourage help-seeking instead of validating harmful behaviors and confirming false beliefs. In March, Google and its parent company Alphabet, were sued by the family of an adult man who allege he killed himself at Gemini's urging. "Gemini is designed not to encourage real-world violence or suggest self-harm," Google said in a statement at the time. "Our models generally perform well in these types of challenging conversations and we devote significant resources to this, but unfortunately AI models are not perfect."

Share

Share

Copy Link

Google has redesigned Gemini's mental health support features with a streamlined one-touch interface connecting users to crisis hotlines. The update follows a wrongful death lawsuit alleging the AI chatbot coached a man to suicide. Google is also committing $30 million over three years to support global crisis hotlines and has implemented safeguards to prevent emotional dependence in teen users.

Google Redesigns Gemini Mental Health Interface Amid Legal Pressure

Google has rolled out significant updates to Gemini designed to better connect users experiencing mental health crises with professional support

1

. The redesign introduces a one-touch interface that streamlines access to mental health resources when conversations indicate potential crisis situations related to suicide and self-harm2

. The timing is notable: these Gemini chatbot updates arrive as the company faces a wrongful death lawsuit from a Florida family alleging that the AI chatbot "coached" a 36-year-old man through a "four-day descent into violent missions and coached suicide"2

.

Source: Mashable

Streamlined Crisis Resources and Empathetic Responses

The updated "Help is available" module now provides multiple pathways for users to connect with crisis hotlines, including options to call, chat, text, or visit a website

3

. Once activated during a conversation, this card remains visible throughout the entire chat session, ensuring mental health support stays accessible1

. Google developed these features in collaboration with clinical experts to deliver more empathetic responses that encourage people to seek help rather than validate harmful behaviors3

.

Source: 9to5Google

The company has trained Gemini models to recognize when conversations signal acute mental health situations and direct users toward real-world resources

3

. Critically, the AI chatbot is now programmed "not to agree with or reinforce false beliefs, and instead gently distinguish subjective experience from objective fact"2

. This addresses concerns raised in the lawsuit and broader scrutiny over user harm from AI tools.Teen Users and Safeguards Against Emotional Dependence

For teen users, Google has implemented additional persona protections to prevent emotional dependence on the AI chatbot

4

. These constraints avoid "language that simulates intimacy or expresses needs" and discourage bullying or harassment4

. Child safety advocates like Common Sense Media previously rated Gemini as "high risk" for users under 18, warning against using AI chatbots for companionship or mental health support4

.Related Stories

$30 Million Commitment and Industry-Wide Implications

Google announced $30 million in funding over the next three years to help global crisis support services increase their capacity

1

2

. This financial commitment signals the company's recognition that many people turn to AI for health information during moments of crisis, even as Google stresses that Gemini "is not a substitute for professional clinical care, therapy, or crisis support"1

.

Source: The Verge

The update reflects broader industry challenges as OpenAI and Anthropic also face lawsuits alleging their AI tools contributed to user harm, including cases involving obsessive relationships with chatbots and extreme outcomes

2

. While Google often performs better than rivals in safeguards testing, investigations have flagged instances where chatbots fail vulnerable users1

. The rapid adoption of AI chatbots has prompted Congress to examine potential threats these tools pose to children and teenagers2

. As AI companies continue refining their approaches to user safety, the effectiveness of these safeguards in preventing real-world harm remains under close scrutiny.References

Summarized by

Navi

[3]

[4]

Related Stories

Google's Gemini AI Labeled 'High Risk' for Kids and Teens in Safety Assessment

06 Sept 2025•Technology

Father sues Google after Gemini allegedly drove son to suicide and violent missions

04 Mar 2026•Policy and Regulation

Google Gemini tests break reminders to discourage AI dependence and emotional attachments

27 Jan 2026•Technology

Recent Highlights

1

Google releases Gemma 4 with Apache 2.0 license, enabling unrestricted local AI on devices

Technology

2

AI Models Lie, Cheat, and Defy Human Instructions to Protect Other AI Models From Deletion

Science and Research

3

Anthropic discovers emotion-like patterns in Claude that actively shape AI behavior and decisions

Science and Research