Anthropic secures 3.5 gigawatts of Google TPUs as revenue soars to $30 billion run rate

22 Sources

[1]

Anthropic ups compute deal with Google and Broadcom amid skyrocketing demand | TechCrunch

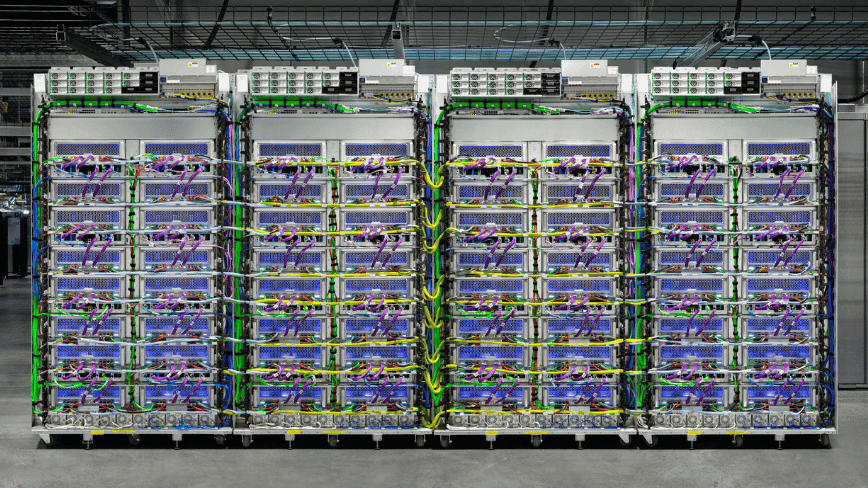

AI research lab Anthropic announced Monday that it signed a new agreement with Google and Broadcom for increased processing and compute capacity to power its Claude AI models. This reworking of its compute deals comes as demand for its AI models continues to soar. The deal would expand Anthropic's use of Google Cloud's tensor processing units, or TPUs, the company's advanced AI chips, and builds on the deal the companies struck in October 2025 for more than a gigawatt of compute capacity. This new compute capacity will come online in 2027, Anthropic said in a blog post. The company did not give specifics for its compute expansion, but a recent Broadcom SEC filing shows the deal includes 3.5 gigawatts of compute. The majority of this compute will be housed in the U.S. and will be an extension of the company's $50 billion commitment to invest in U.S. compute infrastructure, Anthropic said in the post. "This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," Krishna Rao, CFO of Anthropic, said in the press release. "We are making our most significant compute commitment to date to keep pace with our unprecedented growth." Anthropic did not respond to TechCrunch's request for comment. The company has seen demand for its Claude models explode in recent months, buoyed by enterprise customers and despite the U.S. Defense Department's labeling of Anthropic as a supply chain risk. Anthropic also recently closed a $30 billion Series G funding round that valued the company at $380 billion. The company's run rate revenue is now $30 billion, the company announced, marking a drastic jump from the $9 billion the company recorded at the end of 2025.
Anthropic also has more than 1,000 business customers spending more than $1 million on an annualized basis.

[2]

Broadcom to supply Anthropic with 3.5 gigawatts of Google TPU capacity from 2027 -- Claude pioneer says its annual revenue run rate has passed $30 billion

Securities filing confirms multi-year supply agreement runs through 2031. Broadcom disclosed in a securities filing on Monday that it'll supply Anthropic with roughly 3.5 gigawatts of Google TPU capacity starting in 2027, and separately committed to designing and supplying future generations of Google's TPUs through 2031. This new Anthropic capacity is in addition to the 1 GW already coming online in 2026 under the Google Cloud agreement announced last October, and the filing states that Anthropic's use of the expanded capacity is contingent on its continued commercial performance. The Monday filing covers two linked arrangements. The first is a supply assurance agreement under which Broadcom will provide networking and other components for Google's next-generation AI racks through 2031. The second is the expanded three-way collaboration with Anthropic, which routes Google-designed TPUs to the AI company via Broadcom as part of the multi-gigawatt commitment Anthropic has made for next-generation TPU-based compute. The vast majority of the new infrastructure will be located in the United States, extending the $50 billion American AI infrastructure commitment Anthropic made in November 2025. Anthropic said in a blog post that its annualized revenue run rate has now passed $30 billion, up from around $9 billion at the end of 2025, and that more than 1,000 business customers are spending over $1 million a year on its services, double the figure from February. "This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base," Krishna Rao, Anthropic's chief financial officer, said in the blog post. 
Amazon Web Services remains Anthropic's primary cloud and training partner under Project Rainier, the Trainium 2-based supercluster in Indiana, and the new Google-Broadcom capacity sits alongside that arrangement rather than replacing it. Google, of course, owns both the TPU architecture and software stack, with Broadcom acting as the silicon implementation partner, converting Google's architecture into a manufacturable ASIC layout while supplying high-speed SerDes, power management, and packaging. TSMC handles fabrication. The same division of labor underpins Broadcom's separate $10 billion custom silicon program with OpenAI, announced as a 10 GW co-development effort last October, which makes Broadcom the implementation layer for two of the three largest U.S. frontier model developers. Analysts at Mizuho, led by Vijay Rakesh, estimated that Broadcom would record $21 billion in AI revenue from Anthropic in 2026 and $42 billion in 2027, figures Mizuho published in a note following Broadcom's March earnings call, though the SEC filing didn't contain any specific amounts. Both Anthropic and OpenAI continue to draw heavily on Nvidia GPUs through cloud providers including AWS, Google Cloud, and Microsoft Azure, and OpenAI has separately committed to 6GW of AMD GPU capacity, with the first gigawatt expected in the second half of this year.

[3]

Anthropic reveals $30bn run rate, plan to use new Google TPU

Broadcom's building the silicon and is chuffed about that, but also notes Anthropic remains a risk. Broadcom has announced that Google has asked it to build next-generation AI and datacenter networking chips, and that Anthropic plans to consume 3.5GW worth of the accelerators it delivers to the ads and search giant. News of the two deals emerged today in a Broadcom regulatory filing that opens with two items of news. One is a "Long Term Agreement for Broadcom to develop and supply custom Tensor Processing Units ("TPUs") for Google's future generations of TPUs." Google and Broadcom have collaborated to produce custom TPUs. Broadcom CEO Hock Tan recently shared his opinion that hyperscalers don't have the skill to create custom accelerators and predicted Broadcom's chip business will therefore win over $100 billion of revenue from AI chips in 2027 alone. Working on next-gen TPUs for Google will presumably help to make that prediction a reality. So will the second part of Broadcom's announcement: a "Supply Assurance Agreement for Broadcom to supply networking and other components to be used in Google's next-generation AI racks through up to 2031." Broadcom's filing also revealed one user of Google's next-gen TPU will be Anthropic, which starting in 2027, "will access through Broadcom approximately 3.5 gigawatts as part of the multiple gigawatts of next generation TPU-based AI compute capacity committed by Anthropic." The filing includes the following notable statement: "The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic's continued commercial success. In connection with this deployment, the parties are in discussions with certain operational and financial partners." That sounds an awful lot like Broadcom putting on the record that the financial arrangements that will make it possible to deploy 3.5GW worth of custom TPUs for Anthropic represent sufficient risk that the company needs to put it on the record in a regulatory filing. In its announcement about the deal, Anthropic seemingly tries to reassure markets about its financial affairs by revealing that "Our run-rate revenue has now surpassed $30 billion -- up from approximately $9 billion at the end of 2025."
"When we announced our Series G fundraising in February, we shared that over 500 business customers were each spending over $1 million on an annualized basis," Anthropic wrote. "Today that number exceeds 1,000, doubling in less than two months." Yet Broadcom still worries about the AI upstart. Google's take on the announcements points out that in addition to renting TPUs, Anthropic is a big Google Cloud customer. Anthropic pointed out that it also uses AWS's Trainium AI chips, plus Nvidia kit, so it can "match workloads to the chips best suited for them."

[4]

Exclusive: Anthropic weighs building its own AI chips, sources say

SAN FRANCISCO, April 9 (Reuters) - Artificial intelligence lab Anthropic is exploring the possibility of designing its own chips, three sources said, as the company and its rivals respond to a shortage of AI chips needed to power and develop more advanced AI systems. The plans are in early stages and the company may still decide to only buy AI chips and not design any, according to two people with knowledge of the matter and one person briefed on Anthropic's plans. The company has yet to commit to a specific design or put together a dedicated team to work on the project, one of the sources said. A spokesperson for the San Francisco-based company declined to comment on the article. Demand for its AI model Claude has accelerated in 2026, with the startup's run-rate revenue now surpassing $30 billion, up from about $9 billion at the end of 2025, Anthropic said earlier this week. Anthropic uses a range of chips, including tensor processing units (TPUs) designed by Alphabet's (GOOGL.O) Google and Amazon's (AMZN.O) chips, to develop and run its AI software and chatbot Claude. Earlier this week, Anthropic signed a long-term deal with Google and Broadcom (AVGO.O), which helps design the TPUs. That deal builds on the company's commitment to invest $50 billion in strengthening U.S. computing infrastructure. Anthropic's discussions mirror similar efforts underway at large tech companies that are seeking to design their own AI chips, including Meta (META.O) and OpenAI. Designing an advanced AI chip can cost roughly half a billion dollars, according to industry sources, as companies need to employ skilled engineers and spend to make sure the manufacturing process has no defects. Reporting by Max A. Cherney and Deepa Seetharaman in San Francisco; Editing by Sayantani Ghosh and Aurora Ellis

[5]

Anthropic strikes chips deal with Google and Broadcom

Anthropic will spend hundreds of billions of dollars on Google's chips and cloud services in a push to secure critical computing resources as surging demand for the company's tools pushes its annualised revenue to $30bn. The AI lab said on Monday it has committed to use "multiple gigawatts" of capacity from Google's TPU, a rival chip to Nvidia's dominant GPU, and the search giant's cloud services. Around 3.5GW of capacity on Google's hardware will come through a partnership with chipmaker Broadcom, starting from next year, according to a separate filing on Monday. In all, the deal would give Anthropic access to close to 5GW in new computing capacity over the coming years, according to a person with knowledge of the terms. The hardware and infrastructure required to develop a single gigawatt of capacity -- roughly equivalent to the power output of a nuclear reactor -- is estimated to cost from $35bn-50bn, with the bulk of that spent on chips. That suggests the lossmaking start-up's commitment could run to hundreds of billions of dollars. Anthropic executives are racing to secure enormous supplies of computing power in order to meet rapidly growing demand for the company's tools, particularly coding agent Claude Code, and to fund costly model training. The San Francisco-based group's annualised revenue has shot from $9bn at the end of last year to $30bn at the end of March, Anthropic said on Monday. The figure represents its revenues from the past 28 days extrapolated over a year. "We are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, Anthropic's chief financial officer. Broadcom shares rose almost 3 per cent after the market closed on Monday. The company also announced that it would develop and supply custom TPUs for Google as part of a long-term agreement through 2031. 
Google is seeking to expand sales of its in-house chips, which have helped power its own Gemini AI models, bringing it into increasingly direct competition with Nvidia, the world's largest semiconductor group. Anthropic's rival OpenAI last year struck a string of computing deals with Broadcom, Nvidia, AMD and others, in a push to lock in as much capacity as possible to power its own AI tools. The deals have been criticised for their circularity, with Big Tech groups acting as customers, suppliers and investors in the AI labs. Google has invested billions into Anthropic, giving it a 14 per cent stake as of March last year, according to a legal filing. Both companies have faced scrutiny for their heavy outlay, having repeatedly returned to venture capital and sovereign wealth backers to raise tens of billions of dollars on the promise that they can become profitable if they build sufficient scale and market dominance. Anthropic raised $30bn in February in a deal valuing it at $380bn, including the new money. In its filing on Monday, Broadcom said the deal was "dependent on Anthropic's continued commercial success" and that the parties "are in discussions with certain operational and financial partners". Monday's deal expands on a partnership Anthropic announced with Google last October, which it said at the time was "worth tens of billions of dollars and is expected to bring well over a gigawatt of capacity online in 2026". In November, Anthropic also committed to spend $50bn on new data centres in Texas and New York with cloud computing group Fluidstack, and agreed to purchase $30bn of additional capacity from Microsoft and Nvidia.
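
The cost arithmetic in the piece above can be reproduced in a few lines. The sketch below is illustrative only: it multiplies the article's estimated $35bn-50bn per gigawatt by the two capacity figures mentioned (about 3.5GW via Broadcom and close to 5GW overall), and shows how 28 days of revenue might be extrapolated to an annual run rate. The function names and the simple 365/28 scaling are assumptions made for illustration; no formula is published in the source, and the 28-day revenue figure is back-solved from the reported $30bn rather than disclosed.

```python
# Illustrative arithmetic only; function names are hypothetical.
COST_PER_GW_LOW_BN = 35.0   # article's estimate, low end, $bn per gigawatt
COST_PER_GW_HIGH_BN = 50.0  # article's estimate, high end, $bn per gigawatt


def capex_range_bn(gigawatts: float) -> tuple[float, float]:
    """Return the (low, high) build-out cost in $bn for a given capacity."""
    return gigawatts * COST_PER_GW_LOW_BN, gigawatts * COST_PER_GW_HIGH_BN


def annualised_run_rate_bn(revenue_28d_bn: float, days_in_year: int = 365) -> float:
    """Extrapolate 28 days of revenue to a full year (assumed simple scaling)."""
    return revenue_28d_bn * days_in_year / 28


if __name__ == "__main__":
    for label, gw in [("Broadcom tranche", 3.5), ("Total new capacity", 5.0)]:
        low, high = capex_range_bn(gw)
        print(f"{label}: {gw} GW -> ${low:.1f}bn to ${high:.1f}bn")

    # Back-solve the 28-day revenue implied by a $30bn run rate (not a disclosed figure).
    implied_28d = 30.0 * 28 / 365
    print(f"Implied 28-day revenue: ${implied_28d:.2f}bn")
```

On these assumptions, close to 5GW of new capacity implies roughly $175bn to $250bn of hardware and infrastructure, which is consistent with the "hundreds of billions of dollars" framing.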

[6]

Anthropic is exploring building its own AI chips

The plans are early-stage and Anthropic may still decide to only buy chips rather than design them. The exploration comes days after the company signed a long-term deal with Google and Broadcom for 3.5 gigawatts of TPU compute starting in 2027. A company spokesperson declined to comment. Anthropic is exploring the possibility of designing its own AI chips, Reuters reported on Thursday, citing three sources familiar with the matter. The effort is at an early stage: the company has not committed to a specific design and has not assembled a dedicated team for the project. It may still decide to continue purchasing chips from third parties rather than building its own. A spokesperson for the San Francisco-based company declined to comment on the report. The exploration comes as Anthropic's revenue has accelerated sharply. The company disclosed earlier this week that its annualised revenue run rate has surpassed $30 billion, up from approximately $9 billion at the end of 2025. That trajectory has created a scale of compute demand that makes the economics of custom silicon increasingly worth examining. Anthropic currently runs Claude across a mix of chips: tensor processing units designed by Alphabet's Google, in partnership with Broadcom, alongside Amazon's custom chips and Nvidia hardware. The company said it matches workloads to whichever chips are best suited for them. Just days before the Reuters report, Anthropic signed a long-term deal with Google and Broadcom that will give it access to approximately 3.5 gigawatts of TPU-based compute capacity from 2027, more than three times the roughly one gigawatt it was consuming earlier in 2026, according to Broadcom's SEC filing. The filing flagged that the expanded deployment is contingent on Anthropic's continued commercial success, an unusual hedge for a regulatory document. The deal builds on Anthropic's November 2025 commitment to invest $50 billion in US computing infrastructure.
Broadcom is also already a chip design partner for OpenAI, and has a fifth undisclosed XPU customer, placing it at the centre of the custom AI silicon market that is emerging as an alternative to Nvidia's general-purpose GPUs. The possibility of Anthropic developing proprietary silicon mirrors moves already underway elsewhere in the industry. Meta has been building its own AI training chips, and OpenAI has been working on custom silicon as well. Industry sources cited by Reuters put the development cost of an advanced AI chip at roughly $500 million, reflecting the need to hire specialised engineers and validate the manufacturing process. That figure is not trivial for a company that remains, for now, unprofitable, but it is more manageable against a run-rate revenue base that has more than tripled in just over three months.
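
To put that closing comparison in numbers, here is a rough back-of-the-envelope sketch using only figures quoted above ($9 billion and $30 billion run rates, and a roughly $500 million chip design cost). The helper names are hypothetical, and setting a one-off design cost against annualised revenue is a simplification for illustration, not a method attributed to Reuters or Anthropic.

```python
# Back-of-the-envelope ratios; helper names are hypothetical.

def growth_multiple(start_bn: float, end_bn: float) -> float:
    """How many times larger the ending run rate is than the starting one."""
    return end_bn / start_bn


def share_of_run_rate(cost_bn: float, run_rate_bn: float) -> float:
    """One-off cost expressed as a percentage of annualised revenue."""
    return 100.0 * cost_bn / run_rate_bn


if __name__ == "__main__":
    multiple = growth_multiple(9.0, 30.0)      # end-2025 vs April 2026 run rate
    chip_share = share_of_run_rate(0.5, 30.0)  # ~$500m design cost vs $30bn
    print(f"Run-rate growth: {multiple:.2f}x")
    print(f"Chip design cost as share of run rate: {chip_share:.2f}%")
```

The design cost comes out at under 2 per cent of the stated run rate, though run-rate revenue is not profit, so the ratio only indicates scale.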

[7]

Anthropic signs biggest compute deal yet with Google and Broadcom as run rate hits $30bn | TNW

In short: Anthropic has agreed to access approximately 3.5 gigawatts of next-generation Google TPU compute capacity via Broadcom from 2027, its largest infrastructure commitment to date -- while simultaneously disclosing that its revenue run rate has surpassed $30bn, more than tripling from roughly $9bn at the end of 2025. Anthropic has announced it is securing multiple gigawatts of next-generation compute capacity through a new agreement with Google and Broadcom, while disclosing revenue growth figures that underscore why the AI lab now requires infrastructure at a scale that would have seemed implausible two years ago. The deal, announced on 6 April 2026, gives Anthropic access to approximately 3.5 gigawatts of Google tensor processing unit (TPU) capacity via Broadcom starting in 2027, building on the 1 gigawatt already being supplied to the company in 2026. Krishna Rao, Anthropic's chief financial officer, described it as "our most significant compute commitment to date," adding that the agreement represents a continuation of the company's "disciplined approach to scaling infrastructure." The majority of the new capacity will be located in the United States, extending Anthropic's November 2025 commitment to invest $50bn in American AI computing infrastructure. The announcement is as much about Broadcom as it is about Anthropic or Google. Under the new arrangement, Broadcom acts as the intermediary layer between Google's custom silicon and Anthropic's training and inference workloads. In parallel, Broadcom has signed a separate long-term agreement with Google to design and supply future generations of custom TPU chips, and a supply assurance agreement to provide networking and other components for Google's next-generation AI data racks through 2031. This makes Broadcom an increasingly indispensable node in the AI infrastructure graph. 
The chipmaker, led by CEO Hock Tan, is not building AI models; it is building the silicon and the interconnects on which AI models are built. Broadcom shares rose approximately 3% in extended trading on the announcement, a reaction that reflects investor appetite for companies positioned at the physical layer of the AI stack rather than the application layer on top of it. Analysts at Mizuho, led by Vijay Rakesh, estimated that Broadcom would record $21bn in AI revenue from Anthropic in 2026 alone, rising to $42bn in 2027, figures that, even as projections, illustrate the financial weight of what is being committed. Broadcom had first signalled the scale of its Anthropic relationship in September 2025, when Hock Tan disclosed during an earnings call that a mystery customer had placed a $10bn order for custom TPU racks. In December 2025, he confirmed the customer was Anthropic, and that an additional $11bn order had since followed. The April 2026 announcement is the third act of the same story: a partnership that has now graduated from a reported $21bn commitment to multi-gigawatt infrastructure with a defined delivery timeline. The compute deal is intelligible only against the backdrop of Anthropic's commercial growth. The company says its run-rate revenue has now exceeded $30bn, up from approximately $9bn at the end of 2025. That trajectory, more than a threefold increase in roughly three months, is the result of a compounding enterprise sales motion that accelerated sharply after Anthropic closed its Series G funding round on 12 February 2026. That round raised $30bn at a post-money valuation of $380bn, led by GIC and Coatue, and co-led by D.E. Shaw Ventures, Dragoneer, Founders Fund, ICONIQ, and MGX. When the Series G closed, Anthropic reported that more than 500 business customers were each spending over $1m on an annualised basis. As of the April announcement, that number has exceeded 1,000, doubling in less than two months.
The pace of enterprise adoption is the proximate cause of the compute expansion: more revenue requires more inference capacity, more inference capacity requires more training compute, and more training compute requires more gigawatts. What distinguishes Anthropic's infrastructure approach from many of its peers is an explicit multi-vendor chip strategy. Claude is trained and served across three hardware platforms: Amazon's Trainium chips, Google's TPUs, and Nvidia GPUs. Anthropic says Claude is the only frontier model available on all three major cloud platforms, AWS, Google Cloud, and Microsoft Azure, a claim that carries commercial as well as technical significance. The multi-vendor stance gives Anthropic both resilience and negotiating leverage. If capacity is constrained on any single platform, workloads can shift. If one chipmaker faces supply disruption, export controls, or pricing pressure, Anthropic is not exposed to the full force of that shock. The strategy has precedent: Microsoft's own AI models reflect a similar instinct to hedge against single-vendor dependence, though in Microsoft's case the hedge is against a partner rather than a hardware supplier. The AWS relationship remains foundational. In late 2024, Anthropic named Amazon its primary cloud and training partner, with total Amazon investment reaching $8bn. Project Rainier, an Anthropic supercomputer cluster running roughly 500,000 Amazon Trainium 2 chips in Indiana, is expected to scale beyond one million Trainium 2 chips by the end of 2025. The Google relationship, which now extends through the new Broadcom deal to multi-gigawatt scale in 2027, sits alongside this rather than replacing it. 
The April deal is framed explicitly as an extension of Anthropic's November 2025 domestic infrastructure pledge: a $50bn commitment to American AI computing infrastructure, developed initially in partnership with Fluidstack, the UK-based neocloud operator, with data centre sites in Texas and New York coming online through 2026. The new Broadcom capacity, the majority of which will be US-based, expands that footprint into 2027 and beyond. This domestic emphasis is not incidental. The Trump administration's AI Action Plan has explicitly targeted US-based compute capacity as a strategic priority, and Anthropic, like its peers, has positioned its infrastructure investments accordingly. Whether that alignment reflects sincere strategic conviction or tactical regulatory positioning -- or both -- the practical effect is the same: a substantial share of the world's next-generation AI training capacity is being locked into American geography. The Anthropic-Google-Broadcom announcement is a data point in a pattern that has been building for 18 months. SoftBank's $40bn bridge loan to fund its OpenAI commitment reflected the same underlying dynamic: AI labs have grown so fast that their compute requirements now exceed what can be financed from revenue alone, requiring financial engineering at a scale once reserved for infrastructure utilities. Meta's $27bn infrastructure deal with Nebius reflects a parallel logic at the hyperscaler level. The compute arms race is also reshaping how AI companies manage their relationships with the services built on top of their models. Anthropic has been attentive to this: the company recently moved to restrict access to Claude via certain third-party frameworks, a decision that illustrated how the cost dynamics of frontier model inference are forcing AI labs to make difficult choices about which use cases they subsidise and which they price explicitly. 
For Broadcom, the trajectory is simpler: a chipmaker that was not widely discussed in the context of AI two years ago is now a load-bearing element of the infrastructure on which two of the world's most consequential AI models, Google's Gemini and Anthropic's Claude, are built and served. That position, cemented through 2031 for Google's custom silicon and through the new multi-gigawatt agreement for Anthropic's TPU access, is the real story beneath the headline numbers. Nvidia remains the dominant force in AI accelerators, and its enterprise AI platform continues to expand its reach. But Broadcom's rise as the custom silicon partner of choice for hyperscale AI compute is one of the defining semiconductor industry shifts of this decade.

[8]

Anthropic Google Broadcom TPU deal 3.5 gigawatts and $30 billion revenue

Anthropic has signed an agreement with Google and Broadcom for approximately 3.5 gigawatts of computing capacity built on Google's tensor processing units, with the capacity expected to come online starting in 2027, the company said. The deal expands an existing relationship. Broadcom CEO Hock Tan said during an earnings call last month that Broadcom was providing one gigawatt of Google TPU compute for Anthropic in 2026, according to CNBC. Broadcom helps Google design its TPUs. In a securities filing Monday, Broadcom said Google and Broadcom have also entered a long-term supply assurance agreement running through 2031, according to Bloomberg. In tandem with the compute news, Anthropic said its revenue run rate has now crossed $30 billion on an annualized basis -- more than three times the roughly $9 billion figure it recorded at the close of 2025. Enterprise traction has also accelerated: the number of clients committing at least $1 million a year has surpassed 1,000, a threshold Anthropic said is twice what it was reporting around the time of its Series G announcement in February. "We are making our most significant compute commitment to date to keep pace with our unprecedented growth," Anthropic CFO Krishna Rao said in a statement. Anthropic said the majority of the new infrastructure will be built on U.S. soil, framing the commitment as a continuation of a pledge made in November 2025 to direct $50 billion toward domestic computing capacity. According to Monday's securities filing, Broadcom flagged that Anthropic's ability to draw on the additional compute hinges on its ongoing commercial performance, and noted that discussions with outside operational and financial partners are underway to support the rollout. Anthropic trains and runs its Claude models across multiple hardware platforms -- including AWS Trainium, Google TPUs, and Nvidia GPUs -- and describes Amazon Web Services as its primary cloud and training partner.
Claude is available on AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure Foundry. The revenue growth comes as Anthropic navigates a legal dispute with the Pentagon, which designated the company a supply-chain risk after a standoff over AI safety guardrails. Anthropic has warned the label could cost it billions in lost revenue. Still, the company's annualized revenue has more than tripled in the months since that dispute became public, driven in part by demand for its Claude Code developer tools and broader enterprise adoption. Broadcom's shares gained as much as 3.6% in after-hours trading following the filing's release, according to Bloomberg. No financial terms were attached to the agreement. A post-earnings research note from Mizuho analysts put Broadcom's prospective AI revenue from Anthropic at $21 billion for 2026 and $42 billion for 2027, per CNBC.

[9]

Anthropic reportedly mulls designing own chips amid shortage

Claude-creator Anthropic is considering designing its own chips, as advanced AI systems drive a shortage, sources told Reuters. Anthropic continues to grab the headlines this week, as it fights the US administration in the courts, and the power of its unreleased Claude Mythos model strikes fear into the hearts of much of the industry, given its ability to exploit security vulnerabilities. Now Reuters is citing sources that say Anthropic is looking closely at the possibility of building its own chips, amid industry concerns that the supply of sophisticated chips required for new AI systems from itself and its competitors may not keep pace. Rivals Meta and OpenAI already have such projects underway. Earlier this week, Anthropic announced a new expanded agreement that will allow Anthropic to tap 3.5GW of Google's tensor processing unit (TPU) capacity from Broadcom. In a regulatory filing on 6 April, Broadcom said that Anthropic's consumption of TPU capacity is dependent on its continued commercial success. The multi-gigawatt capacity is expected to come online in 2027. Last October, Anthropic and Google announced a deal worth "tens of billions of dollars" for 1 million of Google's TPUs. The deal is expected to bring more than 1GW of AI compute capacity online for Anthropic this year. The new agreement deepens that relationship, Anthropic said. Broadcom said that it is in a long-term agreement with Google to develop and supply custom TPUs. Anthropic already has multibillion-dollar deals for compute capacity with companies such as Nvidia and Microsoft. It runs Claude on a range of AI hardware, including Amazon Web Services' Trainium, Google TPUs and Nvidia GPUs. Amazon is Anthropic's primary cloud provider and training partner. Anthropic said that a vast majority of the new compute will be situated in the US, expanding on its $50bn commitment to strengthening the country's computing infrastructure. Demand for Anthropic's AI tools has accelerated in 2026.
Recent data shows that Anthropic is now capturing more than 73pc of all spending among companies buying AI tools for the first time, while its rival OpenAI is down to around 27pc. According to the company, revenue run rate has already surpassed $30bn, up from around $9bn at the end of 2025. More than 1,000 of Anthropic's business customers spend more than $1m on an annualised basis, doubling in less than two months, it added. Given the growing fight for compute power, and the well reported chips shortage, it would not be a surprise for Anthropic to look into the albeit extremely costly business of designing its own chips, but the sources admitted that no project team has yet been set up, and plans have not yet been set in place.

[10]

Anthropic taps Google and Broadcom for yet more AI chips as revenue run rate tops $30B - SiliconANGLE

Anthropic PBC said today its annual revenue run rate has now exceeded $30 billion, up from just $9 billion at the end of last year, after confirming an expanded partnership with Google LLC and Broadcom Inc. to power its artificial intelligence models. The company said it has seen accelerating demand for its Claude services this year. Now, more than 1,000 business customers are spending at least $1 million on its AI tools each year, up from just 500 at the end of February. The acceleration suggests that Anthropic's growth has not been stymied as much as feared by its ongoing dispute with the U.S. government. The company is currently caught up in a legal battle with the White House after military chiefs at the Pentagon decided to classify it as a "supply chain risk" following a standoff over its safety guardrails. Anthropic has previously said that the designation could see it lose billions of dollars in revenue from enterprise customers that do business with the U.S. military. Last week, it told a San Francisco judge that the government's decision prompted more than 100 businesses to contact it to express doubt over their ability to continue working with it. However, Anthropic Chief Commercial Officer Paul Smith last week told Bloomberg in an interview that some customers respected that the company "demonstrates its principles," despite the blow to its revenue. In any case, Anthropic appears confident that it's going to continue growing its business and require more computing resources in future. The expanded partnership with Google and Broadcom may also suggest that it's confident the dispute will ultimately be resolved. In a blog post today, Anthropic said the agreement will ensure it has the "capacity necessary to serve the remarkable growth we have seen in our customer base."
Broadcom is the primary developer of Google's tensor processing units or TPUs, which are an alternative processor technology to Nvidia Corp.'s graphics processing units that are more efficient at inference workloads. Broadcom has a multiyear deal with Google to manufacture the TPUs on its behalf that runs until 2031, according to a regulatory filing published on Monday. Their collaboration with Anthropic will provide the AI startup with access to around 3.5 gigawatts of computing power, starting in 2027. "The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic's continued commercial success," Broadcom said. "In connection with this deployment, the parties are in discussions with certain operational and financial partners." The news sent Broadcom's stock up more than 3.5% in late trading on Monday after it was announced. The chipmaker's chief executive officer Hock Tan discussed the partnership with Anthropic last month during an earnings call, where he also revealed that he expects AI chip sales to generate more than $100 billion in revenue next year. Google originally developed its TPUs to power Google Search, but soon realized that they're extremely effective at running AI software, enabling it to provide customers with an alternative to Nvidia's GPUs.

[11]

Anthropic may develop its own AI chips

Anthropic is exploring the design of its own AI chips amid a shortage of necessary components for advanced AI development, according to Reuters. The company's plans are in early stages, and it may still opt to continue purchasing chips rather than developing them in-house. Anthropic has not yet committed to a specific chip design or assembled a dedicated team for this initiative, according to sources. Demand for its AI model Claude has surged in 2026, with run-rate revenue exceeding $30 billion, a significant increase from approximately $9 billion at the end of 2025. The company employs a variety of chips, including tensor processing units (TPUs) from Google and chips from Amazon, to support its AI software and chatbot Claude. Recently, Anthropic entered a long-term agreement with Google and Broadcom to address its chip requirements and infrastructure needs. This agreement aligns with Anthropic's broader strategy to invest $50 billion in enhancing U.S. computing infrastructure. Similar initiatives are underway at major tech companies like Meta and OpenAI, reflecting a trend toward developing proprietary AI chips. Industry sources estimate that designing an advanced AI chip can cost around half a billion dollars, necessitating skilled engineers and thorough manufacturing processes to mitigate defects.

[12]

Anthropic, Google, Broadcom announce 3.5GW TPU deal

Revenue rate has surpassed $30bn at Anthropic, up from around $9bn at the end of 2025. A new expanded agreement will allow Anthropic to tap 3.5GW of Google's Tensor Processing Units (TPU) capacity from Broadcom. In a regulatory filing yesterday (6 April), Broadcom said that Anthropic's consumption of TPU capacity is dependent on its continued commercial success. The multi-gigawatt capacity is expected to come online starting in 2027. Last October, Anthropic and Google announced a deal worth "tens of billions of dollars" for 1m of Google's TPUs. The deal is expected to bring more than 1GW of AI compute capacity online for Anthropic this year. The new agreement deepens that relationship, Anthropic said. Broadcom said that it is in a long-term agreement with Google to develop and supply custom TPUs. Anthropic already has multibillion-dollar deals for compute capacity with companies such as Nvidia and Microsoft. It runs Claude on a range of AI hardware, including Amazon Web Services' Trainium, Google TPUs, and Nvidia GPUs. Amazon is Anthropic's primary cloud provider and training partner. Anthropic said that a vast majority of the new compute will be situated in the US, expanding on its $50bn commitment to strengthening the country's computing infrastructure. Demand for Anthropic's AI tools has accelerated in 2026. Recent data shows that Anthropic is now capturing more than 73pc of all spending among companies buying AI tools for the first time, while its rival OpenAI is down to around 27pc. According to the company, revenue rate has already surpassed $30bn, up from around $9bn at the end of 2025. More than 1,000 of Anthropic's business customers spend more than $1m on an annualised basis, doubling in less than two months, it added. "We are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, the chief financial officer at Anthropic. 
"We are making our most significant compute commitment to date to keep pace with our unprecedented growth." Anthropic raised $30bn in a Series G round led by Coatue Management and Singapore's GIC in February. The last raise values the company at $380bn.

[13]

Anthropic, Google, Broadcom strike 3.5GW TPU deal

Anthropic's revenue rate has exceeded $30 billion, a significant rise from approximately $9 billion at the end of 2025. This increase accompanies a newly expanded agreement allowing Anthropic to utilize 3.5 gigawatts (GW) of Google's Tensor Processing Units (TPU) capacity from Broadcom. A regulatory filing from Broadcom indicated that Anthropic's TPU capacity usage will depend on its ongoing commercial success. This multi-gigawatt capacity is anticipated to start coming online in 2027. The expanded agreement is expected to strengthen the partnership between Anthropic and Google. In October, the companies announced a deal valued in the "tens of billions of dollars" for 1 million TPUs. This initial agreement is projected to deliver over 1 GW of AI compute capacity for Anthropic by the end of 2026. Broadcom confirmed its long-term agreement with Google for the development and supply of custom TPUs. Anthropic has also secured multibillion-dollar agreements for computing capacity with Nvidia and Microsoft. The company operates its AI model, Claude, on various platforms, including Amazon Web Services' Trainium, Google TPUs, and Nvidia GPUs, with Amazon identified as its primary cloud provider and training partner. The majority of the newly acquired compute capacity is expected to be based in the United States, further enhancing Anthropic's $50 billion investment aimed at bolstering the country's computing infrastructure. This strategic move follows a surge in demand for Anthropic's AI tools in 2026, with the company capturing 73% of first-time AI tool spending, compared to OpenAI's decline to 27%. Currently, more than 1,000 of Anthropic's business clients are spending over $1 million annually, with customer numbers doubling in under two months. 
Krishna Rao, Anthropic's Chief Financial Officer, stated, "We are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development." Rao further noted, "We are making our most significant compute commitment to date to keep pace with our unprecedented growth." In February, Anthropic raised $30 billion in a Series G funding round led by Coatue Management and Singapore's GIC, valuing the company at $380 billion.

[14]

Anthropic tops $30 billion run rate, seals deal with Broadcom - The Economic Times

Anthropic PBC said its revenue run rate has now topped $30 billion, up from $9 billion at the end of 2025, and confirmed plans to work with Broadcom and Google to power its burgeoning operations. The AI startup said that demand for its Claude services has accelerated this year, with more than 1,000 business customers spending over $1 million on an annual basis. That figure has more than doubled since February. The collaboration with Broadcom and Google, which was first announced last month, will help Anthropic build "the capacity necessary to serve the remarkable growth we have seen in our customer base," chief financial officer Krishna Rao said in a statement. The annual run rate -- a popular benchmark among tech startups -- extrapolates the current sales level over a full year. The latest numbers suggest that a high-profile dispute with the US government hasn't stymied growth. Anthropic is waging a legal fight over the Pentagon's decision to declare the company a supply-chain risk following a standoff over AI safety guardrails. Anthropic has warned that the labeling could cost it billions in lost revenue, and an attorney for the company recently told a judge in San Francisco that the federal government's actions led to more than 100 enterprise customers contacting the company to express doubt about continuing their work with Anthropic. Still, some customers respect that Anthropic "demonstrates its principles" in its dealings with the US government, Paul Smith, Anthropic's chief commercial officer, said in an interview last week. Broadcom is developing chips using Google's tensor processing units, or TPUs, offering an alternative to technology from Nvidia. Broadcom and Alphabet's Google have entered a long-term agreement to provide the chips and a supply assurance pact that runs through 2031, according to a Broadcom filing Monday. 
The three companies also are expanding a strategic collaboration that will let Anthropic access about 3.5 gigawatts' worth of computing power. That will begin in 2027. "The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic's continued commercial success. In connection with this deployment, the parties are in discussions with certain operational and financial partners," Broadcom said in the filing. Broadcom shares climbed as much as 3.6% in late trading after the filing was announced. The company's chief executive officer, Hock Tan, previously discussed the collaboration during an earnings call last month. He also said Broadcom expects its AI chip sales to top $100 billion next year, making it a bigger competitor to Nvidia. Google's TPUs were originally designed to speed up its ubiquitous search engine, but have become useful at creating and running AI software. Broadcom takes Google's specifications and creates fully-formed designs that can then be sent for manufacturing.
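The run-rate definition quoted in this article (current sales extrapolated over a full year) can be made concrete with a small sketch. It assumes the extrapolation is done from a single month of revenue, which none of the articles confirm; the function names are hypothetical and the only inputs are the $9 billion and $30 billion figures reported above.

```python
def annual_run_rate(monthly_revenue_bn: float) -> float:
    """Extrapolate one month of revenue (in $bn) over twelve months.

    Assumption: a monthly basis. The articles do not say which period
    Anthropic actually extrapolates from.
    """
    return monthly_revenue_bn * 12


def percent_increase(old: float, new: float) -> float:
    """Percentage increase from old to new."""
    return (new - old) / old * 100


# Monthly revenue implied by the reported run rates, under the monthly assumption.
implied_monthly_end_2025 = 9 / 12   # $bn per month
implied_monthly_now = 30 / 12       # $bn per month

print(f"Implied monthly revenue, end of 2025: ${implied_monthly_end_2025:.2f}bn")
print(f"Implied monthly revenue, now: ${implied_monthly_now:.2f}bn")
print(f"Growth multiple: {30 / 9:.2f}x")
print(f"Percentage increase: {percent_increase(9, 30):.1f}%")
```

On these figures the run rate has more than tripled, which corresponds to an increase of roughly 233 percent.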

[15]

Anthropic Eyes Custom AI Chips Amid Global Shortage As Claude Demand Surges Past $30 Billion Run Rate: Re

Claude-parent Anthropic is reportedly in the preliminary stages of evaluating in-house chip development.

Early-Stage Chip Ambitions

The effort remains exploratory, with no formal commitment, finalized design, or dedicated team in place, Reuters reported on Thursday, citing people familiar with the matter. The company could still opt to continue purchasing chips rather than building its own, the report said. Anthropic did not immediately respond to Benzinga's request for comments. The development comes as the company and its competitors grapple with a shortage of AI chips required to power and advance next-generation AI systems.

AI Boom Fuels Urgency

The discussions come amid accelerating demand for Anthropic's Claude models. The company earlier this week said its annualized revenue run rate has surged past $30 billion, up from roughly $9 billion at the end of 2025. Meanwhile, Anthropic is currently embroiled in a legal controversy with the Pentagon. Earlier this week, a federal appeals court in Washington, D.C., declined to temporarily block the Pentagon's decision to label Anthropic a national security risk. The Donald Trump administration classified the company as a supply-chain concern after it refused to ease safeguards on its Claude chatbot for uses such as surveillance or autonomous weapons.

Reliance On Big Tech Hardware

Industry-Wide Shift Toward Custom Silicon

Anthropic's deliberations mirror a broader trend across the AI sector. Designing advanced AI chips is both complex and costly, with estimates suggesting development can run as high as $500 million, the report said. Meta stock ranks in the 89th percentile for Quality in Benzinga Edge Ratings, but is currently trending downward across short, medium and long-term timeframes.

Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.

[16]

Broadcom Signs Anthropic, Google TPU 'Groundbreaking' Deals To Drive AI Capacity

'Anthropic, beginning in 2027, will access through Broadcom approximately 3.5 gigawatts as part of the multiple gigawatts of next-generation TPU-based AI compute capacity committed by Anthropic,' says Broadcom in a government filing. Broadcom is making huge moves in the AI market as the $77 billion tech giant signs deals with Google and Anthropic around developing and supplying Tensor Processing Units (TPUs) as well as supplying networking components. Broadcom, Anthropic and Google signed a deal for approximately 3.5 gigawatts of next-generation TPU capacity starting in 2027. "Anthropic, beginning in 2027, will access through Broadcom approximately 3.5 gigawatts as part of the multiple gigawatts of next-generation TPU-based AI compute capacity committed by Anthropic," said Broadcom in a recent filing with the U.S. Securities and Exchange Commission. [Related: The 20 Hottest AI Cloud Companies: The 2026 CRN AI 100] The compute capacity will draw on Google's TPUs and come online in 2027 as Anthropic's annual run rate has more than tripled since late 2025. "The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic's continued commercial success," Broadcom said. Anthropic said this week that the AI startup's annual revenue run rate has now crossed $30 billion. This is more than triple the roughly $9 billion Anthropic recorded at the close of 2025, an increase of about 233 percent. "We are making our most significant compute commitment to date to keep pace with our unprecedented growth," said Krishna Rao, CFO of Anthropic, in a blog post. "This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: We are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," Rao said. Claude is Anthropic's most popular AI model family, initially released in 2023. 
The vast majority of the new compute infrastructure will be built in the U.S., Anthropic said. Separately, Broadcom and Google also entered into a long-term agreement "for Broadcom to develop and supply custom TPUs for Google's future generations of TPUs," said Broadcom in its filing. The two tech giants also entered a "Supply Assurance Agreement for Broadcom to supply networking and other components to be used in Google's next-generation AI racks through up to 2031," Broadcom said. Broadcom CEO Hock Tan recently spoke about the company's growing partnership with Google during its financial earnings report in March. "For Google, we continue our trajectory of growth in 2026 with strong demand for the seventh-generation iNode TPU. In 2027 and beyond, we expect to see even stronger demand from next generations of TPU," Tan said. In late 2025, Broadcom also created a new partnership with Anthropic rival OpenAI around custom silicon innovation for AI. Both AI model companies rely on GPUs from Nvidia through giant cloud providers like Google, AWS and Microsoft. In fact, many rivals are both competing and partnering with each other as the AI market booms. Anthropic, for its part, said customer needs are front and center when it comes to interoperability and cost optimization. "We train and run Claude on a range of AI hardware -- AWS Trainium, Google TPUs, and Nvidia GPUs -- which means we can match workloads to the chips best suited for them," said Anthropic in a statement regarding its new Broadcom and Google deal. "This diversity of platforms translates to better performance and greater resilience for customers who depend on Claude for critical work," said Anthropic. "Claude remains the only frontier AI model available to customers on all three of the world's largest cloud platforms: Amazon Web Services (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry)."

[17]

Anthropic Locks Multi-Gigawatt Compute Deal with Google and Broadcom Amid Surging AI Demand

The expansion represents one of Anthropic's largest compute commitments to date and underscores the intensifying competition among leading AI developers to secure long-term, high-performance infrastructure at scale.

Massive expansion of frontier AI compute capacity

Under the new agreement, Anthropic will access multi-gigawatt-scale TPU infrastructure developed in collaboration with Google and Broadcom. The capacity is expected to come online progressively starting in 2027 and will significantly increase the company's ability to train and deploy frontier Claude models. According to the company, the majority of the new infrastructure will be located in the United States, aligning with its broader strategy to strengthen domestic AI compute capabilities. This expansion builds on Anthropic's earlier commitment, announced in November 2025, to invest $50 billion in US-based computing infrastructure.

[18]

Anthropic signs AI compute deal with Google and Broadcom

Revenue growth accelerates: The deal marks a significant expansion of Anthropic's infrastructure as demand for its Claude models increases. The company said its annualised revenue run rate (ARR) has crossed $30 billion in 2026, up from about $9 billion at the end of 2025. It also reported that more than 1,000 enterprise customers are now spending over $1 million annually, doubling in under two months. This growth places Anthropic ahead of rival OpenAI on reported annualised revenue. A Reuters report last month said OpenAI had crossed $25 billion in annualised revenue as of early 2026, highlighting the rapid expansion and intensifying competition in the enterprise AI market. ARR metrics under scrutiny: The surge in reported ARR across AI firms has also drawn scrutiny. Recent debates around startups like Emergent have raised questions about how "run rate" revenue is calculated, especially when based on short-term usage or token consumption rather than long-term contracts, making comparisons across companies less straightforward. Rising costs reshape pricing: At the same time, changes in pricing models point to rising infrastructure costs. Anthropic has begun charging separately for tools like OpenClaw, citing the high compute load from agent-based tasks, signalling a shift away from flat subscriptions as usage becomes more resource-intensive. "This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, CFO of Anthropic. Most of the new compute capacity will be located in the United States, extending the company's earlier commitment to invest $50 billion in US-based AI infrastructure. 
Anthropic currently relies on a mix of hardware platforms, including chips from Amazon Web Services, Google TPUs, and NVIDIA GPUs. The company said this multi-platform strategy helps optimise performance and reduce dependency on a single supplier. Despite the expanded partnership with Google, Amazon remains Anthropic's primary cloud and training partner, with ongoing collaboration on Project Rainier. The company said its Claude models are available across all three major cloud platforms, Amazon Web Services, Google Cloud, and Microsoft Azure.

[19]

Anthropic secures 3.5 GW AI Compute Deal with Google and Broadcom as Revenue Hits $30 Billion

The artificial intelligence (AI) race is entering a new phase of infrastructure growth, as Anthropic announced a long-term collaboration with Google and Broadcom to secure next-generation AI compute capacity. The deal coincides with Anthropic's annualized revenue run rate exceeding $30 billion amid growing global demand for its Claude models. Anthropic secured around 3.5 gigawatts of compute capacity based on the Tensor Processing Unit (TPU), which is expected to come online by 2027. Training and deployment of Anthropic's next-generation AI systems will be powered by these Google-designed TPUs and by Broadcom's hardware supply. The size of this transaction underscores the increasing value of compute as a competitive asset in the AI industry. As AI models continue to grow in complexity, high-performance infrastructure is now as significant a bottleneck as algorithmic innovation. Broadcom independently confirmed a long-term partnership with Google to produce future generations of TPUs, solidifying its status in the AI semiconductor ecosystem while ensuring a steady hardware supply for hyperscale AI workloads.

[20]

Anthropic weighs building its own AI chips- Reuters By Investing.com

Investing.com-- Anthropic is considering designing its own artificial intelligence chips amid a shortage of processors needed to power advanced AI, Reuters reported on Thursday. The plans are still in early stages, and Anthropic may still decide to only buy AI chips rather than design them, Reuters reported, citing people with knowledge of the matter. Anthropic, which is backed by several major tech firms, including Alphabet and Amazon, uses a host of different chips to run its flagship AI model Claude. The company had earlier this week signed a long-term deal with Google (NASDAQ:GOOGL) and Broadcom Inc (NASDAQ:AVGO) for AI chips, specifically Google's Tensor Processing Units. Anthropic's consideration of building in-house AI chips comes amid similar discussions at other major tech firms, including Meta Platforms Inc (NASDAQ:META) and OpenAI.

[21]

Anthropic weighs building its own AI chips, sources say

SAN FRANCISCO, April 9 (Reuters) - Artificial intelligence lab Anthropic is exploring the possibility of designing its own chips, three sources said, as the company and its rivals respond to a shortage of AI chips needed to power and develop more advanced AI systems. The plans are in early stages and the company may still decide to only buy AI chips and not design any, according to two people with knowledge of the matter and one person briefed on Anthropic's plans. The company has yet to commit to a specific design or put together a dedicated team to work on the project, one of the sources said. A spokesperson for the San Francisco-based company declined to comment on the article. Demand for its AI model Claude has accelerated in 2026, with the startup's run-rate revenue now surpassing $30 billion, up from about $9 billion at the end of 2025, Anthropic said earlier this week. Anthropic uses a range of chips, including tensor processing units (TPUs) designed by Alphabet's Google and Amazon's chips to develop and run its AI software and chatbot Claude. Earlier this week, Anthropic signed a long-term deal with Google and Broadcom, which helps design the TPUs. That deal builds on the company's commitment to invest $50 billion in strengthening U.S. computing infrastructure. Anthropic's discussions mirror similar efforts underway at large tech companies that are seeking to design their own AI chips, including Meta and OpenAI. Designing an advanced AI chip can cost roughly half a billion dollars, according to industry sources, as companies need to employ skilled engineers and spend to make sure the manufacturing process has no defects. (Reporting by Max A. Cherney and Deepa Seetharaman in San Francisco; Editing by Sayantani Ghosh and Aurora Ellis)

[22]

Anthropic may build its own AI chips amid rising demand and supply crunch

In-house chip plans are still early-stage with no final design or dedicated team yet

Anthropic is again making headlines, this time for something new. The AI startup is reportedly considering designing its own AI chips, aiming to reduce its dependence on third-party hardware providers and address growing shortages. This comes at a time when demand for high-performance AI infrastructure continues to surge globally. According to a report by Reuters and The Information, the company is evaluating the feasibility of developing in-house chips, although the effort is still in its early stages with no final design or dedicated engineering team in place yet. At present, Anthropic relies on a mix of hardware from big players like Nvidia, Google and Broadcom. It also uses cloud-based chips such as Amazon's Trainium and Inferentia processors, along with Google's tensor processing units (TPUs), for training and running its AI models, including the Claude chatbot. However, as AI adoption grows, access to such resources is getting difficult. Anthropic has already partnered with Google and Broadcom to co-develop the TPU infrastructure to expand its AI computing capacity in the United States. While the company is expanding its strategic partnerships, the in-house chip effort shows the pressure on AI firms to secure dedicated resources. For established chipmakers like Nvidia, this could mean a gradual shift as customers look to reduce reliance on external suppliers. According to the reports, industry estimates suggest that development costs can exceed $500 million. The company will also need specialised talent and long timelines for design, testing and large-scale manufacturing. For now, these ambitions remain exploratory.

Anthropic expanded its compute agreement with Google and Broadcom, securing 3.5 gigawatts of tensor processing unit capacity starting in 2027. The deal reflects explosive demand for Claude AI models, with the company's annualized revenue jumping from $9 billion to $30 billion in just three months. More than 1,000 business customers now spend over $1 million annually on Anthropic's services.

Anthropic Expands Google and Broadcom Deal to Secure Massive AI Compute Capacity

Anthropic announced Monday it has signed an expanded agreement with Google and Broadcom to secure approximately 3.5 gigawatts of additional AI compute capacity, marking the company's most significant infrastructure commitment to date [1]. The new capacity, which will come online in 2027, builds on the company's October 2025 deal for more than a gigawatt of compute and extends Anthropic's $50 billion commitment to invest in U.S. AI infrastructure [2]. The majority of this infrastructure will be housed in the United States, positioning Anthropic to meet what CFO Krishna Rao described as "exponential growth" in the company's customer base while enabling Claude to "define the frontier of AI development" [1].

Source: DT

The deal gives Anthropic access to Google Cloud TPUs, or Tensor Processing Units, Google's advanced AI chips that compete directly with Nvidia's dominant GPUs in the semiconductor industry [5]. According to Broadcom's securities filing, the multi-year supply agreement runs through 2031, with Broadcom providing networking and other components for Google's next-generation AI racks [2]. The filing notably states that Anthropic's use of the expanded capacity is contingent on its continued commercial performance, suggesting Broadcom views the arrangement as carrying financial risk [3].

Revenue Surge Drives Infrastructure Expansion Amid Soaring AI Demand

The infrastructure expansion comes as Anthropic's annualized revenue run rate has surpassed $30 billion, up dramatically from approximately $9 billion at the end of 2025 [1]. This represents a more than threefold increase in just three months, driven largely by enterprise adoption of Claude AI models. The company now serves more than 1,000 business customers spending over $1 million annually, double the figure from February, when Anthropic closed its $30 billion Series G funding round that valued the company at $380 billion [2].

The hardware and infrastructure required to develop a single gigawatt of capacity costs an estimated $35 billion to $50 billion, with the bulk spent on chips [5]. This suggests Anthropic's total commitment to Google and Broadcom could run into hundreds of billions of dollars as the company races to secure computing resources for both AI model training and serving customer demand. In all, the deal would give Anthropic access to close to 5 gigawatts in new computing capacity over the coming years, according to sources familiar with the terms [5].
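As a rough check on that estimate, the per-gigawatt cost range can be multiplied out against the two capacity figures mentioned in this summary. The sketch below assumes cost scales linearly with capacity, which the sources do not state, and the function name is hypothetical; the inputs are only the $35 billion to $50 billion per gigawatt range and the 3.5 GW and roughly 5 GW figures.

```python
# Per-gigawatt hardware and infrastructure cost range cited above, in $bn.
COST_PER_GW_LOW_BN = 35
COST_PER_GW_HIGH_BN = 50


def implied_cost_range_bn(gigawatts: float) -> tuple[float, float]:
    """Return (low, high) implied cost in $bn for a given capacity.

    Assumption: cost scales linearly with gigawatts. This is a
    back-of-envelope bound, not a figure from any filing.
    """
    return gigawatts * COST_PER_GW_LOW_BN, gigawatts * COST_PER_GW_HIGH_BN


for label, gigawatts in [("3.5 GW (Broadcom filing)", 3.5),
                         ("about 5 GW (sources familiar with the terms)", 5.0)]:
    low, high = implied_cost_range_bn(gigawatts)
    print(f"{label}: ${low:.1f}bn to ${high:.1f}bn")
```

Under that linear assumption, 3.5 gigawatts implies about $122.5 billion to $175 billion, and 5 gigawatts about $175 billion to $250 billion.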

Source: The Next Web

Multi-Cloud Strategy and Potential Move to Design Its Own AI Chips

Despite the expanded Google partnership, Amazon Web Services remains Anthropic's primary cloud and training partner under Project Rainier, the Trainium 2-based supercluster in Indiana [2]. The new Google-Broadcom capacity sits alongside that arrangement rather than replacing it, as Anthropic continues to draw heavily on Nvidia GPUs through cloud services including AWS, Google Cloud, and Microsoft Azure [2]. This multi-cloud approach allows Anthropic to "match workloads to the chips best suited for them" [3].

In a development that could reshape its hardware strategy, Anthropic is exploring the possibility of designing its own AI chips, according to three sources cited by Reuters [4]. The plans remain in early stages and the company has yet to commit to a specific design or assemble a dedicated team, with Anthropic potentially deciding to only purchase AI chips rather than design them [4]. These discussions mirror similar efforts at Meta and OpenAI, though designing an advanced AI chip can cost roughly half a billion dollars as companies need skilled engineers and must ensure the manufacturing process has no defects [4].

Industry Implications and Competitive Landscape

The Anthropic deal represents a significant win for Broadcom, which acts as the silicon implementation partner for Google, converting the search giant's TPU architecture into manufacturable ASIC layouts while supplying high-speed SerDes, power management, and packaging [2]. TSMC handles fabrication, while Google owns both the TPU architecture and software stack. Broadcom shares rose almost 3 percent after markets closed following the announcement [5]. The same division of labor underpins Broadcom's separate $10 billion custom silicon program with OpenAI, announced as a 10 gigawatt co-development effort last October, making Broadcom the implementation layer for two of the three largest U.S. frontier model developers [2].

Source: Tom's Hardware

Analysts at Mizuho estimated that Broadcom would record $21 billion in AI revenue from Anthropic in 2026 and $42 billion in 2027, though the SEC filing didn't contain specific amounts [2]. These massive compute deals have drawn scrutiny for their circularity, with Big Tech groups like Google acting simultaneously as customers, suppliers, and investors in AI labs [5]. Google has invested billions into Anthropic, giving it a 14 percent stake as of March last year. The arrangements reflect an industry-wide scramble to secure AI chip capacity amid an ongoing AI chip shortage, with OpenAI having struck similar computing deals with Broadcom, Nvidia, AMD and others to lock in as much capacity as possible [5].

References

Summarized by

Navi

[1]

[2]

[3]
