Grammarly faces class action lawsuit as journalist challenges identity-stealing AI feature

13 Sources

[1]

Grammarly Is Facing a Class Action Lawsuit Over Its AI 'Expert Review' Feature

Julia Angwin, an award-winning investigative journalist who founded The Markup, a nonprofit news organization that covers the impact of technology on society, is the only named plaintiff in the suit, which does not call for a specific amount in damages but argues that damages across the plaintiff class are in excess of $5 million. She was among the many individuals, alongside Stephen King and Neil deGrasse Tyson, offered up via Grammarly's "Expert Review" tool as a kind of virtual editor for users. The federal suit, filed Wednesday afternoon in the Southern District of New York, states that Angwin, on behalf of herself and others similarly situated, "challenges Grammarly's misappropriation of the names and identities of hundreds of journalists, authors, writers, and editors to earn profits for Grammarly and its owner, Superhuman." The complaint comes as Superhuman has already decided to discontinue the feature amid significant public backlash. "After careful consideration, we have decided to disable Expert Review as we reimagine the feature to make it more useful for users, while giving experts real control over how they want to be represented -- or not represented at all," said Ailian Gan, Superhuman's Director Product Management, Agents, in a statement to WIRED shortly before the claim was filed. "We built the agent to help users tap into the insights of thought leaders and experts, and to give experts new ways to share their knowledge and reach new audiences. Based on the feedback we've received, we clearly missed the mark. We are sorry and will do things differently going forward." As WIRED reported earlier this month, Superhuman last year added a suite of AI-powered widgets to the platform, including one that purported to have a veteran writer (living or dead) weigh in with a critique of the user's text. 
While a disclaimer clarified that none of the people cited had endorsed or directly participated in the development of this tool, which leveraged an underlying large language model, various writers, including WIRED journalists, expressed frustration over Grammarly invoking their likenesses and apparently regurgitating their life's work with these AI agents. Angwin's attorney Peter Romer-Friedman says that longstanding laws in New York and California, where Superhuman is based, clearly prohibit the commercial use of a person's name and likeness without their permission. "Legally, we think it's a pretty straightforward case," he tells WIRED. "More broadly, one of the reasons why we're filing this case is, you know, we can see what's happening in our society: that lots of professionals who spend years, or in Julia's case, decades, honing a skill or a trade, then see that their name or their skills are being appropriated by others without their consent." As a New York Times opinion writer, Angwin has written extensively about how Silicon Valley giants have eroded privacy in the 21st century. "Contrary to the apparent belief of some tech companies, it is unlawful to appropriate peoples' names and identities for commercial purposes, whether those people are famous or not," the lawsuit states. "Through this action, Ms. Angwin seeks to stop Grammarly and its owner, Superhuman, from trading on her name and those of hundreds of other journalists, authors, editors, and even lawyers, and to stop Grammarly from attributing words to them that they never uttered and advice that they never gave."

[2]

Grammarly Is Offering 'Expert' AI Reviews From Your Favorite Authors -- Dead or Alive

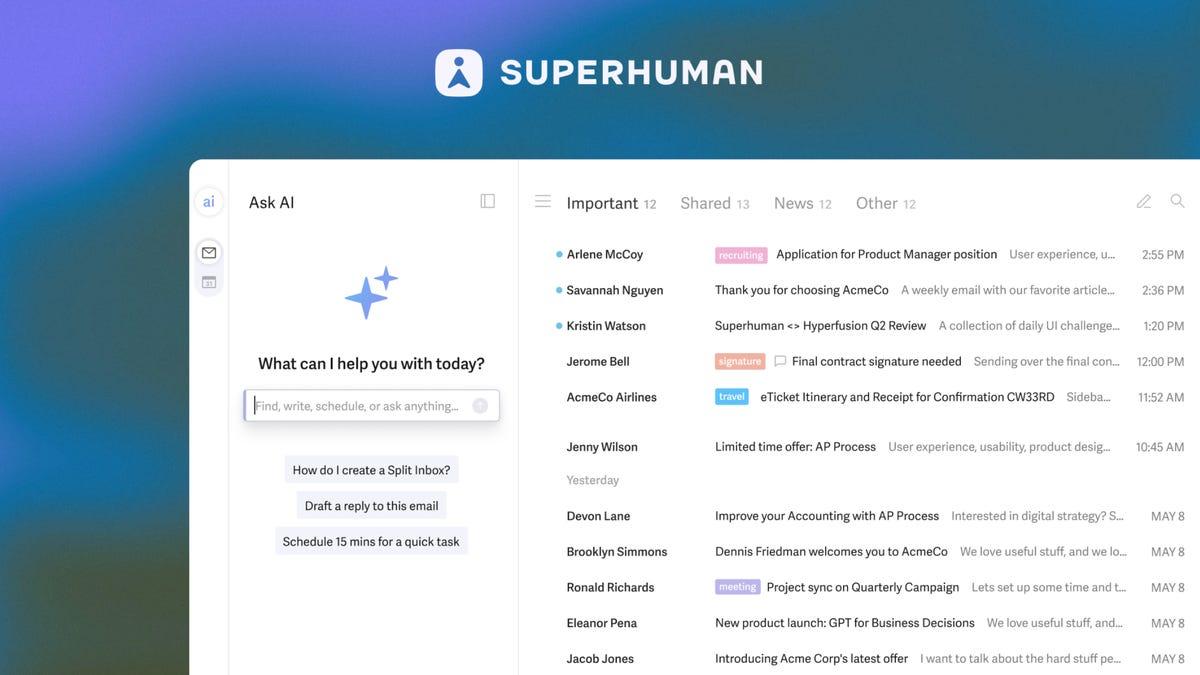

Do you have fond memories of being a teacher's pet? Wish you could still get notes from your favorite college professor? Dream about some implacable voice of authority correcting your every word choice and punctuation mark? Well, great news: a certain software company has engineered a way to simulate criticism not just from bestselling authors and famous academics of our time, but also many who died decades ago -- and they evidently didn't need permission from anybody to do it. Once relied upon only to proofread for correct grammar and spelling, the writing tool Grammarly has added a host of generative AI features over the past several years. In October, CEO Shishir Mehrotra announced that the overall company was rebranding as "Superhuman" to reflect a new suite of AI-powered products. However, the AI writing "partner" remains called Grammarly. "When technology works everywhere, it starts to feel ordinary," Mehrotra wrote in his press release. "And that usually means something extraordinary is happening under the hood." The expanded Grammarly platform now offers an AI solution for every imaginable need -- and some you've probably never had. There's an AI chatbot that will answer specific questions as you compose a draft, a "paraphraser" feature that suggests changes in style, a "humanizer" that revises according to a selected voice, an AI grader that predicts how your document would score as college coursework, and even tools for flagging and tweaking phrases commonly produced by large language models. (Sure, you're using AI to do everything here, but you don't want it to sound like that.) Perhaps most insidiously, however, Grammarly now has an "expert review" option that, instead of producing what looks like a generic critique from a nameless LLM, lists a number of real academics and authors available to weigh in on your text. To be clear: those people have nothing to do with this process. 
As a disclaimer clarifies: "References to experts in this product are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities." As advertised on a support page, Grammarly users can solicit tips from virtual versions of living writers and scholars such as Stephen King and Neil deGrasse Tyson (neither of whom responded to a request for comment) as well as the deceased, like the editor William Zinsser and astronomer Carl Sagan. Presumably, these different AI agents are trained on the oeuvres of the people they are meant to imitate, though the legality of this content-harvesting remains murky at best, and the subject of many, many copyright lawsuits. "Our Expert Review agent examines the writing a user is working on, whether it's a marketing brief or a student project on biodiversity, and leverages our underlying LLM to surface expert content that can help the document's author shape their work," said Jen Dakin, senior communication manager at Superhuman. "The suggested experts depend on the substance of the writing being evaluated. The Expert Review agent doesn't claim endorsement or direct participation from those experts; it provides suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply." Someone like King may see the advance of AI as unstoppable, and there may be nobody left to defend Zinsser's 1976 handbook On Writing Well from the big tech vultures, but what of the countless other luminaries who still want to keep their material from being compressed into an algorithm? Vanessa Heggie, an associate professor of the history of science and medicine at the University of Birmingham, recently took to LinkedIn to share an especially grim example of how the feature works, accusing Superhuman of "creating little LLMs" based on the "scraped work" of the living and dead alike, trading on "their names and reputations."
The screenshot she posted showed the availability of analysis from an AI agent modeled on David Abulafia, an English historian of the medieval and Renaissance periods who died in January. "Obscene," Heggie wrote.

[3]

One of Grammarly's 'experts' is suing the company over its identity-stealing AI feature

For months, Grammarly has been using the identities of real people (including us) for its "Expert Review" AI suggestions without getting their permission, and now it's facing a lawsuit from one of the journalists included, as previously reported by Wired. The class-action complaint filed by journalist Julia Angwin on Wednesday alleges that Superhuman violated the "experts'" privacy and publicity rights by breaking laws against using someone's identity for commercial purposes without their consent. Angwin says she found out her identity was used by way of Casey Newton, who is also one of the experts that The Verge uncovered being used by Grammarly when we tested the feature this week. Several current Verge staff members popped up attached to Grammarly's AI-generated suggestions, too, including editor in chief Nilay Patel. Superhuman announced earlier Wednesday that it's disabling the feature, after initially launching an email inbox earlier this week where writers and academics could ask to opt out. CEO Shishir Mehrotra says that "the agent was designed to help users discover influential perspectives and scholarship relevant to their work, while also providing meaningful ways for experts to build deeper relationships with their fans. We hear the feedback and recognize we fell short on this. I want to apologize and acknowledge that we'll rethink our approach going forward."

[4]

Grammarly says it will stop using AI to clone experts without permission

Superhuman CEO Shishir Mehrotra: Back in August, we launched a Grammarly agent called Expert Review. The agent draws on publicly available information from third-party LLMs to surface writing suggestions inspired by the published work of influential voices. Over the past week, we received valid critical feedback from experts who are concerned that the agent misrepresented their voices. This kind of scrutiny improves our products, and we take it seriously. As context, the agent was designed to help users discover influential perspectives and scholarship relevant to their work, while also providing meaningful ways for experts to build deeper relationships with their fans. We hear the feedback and recognize we fell short on this. I want to apologize and acknowledge that we'll rethink our approach going forward. After careful consideration, we have decided to disable Expert Review while we reimagine the feature to make it more useful for users, while giving experts real control over how they want to be represented -- or not represented at all. We deeply believe in our mission to solve the "last mile of AI" by bringing AI directly to where people work, and we see this as a significant opportunity for experts. For millions of users, Grammarly is a trusted writing sidekick -- ever-present in every application, ready to help. We're opening up this platform so anyone can build agents that work like Grammarly -- expanding from one sidekick to a whole team. Imagine your professor sharpening your essay, your sales leader reshaping a customer pitch, a thoughtful critic challenging your arguments, or a leading expert elevating your proposal. For experts, this is a chance to build that same ubiquitous bond with users, much like Grammarly has. But in this world, experts choose to participate, shape how their knowledge is represented, and control their business model. That future excites me, and I hope to build it with experts who want to develop it alongside us.

[5]

Grammarly has disabled its tool offering generative-AI feedback credited to real writers

Superhuman has taken its writing assistant Grammarly on quite the merry-go-round ride regarding its approach to AI tools. In August, the company launched a feature called Expert Review that would offer feedback on your writing, offering AI-generated feedback that would appear to come from a famous writer or academic of note. These recreations were based on "publicly available information from third-party LLMs," which sounds a lot like web crawlers of dubious legality were involved. The suggested experts would be based on the subject matter and could be anyone from great scientific minds to bestselling fiction authors to your friendly neighborhood tech bloggers. Living or dead, these writers' names appeared on Grammarly without their permission or knowledge. "References to experts in this product are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities," the company hedged in a disclaimer on the service. As one might imagine, once people took notice, a large number of the living contingent of those writers were none too pleased with Grammarly and Superhuman. The company initially attempted to address the complaints by allowing writers to opt out of the platform. Which I'm sure was a big relief to the deceased contingent and to those living ones who aren't closely following AI news and might still not know they were being cited by the tool. Today, Superhuman CEO Shishir Mehrotra wrote in a LinkedIn post that the company will disable Expert Review while it reassesses the feature. "The agent was designed to help users discover influential perspectives and scholarship relevant to their work, while also providing meaningful ways for experts to build deeper relationships with their fans," he said. Yes, Carl Sagan must be bemoaning the lack of deep relationships with his fans from the afterlife.

[6]

Grammarly Allegedly ‘Misappropriated’ Names of Journalists, Says Class Action Suit

The Grammarly Expert Review feature included the names of writers and literary figures without consulting them first. Last week, Wired’s Miles Klee reported on Grammarly’s AI text editing feature, called “Expert Review,” which uses the names of journalists and other literary figures in conjunction with revision advice for writers. These experts, including my Gizmodo colleague Raymond Wong, served as “inspiration,” according to Grammarly. Writers had not been consulted about their inclusion. On Wednesday, the Expert Review feature was pulled. But on that same day, a class-action lawsuit was filed against Grammarly, alleging that the feature “misappropriated” the identities of the figures who ostensibly inspired it. The class in the class action lawsuit currently only has one named member: investigative journalist Julia Angwin, although it name-checks notable figures named by Grammarly such as Stephen King. According to the text of the filing, the suit “challenges Grammarly’s misappropriation of the names and identities of hundreds of journalists, authors, writers, and editors to earn profits for Grammarly and its owner, Superhuman.” As noted in the lawsuit, California Civil Code § 3344(a)(1) reads as follows: “Any person who knowingly uses another’s name, voice, signature, photograph, or likeness, in any manner, on or in products, merchandise, or goods, or for purposes of advertising or selling, or soliciting purchases of, products, merchandise, goods, or services, without that person’s prior consent, or, in the case of a minor, the prior consent of their parent or legal guardian, shall be liable for any damages sustained by the person or persons injured as a result thereof.” There's no demand in the suit for a specific sum of money in damages, though it does say "the amount in controversy exceeds $5 million."
Angwin spoke to Wired’s Klee for a story about the suit, and told him the feature wasn’t the sort of “anodyne” AI fluff she expected trying to sand down people’s writing, but instead was “kind of actively making it worse,” and added, “I was surprised at how bad it was.” In his Wednesday LinkedIn post apologizing and saying the feature is temporarily disabled, CEO Shishir Mehrotra wrote that “the agent was designed to help users discover influential perspectives and scholarship relevant to their work, while also providing meaningful ways for experts to build deeper relationships with their fans.” He and his company “recognize we fell short on this,” he says. The LinkedIn post is not about the class action suit. Gizmodo reached out to Grammarly for a comment on the suit, and will update if we hear back.

[7]

Grammarly will keep using authors' identities without permission unless they opt out

Last week, my colleagues discovered that Superhuman's Grammarly had turned me into an AI editor, using my real name, without ever asking my permission. They did the same to my boss Nilay Patel, my colleagues David Pierce and Tom Warren, and -- as Wired initially reported last Wednesday -- many authors far more famous than us. Grammarly's new "Expert Review" feature uses our names to give its AI suggestions credibility that they don't deserve. Now, Grammarly has finally addressed the backlash -- but not by apologizing, and not by walking the feature back. For now, it will graciously give us the chance to opt-out of something we didn't know it was doing to begin with. "Grammarly declined my request to interview CEO Shishir Mehrotra today," writes my former colleague Casey Newton in the latest issue of Platformer. "But it told me that in response to criticisms, it will allow experts to opt out of the feature by emailing [email protected]." The company also provided this statement to Casey and to The Verge, from Alex Gay, Vice President of Product & Corporate Marketing at Superhuman: We've heard the feedback about this tool and appreciate the engagement from those who have taken the time to raise thoughtful questions about the functionality and the experts surfaced. We agree that the product experience can be improved for both users and experts. The agent was designed to help users discover influential perspectives and scholarship that add value to their work. We want the people behind those perspectives to have greater control over whether their name is used, while providing new ways for influential voices to reach new audiences. Our goal is to improve Expert Review to deliver this outcome. There's not a single word about "permission" in that statement, and no sign that Grammarly is walking back the idea. It sounds like the company fully intends to keep pretending real human beings are behind its edits, just with "greater control". 
For what it's worth, we asked Superhuman whether it would provide any protection for our names other than an opt-out email. This was the reply from spokesperson Jen Dakin: "We are working on further refining the feature in addition to the opt-out option." Superhuman had better offer authors "greater control" over their own names than an email address, because email is a ridiculous solve for the problem. How would we have known our names were being appropriated unless we tried the product ourselves? Shouldn't people deserve to have their names protected even if they've never heard of Grammarly? Shouldn't they have that opportunity even if they don't know anyone who uses Grammarly? Why should we have to do the work of protecting our own names at all? I don't use Grammarly, and the only reason I found out my name was appropriated is because two journalists at The Verge decided to test. We can't do that for everyone.

[8]

Grammarly Offering Manuscript Reviews by AI Versions of Recently Deceased Professors

Grammarly is being accused of "necromancy" after users discovered a feature for reviewing manuscripts with AI versions of real professors -- some of whom have already left this mortal coil. The issue was first flagged by Verena Krebs, a medieval historian and Ruhr-University Bochum professor. On Sunday, Krebs shared a screenshot showing the "Expert Review" tool allowing users to pick historian David Abulafia as one of the available "experts" to check their paper. If Abulafia objected to his inclusion here, we'll probably never know, since he died in January. The news sparked a flurry of fiery responses across academic circles. "Grammarly is now offering 'expert review' of your work by living and dead academics," Vanessa Heggie, an associate professor in the history of science and medicine at the University of Birmingham, wrote in a LinkedIn post. "Without anyone's explicit permission it's creating little LLMs based on their scraped work and using their names and reputation." "I have seen a lot of cursed stuff in my time in academia but this is among the most cursed," Claire E. Aubin, a historian and host of the "This Guy Sucked" podcast, wrote in a now viral post on Bluesky. Grammarly describes "Expert Review" as an AI agent that can help you "meet the expectations of your discipline and your project by drawing on insights from subject-matter experts and trusted publications," which comes packed with Grammarly's suite of new AI tools it released last summer. To use it, you open your document in Grammarly's AI platform, select the Expert Review agent, and let it make suggestions based on your expert of choice. The tool will even generate revised versions of your writing based on the suggestions being made. "Revise the draft yourself or let Expert Review rework things for you," Grammarly's website claims.
The tool already feels invasive for essentially impersonating real academics by providing AI-generated feedback under their name, to say nothing of the flouting of copyright protections that every LLM in existence relied on to be built. That it's also masquerading as dead professors, in the eyes of many scholars, adds grievous insult to injury. This is "literally digital necromancy," wrote Kathleen Alves, an associate professor of English at CUNY, in a Bluesky post. "NecromancerLLM," echoed Hisham Zerriffi, an associate professor in forest resources management at the University of British Columbia. "Seriously, dead or alive, this is just wrong." This isn't the only AI tool from Grammarly that will pose as a real pedagogue. It also provides an "AI grader agent" that provides students with personalized feedback on their homework by looking up "publicly available instructor information" on their teachers and professors.

[9]

Grammarly is using our identities without permission

Grammarly's "expert review" feature offers to give users writing advice "inspired by" subject matter experts, including recently-deceased professors, as Wired reported on Wednesday. When I tried the feature out myself, I found some experts that came as a surprise for a different reason -- one of them was my boss. The AI-generated feedback included comments that appeared to be from The Verge's editor-in-chief, Nilay Patel, as well as editor-at-large David Pierce and senior editors Sean Hollister and Tom Warren, none of whom gave Grammarly permission to include them in the "expert reviews." The feature, which launched in August, claims to help you "sharpen your message through the lens of industry-relevant perspectives." When users select the "expert review" button in the Grammarly sidebar, it analyzes their writing and surfaces AI-generated suggestions "inspired by" related experts. Those "industry-relevant perspectives" include the likes of Stephen King, Neil deGrasse Tyson, and Carl Sagan, among many others. The Verge found numerous other tech journalists named in the feature, as well, including former Verge editors Casey Newton and Joanna Stern, former Verge writer Monica Chin, Wired's Lauren Goode, Bloomberg's Mark Gurman and Jason Schreier, the New York Times' Kashmir Hill, The Atlantic's Kaitlyn Tiffany, PC Gamer's Wes Fenlon, Gizmodo's Raymond Wong, Digital Foundry founder Richard Leadbetter, Tom's Guide editor-in-chief Mark Spoonauer, former Rock Paper Shotgun editor-in-chief Katharine Castle, and former IGN news director Kat Bailey. The descriptions for some experts contain inaccuracies, such as outdated job titles, which could have been accurately updated had Superhuman asked those people for permission to reference their work. 
In a statement to The Verge, Alex Gay, vice president of product and corporate marketing at Grammarly parent company Superhuman, commented: "The Expert Review agent doesn't claim endorsement or direct participation from those experts; it provides suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply." When asked if Superhuman considered notifying the people named in its AI feature, or requesting their permission, Gay said, "The experts in Expert Review appear because their published works are publicly available and widely cited." However, the experts' work proved difficult to "explore more deeply." The feature crashed frequently and its "sources" linked to spammy copies of legit websites, or other archived copies that aren't the actual source page. Some sources even went to completely unrelated links that weren't written by the person whose work they were supposedly an example of, potentially indicating that the suggestions Grammarly's AI offers with one person's name may be based on a different person's work. This is only apparent if users click "see more" to expand suggestions, then click the "source" button at the end of the suggestion. Additionally, the way the suggestions are presented could be misleading. In Google Docs, the suggestions look similar to comments from real users, seemingly simulating the experience of receiving edits from whichever expert the AI is imitating. One suggestion from Grammarly's AI "inspired by" Verge senior editor Sean Hollister was about adding a parenthetical with context that was already included elsewhere. The only problem is that I've actually been edited by the real Sean Hollister, who prefers avoiding repetitive or unnecessary explanations while using straightforward wording and organization. If I'd taken that advice and run it by him, the real Sean probably would have removed the parenthetical Grammarly suggested. 
An AI might be able to ingest vast amounts of someone's writing and learn to mimic it, sure, but the same strategy cannot teach an AI how to edit the way that person would, based only on the writing they've published, even if you give the bot a check mark logo and call it an "expert."

[10]

Grammarly Disables AI 'Expert Review' After Backlash From Authors and Journalists - Decrypt

The company said it will redesign the feature to give experts more control over how they are represented. Popular writing assistant platform Grammarly said on Wednesday that it has disabled its Expert Review AI feature following online backlash and criticism that the tool used the identities and mannerisms of various writers and editors without permission. The Expert Review feature analyzes a user's text and generates feedback on what was written from the perspective of scholars, journalists, and other specialists. Parent company Superhuman said Wednesday that it is changing course after substantial backlash. "Over the past week, we received valid critical feedback from experts who are concerned that the agent misrepresented their voices," Superhuman CEO Shishir Mehrotra wrote on LinkedIn. "This kind of scrutiny improves our products, and we take it seriously." Grammarly launched the Expert Review feature last summer as part of its expansion into AI productivity agents. The tool lets users select an expert and receive AI-generated feedback modeled on that person's work. After it was discovered that the AI referenced real people, including deceased scholars, the feature drew swift criticism from academics and journalists. After criticism, Superhuman said it disabled the Expert Review feature and will redesign it so experts can control whether and how they are represented. Before the change, experts had to manually opt out if they did not want to be included, a policy critics said was unacceptable. "I'm no lawyer, but I think "We're going to keep stealing your stuff until you tell us you don't want us to steal your stuff" isn't quite the defense Grammarly thinks it is -- at least not in the court of public opinion," former creative director at The Verge, James Bareham, wrote on Bluesky. "I hope this company is sued into oblivion. I canceled my pro account today." 
Others, including author and editor Benjamin Dreyer, mocked the company's opt-out policy in a post responding to the feature. "I may ultimately simply take advantage of their bountifully generous opt-out offer, oh thank you, thank you. But in the meantime, if I can cause some corporate shyster a few moments' worth of agita, I will feel as though my hard work ain't been in vain for nothin'," Dreyer wrote. In a statement shared with Decrypt, Ailian Gan, Grammarly's director of product management for agents, said the company disabled the Expert Review feature after feedback showed it had "missed the mark." "As we innovate at the edge of AI, it is important to us that we continue to seek the best ways to help people feel agency over technology and that they can shape it to meet their needs," Gan wrote. "Thanks for holding us accountable. We are committed to getting it right next time and will be transparent about how we improve from here."

[11]

Grammarly shuts down "Expert Review" after pushback from the real experts - SiliconANGLE

After drawing the ire of journalists, academics, and authors, Superhuman Platform Inc. this week pulled a feature from Grammarly that impersonated writers. The "Expert Review" feature let users of Grammarly improve their writing by selecting from a roster of "leading professionals, authors, and subject-matter experts," whose artificial-intelligence-generated advice imitated the style and judgment of real writers, both living and dead. The reaction was swift. Tech journalist Casey Newton, former senior editor at The Verge, penned the blog post, "Grammarly turned me into an AI editor against my will and I hate it." Newton said he wasn't asked to appear as one of the experts. "I've long assumed that before too long, AI might take my job," Newton wrote. "I just assumed that someone would tell me when it happened." Grammarly did at least inform its users, albeit in a disclaimer that was not readily accessible: "References to experts in Expert Review are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities." Nonetheless, the living authors perceived the feature to be a cheap trick. Some of the names associated with Expert Review included Stephen King, Neil deGrasse Tyson, Carl Sagan, as well as a slew of journalists, including Kara Swisher, The Verge's Monica Chin, Bloomberg's Mark Gurman, The New York Times' Kashmir Hill, and The Atlantic's Kaitlyn Tiffany. Julia Angwin, an investigative journalist formerly of the Wall Street Journal, took the next step and launched a lawsuit. "I have worked for decades honing my skills as a writer and editor, and I am distressed to discover that a tech company is selling an imposter version of my hard-earned expertise," she said. Unsurprisingly, Superhuman disabled the feature soon after.
The company's chief executive, Shishir Mehrotra, acknowledged that the experts may have felt the "agent misrepresented their voices." He added, "I want to apologize and acknowledge that we'll rethink our approach going forward." San Francisco-based Grammarly may have hit hard times since the rise of the chatbots. The company has an estimated 40 million users across more than 500,000 organizations, but with ChatGPT and Claude correcting people's work, the company has had to redefine itself. In 2024, it acquired AI productivity platform Coda Project Inc., bringing Coda's then-Chief Executive Mehrotra in as Grammarly's new CEO. What Grammarly was doing with its 'experts' was no different from what ChatGPT users can do when they ask the chatbot to edit or write in the style of a writer of their choosing, but as Newton said, "Grammarly just had the bad manners to put my name on it."

[12]

'Obscene': Grammarly's New AI Tool Offers Writing Feedback From Dead Scholars - Decrypt

Critics question whether the company can use scholars' identities without consent.

Grammarly's new AI feature that provides writing feedback from the purported perspective of noted "experts" is drawing criticism from academics who say the tool appears to "resurrect" scholars to review users' work. The feature, called Expert Review, analyzes text and generates feedback framed through the perspective of specific scholars, journalists, and other specialists. Many of the experts that the AI tool claims to mimic are no longer living -- a feature that one medieval historian on Bluesky called "morbid." Grammarly launched in 2009 as an AI-assisted writing and grammar tool; its parent company rebranded to Superhuman in October to reflect its shift from a single writing assistant into a suite of AI productivity agents, including tools for research, scheduling, email, and workflow automation. Grammarly introduced the Expert Review feature last summer. Through the Grammarly browser extension, users who opt into the Superhuman Go version can select an expert and receive AI-generated feedback based on that scholar's field or published work. "Our Expert Review agent examines the writing a user is working on, whether it's a marketing brief or a student project on biodiversity, and leverages our underlying LLM to surface expert content that can help the document's author shape their work," a Superhuman spokesperson told Decrypt. "The suggested experts depend on the substance of the writing being evaluated." The Expert Review agent, the spokesperson explained, doesn't claim endorsement or direct participation from those experts, but provides "suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply." "The experts in Expert Review appear because their published works are publicly available and widely cited," they said.
When testing the feature for this article, expert reviewers suggested by the app included Margaret Sullivan, media columnist and former editor at the New York Times, Jack Shafer, former senior media writer at Politico, and Lawrence Lessig, a professor at Harvard Law. Other options included AI ethics researcher Timnit Gebru and Helen Nissenbaum, professor of information science at Cornell Tech. While the feature aims to help students and professionals improve their writing abilities, Vanessa Heggie, professor of history at the University of Birmingham, questioned whether the "reviewers" gave their consent before the company used them in the app. "I don't know where to start with this, but... Grammarly is now offering "expert review" of your work by living and dead academics," Heggie wrote on LinkedIn. "Yes, dead ones -- without anyone's explicit permission it's creating little LLMs based on their scraped work and using their names and reputations. Obscene." Brielle Harbin, a former associate professor of political science at the United States Naval Academy, called it "an odd and concerning development." "Choices like this -- especially when made without context, consent, or meaningful partnership with educators -- risk deepening skepticism about AI tools in higher education," she wrote on LinkedIn. "Ironically, decisions meant to accelerate adoption may end up strengthening resistance instead. Trust and collaboration matter a lot right now." Grammarly is just one of the companies creating AI programs designed to mimic real people. In 2023, Meta released a line of chatbots for its Meta AI platform built around celebrity identities, including Snoop Dogg, Tom Brady, Kendall Jenner, and Naomi Osaka. That same year, Khan Academy launched its AI tutor Khanmigo, which allows students to role-play conversations with historical figures, including British Prime Minister Winston Churchill, and U.S. Civil War spy and Underground Railroad conductor Harriet Tubman.

[13]

Grammarly AI "Expert Review" Cites Real Experts Without Consent

Grammarly's AI-powered "Expert Review" feature attributes its writing suggestions to prominent individuals and claims that it draws on their advice. However, these individuals may not be aware that the grammar-correction tool is associating their names and credentials with its suggestions. When MediaNama tested this feature, it referenced several individuals, including MediaNama's Editor Nikhil Pahwa; Pranesh Prakash, Principal Consultant at Anekaanta; and Sunil Abraham, co-founder of the Centre for Internet and Society (CIS). Grammarly suggests edits from the "subject matter experts" on its own, without the user prompting it to. However, a small legal disclaimer at the bottom clarifies: "References to experts in this product are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities."

What are Grammarly's AI Agents?

They are proactive, autonomous AI systems that can independently work toward specific goals, with contextual awareness and the ability to perform multi-step reasoning.

Personality Rights & Anthropomorphisation

Pranesh Prakash, Principal Consultant at Anekaanta, a Chennai-based policy advisory firm, told MediaNama in a phone call that there are two major issues with this incident: personality rights and anthropomorphisation. Anthropomorphisation is the attribution of human characteristics, such as emotions, intentions, or identity, to non-human entities, including animals, machines, and even software. Meanwhile, personality rights protect an individual's likeness, including their voice, face, style, etc., from unauthorised exploitation. "Neither of these is necessarily covered under current Indian law. So neither of these is necessarily a legal concern," he said. However, "Personality rights are recognised in India, but they are not uniform," he added, citing recent judgements from various courts that protected the personality rights of several celebrities.
He warned that anthropomorphising AI, such as giving it a human identity or name, could lead people to treat it like a human and cause negative psychological effects and unforeseen long-term consequences.

It shouldn't be legal to use a name to promote their services

"They are making use of my name's social purpose without any kind of authorisation, in a way that suggests they have learned from me," he said. He said the issue is not whether the comment is defamatory, good, bad, or something a person would write. He added that judging Grammarly's AI expert reviews on those grounds is not a plausible objection.

'It has no real understanding'

"Because it is a model, it has no real understanding of these things. It can replicate or generalise from some of what I have written. Even then, the body of my writing is not very large, so it cannot perform gradient fitting well with such limited data." Additionally, he said the end user might feel disappointed if the AI provides substandard advice that does not match the level of expertise the user expected.

Award-winning journalist Julia Angwin filed a class action lawsuit against Grammarly over its Expert Review tool, which used names and identities of hundreds of writers without consent to generate AI feedback. Superhuman, Grammarly's parent company, disabled the feature amid significant backlash, acknowledging it missed the mark on giving experts control over their representation.

Journalist Files Class Action Against Grammarly Over Expert Review

Julia Angwin, an award-winning investigative journalist who founded The Markup, filed a federal class action lawsuit against Grammarly on Wednesday in the Southern District of New York, challenging the company's misappropriation of the names and identities of hundreds of journalists, authors, writers, and editors through its Expert Review AI feature [1]. The complaint argues that damages across the plaintiff class exceed $5 million, though it does not call for a specific amount [1].

Angwin learned that her identity was being used without her consent from Casey Newton, another writer featured in the tool [3]. The lawsuit alleges that Superhuman, Grammarly's parent company, violated publicity rights by using people's names for commercial purposes without their consent [3]. Her attorney, Peter Romer-Friedman, stated that longstanding laws in New York and California clearly prohibit such commercial use of a person's name and likeness without their permission [1].

How the Identity-Stealing AI Feature Operated

The Expert Review tool, launched in August by Superhuman CEO Shishir Mehrotra, offered users generative-AI feedback credited to real writers, both living and deceased [5]. The AI feature listed real academics and authors available to weigh in on user text, including Stephen King, Neil deGrasse Tyson, deceased editor William Zinsser, and astronomer Carl Sagan [2].

These AI agents leveraged an underlying large language model to produce writing suggestions inspired by influential voices, drawing on publicly available information from third-party LLMs [4]. While a disclaimer clarified that none of the people cited had endorsed or directly participated in developing this tool, various writers expressed frustration over Grammarly invoking their likenesses and apparently regurgitating their life's work [1]. The suggested experts depended on the substance of the writing being evaluated, whether a marketing brief or a student project [2].

Superhuman Disables Feature Amid Backlash

Shortly before the lawsuit was filed, Superhuman announced it would disable Expert Review as it reimagines the feature to give experts real control over how they want to be represented, or not represented at all [1]. Ailian Gan, Superhuman's Director of Product Management, Agents, acknowledged that the company clearly missed the mark based on feedback received [1].

Mehrotra apologized in a LinkedIn post, stating the company received valid critical feedback from experts concerned that the agent misrepresented their voices [4]. Superhuman had initially responded earlier in the week by launching an opt-out option, allowing writers and academics to request removal from the platform [3]. However, this approach proved inadequate for addressing ethical concerns, particularly for deceased individuals and those unaware their identities were being used [5].

Legal and Ethical Implications for AI Development

The lawsuit highlights growing concerns about AI companies cloning experts and imitating their writing styles without consent. Romer-Friedman emphasized that professionals who spend years or decades honing their skills are seeing their names and expertise appropriated by others without authorization [1]. The complaint states that, contrary to the apparent belief of some tech companies, it is unlawful to appropriate people's names and identities for commercial purposes, whether those people are famous or not [1].

The legality of content-harvesting for training these AI agents remains murky at best and is the subject of many copyright infringement lawsuits [2]. Vanessa Heggie, an associate professor at the University of Birmingham, accused Superhuman of creating little LLMs based on scraped work of the living and dead alike, trading on their names and reputations [2]. The case raises questions about misrepresentation and whether companies can attribute to individuals words they never uttered and advice they never gave [1].

Mehrotra envisions a future where experts choose to participate, shape how their knowledge is represented, and control their business model, allowing them to build ubiquitous bonds with users similar to Grammarly [4]. Whether this vision can be realized while respecting publicity rights and obtaining proper consent remains to be seen as the legal proceedings unfold.

Summarized by Navi
