Intel unveils Xeon 6+ with 288 cores on 18A process, targeting edge AI and 6G networks

3 Sources

[1]

Intel's 18A node debuts in the data center with the 288-core Xeon 6+

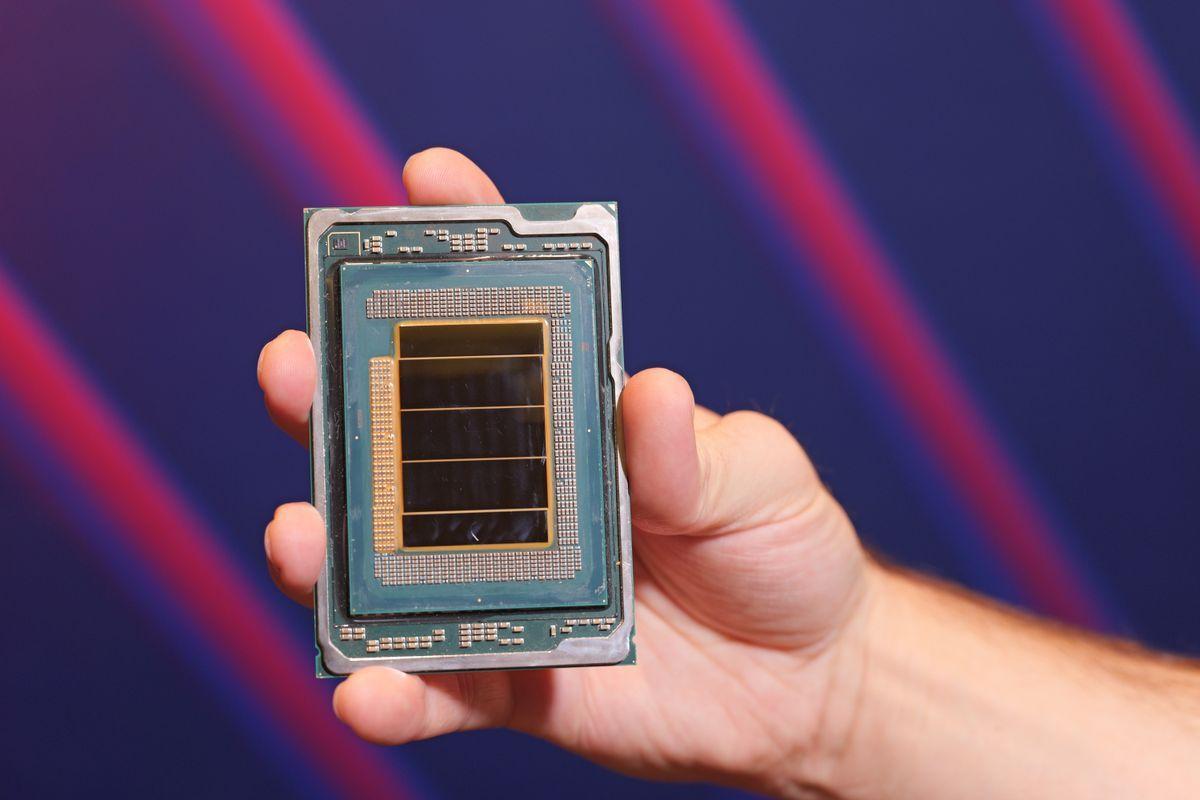

First look: Intel's next leap in data center design starts not with a GPU or accelerator card, but with a single CPU running nearly 300 efficiency cores. The company this week introduced its Xeon 6+ line, codenamed Clearwater Forest, built on the Intel 18A process, Intel's most advanced fabrication technology yet and the first in the 1.8-nanometer class. The new chips mark a turning point for Intel's strategy in cloud and telecommunications workloads, where efficiency and integration are now prioritized over sheer clock speed.

Clearwater Forest is based on Intel's all-new Darkmont core microarchitecture. Each processor packs up to 288 of these cores across 12 compute tiles, an unprecedented density for a server-grade CPU. Each compute tile holds 24 Darkmont cores, fabricated using the 18A node, and connects through Intel's 3D stacking technology Foveros Direct. Two separate input/output tiles, built on Intel 7, handle memory, PCIe, and network interfaces, while three base tiles fabricated on Intel 3 anchor the entire structure. Communication between tiles runs through Intel's EMIB (Embedded Multi-Die Interconnect Bridge) links, the same packaging innovation driving the company's high-end GPUs.

That complex assembly is designed in large part to keep data as close as possible to the cores while keeping power draw to a minimum. In Clearwater Forest, the caches were completely re-engineered to suit that goal. Four Darkmont cores share a 4 MB L2 cache, while the chip's last-level cache surpasses a gigabyte - about 1,152 MB in total - giving hundreds of cores rapid access to frequently used data without depending heavily on external memory bandwidth.

Darkmont itself represents a major step forward for Intel's efficiency-core design. The cores feature an expanded instruction cache of 64 KB, a wider fetch-and-decode path, and a deeper out-of-order execution engine that can track more simultaneous operations. Adding more execution ports boosts both scalar and vector performance.

Although the Xeon 6+ family is built for efficiency, it's equipped with accelerators increasingly vital to data center operators. Each chip includes support for Intel Advanced Matrix Extensions (AMX), QuickAssist Technology (QAT) for cryptographic and compression workloads, and vRAN Boost - a hardware block tailored for virtualized radio access networks. These integrated accelerators target workloads that would normally require separate AI or networking cards, particularly in edge and telecom deployments running 5G Advanced and upcoming 6G systems. Intel's argument is that by embedding these capabilities directly into the CPU, operators can avoid redesigning their server racks and still scale AI inference and network processing efficiently.

The platform remains compatible with the existing Xeon socket, easing deployment for system builders. It brings 12 channels of DDR5 memory running up to 8,000 MT/s and up to 96 PCIe 5.0 lanes, with 64 of them supporting Compute Express Link (CXL) 2.0 for coherent memory or device expansion. Dual-socket configurations double the available computing resources to 576 Darkmont cores in a single server.

Clearwater Forest reflects a broader shift within Intel's data center roadmap - away from monolithic designs toward disaggregated packaging and from brute performance toward workload specialization through integrated acceleration. For cloud and telecom operators, where every watt and rack unit matters, that emphasis on efficiency is less a design philosophy than an operational requirement. Intel expects systems featuring Xeon 6+ processors to ship later this year.
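The core and cache figures in the article above can be cross-checked with a little arithmetic. The following is a minimal sketch rather than anything published by Intel: the constant names are illustrative, and the total L2 and per-core last-level cache values are derived here from the quoted numbers, not quoted themselves.

```python
# Package figures as quoted in the article; names here are illustrative.
COMPUTE_TILES = 12           # 18A compute tiles per package
CORES_PER_TILE = 24          # Darkmont cores per compute tile
CORES_PER_L2_CLUSTER = 4     # cores sharing one L2 cache
L2_MB_PER_CLUSTER = 4        # MB of L2 per four-core cluster
LLC_MB = 1152                # approximate last-level cache, in MB
SOCKETS = 2                  # dual-socket server

cores_per_cpu = COMPUTE_TILES * CORES_PER_TILE
l2_clusters = cores_per_cpu // CORES_PER_L2_CLUSTER
total_l2_mb = l2_clusters * L2_MB_PER_CLUSTER    # derived, not a quoted figure
llc_mb_per_core = LLC_MB / cores_per_cpu         # derived, not a quoted figure
cores_per_server = cores_per_cpu * SOCKETS

print(f"cores per CPU:      {cores_per_cpu}")
print(f"four-core clusters: {l2_clusters}")
print(f"total L2 (MB):      {total_l2_mb}")
print(f"LLC per core (MB):  {llc_mb_per_core}")
print(f"cores per server:   {cores_per_server}")
```

The 288 and 576 core counts match the article; the cache totals are only as accurate as the rounded figures they are computed from.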

[2]

"AI in networks isn't CPU vs. GPU": Intel unveils 18A-based Clearwater Forest Xeon 6+ for edge AI and early 6G infrastructure

288-core design targets efficiency gains across telecom and data centers

* Intel extends Xeon 6 roadmap with 18A-based processors targeting AI in telecom networks
* 288-core Clearwater Forest reduces rack power and improves performance per watt
* Testing shows 38% lower runtime rack power versus comparable Sierra Forest systems

At MWC 2026, Intel introduced its upcoming Clearwater Forest Xeon 6+ processors, built on the 18A process and aimed at edge AI and early 6G infrastructure. The update adds a higher density option to the Xeon 6 lineup for network and data center deployments. Clearwater Forest, which was first previewed in October 2025, follows the current Xeon 6 generation and is expected to arrive by 2027.

AI in networks isn't "CPU vs. GPU"

Intel is expanding Xeon 6 across radio access networks, or RAN, which connect devices like smartphones to the broader mobile network, as well as mobile core systems and edge sites. The strategy keeps network functions, security workloads, enterprise services, and AI inference on standard server hardware. Kevork Kechichian, executive vice president and general manager of Intel's Data Center Group, said: "AI in networks isn't 'CPU vs. GPU' -- it's right compute for the workload." The idea is that not every AI task inside a telecom network requires a separate accelerator. In many cases, inference can run directly on Xeon processors depending on performance and power constraints.

In the RAN, Xeon 6 SoC integrates Advanced Matrix Extensions and vRAN Boost, allowing inference workloads to run on the same server that handles virtualized network software. That can limit the need for extra hardware in certain deployments. Rakuten Mobile is working with Intel to train and deploy AI models for low latency RAN workloads using Xeon 6 SoC. Vodafone has committed to adopting Xeon 6 SoCs for Open RAN and vRAN modernization projects across Europe.

Clearwater Forest, branded simply Xeon 6+, increases core density and shifts to Intel's 18A process. In testing by Ericsson, a single 288-core Xeon 6990E+ Clearwater Forest processor reduced runtime rack power by 38 percent, delivered more than 60 percent better performance per watt, and improved overall performance by 30 percent compared with a dual socket 288-core Xeon 6780E Sierra Forest system. Higher core counts and lower power consumption sit at the center of Intel's pitch as AI workloads expand inside telecom infrastructure and networks move closer to early 6G development.
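Performance per watt is performance divided by power, so the three Ericsson figures can be related to one another. The sketch below is illustrative only and rests on a loud assumption: that the 30 percent performance gain and the 38 percent power reduction describe the same measurement, which the article does not state. The variable names are hypothetical.

```python
# Quoted Ericsson test figures, relative to the dual-socket Sierra Forest baseline.
POWER_REDUCTION = 0.38       # runtime rack power, 38 percent lower
PERFORMANCE_GAIN = 0.30      # overall performance, 30 percent higher
QUOTED_PPW_FLOOR = 0.60      # "more than 60 percent" better performance per watt

# Assumption: both figures apply to the same run (not confirmed by the article).
relative_power = 1.0 - POWER_REDUCTION
relative_performance = 1.0 + PERFORMANCE_GAIN

# Performance per watt scales as performance / power.
ppw_ratio = relative_performance / relative_power
ppw_gain = ppw_ratio - 1.0

print(f"relative power:          {relative_power:.2f}x")
print(f"relative performance:    {relative_performance:.2f}x")
print(f"implied perf-per-watt:   {ppw_ratio:.2f}x")
print(f"exceeds the quoted floor: {ppw_gain > QUOTED_PPW_FLOOR}")
```

Under that assumption the implied ratio sits above the quoted "more than 60 percent" floor; if the figures come from different workloads, the combined number has no particular meaning.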

[3]

Intel unveils cutting-edge Xeon 6+ CPUs with 288 cores, targeting AI-ready networks - SiliconANGLE

Intel Corp. today unveiled its most advanced central processing unit so far, targeting artificial intelligence networks and other data center applications. Announced at MWC26 in Barcelona, the Xeon 6+ CPU, codenamed "Clearwater Forest," is targeted at networks and cloud infrastructures and is based on a complex multi-chiplet design that combines 12 compute tiles in an extremely dense core arrangement.

Intel is positioning the Xeon 6+ processor in its AI-ready networking lineup, targeting applications such as edge and on-site AI inference at a time when networks are transitioning to 6G. The company has employed various new technologies, including its high-bandwidth, on-chip fabric 2.5D EMIB links and Foveros Direct 3D die stacking innovations to prime it for the intense demands of the new network standard. Those technologies are what allow Intel to combine 12 compute tiles manufactured on its most advanced 18A process node (1.8 nanometer) into a single package, together with three active base tiles made on the Intel 3 process (7nm) and two I/O tiles made on the Intel 7 process (10nm).

Each tile can be broken down further into six modules that contain four Darkmont E-cores, adding up to 24 compute cores per tile. That means a total of 288 cores per CPU, and 576 cores on dual-socket platforms. The company said each of those cores benefits from improved parallelism, vector throughput and a beefier instruction cache.

The I/O tiles are made up of two chiplets containing eight accelerators each, plus 48 PCIe Gen 5 lanes, 32 CXL 2.0 lanes, and 96 UPI 2.0 lanes, the company said, referring to its point-to-point interconnects for linking Xeon CPUs in large clusters. That means a single Xeon 6+ CPU features a total of 16 accelerators, including four Intel Dynamic Load Balancers, four Intel QuickAssist Technology units, four Intel Data Streaming Accelerators and four Intel In-Memory Analytics Accelerators. In terms of memory, Intel said the Xeon 6+ CPUs can support 12 memory channels and up to DDR5-8000 modules.

Intel said the Xeon 6+ chips deliver double the core count of its previous Xeon 6700E CPUs, along with a 17% increase in instructions per clock per core, five times more last-level cache and 20% faster memory speed.

Getting away from the nitty gritty, Intel said it's planning to launch the Xeon 6+ processors in the first half of the year to its primary target market of network providers and data center operators. The company said cloud providers will be able to support dozens, if not hundreds of virtual machines on a single Xeon 6+ CPU. In networking environments, the chips are targeted at Radio Access Network, 5G core and edge workloads, it said.

Kevork Kechichian, who is the executive vice president and general manager of Intel's Data Center Group, said the new chip will support real-time inference in virtualized RAN deployments, allowing data to be processed by AI models where it lives instead of shifting it back and forth between cloud-based servers. He said the chipmaker is planning to expand its existing partnership with the Swedish telecommunications giant Telefonaktiebolaget LM Ericsson to jointly develop and market "AI-native 6G solutions."

The details of this are scarce, but Kechichian described the collaboration as "advancing future high-performance and energy-efficient compute architectures for both AI for networks and networks for AI." According to him, AI-native 6G combines intelligent and programmable networks with advanced compute and real-time sensing. These capabilities will "underpin more responsive, efficient and capable services, and ultimately result in closer integration between sensing and compute."
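The I/O tile breakdown in the article above can be tallied in a few lines. This is a sketch under one stated assumption: the PCIe and CXL lane counts are read as per-tile figures, which the wording leaves ambiguous, although doubling them does reproduce the 96 PCIe and 64 CXL lanes reported in [1]. The UPI figure is left out because it is unclear whether it is per tile or per package. All names are illustrative.

```python
IO_TILES = 2  # I/O tiles per package, as quoted

# Assumption: these counts are per I/O tile (the article's wording is ambiguous).
PER_TILE = {
    "accelerators": 8,
    "pcie5_lanes": 48,
    "cxl2_lanes": 32,
}

# Accelerator mix per CPU, as listed in the article.
ACCELERATORS_PER_CPU = {
    "Dynamic Load Balancer": 4,
    "QuickAssist Technology": 4,
    "Data Streaming Accelerator": 4,
    "In-Memory Analytics Accelerator": 4,
}

# Scale each per-tile count up to the whole package.
per_cpu = {name: count * IO_TILES for name, count in PER_TILE.items()}
listed_accelerators = sum(ACCELERATORS_PER_CPU.values())

for name, total in per_cpu.items():
    print(f"{name} per CPU: {total}")
print(f"accelerators listed by type: {listed_accelerators}")
```

The per-type accelerator list and the per-tile count agree on 16 accelerators per CPU.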

Intel introduced its Xeon 6+ processor, codenamed Clearwater Forest, built on the 18A process with up to 288 Darkmont efficiency cores. The chip targets AI inference in telecom networks and data centers; in testing by Ericsson, a single chip cut runtime rack power by 38% and delivered more than 60% better performance per watt than a dual-socket Sierra Forest system. Rakuten Mobile and Vodafone are working with Intel on the related Xeon 6 SoC for RAN deployments, and Ericsson is partnering with Intel on early 6G development.

Intel Debuts Advanced 18A Process in Data Center CPU

Intel announced its Xeon 6+ processor lineup at MWC 2026 in Barcelona, marking the data center debut of the company's 18A process technology [1]. Codenamed Clearwater Forest, the new chips pack up to 288 cores into a single processor and are built on 18A, Intel's first process in the 1.8-nanometer class [1]. The launch signals a strategic shift for Intel toward efficiency and integration rather than raw clock speed, particularly for cloud infrastructure and telecom networks preparing for 6G [2].

Multi-Tile Architecture Drives Unprecedented Core Density

Clearwater Forest employs a complex multi-tile architecture that combines 12 compute tiles, each containing 24 Darkmont efficiency cores fabricated on the 18A process [3]. The chiplet design connects these tiles using Foveros Direct 3D stacking technology and EMIB (Embedded Multi-Die Interconnect Bridge) links, the same packaging innovations used in Intel's high-end GPUs [1]. Two input/output tiles built on Intel 7 handle memory, PCIe, and network interfaces, while three base tiles fabricated on Intel 3 anchor the structure [1]. This disaggregated approach keeps data close to the cores while minimizing power consumption, a critical requirement for data center workloads where every watt matters [1].

Efficiency Gains Target AI Inference and Telecom Networks

Testing by Ericsson demonstrated that a single 288-core Xeon 6990E+ Clearwater Forest processor reduced runtime rack power by 38 percent while delivering more than 60 percent better performance per watt compared to a dual-socket 288-core Xeon 6780E Sierra Forest system [2]. Overall performance improved by 30 percent in the same comparison [2]. These efficiency metrics matter particularly for edge AI deployments where power budgets are constrained and cooling infrastructure is limited.

Integrated Accelerators Enable AI Inference Without Dedicated GPUs

Intel equipped the Xeon 6+ with accelerators designed to handle AI inference and network processing directly on the CPU. Each chip includes Advanced Matrix Extensions (AMX) for AI workloads, QuickAssist Technology (QAT) for cryptographic and compression tasks, and vRAN Boost tailored for virtualized Radio Access Network (RAN) deployments [1]. A single Xeon 6+ CPU features 16 total accelerators across its I/O tiles, including four Intel Dynamic Load Balancers, four QAT units, four Data Streaming Accelerators, and four In-Memory Analytics Accelerators [3]. Kevork Kechichian, executive vice president of Intel's Data Center Group, emphasized that "AI in networks isn't 'CPU vs. GPU' -- it's right compute for the workload" [2]. This approach allows operators to avoid redesigning server racks while still scaling AI inference efficiently [1].

Major Carriers Commit to Deployment for 5G and 6G Networks

Rakuten Mobile is working with Intel to train and deploy AI models for low-latency RAN workloads using the Xeon 6 SoC [2]. Vodafone has committed to adopting Xeon 6 SoCs for Open RAN and vRAN modernization projects across Europe [2]. Intel is expanding its partnership with Ericsson to jointly develop "AI-native 6G solutions" that combine intelligent, programmable networks with advanced compute and real-time sensing capabilities [3]. The collaboration aims to advance high-performance, energy-efficient compute architectures for both AI-driven network optimization and networks designed to support AI workloads [3].

Platform Specifications Support Massive Scale

The Xeon 6+ platform maintains compatibility with existing Xeon sockets, easing deployment for system builders [1]. It supports 12 channels of DDR5 memory running up to 8,000 MT/s and up to 96 PCIe 5.0 lanes, with 64 lanes supporting Compute Express Link (CXL) 2.0 for coherent memory or device expansion [1]. Dual-socket configurations double available computing resources to 576 Darkmont cores in a single server [1]. The cache architecture was completely re-engineered, with four Darkmont cores sharing a 4 MB L2 cache and a last-level cache totaling about 1,152 MB [1]. This massive cache allows hundreds of cores to access frequently used data without heavy reliance on external memory bandwidth [1]. Cloud providers will be able to support dozens, if not hundreds, of virtual machines on a single Xeon 6+ CPU [3]. Intel expects systems featuring Xeon 6+ processors to ship in the first half of 2026 [3].
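The memory figures in the platform specifications imply a theoretical peak bandwidth, which helps explain why the large cache matters for 288 cores. The sketch below is a back-of-the-envelope estimate, not an Intel figure: it assumes a 64-bit (8-byte) data path per DDR5 channel with ECC bits excluded, and real sustained bandwidth will be lower. The names are illustrative.

```python
CHANNELS = 12                 # DDR5 channels per socket, as quoted
TRANSFER_RATE_MT_S = 8000     # mega-transfers per second, as quoted
CORES_PER_CPU = 288           # as quoted
SOCKETS = 2                   # dual-socket server

# Assumption: 8 bytes of data per transfer per channel (64-bit data path, no ECC).
BYTES_PER_TRANSFER = 8

# MT/s * bytes per transfer gives MB/s; divide by 1000 for GB/s.
peak_gb_s_per_socket = CHANNELS * TRANSFER_RATE_MT_S * BYTES_PER_TRANSFER / 1000
peak_gb_s_per_server = peak_gb_s_per_socket * SOCKETS
peak_gb_s_per_core = peak_gb_s_per_socket / CORES_PER_CPU

print(f"theoretical peak per socket: {peak_gb_s_per_socket:.0f} GB/s")
print(f"theoretical peak per server: {peak_gb_s_per_server:.0f} GB/s")
print(f"theoretical peak per core:   {peak_gb_s_per_core:.2f} GB/s")
```

With all cores active, each core's share of that peak is a few gigabytes per second, which is consistent with the summary's point about reducing reliance on external memory bandwidth.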

Summarized by

Navi