Nvidia's DGX Station brings trillion-parameter AI models to your desk with GB300 Superchip

6 Sources

[1]

Nvidia launches DGX Station with its bleeding-edge GB300 Grace Blackwell Superchip -- now available to order and will begin shipping in the coming months

DGX Station serves as the middle ground between the DGX Spark and full-blown GB300-powered servers.

Nvidia has officially released its DGX Station workstation PC that the company unveiled last year during GTC 2025. The new system is targeted at software developers, researchers, data scientists, and anyone who needs more AI horsepower than Nvidia's smaller DGX Spark can deliver. Nvidia states that DGX Station systems are now available to order and will begin shipping in the coming months from Asus, Dell, Gigabyte, MSI, Supermicro, and HP.

DGX Station uses Nvidia's latest GB300 Grace Blackwell Ultra Desktop Superchip, which combines a 72-core Grace CPU with a Blackwell Ultra GPU, linked by a 900 GB/s NVLink C2C interface. The system comes with 748GB of total onboard memory: the CPU is paired with 496GB of LPDDR5X rated at 396GB/s of bandwidth, and the GPU is paired with 252GB of HBM3e memory rated at 7.1 TB/s of bandwidth. Both memory pools are unified, allowing the CPU and GPU to share each other's memory for maximum AI performance.

Like a typical workstation PC, the DGX Station has three PCIe Gen 5 x16 slots, one wired with 16 lanes and the other two with eight lanes each. The workstation officially supports discrete GPUs in its PCIe slots for extra tasks such as simulation and ray-traced visualization. Supported GPUs are the RTX Pro 6000 Workstation Edition, RTX Pro 6000 Blackwell Max-Q Workstation Edition, RTX Pro 4000 Blackwell SFF Edition, and RTX Pro 2000 Blackwell graphics cards. There are also four M.2 slots, audio connectors, and USB ports.

The workstation uses Nvidia's ConnectX-8 SuperNIC for networking, which supports speeds of up to 800 Gb/s through two QSFP112 ports. Up to two DGX Stations can be linked together to scale model capacity and performance.

Power comes through a single 24-pin ATX power connector, a single 8-pin EPS connector, and three 12V-2x6 power connectors for the GPU, feeding the system's official 1600W power rating.
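The bandwidth figures quoted above give a feel for how quickly each memory pool can be swept. The sketch below is back-of-the-envelope arithmetic in Python, not a benchmark. It assumes decimal units (1 TB/s = 1,000 GB/s), and the 60 GB weight size is a hypothetical example rather than a figure from the article. The tokens-per-second bound uses the common simplification that a dense model reads every weight once per generated token.

```python
# Capacity and bandwidth figures as quoted in the excerpt above.
HBM3E_CAPACITY_GB = 252
HBM3E_BANDWIDTH_GB_PER_S = 7100  # 7.1 TB/s, assuming 1 TB = 1000 GB
LPDDR5X_CAPACITY_GB = 496
LPDDR5X_BANDWIDTH_GB_PER_S = 396


def full_read_time_ms(capacity_gb: float, bandwidth_gb_per_s: float) -> float:
    """Ideal time to read a memory pool once, end to end, in milliseconds."""
    return capacity_gb / bandwidth_gb_per_s * 1000


def decode_tokens_per_s_bound(weights_gb: float, bandwidth_gb_per_s: float) -> float:
    """Upper bound on tokens/s if every weight byte is read once per token."""
    return bandwidth_gb_per_s / weights_gb


if __name__ == "__main__":
    hbm_ms = full_read_time_ms(HBM3E_CAPACITY_GB, HBM3E_BANDWIDTH_GB_PER_S)
    lpddr_ms = full_read_time_ms(LPDDR5X_CAPACITY_GB, LPDDR5X_BANDWIDTH_GB_PER_S)
    print(f"HBM3e sweep:   {hbm_ms:.1f} ms")
    print(f"LPDDR5X sweep: {lpddr_ms:.1f} ms")

    # Hypothetical example: 60 GB of weights held entirely in HBM3e.
    hypothetical_weights_gb = 60
    bound = decode_tokens_per_s_bound(hypothetical_weights_gb, HBM3E_BANDWIDTH_GB_PER_S)
    print(f"Decode bound for {hypothetical_weights_gb} GB of weights: {bound:.0f} tokens/s")
```

Under these assumptions the HBM3e pool can be read in roughly 35 ms while the LPDDR5X pool takes about 1.25 s, which suggests why it matters which pool a model's weights end up in.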

[2]

Nvidia Opens Orders for DGX Station, Ready to Run Giant AI Models at Your Desk

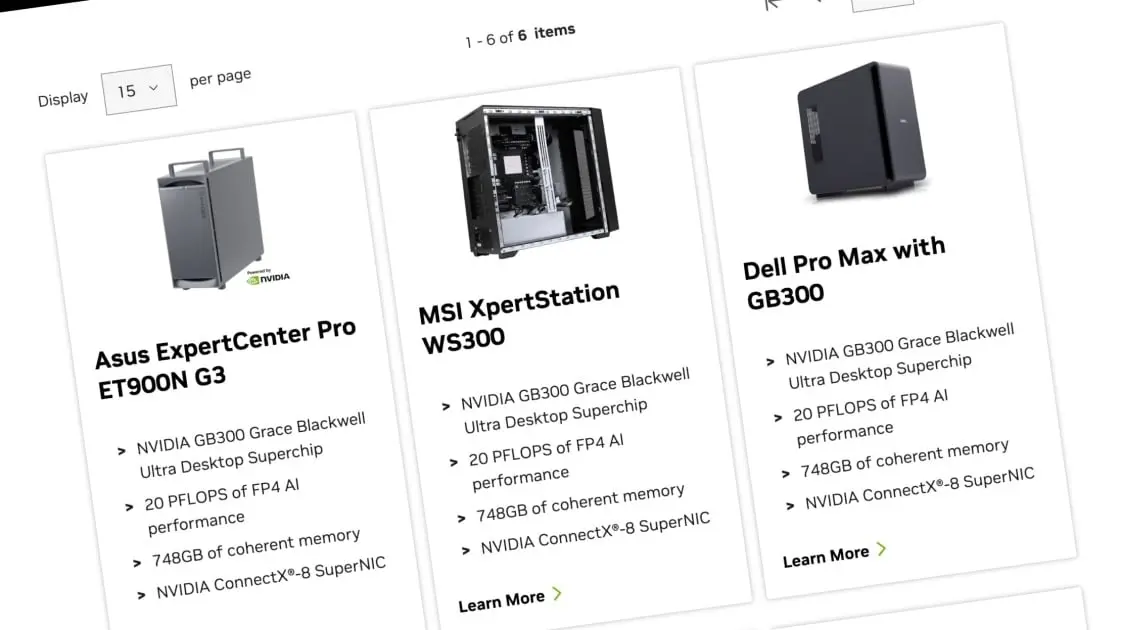

Following a delay, Nvidia is ready to release the DGX Station, a desktop-like computer that can run AI models locally, bringing AI data center-like performance to your home or office. At the company's annual GTC event, Nvidia revealed that PC manufacturers are starting to accept orders for their DGX Station units. The company's website offers a catalog of the six upcoming models from six companies: Asus, Dell, HP, Gigabyte, MSI, and server provider Supermicro. All the units come with their own names and are packed in desktop tower cases.

However, pricing is unclear. The vendors are asking interested customers to fill out a form with their contact information. The companies will then follow up with more details. Nvidia also declined to reveal pricing, but told journalists in a briefing that buyers can expect the DGX Station units to begin shipping out within weeks.

DGX Station: A Pumped-Up Spark

Introduced a year ago, the DGX Station is basically the more powerful big brother of the DGX Spark, a $4,000 mini PC that can also run AI models locally. The portable DGX Spark features a smaller GB10 Grace Blackwell chip and 128GB of RAM, enabling it to run AI models with up to 200 billion parameters. In contrast, the DGX Station features a larger Nvidia GB300 chip and a staggering 748GB of "coherent memory" shared between the processing and graphics portions. Nvidia says the computing power is enough to run even larger, more advanced AI models that span up to 1 trillion parameters. But like DGX Spark, the larger DGX Station is almost certainly going to be expensive and is being advertised toward enterprises.

The DGX Station was supposed to arrive last year, but it was pushed back to this spring. In a press briefing, Nvidia indicated that packing the GB300 chip and its motherboard into a desktop case was a challenge that required more time.
The company is marketing the product to AI researchers and software developers looking to run large language models (LLMs) at their desk, instead of paying a third-party server provider. The DGX Station can ensure the model runs privately while delivering fast performance, including running multiple AI-related tasks concurrently, Nvidia says. The same machine can also be shared over a local network as an "on-demand compute node for teams," the company added.

NemoClaw: Nvidia Jumps on the OpenClaw Bandwagon

Despite the likely high price of the DGX Station, Nvidia sees a market for users who want to run AI models locally, pointing to the popularity of OpenClaw, an open-source autonomous AI agent that can run on a laptop or even a mini PC. Nvidia sees so much potential in OpenClaw that it announced its own contribution to the software, called NemoClaw. On OpenClaw's growing popularity, company CEO Jensen Huang even said: "This is as big of a deal as HTML, this is as big of a deal as Linux."

NemoClaw has been designed to install OpenClaw alongside the company's own AI programs, including Nvidia's Nemotron models and the company's newly announced Nvidia Agent Toolkit. The result promises to bolster the intelligence and security of an OpenClaw installation, given that the AI agent software has been known to go rogue, such as deleting emails without permission or collecting sensitive data. NemoClaw can also run on all kinds of platforms, including laptops with Nvidia GPUs and on the DGX Spark and DGX Station. The company notes the Agent Toolkit "now includes Nvidia OpenShell -- an open-source runtime that enforces policy-based security, network, and privacy guardrails that make autonomous agents, or claws, safer to deploy."

[3]

MSI's XpertStation WS300 brings data-center-class AI to desktops

Data center class performance delivered directly to the desktop

* XpertStation WS300 supports trillion-parameter models without relying on cloud infrastructure
* Dual 400GbE LAN ports enable high-speed distributed multi-node AI workloads
* Unified HBM3e GPU and LPDDR5X CPU memory maximizes bandwidth for AI

MSI has officially launched the XpertStation WS300, a deskside AI workstation based on Nvidia's DGX Station architecture. This system is designed to handle demanding large language models, generative AI, and advanced data science workloads. The platform is powered by the Nvidia GB300 Grace Blackwell Ultra Desktop Superchip and supports up to 748GB of unified, large coherent memory.

Unified memory architecture for high-bandwidth AI processing

The XpertStation WS300 combines HBM3e GPU memory with LPDDR5X CPU memory for high-bandwidth data sharing. This configuration allows local processing of trillion-parameter models and supports extensive AI workflows without relying on cloud infrastructure. The workstation includes dual 400GbE LAN ports, which enable multi-node distributed computing with up to 800Gbps aggregate bandwidth.

MSI claims that the XpertStation WS300 delivers data center class performance directly to the desktop environment, with its setup intended to help organizations move from experimentation to production while maintaining consistent compute reliability. The XpertStation WS300 supports the full AI lifecycle, including large-scale model training, data-intensive analytics, and real-time inference. By functioning as a centralized AI compute node, the platform enables collaborative fine-tuning and on-demand deployment while letting organizations keep control over their data and intellectual property. High-speed PCIe Gen5 and Gen6 NVMe storage accelerates dataset ingestion and AI pipelines, ensuring sustained utilization during compute-intensive operations.
Combined with the Nvidia AI Software Stack, the workstation integrates hardware and software to allow seamless workflow transitions from research to production environments. MSI also integrated Nvidia NemoClaw, an open-source stack that runs OpenShell within a policy-controlled sandbox. This allows autonomous AI agents to operate continuously and safely at the deskside, using the workstation's 20 petaFLOPS compute potential. The configuration supports always-on AI processes locally, enabling experiments with advanced AI and robotics applications without transferring sensitive workloads to cloud servers.

"MSI has a strategic vision to advance AI-first computing," said Danny Hsu, General Manager of MSI's Enterprise Platform Solutions. "With Nvidia, we are defining the next era of AI infrastructure, bridging centralized performance and distributed innovation, and enabling organizations to move from experimentation to production with greater speed, scale, and confidence."

The platform provides extensive capabilities for advanced AI workflows, but its $84,999.99 price tag raises concerns about cost efficiency. Organizations that do not require maximum memory or continuous trillion-parameter model operation may find the investment difficult to justify. The system delivers unprecedented local AI performance, enabling demanding computations at the desk. However, the practical value of this workstation is likely limited to enterprises with high-throughput AI workloads and specific infrastructure requirements.

[4]

Nvidia's DGX Station is a desktop supercomputer that runs trillion-parameter AI models without the cloud

Nvidia on Monday unveiled a deskside supercomputer powerful enough to run AI models with up to one trillion parameters -- roughly the scale of GPT-4 -- without touching the cloud. The machine, called the DGX Station, packs 748 gigabytes of coherent memory and 20 petaflops of compute into a box that sits next to a monitor, and it may be the most significant personal computing product since the original Mac Pro convinced creative professionals to abandon workstations.

The announcement, made at the company's annual GTC conference in San Jose, lands at a moment when the AI industry is grappling with a fundamental tension: the most powerful models in the world require enormous data center infrastructure, but the developers and enterprises building on those models increasingly want to keep their data, their agents, and their intellectual property local. The DGX Station is Nvidia's answer -- a six-figure machine that collapses the distance between AI's frontier and a single engineer's desk.

What 20 petaflops on your desktop actually means

The DGX Station is built around the new GB300 Grace Blackwell Ultra Desktop Superchip, which fuses a 72-core Grace CPU and a Blackwell Ultra GPU through Nvidia's NVLink-C2C interconnect. That link provides 1.8 terabytes per second of coherent bandwidth between the two processors -- seven times the speed of PCIe Gen 6 -- which means the CPU and GPU share a single, seamless pool of memory without the bottlenecks that typically cripple desktop AI work.

Twenty petaflops -- 20 quadrillion operations per second -- would have ranked this machine among the world's top supercomputers less than a decade ago. The Summit system at Oak Ridge National Laboratory, which held the global No. 1 spot in 2018, delivered roughly ten times that performance but occupied a room the size of two basketball courts. Nvidia is packaging a meaningful fraction of that capability into something that plugs into a wall outlet.
The 748 GB of unified memory is arguably the more important number. Trillion-parameter models are enormous neural networks that must be loaded entirely into memory to run. Without sufficient memory, no amount of processing speed matters -- the model simply won't fit. The DGX Station clears that bar, and it does so with a coherent architecture that eliminates the latency penalties of shuttling data between CPU and GPU memory pools.

Always-on agents need always-on hardware

Nvidia designed the DGX Station explicitly for what it sees as the next phase of AI: autonomous agents that reason, plan, write code, and execute tasks continuously -- not just systems that respond to prompts. Every major announcement at GTC 2026 reinforced this "agentic AI" thesis, and the DGX Station is where those agents are meant to be built and run.

The key pairing is NemoClaw, a new open-source stack that Nvidia also announced Monday. NemoClaw bundles Nvidia's Nemotron open models with OpenShell, a secure runtime that enforces policy-based security, network, and privacy guardrails for autonomous agents. A single command installs the entire stack. Jensen Huang, Nvidia's founder and CEO, framed the combination in unmistakable terms, calling OpenClaw -- the broader agent platform NemoClaw supports -- "the operating system for personal AI" and comparing it directly to Mac and Windows.

The argument is straightforward: cloud instances spin up and down on demand, but always-on agents need persistent compute, persistent memory, and persistent state. A machine under your desk, running 24/7 with local data and local models inside a security sandbox, is architecturally better suited to that workload than a rented GPU in someone else's data center.

The DGX Station can operate as a personal supercomputer for a solo developer or as a shared compute node for teams, and it supports air-gapped configurations for classified or regulated environments where data can never leave the building.
From desk prototype to data center production in zero rewrites

One of the cleverest aspects of the DGX Station's design is what Nvidia calls architectural continuity. Applications built on the machine migrate seamlessly to the company's GB300 NVL72 data center systems -- 72-GPU racks designed for hyperscale AI factories -- without rearchitecting a single line of code. Nvidia is selling a vertically integrated pipeline: prototype at your desk, then scale to the cloud when you're ready.

This matters because the biggest hidden cost in AI development today isn't compute -- it's the engineering time lost to rewriting code for different hardware configurations. A model fine-tuned on a local GPU cluster often requires substantial rework to deploy on cloud infrastructure with different memory architectures, networking stacks, and software dependencies. The DGX Station eliminates that friction by running the same NVIDIA AI software stack that powers every tier of Nvidia's infrastructure, from the DGX Spark to the Vera Rubin NVL72.

Nvidia also expanded the DGX Spark, the Station's smaller sibling, with new clustering support. Up to four Spark units can now operate as a unified system with near-linear performance scaling -- a "desktop data center" that fits on a conference table without rack infrastructure or an IT ticket. For teams that need to fine-tune mid-size models or develop smaller-scale agents, clustered Sparks offer a credible departmental AI platform at a fraction of the Station's cost.

The early buyers reveal where the market is heading

The initial customer roster for DGX Station maps the industries where AI is transitioning fastest from experiment to daily operating tool. Snowflake is using the system to locally test its open-source Arctic training framework. EPRI, the Electric Power Research Institute, is advancing AI-powered weather forecasting to strengthen electrical grid reliability. Medivis is integrating vision language models into surgical workflows.
Microsoft Research and Cornell have deployed the systems for hands-on AI training at scale.

Systems are available to order now and will ship in the coming months from ASUS, Dell Technologies, GIGABYTE, MSI, and Supermicro, with HP joining later in the year. Nvidia hasn't disclosed pricing, but the GB300 components and the company's historical DGX pricing suggest a six-figure investment -- expensive by workstation standards, but remarkably cheap compared to the cloud GPU costs of running trillion-parameter inference at scale.

The list of supported models underscores how open the AI ecosystem has become: developers can run and fine-tune OpenAI's gpt-oss-120b, Google Gemma 3, Qwen3, Mistral Large 3, DeepSeek V3.2, and Nvidia's own Nemotron models, among others. The DGX Station is model-agnostic by design -- a hardware Switzerland in an industry where model allegiances shift quarterly.

Nvidia's real strategy: own every layer of the AI stack, from orbit to office

The DGX Station didn't arrive in a vacuum. It was one piece of a sweeping set of GTC 2026 announcements that collectively map Nvidia's ambition to supply AI compute at literally every physical scale. At the top, Nvidia unveiled the Vera Rubin platform -- seven new chips in full production -- anchored by the Vera Rubin NVL72 rack, which integrates 72 next-generation Rubin GPUs and claims up to 10x higher inference throughput per watt compared to the current Blackwell generation. The Vera CPU, with 88 custom Olympus cores, targets the orchestration layer that agentic workloads increasingly demand. At the far frontier, Nvidia announced the Vera Rubin Space Module for orbital data centers, delivering 25x more AI compute for space-based inference than the H100.
Between orbit and office, Nvidia revealed partnerships spanning Adobe for creative AI, automakers like BYD and Nissan for Level 4 autonomous vehicles, a coalition with Mistral AI and seven other labs to build open frontier models, and Dynamo 1.0, an open-source inference operating system already adopted by AWS, Azure, Google Cloud, and a roster of AI-native companies including Cursor and Perplexity.

The pattern is unmistakable: Nvidia wants to be the computing platform -- hardware, software, and models -- for every AI workload, everywhere. The DGX Station is the piece that fills the gap between the cloud and the individual.

The cloud isn't dead, but its monopoly on serious AI work is ending

For the past several years, the default assumption in AI has been that serious work requires cloud GPU instances -- renting Nvidia hardware from AWS, Azure, or Google Cloud. That model works, but it carries real costs: data egress fees, latency, security exposure from sending proprietary data to third-party infrastructure, and the fundamental loss of control inherent in renting someone else's computer.

The DGX Station doesn't kill the cloud -- Nvidia's data center business dwarfs its desktop revenue and is accelerating. But it creates a credible local alternative for an important and growing category of workloads. Training a frontier model from scratch still demands thousands of GPUs in a warehouse. Fine-tuning a trillion-parameter open model on proprietary data? Running inference for an internal agent that processes sensitive documents? Prototyping before committing to cloud spend? A machine under your desk starts to look like the rational choice.

This is the strategic elegance of the product: it expands Nvidia's addressable market into personal AI infrastructure while reinforcing the cloud business, because everything built locally is designed to scale up to Nvidia's data center platforms. It's not cloud versus desk. It's cloud and desk, and Nvidia supplies both.
A supercomputer on every desk -- and an agent that never sleeps on top of it

The PC revolution's defining slogan was "a computer on every desk and in every home." Four decades later, Nvidia is updating the premise with an uncomfortable escalation. The DGX Station puts genuine supercomputing power -- the kind that ran national laboratories -- beside a keyboard, and NemoClaw puts an autonomous AI agent on top of it that runs around the clock, writing code, calling tools, and completing tasks while its owner sleeps.

Whether that future is exhilarating or unsettling depends on your vantage point. But one thing is no longer debatable: the infrastructure required to build, run, and own frontier AI just moved from the server room to the desk drawer. And the company that sells nearly every serious AI chip on the planet just made sure it sells the desk drawer, too.

[5]

NVIDIA DGX Station GB300 Superchip Specifications and 748GB Unified Memory

NVIDIA has officially opened orders for its DGX Station, a desktop-class AI system built around the new GB300 Superchip. Announced during the company's GPU Technology Conference keynote, the system is positioned as a local AI development platform that brings data center-class performance into a workstation form factor. Compared to the previously released DGX Spark, the DGX Station represents a significant step forward, scaling performance from 1 PFLOP to up to 20 PFLOPS using FP4 precision with sparsity. At the heart of the DGX Station is NVIDIA's GB300 Superchip, which integrates a 72-core Grace CPU with a Blackwell Ultra GPU featuring 20,480 CUDA cores. This combination reflects the company's Grace-Blackwell architecture, designed to tightly couple CPU and GPU resources into a single coherent compute platform. The system runs NVIDIA's DGX software stack, enabling compatibility with enterprise AI workflows and allowing developers to move projects between local systems and large-scale data center deployments with minimal friction. A major highlight is the memory subsystem. The DGX Station features a total of 748GB of unified memory, consisting of 252GB of HBM3e on the GPU and 496GB of LPDDR5X attached to the CPU. Through NVLink-C2C interconnect, both processors can access the full memory pool, significantly reducing latency and eliminating traditional bottlenecks between system and graphics memory. This unified approach is particularly beneficial for large AI models, with NVIDIA indicating support for workloads approaching the trillion-parameter range depending on optimization. The platform also offers a range of expansion and connectivity options. The baseboard includes PCIe 5.0 slots for add-in cards, multiple M.2 interfaces for high-speed storage, and advanced networking capabilities such as dual 400 Gbit/s ConnectX-8 adapters alongside 10GbE connectivity. Unlike some previous DGX products, NVIDIA will not sell a Founders Edition system. 
Instead, it supplies the core motherboard with the integrated CPU and GPU, leaving partners such as ASUS, Dell, Gigabyte, MSI, and Supermicro to deliver complete workstation solutions. While pricing has not yet been disclosed, the DGX Station is clearly aimed at professionals working with large-scale AI models who require substantial local compute resources. Systems from OEM partners are expected to become available in the coming months.
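Whether a model "fits" comes down to simple arithmetic on the memory figures quoted in this excerpt. The following is a minimal Python sketch, assuming decimal gigabytes and counting weights only (no KV cache, activations, or quantization scale factors, all of which add to the real footprint). The bytes-per-parameter table is a standard assumption, not something the article states.

```python
# Unified memory as quoted above: 252 GB HBM3e + 496 GB LPDDR5X.
UNIFIED_MEMORY_GB = 252 + 496

# Assumed storage cost per parameter for common precisions (weights only).
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weights_gb(num_params: float, bytes_per_param: float) -> float:
    """Size of the raw weights in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def fits(num_params: float, precision: str,
         capacity_gb: float = UNIFIED_MEMORY_GB) -> bool:
    """True if the raw weights alone fit within the given capacity."""
    return weights_gb(num_params, BYTES_PER_PARAM[precision]) <= capacity_gb


if __name__ == "__main__":
    one_trillion = 1e12
    for precision, nbytes in BYTES_PER_PARAM.items():
        size = weights_gb(one_trillion, nbytes)
        verdict = "fits" if fits(one_trillion, precision) else "does not fit"
        print(f"{precision}: {size:.0f} GB of weights, {verdict} in {UNIFIED_MEMORY_GB} GB")
```

Under these assumptions only the 4-bit case (about 500 GB of weights) fits a one-trillion-parameter model in 748 GB, which is consistent with the excerpt's caveat that trillion-parameter support depends on optimization.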

[6]

NVIDIA DGX Station Upgraded With GB300 Blackwell Ultra Desktop Superchip: 748 GB Memory, 20 PFLOPs AI Compute & AI Ready

NVIDIA has introduced its updated DGX Station AI Supercomputer powered by the GB300 Blackwell Ultra Desktop Superchip.

NVIDIA & Its Partners Introduce GB300 "Blackwell Ultra" Powered DGX Station: A Powerful AI Workstation With Meaty Specs

Last year, NVIDIA introduced its DGX Station powered by the GB200 "Blackwell" Superchip, and also promised that a Blackwell Ultra variant was in the works. Now, at GTC 2026, NVIDIA is finally launching the GB300 "Blackwell Ultra" variant, featuring powerful specifications, and available in various options from its partners.

The NVIDIA DGX Station is essentially an AI workstation that takes the revolutionary Blackwell Ultra Superchip and integrates it on a desktop-styled motherboard with various workstation-centric ports & components. This is more similar to a desktop PC that most of us are used to, though with some serious hardware integration.

Starting with the specifications, the NVIDIA DGX Station for 2026 is equipped with a single NVIDIA GB300 "Blackwell Ultra" GPU. The NVIDIA Blackwell Ultra GB300 GPU packs a total of 160 SMs, each with a total of 128 CUDA cores, four 5th Gen Tensor cores with FP8, FP6, NVFP4 precision compute, 256 KB of Tensor memory or TMEM, and SFUs. This adds up to a total of 20,480 CUDA cores and 640 Tensor cores, plus 40 MB of TMEM.

Blackwell Ultra also brings a huge upgrade to memory, offering 252 GB of HBM3e capacity versus a max of 192 GB on the previous Blackwell GB200 solutions. The memory offers 7.1 TB/s bandwidth. The result is that NVIDIA's Blackwell Ultra GB300 platform is able to achieve a 50% increase in Dense Low Precision Compute output using the new NVFP4 standard. The new format delivers near-FP8 accuracy, & the differences are often less than 1%. This also reduces the memory footprint by 1.8x versus FP8 and 3.5x versus FP16.

As for the CPU, the DGX Station houses a single Grace chip with 72 cores based on the Neoverse V2 architecture.
The system comes with 496 GB of LPDDR5X in addition to the HBM memory. The system memory offers 396 GB/s of bandwidth, and combined, you get 748 GB of memory. Both the CPU and GPU are connected using a 900 GB/s NVLink-C2C interconnect, and the system offers a high-speed CX8 SuperNIC that delivers 800 Gb/s of networking speeds. The system comes with a 1600W TDP, features support for NVIDIA's latest RTX PRO Blackwell graphics cards, and includes four M.2 Gen5 ports, four Ethernet ports (2 x 400 Gb/s, 1 x 10 GbE, 1 x 1 GbE), a full PCIe Gen5 x16 slot, and two PCIe Gen5 x16 (x8 electrical) slots.

The latest NVIDIA DGX Station is not only offered by NVIDIA but also by its partners, who have designed their own respective solutions. None of the partners has the pricing listed, but we can expect the DGX Station GB300 to cost several thousand dollars based on the specs alone. These systems will be shipping this month.
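The per-SM figures in this excerpt multiply out to the quoted totals, and the NVFP4 footprint ratios can be roughly reproduced too. The Python sketch below checks both. The NVFP4 layout it uses (4-bit values plus one 8-bit scale factor shared by every block of 16 values) is an assumption not stated in the excerpt, and it ignores any per-tensor scale.

```python
# Per-SM figures as quoted in the excerpt above.
SM_COUNT = 160
CUDA_CORES_PER_SM = 128
TENSOR_CORES_PER_SM = 4
TMEM_KB_PER_SM = 256

cuda_cores = SM_COUNT * CUDA_CORES_PER_SM
tensor_cores = SM_COUNT * TENSOR_CORES_PER_SM
tmem_mb = SM_COUNT * TMEM_KB_PER_SM / 1024

# Assumed NVFP4 layout (not from the excerpt): 4-bit values with one
# 8-bit scale factor shared across each block of 16 values.
NVFP4_VALUE_BITS = 4
NVFP4_SCALE_BITS = 8
NVFP4_BLOCK_SIZE = 16
NVFP4_BITS_PER_VALUE = NVFP4_VALUE_BITS + NVFP4_SCALE_BITS / NVFP4_BLOCK_SIZE


def footprint_ratio(other_format_bits: int) -> float:
    """How many times smaller NVFP4 storage is than a format of the given width."""
    return other_format_bits / NVFP4_BITS_PER_VALUE


if __name__ == "__main__":
    print(f"CUDA cores:   {cuda_cores}")
    print(f"Tensor cores: {tensor_cores}")
    print(f"TMEM:         {tmem_mb:.0f} MB")
    print(f"vs FP8:  {footprint_ratio(8):.2f}x smaller")
    print(f"vs FP16: {footprint_ratio(16):.2f}x smaller")
```

With that assumed layout each value costs 4.5 bits, giving about 1.78x versus FP8 and about 3.56x versus FP16, close to the 1.8x and 3.5x figures quoted.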

Nvidia officially opened orders for the DGX Station, a desktop supercomputer powered by the GB300 Grace Blackwell Ultra Superchip. The system delivers 20 petaflops of compute and 748GB of unified memory, enabling AI professionals to run trillion-parameter models locally without cloud infrastructure. Available from Asus, Dell, Gigabyte, MSI, Supermicro, and HP, the workstation targets researchers and developers building autonomous AI agents.

Nvidia Opens Orders for Desktop Supercomputer Targeting AI Professionals

Nvidia has officially opened orders for its DGX Station, a desktop AI system that brings data-center-class AI performance directly to workstations [1]. Announced at the company's annual GTC conference, the system is now available through six manufacturers: Asus, Dell, HP, Gigabyte, MSI, and Supermicro [2]. Systems will begin shipping within weeks to months, though pricing remains undisclosed by most vendors. MSI's XpertStation WS300 variant carries a price tag of $84,999.99, signaling the premium positioning of these desktop supercomputer units [3].

The DGX Station represents a significant evolution from Nvidia's $4,000 DGX Spark mini PC, which features a smaller GB10 chip and 128GB of RAM capable of running AI models with up to 200 billion parameters [2]. This new workstation targets software developers, researchers, data scientists, and anyone requiring substantial local AI development capabilities beyond what cloud infrastructure or smaller systems can provide [1].

GB300 Superchip Delivers 20 Petaflops of Compute Power

At the core of the DGX Station sits Nvidia's GB300 Grace Blackwell Ultra Desktop Superchip, which integrates a 72-core Grace CPU with a Blackwell Ultra GPU featuring 20,480 CUDA cores [5]. The two processors connect through the NVLink C2C interface. The sources quote different bandwidth figures for it: 900 GB/s in one report [1], and 1.8 terabytes per second of coherent bandwidth, described as seven times the speed of PCIe Gen 6, in another [4]. This architecture enables the system to deliver up to 20 petaflops of performance using FP4 precision with sparsity [5].

To put this in perspective, 20 petaflops (20 quadrillion operations per second) would have ranked among the world's top supercomputers less than a decade ago. The Summit system at Oak Ridge National Laboratory, which held the global number one spot in 2018, delivered roughly ten times that performance but occupied a room the size of two basketball courts [4]. Nvidia is now packaging a meaningful fraction of that capability into a system that plugs into a standard wall outlet and fits beside a monitor.

Unified Memory Architecture Enables Trillion-Parameter Models

The DGX Station features 748GB of unified memory, consisting of 252GB of HBM3e memory on the GPU rated at 7.1 TB/s bandwidth and 496GB of LPDDR5X memory on the CPU rated at 396GB/s bandwidth [1]. Both memory pools are unified through the NVLink interconnect, allowing the CPU and GPU to share each other's memory seamlessly [5].

This unified memory configuration is crucial for running trillion-parameter models (neural networks roughly the scale of GPT-4), which must be loaded entirely into memory to function [4]. Without sufficient memory, no amount of processing speed matters because the model simply won't fit. The coherent architecture eliminates the latency penalties typically associated with shuttling data between separate CPU and GPU memory pools, significantly reducing bottlenecks that cripple desktop AI work [4].

Designed for Always-On Autonomous AI Agents

Nvidia designed the DGX Station explicitly for what it identifies as the next phase of AI: autonomous AI agent systems that reason, plan, write code, and execute tasks continuously rather than simply responding to prompts [4]. The system pairs with NemoClaw, a new open-source stack that bundles Nvidia's Nemotron models with OpenShell, a secure runtime that enforces policy-based security, network, and privacy guardrails for autonomous agents [2].

Nvidia CEO Jensen Huang called OpenClaw, the broader agent platform NemoClaw supports, "the operating system for personal AI," comparing it directly to Mac and Windows [4]. On OpenClaw's growing popularity, he also said: "This is as big of a deal as HTML, this is as big of a deal as Linux" [2]. The argument centers on architectural fit: cloud instances spin up and down on demand, but always-on agents require persistent compute, persistent memory, and persistent state. A workstation running 24/7 with local data and models inside a security sandbox is better suited to that workload than rented GPU capacity in someone else's data center [4].

Extensive Connectivity and Expansion Options

The DGX Station includes three PCIe Gen 5 x16 slots (one wired with 16 lanes and the other two with eight lanes each), officially supporting discrete GPU options for additional tasks such as simulation and ray-traced visualization [1]. Supported GPUs include the RTX Pro 6000 Workstation Edition, RTX Pro 6000 Blackwell Max-Q Workstation Edition, RTX Pro 4000 Blackwell SFF Edition, and RTX Pro 2000 Blackwell graphics cards [1].

For networking, the system uses Nvidia's ConnectX-8 SuperNIC, supporting speeds up to 800 Gb/s through two QSFP112 ports, with dual 400GbE LAN ports enabling high-speed distributed multi-node AI workloads [3]. The design allows connecting up to two DGX Station units together to scale model capacity and performance [1]. Storage options include four M.2 slots for high-speed NVMe drives, accelerating dataset ingestion and AI pipelines [3].

Power delivery comes through a single 24-pin ATX power connector, a single 8-pin EPS connector, and three 12V-2x6 power connectors for the GPU, feeding the system's 1,600W official power rating [1].
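The networking and expansion figures above reduce to straightforward arithmetic. This Python sketch assumes ideal line rate with no protocol overhead, so real transfers would be slower. It sums the two 400 Gb/s ports, estimates how long it would take to move a payload the size of the full 748GB memory pool between two linked stations, and totals the electrical PCIe lanes.

```python
# Figures as quoted in the section above.
PORT_SPEED_GBIT_PER_S = 400
PORT_COUNT = 2
PCIE_SLOT_LANES = [16, 8, 8]  # one x16 slot and two slots wired x8
UNIFIED_MEMORY_GB = 748


def aggregate_gbit_per_s(port_count: int = PORT_COUNT,
                         per_port: int = PORT_SPEED_GBIT_PER_S) -> int:
    """Combined bandwidth across all ports, in gigabits per second."""
    return port_count * per_port


def transfer_seconds(payload_gb: float, link_gbit_per_s: float) -> float:
    """Ideal time to move a payload in gigabytes over a link in gigabits/s."""
    return payload_gb * 8 / link_gbit_per_s


PCIE_ELECTRICAL_LANES = sum(PCIE_SLOT_LANES)

if __name__ == "__main__":
    link = aggregate_gbit_per_s()
    print(f"Aggregate network bandwidth: {link} Gb/s")
    print(f"Ideal time to move {UNIFIED_MEMORY_GB} GB: "
          f"{transfer_seconds(UNIFIED_MEMORY_GB, link):.2f} s")
    print(f"Electrical PCIe Gen 5 lanes: {PCIE_ELECTRICAL_LANES}")
```

At the ideal rate, a full 748GB payload would cross the 800 Gb/s link in about 7.5 seconds, which gives a sense of scale for pairing two units.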

Seamless Path from Desk Prototype to Data Center Production

One strategic advantage of the DGX Station is architectural continuity with Nvidia's GB300 NVL72 data center systems, which are 72-GPU racks designed for hyperscale AI factories [4]. Applications built on the workstation migrate seamlessly to these data center configurations without rearchitecting code, creating a vertically integrated pipeline where developers can prototype at their desk and scale to cloud infrastructure when ready [4].

This matters because the biggest hidden cost in AI development today isn't compute; it's the engineering time lost rewriting code for different hardware configurations. Models fine-tuned on local GPU clusters often require substantial rework to deploy on cloud infrastructure with different memory architectures, networking stacks, and software dependencies [4]. The DGX Station runs the same Nvidia AI software stack that powers every tier of Nvidia's infrastructure, eliminating that friction [5].

The system supports the full AI lifecycle, including large-scale model training, data-intensive analytics, and real-time inference [3]. It can function as a personal supercomputer for solo developers or as a shared compute node for teams, with support for air-gapped configurations in classified or regulated environments where data cannot leave the building [4]. Organizations maintain control over their data and intellectual property while conducting collaborative fine-tuning and on-demand deployment for generative AI applications [3].

Summarized by Navi
