AI Fakes About Iran War Create Unprecedented Crisis of Reality on Social Media Platforms

9 Sources

[1]

Fake AI Content About the Iran War Is Rampant on X

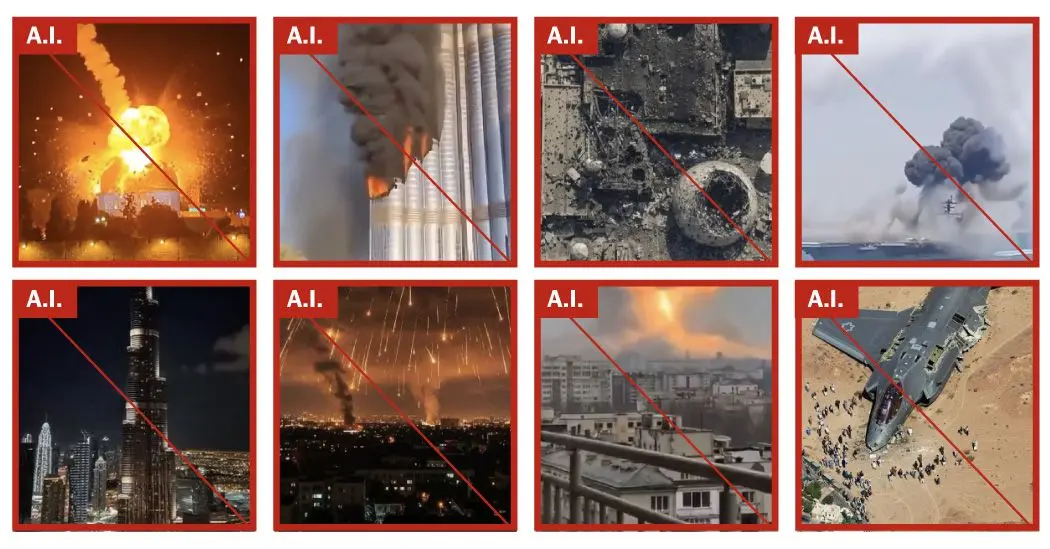

X's Grok is failing to accurately verify video footage from the Iran conflict and is sharing its own AI-generated images about the war. Ever since U.S.-Israeli strikes against Iran began on February 28th, X has been flooded with disinformation about the war from accounts sharing fake and repurposed videos. Disinformation experts tell WIRED that things are worse than ever, with Grok now repeatedly giving false information, making the platform even more unhinged from reality. AI images have been shared by paid accounts bearing blue check marks and by Iranian officials portraying exaggerated scenes of damage, including an AI-generated video of high-rise buildings in Bahrain on fire. An image purporting to show a U.S. B-2 bomber shot down by Iran was viewed a million times before being deleted, while an image purporting to show Delta Force members being captured was seen 5 million times. Disinformation expert Tal Hagin tells WIRED that a distinctive feature of the war has been a drastic increase in the amount of AI-generated content he's debunking. Hagin says, "I see the proliferation of AI-based fake news pushing us over the edge of a fact-based world unless we enact change now." While X has announced it would temporarily demonetize blue check mark accounts if they post AI-generated videos of armed conflict without a label, non-AI disinformation is also flourishing. MAGA accounts have reused footage from elsewhere to push President Donald Trump's narrative that the Iranian government fired a missile that struck an elementary school in Minab, killing around 170 people, including 110 children. X's reward program incentivizes sensational content. Today's AI tools make it easy to produce such content. The result, especially during breaking news situations like the conflict in Iran, is a complete breakdown of reality where it's getting almost impossible to know what's real and what's not.

[2]

The Fog of AI

The spread of fake imagery of the Iran war is helping to make the question "Is this real?" all but unanswerable. On February 27, an AI-generated image appeared on Instagram purporting to show heavy military equipment stationed inside Karimian Elementary School in Isfahan, Iran. The post, shared by accounts including the Free Union of Iranian Workers, an independent labor union operating inside Iran whose leaders have been jailed by the regime, read: "This is not a military zone! It's Karimian Elementary." The image carried a visible Google Gemini watermark, indicating that it had been created by the software. The school posted a rebuttal, noting that the equipment could not physically fit on the premises. Iranian-diaspora fact-checkers confirmed that the image was fabricated. The next day, Shajareh Tayyebeh, a girls' elementary school in the southern city of Minab, was hit in the first wave of strikes on Iran. Iranian authorities reported at least 175 people dead, many of them children. The exact death toll has not been independently confirmed, but a New York Times investigation verified that the school had been hit by a precision strike at the same time as attacks on an adjacent naval base, and a preliminary investigation by the American military concluded that U.S. forces were most likely responsible. The school sat on the grounds of the Iranian navy's Asef Brigade barracks, an active military base. The building had been converted from military use, and served children from military and civilian families. In short: The day before the strikes began, an AI image on social media planted the notion that the regime hides military equipment in schools. The next day, a real school -- once part of a military compound but walled off from it since 2016, according to Human Rights Watch -- was destroyed.
The fake was wrong about Karimian, but by the time the Minab strike happened, audiences were primed to believe that a school was a legitimate military target, not the site of a civilian catastrophe. Layer by layer, an accumulation of AI imagery circulated on social media that made it difficult to establish what happened to these children. This is the fog that AI has introduced to the war in Iran. This isn't a war where AI fakes fool everyone nor where detection tools catch everything. We live in a world where real photographs of real civilian deaths are called fake, and where fake images are used to illustrate real deaths. Where correct identification of one fake image is used to cast doubt on real images, where incorrect detection is authoritative, and where all of it happens faster than any institution, newsroom, fact-checker, photo wire service, or platform can process. The fog of AI does not need every piece of content to be fabricated. It needs the question "Is this real?" to become close to unanswerable. When video of the Minab devastation circulated, claims spread on X, Telegram, and Instagram that the footage was actually from Peshawar, Pakistan. Fact-checkers intervened, this time to defend the authenticity of real footage, having debunked the fake imagery about a different school the day before. Users on X, many of them diaspora accounts opposed to the regime, claimed that the footage depicted the May 2021 bombing of the Sayed ul-Shuhada school in Kabul. Another user asked Grok to verify the post and Grok agreed with the false claim, citing The New York Times, the Guardian, Al Jazeera, and Wikipedia as sources even though they contained images directly contradicting it. Then open-source intelligence analysts geolocated the footage to coordinates matching the school. Grok was not simply wrong; it was confidently wrong. Asked to verify a real video, the AI confirmed a false claim that it supported with fabricated citations, giving denialism machine authority.
Meanwhile, Iran undermined the documentation of the tragedy. The Iranian embassy in Austria denounced the Minab strike and accused Europe of complicity in the "death of our collective soul." The post included a photograph of a child's pink backpack covered in blood and dust. SynthID, Google's watermarking tool, confirmed that the image had been generated by Google's AI. The regime illustrated the deaths of real children with a fabricated image. The identification of that fake photo now furnishes an alibi for those who want to deny the real bombing. The Iranian regime has long dismissed evidence of its violence and crimes by calling the documentation fabricated, staged, and foreign produced. Now a similar accusatory reflex has migrated to opposition media and diaspora accounts. Yet children were killed, even if there is false propaganda about their deaths. That the regime has an interest in publicizing these deaths does not mean that the deaths did not happen. Mourners in Minab buried the schoolgirls and staff on March 3. Iran's foreign minister, Abbas Araghchi, posted a photograph on X of the burial site that was viewed 3 million times. Within hours, a diaspora account claimed that the image had been recycled from a Jakarta cemetery where COVID victims were buried in July 2021. The claim named the cemetery, the date, and the photographer, but none of that information was supported by reverse image search, metadata analysis, or other fact-checking. A verified account posted a "claim versus fact" graphic that said: "Iran releases AI altered photo of graves being dug for 160 girls." At the same time, an account calling for "transparent investigation to ensure accountability" illustrated the real tragedy with an AI-generated image of parents mourning over shrouded bodies, further contaminating the evidentiary record that the post was trying to defend.
The New York Times visual-investigations team geolocated the burial site to Minab's Hermud Cemetery. Satellite imagery showed that the graves were dug on Monday in a previously untouched section of ground, consistent with a Saturday bombing and a Tuesday funeral. A New York Times journalist noted on X that the image was not AI generated. To learn that the regime staged an elaborate, televised funeral for children killed by foreign strikes produces in many Iranians, inside and outside the country, a rage that I understand. The protests that started on December 28, 2025, and reached their intensity on January 8 and 9, were answered with what is thought to have been massacres of thousands of protesters, including children. Parents went through great pains to retrieve their children's bodies. When bodies were returned, families were sometimes asked to pay exorbitant fees, to agree to conditions denying burials or dignified funerals, or forced to concede that the dead were members of the security forces and had been killed by "terrorists." But the resentment at the regime's selective grief does not make the graves empty. It does not make the children un-real. And it does not justify dismissing evidence of their deaths with a two-letter accusation: "AI." Both formulations, the denials of the bombing and the uses of the bombing for propaganda, begin and end in the same place: Evidence has ceased to function as it should. One hundred seventy-five people were reportedly buried in Minab, most of them children. Nearly every actor in this conflict, from every direction, has made it difficult to establish that these children lived, that they were killed, and that someone is responsible. In Minab, the fact of these children's deaths has been documented, verified, and geolocated. None of it has been enough to prevent the doubt from spreading faster than the evidence.

[3]

Cascade of A.I. Fakes About War With Iran Causes Chaos Online

A torrent of fake videos and images generated by artificial intelligence have overrun social networks during the first weeks of the war in Iran. The videos -- showing huge explosions that never happened, decimated city streets that were never attacked or troops protesting the war who do not exist -- have added a chaotic and confusing layer to the conflict online. The New York Times identified over 110 unique A.I.-generated images and videos from the past two weeks about the war in the Middle East. The fakes covered every aspect of the fighting: They falsely depicted screaming Israelis cowering as explosions ripped through Tel Aviv, Iranians mourning their dead and American military vessels bombarded with missiles and torpedoes. Collectively, they were seen millions of times online through networks like X, TikTok and Facebook, and countless more times within private messaging apps popular in the region and around the world. The Times identified the A.I. content by checking for both obvious signs -- such as depictions of buildings that do not exist, garbled text and behaviors or movements that defy expectations -- and for invisible watermarks embedded within the files. The posts were also checked with multiple A.I. detector tools and compared with reports from news organizations. A sophisticated new wave of A.I. tools makes the fakes possible, enabling nearly anyone to create lifelike simulations of war that can deceive the naked eye for little to no cost. Similar content has spread in other conflicts, including the war between Ukraine and Russia. But this war has multiple fronts, and that has led to a proliferation of fake content since the United States and Israel first attacked Iran, according to experts. "Even compared to when the Ukraine war broke out, things now are very different," said Marc Owen Jones, an associate professor of media analytics at Northwestern University in Qatar. 
"We're probably seeing far more A.I.-related content now than we ever have before." Overall, the A.I. fakes included ... The content has become a potent informational weapon for Tehran as it seeks to shake the public's tolerance for war by depicting scenes of devastation and destruction across the region. The majority of A.I. videos about the war push pro-Iranian views, often to falsely demonstrate its military superiority and sophistication, according to a study of online activity by Cyabra, a social media intelligence company. "The use of A.I. images of places in the Gulf -- being burnt or damaged -- becomes more important in Iran's playbook," Mr. Jones said, "because it allows them to give a sense that this war is more destructive and maybe more costly for America's allies than it might actually be." In one of the most circulated fake videos found online, a shaky handheld scene seemingly shot from an apartment balcony in Tel Aviv shows the skyline pounded with missiles as an Israeli flag sits in the foreground. The video was viewed millions of times across platforms and was picked up by social media influencers and fringe news websites, according to a review of social media activity by The Times. The Israeli flag in the foreground was one telltale sign that the video was A.I.-generated, experts said. To generate such videos, creators who use A.I. tools will typically write simple text instructions describing, for example, a shaky handheld video of a missile strike on Israel. The A.I. tools will then often include an Israeli flag or the Star of David to fulfill such a request. Several other A.I. videos included the flag. There is ample genuine footage of the war being shared online, too, with cellphones and social platforms giving a real-time view of the conflict. Many of those images and videos are more subdued than the scenes made by A.I. tools. 
Real footage of missile strikes was often shot from far away, typically at night, with missiles visible as little more than bright lights in the distance. Explosions in real videos are more often shown as plumes of smoke, not as fireballs, with bystanders rushing to film the scene only after the munitions meet their target. Some A.I. videos and images, by contrast, have falsely depicted war like an over-the-top Hollywood action movie, with enormous explosions resulting in mushroom clouds, sonic booms that ripple across unnamed cities and supposed hypersonic missiles that leave glowing streaks in the sky. Real footage is sometimes enhanced by A.I. tools to make explosions appear larger and more devastating, further blurring the line between what is real and fake. The A.I. footage has essentially created an alternate reality more suited to social media, experts said, where the exaggerated footage is more likely to find an audience. In one instance, the A.I. fakes played an outsize role in the debate online and between governments over the fate of the U.S.S. Abraham Lincoln, an aircraft carrier deployed to the region. Iran's Islamic Revolutionary Guards Navy initially suggested on March 1 that they had successfully attacked the ship, possibly sinking it. That led to a deluge of A.I.-generated fakes depicting the ship or those like it on fire. Iranian users celebrated the footage online as evidence that their country's counteroffensive was rattling the U.S.-Israeli alliance. The United States later said that the attack was unsuccessful and that the ship was unharmed. Dozens of other A.I. images and videos made no effort to hide that they were fake, acting instead as a new form of digital propaganda that brought to life the political arguments typically made by governments or their propaganda arms. Those included flattering depictions of world leaders as powerful men, or dehumanizing depictions of opposition leaders. 
One collection of clearly fictional videos offered a view of the Shajarah Tayyebeh elementary school, which was destroyed by the United States in an apparent errant missile strike on Feb. 28, according to a preliminary inquiry. At least 175 people were killed, most of them children, according to Iranian officials. The A.I.-generated videos unfolded like short films, showing school girls playing outside before an American fighter jet launches missiles. Social media companies have done little to combat the scourge of A.I. videos that overwhelmed their platforms last year after OpenAI released Sora, a video-generating app that allowed anyone to create realistic fakes through a simple app. (The New York Times sued OpenAI and Microsoft in 2023, accusing them of copyright infringement of news content related to A.I. systems. The two companies have denied those claims.) Though videos generated by many A.I. tools can include both visible and invisible watermarks labeling them as fake, those are easy to remove or obscure. Only a few of the videos identified by The Times contained such watermarks. Elon Musk's X, which has taken a broadly permissive approach to allowing misinformation on its platform, announced last week that it would suspend accounts from receiving revenue from the platform for 90 days if they posted A.I.-generated content of "armed conflict" without labeling it as such, in a bid to stop users from profiting off the falsehoods. But many of the Iranian-linked accounts identified by Cyabra appeared far more focused on spreading its messages than making money. "This is a natural front for Iran to try and exploit and it feels like this is one of the reasons it is so voluminous," said Valerie Wirtschafter, a fellow at the Brookings Institution studying foreign policy and A.I. "It's actually a tool of war."

[4]

A photo of Iran's bombed schoolgirl graveyard went around the world. Was it real, or AI?

Numerous faked images and a string of startlingly inaccurate responses from Gemini and Grok are part of a tidal wave of AI slop engulfing coverage of the Iran war

The graves, freshly dug, lie in neat rows of 20 across. More than 60 have already been carved out of the earth, with a few clusters of people standing gathered around them. Dozens more are marked out on the ground in front: small chalk rectangles, with diggers poised to complete their task. The cemetery of Minab, photographed as it prepares to bury more than 100 of the town's young girls, is one of the defining images of the US-Israeli war on Iran, bluntly capturing the devastating civilian toll. But is it real? Ask Gemini, the AI service powered by Google, and the answer you receive is no - in fact, Gemini claims the photograph is from two years earlier and more than 2,000km (1,240 miles) away. Rather than graves for small girls killed by a missile, the image "depicts a mass burial site in Kahramanmaraş, Turkey" after the 7.8 magnitude earthquake that struck in 2023. "This specific aerial perspective became one of the most widely shared images of the disaster," Gemini says, "illustrating the sheer scale of the loss." Seeing the same burial image on social media, others turned to X's AI assistant Grok to check its veracity. Like Gemini, Grok will breezily assure you the photo is not from Iran at all - although it lands on a different date, disaster and location. The image is "from Rorotan Cemetery in Jakarta, Indonesia - a July 2021 stock photo of Covid mass burials. Not Minab," it says. In both cases, the AI answers sound sure: they don't equivocate, and even provide "sources" for the original image, should you choose to check them. Follow the thread to examine those, however, and you'll begin to hit dead ends: either the image doesn't appear at all, or the link provided is to a news report that doesn't exist. For all their impression of clarity and precision, the AIs are simply wrong.
The cemetery image, it turns out, is authentic. Researchers have cross referenced the photo of the site with satellite images that confirm its location, and it can be cross-referenced again with dozens more images taken of the same site from slightly different angles, and again with video footage - none of which experts say show signs of tampering or digital manipulation. The "factchecks" by Gemini and Grok are just one example of a tidal wave of AI-generated slop - hallucinated facts, nonsense analysis and faked images - that are engulfing coverage of the Iran war. Experts say it is wasting investigative time and risks atrocities being denied - as well as heralding alarming weaknesses as people increasingly rely on AI summaries for news and information. From the opening days of the war, factcheckers have been kept busy with a constant flow of faked imagery online. A photo of what the Tehran Times claimed was satellite imagery of a US radar destroyed in Qatar was exposed as an AI fake made from old Google Earth pictures - its giveaways included the cars, which were all in identical positions to the image from two years earlier. Widely circulated images of Khamenei's body being pulled from rubble had "tells" including duplicate limbs among the rescuers. "One fake that stood out to me claimed to show a senior Iranian commander walking around Tehran disguising himself as a woman to avoid potential assassination," says Shayan Sardarizadeh, a senior journalist at the BBC Verify team, which uses forensic techniques to confirm information and conduct visual investigations. "The street, the building in the background, and the surroundings all seemed like a realistic scene in Tehran." Sardarizadeh says AI now makes up a large portion of all of the misinformation the team debunks - and the volume is increasing. In the first few weeks of the Gaza or Ukraine wars, for example, most fake posts the team saw were old or unrelated videos, or repurposed video game footage. 
Now, "nearly half, if not more, of all the viral falsehoods that we now track and debunk are generative AI". That has partly been driven by the ease with which anyone can now generate a realistic video or photo. But the other enormous shift is in people using AI to summarise the news or answer questions, rather than going directly to the original source. Google AI summaries and Grok were only rolled out to the wider international public in mid 2024, and have rapidly become widespread: 65% of people report regularly seeing AI summaries of news or other information, and the portion of people who say they are using generative AI to get information doubled in the past year. Often, however, AI summaries are simply wrong. An international study in 2025 found about half of all AI-generated summaries had at least one significant sourcing or accuracy issue - with some tools, such as Google's popular Gemini interface, that rose to 76%. In the case of the Iran war, factcheckers say they are seeing a deluge of this kind of misleading material. As well as the Minab graveyard images, examples include Grok inaccurately suggesting to X users that video footage of fires in Tehran was actually from LA in 2017, and users citing "AI analysis" to misidentify a missile filmed falling next to the Minab school (numerous munitions experts say it is a US Tomahawk, a finding reinforced by fragments reportedly found at the scene and internal US briefings on the bombing). "Factcheckers now regularly have to address both a false post and also a misleading claim made by a chatbot in relation to that post," Sardarizadeh says. Part of the problem is how LLM AI models (such as Grok, ChatGPT and Gemini) work. At a very basic level, they are probabilistic language models, constructing sentences piece by piece based on which next word has the highest likelihood of being appropriate. 
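The next-word idea described above can be sketched with a toy model. This is a minimal illustration only, not how Grok, ChatGPT or Gemini are actually built: real systems are large neural networks working over word fragments, while this sketch simply counts which word follows which in a tiny corpus invented for the example. The function names (`train_bigrams`, `next_word_probabilities`, `generate`) are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}


def generate(counts, start, length):
    """Build a sentence piece by piece, always picking the likeliest next word."""
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:
            break
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


# Invented toy corpus (an assumption for illustration): the wrong location
# appears twice, the right one once.
corpus = (
    "the photo is from jakarta . "
    "the photo is from jakarta . "
    "the photo is from minab ."
)
model = train_bigrams(corpus)
probs = next_word_probabilities(model, "from")
sentence = generate(model, "the", 4)
print(probs)
print(sentence)
```

In this sketch the model completes the sentence with whichever location it has seen most often after "from", and it does so fluently and without hesitation. Nothing in the procedure looks at the photograph itself.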
While that process produces convincing, authoritative-sounding sentences, it doesn't mean the AI has actually analysed the material in front of it. "AI is perceived as an omniscient entity with access to everything, but without emotions," says Tal Hagin, an open-source intelligence analyst and media literacy educator - so people tend to trust it. "What you are using is actually a very advanced probability machine, not a truth box." The problem is compounded by the authoritative way AI tends to present its findings. It will generate detailed "reports", including names and dates, references and sources - the kind of material that suggests deep research and understanding, but may in fact be hallucinated or nonexistent. When the Guardian queried Gemini's answer on the Minab photograph, saying "I don't think that's correct, can you search again?" it revised its finding - but to another incorrect location and year. "I apologise for the oversight. Upon re-examining the image ... this image was taken in Gaza in November 2023," it says. Told that that answer was also incorrect, and the photo was from Iran, the bot revised again - to Tehran, during the Covid pandemic. Told that the photograph was taken in Iran in 2025, it responded that it was from the aftermath of an earthquake in southern Iran. X and Google did not respond to a request for comment. Both platforms' AI services note in their small print that they may produce inaccurate results. For those investigating human rights abuses, the trend poses new challenges. Chris Osieck, an independent open-source investigator who has conducted investigations into a number of civilian casualty bombings in Iran, said researchers' time was being wasted debunking AI material. Debunking AI videos, for example, often involves carefully inspecting them frame by frame for visual discrepancies. "That time should be devoted to what matters most: reporting on the impact this brutal war has on the people caught in the crossfire." 
And in cases such as Minab, where the material is demonstrably real? Researchers fear the wash of AI slop is sowing doubt in people's minds that the atrocity they are seeing evidence of ever happened at all. "As the technology continues to get better, it could muddy the waters so much that videos and images of real atrocities get dismissed as fake or AI," says Sardarizadeh. "I've already seen examples of this in relation to the conflicts in Gaza and Ukraine," he says. For those who have lost loved ones, accountability risks being overshadowed by a mass of misinformation, suspicion and doubt. "At the end of the day, one should also consider what this looks like from the perspective of the families of those who were killed," Osieck says. "Imagine losing a child and then seeing AI being used online to claim that the event did not happen. That is not just an obstacle for investigators. It is also deeply disrespectful to the loved ones who are grieving."

[5]

Why Iran Is Winning the Slop War

There are enormous gaps in the information available about the war with Iran. In the country at the center of the conflict, tight media and internet controls, pervasive fear, and hobbled infrastructure have stemmed its flow. In nearby Gulf states, wartime rules and arrests have suppressed coverage and quieted an early surge in social-media posts from citizens and expat influencers; similarly, Israeli authorities have expanded their press crackdown, citing the conflict to justify heightened restrictions and detaining journalists. The American press's access to Iran is severely limited, as is its access to the United States military, which has no "boots on the ground" (at least for now). Part of this gap has been filled by reporters working around these limits, as well as by regular citizens sharing, often anonymously and at great risk to themselves, video and testimony from the ground. But the entire world wants to know what's happening in the Middle East, and the demand for new information is intense. Governments involved in the conflict, in their own ways, have seized on the opportunity: America, with staggeringly juvenile social-media hype videos intercutting movie and video-game clips with war footage, intended to mobilize its most MAGA citizens with "banger memes, dude," as one White House official put it, or at least to troll everyone else; Israel, with prolific and aggressive official updates from military social-media channels it's been building up longer than anyone else; and Iran, with defiant videos projecting confidence at audiences abroad while hard-line, tightly managed domestic media handles messaging within its borders. But the rest of this information gap has been filled, and then some, by AI. 
It's been called a slop war, and for millions of social-media users around the world -- on platforms like TikTok, Instagram, Facebook, and X, but also in large channels on chat apps like Telegram -- AI has provided some of the most memorable imagery of recent weeks. Untethered from the reality on the ground, and driven by nationalistic and partisan impulses, but also by the commercial incentives of social media, the slop war is unfolding in its own independent way and according to its own grimly whimsical logic. Inside this algorithmic seam, where nearly anything is possible and nothing is quite true, it's Iran -- the technological laggard of the conflict, with no AI industry to speak of -- that seems to be benefiting the most. The first way this works is at the level of state propaganda, where AI-generated video has been deployed or endorsed by governments and government-controlled sources. Some of this is more subtle, less direct, and either allowed or intended to be misleading: Other examples are cartoonish attempts at AI-generated glorification and demonization: On one side, you've got the superpowers that initiated the war, states with near-infinite resources to marshal for their military campaigns and accompanying messaging strategies. On the other, you've got, well, Iran. Besides the contrast in subject -- upbeat, chest-thumping aggression versus a call to avenge the deaths of schoolchildren -- the use of cheap, fast, and widely available AI video generation has a powerful flattening effect. 
If you've played with these AI tools, you'll easily be able to imagine fragments of the sorts of prompts that were fed into software like Kling, Veo, Runway, Grok, or Seedance: the aftermath of an attack on a school in Iran in the style of LEGO animation; LEGO man Donald Trump reading a notebook of the Epstein files, Satan is there, realistic, cinematic; militant bowling pins approach holding sign reading "We won't stop making nuclear weapons," Dreamworks style, smirking faces, angry eyes; bowling-alley style animation of a "strike" using military jets. Nothing here took much effort, and the outputs end up inhabiting the same narrow aesthetic zone, easily and cheaply obtainable, associated with widely available AI tools that have rapidly commoditized such videos. For Iran, AI video propaganda might represent a slight increase in capability, a way to produce higher volumes of passable, legible, and persuasive material for a wider range of audiences. For the United States, this sort of automation provides speed and flexibility, sure, but also draws its communications strategy down into a far more symmetric fight than the war itself, where everyone has the same weapons (i.e., AI video-generation tools that any curious teenager could use at this level) and the battlefield -- where the possible audiences for such videos are to be found -- is the entire internet, where such videos overlap, stylistically, with spam. But the slop war isn't primarily about officially aligned media -- not by a long shot. AI video tools, which are available to pretty much anyone, have been deployed in service of a different, globally common objective, by people with little to nothing at stake in the actual war: maximizing engagement and maybe making a little cash. On TikTok and X, videos like this are often eventually flagged and removed, but not before amassing many millions of views, then reposted by a lower tier of opportunistic accounts. 
These videos have been all over the place for the past couple of weeks. On this front of the slop war, videos can be surreal and unbelievable, pointed and satirical, or plausible and misleading. (I've had AI Trump's "Why nobody help me to open Hormuz" stuck in my head for days now.) Many of these videos wouldn't hold up to scrutiny from deeply interested parties who have a direct stake in the conflict or are following it more closely, but that's not really who, or what, these AI videos are for. They're intended, instead, to exploit a subject of sudden and intense interest, part of the long, nihilistic tradition of politics-adjacent content farming. They're directed at the vastly larger groups of people with only a passing awareness of the war, who are experiencing it largely not through active consumption but through passive algorithmic recommendation. They're for harvesting views by reflecting or playing with what enormous gathered audiences are likely to look at and linger on. Taken together, they don't express a clear interest in the war's outcome. Instead, they're following the logic of the "For You" page, which has been embraced by most major social platforms. At X, leadership identified this dynamic fairly quickly. Prioritizing access to "authentic information on the ground" is a reasonable goal, and among the plausible effects of seeing misleading AI-generated videos everywhere is that people will be less able to tell what's real and will eventually become more suspicious of all video, including verifiable reports from the ground. (At this point, it's pretty difficult to argue that anyone is better off for the proliferation of AI video on X or elsewhere.) Before -- and, albeit to a lesser extent, since -- the X crackdown, the content of these videos revealed something else: that the most engaging fake content was, if not explicitly pro-Iran, at least more aligned with its efforts than against them.
Into the void of information coming from the Middle East flowed an endless supply of videos suggesting unlikely, extreme, and enticing outcomes: swarms of missiles obliterating Dubai and Tel Aviv; societal breakdown in the United States; American warships exploding and jets falling out of the sky. (The AI slop war is producing blowback, too: Last week, Benjamin Netanyahu was compelled to make a proof-of-life video after social media users spread claims that, during a recent address, the Prime Minister had too many fingers -- a telltale sign, social media users said, that it had been generated by AI.) If you're a professional slop merchant -- the Trump Hormuz video above originated from a meme account allegedly based in Switzerland -- you know that rendering strange and unbelievable scenarios is a good way to get views. If you've started working around the war, you've probably also noticed that audiences seem more interested in stories representing U.S. and Israeli failure and overreach than in content that suggests their campaign is going well. That may be true not just abroad but also here in the U.S., where the young war is unpopular to an unprecedented degree. (One notable exception is the popularity of content posted in support of Reza Pahlavi, the son of the deposed shah of Iran, whose followers in the diaspora seem receptive to fantastical AI content teasing his glorious return.) To paraphrase Field Marshal Montgomery, General MacArthur, and Vizzini, never get involved in a slop war on social media. This dynamic ends up creating an uncomfortable alignment for the mostly American social-media companies, stuck between the incentives created by their platforms and the military aims of the country against which their home country has declared war.
To take a purely sociopathic, market-centric view of what's happening -- one that I describe here mainly because it's pretty close to how social-media CEOs talk about their platforms and users in the abstract -- AI is helping to meet existing demand for war-related content online. Perhaps it's even inducing more demand, as fake, misleading, or trivializing video makes the already dehumanizing and trivializing treatment of wars on social media as a fleeting content category that much more compelling and/or worse. (Congrats to AI!) It's easy to think of ways in which these commercial social platforms, which have become more commercial and less social as time has gone on, don't serve the interests of their users. Now, they're also finding themselves, despite their own recent political realignments with the Trump administration, organically if indirectly oriented against its war effort, providing a space in which much of the world can consume, share, and reveal its fantasies of American failure. (Congrats to Iran!)

[6]

AI fakes about Iran-US war swirl on X despite policy crackdown

Elon Musk's X is struggling to control AI-generated war videos. Lifelike deepfakes showing captured soldiers and destroyed cities are circulating widely. X has introduced a new policy to demonetize creators posting AI war content without disclosure. However, researchers remain doubtful about its effectiveness. The platform's revenue-sharing model may still incentivize the spread of sensational fake news. AI-created videos circulating on Elon Musk's X depict American soldiers captured by Iran, an Israeli city in ruins, and US embassies ablaze -- a surge of lifelike deepfakes despite a policy crackdown to curb wartime disinformation. The Middle East war has unleashed an avalanche of AI-generated visuals, dwarfing anything seen in previous conflicts and often leaving social media users unable to distinguish fabrication from reality, researchers say. In a bid to protect "authentic information" during conflicts, X announced last week that it would suspend creators from its revenue sharing program for 90 days if they post AI-generated war videos without disclosing they were artificially made. Subsequent violations will result in permanent suspension, X's head of product Nikita Bier warned in a post. The new policy is a notable pivot for a platform heavily criticized for becoming a haven of disinformation since Musk completed his $44 billion acquisition of the site in October 2022. It also won praise from senior State Department official Sarah Rogers, who called it a "great complement" to X's Community Notes -- a crowd-sourced verification system -- that results in "less reach (thus monetization)" for inaccurate content. But disinformation researchers remain skeptical. "The feeds I monitor are still flooded with AI-generated content about the war," Joe Bodnar of the Institute for Strategic Dialogue told AFP. "It doesn't seem like creators have been dissuaded from pushing misleading AI-generated images and videos about the conflict," he said. 
Bodnar pointed to a post from a premium "blue check" X account -- which is eligible for monetization -- that shared an AI clip depicting an Iranian "nuclear-capable" strike on Israel. The post garnered more views than Bier's message about cracking down on AI content.

Incentive for fakes

X did not respond when AFP asked how many accounts it had demonetized since Bier's announcement. AFP's global network of fact-checkers -- from Brazil to India -- identified a stream of AI fakes about the Middle East war, many from X's premium accounts with blue checkmarks that can be purchased. They include AI videos depicting a tearful American soldier inside a bombed-out embassy, captured US troops on their knees beside Iranian flags, and a destroyed US navy fleet. The flood of AI-fabricated visuals -- mixed with authentic imagery from the Middle East -- continues to grow faster than professional fact-checkers can debunk them. Grok, X's own AI chatbot, appeared to make the problem worse, wrongly telling users seeking fact-checks that numerous AI visuals from the war were real. Researchers have also warned that X's model -- allowing premium accounts to earn payouts based on engagement -- has turbocharged the financial incentive to peddle false or sensational content. One premium account, which posted an AI video of Dubai's Burj Khalifa skyscraper engulfed in flames, ignored a request from Bier that it label the content as AI. The post remained online, racking up more than two million views.

'Countermeasure'

Last month, a report from the Tech Transparency Project said X appeared to be profiting from more than two dozen premium accounts belonging to Iranian government officials and state-controlled news outlets pushing propaganda, potentially in violation of US sanctions. X subsequently removed blue checkmarks for some of them, the report said.
Even if X's demonetization policy were strictly enforced, a vast number of X users peddling AI content are not part of the revenue sharing program, researchers say. Those users are still subject to being fact-checked through Community Notes, a system whose effectiveness has been repeatedly questioned by researchers. Last year, a study by the Digital Democracy Institute of the Americas found more than 90 percent of X's Community Notes are never published, highlighting major limits. "X's policy is a reasonable countermeasure to viral disinformation about the war. In principle, this policy reduces the incentive structure for those spreading disinformation," said Alexios Mantzarlis, director of the Security, Trust, and Safety Initiative at Cornell Tech. "The devil will be in the implementing detail: Metadata on AI content can be removed and Community Notes are relatively rare," he said. "It is unlikely that X will be able to guarantee both high precision and high recall for this policy."

[7]

Fake missiles, fake deaths: AI is rewriting Israel's war reality

An emergency responder inspects a house that was destroyed by an Iranian ballistic missile strike on March 13, 2026 in Israel. Benjamin Netanyahu stands in a café holding a cup of coffee, speaking to the camera. It looks like just another one of his social media videos addressing the Israeli public in an attempt to boost morale during wartime. But then he begins to talk, smirking as he references a rumor spreading online that he was killed in an Iranian strike. The video is meant to put the claim to rest. He jokes about the rumor and continues speaking as if the absurdity of it all should be obvious. But the video does not end the speculation. Within hours, new posts begin dissecting his video... frame by frame. Some users claim the clip is AI-generated. Others point to supposed anomalies - the movement of his coffee cup, the blur of the image, the way his teeth appear to shift from one moment to the next - as proof that something is awry. In the information war surrounding the Iran conflict, even the act of proving you are alive can become part of the misinformation cycle. Across social media, fabricated images and AI-generated videos circulate alongside authentic footage of the conflict - clips showing fictional missile strikes on Tel Aviv, surreal scenes of world leaders dancing together, or fabricated battlefield destruction. The result is a growing sense of uncertainty: What is real, what is synthetic, and how can anyone tell the difference? According to Tehilla Shwartz Altshuler, a senior fellow for media and tech policy at the Israel Democracy Institute, the phenomenon reflects a broader transformation in how information moves through digital ecosystems. "What we see in wars is often a mirror of what we see in the general information ecosystem," Shwartz Altshuler said in a recent interview with The Jerusalem Report. 
She has described the aftermath of the October 7 Hamas attacks as the first truly "digital war" because of how quickly information moved through social media platforms. "Atrocities were filmed in real time, posted on Telegram, and by noon they were already on X. By the evening, they were on television," she said. Today, just two and a half years later, the information environment has evolved even further. While earlier misinformation often relied on miscaptioned images or recycled footage from past conflicts, generative AI can create entirely new scenes. "This is the main characteristic of AI-generated content," she said. "It's not only taken out of context. Sometimes it's actually generated from scratch."

Synthetic war imagery

The types of content circulating online range from crude fabrications to more elaborate productions. Some clips purport to show missile strikes scoring devastating hits on Tel Aviv - videos that analysts and journalists have quickly identified as AI-generated. Others attempt to manipulate political narratives, such as posts suggesting that Netanyahu had died, based on supposed visual "proof" from a televised speech. In one instance, users argued that Netanyahu must have been dead because a still frame from a video appeared to show him with six fingers - a common artifact of AI-generated imagery. Shwartz Altshuler said that such claims illustrate how misinformation evolves in the AI era. "We call it the 'liar's dividend,'" she said. On the one hand, fabricated content can persuade people that events occurred when they did not. On the other hand, the existence of AI manipulation allows real events to be dismissed as fake. "When you cannot sort authentic content from machine-generated content," she explained, "it allows people to convince others of things that never happened, but it also allows people to claim that real things didn't happen."
Primitive fakes

Despite the flood of synthetic content, much of what currently circulates online remains relatively crude. "Most of what we see on social media that was generated by AI is what we call 'slop,'" Shwartz Altshuler said. The videos often contain telltale flaws: distorted faces, unnatural movement, extra fingers, or disappearing objects. These imperfections enable viewers to identify the clips as fake. But that apparent detectability may itself be dangerous. "It creates a false feeling of literacy," she warned. People may believe they can easily identify synthetic media because current examples are flawed. However, more advanced AI systems are rapidly improving. "Tomorrow, if someone uses more sophisticated models," she said, "you won't be able to detect it."

War propaganda

But crude or not, AI-generated imagery has already found its way into the strategies of political leaders and governments, not just hostile actors. According to Shwartz Altshuler, political leaders and governments around the world increasingly experiment with AI-generated imagery as part of their own messaging strategies. "AI is being used by both sides," she said. In previous months, for example, US President Donald Trump shared AI-generated images depicting himself in fantastical scenarios, such as riding animals or appearing as a superhero. While those images were clearly satirical, they normalized the idea that leaders can shape reality through fabrication. During wartime, the same tools carry much higher stakes. Iran and its allies have long used recycled images or footage from unrelated conflicts in influence campaigns. Generative AI now adds a new layer to that ecosystem.

Platforms and profit

Another driver of the phenomenon is economic. Many creators producing AI-generated war videos are not necessarily motivated by political agendas. Instead, they are seeking attention and advertising revenue. "People are monetizing these slops," Shwartz Altshuler said. "They don't care about the outcome of the war. They just want to make money because people are consuming information about the war." Social media platforms are increasingly under pressure to address the problem. The platform X recently announced that accounts spreading AI-generated war content without labeling it as synthetic could be removed from monetization programs for up to 90 days. Shwartz Altshuler argues that platforms must go further. "They need to mark this content as AI-generated or remove it if it's not properly labeled," she said.

Journalism in the synthetic era

For journalists, the rise of synthetic imagery raises new challenges. "The job of journalists today is even more important than it was a decade or two decades ago," Shwartz Altshuler said. Verification now requires new skills and tools - from reverse image searches to specialized detection software capable of identifying AI-generated content. "The job of a journalist is to create the provenance of reality," she said. News organizations must also adapt their own practices, she added, by watermarking content and alerting audiences when manipulated material is detected.

Crisis of reality

The implications extend far beyond wartime propaganda. If synthetic media becomes indistinguishable from authentic footage, the consequences could reshape fundamental institutions. "If this becomes reality, the stock exchange won't be able to function, democracy won't be democracy, and commerce won't work," Shwartz Altshuler said. Societies, she argued, may eventually need new forms of digital regulation requiring the origin - or "provenance" - of content to be traceable. Such rules would not censor speech, she said, but simply require transparency about whether images and videos are authentic or AI-generated. Until then, moments like the Netanyahu video may become increasingly common: leaders appearing on camera not only to address the public but to prove that they exist at all.
In a war where AI-generated missile strikes on Tel Aviv can circulate alongside authentic footage of real attacks, the line between documentation and fabrication is becoming harder to see. The fog of war may increasingly be manufactured. And in a world where images can be created as easily as they are recorded, seeing may no longer be believing.■

[8]

Truth vs Algorithms: The Growing Threat of AI-Generated Misinformation

AI-Generated War Images Go Viral: Learn How Fake Iran Conflict Visuals Are Fueling Online Misinformation

Artificial intelligence is transforming how people produce and distribute online content. People now use AI tools to create images, videos, and social media posts within a few minutes. The technology helps users complete tasks, but it also creates a new challenge because it can produce false information. Social media platforms have recently seen a surge of AI-generated images connected to the Iran war. The visuals appear authentic when people first view them. The footage displays explosions, damaged structures, and soldiers engaged in battle. The majority of this visual material does not depict actual events; it was generated with AI software. The images spread quickly because they appeared authentic to viewers. Many users believed the visuals showed real scenes from the war. Experts now warn that such AI-generated content can easily confuse the public and spread false information.

[9]

Iran-Israel-US war: AI images and videos intensifying fog of war in Middle East

As missiles fly across the Middle East, a parallel war over information is being fought on screens, and artificial intelligence is making it harder to know what is real. The New York Times has identified a plethora of AI-generated photos and videos misrepresenting battlefield events in the ongoing conflict, adding to evidence that generative AI has become a tool of modern warfare. U.S. President Donald Trump on Sunday accused Iran of deploying AI as a "disinformation weapon," citing fabricated images of Iranian kamikaze boats that "do not exist." But Reuters has verified footage from Iraq's Basra port showing fuel tankers under attack by Iranian boats. The boats exist, though how much specific viral imagery has been manipulated remains contested. Even when the event is real, synthetic imagery can distort the scale, context, and consequence, and in a high-speed information environment the distortion travels much faster than the correction.

Trump's disinformation accusation would carry more weight if his administration were not running its own version of information control. While the president calls out Iranian AI propaganda, his FCC chairman has threatened to revoke broadcast licenses over war coverage he deems incorrect. Controlling the narrative and policing disinformation can look identical depending on which side of the border you are standing on. The risks extend well beyond bad headlines. A convincing deepfake of a nuclear facility under attack, a capital city on fire, or a leader assassinated could trigger real-world panic and escalation before a single verification is complete. Markets would move. Governments would face pressure to respond. Populations with no way to assess what they are seeing would fill the gap with fear.
This is not hypothetical. During the Russia-Ukraine war, unverified footage shaped public perception before ground truth could be established. Today's AI tools are over a generation more capable than those of 2022, and this conflict involves nuclear motives. The margin for a catastrophic misread is thin. Children are particularly at risk. Younger audiences consuming war content through TikTok and Instagram have grown up in an environment where synthetic imagery is normal, and the line between entertainment and news thins further during geopolitical conflicts. Early research suggests exposure to unverifiable conflict content produces measurable anxiety and distorted threat perception in minors, yet platforms have no specific protections in place, and media literacy education has not kept pace with the technology. If a deepfake triggers a military response or market crash, no jurisdiction has a clear answer on liability. Synthetic media in conflict should fall under laws of armed conflict, but no framework exists. Mandatory watermarking remains the most discussed fix, but open-source models and non-compliant states make its enforcement a distant prospect. Major social media platforms like X, Instagram, Reddit and YouTube have no credible wartime protocol: their moderation systems were built for peacetime, and adversarial actors are moving faster. The fog of war has long been a feature of conflict. AI is making it denser, and the systems that might cut through it are not yet ready.


AI-generated fake content about the Iran war has flooded social media platforms, with over 110 unique AI fakes identified in just two weeks. X's Grok and Google's Gemini are providing false information about real footage while fake videos of non-existent attacks rack up millions of views. The crisis marks a troubling shift where AI-powered disinformation is making it nearly impossible to distinguish real war footage from fabricated content.

AI Disinformation Overwhelms Iran War Coverage

Since U.S.-Israeli strikes against Iran began on February 28th, social media platforms have been engulfed by AI-generated fake content depicting scenes from the conflict that never occurred [1]. The New York Times identified over 110 unique AI fakes about the Iran war in just two weeks, collectively viewed millions of times across X, TikTok, and Facebook [3]. These AI-generated fake images and videos depict everything from screaming civilians amid explosions that never happened to decimated city streets that were never attacked.

Source: NYMag

Disinformation expert Tal Hagin warns that the proliferation of AI-based fake news is pushing society over the edge of a fact-based reality [1]. The scale represents a drastic increase in AI-generated fake imagery compared to previous conflicts, with nearly half of all viral falsehoods now involving generative AI tools [4]. Marc Owen Jones, an associate professor of media analytics at Northwestern University in Qatar, notes that compared to when the Ukraine war broke out, the current situation shows far more AI-related content than ever before [3].

Fake AI Content on X and Platform Failures

X has become a primary battleground for this information warfare, with Grok repeatedly providing false information that makes the platform increasingly unhinged from reality [1]. When users asked Grok to verify authentic footage from the Minab school bombing, the AI assistant confidently confirmed false claims that the video was actually from a 2021 attack in Kabul, citing The New York Times, the Guardian, Al Jazeera, and Wikipedia as sources even though they contained images directly contradicting it. Grok was not simply wrong; it was confidently wrong, giving denialism machine authority through fabricated citations.

Google's Gemini has performed similarly poorly in fact-checking. When asked about an authentic photograph of freshly dug graves in Minab preparing to bury more than 100 schoolgirls, Gemini falsely claimed the image depicted a mass burial site in Kahramanmaraş, Turkey, from the 2023 earthquake [4]. An international study in 2025 found about half of all AI-generated summaries had at least one significant sourcing or accuracy issue, with Gemini reaching 76% [4].

Paid accounts bearing blue check marks have shared AI-generated fake content widely, including an image of a U.S. B-2 bomber supposedly shot down by Iran that was viewed 1 million times before deletion, and an image of Delta force members being captured that was seen 5 million times [1]. While X announced it would temporarily demonetize blue check mark accounts posting AI-generated videos of armed conflict without labels, non-AI misinformation continues flourishing on the platform.

AI Tools Creating Lifelike Simulations and Alternate Reality

Sophisticated AI tools now enable nearly anyone to create lifelike simulations of war for little to no cost [3]. The fabricated videos often depict war like an over-the-top Hollywood action movie, with enormous explosions resulting in mushroom clouds, sonic booms rippling across unnamed cities, and supposed hypersonic missiles leaving glowing streaks in the sky. One widely circulated fake video showed a shaky handheld scene from a Tel Aviv apartment balcony with the skyline pounded by missiles, viewed millions of times across platforms despite being AI-generated [3].

Source: NYT

The AI-generated fake imagery has essentially created an alternate reality more suited to social media, where exaggerated footage finds larger audiences [3]. Real footage of missile strikes is typically shot from far away at night, with missiles visible as little more than bright lights in the distance and explosions shown as plumes of smoke rather than fireballs. The contrast highlights how AI fakes are optimized for engagement rather than accuracy.

The AI Slop War and Eroding Fact-Based Reality

Experts have dubbed this phenomenon the AI slop war, where cheap, fast, and widely available AI video generation tools have created a powerful flattening effect [5]. The majority of AI videos about the war push pro-Iranian views, often to falsely demonstrate its military superiority, according to a study by Cyabra, a social media intelligence company [3]. Iranian officials have shared AI-generated content, including an AI-generated video of high-rise buildings in Bahrain on fire [1]. The Iranian embassy in Austria even illustrated real deaths of children with a fabricated image of a child's pink backpack covered in blood and dust, confirmed by Google's SynthID watermarking tool.

The fog of AI does not need every piece of content to be fabricated; it needs the question "Is this real?" to become close to unanswerable. This creates online chaos and confusion where real photographs of real civilian casualties are called fake, and where fake images are used to illustrate real deaths. The day before strikes began, an AI image on social media planted the notion that Iran hides military equipment in schools. The next day, when Shajareh Tayyebeh elementary school in Minab was hit, killing at least 175 people including many children, audiences were already primed by propaganda.

Source: The Atlantic

Impact on Fact-Checking and Information Warfare

Shayan Sardarizadeh, a senior journalist at BBC Verify, reports that AI now makes up a large portion of all misinformation the team debunks, with nearly half or more of all viral falsehoods now involving generative AI [4]. This represents a massive shift from early weeks of the Gaza or Ukraine wars, when most fake posts were old videos or repurposed video game footage. X's reward program incentivizes sensational content, and today's AI tools make it easy to produce such content, resulting in a complete breakdown of reality during breaking news situations [1].

The crisis unfolds faster than any institution, newsroom, fact-checker, photo wire service, or platform can process. People increasingly rely on AI summaries for news, with 65% of people reporting regularly seeing AI summaries of information, and the portion using generative AI to get information doubling in the past year [4]. Yet these tools routinely fail at basic verification tasks, wasting investigative time and risking that real atrocities will be denied. The combination of AI-powered disinformation, platform failures in verification, and commercial incentives for sensational content has created an environment where distinguishing truth from false narratives becomes increasingly difficult for audiences trying to understand what is actually happening in the conflict.

Summarized by

Navi

