NVIDIA and Google Cloud Build Massive AI Infrastructure Scaling to Nearly 1 Million GPUs

4 Sources

[1]

NVIDIA and Google Cloud Collaborate to Advance Agentic and Physical AI

Companies can build AI factories with NVIDIA Vera Rubin-powered A5X instances scaling up to nearly 1 million Rubin GPUs, Gemini on Google Distributed Cloud, confidential NVIDIA Blackwell GPUs and agentic AI built on Gemini Enterprise Agent Platform with NVIDIA Nemotron and NeMo. NVIDIA and Google Cloud have collaborated for more than a decade, co‑engineering a full‑stack AI platform that spans every technology layer -- from performance‑optimized libraries and frameworks to enterprise‑grade cloud services. This foundation enables developers, startups and enterprises to push agentic and physical AI out of the lab and into production -- from agents that manage complex workflows to robots and digital twins on the factory floor. At Google Cloud Next this week in Las Vegas, the partnership reaches a new milestone, with advancements to expand Google Cloud AI Hypercomputer for AI factories that will power the next frontier of agentic and physical AI. These include the new NVIDIA Vera Rubin-powered A5X bare-metal instances; a preview of Google Gemini on Google Distributed Cloud running on NVIDIA Blackwell and NVIDIA Blackwell Ultra GPUs; confidential VMs with NVIDIA Blackwell GPUs; and agentic AI on Gemini Enterprise Agent Platform with NVIDIA Nemotron open models and the NVIDIA NeMo framework. Next-Generation Infrastructure: From NVIDIA Blackwell to Vera Rubin At Google Cloud Next, Google announced A5X powered by NVIDIA Vera Rubin NVL72 rack-scale systems, which -- through extreme codesign across chips, systems and software -- deliver up to 10x lower inference cost per token and 10x higher token throughput per megawatt than the prior generation. A5X will use NVIDIA ConnectX-9 SuperNICs, combined with next-generation Google Virgo networking, scaling to up to 80,000 NVIDIA Rubin GPUs within a single site cluster and up to 960,000 NVIDIA Rubin GPUs in a multisite cluster, enabling customers to run their largest AI workloads on NVIDIA‑optimized infrastructure. "At Google Cloud, we believe the next decade of AI will be shaped by customers' ability to run their most demanding workloads on a truly integrated, AI‑optimized infrastructure stack," said Mark Lohmeyer, vice president and general manager of AI and computing infrastructure at Google Cloud. "By combining Google Cloud's scalable infrastructure and managed AI services with NVIDIA's industry‑leading platforms, systems and software, we're giving customers flexibility to train, tune and serve everything from frontier and open models to agentic and physical AI workloads -- while optimizing for performance, cost and sustainability." Google Cloud's broad NVIDIA Blackwell portfolio ranges from A4 VMs with NVIDIA HGX B200 systems to rack-scale A4X VMs with NVIDIA GB200 NVL72 and A4X Max NVIDIA GB300 NVL72 systems, all the way to fractional G4 VMs with NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs. Customers can right-size their acceleration capabilities, whether using multiple interconnected NVL72 racks that scale out to tens of thousands of NVIDIA Blackwell GPUs, a single rack that can scale up to 72 Blackwell GPUs with fifth-generation NVIDIA NVLink and NVLink 5 Switch, or just one-eighth of a GPU. This comprehensive platform helps teams optimize every workload, from mixture-of-experts reasoning, multimodal inference and data processing to complex simulations for the next frontier of physical AI and robotics. Leading frontier AI labs are already putting this infrastructure to work. Thinking Machines Lab is scaling its Tinker application programming interface (API) on A4X Max VMs with GB300 NVL72 systems to accelerate training, while OpenAI is running large‑scale inference on NVIDIA GB300 (A4X Max VMs) and GB200 NVL72 systems (A4X VMs) on Google Cloud for some of its most demanding inference workloads, including for ChatGPT. Secure AI Wherever It Needs to Run: Sovereign and Confidential Google Gemini models running on NVIDIA Blackwell and Blackwell Ultra GPUs are now in preview on Google Distributed Cloud, so customers can bring Google's frontier models wherever their most sensitive data resides. NVIDIA Confidential Computing with the NVIDIA Blackwell platform enables Gemini models to run in a protected environment where prompts and fine‑tuning data stay encrypted and can't be seen or altered by unauthorized parties, including the infrastructure operators. In the public cloud, the preview of Confidential G4 VMs with NVIDIA RTX PRO 6000 Blackwell GPUs brings these protections to multi‑tenant environments -- helping safeguard prompts, AI models and data so customers in regulated industries can access the power of AI without compromising on security or performance. This is the first confidential computing offering of NVIDIA Blackwell GPUs in the cloud, giving Google Cloud customers a new foundation for secure, high‑performance AI. Open Models and APIs for Agentic AI The NVIDIA platform on Google Cloud is optimized to run every kind of model -- from Google's frontier Gemini and Gemma families to NVIDIA Nemotron open models and the broader open weight ecosystem -- equipping developers to build agentic AI systems that reason, plan and act. NVIDIA Nemotron 3 Super is available on Gemini Enterprise Agent Platform, giving developers a direct path to discovering, customizing and deploying NVIDIA‑optimized reasoning and multimodal models for agentic workflows. Google Cloud and NVIDIA are also making it easier to train and customize open models at scale. Managed Training Clusters on Gemini Enterprise Agent Platform introduced a new managed reinforcement learning (RL) API built with NVIDIA NeMo RL for accelerating RL training at scale while automating cluster sizing, failure recovery and job execution, so teams can focus on agent behavior and model quality instead of infrastructure management. Cybersecurity leader CrowdStrike uses NVIDIA NeMo open libraries such as NeMo Data Designer, NeMo Automodel and NeMo Megatron Bridge to generate synthetic data and fine-tuning Nemotron and other open large language models for domain-specific cybersecurity. Running on Managed Training Clusters on Gemini Enterprise Agent Platform with NVIDIA Blackwell GPUs, these capabilities accelerate threat detection, investigation and response. Building the Future of Industrial and Physical AI Building industrial and physical AI at scale demands powerful hardware and a combination of open models, libraries and frameworks to develop these complex end-to-end workflows. NVIDIA AI infrastructure, open models and physical AI libraries available on Google Cloud, is mainstreaming industrial and physical AI applications, enabling customers to simulate, optimize and automate real-world workflows. Solutions from leading industrial software providers, including Cadence and Siemens Digital Industries Software, are now available on Google Cloud, accelerated on NVIDIA AI infrastructure. These applications are powering the next-generation design, engineering and manufacturing of everything from chips to autonomous vehicles, robotics, aerospace platforms, heavy machinery and large-scale production systems. With NVIDIA Omniverse libraries and the open source NVIDIA Isaac Sim robotics simulation framework available on Google Cloud Marketplace, developers can build physically accurate digital twins and develop custom robotics simulations pipelines to train, simulate and validate robots before real-world deployment. NVIDIA NIM microservices for models like NVIDIA Cosmos Reason 2 can be deployed to Google Vertex AI and Google Kubernetes Engine. This enables robots and vision AI agents to see, reason and act in the physical world like humans, powering use cases such as automated data curation and annotation, advanced robot planning and reasoning, and intelligent video analytics agents for real-time insights and decision-making. Together, these technologies help developers seamlessly move from computer-aided design to living industrial digital twins and AI‑driven robots, accelerating processes from design sign‑off to factory optimization on the NVIDIA platform running on Google Cloud. Proven Impact: From Startups to Global Enterprises Global enterprises, AI labs and high‑growth startups are using NVIDIA and Google Cloud's co-engineered platform to move from prototyping to production faster, including Snap, Schrödinger and Salesforce. Snap is cutting the cost of large‑scale A/B testing by shifting data pipelines to GPU‑accelerated Spark on Google Cloud. Schrödinger is shrinking weekslong drug discovery simulations into just hours with NVIDIA accelerated computing on Google Cloud. Startups are orchestrating the next wave of AI innovation -- building new agents and AI‑native applications using NVIDIA accelerated computing on Google Cloud. As part of a broader ecosystem highlighted through NVIDIA Inception and Google for Startups, CodeRabbit and Factory are using NVIDIA Nemotron‑based models on Google Cloud to power code review and autonomous software development agents, while Aible, Mantis AI, Photoroom and Baseten are building enterprise data, video intelligence, generative imagery and managed inference solutions on the full‑stack NVIDIA platform on Google Cloud. More than 90,000 developers have become a part of the joint NVIDIA and Google Cloud developer community in just over a year, tapping this platform to build and scale new AI applications. In addition, NVIDIA has been honored at Next as Google Cloud Partner of the Year in two categories -- AI Global Technology Partner and Infra Modernization Compute -- in recognition of deep technical expertise and go-to-market alignment. Together, NVIDIA and Google Cloud are giving customers a cloud‑scale platform to turn experimental agents and simulations into production systems that review code, secure fleets, enable new AI applications and optimize factories in the real world. Learn more about the companies' collaboration by attending NVIDIA sessions, demos and workshops at Google Cloud Next.

[2]

From GPUs to AI factories: Inside the Nvidia-Google Cloud superstack - SiliconANGLE

From GPUs to AI factories: Inside the Nvidia-Google Cloud superstack Nvidia Corp. and Google LLC used the search giant's annual Cloud Next event to deepen their long-running partnership, creating a full-stack "artificial intelligence factory" that integrates Google's AI Hypercomputer infrastructure with Nvidia's latest solutions, including Blackwell, open models and agentic and physical AI tooling. With this announcement, Google expands its distribution of Nvidia's accelerated computing stack, while customers gain a faster, lower-risk path from AI experimentation to large-scale deployment. Nvidia and Google Cloud have been co-developing the accelerated cloud stack for about a decade, starting with early K80/P100 GPU instances and evolving into the AI Hypercomputer architecture. That collaboration has been expanded to address the entire AI stack: For customers, co-engineering means there is no need to stitch together GPUs, schedulers and frameworks, as the combined stack is designed to be turnkey and is approaching "utility" status. Google has quietly built out one of the world's largest accelerated infrastructure deployments, with well over a million Nvidia GPUs deployed across its global fleet for internal products and Google Cloud services. There are two implications for this scale. The first is that it shortens deployment times. Because the backbone, supply chain, and data center footprint are already GPU‑centric, adding each new GPU generation (Hopper, Blackwell, Vera Rubin) can roll out faster, and those accelerators show up quickly in customer‑facing SKUs like A3/A5X and DGX Cloud. The second point is that there should be plenty of capacity for AI factories. The technology footprint that underpins Google's AI Hypercomputer concept -- multitenant, massively scaled clusters where training, fine-tuning and inference share the same fabric -- makes it realistic for enterprises to spin up large language model and agent workloads that run across tens of thousands of Nvidia GPUs without bespoke infrastructure engineering. Information technology leaders no longer have to guess which region or instance type will still be available at scale in 18 months -- Google is standardizing on Nvidia as the default accelerator fabric, alongside its tensor processing units. Nvidia has rewritten the computing stack by shifting heavy compute workloads away from general-purpose central process units toward GPU-accelerated architectures optimized for parallel workloads. Key aspects of that shift: That "accelerated computing" mindset is why Nvidia maps so cleanly onto Google's AI Hypercomputer strategy: Both focus on building dense, software-defined supercomputers rather than generic cloud infastructure as a service. Specialized accelerators like TPUs and other application-specific integrated circuits are powerful and often positioned as a threat to Nvidia, but they are narrow. Nvidia's bet has always been horizontally broad programmability. This has the following benefits: So although TPUs will remain strong within Google for specific workloads, Nvidia's cross-industry, multicloud footprint makes it attractive to enterprises that need to ship software to any customer, anywhere. For Google Cloud, aligning with Nvidia broadens the appeal of its AI infrastructure to customers who want a neutral, portable accelerated platform rather than a proprietary stack that locks them into a single cloud or architecture. For customers, this partnership is about reducing risk and shortening time-to-value. Specifically: This combination lowers the organizational friction of adopting AI because infra teams, data scientists and app teams share a common, battle‑tested platform. While customers want choice, too many variables in an equation can slow things down. The Google-Nvidia stack provides enterprises with a reference design for building AI factories, cloud-scale clusters for training, fine-tuning, inference and simulation -- that they can consume as a service or emulate on-premises with similar building blocks. Google has spent a decade playing third fiddle to Amazon Web Services and Microsoft Azure, but its partnership with Nvidia gives it a first-fiddle story in AI: a co-designed AI Hypercomputer, tuned for agentic and physical AI, that turns Google's Nvidia-powered supercomputers into a product enterprises and startups can actually buy. Google has a decade-long partnership with Nvidia and offers the widest range of Blackwell instances today. In the AI era, choice is important, and Google gives customers that.

[3]

Google Bets On The Agentic AI Era With Its AI Hypercomputer, Merges 8th-Gen TPUs, NVIDIA Rubin, & Axion CPUs Together

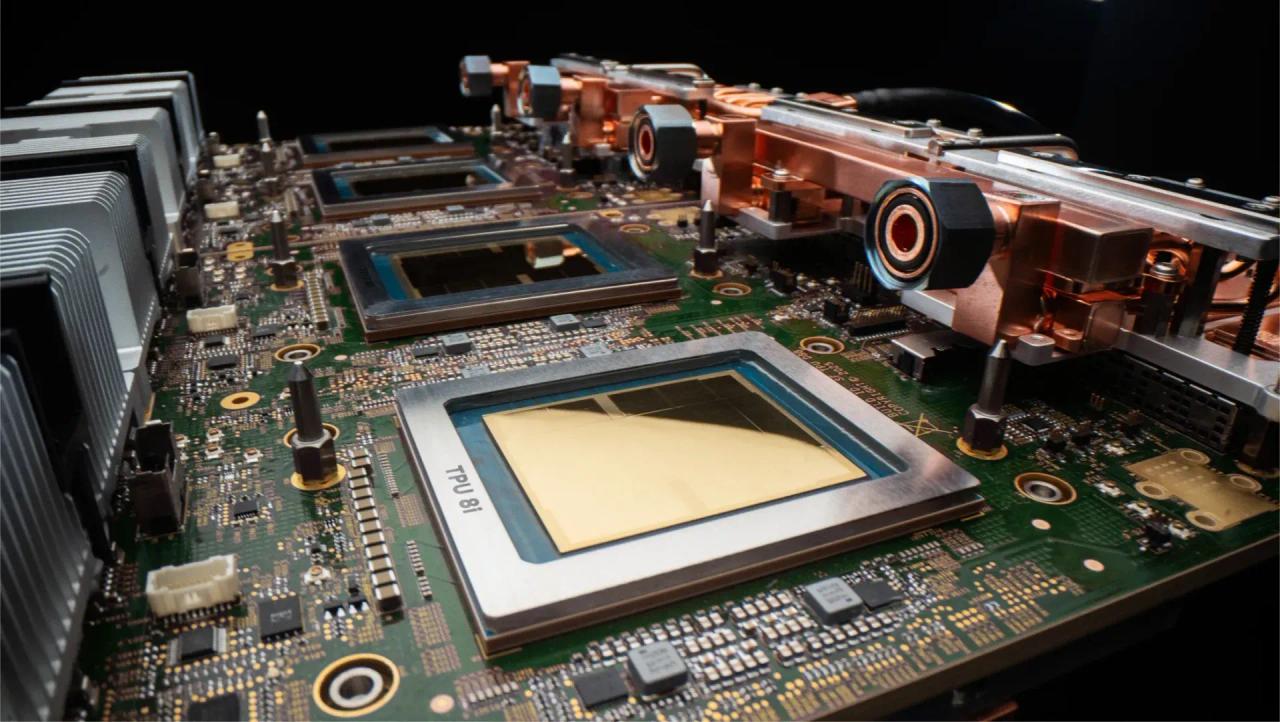

Google has announced the AI Hypercomputer, which brings together TPUv8 series, NVIDIA Rubin, & Axion CPUs to power the Agentic AI era. Gone are the days of supercomputers; the Agentic AI era will be all about hypercomputers, which will combine various compute options to deliver customers the most flexible and performant AI architecture ever built. Today, at Google's Cloud Next 26 event, the company formally announced its AI Hypercomputer. The new high-performance computing datacenter for Agentic AI houses an advanced, purpose-built architecture that unifies performance-optimized hardware for compute, storage, networking, open software, and ML frameworks. To make Google's AI Hypercomputer possible, the company had to go above and beyond. It will house its latest custom TPUv8 series, Axion Cloud CPUs, and will also deploy NVIDIA Rubin GPUs. Today's announcement also comes with the launch of Google's 8th Gen TPU lineup, which comes in two flavors: the TPU 8t and the TPU 8i. Google TPU 8t - Training Chip The Google TPU 8t chip is designed as a training powerhouse, reducing the deployment of frontier models from months to weeks. The chip offers the highest possible compute throughput, shared memory, and interchip bandwidth in the most power-efficient package ever built. The TPU 8t chip has a total FP4 compute capacity of 121 Exaflops per pod, 2.84x higher than Ironwood. The second chip, TPU 8i, is designed for inference and pairs an incredible 288 GB of HBM memory with 384 MB of on-chip SRAM, which is a 3x boost in capacities over the previous generation. With such a large SRAM, you can keep models active entirely on the chip. The TPU 8i chip has a total FP8 compute capacity of 331.8 Exaflops per pod, 6.74x higher than Ironwood. The salient features of TPU 8i include: When it comes to generation over generation improvements, the TPU8t Training chip offers a 2.7x better performance per dollar improvement over Ironwood "TPUv7" in large-scale training, the TPU8i Inference chip offers a 80% performance per dollar improvement over Ironwood "TPUv7" in low-latency targets for MoE model. Both chips also deliver twice the performance per watt improvement, which is vital for AI TCO. Both chips support Google's 4th Gen liquid cooling technology that is able to sustain the higher compute and performance densities, not possible with air cooling. And with that, let's round up the main highlights of the Google AI Hypercomputer, which are listed below: Google Cloud will also be one of the first AI infrastructures to offer NVIDIA VR200 (Vera Rubin) accelerators. The Rubin GPUs will be paired with Google's brand new Virgo network, offering massive-scale training clusters alongside Google's own 8th Gen TPU family. The Google AI hypercomputer will be used by several customers, including big names such as the US DOE, Boston Dynamics, Citadel Securities, Thinking Machine Labs, and Axia Energy.

[4]

NVIDIA and Google Cloud expand AI collaboration with new infrastructure By Investing.com

Investing.com -- NVIDIA and Google Cloud announced on Wednesday an expansion of their partnership to advance agentic and physical AI capabilities, introducing new infrastructure and services at Google Cloud Next in Las Vegas. The companies unveiled NVIDIA Vera Rubin-powered A5X bare-metal instances, which can scale up to 960,000 NVIDIA Rubin GPUs in a multisite cluster. The A5X instances use NVIDIA ConnectX-9 SuperNICs combined with Google Virgo networking, scaling to up to 80,000 NVIDIA Rubin GPUs within a single site cluster. The new systems deliver up to 10 times lower inference cost per token and 10 times higher token throughput per megawatt compared to the prior generation, according to the announcement. Google Cloud's NVIDIA Blackwell portfolio includes A4 VMs with NVIDIA HGX B200 systems, rack-scale A4X VMs with NVIDIA GB200 NVL72 and A4X Max NVIDIA GB300 NVL72 systems, and fractional G4 VMs with NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs. OpenAI is running large-scale inference on NVIDIA GB300 and GB200 NVL72 systems on Google Cloud for some of its inference workloads, including for ChatGPT. Thinking Machines Lab is scaling its Tinker API on A4X Max VMs with GB300 NVL72 systems. Google Gemini models running on NVIDIA Blackwell and Blackwell Ultra GPUs are now in preview on Google Distributed Cloud. The companies also introduced Confidential G4 VMs with NVIDIA RTX PRO 6000 Blackwell GPUs, marking the first confidential computing offering of NVIDIA Blackwell GPUs in the cloud. NVIDIA Nemotron 3 Super is now available on Gemini Enterprise Agent Platform. Google Cloud and NVIDIA introduced a new managed reinforcement learning API built with NVIDIA NeMo RL for accelerating training at scale. CrowdStrike uses NVIDIA NeMo open libraries to generate synthetic data and fine-tune Nemotron and other open large language models for cybersecurity applications, running on Managed Training Clusters on Gemini Enterprise Agent Platform with NVIDIA Blackwell GPUs. Solutions from Cadence and Siemens Digital Industries Software are now available on Google Cloud, accelerated on NVIDIA AI infrastructure. NVIDIA Omniverse libraries and the NVIDIA Isaac Sim robotics simulation framework are available on Google Cloud Marketplace. NVIDIA received Google Cloud Partner of the Year recognition in two categories: AI Global Technology Partner and Infra Modernization Compute. This article was generated with the support of AI and reviewed by an editor. For more information see our T&C.

Share

Copy Link

NVIDIA and Google Cloud announced a major expansion of their decade-long partnership at Google Cloud Next, unveiling AI infrastructure that can scale to 960,000 Rubin GPUs across multisite clusters. The collaboration introduces new capabilities for agentic AI and physical AI, including NVIDIA Vera Rubin-powered A5X instances, confidential computing with Blackwell GPUs, and Google Gemini models running on NVIDIA hardware across distributed cloud environments.

NVIDIA and Google Cloud Partnership Reaches New Scale

Source: NVIDIA

NVIDIA and Google Cloud unveiled a significant expansion of their collaboration at Google Cloud Next in Las Vegas, introducing infrastructure designed to power the next generation of agentic AI and physical AI applications

1

. The partnership, which spans more than a decade of co-engineering efforts, now enables customers to build AI factories with NVIDIA Vera Rubin-powered A5X instances that can scale up to 960,000 NVIDIA Rubin GPUs in a multisite cluster4

. This represents a fundamental shift in how enterprises can approach large-scale AI deployment, moving complex workflows from laboratory environments into production systems.The announcement positions the full-stack AI platform as a turnkey solution for developers, startups, and enterprises seeking to deploy everything from agents managing complex workflows to robotics and digital twins on factory floors

1

. Google has quietly built one of the world's largest accelerated infrastructure deployments, with well over a million NVIDIA GPUs already deployed across its global fleet for internal products and Google Cloud services2

.

Source: SiliconANGLE

Google Cloud AI Hypercomputer Unifies Computing Resources

At the heart of this expansion sits the Google Cloud AI Hypercomputer, a high-performance computing architecture that unifies performance-optimized hardware for compute, storage, networking, open software, and machine learning frameworks

3

. The AI Hypercomputer brings together Google's custom TPUv8 series, Axion CPUs, and NVIDIA Rubin GPUs to create what both companies describe as cloud-scale clusters for training, fine-tuning, inference, and simulation2

.

Source: Wccftech

The new NVIDIA Vera Rubin NVL72 rack-scale systems deliver up to 10 times lower inference cost per token and 10 times higher token throughput per megawatt than the prior generation through extreme co-design across chips, systems, and software

1

. The A5X instances use NVIDIA ConnectX-9 SuperNICs combined with next-generation Google Virgo networking, scaling to up to 80,000 NVIDIA Rubin GPUs within a single site cluster1

.NVIDIA Blackwell GPUs Power Diverse Workloads

Google Cloud's comprehensive NVIDIA Blackwell portfolio ranges from A4 VMs with NVIDIA HGX B200 systems to rack-scale A4X VMs with NVIDIA GB200 NVL72 and A4X Max NVIDIA GB300 NVL72 systems, extending to fractional G4 VMs with NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs

4

. This variety allows customers to right-size their acceleration capabilities, whether using multiple interconnected NVL72 racks that scale out to tens of thousands of NVIDIA Blackwell GPUs or just one-eighth of a GPU1

.Leading AI organizations are already deploying this AI infrastructure at scale. OpenAI is running large-scale inference on NVIDIA GB300 and GB200 NVL72 systems on Google Cloud for some of its most demanding inference workloads, including for ChatGPT

4

. Thinking Machines Lab is scaling its Tinker API on A4X Max VMs with GB300 NVL72 systems to accelerate training clusters1

.Related Stories

Confidential Computing and Sovereign AI Capabilities

Google Gemini models running on NVIDIA Blackwell and Blackwell Ultra GPUs are now in preview on Google Distributed Cloud, enabling customers to bring frontier models wherever their most sensitive data resides

1

. The introduction of Confidential G4 VMs with NVIDIA RTX PRO 6000 Blackwell GPUs marks the first confidential computing offering of NVIDIA Blackwell GPUs in the cloud4

.Confidential computing with the NVIDIA Blackwell platform enables Gemini models to run in a protected environment where prompts and fine-tuning data stay encrypted and cannot be seen or altered by unauthorized parties, including infrastructure operators

1

. This capability addresses critical security concerns for regulated industries seeking to access AI power without compromising on data protection.Physical AI and Robotics Applications Expand

The partnership extends into physical AI with NVIDIA Omniverse libraries and the NVIDIA Isaac Sim robotics simulation framework now available on Google Cloud Marketplace

4

. Solutions from Cadence and Siemens Digital Industries Software are now available on Google Cloud, accelerated on NVIDIA AI infrastructure for complex simulations and digital twins applications4

.For agentic AI development, NVIDIA Nemotron 3 Super is now available on Gemini Enterprise Agent Platform, and a new managed reinforcement learning API built with NVIDIA NeMo RL accelerates training at scale

4

. CrowdStrike uses NVIDIA NeMo open libraries to generate synthetic data and fine-tune Nemotron and other open large language models for cybersecurity applications4

.Customers including the US Department of Energy, Boston Dynamics, Citadel Securities, and Axia Energy are already leveraging the Google AI Hypercomputer for their workloads

3

. The collaboration reduces organizational friction by providing infrastructure teams, data scientists, and application teams with a common, battle-tested platform that shortens time-to-value for AI adoption2

.References

Summarized by

Navi

Related Stories

Google and NVIDIA Expand AI Partnership to Tackle Real-World Challenges

19 Mar 2025•Technology

Google Cloud bets big on AI agents with Gemini Enterprise platform and new chips

22 Apr 2026•Technology

Google and NVIDIA Partner to Bring Gemini AI Models On-Premises with Enhanced Security

10 Apr 2025•Technology

Recent Highlights

1

Meta AI chatbot let hackers hijack Instagram accounts by simply asking to change email addresses

Technology

2

Florida sues OpenAI and Sam Altman over ChatGPT safety, alleging AI harms linked to violence

Policy and Regulation

3

Apple's Siri overhaul for iOS 27 brings Gemini integration and standalone app to compete with ChatGPT

Technology