Google's TurboQuant sparked memory market panic, but analysts say AI demand will grow stronger

3 Sources

[1]

Will Google's TurboQuant algorithm hurt AI demand for memory chips?

Samsung Electronics' blowout first quarter has eased investor concerns that a new Google algorithm might threaten the AI-driven boom in South Korea's memory chip industry. Citing an "unprecedented supercycle" in the memory chip market, Samsung this week estimated higher profits in a single quarter than in the whole of last year, with no sign that memory was becoming less of a bottleneck for AI companies. The earnings guidance sent Samsung shares close to all-time highs and eased two weeks of anxiety sparked by TurboQuant, a technology outlined in a Google Research blog post in late March, which promises to drastically reduce the amount of memory required for AI. The post ignited a fierce and ongoing debate about future demand for high-bandwidth memory, the advanced chips made by Samsung and its South Korean rival SK Hynix that power AI servers. Some investors believe the memory boom will turn to bust, others think TurboQuant will have little impact, while optimists argue that if the technology does make AI cheaper, it will simply create demand for even more AI, and thus more chips. TurboQuant "potentially slashes the cost of running large language models by a factor of four to eight", said Kwon Seok-joon, a professor at Sungkyunkwan University in Seoul. "At first glance, this appears to threaten demand for high-bandwidth memory chips." However, "dramatically cheaper inference unlocks workloads previously too expensive to run", such as real-time coding assistants and multiple AI agents running at the same time, added Kwon, "driving total compute demand higher, not lower". TurboQuant works by compressing the so-called key value cache -- the short-term memory that allows AI models such as ChatGPT and Claude to retain conversational context -- and reconstructing it when needed, with little apparent loss in accuracy. As AI interactions lengthen and user numbers rise, demands on the KV cache are surging, putting strain on how much memory AI services can afford to use. 
TurboQuant offers a way out, reducing the "cost per token", the amount of computing and memory expense required to process each unit of data handled by an AI system. Google's researchers claim the approach could cut memory usage by as much as sixfold. The blog post caused shares of Samsung and SK Hynix to fall sharply last month. But analysts and researchers now suggest that if TurboQuant does work, it is more likely to expand overall memory demand than reduce it -- an example of the Jevons paradox, in which greater efficiency increases overall usage of a resource. Economist William Stanley Jevons noted in his 1865 book The Coal Question that James Watt's more efficient steam engine had resulted in greater usage of the fuel because it made coal-powered technologies economically viable in far more contexts. Han In-su, one of the researchers upon whose work TurboQuant is based, told the FT that the algorithm "can serve as a foundation for realising previously impossible high-difficulty tasks, such as processing much longer contexts within limited memory resources without sacrificing accuracy, or implementing high-performance AI on smaller devices". In a research note, Kim Young-gun of Mirae Asset Securities invoked "déjà vu" over Kubernetes, a Google-designed "containerisation" technology that made it possible to run multiple applications on a single server, greatly improving hardware efficiency. Upon its widespread adoption in the late 2010s, there were concerns that demand for servers and memory would fall as companies would need fewer resources to produce the same results. In practice, the opposite occurred, with lower costs encouraging much greater usage. "The market has largely misread TurboQuant," said Ray Wang of research firm SemiAnalysis. "We continue to believe that increasing memory demand will be required for both training and inference as AI models evolve and innovation advances." 
Any potential blow to the South Korean chipmakers would be cushioned by the increasing use of long-term contracts from AI service providers seeking to lock in supply, said Wang. "Memory is becoming a bit less cyclical, driven by accelerating and sustainable AI demand," he said. "Contract pricing now matters more than spot pricing." At Samsung's annual meeting last month, co-chief executive Jun Young-hyun said the company was pursuing "contracts of three or five years with major clients, shifting from the existing quarterly and annual terms". For now, TurboQuant remains a concept in a blog post. Its real-world impact will become clear after it is presented at the International Conference on Learning Representations in Brazil in late April and people outside Google are expected to be able to test it. Its ultimate success will depend on whether the largest tech groups are able to use it at scale. "We never imagined that a technology that started from the academic question of 'How can we compress data more perfectly?' would cause such a huge social and economic ripple effect," said Han.

[2]

Google's TurboQuant Made The Memory Industry Fear The Boom Was Over; Even The Researcher Behind It Is Shocked By The Reaction

The memory industry has been on a rollercoaster over the past few weeks following the debut of Google's TurboQuant, but the idea that shortages are over is seen as a "misinterpretation", to say the least. With DDR prices coming down over the past few days, we did discuss the role of Google's TurboQuant algorithm; however, tying it to the end of memory shortages was a misperception, according to The Financial Times' latest report. The blog post about TurboQuant was followed by a huge sell-off in the memory market, affecting suppliers like Micron, Samsung, and SK hynix, and it caused a stir not just among retailers but also among RAM scalpers, who thought it was finally the end of DRAM inflation. However, recent indicators, including revenue figures and the demand outlook, suggest that shortages are here to stay.
"We never imagined that a technology that started from the academic question of 'How can we compress data more perfectly?' would cause such a huge social and economic ripple effect." - Han In-su via Financial Times
While going into the technical details of TurboQuant would make this coverage much longer, the idea behind the compression algorithm is to run LLMs on accelerators while reducing memory consumption, thereby making memory use much more efficient. Many experts have drawn an analogy between TurboQuant and the Jevons paradox, but in terms of actual memory demand, it appears the cycle is now shifting from an aggressive build-out to broader adoption and, indirectly, a longer duration. This is clearly seen in how DRAM suppliers are now entering into multi-year contracts with hyperscalers to gain a clearer view of demand. In Samsung's recent Q1 revenue report, we saw the company generate up to $37 billion from its DRAM segment alone, with operating figures on par with those of mainstream hyperscalers.
At the same time, it is reported that DRAM contract pricing is expected to grow in the upcoming quarters, and that memory is now entering a phase in which no entity in the AI world can 'survive' without it. Dell's CEO, Michael Dell, recently noted that demand could skyrocket to unprecedented levels, driven by a dramatic rise in per-processor memory consumption. The only situation in which we could see memory shortages easing is when new production capacity comes online, since demand is unlikely to drop. From this perspective alone, memory shortages could persist through H2 2027 and even beyond, depending on how quickly suppliers can bring new production lines online.

[3]

Google's TurboQuant may drive more memory demand not less, analysts say

It doesn't take a genius to figure out that making memory for AI datacenters is way more profitable than making it for your gaming rig and that most of these big companies are not coming back to the consumer market. However, Google announcing TurboQuant, Micron's stock falling and RAM prices then dipping ever so slightly gave us a sliver of hope. That hope may be short-lived.
When Google Research published its TurboQuant blog post in late March, the reaction was swift and visceral. SK Hynix lost 7.3% of its market value within 48 hours. Samsung fell sharply. Cloudflare's CEO called it Google's "DeepSeek moment." The narrative wrote itself: an algorithm that compresses AI memory usage sixfold must surely be bad news for the companies selling that memory. Except analysts aren't so sure. In fact, many think the market got this completely backwards. Chae Min-suk of Korea Investment & Securities said in a report that the sell-off stemmed from "an interpretation error caused by confusing the roles of memory capacity and memory bandwidth." The argument the bulls are making hinges on the Jevons Paradox, a 19th-century economic observation that when a resource becomes more efficient to use, total consumption tends to go up because efficiency makes it viable in far more contexts. When DeepSeek launched dramatically more efficient inference in early 2025, the same fear spread, and high bandwidth memory (HBM) demand climbed anyway. The market has now seen this exact movie twice, but panicked both times.
The technical reality supports this reading. TurboQuant only addresses inference memory - specifically the KV cache. Training a model requires fundamentally different memory driven by activations, gradients, and optimizer states. TurboQuant has zero effect on any of that.
There's also the matter of actual order books. Micron's CEO stated plainly that the company's entire 2026 HBM supply is already sold out, which is hardly the position of a company facing a demand destruction event. Meanwhile, Ray Wang of SemiAnalysis said "the market has largely misread TurboQuant," adding that "increasing memory demand will be required for both training and inference as AI models evolve." For consumers hoping TurboQuant would be their ticket back to affordable RAM, the picture is bleak. The structural shift toward AI datacenter memory was never going to be reversed by a compression algorithm. If anything, cheaper inference means more applications become economically viable, which means more infrastructure, which means more chips. The cruelest irony of TurboQuant may just be that the very efficiency it promises could end up making the memory boom bigger, not smaller.

Google's TurboQuant algorithm triggered a sharp sell-off in memory chip stocks, with SK Hynix dropping 7.3% in 48 hours. But analysts now argue the market misread the technology. Rather than reducing memory demand, TurboQuant's efficiency could unlock new AI applications, driving total consumption higher through the Jevons Paradox effect.

Google's TurboQuant Algorithm Triggers Memory Market Turbulence

When Google Research published its TurboQuant blog post in late March, the reaction sent shockwaves through global memory markets. SK Hynix lost 7.3% of its market value within 48 hours, while Samsung and Micron shares fell sharply [3]. The sell-off reflected widespread investor concerns that this compression algorithm could threaten the AI-driven boom in memory chips. TurboQuant promises to drastically reduce AI memory requirements by compressing the key-value cache (the short-term memory that allows AI models like ChatGPT to retain conversational context), potentially slashing memory usage by as much as sixfold [1]. Cloudflare's CEO even called it Google's "DeepSeek moment," suggesting a paradigm shift for the industry [3].
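To make the sixfold figure concrete, the sketch below estimates the size of a key-value cache using the usual per-token accounting: one key vector and one value vector stored per layer. The model shape (32 layers, 8 key-value heads, head dimension 128, 16-bit values) and the 128,000-token conversation are hypothetical values chosen for illustration, not figures from Google's post; the factor of six is simply the upper bound cited above.

```python
def kv_bytes_per_token(layers, kv_heads, head_dim, bytes_per_value=2.0):
    """Approximate KV cache bytes stored for each token of context.

    The leading 2 accounts for one key and one value vector per layer.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value


# Hypothetical model shape and workload, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
TOKENS = 128_000      # one long conversation
COMPRESSION = 6       # the "as much as sixfold" claim

per_token = kv_bytes_per_token(LAYERS, KV_HEADS, HEAD_DIM)
baseline = per_token * TOKENS
compressed = baseline / COMPRESSION

# The same memory budget could instead hold a context six times longer.
tokens_same_budget = TOKENS * COMPRESSION

GIB = 1024 ** 3
print(f"per token          : {per_token / 1024:.0f} KiB")
print(f"baseline cache     : {baseline / GIB:.2f} GiB")
print(f"compressed cache   : {compressed / GIB:.2f} GiB")
print(f"tokens, same budget: {tokens_same_budget:,}")
```

Read one way, the compressed cache frees memory. Read the other way, the same memory serves a much longer context or more simultaneous users, which is the reading the analysts quoted in this article favor.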

Source: FT

Samsung's Blowout Quarter Eases Market Fears

Samsung Electronics' first-quarter results offered a powerful counternarrative to the panic. The company estimated higher profits in a single quarter than in the whole of last year, citing an "unprecedented supercycle" in the memory chip market

1

. Samsung generated up to $37 billion from its DRAM segment alone, with operating figures matching those of mainstream hyperscalers2

. The earnings guidance sent Samsung shares close to all-time highs and demonstrated that memory remains a critical bottleneck for AI companies, with no signs of weakening demand. At Samsung's annual meeting, co-chief executive Jun Young-hyun revealed the company is pursuing contracts of three or five years with major clients, shifting from existing quarterly and annual terms1

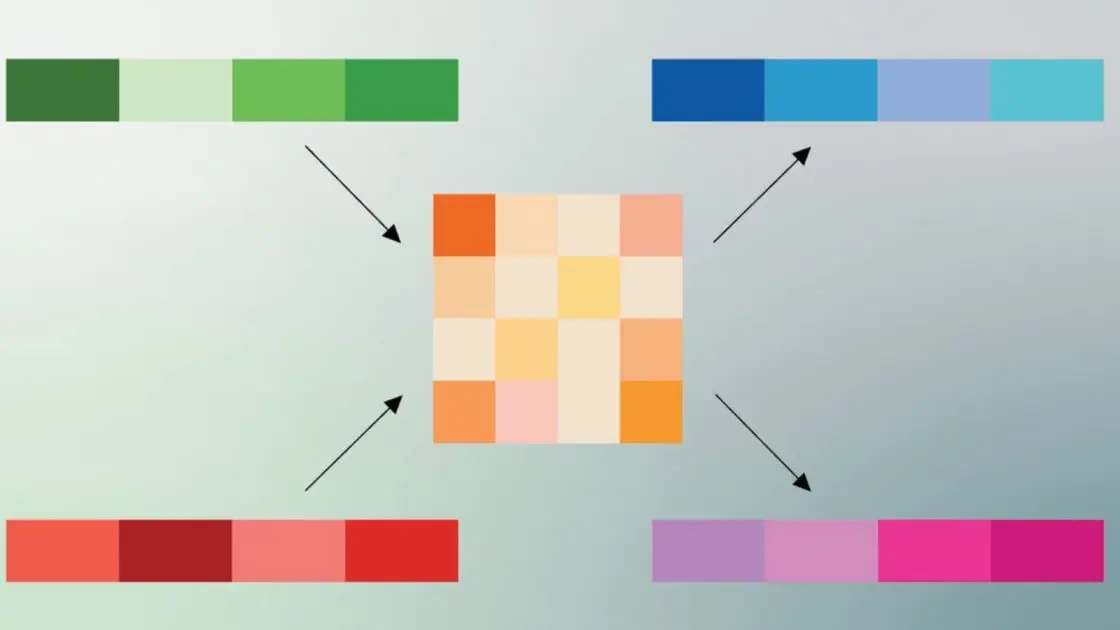

.Jevons Paradox: Why Efficiency May Increase Memory Demand

Analysts now suggest the market fundamentally misread TurboQuant's implications. Chae Min-suk of Korea Investment & Securities said the sell-off stemmed from "an interpretation error caused by confusing the roles of memory capacity and memory bandwidth" [3]. Rather than expecting overall memory consumption to fall, many experts invoke the Jevons Paradox, a 19th-century economic observation that greater efficiency often increases total resource usage. Economist William Stanley Jevons noted in 1865 that James Watt's more efficient steam engine resulted in greater coal usage because it made coal-powered technologies economically viable in far more contexts [1]. "Dramatically cheaper inference unlocks workloads previously too expensive to run," such as real-time coding assistants and multiple AI agents running at the same time, explained Kwon Seok-joon, a professor at Sungkyunkwan University, "driving total compute demand higher, not lower" [1].
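The Jevons argument can be reduced to one line of arithmetic. The sketch below is a deliberately simplified model, not anything taken from the analysts' notes: it assumes the cost of serving a token falls in proportion to the memory it needs, and that usage responds to cost with a constant price elasticity. The elasticity values are hypothetical. Under those assumptions, an efficiency gain of g changes total memory demand by a factor of g raised to the power (elasticity - 1), so demand rises only when elasticity exceeds 1.

```python
def relative_memory_demand(efficiency_gain, elasticity):
    """Total memory demand after an efficiency gain, relative to before.

    Assumes cost per token falls by `efficiency_gain` and that usage
    follows a constant-elasticity demand curve (both are simplifications).
    """
    usage = efficiency_gain ** elasticity       # tokens served, relative to before
    memory_per_token = 1 / efficiency_gain      # memory per token, relative to before
    return usage * memory_per_token


GAIN = 6  # the sixfold upper bound cited for TurboQuant

for elasticity in (0.5, 1.0, 1.5):  # hypothetical elasticities
    change = relative_memory_demand(GAIN, elasticity)
    print(f"elasticity {elasticity:.1f}: memory demand x {change:.2f}")
```

The bearish and bullish readings of TurboQuant therefore differ mainly in what they assume about elasticity: whether cheaper inference triggers more than a proportional increase in usage.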

Source: Digit

Technical Reality: Training Versus AI Inference

The technical specifics of TurboQuant reveal why its impact may differ from initial expectations. The compression algorithm only addresses AI inference memory, specifically the KV cache used during model interactions. Training AI models requires fundamentally different memory driven by activations, gradients, and optimizer states, areas where TurboQuant has zero effect [3]. Han In-su, one of the researchers whose work forms the foundation for TurboQuant, told the Financial Times the algorithm "can serve as a foundation for realising previously impossible high-difficulty tasks, such as processing much longer contexts within limited memory resources without sacrificing accuracy, or implementing high-performance AI on smaller devices" [1]. He added: "We never imagined that a technology that started from the academic question of 'How can we compress data more perfectly?' would cause such a huge social and economic ripple effect" [2].
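The training-versus-inference distinction can also be put in rough numbers. The sketch below uses a common rule of thumb for mixed-precision training with an Adam-style optimizer: about 16 bytes of state per parameter (2 for 16-bit weights, 2 for 16-bit gradients, and 12 for 32-bit master weights plus two optimizer moments). That rule of thumb, the 7-billion-parameter model size, and the exclusion of activations (which depend on batch size and checkpointing) are assumptions made here for illustration and do not come from the sources.

```python
def training_state_bytes(params, weight_bytes=2, grad_bytes=2, optimizer_bytes=12):
    """Rough memory for weights, gradients and optimizer state in training.

    Activations are excluded because they depend on batch size and
    on whether activation checkpointing is used.
    """
    return params * (weight_bytes + grad_bytes + optimizer_bytes)


PARAMS = 7_000_000_000   # hypothetical 7B-parameter model
KV_COMPRESSION = 6       # sixfold KV cache compression, as cited above

state = training_state_bytes(PARAMS)

# KV_COMPRESSION never enters the calculation: KV cache compression
# shrinks an inference-time buffer, not the state held during training.
print(f"training state: {state / 1e9:.0f} GB, with or without "
      f"{KV_COMPRESSION}x KV cache compression")
```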

High-Bandwidth Memory Supply Remains Tight

Actual order books tell a compelling story about sustained memory demand. Micron's CEO stated plainly that the company's entire 2026 HBM supply is already sold out, hardly indicative of a company facing demand destruction [3]. Ray Wang of SemiAnalysis said "the market has largely misread TurboQuant," adding that "increasing memory demand will be required for both training and inference as AI models evolve and innovation advances" [1]. DRAM contract pricing is expected to grow in upcoming quarters, and memory is entering a phase where no entity in the AI world can operate without it. Dell's CEO Michael Dell recently noted that demand could skyrocket to unprecedented levels, driven by dramatic rises in per-processor memory consumption [2]. The structural shift toward AI datacenters means memory shortages could persist through the second half of 2027 and beyond, depending on how quickly suppliers like Samsung, SK Hynix, and Micron can bring new production lines online [2].

What This Means for the Memory Boom

Kim Young-gun of Mirae Asset Securities invoked "déjà vu" over Kubernetes, Google's containerization technology that made it possible to run multiple applications on a single server, greatly improving hardware efficiency. Upon widespread adoption in the late 2010s, concerns emerged that demand for servers and memory would fall. In practice, the opposite occurred, with lower costs encouraging much greater usage [1]. Any potential impact on South Korean chipmakers would be cushioned by the increasing use of long-term contracts from AI service providers seeking to lock in supply. "Memory is becoming a bit less cyclical, driven by accelerating and sustainable AI demand," Wang noted. "Contract pricing now matters more than spot pricing" [1]. For now, TurboQuant remains a concept awaiting real-world validation. Its actual impact will become clearer after presentation at the International Conference on Learning Representations in Brazil in late April, when people outside Google are expected to test it. Ultimate success depends on whether the largest tech groups can deploy it at scale, but the consensus among analysts suggests that even if TurboQuant delivers on its promises, the result will be expanded applications for large language models rather than reduced memory chip demand.

Summarized by

Navi
