Memory costs surge to 30% of hyperscaler spending as Nvidia secures preferential supply terms

2 Sources

[1]

Memory will consume 30% of hyperscaler data center spending this year, a 4X increase over 2023 -- Nvidia gets preferential supply terms well below standard market rates, says analyst firm

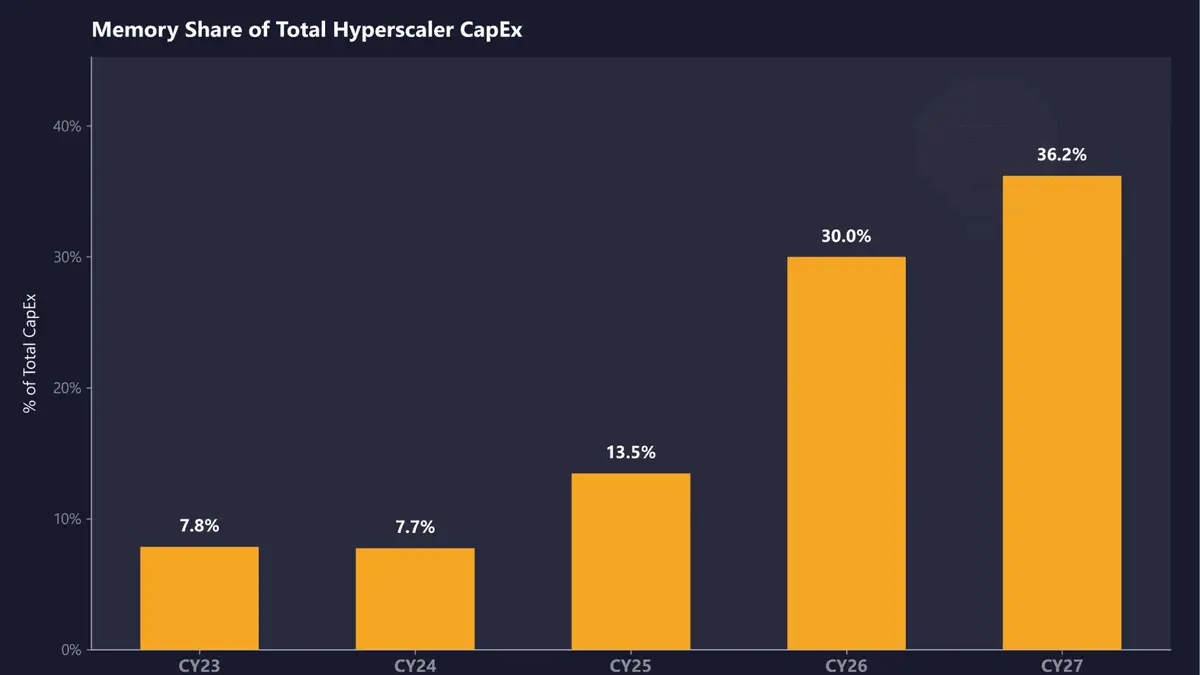

SemiAnalysis estimates that memory will account for roughly 30% of total hyperscaler capex in calendar year 2026, up from approximately 8% in CY23 and CY24. The firm projects that share will climb further in CY27, representing a near four-fold shift in just four years as DRAM prices surge and HBM remains massively undersupplied. SemiAnalysis expects DRAM prices to more than double in CY26, with another double-digit ASP increase in CY27. LPDDR5 contract pricing has already risen more than three times since Q1 2025, and the firm estimates open-market pricing will likely exceed $10/GB this quarter.

HBM, the vertically stacked memory at the core of AI accelerators, remains undersupplied through CY27 according to SemiAnalysis's findings, with memory now constituting a massive share of the approximately $250 billion in incremental hyperscaler spend projected for this calendar year. This is already reflected in AI server pricing, with SemiAnalysis noting that B200 prices are set to rise by up to 20% by year-end, driven in large part by memory cost inflation. That aligns with the broader industry, where manufacturers have acknowledged steep component cost increases in recent earnings calls. Dell's COO, Jeff Clarke, described the rate of cost movement as "unprecedented" in the company's Q325 earnings call back in November. Counterpoint Research has separately projected that DDR5 64GB RDIMM modules could cost twice as much by the end of 2026 as they did in early 2025. AI servers built on Nvidia's LPDDR-based platforms are seeing some of the steepest increases because of the sheer volume of memory per system.

SemiAnalysis also noted that Nvidia receives what the firm calls "VVP" (Very Very Preferred) DRAM pricing from suppliers, "well below [the rates paid by] both hyperscalers and the broader market." This, according to SemiAnalysis, compresses Nvidia's own server cost exposure and pushes down overall market pricing benchmarks, masking how severe the supply crunch actually is for everyone else. AMD sits on the other side of that dynamic: its AI accelerator SKUs generally carry higher memory content per unit, and the company doesn't benefit from the same preferential supplier pricing. That leaves AMD "structurally more exposed [to memory cost inflation] at a time when it operates at far lower AI accelerator scale." In other words, Nvidia's purchasing scale across HBM and conventional DRAM gives it leverage that smaller-volume buyers simply can't replicate.

SemiAnalysis concluded that while memory inflation is already partially reflected in CY26 capex guidance from major cloud operators, CY27 repricing is not yet captured in Wall Street estimates. Samsung, SK hynix, and Micron have all diverted production capacity toward HBM and high-margin enterprise DRAM, leaving conventional DDR5 and LPDDR5 supply constrained, and new fab capacity from Micron's $9.6 billion Hiroshima HBM facility and SK hynix's Icheon and Cheongju expansions won't be delivering meaningful output until 2027 or 2028 at the earliest.

[2]

Hyperscalers Are 'Scratching Their Heads' with Rising Memory Costs, But NVIDIA Might Be the Only One Smiling

The rising cost of memory has started to bite into the CapEx of major hyperscalers and their infrastructure buildouts, but NVIDIA enjoys an exclusive position in the supply chain. DRAM shortages have disrupted broader supply chains, affecting segments like AI and consumer markets, and hyperscalers have few options other than buying DRAM at outrageous prices, either on spot or contract terms. Based on information from SemiAnalysis and supply chain reports, memory prices now influence hyperscaler investments to a much greater extent, accounting for up to 30% of total spend. Memory inflation is a serious concern for AI giants right now, yet there are no signs that spending is being affected. SemiAnalysis says that memory spending could rise significantly higher in CY2027 as well, which is an indirect indication that the ongoing DRAM shortages are here to stay.

For hyperscalers in particular, the need for memory arises from memory pools connected via CXL switches that work alongside the rack-scale infrastructure. Technologies like DDR5 and LPDDR5 have seen massive adoption by hyperscalers in recent times, which is why shortages have been much more brutal for markets dependent on general-purpose DRAM. Another area where memory is important for hyperscalers is their custom silicon and rack efforts.

SemiAnalysis also notes in a follow-up post that NVIDIA has received VVP (Very Very Preferred) DRAM customer status within the supply chain, which gives the company both capacity and pricing leverage that allows it to beat the competition, at least in getting the best memory deal. This is in line with Jensen Huang's past comments about memory shortages, in which he claimed that NVIDIA isn't affected at all because the firm saw the aggressive demand coming well ahead of others and entered into extensive supply contracts. We have talked about how NVIDIA's close relations with supply chain partners have helped the company gain the edge in the modern-day infrastructure buildout. This isn't limited to DRAM; the superior position extends to other key areas such as semiconductors, advanced packaging, and other elements within the broader AI supply chain. NVIDIA pairs high-end infrastructure with being unfazed by the supply crunch, which is one of the reasons competing with it requires far more than the general perception suggests.

Memory cost now accounts for 30% of total hyperscaler capital expenditure in 2026, a dramatic jump from just 8% in 2023 and 2024. SemiAnalysis reports that soaring DRAM prices and persistent HBM shortages are reshaping AI infrastructure economics, with Nvidia enjoying exclusive pricing advantages that competitors like AMD cannot match.

Memory Cost Explosion Reshapes Hyperscaler Economics

Memory cost has emerged as a dominant factor in hyperscaler budgets, now consuming roughly 30% of total capital expenditure (capex) in calendar year 2026, according to SemiAnalysis. This represents a dramatic escalation from approximately 8% in both CY23 and CY24, with projections indicating the share will climb even higher in CY27 [1]. The shift reflects a near four-fold increase in just four years as soaring DRAM prices and a persistent memory supply shortage fundamentally alter the economics of AI infrastructure buildout.
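The "near four-fold" figure follows directly from the two capex shares quoted above. As a minimal sketch of that arithmetic, assuming the rounded 8% and 30% values are taken at face value (the function and variable names are illustrative, not from SemiAnalysis):

```python
def fold_increase(old_share_pct: float, new_share_pct: float) -> float:
    """Return how many times larger the new share is than the old one."""
    return new_share_pct / old_share_pct


# Approximate memory share of hyperscaler capex, in percent, as quoted above.
share_cy24_pct = 8.0
share_cy26_pct = 30.0

ratio = fold_increase(share_cy24_pct, share_cy26_pct)
point_change = share_cy26_pct - share_cy24_pct

print(f"Fold increase: {ratio:.2f}x")                    # 3.75x, i.e. "near four-fold"
print(f"Change: {point_change:.0f} percentage points")   # 22 percentage points
```

Because both inputs are rounded estimates, the 3.75x result is only as precise as the quoted shares.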

Source: Tom's Hardware

DRAM Prices Double as Supply Constraints Intensify

SemiAnalysis expects DRAM prices to more than double in CY26, followed by another double-digit average selling price increase in CY27 [1]. LPDDR5 contract pricing has already risen more than three times since Q1 2025, with open-market pricing projected to exceed $10 per gigabyte this quarter [1]. Counterpoint Research separately forecasts that DDR5 64GB RDIMM modules could cost twice as much by the end of 2026 compared to early 2025 [1]. Dell's COO Jeff Clarke described the rate of cost movement as "unprecedented" during the company's Q325 earnings call in November [1]. This cost inflation is already impacting AI server pricing, with B200 prices set to rise by up to 20% by year-end, driven largely by memory cost pressures [1].

Nvidia's Preferential Supply Terms Create Competitive Moat

Nvidia receives what SemiAnalysis calls "VVP" (Very Very Preferred) DRAM pricing from suppliers, securing rates "well below" those paid by both hyperscalers and the broader market [1][2]. This preferential supply arrangement compresses Nvidia's own server cost exposure and pushes down overall market pricing benchmarks, effectively masking how severe the supply crunch is for everyone else [1]. The company's VVP customer status within the supply chain provides both capacity and pricing leverage that competitors struggle to replicate [2]. Jensen Huang previously commented that Nvidia anticipated aggressive demand well ahead of others, entering into extensive supply contracts that insulated the company from shortages [2].

Source: Wccftech

AMD Faces Structural Disadvantage in Memory Economics

AMD sits on the opposite side of this dynamic, with its AI accelerators generally carrying higher memory content per unit while lacking the same preferential supplier pricing that Nvidia enjoys [1]. Operating at far lower AI accelerator volume than Nvidia makes AMD "structurally more exposed" to memory cost inflation at a time when scale matters most [1]. Nvidia's purchasing scale across High Bandwidth Memory (HBM) and conventional DRAM grants leverage that smaller-volume buyers simply cannot replicate, creating a competitive advantage that extends beyond chip architecture [1].

HBM Undersupply Persists Through 2027

High Bandwidth Memory (HBM), the vertically stacked memory at the core of AI accelerators, remains undersupplied through CY27 according to SemiAnalysis findings [1]. Memory now constitutes a massive share of the approximately $250 billion in incremental hyperscaler spend projected for this calendar year [1]. Samsung, SK hynix, and Micron have all diverted production capacity toward HBM and high-margin enterprise DRAM, leaving conventional DDR5 and LPDDR5 supply constrained [1]. New fab capacity from Micron's $9.6 billion Hiroshima HBM facility and SK hynix's Icheon and Cheongju expansions won't deliver meaningful output until 2027 or 2028 at the earliest [1].

Wall Street Underestimates Future Memory Impact

SemiAnalysis concluded that while memory inflation is already partially reflected in CY26 capex guidance from major cloud operators, CY27 repricing is not yet captured in Wall Street estimates [1]. For hyperscalers specifically, memory requirements extend beyond AI accelerators to include memory pools connected via CXL switches working alongside rack-scale infrastructure, as well as custom silicon and rack efforts [2]. Hyperscalers face limited options beyond purchasing DRAM at elevated prices through either spot or contract terms [2]. The indication that memory spending could rise significantly higher in CY2027 suggests ongoing DRAM shortages will persist, fundamentally reshaping hyperscaler data center spending priorities and competitive dynamics in the AI infrastructure market.

Summarized by Navi
