Meta abandons advanced AI chips after design roadblocks, turns to Nvidia and AMD instead

3 Sources

[1]

Meta scraps advanced AI training chip after design roadblocks

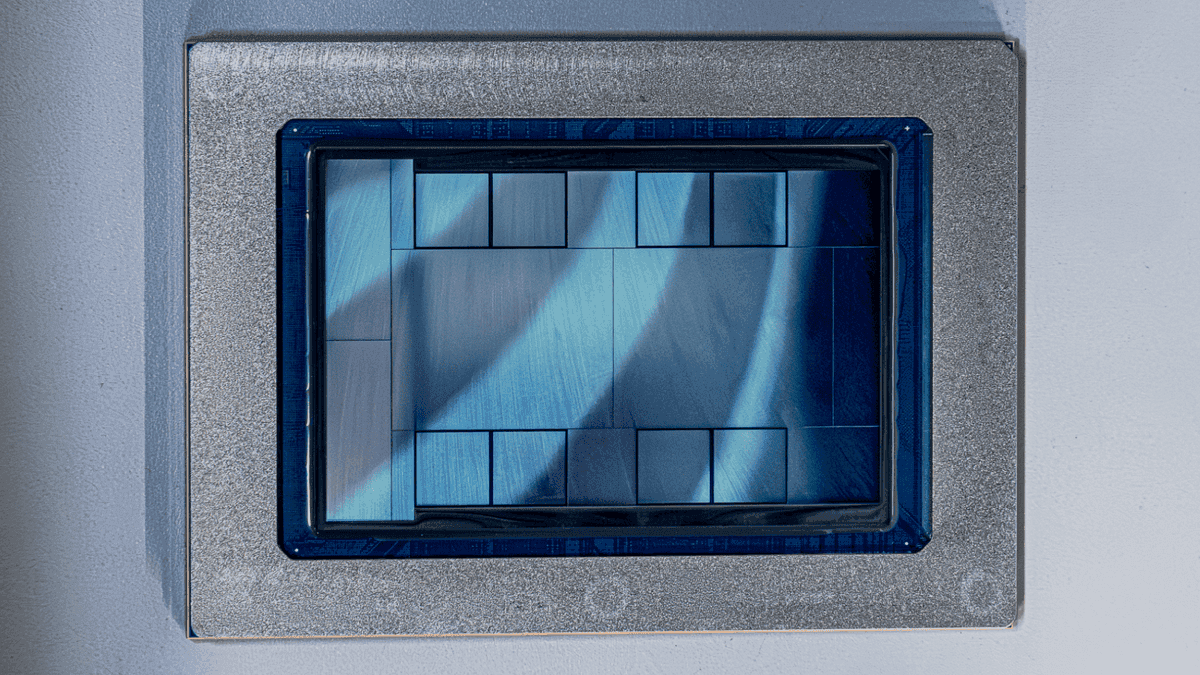

Meta scrapped its most advanced in-house AI training chip due to design challenges and is shifting to a simpler version, according to The Information. The setback highlights the difficulty of building custom silicon to rival Nvidia and raises questions about Meta's ability to reduce its dependence on external suppliers. The company's internal chip design efforts "hit roadblocks," forcing the abandonment of its most ambitious training chip. The Meta Training and Inference Accelerator program has a history of setbacks. Meta scrapped an earlier inference chip after it underperformed in small-scale testing and pivoted in 2022 to billions of dollars' worth of Nvidia GPUs. The company eventually deployed an MTIA chip for inference tasks on Facebook and Instagram, but the training chip has proven more elusive. Meta began testing its first in-house AI training chip manufactured by TSMC after completing a tape-out. Analyst Jeff Pu noted in January that Meta appeared to be scaling back its in-house ASIC program, turning to AMD instead of its own chips or Google's TPUs. Meta announced a multiyear agreement with AMD on February 24 worth more than $100 billion for up to six gigawatts of MI450 GPUs. Shipments are scheduled to begin in the second half of this year. AMD issued Meta a performance-based warrant for up to 160 million shares of its common stock under the deal. A week earlier, Meta expanded its partnership with Nvidia for millions of next-generation Vera Rubin GPUs and Grace CPUs. That deal is likely worth tens of billions of dollars. Meta also signed a deal to rent Google TPUs for developing new AI models. Meta has committed up to $135 billion in capital expenditures for 2026 to build out AI infrastructure. The company plans to expand across more than 30 data centers. Meta co-developed its MTIA chips with Broadcom, which also partners with Google on its TPUs. 
Meta Chief Product Officer Chris Cox described the company's chip development journey last year as a "walk, crawl, run situation." The Financial Times previously reported that Meta experienced technical challenges with its next-generation training chips.

[2]

Meta Wants To Build Its Own AI Chips - Here's Why - Meta Platforms (NASDAQ:META)

Meta Platforms Inc. (NASDAQ:META) is advancing its artificial intelligence strategy through custom chip development, new AI tools, and content partnerships to strengthen its technology ecosystem and unlock future growth.

Meta Pushes Ahead With In-House AI Chips

Meta continues to pursue in-house chip development even after securing major supply agreements with leading semiconductor companies. Speaking at a Morgan Stanley technology conference, Meta CFO Susan Li said the company is developing custom processors tailored to its own workloads, particularly those tied to ranking and recommendation systems, where Meta has already deployed custom silicon at scale. Li said Meta plans to expand the use of its custom chips over time, including eventually building processors capable of training future AI models, Bloomberg reported on Thursday. Although Meta is not a cloud provider, it operates some of the largest data centers used to train and run AI models. Li said Meta evaluates different chip types for different tasks and considers custom silicon a key part of its long-term strategy for handling AI workloads.

Meta Expands AI Tools, Infrastructure, And Content Deals

Meta is expanding its AI strategy with new tools, infrastructure, and content partnerships to strengthen its AI ecosystem and unlock new revenue opportunities. The company is testing a shopping research feature in its Meta AI chatbot, allowing users to request product recommendations with images, pricing, and links to merchant sites. Meta is also building a new applied AI engineering organization, led by Reality Labs executive Maher Saba, to improve model training and development alongside its Superintelligence Lab.

Analysts Weigh AI Spending And Long-Term Growth

Despite these initiatives, Meta shares have faced some pressure as investors question the scale of its AI investments. Meta stock gained just 1.72% in the last 12 months, trailing the NASDAQ Composite Index's 23% returns. Analysts say Meta faces near-term investor skepticism over AI spending but could still offer long-term upside as its AI strategy develops. Lynch noted the drop at a price-to-earnings ratio around 20 appears largely "self-inflicted" and said the shares could rebound if Meta shows greater discipline in AI-related spending. Meanwhile, Jefferies analyst Brent Thill views the recent pullback as a potential buying opportunity. Thill expects Meta's new text and image AI models, set to launch in the first half of 2026, to help reshape investor perceptions of the company's AI capabilities. He also highlighted cost cuts in the metaverse division, improved AI-driven ad performance, and WhatsApp's monetization potential, projecting the platform's revenue could grow fourfold by fiscal 2029.

META Price Action: Meta Platforms shares were down 0.80% at $662.36 during premarket trading on Thursday, according to Benzinga Pro data.

[3]

Meta's internal chip design efforts face hurdles

Meta Platforms (META) is facing issues with AI chips being developed internally and has discarded its most advanced chip, shifting focus to a less complicated version, The Information reported, citing people with knowledge of the matter. Last week, the company scrapped ...

Meta's decision to shift from in-house advanced chips to renting and partnering for established AI chips highlights its current difficulty matching Nvidia's capabilities, forcing greater reliance on competitors' products. Meta faces design complexities, internal skepticism, a lack of stable training software, potential for costly delays, and difficulties scaling the production of more advanced chips, making in-house solutions less viable for now. Meta's multi-billion-dollar deals and partnerships with these companies will provide access to leading AI chips, enabling it to power next-generation AI infrastructure through established, scalable technologies rather than its own unfinished chips.

Meta scrapped its most advanced in-house AI training chip due to design challenges and is shifting to a simpler version. The setback highlights the difficulty of building custom silicon to rival Nvidia and raises questions about Meta's ability to reduce its dependence on external suppliers despite committing up to $135 billion in capital expenditures for 2026.

Meta scraps most advanced training chip amid design challenges

Meta abandoned its most ambitious in-house AI training chip after encountering significant design roadblocks, according to a report from The Information [1]. The company is now pivoting to a simpler version of the chip, marking another setback in its efforts to reduce reliance on external suppliers like Nvidia and AMD. The decision underscores the complexity of custom silicon development and the challenges tech giants face when attempting to match Nvidia's dominance in AI chip manufacturing [3].

Source: Benzinga

The Meta Training and Inference Accelerator (MTIA) program has experienced multiple setbacks since its inception. Meta previously scrapped an earlier inference chip after it underperformed in small-scale testing, forcing the company to pivot in 2022 toward billions of dollars' worth of Nvidia GPUs [1]. While Meta eventually deployed an MTIA chip for inference tasks on Facebook and Instagram, the training chip has proven far more elusive. The company had begun testing its first in-house AI training chip manufactured by TSMC after completing a tape-out, but design complexities, internal skepticism, and a lack of stable training software made the in-house solution less viable [3].

Meta commits over $100 billion to AMD and Nvidia partnerships

Meta announced a multiyear agreement with AMD on February 24 worth more than $100 billion for up to six gigawatts of MI450 GPUs, with shipments scheduled to begin in the second half of this year [1]. AMD issued Meta a performance-based warrant for up to 160 million shares of its common stock under the deal. A week earlier, Meta expanded its partnership with Nvidia for millions of next-generation Vera Rubin GPUs and Grace CPUs in a deal likely worth tens of billions of dollars. Meta also signed an agreement to rent Google TPUs for developing new AI models [1].

Analyst Jeff Pu noted in January that Meta appeared to be scaling back its in-house ASIC program, turning to AMD instead of its own chips or Google's TPUs [1]. These multi-billion-dollar deals and partnerships will provide Meta with access to leading AI chips, enabling the company to power next-generation AI infrastructure through established, scalable technologies rather than its own unfinished chips [3].

Meta maintains long-term commitment to custom silicon despite setbacks

Despite these challenges, Meta continues to pursue in-house AI chip development as part of its long-term strategy. Speaking at a Morgan Stanley technology conference, Meta CFO Susan Li said the company is developing custom processors tailored to its own workloads, particularly those tied to ranking and recommendation systems, where Meta has already deployed custom silicon at scale [2]. Li stated that Meta plans to expand the use of its custom chips over time, including eventually building processors capable of training future AI models [2].

Meta Chief Product Officer Chris Cox described the company's chip development journey last year as a "walk, crawl, run situation," acknowledging the gradual nature of progress in custom silicon development [1]. Meta co-developed its MTIA chips with Broadcom, which also partners with Google on its TPUs. Although Meta is not a cloud provider, it operates some of the largest data centers used to train and run AI models, making chip efficiency critical to its operations [2].

Massive capital expenditures fuel AI infrastructure expansion

Meta has committed up to $135 billion in capital expenditures for 2026 to build out AI infrastructure across more than 30 data centers [1]. This massive investment reflects the company's determination to compete in the AI race, even as it faces near-term investor skepticism over AI spending. Meta stock gained just 1.72% in the last 12 months, trailing the NASDAQ Composite Index's 23% returns [2]. Analysts note that Meta faces pressure as investors question the scale of its AI investments, with the stock trading at a price-to-earnings ratio around 20 [2].

Jefferies analyst Brent Thill views the recent pullback as a potential buying opportunity, expecting Meta's new text and image AI models set to launch in the first half of 2026 to help reshape investor perceptions of the company's AI capabilities [2]. The company is also building a new applied AI engineering organization, led by Reality Labs executive Maher Saba, to improve model training and development alongside its Superintelligence Lab. Meta is testing a shopping research feature in its Meta AI chatbot, allowing users to request product recommendations with images, pricing, and links to merchant sites [2]. These initiatives signal Meta's broader strategy to expand AI tools and unlock new revenue opportunities, even as AI chip design challenges force greater reliance on competitors' products in the near term.

Source: Seeking Alpha

Summarized by Navi

Related Stories

Meta Tests In-House AI Training Chip, Challenging Nvidia's Dominance

12 Mar 2025•Technology

Meta unveils four MTIA chip generations to power AI inference and reduce Nvidia dependence

12 Mar 2026•Technology

Meta's AI Ambitions: Acquiring Rivos and Investing Billions in Compute Power

01 Oct 2025•Technology