Nvidia's networking business overtakes Cisco as AI infrastructure dominance expands beyond chips

3 Sources

[1]

Nvidia is quietly building a multibillion-dollar behemoth to rival its chips business | TechCrunch

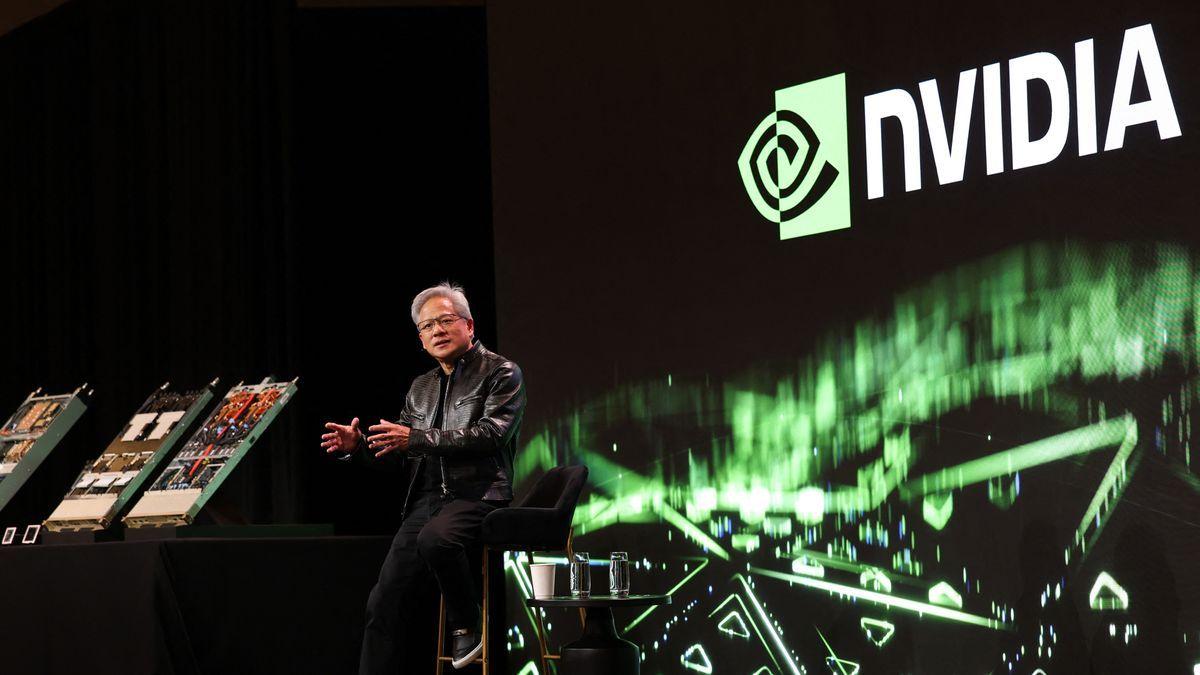

Nvidia CEO Jensen Huang was years ahead of the market when he pushed the company to start tinkering with building AI-specific chips back in 2010, more than a decade before the current buzz around AI. A similar move in 2020 -- doubling down on data center networking with a strategic acquisition -- has led to one of the company's most lucrative and quickly growing divisions, but with little fanfare. In just a few years, Nvidia's networking business, designed to connect data centers, has grown into the company's second-largest revenue driver behind compute. Last quarter, it reported $11 billion in revenue, a year-over-year increase of 267%, and brought in more than $31 billion for the full year, according to Nvidia's most recent earnings. Driven by growth in AI processing, the division includes tech like NVLink, which powers communication between GPUs on a data center rack; Nvidia InfiniBand switches, an in-network computing platform; Spectrum-X, the Ethernet platform for AI networking; and co-packaged optics switches, among others. Together, Nvidia's networking business includes all the tech needed for building an "AI factory," a data center designed for training AI models. Kevin Cook, a senior equity strategist at Zacks Investment Research, told TechCrunch that Nvidia's networking business is one of the most impressive new segments from the company. "[Nvidia's networking business] reports $11 billion for the quarter; that number is greater than Cisco's networking business, almost as big as the full-year estimates," Cook said, adding it does in one quarter what Cisco's business does in a year. And yet the business segment doesn't draw the same attention as the company's chip business, which is significantly larger. It also doesn't get as much fanfare as the company's gaming business, its original bread-and-butter business, which is nearly three times smaller.
Nvidia's networking business originated with Mellanox, a networking company founded in Israel in 1999 that Nvidia acquired in 2020 for $7 billion. Kevin Deierling is a senior vice president of networking at Nvidia and joined the company through the acquisition of Mellanox. Deierling told TechCrunch that people not knowing about Nvidia's networking business could be his fault for doing a bad job of marketing it -- but he doesn't like that answer. "People think of networking as just, 'I got a printer, and I need to connect to it,'" Deierling said. "Jensen said this the first day when he acquired us, he said the data center is the new unit of computing. Networking is a lot more than just moving the smaller amounts of data between a compute node, it's actually a foundation." Deierling said that while he didn't really understand why Huang bought the company at the time, he gets it now. Having a networking business alongside its GPU business allows the company to sell its chips with the tech that they work best with. "When Jensen bought Mellanox in 2020, he saw that was the missing piece to make GPUs a complete package," Cook, the Zacks analyst, said. Deierling added that he thinks another aspect of Nvidia networking's success is that the company sells the tech only as a full-stack solution, as opposed to individual components, and does not sell the tech itself, but rather through its partners. "I can't think of other companies that have [the] full-stack capabilities that we have," Deierling said. "We are really different. We build the full compute stack, fully integrated stack, and then we go to market through all of our partners." Nvidia announced a slew of updates to its networking system during Huang's keynote address on March 16 at the company's annual Nvidia GTC technology conference. The company launched the Nvidia Rubin platform, which includes six new chips to power an "AI supercomputer."
Nvidia also announced a new Nvidia Inference Context Memory Storage platform and more efficient Nvidia Spectrum-X Ethernet Photonics switches, among other products. "It's no longer a peripheral to connect the printer, some other slow I/O device," Deierling said about networking. "It's fundamental to the computer. In the old days, we had what was called the back lining inside the computer. Today, the network is the back lining of the AI factory, and it's super important."

[2]

Nvidia wants to own your AI data center from end to end

Nvidia's broadening ambition includes robotics and even AI in space. The image Nvidia suggested to the media for its GTC conference in San Jose, Calif., this week is a line of 40 rectangles representing data center server racks of various kinds. No labels, just the racks standing like a bookshelf of the complete works of Shakespeare, or, more ominously, a phalanx of soldiers. The implicit message of the imposing wall of racks is that Nvidia, if it doesn't already, will ultimately own all processing in the data center, from one end to the other. On stage at the show, Nvidia CEO Jensen Huang used Monday's keynote address to announce a broadening of the company's chip and system offerings. Existing product lines include the Vera CPU chip and the Rubin GPU chip; now a new kind of equipment rack for ultra-fast inference, called the LPX, joins them. The LPX rack, which will be available later this year, is made up of chips Nvidia has designed using intellectual property it licensed in December from AI startup Groq for $20 billion. The transformed Groq approach, implemented in the Nvidia Groq 3 LPU, will be used in the LPX in combination with Rubin GPUs to achieve an optimal balance between inference speed and the total amount of data that can be handled. The Groq 3 LPU "can combine the extreme FLOPS [floating-point operations per second] of GPUs and the bandwidth of LPUs into one," said Ian Buck, Nvidia's head of hyper-scale and high-performance computing, in a media pre-briefing. The original Groq LPU, which stands for "language processing unit," has 500 megabytes of on-chip SRAM, a form of fast memory much larger than a normal chip memory cache.
The SRAM can hold the weights -- aka neural parameters -- of large language models, as well as the "KV cache," the intermediate results of calculations that speed up inference. By using the LPU in a rack alongside GPUs, the LPU's SRAM can fetch the most-needed data, reducing the need to request data from off-chip DRAM, which GPUs have to do. That local SRAM cache dramatically lowers the latency, the round-trip time to retrieve and output an answer to a query, said Buck. "Things that took day-long queries are going to be produced in less than an hour," said Buck. The LPU can also perform query processing much more efficiently, Nvidia claims. Market research firm TechInsights has reported, based on existing Groq silicon prior to the Nvidia deal, that the LPU's "energy per bit" for memory access is one third of a picojoule, or roughly 20 times less than a GPU's 6 picojoules to access DRAM. For the same amount of money per token, Groq LPUs in the LPX rack will deliver 35 times as many tokens per second per megawatt of power, said Buck, using the example of 500,000 tokens processed per second for a price of $45 per million tokens. That drastic speed-up in fetching and delivering tokens also leads to a 10-fold increase in the dollars of revenue an AI provider can make per second per megawatt, said Buck. Though not explicitly mentioned, reducing off-chip DRAM use is increasingly important given that DRAM prices are soaring at the moment. The LPX rack is part of Huang's overall pitch to the AI world: that the company offers better economics by selling all parts of the equation -- not just the Vera, Rubin, and LPU chips, but also the software that runs on top of them. "From the five-layer-cake of energy, chips, the infrastructure itself, the models, and the applications, this multi-layer infrastructure is driving the revenue and job creation," Nvidia's Buck told reporters.
The LPX stands in that row of 40 rectangles alongside four other racks that Huang talked about, which make up his company's pitch for a complete AI infrastructure. There is the Vera-Rubin NVL72, a rack made up of 72 Rubin GPUs and 36 Vera CPUs; a new CPU-only rack, the Vera CPU rack, consisting of 256 Vera CPUs and 400 terabytes of DRAM; a new kind of data storage rack, the Bluefield 4 STX, that acts as a kind of repository for the KV cache across all GPUs; and the latest version of Nvidia's Ethernet networking equipment rack, the Spectrum-6 SPX. Buck explained that the Vera CPU racks speed up all the tasks of agentic AI that would be too much for a conventional Intel- or AMD-based x86 CPU. "GPUs today actually call out to CPUs in order to do the tool calling, SQL query, and the compilation of code," said Buck. "This sandbox execution is a critical part of both training and deploying agents across the data centers, and those CPUs need to be fast." He said the Vera CPU rack can be one and a half times faster on single-threaded CPU tasks versus existing x86 CPUs. As a result, the STX racks will quadruple performance per watt, double pages per second for enterprise data, and deliver five times the tokens per second of context memory required for AI factories running agentic workflows. "The results are astounding," said Buck. The new data storage rack, explained Buck, is "a high-bandwidth shared layer optimized for storing and retrieving the massive key-value cache data generated by LLMs and agentic workflows." Although the rack is made up of Nvidia Bluefield DPUs (data-processing units, a companion to CPUs), the STX is only a "reference architecture," said Buck, meaning that the actual racks will be designed and built by Nvidia partners. The scale and breadth of ambition on display in Huang's keynote is remarkable.
As my colleague Radhika Rajkumar details in her coverage, Huang also talked up Nvidia's own offering for agentic AI, NemoClaw, and multiple offerings for so-called physical AI, principally robotics. Huang even talked up AI in space, though the details of satellite-based server deployments remain vague, according to Radhika. Buck characterized the wall of different servers as "an extreme end-to-end co-design in order to deliver the maximum value out of the AI factory for all of the workloads across AI and all industries." It is also a canny way for Nvidia to make its value proposition evident to anyone who would consider using competitor AMD's CPUs and GPUs, or using exotic AI equipment from startup challengers such as Cerebras Systems. With a portfolio of five racks of equipment, spanning all the functions of the data center, Huang is telling customers it will all work more efficiently, and generate more AI revenue, when it's all supplied by Nvidia. For Huang, it is also the culmination of a decades-long quest to take over parts of computing from the incumbents. In the past, he attempted to storm the server CPU market with beefy server CPUs such as Denver. But Huang had to withdraw when the entrenched power of Intel's Xeon CPU became too much to overcome. With a bookshelf now of the complete collected parts for a data center, Huang's company stands poised to define the computing age and overwhelm the companies that defined the prior age.

[3]

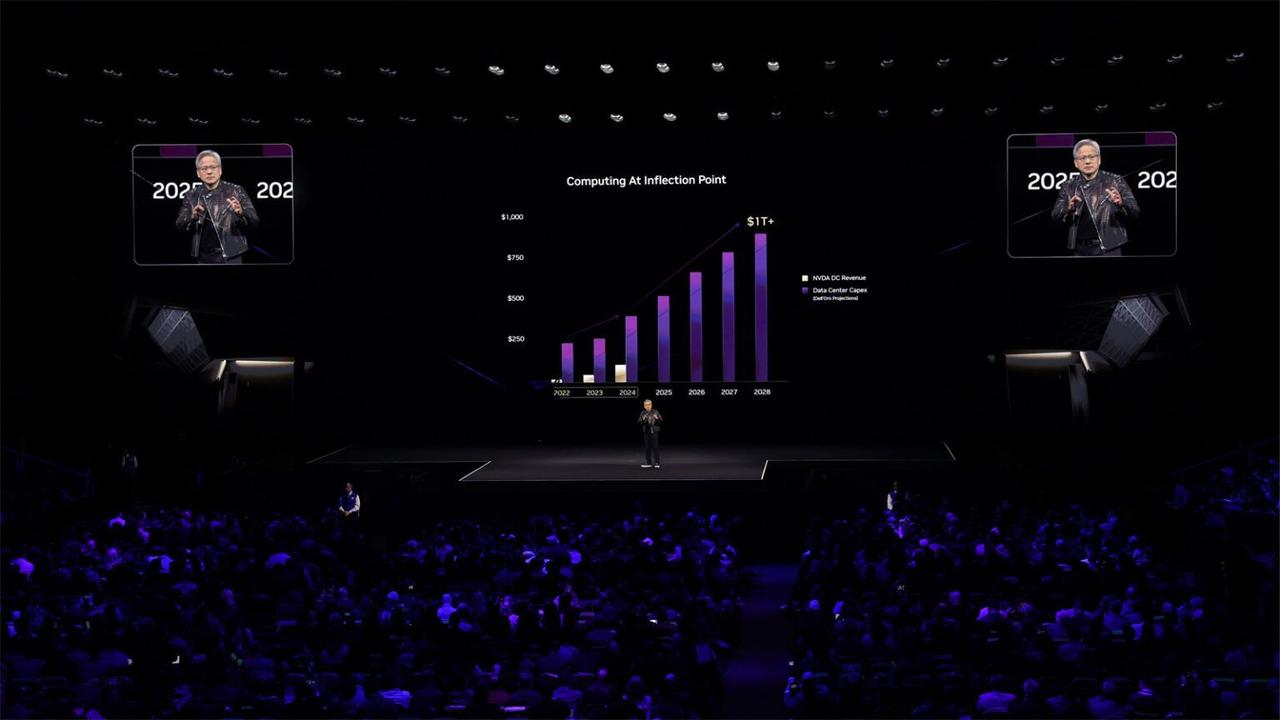

Analysis: Nvidia's AI Dominance Expands To Networking As It Makes Bigger CPU Push

Nvidia's recent networking revenue milestone is a sign of how the company's dominance of the AI infrastructure market is starting to extend well beyond AI chips into other product categories where it did not participate 10 years ago. Nvidia's push to provide the essential components and systems for AI infrastructure received a major validation point last month when the company revealed that its annual networking revenue surpassed Cisco's. While Nvidia CEO Jensen Huang did not mention Cisco by name on the company's latest earnings call, he repeated a point that the company made in its fourth-quarter earnings presentation pointing to the milestone: "Nvidia is the world's largest networking business." This was based on networking revenue within Nvidia's data center business reaching $31 billion for its 2026 fiscal year, which ended in late January, representing a 142 percent increase from the previous year. It was also "up more than 10 times" from when Nvidia acquired the foundation for the business, Mellanox Technologies, back in 2020. By contrast, Cisco made $28 billion in networking revenue for its 2025 fiscal year, which ended last July. And in the two companies' most recently ended quarters, Nvidia made nearly $3 billion more than Cisco did in networking. It's a sign of how Nvidia's dominance of the AI infrastructure market -- where it expects hyperscalers to spend nearly $700 billion this year -- is starting to extend well beyond AI chips into other product categories where the company did not participate 10 years ago. This, in turn, is putting Nvidia into more direct competition with a growing number of companies, including those it counts as partners, like Cisco. What drove Nvidia's networking revenue in the fourth quarter was a "continued ramp" of its NVLink compute fabric for its Grace Blackwell GB200 and GB300 rack-scale platforms as well as growth of its Spectrum-X Ethernet and Quantum InfiniBand networking platforms.
"The invention of NVLink really turbocharged our networking business. Every rack comes with nine nodes of switches, and each one of them has two chips in it, and in the future, they'll have more. And so the amount of switching that we do per rack is really quite incredible," Huang said on the Feb. 25 fourth-quarter earnings call. "We're also now the largest networking company in the world and if you look at Ethernet, we came into the Ethernet market about a couple of years ago, into Ethernet switching. And I think that we're probably the largest Ethernet networking company in the world today -- and surely will be soon. And so Spectrum-X Ethernet has been a home run for us," he added. The company has expanded into areas like networking and CPUs because of its view that it needs to develop these technologies in tandem with each other to deliver data center-scale computers that provide the fastest and most efficient performance for AI workloads. Nvidia calls this level of vertical integration "extreme co-design." "Every single generation, we are committed to deliver many X factors of performance per watt and performance per dollar, and that pace and our ability to do extreme co-design allows us to deliver that value and that benefit to the customers, and that is the single most vital thing as it relates to our value delivered," Huang said. Even with this "extreme co-design" push, Nvidia is beginning to see real interest in its CPUs as a stand-alone offering, signaling a greater threat to Intel and AMD. While the company has largely focused on integrating CPUs alongside GPUs into bespoke server tray designs for rack-scale platforms like the GB300, Nvidia recently announced deals with neocloud provider CoreWeave and hyperscaler Meta to supply a stand-alone offering of its upcoming Vera CPU for their data centers. 
In explaining Nvidia's evolving view of CPUs in the data center, Huang said that the company is seeing the need for a stand-alone offering because AI applications are now learning to use tools, many of which run in CPU-only compute environments. At the same time, other tools can run in environments powered by CPUs and GPUs, he added. "And Vera was designed to be an excellent CPU for post-training. And some of the use cases in the entire pipeline of artificial intelligence includes using a lot of CPUs," he said. While this signals increased competition for Intel and AMD, the company is also finding new ways to expand its accelerator chip capabilities -- where Nvidia is up against traditional rivals, startups and hyperscalers -- through its recent non-exclusive licensing deal with AI chip designer Groq that was reportedly worth $20 billion. As part of the deal, Nvidia hired members of Groq's team, including co-founder and CEO Jonathan Ross, to implement Groq's inference technology into Nvidia offerings. "As we did with Mellanox, we will extend Nvidia's architecture with Groq innovations to enable new levels of AI infrastructure, performance and value," Huang said. Nvidia is facing a greater threat from competitors than it has over the past several years. Just look at AMD's deal announced on Tuesday to supply 6 gigawatts of AI infrastructure powered by its Instinct GPUs to Meta. Or the continuing success of homegrown AI chip efforts by Amazon Web Services or Google, the latter of which is reportedly considering supplying TPUs to data centers owned by customers. Nevertheless, Huang and his lieutenant, CFO Colette Kress, gave an air of confidence and perhaps inevitability about the company's expectation for continued growth and dominance, which it is fueling with a large research and development budget along with a massive war chest it has been using to invest in companies of all sizes.
"Our pace of innovation, particularly at our scale, is unmatched, fueled by an annual R&D budget approaching $20 billion and our ability to extreme co-design across compute and networking, across chips, systems, algorithms and software," Kress said on the call. When a financial analyst asked if Nvidia is confident that hyperscalers, including cloud service providers, will continue growing their capital expenditures on AI infrastructure beyond this year, Huang said he is "confident in their cash flow growing." "And the reason for that is very simple," he added. "We have now seen the inflection of agentic AI and the usefulness of agents across the world and enterprises everywhere. You're seeing incredible compute demand because of it. In this new world of AI, compute is revenues. Without compute, there's no way to generate tokens. Without tokens, there's no way to grow revenues. So in this new world of AI, compute equals revenues." This has led Huang to believe that the industry has reached an "inflection point" because of what he called "exponential" growth in the number of tokens being generated by AI models that corresponds with demand for compute. With Nvidia set to reveal expanded offerings at its GTC 2026 event Monday, the company will illuminate exactly how it will continue to feed this new stage of demand. "The number of tokens that are being generated has really gone exponential, and so we need to inference at a much higher speed," Huang said in February.

Nvidia's networking division generated $31 billion in annual revenue, surpassing Cisco to become the world's largest networking company. Quarterly revenue grew 267% year-over-year to $11 billion, driven by demand for AI data center infrastructure. This expansion signals Nvidia's push beyond chips into end-to-end AI solutions, challenging established players across multiple categories.

Nvidia Becomes World's Largest Networking Company

Nvidia has quietly built a networking business that is now its second-largest revenue driver behind compute, a milestone that underscores the company's expanding grip on AI infrastructure. The networking division reported $11 billion in revenue last quarter, marking a 267% year-over-year increase, and brought in more than $31 billion for the full year [1]. This figure surpasses Cisco's $28 billion in networking revenue for its 2025 fiscal year, making Nvidia the world's largest networking company [3]. Kevin Cook, a senior equity strategist at Zacks Investment Research, noted that Nvidia's networking business does in one quarter what Cisco's business accomplishes in a year [1].

From Mellanox Acquisition to Market Leadership

The foundation of Nvidia's networking business traces back to its 2020 acquisition of Mellanox, an Israeli networking company founded in 1999, for $7 billion [1]. At the time, the strategic rationale wasn't immediately clear to everyone, including Kevin Deierling, now senior vice president of networking at Nvidia, who joined through the acquisition. However, Jensen Huang's vision has proven prescient. "When Jensen bought Mellanox in 2020, he saw that was the missing piece to make GPUs a complete package," Cook explained [1]. The networking revenue is now "up more than 10 times" from when Nvidia acquired Mellanox [3].

Building Complete AI Factories Through Vertical Integration

Nvidia's data center networking portfolio now encompasses the complete technology stack needed for building AI factories, data centers designed specifically for training AI models. The division includes NVLink, which powers communication between GPUs on data center racks; InfiniBand switches for in-network computing; the Spectrum-X Ethernet platform for AI networking; and co-packaged optics switches [1]. What drove networking revenue in the fourth quarter was a "continued ramp" of NVLink compute fabric for the Grace Blackwell GB200 and GB300 rack-scale platforms, along with growth of Spectrum-X Ethernet and Quantum InfiniBand networking platforms [3].

End-to-End AI Solutions Strategy

At the GTC conference in San Jose, Nvidia presented a vision of complete AI data center ownership through what it calls "extreme co-design" [3]. The company showcased a lineup of 40 server racks representing different components of its vertically integrated solutions [2]. During his keynote address on March 16, Huang announced the Nvidia Rubin platform, which includes six new chips to power an "AI supercomputer," along with a new Inference Context Memory Storage platform and more efficient Spectrum-X Ethernet Photonics switches [1]. The company also introduced the LPX rack for ultra-fast inference, incorporating technology from its $20 billion licensing deal with AI startup Groq [2].

Expanding Competition Across Multiple Fronts

Nvidia's dominance of the AI infrastructure market is extending well beyond AI chips into product categories where it didn't participate a decade ago, putting the company in more direct competition with partners like Cisco and with CPU makers Intel and AMD [3]. The company is seeing real interest in its Vera CPU as a stand-alone offering, with deals from CoreWeave and Meta to supply the upcoming CPU for their data centers [3]. Ian Buck, Nvidia's head of hyper-scale and high-performance computing, emphasized the economic advantages: "From the five-layer-cake of energy, chips, the infrastructure itself, the models, and the applications, this multi-layer infrastructure is driving the revenue and job creation" [2]. Nvidia expects hyperscalers to spend nearly $700 billion this year on AI infrastructure, a market where the company is positioning itself to capture value across every layer [3]. The company's approach of selling only full-stack solutions through partners, rather than individual components, differentiates it from traditional networking vendors and reflects its data center-scale AI compute philosophy [1].

Summarized by

Navi
