NVIDIA launches Physical AI Data Factory Blueprint to accelerate robotics and autonomous vehicles

2 Sources

[1]

NVIDIA Announces Open Physical AI Data Factory Blueprint to Accelerate Robotics, Vision AI Agents and Autonomous Vehicle Development

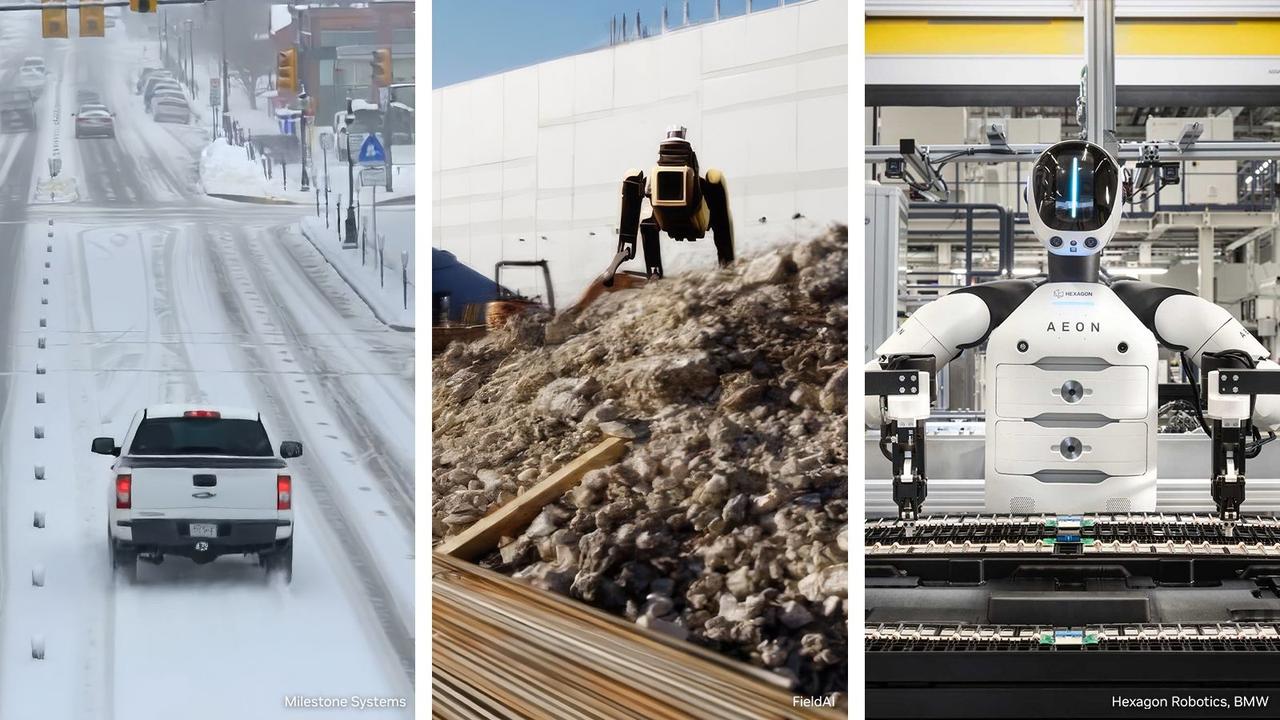

* Blueprint enables massive-scale data processing and curation, synthetic data generation, reinforcement learning and evaluation of physical AI models for vision AI agents, robotics and autonomous vehicles.
* Cloud service providers including Microsoft Azure and Nebius provide the blueprint to transform world-scale compute into agent-driven turnkey data production engines.
* Leading physical AI developers FieldAI, Hexagon Robotics, Linker Vision, Milestone Systems, Skild AI, Uber and Teradyne Robotics are using the blueprint to accelerate robotics, vision AI agents and autonomous vehicle development.

GTC -- NVIDIA today announced the NVIDIA Physical AI Data Factory Blueprint, an open reference architecture that unifies and automates how training data is generated, augmented and evaluated, reducing the costs, time and complexity of training physical AI systems at scale.

The blueprint enables developers to use NVIDIA Cosmos™ open world foundation models and leading coding agents to transform limited training data into large, diverse datasets -- including rare edge cases and long-tail scenarios that are expensive, time-consuming and often impractical to capture in the real world.

NVIDIA is collaborating with Microsoft Azure and Nebius to integrate the open blueprint with their cloud infrastructure and services, enabling developers to turn accelerated computing power into high-volume training data. Leading physical AI developers FieldAI, Hexagon Robotics, Linker Vision, Milestone Systems, RoboForce, Skild AI, Teradyne Robotics and Uber are using the blueprint to accelerate robotics, vision AI agents and autonomous vehicle development.

"Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data," said Rev Lebaredian, vice president of Omniverse and simulation technologies at NVIDIA. "Together with cloud leaders, we're providing a new kind of agentic engine that transforms compute into the high-quality data required to bring the next generation of autonomous systems and robots to life. In this new era, compute is data."

A Unified Engine for Physical AI Development

Physical AI follows scaling laws: Performance improves as data, compute and model capacity grow. The Physical AI Data Factory Blueprint serves as a single reference architecture that moves teams from raw data to model-ready training sets through modular, automated workflows:

* Curate and Search: NVIDIA Cosmos Curator processes, refines and annotates large-scale real-world and synthetic datasets.
* Augment and Multiply: Cosmos Transfer exponentially expands and diversifies curated data, multiplying real and simulated inputs to better capture rare and long-tail scenarios across environments and lighting conditions.
* Evaluate and Validate: NVIDIA Cosmos Evaluator, powered by Cosmos Reason and now available on GitHub, automatically scores, verifies and filters generated data to ensure physical accuracy and training readiness.

NVIDIA is using the Physical AI Data Factory Blueprint to train and evaluate NVIDIA Alpamayo, the world's first open reasoning-based vision language action models for long-tail autonomous driving. Skild AI is applying the blueprint to advance general-purpose robot foundation models, while Uber is using it to accelerate autonomous vehicle development.

Agent Driven Orchestration at Scale

Many robotics developers are not equipped to stand up and manage the complex AI infrastructure required to generate data at scale. NVIDIA OSMO, an open source orchestration framework, unifies and manages these workflows across compute environments, reducing manual tasks so developers can focus on building their models.

OSMO now integrates with leading coding agents such as Claude Code, OpenAI Codex and Cursor, enabling AI-native operations where agents proactively manage resources, resolve bottlenecks and accelerate model delivery at scale.

Powering the Global Physical AI Ecosystem

Cloud service providers play a critical role in providing the accelerated AI infrastructure, machine learning operations and orchestration services developers need to build and deploy physical AI at scale.

Microsoft Azure is integrating the Physical AI Data Factory Blueprint into an open physical AI toolchain, now available on GitHub. The blueprint offers integration with Azure services -- including Azure IoT Operations, Microsoft Fabric, Real-Time Intelligence, Microsoft Foundry and GitHub Copilot -- to provide enterprise-grade, agent-driven workflows for training and validating physical AI systems quickly and at scale.

FieldAI, Hexagon Robotics, Linker Vision and Teradyne Robotics are among the first to test the Azure physical AI toolchain for accelerating and scaling data generation, augmentation and evaluation across their perception, mobility and reinforcement learning pipelines.

Nebius has integrated OSMO into its AI Cloud, enabling developers to use the blueprint to deploy production-ready data pipelines tailored to their needs. Nebius's infrastructure powers the physical AI stack end to end, blending NVIDIA RTX PRO™ 6000 Blackwell Server Edition GPUs with ultrafast object storage, native data management and labeling, serverless execution and built-in managed inference.

Early users Milestone Systems, Voxel51 and RoboForce are harnessing the blueprint on Nebius infrastructure to accelerate model development for video analytics AI agents, autonomous vehicles and industrial humanoid robots.

The NVIDIA Physical AI Data Factory Blueprint is expected to be available on GitHub in April.

Watch the GTC keynote from NVIDIA founder and CEO Jensen Huang and explore sessions.

Feature image courtesy of Milestone Systems (left), FieldAI (middle) and Hexagon Robotics and BMW (right).

[2]

Nvidia expands physical AI with communication and data processing infrastructure blueprints - SiliconANGLE

Nvidia Corp. today announced blueprints for artificial intelligence training data generation to enable massive-scale processing and generation of data for the AI models needed to drive the next generation of robots.

The company also said it is partnering with T-Mobile and Nokia to work with a growing ecosystem of developers to bring robots, autonomous cars, sensors and edge applications into AI networks. The collaboration will use high-performance communication networks and AI radio access networks to distribute and deploy AI compute and apps over wide areas.

As AI moves beyond purely digital environments, the screen, reading files, summarizing emails and chatbots, and combines with sensors, cameras and robot limbs, it gains agency. This technology is called physical AI, which includes robots, drones and autonomous vehicles -- systems that must interact with and understand surroundings, reason through tasks and adapt to the environment in real time.

"Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data," said Rev Lebaredian, vice president of Omniverse and simulation technologies at Nvidia.

To support this need, Nvidia released a new Nvidia Physical AI Data Factory Blueprint, an open reference architecture that defines how training data is generated, augmented and evaluated for physical AI systems. It is designed to reduce costs and speed up training for the complex work of building physical AI systems at scale.

The company collaborated with Microsoft Corp. and Nebius Group N.V. to integrate the open blueprint with their cloud infrastructure. Physical AI development firms, including FieldAI, Hexagon Robotics, Linker Vision, Milestone Systems, RoboForce Inc., Skild AI, Teradyne Inc. and Uber Technologies Inc., have all signed on to use the blueprint to accelerate their own vision AI projects and autonomous vehicle development.

Under the blueprint, Nvidia said it is combining a number of technologies. Including its Cosmos world foundation model tools, it provides curated search for annotating real-world data and evaluation for automatically scoring, filtering and evaluating generated data to ensure physical accuracy. Cosmos also exponentially expands and diversifies curated data by multiplying real and simulated inputs to better capture rare and long-tail scenarios across environment and lighting conditions.

Additionally, Nvidia OSMO, an open-source orchestration framework, will help unify and manage robotics workflows across compute environments and reduce manual tasks so developers can focus on building models. The framework now integrates with coding agents such as Anthropic PBC's Claude Code, OpenAI Group PBC's Codex and Cursor.

Nvidia, T-Mobile US Inc. and Nokia said today they're working to bring AI applications into the next generation of AI-RAN infrastructure. AI-RAN, or artificial intelligence radio access networking, represents an ongoing evolution of telecommunications to transform wireless networking into platforms that can distribute edge AI computing. This will allow high-performance AI vision, computation and other capabilities across broad regions, allowing AI agents to understand the physical world across cities, utilities and industrial worksites.

T-Mobile became the first company in the United States to pilot Nvidia's AI-RAN infrastructure with Nokia's anyRAN software. It is now working with select Nvidia physical AI partners to demonstrate how cellular sites and mobile switches can support distributed edge AI workloads on 5G.

"Telecommunication networks are evolving into the AI infrastructure enabling billions of devices -- from vision AI agents to robots and autonomous vehicles -- to see, hear and act in real time," said founder and Nvidia Chief Executive Jensen Huang.

Nvidia said AI-RAN is built to address what it believes is a critical infrastructure gap: the lack of low-latency, secure and widely available connectivity. T-Mobile's 5G standalone network will provide wide-area backbone connectivity and Nvidia's powered infrastructure will offload the heavy computation from devices to the nearest edge locations. By providing near-edge compute, developers can build use cases such as smart city operations, automated utility inspection, vision-based facility management and real-time industrial safety.

Although very small models can run on devices, they lack the power and intelligence to do significant processing, which means very tiny models miss important details. Conversely, extremely large models require tremendous amounts of power and data to run, which means offloading the data across telecommunications lines to distant datacenters for processing. That adds a delay in getting critical information back to a device. The happy middle ground is running a large-enough AI model nearby on a server that could be within meters or kilometers with a delay of milliseconds or under and enough computational power to "think" about images, videos and safely react in real time.

"Turning networks into distributed AI computing platforms to unlock the full potential of Physical AI will require ultra-low latency and space-time coherency at the network edge for billions of endpoints," said T-Mobile CEO Srini Gopalan.

At an industrial scale, nearly 1.5 billion cameras run globally, but less than 1% of the footage generated ever gets reviewed by humans. To close this gap, Nvidia introduced Metropolis VSS 3 Blueprint, which allows AI agents to reason over video from the edge to the cloud. It allows them to decompose video to understand safety issues, lighting changes, predict potential hazards and understand specific events.

An agent watching a factory floor could reason across an assembly line on the verge of failing and warn workers before an event happens on the floor. Another agent connected with a pipeline could watch for leaks and send out repair crews when wet spots appear, or send notices to disaster recovery after a major storm strikes infrastructure. Partners using VSS to enhance safety include Caterpillar, KION, Hitachi, HCLTech, Siemens Energy, Tulip and Telit Cinterion.

NVIDIA introduced its Physical AI Data Factory Blueprint, an open reference architecture designed to automate training data generation for robotics, vision AI agents and autonomous vehicles. Cloud providers Microsoft Azure and Nebius are integrating the blueprint, while companies like Uber, Skild AI and Teradyne Robotics use it to scale development. NVIDIA also partnered with T-Mobile and Nokia to deploy AI-RAN infrastructure for distributed edge AI computing.

NVIDIA Unveils Open Blueprint to Transform Physical AI Development

NVIDIA announced the Physical AI Data Factory Blueprint, an open reference architecture that automates how training data is generated, augmented and evaluated for AI systems that interact with the real world [1]. The blueprint addresses a critical bottleneck in robotics and autonomous vehicle development: the massive-scale data processing required to train models capable of navigating real-world environments. By unifying data workflows, the architecture reduces the cost, time and complexity of building physical AI at scale [1].

Rev Lebaredian, vice president of Omniverse and simulation technologies at NVIDIA, emphasized the importance of this shift: "Physical AI is the next frontier of the AI revolution, where success depends on the ability to generate massive amounts of data" [2]. The blueprint enables developers to transform limited training data into large, diverse datasets that capture rare edge cases and long-tail scenarios, which are expensive, time-consuming and often impractical to capture in the real world [1].

Cloud Partners and Early Adopters Drive Adoption

Microsoft Azure and Nebius are integrating the Data Factory Blueprint with their cloud infrastructure and services, enabling developers to convert accelerated computing power into high-volume training data [1]. Microsoft Azure is integrating the blueprint into an open physical AI toolchain, now available on GitHub, with integration across Azure IoT Operations, Microsoft Fabric, Real-Time Intelligence, Microsoft Foundry and GitHub Copilot. The blueprint itself is expected to be available on GitHub in April [1].

Leading physical AI developers are already applying the blueprint. Uber is using it to accelerate autonomous vehicle development, while Skild AI applies the architecture to advance general-purpose robot foundation models [1]. FieldAI, Hexagon Robotics, Linker Vision and Teradyne Robotics are among the first to test the Azure physical AI toolchain to scale data generation, augmentation and evaluation across their perception, mobility and reinforcement learning pipelines, while Milestone Systems, Voxel51 and RoboForce are using the blueprint on Nebius infrastructure [1][2].

Source: NVIDIA

NVIDIA Cosmos Powers Data Workflows

At the core of the blueprint sits NVIDIA Cosmos, a suite of open world foundation models that curate, augment and evaluate training data [1]. Cosmos Curator processes and annotates large-scale real-world and synthetic datasets, while Cosmos Transfer exponentially expands curated data by multiplying real and simulated inputs across different environments and lighting conditions [1]. Cosmos Evaluator, now available on GitHub, automatically scores and filters generated data to ensure physical accuracy and training readiness [1].

NVIDIA OSMO, an open-source orchestration framework, manages these workflows across compute environments and now integrates with coding agents such as Claude Code, OpenAI Codex and Cursor [1][2]. This integration enables AI-native operations where agents proactively manage resources and resolve bottlenecks, allowing developers to focus on model development rather than infrastructure management [1].
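The curate, augment and evaluate stages described in this section can be pictured as three composable steps over a dataset. The sketch below is purely illustrative and does not use any Cosmos or OSMO API: the `Clip` record, the `quality` score, the 0.9 degradation factor and all thresholds are hypothetical stand-ins chosen to make the data flow visible.

```python
from dataclasses import dataclass, replace
from typing import Iterable, List, Sequence


@dataclass(frozen=True)
class Clip:
    """Hypothetical record for one training clip (not a Cosmos data type)."""
    clip_id: str
    lighting: str
    quality: float  # assumed 0..1 score; real pipelines would use richer metadata


def curate(clips: Iterable[Clip], min_quality: float = 0.5) -> List[Clip]:
    """Stand-in for curation: keep only clips good enough to serve as seeds."""
    return [c for c in clips if c.quality >= min_quality]


def augment(clips: Sequence[Clip],
            lightings: Sequence[str] = ("day", "dusk", "night")) -> List[Clip]:
    """Stand-in for augmentation: re-render each seed under other lighting.

    The 0.9 factor is an assumption modelling some loss of fidelity in
    generated variants.
    """
    variants = [
        replace(c, clip_id=f"{c.clip_id}-{light}", lighting=light,
                quality=c.quality * 0.9)
        for c in clips
        for light in lightings
        if light != c.lighting
    ]
    return list(clips) + variants


def evaluate(clips: Iterable[Clip], threshold: float = 0.6) -> List[Clip]:
    """Stand-in for evaluation: filter out clips that score below threshold."""
    return [c for c in clips if c.quality >= threshold]


def run_pipeline(raw: Iterable[Clip]) -> List[Clip]:
    """Chain the three stages: raw data in, training-ready data out."""
    return evaluate(augment(curate(raw)))


if __name__ == "__main__":
    raw = [Clip("a", "day", 0.9), Clip("b", "night", 0.4), Clip("c", "dusk", 0.65)]
    for clip in run_pipeline(raw):
        print(clip)
```

Keeping each stage a plain function over a list mirrors the modular workflow the blueprint describes; in this toy version the evaluation step is what stops low-fidelity generated variants from reaching the training set.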

AI-RAN Infrastructure Extends Physical AI Reach

NVIDIA also partnered with T-Mobile and Nokia to bring vision AI agents into next-generation AI-RAN infrastructure, transforming wireless networking into platforms that distribute edge AI computing across broad regions [2]. T-Mobile became the first U.S. company to pilot NVIDIA's AI-RAN infrastructure with Nokia's anyRAN software, working with select NVIDIA physical AI partners to demonstrate how cellular sites can support distributed edge AI workloads on 5G networks [2].

Jensen Huang, founder and CEO of NVIDIA, stated: "Telecommunication networks are evolving into the AI infrastructure enabling billions of devices -- from vision AI agents to robots and autonomous vehicles -- to see, hear and act in real time" [2]. The AI-RAN infrastructure addresses a critical gap by providing low-latency, secure connectivity that offloads heavy computation from devices to nearby edge locations, enabling use cases like smart city operations, automated utility inspection and real-time industrial safety [2].

This infrastructure approach solves a key challenge: very small models running on devices lack the intelligence for significant processing, while extremely large models in distant datacenters introduce delays. By running appropriately sized models on nearby servers through 5G networks, developers can balance computational power with response time for robotics applications that demand real-time decision-making [2].
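The trade-off between model size and response time can be made concrete with a small placement rule: choose the nearest compute tier that can both host the model and answer within the latency budget. The sketch below is an illustration only. The tier names, round-trip times and capacity limits are placeholder assumptions, not figures published by NVIDIA, T-Mobile or Nokia.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    """Hypothetical compute tier; all numbers below are illustrative."""
    name: str
    round_trip_ms: float       # assumed network round trip to reach the tier
    max_model_params_b: float  # assumed largest model it can host, in billions


# Ordered nearest first. Placeholder values, not measurements.
TIERS = [
    Tier("on-device", 0.0, 1.0),
    Tier("near-edge", 10.0, 30.0),
    Tier("datacenter", 80.0, 1000.0),
]


def pick_tier(model_params_b: float,
              latency_budget_ms: float,
              inference_ms: float) -> Optional[str]:
    """Return the nearest tier that fits the model and meets the budget.

    Returns None when no tier satisfies both constraints.
    """
    for tier in TIERS:
        fits = model_params_b <= tier.max_model_params_b
        fast_enough = tier.round_trip_ms + inference_ms <= latency_budget_ms
        if fits and fast_enough:
            return tier.name
    return None


if __name__ == "__main__":
    # A mid-sized model with a 50 ms budget lands on the near-edge tier
    # under these assumed numbers.
    print(pick_tier(model_params_b=8, latency_budget_ms=50, inference_ms=20))
```

Under these assumptions a model too large for the device but needing a fast response has only one viable home, the near-edge tier, which is the middle ground the article describes.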

Summarized by

Navi

Related Stories

NVIDIA Expands Omniverse Platform to Revolutionize AI Factory Design and Industrial Robotics

19 Mar 2025•Technology

NVIDIA Expands Omniverse with Generative AI for Physical AI Applications

07 Jan 2025•Technology

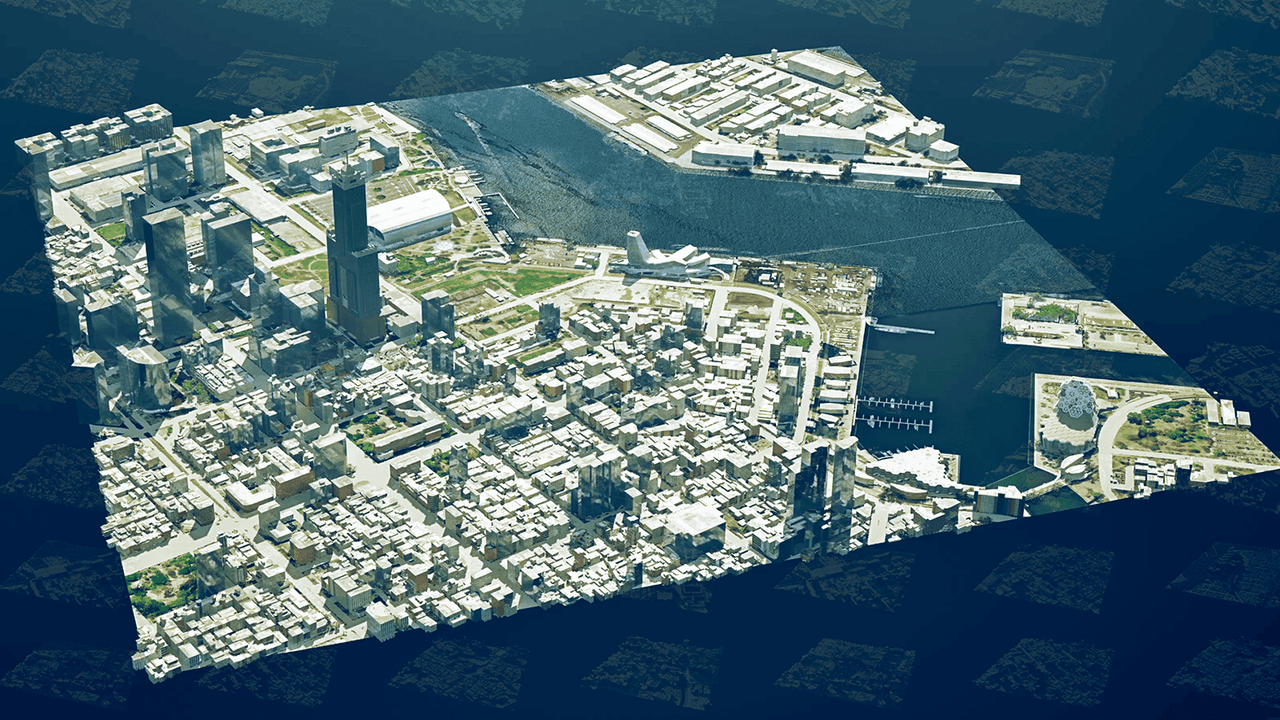

NVIDIA Unveils Smart City AI Blueprint to Revolutionize Urban Planning and Management

11 Jun 2025•Technology