Nvidia Plans Major Supply Chain Shift: From GPU Vendor to Complete AI Server Provider

3 Sources

3 Sources

[1]

JP Morgan says Nvidia is gearing up to sell entire AI servers instead of just AI GPUs and components -- Jensen's master plan of vertical integration will boost Nvidia profits, purportedly starting with Vera Rubin

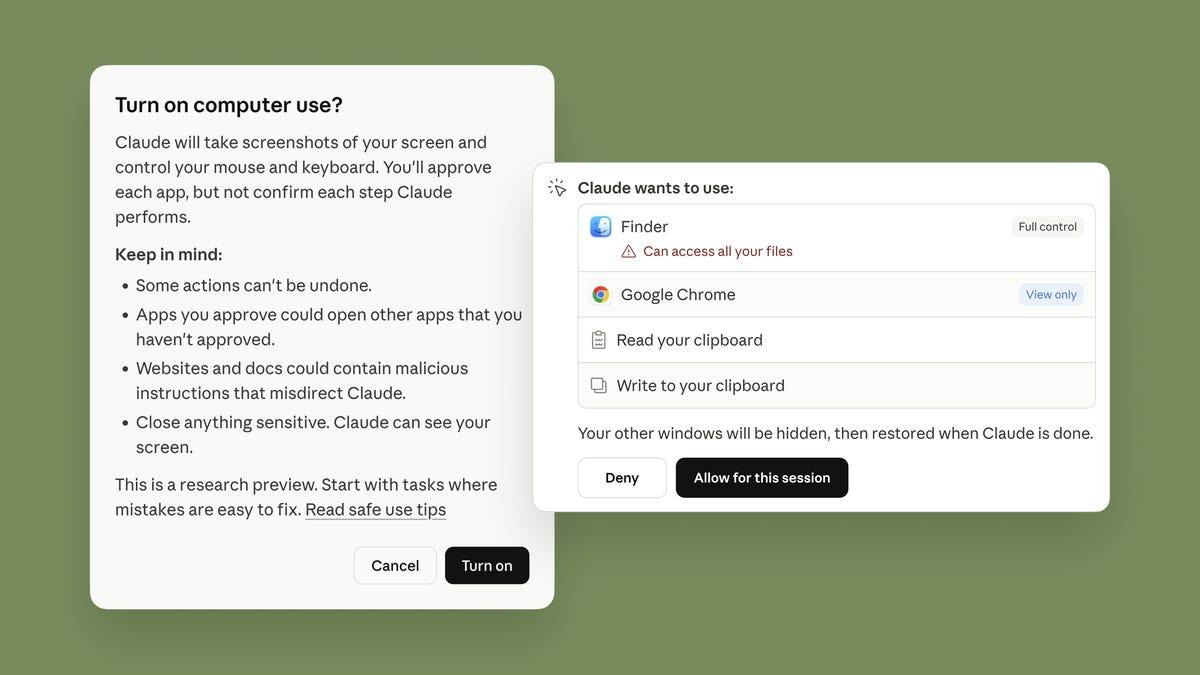

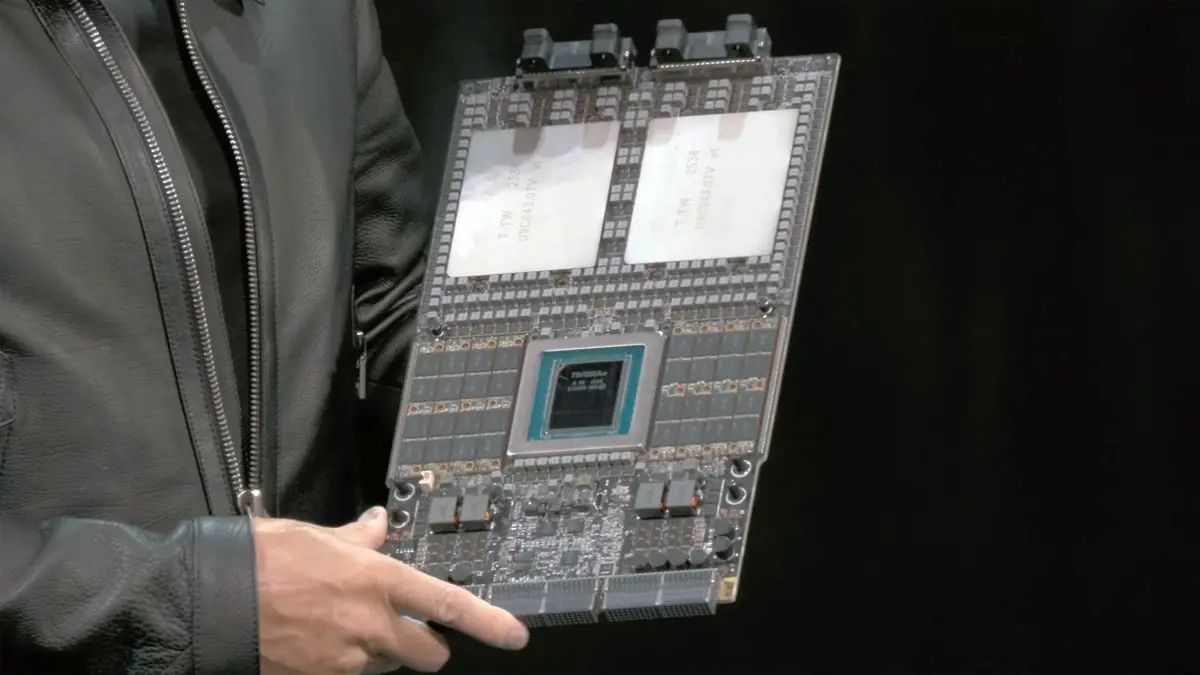

The launch of Nvidia's Vera Rubin platform for AI and HPC next year could mark significant changes in the AI hardware supply chain as Nvidia plans to ship its partners fully assembled Level-10 (L10) VR200 compute trays with all compute hardware, cooling systems, and interfaces pre-installed, according to J.P. Morgan (via @Jukanlosreve). The move would leave major ODMs with very little design or integration work, making their lives easier, but would also trim their margins in favor of Nvidia's. The information remains unofficial at this stage. Starting with the VR200 platform, Nvidia is reportedly preparing to take over production of fully built L10 compute trays with a pre-installed Vera CPU, Rubin GPUs, and a cooling system instead of allowing hyperscalers and ODM partners to build their own motherboards and cooling solutions. This would not be the first time the company has supplied its partners with a partially integrated server sub-assembly: it did so with its GB200 platform when it supplied the whole Bianca board with key components pre-installed. However, at the time, this could be considered as L7 - L8 integration, whereas now the company is reportedly considering going all the way to L10, selling the whole tray assembly -- including accelerators, CPU, memory, NICs, power-delivery hardware, midplane interfaces, and liquid-cooling cold plates -- as a pre-built, tested module. If the information is correct, and Nvidia will indeed ship its partners L10 compute trays (which probably account for 90% of the cost of a server), then Nvidia will only leave its partners with rack-level integration rather than server design. They would still build the outer chassis, integrate power supplies depending on requirements, install sidecars or CDUs for rack-level cooling, add their own BMC and management stack, and perform final assembly and testing. These tasks matter operationally, but they do not differentiate hardware in a meaningful way. This move promises to shorten the ramp for VR200 as Nvidia's partners will not have to design everything in-house and could lower production costs due to the volume of scale ensured by a direct contract between Nvidia and an EMS (most likely Foxconn as the primary supplier and then Quanta and Wistron, but that is speculation). For example, a Vera Rubin Superchip board recently demonstrated by Jensen Huang uses a very complex design, a very thick PCB, and only solid-state components. Designing such a board takes time and costs a lot of money, so using select EMS provider(s) to build it makes a lot of sense. J.P. Morgan reportedly mentions the increase in power consumption of one Rubin GPU from 1.4 kW (Blackwell Ultra) to 1.8 kW (R200) and even 2.3 kW (a previously unannounced TDP for an allegedly unannounced SKU (Nvidia declined a Tom's Hardware request for comment on the matter) and increased cooling requirements as one of the motivations for moving to supply the whole tray instead of individual components. However, we know from reported supply chain sources that various OEMs and ODMs, as well as hyperscalers like Microsoft, are experimenting with very advanced cooling systems, including immersion and embedded cooling, which underscores their experience. However, Nvidia's partners will shift from being system designers to becoming system integrators, installers, and support providers. They are going to keep enterprise features, service contracts, firmware ecosystem work, and deployment logistics, but the 'heart' of the server -- the compute engine -- is now fixed, standardized, and produced by Nvidia rather than by OEMs or ODMs themselves. Also, we can only wonder what will happen with Nvidia's Kyber NVL576 rack-scale solution based on the Rubin Ultra platform, which is set to launch alongside the emergence of 800V data center architecture meant to enable megawatt-class racks and beyond. Now the only question is whether Nvidia further increases its share in the supply chain to, say, rack-level integration?

[2]

Nvidia May Shift to Fully Assembled AI Servers Starting With Vera Rubin

J.P. Morgan believes Nvidia is getting ready to take a much bigger slice of the AI server market by shifting from selling just GPUs to selling nearly complete AI systems. According to the report, the first big step in that direction will be the Vera Rubin platform. Instead of shipping GPUs, CPUs, boards, and cooling hardware separately, Nvidia may start delivering fully assembled L10 compute trays -- essentially the entire heart of an AI server in one pre-built module. If this pans out, it would be a major change for the AI hardware supply chain. Today, OEMs and ODMs design motherboards, engineer power delivery layouts, choose the cooling approach, and put everything together. With Rubin, Nvidia may take most of that away. Partners would still build the outer chassis, install rack-level cooling gear, attach power shelves, add their management controllers, and handle system validation. But the complex part -- the compute engine that eats most of the budget -- would arrive ready-made from Nvidia. For OEMs, that means less engineering effort and fewer design risks, but also fewer opportunities to differentiate their hardware. This move looks like a continuation of a trend that started with the GB200 platform, where Nvidia supplied the Bianca board pre-populated with major components. But while that earlier design still allowed OEMs some freedom at the server level, a full L10 tray would lock down almost everything. It would contain the Vera CPU, Rubin GPUs, memory, networking NICs, all power-delivery components, and the liquid-cooling cold plates. Partners would essentially become rack integrators rather than system designers. There are practical reasons for Nvidia to centralize this. Rubin GPUs are expected to draw between 1.8 kW and 2.3 kW each, which drives up the engineering complexity of the PCB, power network, and cooling solution. Building hardware at that density requires specialized expertise and expensive qualification cycles. By outsourcing this to large EMS manufacturers -- Foxconn is the most likely candidate -- Nvidia can produce these trays at scale while ensuring consistent build quality. This would also speed up deployment. Hyperscalers wouldn't need to spend months designing custom boards or validating thermal layouts. They would simply order trays, slide them into racks, connect liquid cooling, and go. Nvidia, in turn, would capture a larger portion of the revenue that used to go to ODMs. It's a classic vertical-integration play: reduce delays, reduce variability, and keep more of the profit inside the company. But the shift also raises questions for Nvidia's partners. Their role becomes more about installation, fleet management, and long-term service contracts than about server innovation. There's still value there, especially in large data center environments, but the competitive landscape changes when everyone is shipping essentially identical compute hardware made by Nvidia. Another open question is how this affects Nvidia's larger rack-scale ambitions. The Kyber NVL576 system, built around Rubin Ultra hardware, is expected to debut alongside the move to 800-volt data center power. If Nvidia begins owning the compute tray, it is not hard to imagine them pushing deeper into rack-level or even pod-level integration later on. For now, none of this is officially confirmed. But if J.P. Morgan's assessment is correct, Rubin could be the point where Nvidia begins selling not just GPUs, but nearly complete AI servers -- and that would reshape the industry in ways OEMs are going to feel immediately. Source: @Jukanlosreve

[3]

NVIDIA Reportedly Planning a Major Shift in Its AI Business Model, Moving to Control More of the AI Server Stack to Boost Margins

NVIDIA has been working towards a shift into its AI rack-scale server strategy, and instead of just being responsible for a piece of the supply chain, Team Green looks to get the 'whole pie'. For those unaware, NVIDIA's AI supply chain is built upon several partners responsible for various elements of the end products. However, when it comes to AI server racks, Taiwanese firms such as Foxconn, Quanta, and Wistron account for a major portion of the manufacturing stages. In the conventional approach, NVIDIA would only supply components such as AI GPUs or the boards required for server configurations such as the Bianca Port UPB. However, during Wistron's Q3 earnings call (via Ray Wang), a JPMorgan analyst mentioned that NVIDIA is moving towards "directly supplying" entire systems. The traditional approach for NVIDIA has been to keep the more essential elements of its server rack, such as GPUs and the board, in-house, while assigning the rest of the rack architecture to suppliers like Foxconn and Quanta. While this approach was initially fruitful for the firm, as rack-scale configurations weren't as large as they are now, it appears that Team Green is looking to switch things up. JPMorgan analyst mentions that NVIDIA will now directly supply Level-10 systems to partners, with the intention of unifying rack designs and ensuring a significant reduction in time-to-market. If we sum up this development, it appears that NVIDIA will now provide 'blueprints' to companies like Foxconn and Quanta, which they will adhere to in producing AI systems. This will prevent suppliers from designing individual rack architectures. It would not be incorrect to say that NVIDIA had already intended to adopt this approach once it introduced its 'MGX architecture', which defines the entire server's physical and electrical architecture, transitioning from a single node to complete rack-scale 'AI factories'. With this approach, NVIDIA essentially reduces deployment times from 9 to 12 months to just 90 days, since 80% of the system is pre-defined and validated by NVIDIA. This means that newer architectures, such as Rubin/Rubin CPX racks, will be delivered to customers a lot more quickly. This also brings in higher margins for Team Green, as it is responsible for full system sales, and also helps the firm expand its TAM figures. As Wistron mentions, this approach also brings benefits to suppliers. What I can say is that regardless of whether it's NVIDIA or any of our other customers, and regardless of who the end customer is, the work is still done by Wistron. So from Wistron's perspective, this business model doesn't really create a major impact. In fact, I believe this is all positive for Wistron. It appears that NVIDIA intends to transition from solely the 'AI chip' business to the entire infrastructure being developed by the firm, which, based on what we are seeing, will speed up the deployment of AI systems out in the market. For now, it's essential to note that NVIDIA has not officially confirmed this transition. As it's an internal matter among suppliers, we'll have to wait and see how it develops.

Share

Share

Copy Link

Nvidia is reportedly planning to transition from selling individual AI components to delivering fully assembled AI server systems, starting with its upcoming Vera Rubin platform. This vertical integration strategy would significantly reshape the AI hardware supply chain and boost Nvidia's profit margins.

Nvidia's Strategic Pivot to Full System Integration

Nvidia is reportedly preparing a fundamental shift in its business model, transitioning from a component supplier to a complete AI system provider. According to J.P. Morgan analysts, this transformation will begin with the company's upcoming Vera Rubin platform, where Nvidia plans to deliver fully assembled Level-10 (L10) compute trays rather than individual GPUs and components

1

.The L10 compute trays would include pre-installed Vera CPUs, Rubin GPUs, memory modules, networking interfaces, power delivery hardware, midplane interfaces, and liquid cooling cold plates as tested, ready-to-deploy modules

2

. This represents a significant escalation from Nvidia's previous GB200 platform approach, which supplied the Bianca board with major components pre-installed but still allowed original equipment manufacturers (OEMs) considerable design freedom.

Source: Wccftech

Impact on Supply Chain Partners

This vertical integration strategy would fundamentally reshape the roles of Nvidia's manufacturing partners, including major Taiwanese firms like Foxconn, Quanta, and Wistron. Instead of designing custom motherboards, engineering power delivery systems, and developing cooling solutions, these partners would be relegated to rack-level integration tasks

3

.Partners would retain responsibility for building outer chassis, integrating power supplies, installing rack-level cooling infrastructure, adding baseboard management controllers (BMCs), and performing final assembly and testing. However, the compute engine—which typically accounts for approximately 90% of a server's cost—would arrive as a standardized, Nvidia-manufactured module

1

.Technical Drivers Behind the Shift

The move toward complete system integration is partly driven by escalating power requirements and thermal management challenges. Rubin GPUs are expected to consume between 1.8 kW and 2.3 kW each, representing a significant increase from the 1.4 kW consumption of Blackwell Ultra processors

1

. These power densities require sophisticated printed circuit board designs, complex power delivery networks, and advanced cooling solutions that demand specialized engineering expertise.By centralizing production through select electronics manufacturing services (EMS) providers—likely Foxconn as the primary supplier—Nvidia can achieve economies of scale while ensuring consistent build quality across deployments

2

.Related Stories

Business and Operational Benefits

The integration strategy promises substantial operational advantages for all stakeholders. Deployment timelines could be reduced from the current 9-12 months to approximately 90 days, since 80% of the system would be pre-defined and validated by Nvidia

3

. Hyperscale customers would no longer need to invest months in custom board design or thermal validation processes.For Nvidia, this approach represents a classic vertical integration play that captures a larger portion of revenue previously distributed among ODM partners while reducing variability in system performance and reliability

2

.Future Implications and Broader Strategy

This shift aligns with Nvidia's broader MGX architecture initiative, which defines complete server physical and electrical architectures rather than individual components. The strategy effectively transforms Nvidia from an AI chip supplier to a comprehensive infrastructure provider

3

.Questions remain about how this approach will extend to Nvidia's Kyber NVL576 rack-scale solution based on the Rubin Ultra platform, which is scheduled to launch alongside 800-volt data center architecture designed for megawatt-class racks. The success of the L10 tray approach could potentially lead Nvidia to pursue even deeper integration at the rack or pod level

1

.

Source: Tom's Hardware

References

Summarized by

Navi

Related Stories

NVIDIA's AI AI server evolution: GB300 production ramps up as Vera Rubin looms on the horizon

19 Jul 2025•Technology

Nvidia ships first Vera Rubin AI chips to customers, promising 10x efficiency gains over Blackwell

25 Feb 2026•Technology

Nvidia Vera Rubin architecture slashes AI costs by 10x with advanced networking at its core

06 Jan 2026•Technology

Recent Highlights

1

Tennessee Teens Sue Elon Musk's xAI Over Grok AI-Generated Child Abuse Images

Policy and Regulation

2

Pentagon designates Palantir Maven AI as core US military system in major defense shift

Technology

3

Supermicro Co-Founder Indicted in $2.5 Billion Nvidia AI Chip Smuggling Scheme to China

Policy and Regulation