Nvidia unveils Vera CPU with 88 Arm cores to power next-generation AI infrastructure

12 Sources

12 Sources

[1]

Nvidia updates data center roadmap with Rosa CPU and stacked Feynman GPUs -- optical NVLink, Groq LPUs with NVFP4, and NVLink also on deck

Both quantitative and qualitative improvements over the next several years. Nvidia presented its updated data center product roadmap at its GPU Technology Conference this week, revealing several surprises but mostly reassuring that the company is on track to introduce a brand-new GPU architecture every couple of years and to update the AI GPU family every year. As it turns out, Nvidia intends to use die stacking and custom HBM memory with its Feynman GPUs, which will also be accompanied by its Rosa CPUs, previously never mentioned in the roadmap. 2026: Rubin, Vera, LP30, BlueField-4 Just as expected, Nvidia plans to roll out its Vera Rubin platform this year, based on the Vera CPU and Rubin GPU. It will be accompanied by five additional processors, including the Groq LP30 low-latency inference accelerator, the BlueField-4 data processing unit (DPU), the NVLink-6 switch, Spectrum-X Ethernet with co-packaged optics, and the ConnectX 9 1600G SuperNIC. The Vera Rubin platform is interesting not only because of the new CPU and GPU architectures, but also because Nvidia is integrating its Groq LPUs into its hardware portfolio. Furthermore, it looks like the company favors LPUs over its own Rubin CPX processors to the point that it no longer mentions the latter on the roadmap. 2027: Rubin Ultra, LP35, NVLink 7 Next year, the company plans to update its offerings with the Rubin Ultra AI accelerators, which will feature four compute chiplets and be equipped with 1 TB of HBM4E memory, thus dramatically increasing performance compared to this year's Rubin. In addition, these GPU accelerators will be mated with the Groq LP35 LPU, which will support the NVFP4 data format and therefore improve performance. Yet another tangible performance improvement for Nvidia's AI platforms is the introduction of the company's Kyber NVL144 rack-scale solution, which will pack 144 Rubin Ultra GPU packages (enabled by an NVLink 7 switch) and therefore offer at least 4X performance improvement compared to Oberon NVL72 racks with 72 Blackwell GPU packages. 2028: Feynman, Rosa, LP40, NVLink goes optics Nvidia's data center portfolio will improve in 2027 by increasing the number of GPUs per rack (i.e., quantitative improvements) and introducing a new LPU with NVFP4 support. The company's 2028 data center products will be based on all-new architectures that will bring qualitative improvements to the company's products. "The next generation from here is Feynman," said Jensen Huang, chief executive of Nvidia, at the GTC. "Feynman has a new GPU, of course; it also has a new LPU LP40 [...] now uniting the scale of Nvidia and the Groq building together LP40, it is going to be incredible. A brand-new CPU called Ros, short for Rosalyn, Bluefield-5, which connects the next CPU with the next SuperNIC CX10. We will have Kyber, which is copper scale up, and we will have Kyber CPO scale-up. So, for the first time we will scale up with both copper and co-packaged optics." First up, Nvidia's Feynman data center GPU will adopt die stacking, which will enable a new way for the company to scale performance. Secondly, Feynman GPUs will also use custom high-bandwidth memory (most likely a variant of C-HBM4E), which will likely enable Nvidia to boost HBM capacity beyond 1 TB per GPU package and increase memory bandwidth. Thirdly, the Feynman platforms will be powered by Rosa CPUs, Nvidia's next-generation processors developed in-house with the focus on ultimate single-thread performance. The emergence of Rosa shows that the company has shortened its CPU development cycle from four years to two (probably by introducing a new design team), putting it on par with leading CPU developers AMD and Intel, which tend to release new microarchitectures every couple of years. Fourthly, this platform will also integrate the LP40 LPU, which will not only support Nvidia's NVFP4 format but also connect to other system components using the NVLink protocol, thereby integrating Groq hardware with Nvidia's GPUs. Fifthly, the Feynman platform will also be the first one to adopt NVLink switches with co-packaged optics, which will enable optical interconnections using the NVLink protocol (they are not impossible today, but CPO makes them significantly easier and cheaper to implement). Optical interconnects will enable Nvidia to increase scale-up world size of its rack-scale solutions to 576 GPU packages (using Oberon chassis) or even 1152 GPU packages (using Kyber chassis), which will make the company's rack-scale systems even more competitive against alternative solutions like AMD's Instinct or custom accelerators deployed by hyperscalers than they are today. Last but not least, Nvidia plans to introduce BlueField 5 DPU, 7 Generation SpectrumX Ethernet with co-packaged optics, as well as ConnectX 10 SuperNIC in 2028. Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[2]

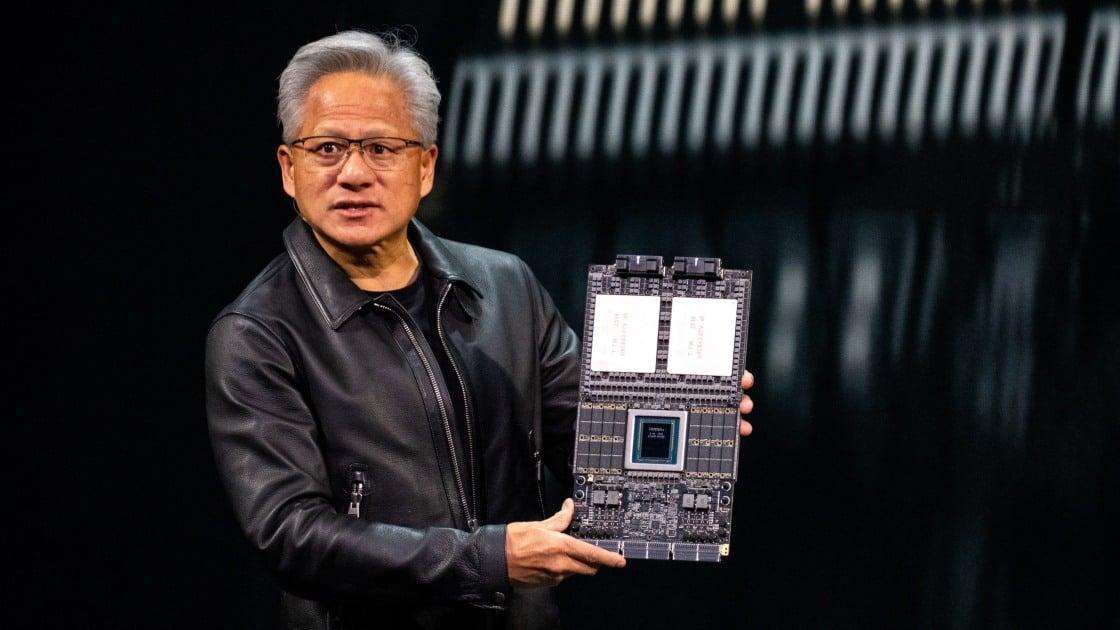

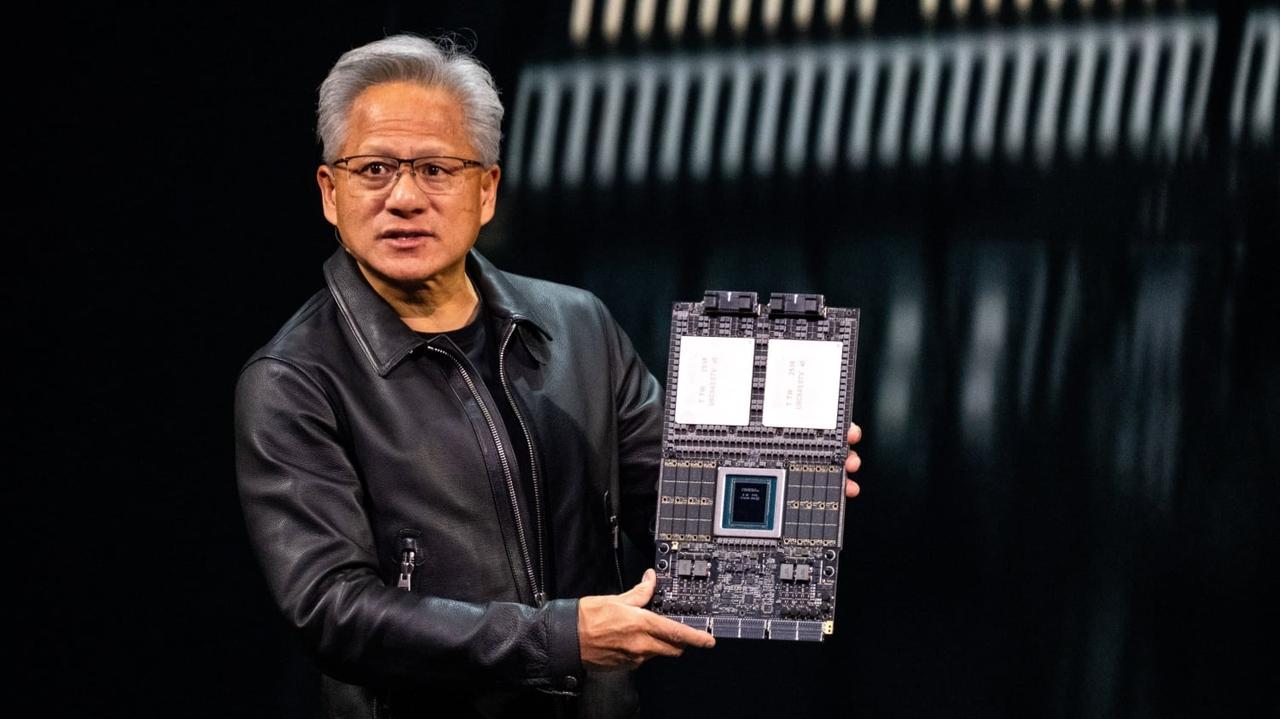

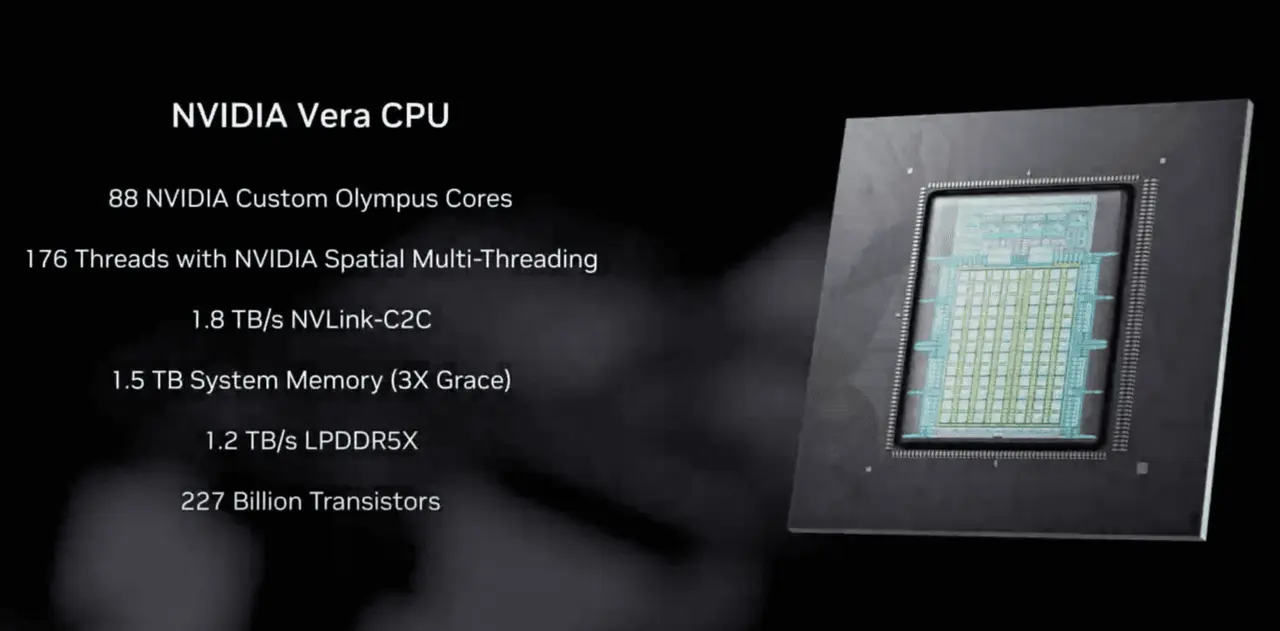

Nvidia crams 256 Vera CPUs into a single liquid cooled rack

GTC Intel and AMD take notice. At GTC on Monday, Nvidia unveiled its latest liquid-cooled rack systems. But unlike its NVL72 racks, this one isn't powered by GPUs or even Groq LPUs, but rather 256 of its custom Vera CPUs. The system is designed to support AI training techniques like reinforcement learning as well as agentic AI frameworks and services that can't run on GPUs alone. "Agents don't operate on GPUs alone. They need CPUs in order to do their work, whether we're training agentic models or serving them, GPUs today actually call out to CPUs in order to do the tool calling, SQL queries and the compilation of code," Ian Buck, VP of Hyperscale and HPC at Nvidia told press on Sunday. "This sandbox execution is a critical part of both training and deploying agents across data centers." Those CPUs need to be fast to avoid becoming a bottleneck. That requires a new kind of AI-optimized CPU which balances per core frequency, density, and power efficiency, Buck argues. Nvidia is no stranger to CPU design. Its first datacenter CPU, Grace, was announced nearly five years ago and has become an integral part of the company's Grace-Hopper and Grace-Blackwell rack systems since. While most of these deployments were tied to GPU systems or HPC clusters, Meta recently revealed plans to deploy Nvidia's standalone Grace CPUs at scale within its datacenters. Vera is Nvidia's latest CPU and brings several notable improvements, including 88 custom Olympus Arm cores, support for simultaneous multithreading, a much wider memory bus, and faster chip-to-chip interconnects. In addition to powering Nvidia's Vera-Rubin superchips, paper-launched at CES earlier this year, Nvidia plans to offer its CPUs as an alternative to x86 chips from Intel and AMD. The company is making some rather bold claims about its latest CPU superchips. If Nvidia is to be believed, Vera will deliver 3x more memory bandwidth and 1.5x the performance per core than contemporary x86 processors. Much of that performance is down to Nvidia's new Olympus Arm cores, which now feature a 10-wide decode pipeline with what Nvidia describes as a "neural branch predictor" that can perform two branch predictions per cycle. Branch prediction is key to performance in modern CPUs, and involves anticipating future code paths and executing down them before they're needed. By predicting two paths per cycle, Vera decreases the likelihood of a miss predict, theoretically boosting its performance in the process. Nvidia also benefits from its use of LPDDR5X memory, more commonly found in notebook computers, rather than the RDIMMS used by conventional servers. Each Vera CPU can be equipped with up to 1.5 TB of LPDDR5 SOCAMM memory modules good for 1.2 TB/s of bandwidth per socket. For reference, Intel's top 6900P processors top out at 825 GB/s of bandwidth when using 8800 MT/s MRDIMMs, while AMD's Turin processors top out between 560 and 600 GB/s. The chips also feature faster NVLink-C2C interconnects, enabling them to shuffle data to and from other CPU or GPUs at up to 900 GB/s (advertised as 1.8 TB/s bidirectional bandwidth) in either direction. Many of the tasks performed by agentic systems involve retrieving data and executing code against it, making high memory bandwidth key to avoiding bottlenecks. Vera will be available in both single- and dual-socket configurations from the usual ODM and OEM suspects, including Foxconn, Wistron, Dell Tech, Lenovo, and HPE, to name a handful. That means that this time around Nvidia will actually be competing head-to-head with AMD and Intel in the CPU space. To this end, Nvidia says that its NVL8 HGX systems, which have traditionally used x86 processors from Intel, will be offered with Vera CPUs for the Rubin generation. For high-density deployments, Nvidia also has a new MGX reference design that packs up to 256 Vera processors along with 64 BlueField-4 data processing units into a single liquid-cooled rack, providing more than 22,500 CPU cores, and 400 TB of memory for agents to retrieve data and execute code. It doesn't look like Nvidia will need to fight to win over customers. When Vera makes its debut later this year, Alibaba, ByteDance, Meta, Oracle, CoreWeave, Lambda, Nebius, and NScale have all committed to deploying the chips in their datacenters. ®

[3]

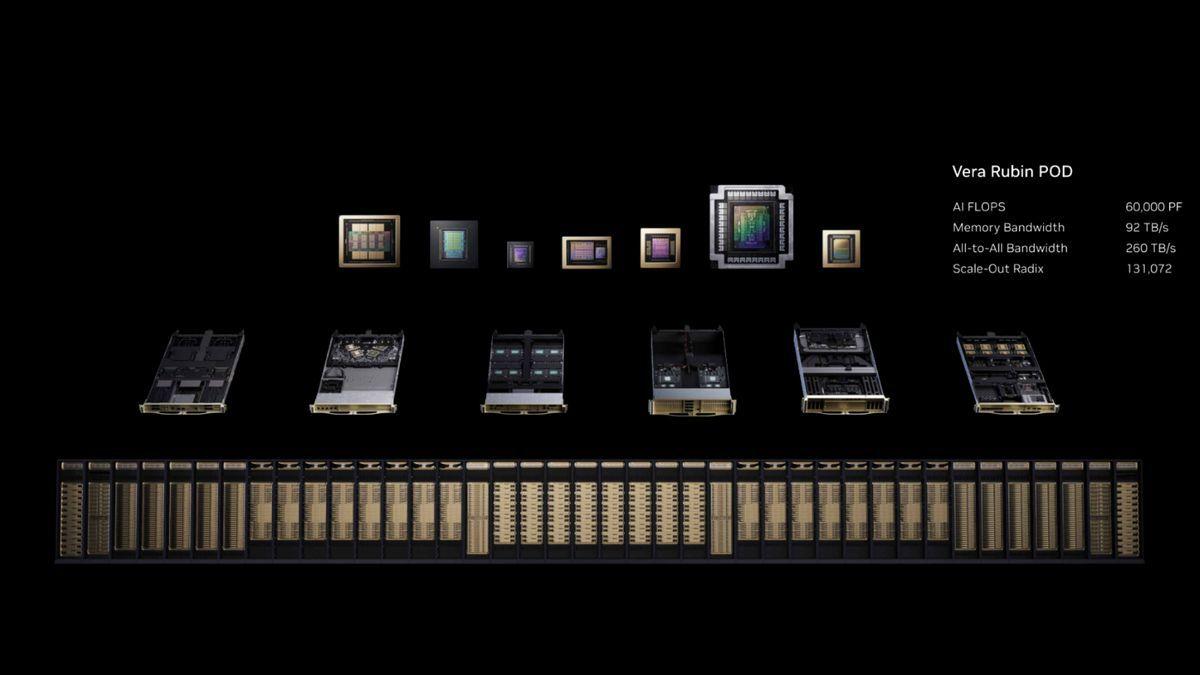

Examining Nvidia's 60 exaflop Vera Rubin POD -- how seven chips underpin company's 40 rack AI factory supercomputer

Every chip in the stack has a defined job, from training trillion-parameter models to caching inference tokens on NVMe storage. Nvidia announced seven chips in full production at GTC 2026 on Monday, composing the Vera Rubin platform that the company intends to ship in the second half of this year. Rather than a single product launch, several announcements covered the full silicon stack required to build what Nvidia now calls an AI factory: GPUs, CPUs, a dedicated inference accelerator, networking ASICs, a data processing unit, and an Ethernet switch. All seven are designed to operate as a single co-designed system across five rack types, scaling from individual racks to 40-rack PODs delivering 60 exaflops of compute. The so-called AI factory is a massive shift in how Nvidia packages and sells its hardware, where the unit of compute is no longer a GPU or even a server; it's the rack, and increasingly, the POD. Each of the seven chips fills a specific architectural role -- and understanding what each does is the fastest route to understanding what Vera Rubin fundamentally is. The compute layer: Rubin GPU, Vera CPU, and Groq 3 Three chips handle the core compute workload, each optimized for a different phase of the AI pipeline. The Rubin GPU is the training and inference workhorse built on TSMC's 3nm process. Each GPU uses a dual-die design packing 336 billion transistors, carries 288 GB of HBM4 memory with 22 TB/s of bandwidth, and delivers 50 PFLOPS of inference compute and 35 PFLOPS of training compute in the NVFP4 format. Those figures represent 5 and 3.5 times improvements over Blackwell, respectively. In the flagship Vera Rubin NVL72 rack, 72 Rubin GPUs connect via NVLink 6 to behave as a single accelerator. Nvidia claims that the NVL72 can train Mixture-of-Experts models with one quarter the GPU count required by Blackwell, and cut inference token costs by 10 times. The Vera CPU, meanwhile, is Nvidia's first data center CPU built from the ground up. It uses 88 custom Arm-based Olympus cores with Spatial Multithreading for 176 threads, up to 1.5TB of SOCAMM LPDDR5X memory, and 1.2 TB/s of memory bandwidth. Vera connects to Rubin GPUs via NVLink-C2C at 1.8 TB/s of coherent bandwidth, which is seven times faster than PCIe Gen 6. Its role in the rack is orchestration: scheduling workloads, routing KV cache data, managing context, and running the control plane for agentic AI workflows. It also handles reinforcement learning environments and CPU-native workloads. The Groq 3 LPU -- purpose-built for low-latency decode-phase inference -- is the most unexpected addition to the platform, and a direct product of Nvidia's $20 billion acquisition of Groq in December. Where Rubin GPUs offer massive memory capacity through HBM4, the Groq 3 trades capacity for bandwidth: Each LPU can carry roughly 500MB of stacked SRAM and delivers approximately 80 TB/s of bandwidth per chip. The Groq 3 LPX rack houses 256 LPUs with about 128GB of aggregate on-chip SRAM and 640 TB/s of scale-up bandwidth. Rubin GPUs handle the compute-heavy prefill phase of inference, processing long input contexts, with the Groq 3 LPUs stepping in to handle the decode phase, generating output tokens at low latency. Nvidia claims the combination delivers 35 times higher inference throughput per megawatt and 10 times more revenue opportunity for trillion-parameter models, compared with running both phases on GPUs alone. The fabric: NVLink 6, ConnextX-9, and Spectrum-6 As for moving data between chips at rack scale and between racks at cluster scale, Nvidia has architected this via three dedicated networking ASICs. The NVLink 6 Switch, due to be upgraded to a 7th generation, handles scale-up connectivity within the rack. Each switch delivers 3.6 TB/s of bidirectional bandwidth per GPU, doubling Blackwell's NVLink performance, while a single switch tray provides 28.8 TB/s of total switching bandwidth and 14.4 TFLOPS of FP8 in-network compute, which accelerates collective operations like the all-to-all communication patterns used in MoE routing. Nine switch trays per NVL72 rack deliver 260 TB/s of aggregate scale-up bandwidth. The ConnectX-9 SuperNIC provides a scale-out networking endpoint at 1.6 Tb/s throughput per GPU. Where NVLink 6 connects GPUs within a rack, ConnectX-9 connects racks, linking NVL72 systems into multi-rack clusters via either Nvidia's Spectrum-X Ethernet or Quantum-X800 InfiniBand fabrics. Each compute tray uses eight ConnectX-9 NICs to deliver the aggregate bandwidth quoted for the tray's four GPUs. The Spectrum-6 Ethernet Switch is the switching silicon and the backbone of the Spectrum-6 SPX networking rack, delivering 102.4 Tb/s of aggregate bandwidth, and is Nvidia's first switch to use co-packaged optics, employing silicon photonics to reduce optical power consumption. Nvidia claims five times improved power efficiency and 10 times improved resiliency over prior Spectrum-X generations. Available in two configurations, the SN6800 offers 512 ports of 800G Ethernet or 2,048 ports at 200G. The infrastructure layer: BlueField-4 The final chip, BlueField-4 DPU, functions as a specialized processor that handles networking and storage tasks that would otherwise consume CPU and GPU cycles. A dual-die package that combines a 64-core Grace CPU with an integrated ConnectX-9 NIC, the BlueField-4 DPU offloads networking, storage, encryption, virtual switching, telemetry, and security enforcement from the main compute path, running Nvidia's DOCA software framework for infrastructure services. Nvidia says it features twice the bandwidth, three times the memory bandwidth, and six times the compute of BlueField-3. BlueField-4 underpins the new BlueField-4 STX storage rack, which implements Nvidia's CMX context memory storage platform, essentially extending GPU memory into NVMe storage to cache the key-value data generated by agentic AI workflows. As context windows grow to hundreds of thousands, and in some cases millions, of tokens, operations on the KV cache are becoming a bottleneck, and the STX rack is designed to break through it. Nvidia claims up to five times higher inference throughput when the STX storage tier is deployed alongside compute racks, via the new DOCA Memos software framework. Five racks, one supercomputer All seven chips map into five rack types that compose the Vera Rubin POD: the NVL72 for core training and inference (72 Rubin GPUs, 36 Vera CPUs); the Groq 3 LPX rack for decode acceleration (256 LPUs); the Vera CPU rack for RL and orchestration (256 Vera CPUs); the BlueField-4 STX rack for KV cache storage; and the Spectrum-6 SPX rack for Ethernet networking. A full POD spans 40 racks, 1,152 Rubin GPUs, nearly 20,000 Nvidia dies, 1.2 quadrillion transistors, and 60 exaflops. Vera Rubin-based products are scheduled to ship in the second half of 2026.

[4]

Nvidia unveils Vera, an 88-core Arm CPU for AI and analytics racks

Serving tech enthusiasts for over 25 years. TechSpot means tech analysis and advice you can trust. Forward-looking: Nvidia used its GTC 2026 developer conference in San Jose to unveil new details about its Vera data center CPU line, highlighting an 88-core Arm-based design and a dense rack architecture that positions the company directly in the mainstream CPU market. Alongside the chip, Nvidia is introducing a liquid-cooled rack that aggregates 256 Vera CPUs, signaling its intent to compete for CPU sockets in large-scale AI and data analytics deployments. Unlike Nvidia's earlier Grace processors, which were primarily sold as companions to GPUs, Vera is positioned as a general-purpose data center CPU with a strong focus on AI-centric workloads such as agentic frameworks, scripting-heavy pipelines, analytics, and code compilation. The chip is built on 88 Nvidia-designed Arm v9.2-A "Olympus" cores, up from Grace's 72 Arm Neoverse cores. Nvidia claims a 1.5× increase in instructions per cycle over the previous generation, which it translates into roughly 50% higher performance versus "standard" CPUs in its internal comparisons. Vera targets both training-adjacent CPU tasks and pure CPU workloads, aiming to provide a platform that can efficiently run Python-heavy agent logic, SQL queries, and compilation pipelines while keeping GPUs fully saturated. At the microarchitectural level, Nvidia's most distinctive change is the introduction of what it calls spatial multi-threading in the Olympus cores. Unlike the usual simultaneous multi-threading model, where two threads time-slice shared resources, Vera's design physically partitions key structures - such as execution units, caches, and register files - so that each hardware thread can make forward progress without waiting for access to the same datapath. The goal is to increase instruction-level parallelism and throughput by allowing one thread to opportunistically use idle resources while the other continues running. Nvidia argues that this approach delivers more predictable performance in multi-tenant environments. In practice, both threads are intended to run in a genuinely concurrent manner on a single core. The core complex is organized as a single coherent domain rather than multiple NUMA regions, marking a notable departure from the topology used in contemporary high-core-count x86 designs. Nvidia is leveraging a new generation of its Scalable Coherency Fabric, built on Arm's CMN lineage, to tie the 88 cores together. The company has not disclosed exactly how it manages intra-chip latency at this scale but appears to rely on an updated mesh network. Memory bandwidth and capacity are a major focus for Nvidia. Grace delivered 546 GB/s to its mesh and about 7.6 GB/s per core; Vera more than doubles aggregate bandwidth to 1.2 TB/s and triples capacity to up to 1.5 TB of SOCAMM LPDDR5 or LPDDR5X, providing roughly 13.6 GB/s per core under full load. The fabric can also deliver up to 80 GB/s per core when other cores are not fully saturated - an important feature for bandwidth-hungry threads pinned to a subset of cores, such as those used in graph analytics or certain parts of LLM runtimes. The front-end and execution pipeline are explicitly designed for AI and data workloads. Vera's execution pathway includes a 10-wide instruction decode block, aggressive for a general-purpose server CPU, intended to keep the widened back end fully fed. A neural branch predictor capable of handling two branch predictions per cycle, along with a custom prefetch engine optimized for graph analytics, aims to reduce stalls in irregular control-flow and pointer-chasing code, including graph traversal and complex analytics kernels. Nvidia also highlights a PyTorch-optimized instruction buffer, suggesting that common instruction sequences in AI frameworks have been treated as first-class optimization targets. On the platform side, Vera meets the expected standards - support for PCIe 6.0, CXL 3.1, and two-socket configurations - while adding features aimed at secure, tightly coupled CPU - GPU systems. Confidential computing is supported across both CPU and GPU domains, enabling encrypted execution and isolation that extend into GPU memory and multi-socket nodes, a capability Nvidia did not offer with Grace. A second-generation NVLink-C2C interface provides up to 1.8 TB/s of die-to-die bandwidth, doubling Grace's 900 GB/s link and significantly exceeding what PCIe 6.0 can deliver. The Vera CPU rack integrates up to 256 liquid-cooled Vera CPUs alongside BlueField-4 DPUs and ConnectX-class SuperNICs, creating a CPU-centric system designed to host CPU-only AI and analytics workloads at high density. The rack supports up to 400 TB of LPDDR5-class memory and around 300 TB/s of aggregate memory bandwidth, exposing more than 22,500 CPU environments backed by over 22,000 Olympus cores and 45,000 threads. Nvidia positions this configuration as capable of delivering up to 6× higher CPU throughput and roughly 2× better performance in agentic AI workloads versus traditional CPU racks, according to its internal benchmarks. In its testing, the company reports that Vera achieves roughly 1.5× better performance per sandbox compared with x86 competitors, with about 3× the memory bandwidth per core and twice the efficiency, and that it outpaces Grace by 1.8× to 2.2× across scripting, compilation, analytics, and HPC workloads. These claims have not yet been independently verified, but they align with Vera's architectural focus on bandwidth, instruction-level parallelism, and multi-threading. The chips are now in full production and are expected to ship to partners in the second half of the year, with systems coming from major OEMs and ODMs, and integration into Nvidia's Rubin-generation HGX NVL8 and broader Vera Rubin platform. For data center operators already standardizing on Nvidia's GPU platforms, Vera converts the CPU from an external dependency into a controllable part of the stack, with a design tuned for AI-heavy, multi-tenant environments rather than traditional general-purpose server loads. How much that matters in practice will depend on whether Nvidia's performance and efficiency claims hold up once third-party benchmarks arrive later this year.

[5]

Nvidia unveils AI infrastructure spanning chips to space computing

The company says the new processor and rack-scale architecture are built to handle the rapid growth of AI workloads, where software agents increasingly plan tasks, execute code and interact with other systems autonomously. The Vera CPU is designed specifically for these emerging workloads. According to Nvidia, the processor delivers results with twice the efficiency and is 50 percent faster than traditional rack-scale CPUs. The chip is expected to power AI data centers used for training models, running AI agents and managing massive computing clusters across cloud platforms and enterprise systems. Nvidia says the Vera CPU marks a shift in how processors support modern AI systems. Rather than simply supporting GPUs, CPUs are becoming central to coordinating AI workloads across large computing environments. "Vera is arriving at a turning point for AI. As intelligence becomes agentic -- capable of reasoning and acting -- the importance of the systems orchestrating that work is elevated," said Jensen Huang, founder and CEO of Nvidia. "The CPU is no longer simply supporting the model; it's driving it. With breakthrough performance and energy efficiency, Vera unlocks AI systems that think faster and scale further."

[6]

Nvidia demonstrates Rubin Ultra tray, the world's first AI GPU with 1TB of HBM4E memory -- new chips will slot into Kyber racks

Nvidia on Monday demonstrated its next-generation tray for its data center GPU known as Rubin Ultra, which is due to arrive sometime in 2027. The Rubin Ultra features four compute chiplets and features 1TB of HBM4E memory, making it the industry's first AI accelerator equipped with a terabyte of memory. The Rubin Ultra platform will use the company's new rack-scale design known as Kyber, which will integrate 144 GPU packages, greatly improving performance over the current NVL72 rack design. Based on what Nvidia demonstrated, the quad-chiplet Rubin Ultra package will adopt a new packaging technology, though we are not sure about exactly how the GPU is implemented, as its heatspreader hides everything. In fact, we do not even know whether Rubin Ultra has taped out yet. The only thing that strikes the eye is the relatively small size of the Rubin Ultra package, which may mean that we are dealing with a stacked design, though that is speculation. The Nvidia Rubin Ultra tray also nearly completely lacks cables, which will simplify server assembly, but this could mean that Nvidia will sell complete trays, reducing the role of its partners to essentially assembling rack-scale machines and not building actual motherboards and server trays. With Rubin Ultra, Nvidia will also adopt a new type of rack called Kyber, which will use vertical rather than horizontal trays as well as liquid cooling by default. The new rack will enable Nvidia to put 144 GPU packages into one rack, which means that Kyber NVL144 systems based on Rubin Ultra GPUs will offer at least four times more performance than Oberon NVL72 based on 72 Rubin GPUs, as the system will double the number of GPU tiles per package and the number of packages. In addition, Nvidia's Kyber rack will upgrade the NVLink switch from a 6th Generation switch to a 7th Generation switch, which will retain the 3600 GB/s speed but will enable increasing the number of GPUs. In addition, Nvidia plans to introduce its CX9-1600G Ethernet processor to speed up scale-out communications. Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[7]

NVIDIA Launches Vera CPU, Purpose-Built for Agentic AI

GTC -- NVIDIA today launched the NVIDIA Vera CPU, the world's first processor purpose-built for the age of agentic AI and reinforcement learning -- delivering results with twice the efficiency and 50% faster than traditional rack-scale CPUs. As reasoning and agentic AI advances, scale, performance and cost are increasingly driven by the infrastructure supporting the models that plan tasks, run tools, interact with data, run code and validate results. The NVIDIA Vera CPU builds on the success of the NVIDIA Grace™ CPU, enabling organizations of all sizes and across industries to build AI factories that unlock agentic AI at scale. With the highest single-thread performance and bandwidth per core, Vera is a new class of CPU that delivers higher AI throughput, responsiveness and efficiency for large-scale AI services such as coding assistants, as well as consumer and enterprise agents. Leading hyperscalers collaborating with NVIDIA to deploy Vera include Alibaba, CoreWeave, Meta and Oracle Cloud Infrastructure, as well as global system makers Dell Technologies, HPE, Lenovo, Supermicro and others. This broad adoption establishes Vera as the new CPU standard for the AI workloads that matter most for developers, startups, public-private institutions and enterprises -- helping democratize access to AI and accelerating innovation. "Vera is arriving at a turning point for AI. As intelligence becomes agentic -- capable of reasoning and acting -- the importance of the systems orchestrating that work is elevated," said Jensen Huang, founder and CEO of NVIDIA. "The CPU is no longer simply supporting the model; it's driving it. With breakthrough performance and energy efficiency, Vera unlocks AI systems that think faster and scale further." Configurable for Every Data Center NVIDIA announced a new Vera CPU rack integrating 256 liquid-cooled Vera CPUs to sustain more than 22,500 concurrent CPU environments, each running independently at full performance. AI factories can quickly deploy and scale to tens of thousands of simultaneous instances and agentic tools in a single rack. The new Vera rack is built using the NVIDIA MGX™ modular reference architecture, supported by 80 ecosystem partners worldwide. As part of the NVIDIA Vera Rubin NVL72 platform, Vera CPUs are paired with NVIDIA GPUs through NVIDIA NVLink™-C2C interconnect technology, with 1.8 TB/s of coherent bandwidth -- 7x the bandwidth of PCIe Gen 6 -- for high-speed data sharing between CPUs and GPUs. Additionally, NVIDIA introduced new reference designs that use Vera as the host CPU for NVIDIA HGX™ Rubin NVL8 systems, coordinating data movement and system control for GPU-accelerated workloads. Vera systems partners are providing both dual and single-socket CPU server configurations, optimal for workloads such as reinforcement learning, agentic inference, data processing, orchestration, storage management, cloud applications and high-performance computing. Across all configurations, Vera systems integrate NVIDIA ConnectX SuperNIC cards and NVIDIA BlueField-4 DPUs for accelerated networking, storage and security, which are critical for agentic AI. This enables customers to optimize for their specific workloads while maintaining a single software stack across the NVIDIA platform. Designed for Agentic Scaling By combining high-performance, energy-efficient CPU cores, a high-bandwidth memory subsystem and the second-generation NVIDIA Scalable Coherency Fabric, Vera enables faster agentic responses under the extreme utilization conditions common for agentic AI and reinforcement learning. Vera features 88 custom NVIDIA-designed Olympus cores, delivering high performance for compilers, runtime engines, analytics pipelines, agentic tooling and orchestration services. Each core can run two tasks, using NVIDIA Spatial Multithreading, to deliver consistent, predictable performance -- ideal for multi-tenant AI factories running many jobs at once. To further enhance energy efficiency, Vera introduces the second generation of NVIDIA's low-power memory subsystem, now built on LPDDR5X memory and delivering up to 1.2 TB/s of bandwidth -- twice the bandwidth and at half the power compared with general-purpose CPUs. Widespread Ecosystem Support Cursor, an innovator in AI-native software development, is adopting NVIDIA Vera to boost performance for its AI coding agents. "We're excited to use NVIDIA Vera CPUs to improve overall throughput and efficiency so we can deliver faster, more responsive coding agent experiences for our customers," said Michael Truell, cofounder and CEO of Cursor. Redpanda, a leading streaming data and AI platform, is using Vera to dramatically boost performance. "Redpanda recently tested NVIDIA Vera running Apache Kafka-compatible workloads and saw dramatically better performance than other systems we've benchmarked, delivering up to 5.5x lower latency," said Alex Gallego, founder and CEO of Redpanda. "Vera represents a new direction in CPU architecture, with more memory and less overhead per core, enabling our customers to scale real-time streaming workloads further than ever and unlock new AI and agentic applications." National laboratories planning to deploy Vera CPUs include Leibniz Supercomputing Centre, Los Alamos National Laboratory, Lawrence Berkeley National Laboratory's National Energy Research Scientific Computing Center and the Texas Advanced Computing Center (TACC). "At TACC, we recently tested NVIDIA's Vera CPU platform as we prepare for deployment in our upcoming Horizon system -- and running six of our scientific applications, we saw impressive early results," said John Cazes, director of high-performance computing at TACC. "Vera's per-core performance and memory bandwidth represent a giant step forward for scientific computing, and we look forward to bringing Vera-based nodes to our CPU users on Horizon later this year." Leading cloud service providers planning to deploy Vera CPUs include Alibaba, ByteDance, Cloudflare, CoreWeave, Crusoe, Lambda, Nebius, Nscale, Oracle Cloud Infrastructure, Together.AI and Vultr. Leading infrastructure providers adopting Vera CPUs include Aivres, ASRock Rack, ASUS, Compal, Cisco, Dell, Foxconn, GIGABYTE, HPE, Hyve, Inventec, Lenovo, MiTAC, MSI, Pegatron, Quanta Cloud Technology (QCT), Supermicro, Wistron and Wiwynn. Availability NVIDIA Vera is in full production and will be available from partners in the second half of this year.

[8]

Nvidia unveils details of new 88-core Vera CPUs positioned to compete with AMD and Intel - new Vera CPU rack features 256 liquid-cooled chips that deliver up to a 6X gain in CPU throughput

Nvidia announced more details about its new 88-core Vera data center CPUs at GTC 2026 here in San Jose, California, claiming impressive 50% performance gains over standard CPUs, fueled by a 1.5X increase in IPC from its Olympus cores and an innovative high-bandwidth design that Nvidia says delivers the fastest single-threaded performance on the market. The company also unveiled its new Vera CPU Rack architecture, which brings 256 liquid-cooled CPUs into one rack for CPU-centric workloads, claiming a 6X gain in CPU throughput and twice the performance in agentic AI workloads. The evolution of the Vera CPU and its integration into deployable rack-scale systems marks Nvidia's entry into direct CPU sales, positioning itself as a competitor to Intel and AMD in the traditional CPU market. That's not to mention competing against the many flavors of custom Arm processors used by the world's largest hyperscalers. This doesn't come as a complete surprise, coming in the wake of the company's announcement that Meta will now deploy multiple generations of Nvidia CPU-only systems across its infrastructure. Nvidia will also continue to use the CPUs for its own GPU-focused systems, such as the Vera Rubin platform we covered more in depth here. Nvidia originally introduced its first-gen Grace CPUs at GTC in 2022, foreshadowing that its continued evolution of the series would eventually position it to compete with the broader CPU market. The new processors target both AI-centric and more general-purpose use-cases, with a heavy emphasis on the former, and Nvidia's broadening of both the capabilities and its target markets will provide stiff competition for AMD and Intel as they battle for sockets in AI data centers. The chips are now in full production and will be available to Nvidia's partners in the second half of this year. Let's take a closer look at the new chips, and then the rack-scale architecture. Nvidia Vera CPU specifications and performance Nvidia designed the Vera CPU to provide the best of many worlds, with the intention of melding the high core counts of hyperscale cloud CPUs with the high single-thread performance of gaming CPUs and the power efficiency of mobile chips, all with the goal of speeding common GPU-driven tasks in agentic AI, training, and inference workloads, such as Python execution, SQL queries, and code compilation. All told, Nvidia claims 1.5x the performance-per-sandbox over x86 competitors, 3x the memory bandwidth per core, and twice the efficiency. To meet those goals, the company designed an 88-core CPU with 144 threads, an increase over the first-gen Grace's 72 cores. Nvidia also claims the cores offer a 1.5X improvement in instructions per cycle (IPC) throughput, a massive generational jump relative to other competing architectures, which tend to gain a single-digit or a low-teens percentage increase with each generation. With the previous-gen Grace, Nvidia used off-the-shelf Arm Neoverse cores, but the firm does stipulate that the new Olympus cores found on Vera are 'Nvidia designed,' signaling that the company has made custom modifications to the reference design. The Arm v9.2-A Olympus cores feature spatial multi-threading, which physically isolates the various components of the pipeline by not time-slicing the key elements, like the execution units, caches and register files, with the other thread running on the same core. This contrasts with the standard time-slicing found in other simultaneous multi-threading (SMT) implementations, a process that has the threads take turns utilizing the resources. Spatial Multi-Threading increases Instruction Level Parallelism (ILP), throughput, and performance predictability by pulling instructions from other threads when execution elements are idle, thus ensuring full utilization. In effect, this allows both threads to truly run simultaneously on a single core, whereas in a standard SMT implementation the threads essentially take turns running on a single core. Naturally, this will be a boon for multi-tenancy environments. Nvidia arranges all 88 cores in a single domain, so there are no latency-inducing NUMA eccentricities to be found, in stark contrast to current high core-count x86 competitors. This has dramatic implications for latency, predictability, bandwidth, and ease-of-programmability. The firm has not shared the full details of how it accomplished this feat while maintaining adequate latency to each core, but the chip features a new generation of the Nvidia Scalable Coherency Fabric (SCF), a mesh topology built from Arm's CMN-700 Coherent Mesh Network used in Grace's Arm Neoverse cores. Arm has moved forward to the newer Neoverse CMN S3 mesh with its latest designs, and Vera likely employs that design, or a variant thereof. The mesh network can deliver impressive memory throughput to the cores in aggregate, and even more when certain cores are more bandwidth-hungry than others. Grace supported 546 GB/s of memory throughput to the mesh, working out to an average of 7.6 GB/s per core. Vera more than doubles that to 1.2 TB/s of bandwidth fed by 1.5TB of SOCAMM LPPDDR5 modules (a 3x increase in capacity), which works out to an average of 13.6 GB/s per core in full-load conditions. Importantly, the architecture now supports up to 80 GB/s of throughput to any single core when load conditions aren't consistent across the mesh, an impressive uplift for bandwidth-hungry threads. The execution pathway includes a 10-wide Instruction Decode unit, a neural branch predictor that supports two branch predictions per cycle, a custom graph database analytics prefetch engine, and a PyTorch-optimized Instruction Buffer. The chip fully supports Confidential Computing, a notable advance over Grace that allows for fully protected CPU+GPU domains. The CPU also features an NVLink-C2C die-to-die interface with up to 1.8 TB/s of throughput, a doubling of Grace's 900 GB/s interconnect and seven times faster than PCIe 6.0. It also supports two-processor (2P) configurations. Overall, Vera supports the full suite of technologies expected from a modern data center processor, including PCIe 6.0 and CXL 3.1 support, but with a bandwidth and latency-focused compute design that positions its uniquely well for use in AI workflows. The Vera CPU Rack and Benchmark Performance Grace has already served as a fundamental building block in many Nvidia GPU+CPU systems, including some of the fastest AI supercomputers on the planet, but Nvidia's expanded goal is to leverage Vera in pure-play CPU racks that can be more widely deployed. The Vera CPU rack meets that goal with 256 liquid-cooled Vera CPUs paired with 74 Bluefield-4 DPUs and ConnectX SuperNIC networking. The rack weighs in with up to 400 TB of LPDDR5 and 300 TB/s of aggregate memory throughput. That feeds the 45,056 threads, which Nvidia says supports 22,500 concurrent CPU environments running independently. Nvidia shared benchmarks in a wide range of workloads, touting from a 1.8x to 2.2x performance improvement over Grace in scripting, compilation, data analytics, graph analytics, and HPC workloads, among others. Naturally one would expect this system to be deployed at Meta, which recently announced its partnership with Nvidia for CPU-only systems, but Nvidia says it will also offer the Vera CPU rack system to hyperscalers, including Oracle, Coreweave, Nebius, Alibaba, and others. A broad range of OEMs and ODMs will also provide single- and dual-socket servers for the broader market for a wide range of use cases, including industry heavyweights like Dell, HPE, Lenovo, Supermicro, Foxconn, and many others. The Vera CPUs will also be used for Nvidia HGX NVL8 systems. Perhaps most importantly, these racks will also serve as an integral part of Nvidia's broader Vera Rubin platform, which features seven chips in total, including the Rubin GPU, NVLink6 Switch for rack-scale interconnect, ConnectX-9 SuperNIC for networking, Bluefield 4 DPU, Spectrum-X 102.4T Co-packaged Optics switch, and Nvidia's Groq 3 LPUs. The Vera CPUs are in full production now and are slated for deliveries beginning in the second half of this year. Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[9]

Nvidia's new Vera CPU has been designed for 'extremely high' single-core performance, but it's not coming to the PC for now

Dare we hope that those Olympus cores turn up in a future PC chip? What with all the controversy surrounding Nvidia's new DLSS 5 tech, the company's new Vera CPU has gone somewhat unnoticed among the more meme-worthy announcements at the GTC event. But the Vera chip -- or perhaps more specifically the Olympus cores it contains -- looks like a beast. So, will it ever come to the PC? We already knew of the existence of the Nvidia Vera CPU and some basic details, including the 88-core structure, the fact that its Arm-based and is a custom Nvidia design. Oh, and of course that it's designed for AI servers, at least at first. However, for GTC Nvidia has revealed more details and Vera is looking like a bit of a beast. The CPU cores, as we've previously noted, are codenamed OIympus and use the Arm rather than x86 instruction set, but have been designed in-house at Nvidia. Nvidia CEO Jensen Huang says those cores have, "been designed for extremely high single-threaded performance." He also claims that Vera has "unrivalled" performance per watt. If that's the high-level overview of Nvidia's ambitions for the chip, the details are arguably more interesting. Nvidia has now revealed that the Olympus core has a 10-wide instruction fetch and decode front-end. That's basically the same as Apple's M silicon, which currently leads the industry for per-core and per-clock performance. Olympus also has a "neural branch predictor" that can evaluate two branches per cycle and promises to further improve single-thread performance. Oh, and Nvidia has its own take on multi-threading or SMT in the form of "Spatial Multithreading." Unlike AMD and Intel's SMT, Nvidia's Spatial Multithreading is not time-spliced, and so does not rely on "time-shared resources and frequent context switching between threads", which Nvidia says can introduce performance variation. The overall result, Nvidia claims, is that an Olympus CPU core is 50% faster than any single x86 core in compilation, scripting, and compression in an "agentic sandbox container." Oh and it's 90% more efficient. Another interesting detail is that Vera uses plain old LPDDR5X memory, essentially the same stuff used in laptop PCs, but thanks to a monster memory bus has 1.2 TB/s of raw bandwidth. Speaking of bandwidth, there's also a 16-lane PCIe Gen 6 interface. Yup, Nvidia has got to PCIe Gen 6 before AMD or Intel. The specifics of the Vera CPU die also include the 88 cores with support for 176 threads, plus 162MB of L3 Cache. But if what we're interested in is how the Olympus core might apply to the PC, the specific configuration of Vera probably isn't hugely relevant. The bottom line here is that Nvidia has said nothing about this Vera chip and its Olympus cores outside of the AI server context into which the chip is being launched. Vera is all about AI factories and supporting agentic AI. But it certainly looks like a very powerful CPU core. If you trawl around online, you'll find reference to rumour and speculation that Nvidia could be lining up Olympus for the second generation of its upcoming PC processor, supposedly codenamed N2. The first-gen N1 or N1X Nvidia CPU for the PC has been rumoured for some time and latterly confirmed by CEO Jensen Huang to be based on the GB10 Superchip as found in the DGX Spark box. GB10 is also an Arm-based CPU, but uses off-the-shelf Arm Cortex cores rather than an in-house architecture from Nvidia. So, in this narrative, Nvidia's second-gen PC processor would make the switch from bought-in Arm cores to Nvidia's own design and that design would essentially be the Olympus cores in the new Vera chip. For what it's worth, Nvidia also revealed that it has something of a forward roadmap of custom Arm-based CPUs. Vera will be followed by a CPU codenamed "Rosa" that will be paired with the upcoming Feynman generation of GPUs. That's two generations out and follows Nvidia's next-gen Rubin GPUs. Realistically, then, we're a long way off seeing an Olympus Nvidia CPU core, or at least something derived from Olympus, in the PC. Nvidia hasn't even released the first-gen N1 PC processor with its Arm-designed cores yet. But Nvidia does seem to be laying the ground work for a whole series of potential future PC processors based on its own in-house CPU cores. And that is pretty exciting, even if it's all pretty theoretical for now, never mind the fact that running PC games on any Arm CPU is currently quite problematic.

[10]

Nvidia reinvents the CPU for the age of agentic AI - SiliconANGLE

For a while, it was thought that generic central processing units had little role to play in the artificial intelligence revolution, but Nvidia Corp. begs to differ. At its GTC 2026 developer conference today, it announced the all-new Vera CPU, said to be the first chip of its kind designed specifically for agentic AI workloads and reinforcement learning. As AI evolves from simple chatbots towards autonomous AI agents that can reason, use third-party software tools, write and execute code on behalf of humans, the underlying infrastructure requirements are changing. According to Nvidia, AI agents need more than just the sheer power and performance of graphics processing units. They also require orchestration, data movement and validation logic, which are tasks best performed by traditional CPUs. That's why Nvidia developed the Vera CPU, which it says is 50% faster and twice as efficient as traditional x86-based CPUs when handling these complex operations. "Vera is arriving at a turning point for AI," said Nvidia Chief Executive Jensen Huang. "The CPU is no longer simply supporting the model; it's driving it. With breakthrough performance and energy efficiency, Vera unlocks AI systems that think faster and scale further." Vera is the successor to Nvidia's Grace CPU, and it has been designed to slot inside the vast "AI factories" that power today's most powerful large language models. The new chip features 88 custom-designed Olympus cores that utilize Nvidia's Spatial Multithreading technology to run two tasks simultaneously with more predictable performance than its predecessor. That's a must-have for cloud infrastructure providers that need to run thousands of AI agents at once. To feed these cores, Nvidia has equipped the Vera CPUs with a new low-power memory subsystem that uses LPDDR5X memory. It delivers a massive 1.2 terabytes-per-second of bandwidth, which is about twice that of the general-purpose CPUs, while using only half the amount of power. Nvidia said the Vera chips excel at AI "thinking" tasks. By that, it's referring to the compilers, analytics pipelines and orchestration services that tell GPUs what they should be doing next. The GPUs remain the workhorse of AI models, while the CPUs perform the management tasks. Early adopters have already reported substantial performance gains, with Vera delivering 5.5 times lower latency when running Apache Kafka workloads, according to the data streaming company Redpanda Data Inc. Nvidia isn't just selling bags of the new chips. It's also offering a new Vera CPU rack system that integrates 256 liquid-cooled chips together to tackle the biggest AI workloads. According to Nvidia, it's able to sustain more than 22,500 concurrent CPU environments, which means AI factories will be able to scale agentic services to tens of thousands of instances within a remarkably small physical footprint. The Vera CPUs are also integrated in Nvidia's new NVL72 platform, which is a liquid-cooled rack-scale system made up of 72 Rubin GPUs and 36 Vera CPUs connected over its high-speed NVLink 6 interconnects. The company said it's able to provide up to 1.8 TB-per-second of coherent bandwidth between the CPU and GPUs, which is about seven times more than the latest PCIe Gen 6 standard. It's fast enough that Nvidia promises the CPU will no longer be a bottleneck that causes GPUs to sit idle, waiting for data to perform training and inference tasks. Nvidia is betting on broad industry adoption of the Vera CPU platform, and it has an extensive list of partners at launch. Hyperscale data center operators including Oracle Corp., Meta Platforms Inc. and Alibaba Group Holding Ltd. will be among the first to deploy the Vera CPU rack-scale systems, together with "neocloud" platforms such as CoreWeave Inc. and Nebius Group NV. Meanwhile, Nvidia has a long list of hardware providers backing it too. These include Dell Technologies Inc., Hewlett Packard Enterprise Co., Supermicro Computer Inc. and Lenovo Group Ltd., which are all planning to launch Vera CPU-based servers in the coming months. These will range from specialized HGX Rubin systems for GPU-accelerated AI workloads, to dual-socket configurations for general data processing. Nvidia said the Vera CPUs are in full production now, with the first Vera-based systems set to come available in the second half of the year.

[11]

NVIDIA updates roadmap, with new details on its next-gen GPU 'Feynman' coming in 2028

TL;DR: NVIDIA's updated data center roadmap reveals the Vera Rubin architecture launching this year, followed by Rubin Ultra in 2027 with enhanced memory and cooling. In 2028, the Feynman GPU will introduce advanced 3D stacking, custom HBM memory, and new components, advancing AI infrastructure and integrated systems. NVIDIA has updated its data center roadmap for its GPU and CPU lineup at GTC 2026, with the company focused on updating its AI-focused GPU architecture every year. With NVIDIA set to roll out its Vera Rubin architecture and platform this year, which includes the Vera CPU and Rubin GPU, we also got details on its next major GPU architecture, called Feynman, set to arrive in 2028. The successor to Rubin and 2027's Rubin Ultra, Feynman, will represent a major step for NVIDIA by introducing advanced 3D stacking technology to increase density and improve communication among the various hardware components that power the next generation of AI. In addition, Feynman will be paired with the new Rosa CPU for integrated systems, building on the foundation of Grace in the current Blackwell era. NVIDIA's roadmap, with Feynman coming in 2028, also lists "custom HBM," which would most likely refer to the next memory evolution beyond HBM4 and HBM4e. We'll have to wait for more details on this new high-bandwidth memory solution. Still, it's safe to assume that its bandwidth and performance will be tailored for increasingly more complex AI models and systems where memory bandwidth and capacity are key. The arrival of Feynman will also introduce next-gen components and hardware like LP40 memory, BlueField-5 processing, NVLink-8 interconnect technology, and more. This highlights NVIDIA's current focus on delivering a complete AI infrastructure solution, which pairs cutting-edge hardware with its software. As for Rubin Ultra, which is set to arrive in 2027, NVIDIA showcased the hardware at GTC, with CEO Jensen Huang standing on stage during the opening keynote with a system featuring 1TB of HBM4e memory and a new rack called Kyber that features vertical trays and liquid cooling as standard. The new rack is designed to double the number of GPUs, while upgrading communication to a 7th-generation NVLink switch.

[12]

NVIDIA unveils Vera Rubin at GTC 2026, the brain behind the next era AI

TL;DR: NVIDIA's Vera Rubin AI platform, unveiled by CEO Jensen Huang, integrates seven chips and six racks to optimize AI inference and agent-based workloads. Featuring the Vera CPU and Rubin GPU with 288GB HBM4 memory and 50 PFLOPS performance, it aims to reduce inference costs by up to 10x and enhance autonomous AI capabilities. NVIDIA's CEO Jensen Huang has officially unveiled its next-generation AI platform, which includes seven chips and six individual racks. Vera Rubin was unveiled at GTC 2026, where Huang broke down the components that make up NVIDIA's new flagship AI platform. NVIDIA has positioned the unveiling of Vera Rubin as the foundation of a new era of AI infrastructure, with the platform being specifically designed around AI inference and agent-based workloads. At its core, Vera Rubin is a full-stack AI supercomputing platform that combines a set of tightly integrated components, including the Vera CPU, Rubin GPU, NVLink interconnect, networking, and data processing units. Huang explained during the GTC Keynote that AI is moving toward continuous generation of responses, decision-making, and action-taking. The NVIDIA CEO added that Vera Rubin has been specifically designed to support this new direction of AI inference. Notably, NVIDIA's Vera Rubin is an AI system designed to reason, plan, and act autonomously. As for performance, NVIDIA has said Vera Rubin will cut inference token costs by up to 10x and reduce the number of GPUs required for complex models. Furthermore, each NVIDIA Rubin GPU features 288GB of HBM4 memory, providing up to 22 TB/s of total bandwidth, along with 50 PFLOPS of NVFP4 compute performance. At the transistor level, each NVIDIA Rubin GPU has 336 billion transistors, with an additional 2.5 trillion transistors in HBM4 memory. As for the CPU, the Vera CPU is NVIDIA's first fully custom, next-generation Arm-based CPU designed solely for AI data centers. Its purpose is to keep the massive GPU cluster running at maximum efficiency 24/7.

Share

Share

Copy Link

Nvidia introduced its Vera CPU at GTC 2026, featuring 88 custom Olympus Arm cores designed for agentic AI workloads. The processor delivers 1.2 TB/s memory bandwidth and 50% higher performance than standard CPUs. Nvidia now offers liquid cooled rack systems with 256 Vera CPUs, competing directly against Intel and AMD in the data center CPU market for the first time.

Nvidia Positions Vera CPU as Core Component of AI Infrastructure

Nvidia unveiled its Vera CPU at the GPU Technology Conference (GTC) 2026, marking a strategic shift in how the company approaches AI infrastructure

1

. The processor features 88 custom Olympus Arm cores built on Arm v9.2-A architecture, representing a significant upgrade from the 72 Arm Neoverse cores found in Nvidia's previous Grace processor4

. Unlike Grace, which primarily served as a companion to GPUs, the Vera CPU is positioned as a general-purpose data center CPU designed to compete directly with offerings from Intel and AMD2

.

Source: TweakTown

"Vera is arriving at a turning point for AI. As intelligence becomes agentic -- capable of reasoning and acting -- the importance of the systems orchestrating that work is elevated," said Jensen Huang, founder and CEO of Nvidia

5

. The chip addresses a critical need in modern AI workloads where agents must execute code, perform SQL queries, and handle tool calling—tasks that cannot run on GPUs alone2

.Technical Architecture Delivers Breakthrough Memory Bandwidth

The Vera CPU introduces several architectural innovations that differentiate it from traditional server processors. Each chip delivers 1.2 TB/s of memory bandwidth through support for up to 1.5 TB of SOCAMM LPDDR5X memory

2

. This represents approximately 13.6 GB/s per core under full load, with the fabric capable of delivering up to 80 GB/s per core when other cores are not fully saturated4

. Nvidia claims this provides 3x more memory bandwidth compared to contemporary x86 processors from Intel and AMD2

.

Source: SiliconANGLE

The Olympus Arm cores feature a distinctive spatial multi-threading design that physically partitions execution units, caches, and register files between two hardware threads

4

. This approach enables 176 threads to run concurrently without time-slicing shared resources, delivering more predictable performance in multi-tenant environments. The core complex operates as a single coherent domain using Nvidia's Scalable Coherency Fabric, avoiding the NUMA topology challenges common in high-core-count x86 designs4

.The execution pipeline includes a 10-wide instruction decode block and a neural branch predictor capable of handling two branch predictions per cycle

2

. Nvidia has also integrated a PyTorch-optimized instruction buffer and custom prefetch engine designed for graph analytics, targeting the irregular control-flow patterns common in AI frameworks and data analytics workloads4

.Liquid Cooled Rack System Enables Massive AI Deployments

Nvidia introduced a high-density liquid cooled rack system that packs 256 Vera CPUs alongside 64 BlueField-4 data processing units

2

. This rack-scale architecture delivers more than 22,500 CPU cores and 400 TB of memory, providing approximately 300 TB/s of aggregate memory bandwidth4

. The system is designed specifically for agentic AI frameworks, reinforcement learning, and AI training techniques that require substantial CPU resources2

.Ian Buck, VP of Hyperscale and HPC at Nvidia, explained that agents require CPUs for critical tasks: "Agents don't operate on GPUs alone. They need CPUs in order to do their work, whether we're training agentic models or serving them, GPUs today actually call out to CPUs in order to do the tool calling, SQL queries and the compilation of code"

2

. This sandbox execution capability is essential for both training and deploying agents across data centers.The Vera CPU will be available in both single- and dual-socket configurations from ODM and OEM partners including Foxconn, Wistron, Dell Tech, Lenovo, and HPE

2

. Nvidia's NVL8 HGX systems, which traditionally used x86 processors from Intel, will now offer Vera CPU configurations for the Rubin generation2

.Related Stories

Vera Rubin Platform Integrates Seven Chips for Complete AI Factory

The Vera CPU forms a critical component of Nvidia's broader Vera Rubin platform, which integrates seven chips designed to operate as a single co-designed system

3

. The platform includes the Rubin GPU built on TSMC's 3nm process with 288 GB of HBM4 memory, the Groq 3 LPU for low-latency inference, NVLink 6 switches, ConnectX-9 SuperNICs, and Spectrum-6 Ethernet switches3

.

Source: Tom's Hardware

The Vera CPU connects to Rubin GPUs via NVLink-C2C at 1.8 TB/s of coherent bandwidth, which is seven times faster than PCIe Gen 6

3

. This high-speed interconnect enables the CPU to handle orchestration tasks including scheduling workloads, routing KV cache data, managing context, and running the control plane for agentic AI workflows3

. The platform supports confidential computing across both CPU and GPU domains, enabling encrypted execution and isolation that extend into GPU memory and multi-socket nodes4

.Major Cloud Providers Commit to Vera Deployments

When the Vera CPU debuts in the second half of 2026, major cloud and AI infrastructure providers have already committed to deployments. Alibaba, ByteDance, Meta, Oracle, CoreWeave, Lambda, Nebius, and NScale have all announced plans to integrate the chips into their data centers

2

. Meta recently revealed plans to deploy Nvidia's standalone Grace CPUs at scale, suggesting strong demand for Nvidia's CPU offerings beyond GPU-attached configurations2

.Nvidia claims the Vera CPU delivers 1.5x the performance per core compared to standard CPUs and operates with twice the efficiency

5

. For customers, this translates to the ability to run more AI workloads per rack while reducing power consumption—a critical consideration as AI infrastructure scales. The company's roadmap also reveals plans for the Rosa CPU in 2028, which will focus on ultimate single-thread performance and shorten Nvidia's CPU development cycle from four years to two, matching the cadence of AMD and Intel1

. This aggressive development timeline signals Nvidia's commitment to competing in the data center CPU market long-term, potentially reshaping the competitive landscape dominated by x86 architectures for decades.References

Summarized by

Navi

[1]

[2]

[3]

[5]

Related Stories

NVIDIA Unveils Next-Gen AI Powerhouses: Rubin and Rubin Ultra GPUs with Vera CPUs

19 Mar 2025•Technology

NVIDIA Unveils Roadmap for Next-Gen AI GPUs: Blackwell Ultra and Vera Rubin

28 Feb 2025•Technology

Nvidia Unveils Vera Rubin Superchip: Six-Trillion Transistor AI Platform Set for 2026 Production

29 Oct 2025•Technology