Nvidia unveils BlueField-4 STX to eliminate storage bottlenecks stalling agentic AI inference

4 Sources

[1]

Nvidia launches BlueField-4 STX storage architecture for agentic AI at GTC 2026

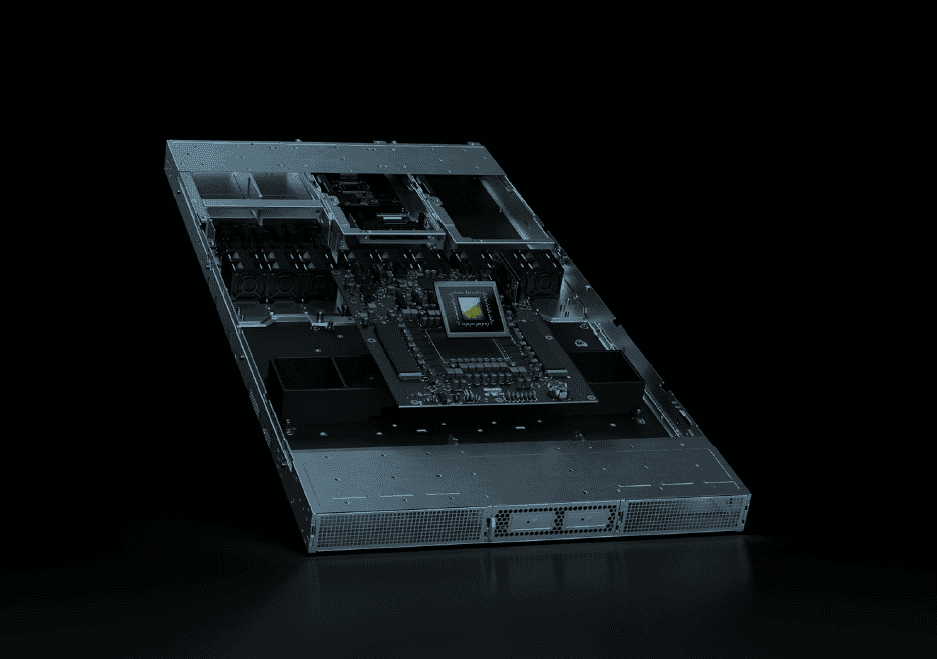

Nvidia announced BlueField-4 STX at GTC 2026 on March 16, a modular reference architecture for accelerated storage designed to address the data access bottleneck limiting agentic AI inference. Built around a new storage-optimized BlueField-4 DPU and ConnectX-9 SuperNIC, the platform targets GPU underutilization that occurs when AI agents operating across extended sessions and expanding context windows exceed the throughput of conventional storage paths. Nvidia says STX delivers up to five times the token throughput, four times better energy efficiency, and twice the page ingestion speed compared with traditional CPU-based storage architectures. The specific issue that Nvidia is targeting with STX is KV cache management. During transformer inference, the attention mechanism computes KV pairs for every token in context, which must be stored and retrieved for each subsequent generation step. But these context windows are growing into the hundreds of thousands of tokens, meaning that the KV cache is outgrowing GPU HBM capacity. The usual fallback is to offload to host DRAM or NVMe storage, but both routes pass through the CPU, adding latency that compounds with context length and stalls GPU execution as data transits. STX bypasses the host CPU by routing data through a dedicated accelerated storage layer via RDMA over Spectrum-X Ethernet. BlueField-4 manages NVMe SSDs directly and handles data integrity and encryption for the KV cache, keeping context accessible at the storage processor rather than transiting the host. The full stack runs on the Vera Rubin platform and integrates the Vera CPU -- also announced at GTC on March 16 -- alongside ConnectX-9, Spectrum-X Ethernet, DOCA software, and AI Enterprise software. The first rack-scale implementation built on STX is the Nvidia CMX context memory storage platform. 
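To put rough numbers on why the KV cache outgrows GPU memory, here is a back-of-the-envelope sketch. It uses the standard estimate of one key and one value vector per layer, per KV head, per token. The `ModelShape` values in `EXAMPLE` are assumptions chosen for illustration and do not describe any model Nvidia has named.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical transformer dimensions; not taken from any specific model."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_value: int  # 2 for FP16/BF16


def kv_cache_bytes(shape: ModelShape, context_tokens: int, batch_size: int = 1) -> int:
    """Bytes needed to hold keys and values for every token in context."""
    # Factor of 2: one key vector plus one value vector per head, per layer.
    per_token = (
        2 * shape.num_layers * shape.num_kv_heads * shape.head_dim * shape.bytes_per_value
    )
    return per_token * context_tokens * batch_size


# Assumed example shape: 80 layers, 8 KV heads, head_dim 128, 16-bit values.
EXAMPLE = ModelShape(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_value=2)

if __name__ == "__main__":
    for tokens in (8_000, 128_000, 500_000):
        gib = kv_cache_bytes(EXAMPLE, tokens) / 2**30
        print(f"{tokens:>7} tokens -> {gib:6.1f} GiB per sequence")
```

Under these assumed dimensions a single 128,000-token sequence needs roughly 39 GiB for its cache alone, before model weights or concurrent sessions are counted, which is the kind of pressure that pushes the cache off the GPU.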
Storage and infrastructure vendors co-designing systems based on STX include DDN, Dell Technologies, HPE, IBM, NetApp, and VAST Data, alongside manufacturing partners AIC, Supermicro, and Quanta Cloud Technology. Meanwhile, eight cloud and AI providers -- including CoreWeave, Lambda, Mistral AI, and Oracle Cloud Infrastructure -- committed to early adoption for context memory storage. STX-based platforms are expected from partners in the second half of 2026. "Agentic AI is redefining what software can do -- and the computing infrastructure behind it must be reinvented to keep pace," Jensen Huang, founder and CEO of Nvidia, said at GTC. "AI systems that reason across massive context and continuously learn require a new class of storage."

[2]

Nvidia BlueField-4 STX adds a context memory layer to storage to close the agentic AI throughput gap

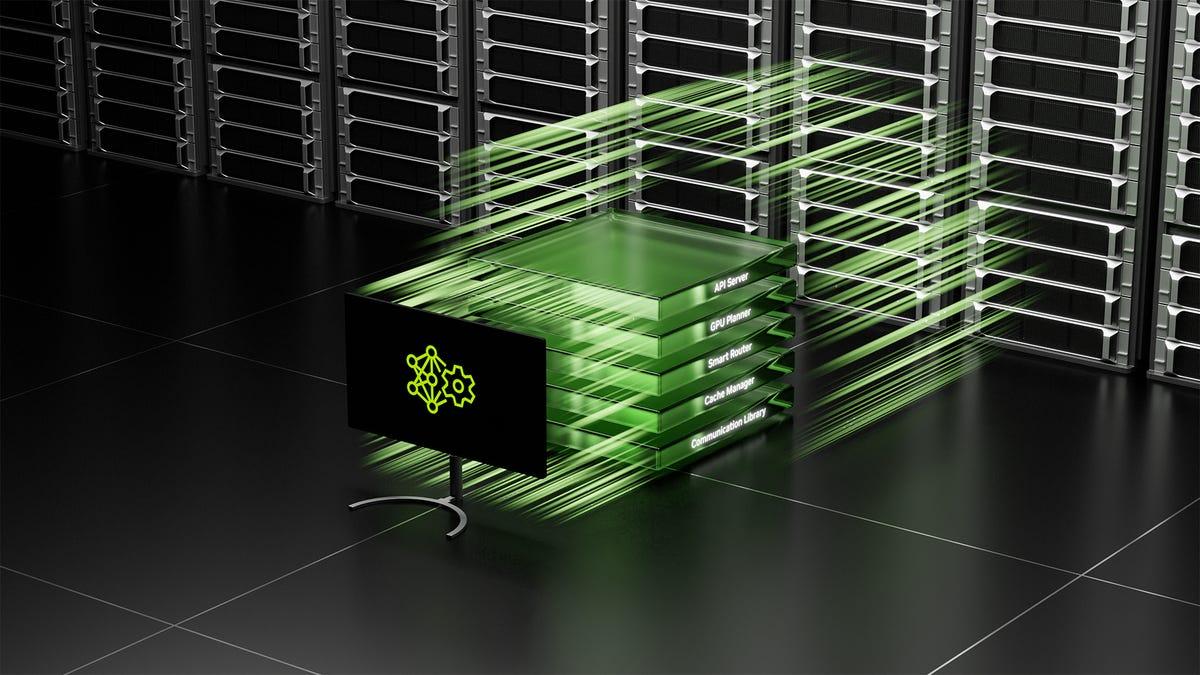

When an AI agent loses context mid-task because traditional storage can't keep pace with inference, it is not a model problem -- it is a storage problem. At GTC 2026, Nvidia announced BlueField-4 STX, a modular reference architecture that inserts a dedicated context memory layer between GPUs and traditional storage, claiming 5x the token throughput, 4x the energy efficiency and 2x the data ingestion speed of conventional CPU-based storage. The bottleneck STX targets is key-value cache data. KV cache is the stored record of what a model has already processed -- the intermediate calculations an LLM saves so it does not have to recompute attention across the entire context on every inference step. It is what allows an agent to maintain coherent working memory across sessions, tool calls and reasoning steps. As context windows grow and agents take more steps, that cache grows with them. When it has to traverse a traditional storage path to get back to the GPU, inference slows and GPU utilization drops. STX is not a product Nvidia sells directly. It is a reference architecture the company is distributing to its storage partner ecosystem so vendors can build AI-native infrastructure around it.

STX puts a context memory layer between GPU and disk

The architecture is built around a new storage-optimized BlueField-4 processor that combines Nvidia's Vera CPU with the ConnectX-9 SuperNIC. It runs on Spectrum-X Ethernet networking and is programmable through Nvidia's DOCA software platform. The first rack-scale implementation is the Nvidia CMX context memory storage platform. CMX extends GPU memory with a high-performance context layer designed specifically for storing and retrieving KV cache data generated by large language models during inference. Keeping that cache accessible without forcing a round trip through general-purpose storage is what CMX is designed to do.

"Traditional data centers provide high-capacity, general-purpose storage, but generally lack the responsiveness required for interaction with AI agents that need to work across many steps, tools and different sessions," Ian Buck, Nvidia's vice president of hyperscale and high-performance computing, said in a briefing with press and analysts. In response to a question from VentureBeat, Buck confirmed that STX also ships with a software reference platform alongside the hardware architecture. Nvidia is expanding DOCA to include a new component referred to in the briefing as DOCA Memo. "Our storage providers can leverage the programmability of the BlueField-4 processor to optimize storage for the agentic AI factory," Buck said. "In addition to having a reference rack architecture, we're also providing a reference software platform for them to deliver those innovations and optimizations for their customers." Storage partners building on STX get both a hardware reference design and a software reference platform -- a programmable foundation for context-optimized storage.

Nvidia's partner list spans storage incumbents and AI-native cloud providers

Storage providers co-designing STX-based infrastructure include Cloudian, DDN, Dell Technologies, Everpure, Hitachi Vantara, HPE, IBM, MinIO, NetApp, Nutanix, VAST Data and WEKA. Manufacturing partners building STX-based systems include AIC, Supermicro and Quanta Cloud Technology. On the cloud and AI side, CoreWeave, Crusoe, IREN, Lambda, Mistral AI, Nebius, Oracle Cloud Infrastructure and Vultr have all committed to STX for context memory storage. That combination of enterprise storage incumbents and AI-native cloud providers is the signal worth watching. Nvidia is not positioning STX as a specialty product for hyperscalers. 
It is positioning it as the reference standard for anyone building storage infrastructure that has to serve agentic AI workloads -- which, within the next two to three years, is likely to include most enterprise AI deployments running multi-step inference at scale. STX-based platforms will be available from partners in the second half of 2026.

IBM shows what the data layer problem looks like in production

IBM sits on both sides of the STX announcement. It is listed as a storage provider co-designing STX-based infrastructure, and Nvidia separately confirmed that it has selected IBM Storage Scale System 6000 -- certified and validated on Nvidia DGX platforms -- as the high-performance storage foundation for its own GPU-native analytics infrastructure. IBM also announced a broader expanded collaboration with Nvidia at GTC, including GPU-accelerated integration between IBM's watsonx.data Presto SQL engine and Nvidia's cuDF library. A production proof of concept with Nestlé put numbers on what that acceleration looks like: a data refresh cycle across the company's Order-to-Cash data mart, covering 186 countries and 44 tables, dropped from 15 minutes to three minutes. IBM reported 83% cost savings and a 30x price-performance improvement. The Nestlé result is a structured analytics workload. It does not directly demonstrate agentic inference performance. But it makes IBM and Nvidia's shared argument concrete: the data layer is where enterprise AI performance is currently constrained, and GPU-accelerating it produces material results in production.

Why the storage layer is becoming a first-class infrastructure decision

STX is a signal that the storage layer is becoming a first-class concern in enterprise AI infrastructure planning, not an afterthought to GPU procurement. General-purpose NAS and object storage were not designed to serve KV cache data at inference latency requirements. 
STX-based systems from partners including Dell, HPE, NetApp and VAST Data are what Nvidia is putting forward as the practical alternative, with the DOCA software platform providing the programmability layer to tune storage behavior for specific agentic workloads. The performance claims -- 5x token throughput, 4x energy efficiency, 2x data ingestion -- are measured against traditional CPU-based storage architectures. Nvidia has not specified the exact baseline configuration for those comparisons. Before those numbers drive infrastructure decisions, the baseline is worth pinning down. Platforms are expected from partners in the second half of 2026. Given that most major storage vendors are already co-designing on STX, enterprises evaluating storage refreshes for AI infrastructure in the next 12 months should expect STX-based options to be available from their existing vendor relationships.
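The Nestlé figures quoted earlier in this piece can be sanity-checked with simple arithmetic. The sketch below assumes that "price-performance" means speedup divided by relative cost. That definition is an assumption made here for illustration; IBM's actual methodology is not given in the source, and the function names are hypothetical.

```python
def speedup(before_minutes: float, after_minutes: float) -> float:
    """How many times faster the refresh cycle became."""
    return before_minutes / after_minutes


def price_performance_gain(speedup_factor: float, cost_savings_fraction: float) -> float:
    """Assumed definition: speedup divided by the fraction of cost that remains."""
    relative_cost = 1.0 - cost_savings_fraction
    return speedup_factor / relative_cost


refresh_speedup = speedup(15, 3)                            # 15 minutes down to 3
gain_reported = price_performance_gain(refresh_speedup, 0.83)
gain_unrounded = price_performance_gain(refresh_speedup, 5 / 6)

if __name__ == "__main__":
    print(f"speedup: {refresh_speedup:.1f}x")
    print(f"price-performance at 83% savings: {gain_reported:.1f}x")
    print(f"price-performance at 5/6 savings: {gain_unrounded:.1f}x")
```

Under that assumed definition, a 5x speedup combined with 83% savings works out to a little over 29x, and savings of exactly five-sixths (about 83.3%) give 30x, so the three reported figures are at least mutually consistent.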

[3]

The convergence of context: Why Nvidia's BlueField-4 STX marries the network and storage admin - SiliconANGLE

For years, the "wall" between storage and networking administrators has been a fixture of the enterprise data center. I spent the early part of my career as a network engineer and the arena of storage was a bit of a black box, and for most companies, that's still the case. This is because these networks operated differently. Storage teams obsessed over IOPS and durability, while networking teams lived and breathed latency and throughput. But at Nvidia GTC 2026, Chief Executive Jensen Huang just introduced a platform that effectively tears that wall down: the Nvidia BlueField-4 STX storage architecture. Nvidia announced a modular reference architecture that it says delivers up to five times more token throughput and four times more energy efficiency compared with traditional central processing unit-based storage designs. However, it's important to look past the numbers. This is not an innovation that just increases speed; it is a rethink of how we define "storage" for the era of agentic artificial intelligence. We are moving past the era of simple "chatbots" into the era of agentic AI -- systems that don't just answer questions but execute multistep tasks across sessions. These agents require contextual working memory. Traditional storage (think high-capacity, general-purpose arrays) is too slow for this. When an AI agent needs to recall a specific detail from a 10-hour conversation or a massive technical manual to take its next step, waiting for a traditional data path creates a bottleneck that leaves expensive graphics processing units sitting idle, and there is no bigger waste of money than GPUs that aren't being used. The BlueField-4 STX introduces the Nvidia CMX (Context Memory Storage) platform. This isn't just "more disk," but rather a high-performance context layer that expands GPU memory across the rack. 
It allows AI factories to ingest data twice as fast and maintain the responsiveness required for long-context reasoning. The technical differentiation behind STX lies in its integration with the Nvidia Vera Rubin platform. The architecture employs a storage-optimized BlueField-4 processor that combines the Nvidia Vera CPU with the ConnectX-9 SuperNIC. By offloading storage tasks from the general-purpose CPU to this specialized STX architecture, Nvidia is claiming a fourfold jump in energy efficiency. In an era where power availability is the single biggest constraint on data center expansion, that's not just a "nice-to-have" -- it's the difference between scaling and stalling. This announcement serves as a final notice: The silos must end. If you are a network administrator, you are now in the storage business. If you are a storage administrator, you are now in the networking business. The Nvidia BlueField-4 STX architecture is a product of what Huang calls extreme co-design. At GTC, Nvidia held an analyst session on this topic and how it was used to create the new solution. Extreme co-design is a multidisciplinary engineering approach that treats the entire data center as a single, integrated unit to eliminate the traditional "wall" between networking and storage. By tightly coupling the Vera CPU, ConnectX-9 SuperNIC and Spectrum-X Ethernet, Nvidia has created a distributed context layer that it says allows AI agents to access working memory with four times the energy efficiency and five times the token throughput of CPU-based designs. This synergy ensures that the network effectively becomes the storage bus, providing the ultra-low latency required for the multistep reasoning tasks of agentic AI. Regarding the role of storage within this co-designed ecosystem, Senior Vice President of Networking Kevin Deierling noted: "Thinking takes planning. You write a to-do list. You need to store that somewhere, and so when Jensen was talking about STX and CMX, CMX is the cache optimized version of that. 
All of this needs to be optimized, because thinking requires memory, and that memory ultimately is part of this co-design optimization across the entire data center." This is just the latest product that Nvidia has created using this methodology. Others include Vera Rubin, Groq 3 LPX, Spectrum-X, IGX Thor and many others. It's this ability to think at a system level that has created the moat Nvidia seems to have around it. The industry isn't waiting around to see if this works. The list of partners is a "who's who" of the infrastructure world. It's easy to look at BlueField-4 STX as a storage-optimized hardware refresh, but it's bringing storage into the AI factory as an integrated component. It recognizes that storage for AI isn't about long-term archiving -- it's about active reasoning. For the information technology professional, the message from GTC is about staying ahead of the curve. Get out of your comfort zone and start learning the other side of the aisle. Storage and networking are coming together, and those engineers who work in silos will be on the outside looking in. The most successful data center architects of 2026 will be those who can speak "Spectrum-X" and "context memory" in the same breath. Platforms based on STX are expected to hit the market in the second half of 2026. The clock is ticking.

[4]

Nvidia introduces BlueField-4 STX reference architecture for AI storage systems - SiliconANGLE

Nvidia Corp. today launched a reference architecture that hardware makers can use to build storage equipment for artificial intelligence clusters. The BlueField-4 STX made its debut at the company's GTC developer event. "AI systems that reason across massive context and continuously learn require a new class of storage," said Nvidia Chief Executive Officer Jensen Huang. "NVIDIA STX reinvents the storage stack, providing a modular foundation for AI-native infrastructure that keeps AI factories operating at peak performance." The architecture's first building block is the BlueField-4 data processing unit, or DPU, that Nvidia unveiled in January. A DPU offloads infrastructure management tasks from a server's main processor to leave more computing capacity for applications. The BlueField-4 handles tasks such as processing data traffic between GPUs and flash storage. According to Nvidia, the BlueField-4 STX also includes its Spectrum-X Ethernet switches and ConnectX-9 SuperNICs. Usually, the data that a server fetches from storage has to pass through its central processing unit and operating system. Spectrum-X and ConnectX-9 support a technology called RDMA that skips those pit stops, which speeds up the flow of traffic. Nvidia says that BlueField-4 STX can process tokens, units of data used by AI models, up to 5 times faster than earlier storage architectures. The company also expects a fourfold improvement in energy efficiency. The first rack-scale implementation of the BlueField-4 STX architecture is a storage system design called CMX. It's optimized to hold key-value caches, data structures that large language models use to store information. LLMs include an attention mechanism that analyzes each prompt, determines which of its elements are most important and prioritizes them. 
Along the way, the attention mechanism turns the contents of the prompt into mathematical objects called vectors. It uses two main types of vectors: keys that help the LLM find information and values that hold the information. CMX stores an AI cluster's key-value cache in high-speed flash storage. BlueField-4 chips offload key data management tasks from the host cluster's CPUs to boost performance. According to Nvidia, CMX also speeds up AI workloads in other ways. Storage systems use multiple hardware-intensive algorithms to reduce the risk of data loss. CMX doesn't run those algorithms on the KV cache that it holds, which avoids the associated hardware overhead. The system can skip that step because a KV cache often doesn't require the same data loss protection as standard business records. The information in a KV cache is relatively easy to recover and is usually only retained for a short amount of time before it's deleted.
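For readers who want to see the key and value idea in miniature, here is a toy single-head sketch in plain Python. It is a simplification under stated assumptions: real models derive keys, values and queries from learned projections and run many heads and layers on the GPU, whereas this example takes the vectors as given. The `KVCache` class and its method names are hypothetical and are not part of any Nvidia software.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class KVCache:
    """Keeps one key and one value vector per token already processed."""

    def __init__(self) -> None:
        self.keys: List[Vector] = []
        self.values: List[Vector] = []

    def append(self, key: Vector, value: Vector) -> None:
        # Called once per new token, so earlier tokens are never recomputed.
        self.keys.append(key)
        self.values.append(value)

    def attend(self, query: Vector) -> Vector:
        """Weight cached values by how well each cached key matches the query."""
        scale = math.sqrt(len(query))
        weights = softmax([dot(query, k) / scale for k in self.keys])
        dim = len(self.values[0])
        return [sum(w * v[i] for w, v in zip(weights, self.values)) for i in range(dim)]


if __name__ == "__main__":
    cache = KVCache()
    cache.append([1.0, 0.0], [2.0, 0.0])
    cache.append([1.0, 0.0], [0.0, 2.0])
    print(cache.attend([1.0, 0.0]))  # two equally matching keys: values are averaged
```

Each generated token adds one more entry and every later step reads all of them, which is why the cache grows with context length and why where it lives matters.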

Nvidia announced BlueField-4 STX at GTC 2026, a modular AI storage reference architecture that inserts a dedicated context memory layer between GPUs and traditional storage. Nvidia says the platform delivers up to 5x the token throughput and 4x better energy efficiency than CPU-based storage, targeting the key-value cache bottleneck that causes GPU underutilization during extended AI agent sessions.

Nvidia Targets Storage Bottleneck Limiting Agentic AI Performance

Nvidia announced BlueField-4 STX at GTC 2026 on March 16, introducing a modular AI storage reference architecture designed to address the data access bottleneck that limits agentic AI inference. Built around a new storage-optimized BlueField-4 Data Processing Unit (DPU) and ConnectX-9 SuperNIC, the platform targets GPU underutilization that occurs when AI agents operating across extended sessions and expanding context windows exceed the throughput of conventional storage paths [1]. Nvidia claims BlueField-4 STX delivers up to 5x the token throughput, 4x better energy efficiency, and 2x the data ingestion speed compared with traditional CPU-based storage architectures [2].

Context Memory Layer Solves Key-Value Cache Management Crisis

The specific challenge that BlueField-4 STX addresses is key-value cache management during transformer inference [1]. Large language models (LLMs) compute KV pairs for every token in context, which must be stored and retrieved for each subsequent generation step. As context windows grow into hundreds of thousands of tokens, the KV cache outgrows GPU HBM capacity, forcing offloads to host DRAM or NVMe storage that pass through the CPU, adding latency that compounds with context length and stalls GPU execution [1].
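The offload pattern described here can be pictured as a small two-tier store. The sketch below is a toy model only: a fast tier with limited room stands in for GPU HBM, a slow tier stands in for whatever the cache spills to, and a counter tracks how often a read has to go to the slow tier. The `TieredKVStore` class, the least-recently-used eviction policy and the block naming are all assumptions made for illustration; the source does not describe how STX or CMX place data.

```python
from collections import OrderedDict
from typing import Dict, Optional


class TieredKVStore:
    """Toy two-tier store: a small fast tier that spills LRU blocks to a slow tier."""

    def __init__(self, fast_capacity_blocks: int) -> None:
        if fast_capacity_blocks < 1:
            raise ValueError("fast tier needs room for at least one block")
        self.fast_capacity_blocks = fast_capacity_blocks
        self.fast: "OrderedDict[str, bytes]" = OrderedDict()  # stands in for GPU HBM
        self.slow: Dict[str, bytes] = {}  # stands in for an offload tier
        self.slow_reads = 0  # each one is a fetch the GPU would have to wait on

    def put(self, block_id: str, data: bytes) -> None:
        self.slow.pop(block_id, None)  # avoid keeping a stale copy in the slow tier
        self.fast[block_id] = data
        self.fast.move_to_end(block_id)  # mark as most recently used
        self._evict()

    def get(self, block_id: str) -> Optional[bytes]:
        if block_id in self.fast:
            self.fast.move_to_end(block_id)
            return self.fast[block_id]
        if block_id in self.slow:
            self.slow_reads += 1
            data = self.slow.pop(block_id)
            self.fast[block_id] = data  # promote back into the fast tier
            self._evict()
            return data
        return None

    def _evict(self) -> None:
        # Spill least recently used blocks until the fast tier fits again.
        while len(self.fast) > self.fast_capacity_blocks:
            victim, data = self.fast.popitem(last=False)
            self.slow[victim] = data
```

In this toy model the cost of a long context shows up as a rising `slow_reads` count. The claim in the reporting is that STX makes each of those slow-tier fetches cheaper by keeping them off the host CPU path, not that it removes them.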

The context memory layer that STX introduces between GPUs and traditional storage is designed to maintain coherent working memory across sessions, tool calls, and reasoning steps without forcing round trips through general-purpose storage [2]. "Traditional data centers provide high-capacity, general-purpose storage, but generally lack the responsiveness required for interaction with AI agents that need to work across many steps, tools and different sessions," Ian Buck, Nvidia's vice president of hyperscale and high-performance computing, said in a briefing [2].

AI-Native Infrastructure Built on Extreme Co-Design

BlueField-4 STX bypasses the host CPU by routing data through a dedicated accelerated storage layer via RDMA over Spectrum-X Ethernet [1]. The BlueField-4 processor manages NVMe SSDs directly and handles data integrity and encryption for the KV cache, keeping context accessible at the storage processor rather than transiting the host [1]. The full stack runs on the Vera Rubin platform and integrates the Vera CPU alongside ConnectX-9, Spectrum-X Ethernet, DOCA software, and AI Enterprise software [1]. The architecture employs what Jensen Huang calls extreme co-design, a multidisciplinary engineering approach that treats the entire data center as a single integrated unit to eliminate traditional silos between networking and storage [3]. "AI systems that reason across massive context and continuously learn require a new class of storage," Jensen Huang, founder and CEO of Nvidia, said at GTC [1].

CMX Platform and Partner Ecosystem Signal Industry Shift

The first rack-scale implementation built on BlueField-4 STX is the Nvidia CMX context memory storage platform, which extends GPU memory with a high-performance context layer designed specifically for storing and retrieving KV cache data generated by large language models during inference [2]. Storage vendors co-designing systems based on STX include DDN, Dell Technologies, HPE, IBM, NetApp, and VAST Data, alongside manufacturing partners AIC, Supermicro, and Quanta Cloud Technology [1].

Eight cloud and AI providers, including CoreWeave, Lambda, Mistral AI, and Oracle Cloud Infrastructure, committed to early adoption for context memory storage [1]. Buck confirmed that STX ships with a software reference platform alongside the hardware architecture, with Nvidia expanding DOCA to include a new component referred to in the briefing as DOCA Memo [2]. STX-based platforms are expected from partners in the second half of 2026 [1]. The combination of enterprise storage incumbents and AI-native cloud providers signals that Nvidia is positioning STX as the reference standard for anyone building storage infrastructure serving agentic AI workloads, which within the next two to three years is likely to include most enterprise AI deployments running multi-step inference at scale [2]. For the long-context reasoning tasks that define agentic AI, higher token throughput is the difference between scaling AI factories and watching expensive GPUs sit idle waiting for data [3].

Summarized by

Navi
