Nvidia unveils Groq 3 LPU to accelerate AI inference with SRAM-powered architecture

14 Sources


[1]

Nvidia's Groq 3 LPU Signals AI's Inferencing Era

This week, over 30,000 people are descending upon San Jose, Calif., to attend Nvidia GTC, the so-called Superbowl of AI -- a nickname that may or may not have been coined by Nvidia. At the main event Jensen Huang, Nvidia CEO, took the stage to announce (among other things) a new line of next generation Vera Rubin chips that represent a first for the GPU giant: a chip designed specifically to handle AI inference. The Nvidia Groq 3 language processing unit (LPU) incorporates intellectual property Nvidia licensed from the start-up Groq last Christmas Eve for US $20 billion. "Finally, AI is able to do productive work, and therefore the inflection point of inference has arrived," Huang told the crowd. "AI now has to think. In order to think, it has to inference. AI now has to do; in order to do, it has to inference." Training and inference tasks have distinct computational requirements. While training can be done on huge amounts of data at the same time and can take weeks, inference must be run on a user's query when it comes in. Unlike training, inference doesn't require running costly backpropagation. With inference, the most important thing is low latency -- users expect the chatbot to answer quickly, and for thinking or reasoning models inference runs many times before the user even sees an output. Late last year, it looked like Nvidia had picked one of the winners among the crop of inference chips, when it announced its deal with Groq. The Nvidia Groq 3 LPU reveal came a mere two and a half months after, highlighting the urgency of the growing inference market. Groq's approach to accelerating inference relies on interleaving processing units with memory units on the chip. Instead of relying on high-bandwidth memory (HBM) situated next to GPUs it leans on SRAM memory integrated within the processor itself. This design greatly simplifies the flow of data through the chip, allowing it to proceed in a streamlined, linear fashion. 
"The data actually flows directly through the SRAM," Mark Heaps said at the Supercomputing conference in 2024. Heaps was a chief technology evangelist at Groq at the time and is now director of developer marketing at Nvidia. "When you look at a multi-core GPU, a lot of the instruction commands need to be sent off the chip, to get into memory and then come back in. We don't have that. It all passes through in a linear order." Using SRAM allows that linear data flow to happen exceptionally fast, leading to the low latency required for inference applications. "The LPU is optimized strictly for that extreme low latency token generation," says Ian Buck, VP and general manager of hyperscale and high-performance computing at Nvidia. Comparing the Rubin GPU and Groq 3 LPU side by side highlights the difference. The Rubin GPU has access to a whopping 288 gigabytes of HBM and is capable of 50 quadrillion floating-point operations per second (petaFLOPS) of 4-bit computation. The Groq 3 LPU contains a mere 500 megabytes of SRAM memory, and is capable of 1.2 petaFLOPS of 8-bit computation. On the other hand, while the Rubin GPU has a memory bandwidth of 22 terabytes per second, at 150 TB/s the Groq 3 LPU is seven times as fast,. The lean, speed-focused design is what allows the LPU to excel at inference. The new inference chip underscores the ongoing trend of AI adoption, which shifts the computational load from just building ever bigger models to actually using those models at scale ."NVIDIA's announcement validates the importance of SRAM-based architectures for large-scale inference, and no one has pushed SRAM density further than d-Matrix," says d-Matrix CEO Sid Sheth. He's betting that data center customers will want a variety of processors for inference. "The winning systems will combine different types of silicon and fit easily into existing data centers alongside GPUs." Inference-only chips may not be the only solution. 
Late last week, Amazon Web Services said that it will deploy a new kind of inferencing system in its data centers. The system is a combination of AWS' Trainium AI accelerator and Cerebras Systems' third generation computer CS-3, which is built around the largest single chip ever made. The two-part system is meant to take advantage of a technique called inference disaggregation. It separates inference into two parts -- processing the prompt, called prefill, and generating the output, called decode. Prefill is inherently parallel, computationally intensive, and doesn't need much memory bandwidth, while decode is a more serial process that needs a lot of memory bandwidth. Cerebras has tackled the memory bandwidth issue by building 44 GB of SRAM on its chip, connected by a 21 PB/s network. Nvidia, too, intends to take advantage of inference disaggregation in its new, combined compute tray called the Nvidia Groq 3 LPX. Each tray will house 8 Groq 3 LPUs and a Vera Rubin, which pairs Rubin GPUs with a Vera CPU. The prefill and the more computationally intensive parts of the decode are done on Vera Rubin, while the final part is done on the Groq 3 LPU, leveraging the strengths of each chip. "We're in volume production now," Huang said.
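The prefill/decode split described above can be illustrated with a toy sketch. Everything here is hypothetical: the `KVCache` class, the function names, and the arithmetic "model" are stand-ins chosen only to show the control flow (one bulk pass over the prompt, then a serial loop that emits one token at a time), not how any vendor's system is implemented.

```python
from dataclasses import dataclass, field

VOCAB_SIZE = 50  # hypothetical toy vocabulary size


@dataclass
class KVCache:
    """Stand-in for per-request state; here it is just the tokens seen so far."""
    tokens: list = field(default_factory=list)


def prefill(prompt_tokens):
    """Prefill: ingest the whole prompt in one pass (parallel on real hardware)."""
    return KVCache(tokens=list(prompt_tokens))


def decode_step(cache):
    """Decode: produce one token from the cached state, then extend the cache."""
    # Toy rule standing in for a model forward pass.
    next_token = (sum(cache.tokens) + len(cache.tokens)) % VOCAB_SIZE
    cache.tokens.append(next_token)
    return next_token


def generate(prompt_tokens, max_new_tokens):
    cache = prefill(prompt_tokens)  # would run on the prefill pool
    # In a disaggregated deployment, the cache is handed to a separate
    # decode pool at this point; each step depends on the previous one.
    return [decode_step(cache) for _ in range(max_new_tokens)]


output = generate([3, 1, 4], max_new_tokens=4)
print(output)
```

The point of the sketch is the shape of the work: `prefill` touches every prompt token at once, while `generate` cannot start step N+1 until step N has finished, which is why the two phases favor different hardware.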

[2]

GTC 2026: Ian Buck press Q&A transcript -- VP of Hyperscale and HPC speaks out on shelving CPX and shipping LPU decode this year

We sat down at GTC 2026 with Nvidia's VP of Hyperscale and HPC, Ian Buck. Following Nvidia's GTC 2026 keynote, where CEO Jensen Huang laid out the company's Vera Rubin architecture and the Groq 3 LPU acquisition, Nvidia VP of Hyperscale and HPC Ian Buck sat down with press for a Q&A session in San Jose. Buck addressed CPX's delay, the LPU-GPU decode architecture, the Vera CPU's role in the AI data center, and took questions on the Intel NVLink Fusion partnership. This is a full transcript of a session attended by Tom's Hardware at GTC 2026, and as such, the transcript can occasionally be unclear; we have denoted any moments as such within the copy. Before diving into the transcript, it's worth refamiliarizing yourself with Jensen Huang's keynote from last week, which we've linked below. CPX delay and LPU decode architecture Ian Buck: As part of bringing the LPU to market this year with Vera Rubin... we've pulled CPX. It's still a good idea, but in order to dedicate our focus on... optimizing the decode with LPU this year. So we'll be thinking about CPX more in the next generation [and] we're going to execute on decode with LPU now, this year. A couple other things I wanted to touch on. I also get a lot of questions about how we're doing this. How does the LPU work? How does it work with the GPU? Jensen went over it briefly. This might be more technical, but it's an important point. The way we do the decode is with a Groq 3 LPU LPX rack. Here we have 256 LPU chips combined with a Vera Rubin NVL72. We're going to do the decode using Dynamo. We've combined the two teams, so Groq's software team has joined our Dynamo team. We now do not only disaggregation of separate GPUs that you pre-fill and decode, but also the decode itself is actually split between the LPU and GPU. That's what makes the extremely fast token generation economical. 
We can focus and run the computations that benefit from the fast SRAM of the LPU over here in one layer, and literally the next layer, we can send the intermediate activation state over to the GPUs to do all the attention math, all the softmax, all the routing, all the KV calculations, so that only the LPUs need to have copies of the weights. All the per-query state, all the KV[cache] state, which can get quite large, can operate and stay in the HBMs. Of course, both processors can do both things. The LPUs can do the attention math. Obviously the GPUs can do the [...] as well. So you can optimize for resiliency. Dynamo was launched a year ago. It was nicknamed the operating system of [the] AI factory. I will say it's been a roaring success. If any of you got to go to the Dynamo meetups, it was where all of the different users of Dynamo, developers, and customers were all talking. I think we get about 100 GitHub submissions a day now, and about a third of them are coming from external [sources]. Vera CPU positioning Ian Buck: Definitely happy to talk about Vera. I've actually brought with me the Vera board. This is the Vera module. It's a reference module that we give to system partners, which they can build. It has two Vera CPUs and LPDDR5 memory. So this is a dual-socket server that will operate and run all of the tooling: PyTorch, compiling, SQL queries, as well as for HPC partners. It is the world's best agentic CPU. It has 88 cores designed so that you can put everything on. Turn on everything. Run them all at full speed. Compile on every core. Browse or render on every core. Python on every core. SQL on every core. The agentic world needs a CPU that has the world's best single-threaded performance under load, has the world's best memory bandwidth under load, and has the best energy efficiency under load. 
And it turns out, while it started with a CPU that was an excellent CPU to be married with our GPUs, it makes perfect sense that all the things we needed to do to operate and run AI with our GPUs also makes a great CPU as well. Journalist 1: Is it safe to say [Rubin] CPX is not going to come out in 2026? Ian Buck: I'm never going to say no to how fast we can innovate. But I think we can do a lot better [with LPU decode first]. Journalist 2: Jensen was asked about the target market, target use case. And he said he was very careful about how he answered that. He didn't want to position it as a direct drop-in replacement for x86. Ian Buck: We only are going to build one Vera SKU [...] other people are going to build x86 SKUs [...] the world is not going to be served by one SKU of CPU. And that's not our intention. The intention is that we'd like to solve a workload problem. It's not designed to be a dollar-per-vCPU chip. The amount of technology and, frankly, just the cost to build something that solves that critical workload makes it not for that market; it's a bad gaming chip. But it is inspired by single-threaded performance. You may not need 88 cores, but it's actually a unique workload because in agentic AI, it's in the critical path for both training and running these models. When you're training a model to code, for example, you start from a model that needs to learn how to code better. And as you're training on Vera Rubin, you're halfway through training, and you say: go write a program that computes the Fibonacci series, or solves a New York Times crossword puzzle. The AI model will then try to write that program. It then needs to score how well it did. We're not going to run that Python on the GPU. It's a CPU job. The GPU tells the CPU to go run it. So it opens up a sandbox, boots a Linux instance, starts the Python interpreter, executes, compiles, and runs that code. And it's got to score it -- how well did it do? Lines of code, accuracy, did it crash? 
-- very quickly. So that all those results from the training run can get back to the GPU in order to do the next iteration of training. It is in the critical path. There are ways of overlapping. You'll hear about off-policy, where maybe you're training on the N-minus-one data, doing pipelining. But you can't do too much of that because you get model drift. So what the world is asking for, what it needs, is a really fast CPU that can generate a lot of training data while you're training in order to make the model faster, and never let [the] GPU go idle. This might be a $30 billion, gigawatt data center of GPUs. I'm not going to skimp on the CPU side and have it sit idle, or have the potential of that model come up short because I couldn't run the compilation for too long and had to cut it off. And then finally, when you actually deploy AI after you're done training, it's not just the AI model. The GPUs are telling the CPUs what to do. They run a SQL query, or they render an image, or they go to a website -- all that's happening on the CPUs. The more tool calling that can happen in fixed power, the more efficient it can be and still maintain these interactive use cases, the more valuable those tokens are. And lastly, as we get to the agent world, where it's not just us doing chatbot with humans in the loop, we're going to have agents talking to agents at machine speed. You just took humans out of the loop again. That can happen as fast as the computers can compute. Journalist 2: So, just to be clear, your customers -- your ODMs, your Dell, HPs -- if they want to build a system, that's what they'll get? Ian Buck: They can build it like this [referring to the reference board brought to the Q&A] or, we will ship the chip itself. Journalist 2: So, in theory then, your partners could go off the reservation and build a gaming PC, or whatever they wanted to do with it? Ian Buck: They could. 
I think they're all highly motivated to build what Nvidia recommends [and take advantage of] the opportunities with agentic use cases. Intel NVLink Fusion partnership Journalist 3: Ian, what's become of the partnership with Intel? Last year, you guys announced a partnership. Ian Buck: We didn't talk about it in this keynote, but it's progressing. Fusion is a key part of that strategy. It's an IP block plus a chiplet that allows CPUs like x86 to talk across NVLink to our GPUs, or even other accelerators. We've announced multiple partnerships including Intel, and that is definitely progressing. It takes a little while to integrate at the silicon level. Obviously, it's pretty intimate integration. But I think we'll see some more announcements about that shortly. Journalist 4: Is that partnership going to involve implementing Nvidia IP on Intel process technology? And if so, who's going to be doing the lifting there? Ian Buck: There's a separation between the manufacturing of who builds the chip or chiplets from the IP integration. The integration I talk about is the IP hooking into the fabric of the processor. This [the Vera module] is actually multiple chiplets. You've got multiple I/O dies, memory interface tiles, as well as the core. If you look at the right angle, you can see one, two, three, four, five, six pieces of silicon come together. So who builds which piece, in which factory, and who does the integration, that's up to the partners. It'll be different for each integration. Journalist 2: We asked Jensen about that, and he said, look, our bits will be coming from TSMC, the Intel stuff will be coming from wherever they choose to get it. Journalist 4: I think we're trying to determine, is this a toe in the water to develop Nvidia IP on Intel process technology? I asked Jensen yesterday, and he said he's not excited. Ian Buck: Obviously those questions are his [Jensen's] domain. He's a good person to be asking about those questions. 
CPX is still a good idea, says Buck Journalist 4: Looking at the disaggregated architecture that you've implemented with LPX, it does strike me as a situation where it almost makes more sense to pair CPX with LPX racks rather than relying on an HBM-based product like Rubin. Ian Buck: CPX is still a good idea. It is the opportunity to improve token throughput, to get to that next tier of agents talking to agents that need to run a 1 trillion, 2 trillion parameter model with 400,000 to 500,000 KV input context at token rates of about 1,000 tokens per second, because there's no human in the loop. Input tokens do impact decode speed [...] so 400,000 tokens of context significantly changes the token rate. When we talk about pivoting from CPX to LPU, that's where the focus was. Right now there's a limit to how many chips [...] we want to do this this year. We want to do this with Vera Rubin. Just because of that effort, this will help those agentic AI frontier labs be able to take that level of intelligence to market. The volume AI market is offline inference, non-reasoning chatbots, recommendation systems, reasoning chatbots, multimodal, deep research. This [LPX] will not add value to all of those. Everything can be served on [Vera Rubin NVL72]. But that next tier is super important as we turn the corner, and it was important to make sure we had that brought to market this year. CPX is an optimization, it's still a good idea [and] it would help break down the cost of the pre-fill stage, but using these GPUs for the pre-fill portion of the workload is sufficient right now. LPX paired with Vera Rubin Journalist 4: If CPX is not coming until 2027, but Vera as a standalone is available sooner than that, is the Vera CPU going to be available sooner [unintelligible] will there be something that doesn't use AI to compare it with before 2027? Ian Buck: The LPU racks, Groq, they would run the whole model on the LPU racks alone. That capability exists. 
But the challenge with doing that is you had to feed not only the entire model, but all of the KV cache and all of the multiple queries on an SRAM chip that only has 500 megabytes. This [Vera Rubin GPU] has 280 gigabytes. So as models got bigger and contexts got larger, and you just had to keep all of that state around, as well as do all that attention math, it gets costly to have that many LPUs run a trillion-parameter model with the weights plus KV cache. It didn't need to be paired with anything. But it was very expensive. And Jensen showed that in the chart as well. You could get to 1,000 tokens per second, but the economics of doing that with that many chips just don't work. It has nothing to do with prefill. Pre-fill is just step one, how quickly can you get to your first token. After you've done that, there's pre-fill, GPUs, or whatever you're using in pre-fill. It doesn't matter. Your token rate is all about the number of processors you're using to generate every token after that. So it's not as simple as that. If you just did [Groq 3] LPX, you would need a lot of chips because of all that context. By combining the LPX with Vera Rubin, we don't need all that. We just do on the LPU what it's good at, which is basically the memory bandwidth, seven times faster than HBM. That lets the mixture-of-experts layers that are inside each expert group run here. The whole rest of the model, all the attention math, can run on the GPUs. So instead of dozens of racks of LPX, we can deliver that level of performance with just two racks of LPX and one rack of Vera Rubin. And as a result, the token rate gets to 1,000 tokens per second, but the economics go back to the sweet spot. Tokens will be higher value for sure, tens or hundreds of tokens per second rather than thousands. And you can also deploy at data center scale to serve a market. Building it once, serving a few customers in a highly constrained environment is nice, but that doesn't create a market. 
You have to build an architecture that, by combining LPX with Vera Rubin at one-to-one, or one-to-two, or maybe one-to-four rack ratios, can activate a market to deploy a 100-megawatt data center, a 500-megawatt, a gigawatt data center and serve those models economically. Journalist 4: And just to be clear, all those benefits you're talking about are on the decode side, after we're over the pre-fill hump? Ian Buck: Pre-fill [...] it's just the first token. How long does it take to get the first token? That's all CPX was trying to optimize. It's an important problem, but you can solve it with existing hardware. You can solve it with NVL72, with the older architectures. We can reduce the time to first token, and we can also solve it today by just adding a few more [...] it parallelizes very easily. But it's just the first token. [LPU decode] will increase the speed of every token after that. LPX chip-to-chip Journalist 3: I was wondering if you could talk a little bit more about how the LPX is going to connect to other chips, both in the Nvidia ecosystem and outside the Nvidia ecosystem? What about working with CPUs that other companies might make or that customers might procure from elsewhere? Ian Buck: When we licensed the IP, obviously there was limited stuff that we could change. But there were some last-minute changes that we were able to make to bring it to market. So this is the version [Groq 3/LP30], which is almost largely what it was. We're still using the chip-to-chip signaling that was already there. Journalist 4: So there's no Nvidia NVLink chip-to-chip on it yet. Ian Buck: Not yet. As the roadmap shows, it's coming with LP40 in the next generation. That's when we'll add the NVLink interconnect. On the next version, we'll also rev the compute side, and we'll add FP4 capabilities and all the Tensor Core math stuff that we have in our GPUs. Scale-up rack-to-rack Journalist 1: Can you walk us through how scale-up rack-to-rack works? 
Ian Buck: [Pointing to the Vera Rubin module] This is a Vera Rubin. I have two Rubin GPUs here, one Vera CPU. These are the NVLink connections. This is purposely that close. This stuff is flying. The bandwidth in this direction [NVLink] is between 10x to 20x more, in terabytes per second, than in this direction [PCIe]. Here, we just use the same connector, but we have multiple lanes of PCIe connected to networking, multiple NICs, storage planes, or other systems. The significance between the amount of bandwidth [unintelligible] this way... you need that much bandwidth to have all GPUs within one rack really operating as one. The main use of scale-up is parallelism, taking the most compute-intensive part of the computation and doing tensor parallelism across all the GPUs. When the world went to mixture of experts, where every layer has many experts [unintelligible], Kimi K2 has over 200 experts per layer but only activates eight of them. Imagine using your entire brain to do two plus two. All those experts have to talk to all those experts extremely fast. That's why NVLink is so fast and why we have a dedicated NVSwitch, a lot of them in the rack, purposely in the middle of the racks so the signal is super fast. We do it all in copper. You'll see the copper cables in the back. There's over 5,000 of them, because short-run copper is both fast and cheap. There's no retimers. It's also the lowest power. I don't have to drive a retimer or a transceiver or an optical fiber. One of the real reasons we went to liquid cooling was not just to get GPU performance, but to connect as many GPUs together in copper so that we could provide scale-up without exploding the cost. You could build 72 GPUs all with fiber and that many transceivers, but it's expensive and would consume a lot of power. A transceiver can consume significant power just slamming the laser on and off. This generation, we're all in copper. Jensen did talk about going beyond 72 GPUs per rack. 
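Buck's mixture-of-experts remark (many experts per layer, only eight activated) can be sketched as a generic top-k router. This is a minimal illustration, not the routing used by Kimi K2 or any Nvidia software: the expert count of 256 is a hypothetical stand-in for "over 200", the scores are random, and real routers differ in details such as where the softmax is applied.

```python
import math
import random


def route_top_k(router_scores, k):
    """Return (expert_index, gate_weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    # Softmax over the selected experts only, so the gate weights sum to 1.
    peak = max(router_scores[i] for i in top)
    exps = [math.exp(router_scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


NUM_EXPERTS = 256    # hypothetical; the transcript only says "over 200"
ACTIVE_EXPERTS = 8   # per the transcript

rng = random.Random(0)  # fixed seed so the sketch is repeatable
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route_top_k(scores, ACTIVE_EXPERTS)
active_fraction = ACTIVE_EXPERTS / NUM_EXPERTS

print(f"{len(selected)} of {NUM_EXPERTS} experts active "
      f"({active_fraction:.1%} of the layer)")
```

Because each token may pick a different handful of experts, and those experts can sit on different GPUs, every layer turns into all-to-all traffic across the rack, which is the bandwidth demand Buck is describing.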
In the overall design of the NVSwitches, we're actually building an NVSwitch that has ports in the front. We can do a level two of NVLink and actually scale up the number of GPUs to 576. We have a prototype of that working with Grace Blackwell right now. The models today don't benefit from that, but the models tomorrow will. It's a chicken-and-egg relationship between what capability we have versus what the models can do. And it's important that we show we're doing that, so that next-generation models can take advantage of it and design for that future where we have two layers of NVLink scale-up. With the Kyber rack, we can densify further. We can put 144 GPUs in a single rack, again all in copper. And then we can even go a step further: with 144, we can scale to 576, and then double to 1,152. I really look forward to showing you that when we get there. Journalist 4: Could you clarify how optical connections intersect with the roadmap? If there's an optical-capable rack with Grace Blackwell in it, does that mean you're going to put co-packaged optics with that generation? Ian Buck: Rubin gets optical. And Ambulink is CPO [co-packaged optics]. Journalist 1: With LP30 only supporting FP8, are you looking at on-the-fly dequantization, or do you need to run everything in FP8? Ian Buck: You don't need to run everything in FP8. Today's FP4, NVFP4, is done layer by layer, or actually block by block. When you go and look at Nvidia-optimized versions of all these models, you'll see that some of the math is FP16, FP8, and FP4. We can mix and match. The way the engineers do it is they explore the space and then they run both hand-coded and AI-generated kernels to try all the different combinations. We then run that on a fleet of GPUs that we've rented back from the clouds to explore the space to make sure it's performant and accurate. We also test to make sure the accuracy didn't fall off or drop to a point where it's no longer valuable. 
One big data point that's kind of fun: in October to January, the team was optimizing specifically focused on DeepSeek and DeepSeek-like models. They got that 4x uplift in just four months. Same GPUs, all the ones that everyone's already bought, the whole install base 4x faster. To do that, they actually ran about 250 simulations and then about 1.2 million GPU hours. We had all sorts of ideas exploring the entire space across a massive fleet of GPUs for four months to get those results. There is still more to come. Software is an untold story here. People like to say who's got the faster chip, and I'm like, who's got the better software ecosystem? That's why it's so hard to benchmark these things, because the whole stack end-to-end matters. How efficiently all of your different chips work together. We need six chips today, seven chips tomorrow to make all this stuff actually get performance. And the combinatorial optimization space is massive. We've got 400 engineers that work on that, and it came to 1.2 million GPU hours.

[3]

Nvidia To Upgrade AI Chatbot Performance With New 'LPU' Chip

To improve chatbot performance, Nvidia is going to sell a new kind of processor called an LPU, which has been optimized to run large language models. The "Nvidia Groq 3 LPU" chip was among 7 upcoming chips Nvidia touted at the company's annual GTC event, where it pitched the AI industry on why Nvidia's chips continue to lead. The LPU or Language Processing Unit comes from Nvidia's deal this past December to license technology from a California AI company called Groq. Founded in 2016, Groq and its earlier LPU chips have been specifically designed for large language models to offer faster speeds and energy efficiency, creating an alternative to Nvidia's enterprise GPUs, which can be used for a wider range of AI workloads. Nvidia now wants to pair the newly-revealed Groq 3 LPU with the rest of the company's next-generation AI chips, dubbed the "Vera Rubin" platform, which include the upcoming Rubin GPU and Vera CPU tech for data centers. Groq's LPU chips use even faster SRAM (static RAM), instead of HBM (high-bandwidth memory) typically found on Nvidia's GPUs. But on the downside, Groq's LPUs can only offer "hundreds of megabytes" in SRAM, whereas HBM memory can span over a hundred gigabytes or more per chip. It's why a single Groq 3 LPU only contains 500MB of SRAM while Nvidia's upcoming Rubin GPU will feature 288GB of HBM4 memory. To compensate for the lower memory size, Nvidia is preparing to sell large batches of LPUs to work alongside the rest of its data center chips, giving AI companies a way to squeeze out even more performance. Nvidia noted "the LPX rack with 256 LPU processors features 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth. Deployed with Vera Rubin NVL72 (server unit), Rubin GPUs and LPUs boost decode by jointly computing every layer of the AI model for every output Token." 
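The rack-level figures in Nvidia's statement follow from the per-chip numbers. The short check below uses only values quoted in the article; treating megabytes and gigabytes as decimal units, and dividing the scale-up bandwidth evenly across chips, are assumptions made for illustration.

```python
LPUS_PER_RACK = 256
SRAM_PER_LPU_MB = 500          # per-chip SRAM quoted in the article
RACK_SCALE_UP_TB_PER_S = 640   # rack-level scale-up bandwidth quoted by Nvidia

# Aggregate on-chip SRAM across the rack (decimal MB -> GB assumed).
rack_sram_gb = LPUS_PER_RACK * SRAM_PER_LPU_MB / 1000

# Even per-chip share of the rack's scale-up bandwidth (an assumption).
scale_up_per_lpu_tb_per_s = RACK_SCALE_UP_TB_PER_S / LPUS_PER_RACK

print(f"Rack SRAM: {rack_sram_gb:.0f} GB")
print(f"Scale-up bandwidth per LPU: {scale_up_per_lpu_tb_per_s} TB/s")
```

So a full rack of 256 LPUs holds less on-chip memory than a single 288GB Rubin GPU, which is why the LPUs are sold in large batches rather than one at a time.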
A data center could thus harness both the LPUs and Nvidia's GPUs, divvying up the AI workloads between them to increase efficiency. Nvidia's CEO Jensen Huang said the combined approach excels at helping AI companies boost performance for longer prompts. Combined, the LPUs and Rubin GPUs also promise to offer an up to 35x increase in throughput when running a large language model reaching 1 trillion parameters in size, according to Nvidia's benchmarks. "We're in production with the Groq chip," Huang said, adding that it'll likely ship in Q3. Nvidia has contracted Samsung to manufacture the LPU. One analyst already expects Nvidia to ship out 4 to 5 million LPUs through 2026 and 2027. The new LPU and Vera Rubin systems will likely cost tens of thousands of dollars per chip, putting them far out of reach of consumers. Instead, expect the biggest AI companies, including OpenAI, Anthropic, and Meta, to adopt the technologies, meaning they could power your chatbot queries or image generation requests in the near future. At GTC, Nvidia also talked up Vera Rubin, which the company has detailed before, including at January's CES, where it revealed the Rubin chips were in "full production." Nvidia plans on shipping the Vera Rubin-related chips, including the new LPU chip, in this year's second half.

[4]

A closer look at Nvidia's Groq-powered LPX rack systems

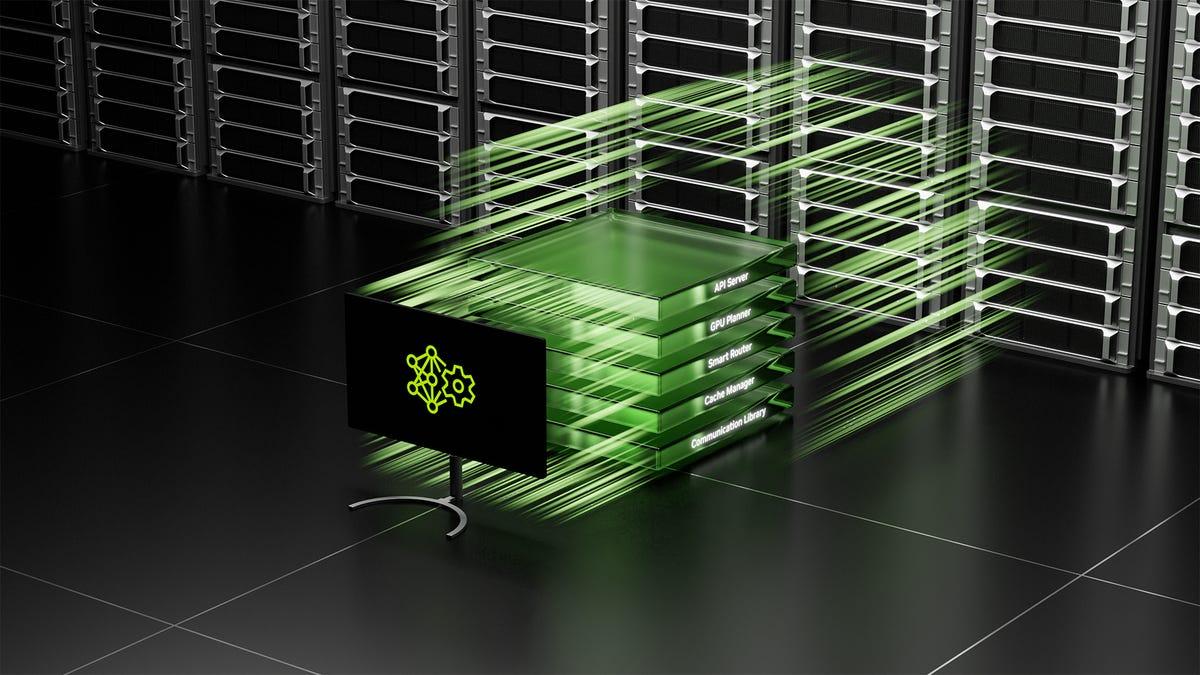

From LPUs and GPUs to CPUs and switches, everything you need to know about Nvidia's latest kit. At Nvidia's GTC conference this week, CEO Jensen Huang finally addressed a $20 billion question he's dodged for months: Why spend so much to license AI chip startup Groq's tech and hire away its engineers rather than build it themselves? As we've said before, if Nvidia wanted to build an SRAM-heavy inference accelerator, it didn't need to buy Groq to do it. The company's newly announced Groq 3 LPX racks, which pack 256 LP30 language processing units (LPUs) into a single system, show time-to-market was the reason Nvidia bought rather than built. We're told the chip is based on Groq's second-gen LPU tech with a handful of last-minute tweaks made just before taping out at Samsung's fabs. The chip doesn't use Nvidia's proprietary NVLink interconnect, it lacks NVFP4 hardware support, and it isn't CUDA-compatible at launch. We can therefore conclude that the $20 billion paid to acquire Groq's intellectual property rights and engineering staff was an opportunity cost to get the chips out the door and into customers' hands this year. One of the defining characteristics of SRAM-heavy architectures from Groq and its rival Cerebras is that they are very fast when running LLM inferencing workloads, routinely achieving generation rates exceeding 500 and even 1000 tokens a second. The faster Nvidia can generate tokens, the faster code assistants and AI agents can act. But this kind of speed also opens the door to what Huang describes as test-time scaling. The idea is that by letting "reasoning" models generate more "thinking" tokens, they can produce smarter, more accurate results. So, the faster you can generate tokens, the less of a latency penalty test-time scaling incurs. On stage at GTC, Huang suggested that providers offering this kind of high-performance, low-latency inference could eventually charge as much as $150 per million tokens for the capability. 
Unfortunately for Nvidia, GPUs are great for batch processing but don't scale nearly as efficiently as per-user-output speeds increase. At least not on their own. By combining its GPUs and Groq's LPU tech, Nvidia aims to deliver the best of both worlds: an inference platform that scales much more efficiently at higher tokens per second per user. Nvidia is also under some pressure to maintain its dominance of the AI infrastructure market as rival chip designers like AMD close the gap on hardware and software. Last week, Amazon and Cerebras announced a collaboration to pair AWS' Trainium-3 accelerators with the latter's wafer-scale accelerators for many of the same reasons Nvidia built LPX. Of course, AWS has also announced plans to deploy more than a million Nvidia GPUs in addition to fielding Nvidia-Groq LPUs, so the cloud giant hasn't suddenly started picking sides. The LP30 is very different from Nvidia's GPUs. It's built by Samsung Electronics rather than TSMC and uses only on-chip SRAM. It also ditches the conventional Von Neumann architecture for another commonly referred to as data flow. Rather than fetching instructions from memory, decoding, executing, and then writing that back to a register, data flow architectures process data as it's streamed through the chip. The processor's compute units don't have to wait for a bunch of load and store operations to shuffle data around, which, in theory results in higher achievable utilization. According to Nvidia, each LP30 can deliver 1.2 petaFLOPS of FP8 compute. But, as we mentioned earlier, support for 4-bit block floating point data types, like MX or NV FP4, won't arrive until the LP35 arrives sometime next year. That compute is tied to a relatively large pool of SRAM memory, which is orders of magnitude faster than the high-bandwidth memory (HBM) found in GPUs today, but is also incredibly inefficient in terms of space required. Each LPU only has enough die space for 500 MB of on-chip memory. 
For comparison, just one of the eight HBM4 modules on Nvidia's Rubin GPUs contains 36GB of memory. What the LP30 lacks in capacity, it more than makes up for in bandwidth, achieving speeds up to 150 TB/s - nearly 7 times more than Nvidia's Rubin accelerators. This makes LPUs ideal for the auto-regressive decode phase of the inference pipeline, during which all of a model's active parameters need to be streamed from memory for every token generated. Of course, to do that, you need to fit the model in memory, which is no easy task for the trillion-parameter models Nvidia is targeting. For models this large, multiple racks are required. Because of this, LP30 bristles with interconnects. Each chip features 96 of them - specifically, 112 Gbps SerDes - totalling 2.5 TB/s of bidirectional bandwidth. Each LPX rack is equipped with 256 LPUs. Those are spread across 32 compute trays, each containing eight LPUs, some fabric expansion logic and DRAM, and the host CPU and a BlueField-4 data processing unit (DPU). Some of that network connectivity is funnelled out the back of these blades into a new copper Ethernet backplane Nvidia calls the Oberon ETL256, while the remainder is directed out the front of the system enabling multiple NVL72 and LPX racks to be stitched together. While it's entirely possible to run large language models (LLMs) entirely on an LPX cluster, that's not how Nvidia is positioning the product. Instead, one or more LPX racks is paired with a Vera-Rubin NVL72, which we discussed in more detail back when Nvidia showed it off in January, with various parts of the inference stack distributed across the GPUs and LPUs. Nvidia's reference design has a relatively small number of GPUs handling the compute-heavy prompt-processing (prefill) phase, while the bandwidth-intensive decode phase, where tokens are generated, is split between a separate pool of GPUs and the LPUs. 
During this decode phase, Nvidia takes advantage of GPUs' comparatively large memory and compute capacity to handle the attention operations, while the bandwidth-constrained feed forward neural network ops are offloaded to LPUs sitting in the LPX rack over Ethernet. Nvidia's Dynamo disaggregated inference platform handles orchestration for all of this. The whole system requires a lot of LPUs. The exact ratio of GPUs to LPUs depends on the workload. Tasks requiring extremely large contexts, batch sizes, or concurrency may need a larger pool of GPUs. A general-purpose chatbot might run well on a single rack. This is because longer context windows require more memory for the key-value (KV) caches that store model state (think short-term memory) and attention operations. By keeping these on the GPU, Nvidia is able to get by with fewer LPUs. The actual number of required LPUs is directly proportional to the size of a model. For a trillion-parameter model, that translates to between four and eight LPX racks, or 1,024 to 2,048 LPUs, depending on whether the weights are stored in SRAM at 4-bit or 8-bit precision. If you're not a hyperscaler, neocloud, or model dev, LPX is probably not for you. The sheer number of LPUs required to serve large open models will likely put Nvidia's LPX platform out of reach for most enterprises. Speaking to press ahead of this week's keynote, Ian Buck, VP of Hyperscale and HPC at Nvidia, said the company is focusing primarily on model builders and service providers that need to serve trillion-plus-parameter models with token rates exceeding 500 to 1,000 a second. Having said that, in a technical blog, Nvidia presented another use case for the LPUs as a speculative decode accelerator, something we suggested the company might do back in December. Speculative decoding is a method for juicing inference performance by using a smaller, more performant "draft" model to predict the outputs of a larger model. When it works, the technique can speed token generation by anywhere from 2x to 3x. 
And since the approach falls back to the larger model anytime it guesses wrong, there's no loss in quality or accuracy. Nvidia proposes hosting the draft model on LPUs and the larger target model on a set of GPUs. Since draft models tend to be fairly small, this might present an opportunity for Nvidia to sell LPUs to enterprise customers. You may be scratching your head, wondering "wasn't there supposed to be some kind of special Rubin chip optimized for large-context prefill processing?" You're not hallucinating. Back at Computex last northern spring, Nvidia unveiled the Rubin CPX, a version of Rubin that used slower, less expensive GDDR7 memory to speed up the time to first token - how long users or agents have to wait for the model to start generating an output - when working with large inputs. The idea was that Rubin CPX could cut down on wait times for applications that might involve processing large quantities of documents, freeing up the non-CPX Rubins, and speeding up overall decode times. However, by early 2026, Nvidia stopped mentioning CPX. This week, we learned the project had been put on the back burner so Nvidia can prioritize LPX. It's important to note that LPX is not a replacement for CPX. The two platforms were designed to accelerate opposite ends of the inference pipeline: LPUs are designed to speed up token generation during the decode phase, while CPX was intended to cut the time users or agents spent waiting for the model to respond during prefill. Nvidia hasn't given up on the concept either. Ian Buck, VP of Hyperscale and HPC at Nvidia, told press that CPX is still a good idea and that we may see the concept resurface in future generations. While LPX is the most interesting addition to Nvidia's rack-scale lineup, it's not the only one. At GTC, Nvidia also unveiled three more rack-scale designs, one each for networking, storage, and agentic compute. 
We looked at Nvidia's new Vera CPU racks in more detail earlier this week, but the system uses the same ETL network backplane as its LPX racks and HGX systems, and features 32 compute blades, each with eight 88-core Vera CPUs and up to 12 TB of LPDDR5X SOCAMM memory modules on board. In addition to serving as the host processor on Nvidia's latest generation products, the Vera CPU rack is intended as an execution environment for agentic systems, like OpenClaw, that require high-memory bandwidth and single-threaded performance. Alongside the CPU racks is a new storage rack called the BlueField-4 STX. As the name suggests, the reference design combines Nvidia's BlueField-4 data processing units (DPUs, aka SmartNICs) with a Vera CPU and ConnectX-9 NICs. Nvidia intends this offering to serve as a KV-cache offload target. Any time an LLM processes a prompt, it generates KV caches that store the model state in vectors. By keeping those pre-computed vectors in GPU or system memory, or flash storage, only new tokens have to be computed while repeating ones can be recycled from cache. Earlier this year, Nvidia showed off its context-memory storage platform, which is meant to automate the offload of KV caches to compatible storage targets. The AI infrastructure giant claims that this approach can boost token rates by up to 5x by freeing up GPU resources to handle other elements of the inference pipeline. Finally, there's the Spectrum-6 SPX network rack, which also leverages the MGX ETL reference design to simplify cabling of Spectrum-X and Quantum-X switches. Together, these rack systems form a sort of assembly line. Think of it this way: Vera CPU racks running AI agents make API calls to models running Vera-Rubin NVL72 systems with Groq LPX decode accelerators. KV caches generated by these agents are offloaded to STX storage, and everything is connected to one another by SPX racks packed with Spectrum or Quantum switches. 
And as long as the AI boom continues, Nvidia keeps printing money. ®
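The speculative decoding scheme described earlier in this piece, in which a small draft model guesses ahead and the larger target model has the final say, can be illustrated with a minimal greedy sketch. Everything here is hypothetical: `toy_target` and `toy_draft` are stand-in functions rather than real models, and the code says nothing about how Nvidia splits the work between LPUs and GPUs. In a real system the target would check all drafted tokens in one batched pass, which is where any speedup comes from; this sketch checks them one at a time for clarity.

```python
from typing import Callable, List

# A "model" here is any function that maps a token prefix to the next token.
Model = Callable[[List[int]], int]


def greedy_decode(model: Model, prompt: List[int], max_new_tokens: int) -> List[int]:
    """Plain one-token-at-a-time decoding, used as the reference."""
    out = list(prompt)
    for _ in range(max_new_tokens):
        out.append(model(out))
    return out


def speculative_decode(target: Model, draft: Model, prompt: List[int],
                       max_new_tokens: int, k: int = 4) -> List[int]:
    """Greedy speculative decoding: the draft proposes, the target verifies."""
    out = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(out) < goal:
        # 1. The draft model guesses up to k tokens ahead.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(out))):
            proposal.append(draft(out + proposal))
        # 2. The target checks each guess in order.
        all_accepted = True
        for tok in proposal:
            expected = target(out)
            if expected != tok:
                out.append(expected)  # wrong guess: fall back to the target's token
                all_accepted = False
                break
            out.append(tok)           # correct guess: keep the drafted token
        # 3. If every guess was right, the target contributes one extra token.
        if all_accepted and len(out) < goal:
            out.append(target(out))
    return out


def toy_target(prefix: List[int]) -> int:
    """Hypothetical stand-in for the large model."""
    return (3 * prefix[-1] + 1) % 7


def toy_draft(prefix: List[int]) -> int:
    """Hypothetical stand-in for the draft model: agrees except after a 5."""
    return 0 if prefix[-1] == 5 else toy_target(prefix)
```

Because every token that reaches the output is either confirmed or produced by the target, the result matches what the target would have generated on its own, which is why the technique costs nothing in accuracy.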

[5]

Nvidia Groq 3 LPU and Groq LPX racks join Rubin platform at GTC -- SRAM-packed accelerator boosts 'every layer of the AI model on every token'

Nvidia's Vera Rubin platform is poised to massively power up the next generation of AI data centers, or "factories," as CEO Jensen Huang calls them, when those systems start arriving later this year. Today, during his GTC keynote, Huang revealed how Nvidia is using the IP it acquired from Groq last year to expand Rubin's capabilities. The Rubin platform now includes a new chip, the Nvidia Groq 3 LPU, an inference accelerator that bolsters these systems' ability to deliver tokens in volume and at low latency for high interactivity at the leading edge of AI models. Recall that the Rubin platform already includes six chips from which Nvidia builds up rack-scale systems and scales them out into AI factories: the Rubin GPU itself, the Vera CPU, NVLink 6 scale-up switches, the ConnectX 9 smart NIC, the Bluefield 4 data processing unit, and the Spectrum-X scale-out switch with co-packaged optics. The Groq 3 LPU becomes another building block for Rubin at scale. Unlike most AI accelerators, which rely on HBM as their working memory tier, each Groq 3 LPU incorporates 500 MB of SRAM, the same memory used for ultra-high-speed caches on CPUs and GPUs. That's paltry compared to the vastly more capacious 288GB of HBM4 on each Rubin GPU, but as you would expect, that SRAM delivers 150 TB/s of bandwidth, far more than the 22 TB/s of that same HBM. For bandwidth-sensitive AI decode operations, the massive bandwidth boost of the Groq 3 chip offers tantalizing benefits for inference applications. In turn, Nvidia will build up Groq 3 LPX racks comprising 256 Groq 3 LPUs. That rack offers 128GB of SRAM with 40 PB/s of bandwidth for inference acceleration, and it joins those chips together with a dedicated scale-up interface of 640 TB/s per rack. 
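The rack-level figures above follow almost directly from the per-chip numbers, as a quick back-of-the-envelope check shows. This is a sketch using only the quoted figures; the function names are our own, and the results are simple multiplications rather than measured performance.

```python
# Per-chip and per-rack figures as quoted in the article.
LPUS_PER_RACK = 256
SRAM_PER_LPU_GB = 0.5            # 500 MB of SRAM per Groq 3 LPU
SRAM_BW_PER_LPU_TBPS = 150.0     # TB/s of SRAM bandwidth per LPU
RUBIN_HBM_BW_TBPS = 22.0         # TB/s of HBM4 bandwidth per Rubin GPU
RACK_SCALE_UP_TBPS = 640.0       # TB/s of scale-up bandwidth per rack


def rack_totals(lpus: int = LPUS_PER_RACK) -> dict:
    """Aggregate the per-chip figures across one LPX rack."""
    return {
        "sram_gb": lpus * SRAM_PER_LPU_GB,
        "sram_bw_pbps": lpus * SRAM_BW_PER_LPU_TBPS / 1000.0,
        "scale_up_tbps_per_lpu": RACK_SCALE_UP_TBPS / lpus,
    }


def bandwidth_ratio() -> float:
    """How much faster one LPU's SRAM is than one Rubin GPU's HBM4."""
    return SRAM_BW_PER_LPU_TBPS / RUBIN_HBM_BW_TBPS


if __name__ == "__main__":
    print(rack_totals())
    print(round(bandwidth_ratio(), 1))
```

Multiplying out gives 128 GB of SRAM and 38.4 PB/s of aggregate SRAM bandwidth, which the quoted 40 PB/s presumably rounds up, while the 640 TB/s scale-up figure implies a 2.5 TB/s share per chip. The per-chip bandwidth advantage over Rubin's HBM4 works out to a little under 7x.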
Nvidia envisions Groq LPX as a co-processor for Rubin that will boost decode performance at "every layer of the AI model on every token," according to Nvidia hyperscale VP Ian Buck, and it positions Rubin to serve the next frontier of AI: multi-agent systems that need to deliver interactive performance while inferencing models of trillions of parameters with context windows of millions of tokens. As the AI agents in those multi-agent systems begin talking more and more to other AIs rather than humans looking at chatbot windows, the frontier for responsiveness requirements also shifts. What might seem like a reasonable rate of tokens generated per second for a human is glacial for an AI agent. In the future of multi-agent systems that Buck describes, the combination of Rubin GPUs and Groq LPUs moves us from a world where 100 tokens per second is a reasonable throughput to one of 1500 TPS or more for AI agent intercommunication. The addition of the Groq 3 LPU to the Rubin arsenal could help the platform fend off challengers in the low-latency inference frontier. Cerebras, whose wafer-scale engines fuse massive amounts of SRAM and compute for low-latency inference with advanced models, has frequently needled Nvidia regarding the perceived disadvantages of its GPUs for that purpose, and customers as large as OpenAI have signed up for Cerebras capacity to serve some of their state-of-the-art models with the favorable latency characteristics of that platform. Buck also hinted that the Groq 3 LPU might lead to a reduced role for the Rubin CPX inference accelerator, saying that the company is currently focused on integrating the Groq 3 LPX rack with Rubin. While he didn't offer more details, that focus shift would make sense in today's memory-constricted world, since the two chips are meant to offer similar enhancements for inference performance and the Groq LPU doesn't require the large amount of GDDR7 memory that each Rubin CPX module does. 
We're on the ground at GTC this week, and we'll be exploring what the fusion of Groq and Nvidia IP means for the future of AI inference through conversations and sessions at the event. Stay tuned.

[6]

Nvidia's next AI chip may move beyond the all-purpose GPU

Forward-looking: Nvidia is poised to move beyond the graphics processors that powered its rise to dominance. Nvidia GTC kicks off this week - now branded more explicitly as an "AI conference" - where chief executive Jensen Huang is expected to introduce a new processor designed specifically for inference, according to people familiar with the company's plans. The shift would mark a rare departure from the philosophy that has guided Nvidia for most of its 33-year history: that a single class of GPU can handle every stage of AI computing, from training massive models to delivering fast, real-time responses. As competition intensifies and demand grows for quicker, more efficient AI output, the company is beginning to explore a more specialized approach. The upcoming chip is said to be based on technology from Groq, the startup Nvidia acquired late last year in a transaction valued at roughly $20 billion. The acquisition combined a licensing partnership with the recruitment of Groq's core engineering team, including founder and former Google chip designer Jonathan Ross. Groq's hardware - known as language processing units, or LPUs - was originally designed to accelerate the inference side of machine learning, focusing on rapid reasoning and text-generation tasks with lower latency than conventional GPUs. If unveiled as expected, the processor would be the first major product to emerge from that acquisition and a signal that Nvidia intends to expand its silicon lineup beyond its GPU-centric systems. According to people briefed on Nvidia's plans, the Groq-based processor will be designed to operate alongside the company's next-generation Vera Rubin GPU. Together, the two chips are expected to anchor a new platform aimed at handling an increasingly diverse mix of AI workloads across modern data centers. 
The move comes as Nvidia's grip on the AI hardware market faces pressure from several directions. Large customers such as Google and Meta are investing heavily in their own custom chips, while startups are developing specialized processors aimed at reducing cost and power consumption. Earlier this week, Meta unveiled an internal lineup of four inference-focused chips, reinforcing the broader shift toward heterogeneous AI infrastructure. For Nvidia, the challenge is both technical and logistical. The company's Blackwell and Rubin-class GPUs rely on high-bandwidth memory (HBM) to process the massive datasets behind modern AI models. But HBM has become scarce and increasingly expensive, as suppliers including SK Hynix and Micron struggle to keep pace with surging demand. To work around that bottleneck, the new Groq-derived processor is expected to use static random-access memory (SRAM) - a faster, more accessible, though typically smaller-capacity form of memory. SRAM's low latency makes it particularly suited for inference based on reasoning rather than the bulk computations required for training. Many analysts expect inference to dominate AI infrastructure spending within the next few years. Bank of America estimates that by 2030 - when the AI data-center market could reach roughly $1.2 trillion - inference will represent about 75% of total spending, up from roughly half in 2025. In a recent note, the firm said it expects Nvidia to unveil a "broadened AI portfolio," including an SRAM-based processor derived from its Groq acquisition. The pivot toward inference hardware also addresses a practical constraint facing many enterprises deploying AI today. A large share of existing data centers were never designed to support the liquid-cooling systems required by Nvidia's newest high-performance GPUs. "Many enterprises want to do inference using their existing data centers, but the vast majority of today's data centers... 
can't support the latest liquid-cooled GPUs," said June Paik, chief executive of AI chip startup FuriosaAI. That flexibility could make Nvidia's new chips appealing to companies reluctant to rebuild facilities from the ground up. Ben Bajarin, analyst at Creative Strategies, said the market is moving toward a more diverse mix of AI accelerators. "The future of the data center is not going to be a one-size-fits-all world," he said.

[7]

Nvidia slaps Groq into new LPX racks for faster AI response

GPUzilla's $20B acquihire paves the way to AI agents that hallucinate faster than ever GTC Nvidia will use Groq's language processing units (LPUs), a technology it paid $20 billion for, to boost the inference performance of its newly-announced Vera Rubin rack systems, CEO Jensen Huang revealed during his GTC keynote on Monday. Using this technology, the GPU giant can now serve massive trillion parameter large language models (LLMs) at hundreds or even thousands of tokens a second per user, Ian Buck, VP of Hyperscale and HPC at Nvidia, told press ahead of Huang's keynote Sunday. Until now, ultra-low latency inference has been dominated by a handful of boutique chip slingers like Cerebras, SambaNova, and of course, Groq, the latter of which Nvidia all but absorbed as part of an acquihire late last year. Demand for these so-called premium tokens has grown over the past year. OpenAI is using Cerebras' dinner-plate sized accelerators to achieve nearly instantaneous code generation for models like GPT-5.3 Codex-Spark. By combining its GPUs with Groq's LPUs, Nvidia wagers inference providers will be able to charge as much as $45 per million tokens generated. To put that in perspective, OpenAI currently charges about $15 per million output tokens for API access to its top GPT-5.4 model. To be clear, LPUs won't replace Nvidia's GPUs but rather augment them. LLM inference encompasses two stages: the compute-heavy prefill phase in which the prompts are processed, and the bandwidth-heavy decode phase during which a response is generated. With up to 50 petaFLOPs each, Nvidia's newly announced Rubin GPUs aren't hurting for compute, but with 22 TB/s of HBM4 memory bandwidth, Groq's latest chip tech is nearly 7x faster, achieving 150 TB/s apiece. This makes Groq's LPU an ideal decode accelerator. Nvidia plans to cram 256 of the chips into a new LPX rack system that'll be connected via a custom Spectrum-X interconnect to a neighboring Vera-Rubin NVL72 rack system. 
The GPUs will handle the compute-intensive prompt processing, while the LPUs spew out tokens. The GPU giant needs that many chips because, while SRAM may be fast, the chips are neither capacious nor compute-dense. Each Groq 3 LPU is capable of 1.2 petaFLOPS of FP8 and contains 500 MB of on board memory. That's about 1/500th of the capacity of Nvidia's Rubin GPU. "The LPU is optimized strictly for that extreme, low-latency token generation, offering token rates in the 1000s of tokens per second. The trade off, of course, is that you need many chips in order to perform that kind of performance," Buck explained. "The tokens per second per chip, is actually quite low." In other words, to do anything interesting, Nvidia is going to need a lot of them. Even with 256 chips per rack, that's only 128 GB of ultra fast memory, which is nowhere near enough for trillion-parameter models like Kimi K2. At 4-bit precision you'd need at least 512 GB of memory or about a thousand LPUs to hold a 1 trillion-parameter model in memory. Nvidia says multiple LPX racks can be ganged together to support these larger models. The integration of Groq's latest LPUs into Nvidia's LPX racks represents a bit of a course correction for the AI infrastructure magnate. Nvidia had previously announced a dedicated prefill processor called Rubin CPX at Computex last year. The basic idea was to use GDDR7-equipped Rubin CPX processors for prefill processing and HBM-equipped Rubin GPUs for decode. However, that project appears to have been abandoned in favor of Groq's LPU-based decode accelerators. "Integrating LPU and LPX into our written platform to optimize the decode, that's where we're focused right now," Buck said. Nvidia isn't the only one looking to fuse its compute-heavy AI accelerators to an SRAM heavy architecture like Groq's. On Friday, Amazon Web Services (AWS) announced a collaboration with Cerebras to develop a combined inference platform, not unlike Nvidia's Groq 3 LPX. 
In this case, the platform will use AWS' Trainium 3 accelerators for prompt processing and Cerebras' WSE-3 ASICs, each of which pack 44 GB of SRAM onto a wafer-sized chip, to generate low-latency tokens. Nvidia's Groq-based LPX systems are expected to ship alongside its Vera Rubin rack systems later this year, though it appears both access and software support may be somewhat limited. At least initially, Nvidia is focusing on model builders and service providers that need to serve trillion-plus parameter models with high token rates. Buck also notes that while Nvidia is using Groq's ASICs to accelerate its inference platform, they don't support its CUDA natively just yet. "There are no changes to CUDA at this time. We are leveraging the LPU as an accelerator to the CUDA that's running on the Vera NVL 72 platform," he explained. ®
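The memory arithmetic earlier in this piece, which puts a trillion-parameter model at roughly a thousand LPUs at 4-bit precision, can be reproduced with a rough sizing sketch. It counts weights only, uses decimal units (500 MB taken as 500,000,000 bytes), and ignores activations, KV caches, and any replication, so treat the results as lower bounds. The function name and defaults are our own.

```python
def size_deployment(params: int, bits_per_weight: int,
                    sram_bytes_per_lpu: int = 500_000_000,
                    lpus_per_rack: int = 256) -> tuple:
    """Return (weight_bytes, lpus, racks) needed to hold a model's weights in SRAM."""
    # Total bytes of weights, rounded up to a whole byte.
    weight_bytes = (params * bits_per_weight + 7) // 8
    # Ceiling division: a partly filled chip or rack still has to be installed.
    lpus = -(-weight_bytes // sram_bytes_per_lpu)
    racks = -(-lpus // lpus_per_rack)
    return weight_bytes, lpus, racks


if __name__ == "__main__":
    for bits in (4, 8):
        weight_bytes, lpus, racks = size_deployment(10**12, bits)
        print(f"{bits}-bit: {weight_bytes / 1e9:.0f} GB, {lpus} LPUs, {racks} racks")
```

For a model with 10^12 parameters this gives about 500 GB and 1,000 LPUs (four racks) at 4-bit, and about 1 TB and 2,000 LPUs (eight racks) at 8-bit.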

[8]

NVIDIA Vera Rubin Opens Agentic AI Frontier

Seven New Chips in Full Production to Scale the World's Largest AI Factories With Configurable AI Infrastructure Optimized for Every Phase of AI, From Pretraining, Post-Training and Test-Time Scaling to Agentic Inference News Summary: The NVIDIA Vera Rubin platform is opening the next AI frontier with: GTC -- NVIDIA today announced the NVIDIA Vera Rubin platform is opening the next frontier of agentic AI, with seven new chips now in full production to scale the world's largest AI factories. The platform brings together the NVIDIA Vera CPU, NVIDIA Rubin GPU, NVIDIA NVLink™ 6 Switch, NVIDIA ConnectX-9 SuperNIC, NVIDIA BlueField-4 DPU and NVIDIA Spectrum™-6 Ethernet switch, as well as the newly integrated NVIDIA Groq 3 LPU. Designed to operate together as one incredible AI supercomputer, the chips power every phase of AI -- from massive-scale pretraining, post-training and test-time scaling to real-time agentic inference. "Vera Rubin is a generational leap -- seven breakthrough chips, five racks, one giant supercomputer -- built to power every phase of AI," said Jensen Huang, founder and CEO of NVIDIA. "The agentic AI inflection point has arrived with Vera Rubin kicking off the greatest infrastructure buildout in history." "Enterprises and developers are using Claude for increasingly complex reasoning, agentic workflows and mission-critical decisions. That demands infrastructure that can keep pace," said Dario Amodei, CEO and cofounder of Anthropic. "NVIDIA's Vera Rubin platform gives us the compute, networking and system design to keep delivering while advancing the safety and reliability our customers depend on." "NVIDIA infrastructure is the foundation that lets us keep pushing the frontier of AI," said Sam Altman, CEO of OpenAI. "With NVIDIA Vera Rubin, we'll run more powerful models and agents at massive scale and deliver faster, more reliable systems to hundreds of millions of people." 
Shift to POD-Scale Systems AI infrastructure is rapidly evolving -- from discrete chips and standalone servers to fully integrated rack-scale systems, POD-scale deployments, AI factories and sovereign AI. These advances are driving dramatic gains in performance, improving cost efficiency for organizations of all sizes and across industries -- from startups and mid-sized businesses to public-private institutions and enterprises -- while helping democratize access to AI and improving energy efficiency to power the world's most demanding workloads. Through deep codesign across compute, networking and storage, supported by an ecosystem of more than 80 NVIDIA MGX ecosystem partners with a global supply chain, NVIDIA Vera Rubin offers the most extensive NVIDIA POD-scale platform -- a supercomputer where multiple racks purpose-built for AI work together as one massive, coherent system. NVIDIA Vera Rubin NVL72 Rack Integrating 72 Rubin GPUs and 36 Vera CPUs connected by NVLink 6, along with ConnectX-9 SuperNICs and BlueField-4 DPUs, Vera Rubin NVL72 delivers breakthrough efficiency -- training large mixture-of-experts models with one-fourth the number of GPUs compared with the NVIDIA Blackwell platform and achieving up to 10x higher inference throughput per watt at one-tenth the cost per token. Designed for hyperscale AI factories worldwide, NVL72 scales seamlessly with NVIDIA Quantum-X800 InfiniBand and Spectrum-X Ethernet to sustain high utilization across massive GPU clusters while reducing time to train and total cost of ownership. NVIDIA Vera CPU Rack Reinforcement learning and agentic AI workloads rely on large numbers of CPU-based environments to test and validate the results generated by models running on GPU systems. The NVIDIA Vera CPU Rack delivers dense, liquid-cooled infrastructure built on NVIDIA MGX, integrating 256 Vera CPUs to provide scalable, energy-efficient capacity with world-class single-threaded performance, unlocking agentic AI at scale. 
Integrated with Spectrum-X Ethernet networking, Vera CPU racks keep CPU environments tightly synchronized across the AI factory. Together with GPU compute racks, they provide the CPU foundation for large-scale agentic AI and reinforcement learning -- with Vera delivering results twice as efficiently and 50% faster than traditional CPUs. NVIDIA Groq 3 LPX Rack NVIDIA Groq 3 LPX marks a milestone in accelerated computing. Designed for the low-latency and large-context demands of agentic systems, LPX and Vera Rubin unite the extreme performance of both processors to deliver up to 35x higher inference throughput per megawatt and up to 10x more revenue opportunity for trillion-parameter models. At scale, a fleet of LPUs function as a giant single processor for fast, deterministic inference acceleration. The LPX rack with 256 LPU processors features 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth. Deployed with Vera Rubin NVL72, Rubin GPUs and LPUs boost decode by jointly computing every layer of the AI model for every output token. Optimized for trillion-parameter models and million-token context, the codesigned LPX architecture pairs with Vera Rubin to maximize efficiency across power, memory and compute. The additional throughput per watt and token performance unlocks a new tier of ultra-premium, trillion-parameter, million-context inference, expanding revenue opportunity for all AI providers. Fully liquid cooled and built on MGX infrastructure, LPX integrates seamlessly into next-generation Vera Rubin AI factories to be available in the second half of this year. NVIDIA BlueField-4 STX Storage Rack The NVIDIA BlueField-4 STX rack-scale system is an AI-native storage infrastructure that extends GPU memory seamlessly across the POD. 
Powered by BlueField-4 -- combining the NVIDIA Vera CPU and NVIDIA ConnectX-9 SuperNIC -- STX delivers a high-bandwidth shared layer optimized for storing and retrieving the massive key-value cache data generated by large language models and agentic AI workflows. NVIDIA DOCA Memos™ -- a new DOCA framework that supercharges BlueField-4 storage -- enables dedicated KV cache storage processing to boost inference throughput by up to 5x while significantly improving power efficiency compared with general-purpose storage architectures. The result is POD-wide context that delivers faster multi-turn interactions with AI agents, more scalable AI services and higher overall infrastructure utilization. "The NVIDIA BlueField-4 STX rack-scale context memory storage system will enable a critical performance boost needed to exponentially scale our agentic AI efforts," said Timothée Lacroix, cofounder and chief technology officer of Mistral AI. "By delivering a new storage tier purpose-built for AI agents memory, STX is ideally positioned to ensure that our models can maintain coherence and speed when reasoning across massive datasets." NVIDIA Spectrum-6 SPX Ethernet Rack Spectrum-6 SPX Ethernet is engineered to accelerate east-west traffic across AI factories. Configurable with either Spectrum-X Ethernet or NVIDIA Quantum-X800 InfiniBand switches, it delivers low-latency, high-throughput rack-to-rack connectivity at scale. Spectrum-X Ethernet Photonics with co-packaged optics achieves up to 5x greater optical power efficiency and 10x higher resiliency compared with traditional pluggable transceivers. Improving Resiliency and Energy Efficiency NVIDIA, along with over 200 data center infrastructure partners, announced the NVIDIA DSX platform for Vera Rubin. This includes DSX Max-Q to enable dynamic power provisioning across the entire AI factory, resulting in the deployment of 30% more AI infrastructure within a fixed-power data center. 
The new DSX Flex software enables AI factories to be grid-flexible assets, unlocking 100 gigawatts of stranded grid power. NVIDIA also today released the Vera Rubin DSX AI Factory reference design, a blueprint for codesigned AI infrastructure that maximizes tokens per watt and overall goodput, improving system resiliency and accelerating time to first production. By tightly integrating compute, networking, storage, power and cooling, the architecture increases energy efficiency and ensures AI factories can scale reliably under continuous, high-intensity workloads with maximum uptime. Broad Ecosystem Support Vera Rubin-based products will be available from partners starting the second half of this year. This includes leading cloud providers Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure, along with NVIDIA Cloud Partners CoreWeave, Crusoe, Lambda, Nebius, Nscale and Together AI. Global system manufacturers Cisco, Dell Technologies, HPE, Lenovo and Supermicro are expected to deliver a wide range of servers based on Vera Rubin products, as well as Aivres, ASUS, Foxconn, GIGABYTE, Inventec, Pegatron, Quanta Cloud Technology (QCT), Wistron and Wiwynn. AI labs and frontier model developers including Anthropic, Meta, Mistral AI and OpenAI are looking to use the NVIDIA Vera Rubin platform to train larger, more capable models and to serve long-context, multimodal systems at lower latency and cost than with prior GPU generations.

[9]

Nvidia introduces Vera Rubin, a seven-chip AI platform with OpenAI, Anthropic and Meta on board

Nvidia on Monday took the wraps off Vera Rubin, a sweeping new computing platform built from seven chips now in full production -- and backed by an extraordinary lineup of customers that includes Anthropic, OpenAI, Meta and Mistral AI, along with every major cloud provider. The message to the AI industry, and to investors, was unmistakable: Nvidia is not slowing down. The Vera Rubin platform claims up to 10x more inference throughput per watt and one-tenth the cost per token compared with the Blackwell systems that only recently began shipping. CEO Jensen Huang, speaking at the company's annual GTC conference, called it "a generational leap" that would kick off "the greatest infrastructure buildout in history." Amazon Web Services, Google Cloud, Microsoft Azure and Oracle Cloud Infrastructure will all offer the platform, and more than 80 manufacturing partners are building systems around it. "Vera Rubin is a generational leap -- seven breakthrough chips, five racks, one giant supercomputer -- built to power every phase of AI," Huang declared. "The agentic AI inflection point has arrived with Vera Rubin kicking off the greatest infrastructure buildout in history." In any other industry, such rhetoric might be dismissed as keynote theater. But Nvidia occupies a singular position in the global economy -- a company whose products have become so essential to the AI boom that its market capitalization now rivals the GDP of mid-sized nations. When Huang says the infrastructure buildout is historic, the CEOs of the companies actually writing the checks are standing behind him, nodding. Dario Amodei, the chief executive of Anthropic, said Nvidia's platform "gives us the compute, networking and system design to keep delivering while advancing the safety and reliability our customers depend on." 
Sam Altman, the chief executive of OpenAI, said that "with Nvidia Vera Rubin, we'll run more powerful models and agents at massive scale and deliver faster, more reliable systems to hundreds of millions of people."
The Vera Rubin platform brings together the Nvidia Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet switch and the newly integrated Groq 3 LPU -- a purpose-built inference accelerator. Nvidia organized these into five interlocking rack-scale systems that function as a unified supercomputer.
The flagship NVL72 rack integrates 72 Rubin GPUs and 36 Vera CPUs connected by NVLink 6. Nvidia says it can train large mixture-of-experts models using one-quarter the GPUs required on Blackwell, a claim that, if validated in production, would fundamentally alter the economics of building frontier AI systems.
The Vera CPU rack holds 256 liquid-cooled processors, sustaining more than 22,500 concurrent CPU environments -- the sandboxes where AI agents execute code, validate results and iterate. Nvidia describes the Vera CPU as the first processor purpose-built for agentic AI and reinforcement learning, featuring 88 custom-designed Olympus cores and LPDDR5X memory delivering 1.2 terabytes per second of bandwidth at half the power of conventional server CPUs.
The Groq 3 LPX rack, housing 256 inference processors with 128 gigabytes of on-chip SRAM, targets the low-latency demands of trillion-parameter models with million-token contexts. The BlueField-4 STX storage rack provides what Nvidia calls "context memory" -- high-speed storage for the massive key-value caches that agentic systems generate as they reason across long, multi-step tasks. And the Spectrum-6 SPX Ethernet rack ties it all together with co-packaged optics delivering 5x greater optical power efficiency than traditional transceivers.
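The rack figures quoted above lend themselves to a quick back-of-envelope check. The sketch below is illustrative only: the constant and function names are made up for this example, and it assumes the 128 gigabytes of SRAM is a total for the whole Groq 3 LPX rack rather than a per-chip figure, which the article does not state explicitly.

```python
# Figures quoted in the article. Everything derived from them is a rough
# sanity check, not an Nvidia specification.
NVL72_GPUS = 72
NVL72_CPUS = 36
VERA_RACK_CPUS = 256
VERA_CORES_PER_CPU = 88
VERA_RACK_ENVIRONMENTS = 22_500  # article says "more than 22,500"
LPX_RACK_LPUS = 256
LPX_RACK_SRAM_GB = 128  # ASSUMPTION: rack-wide total, not per chip


def rack_summary() -> dict:
    """Derive a few per-unit ratios from the quoted rack totals."""
    total_cores = VERA_RACK_CPUS * VERA_CORES_PER_CPU
    return {
        "gpus_per_cpu": NVL72_GPUS / NVL72_CPUS,
        "vera_rack_cores": total_cores,
        "environments_per_core": VERA_RACK_ENVIRONMENTS / total_cores,
        "sram_gb_per_lpu": LPX_RACK_SRAM_GB / LPX_RACK_LPUS,
    }


if __name__ == "__main__":
    for name, value in rack_summary().items():
        print(f"{name}: {value:g}")
```

Under these assumptions, 256 processors with 88 cores each come to 22,528 cores, which lines up with the "more than 22,500" environments figure and would be consistent with roughly one sandbox per core, though the article does not say that is how the number was derived. The same arithmetic puts each LPU at about half a gigabyte of SRAM if the 128 gigabytes is indeed a rack total.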
Why Nvidia is betting the future on autonomous AI agents -- and rebuilding its stack around them

The strategic logic binding every announcement Monday into a single narrative is Nvidia's conviction that the AI industry is crossing a threshold. The era of chatbots -- AI that responds to a prompt and stops -- is giving way to what Huang calls "agentic AI": systems that reason autonomously for hours or days, write and execute software, call external tools, and continuously improve. This isn't just a branding exercise. It represents a genuine architectural shift in how computing infrastructure must be designed.
A chatbot query might consume milliseconds of GPU time. An agentic system orchestrating a drug discovery pipeline or debugging a complex codebase might run continuously, consuming CPU cycles to execute code, GPU cycles to reason, and massive storage to maintain context across thousands of intermediate steps. That demands not just faster chips, but a fundamentally different balance of compute, memory, storage and networking.
Nvidia addressed this with the launch of its Agent Toolkit, which includes OpenShell, a new open-source runtime that enforces security and privacy guardrails for autonomous agents. The enterprise adoption list is remarkable: Adobe, Atlassian, Box, Cadence, Cisco, CrowdStrike, Dassault Systèmes, IQVIA, Red Hat, Salesforce, SAP, ServiceNow, Siemens and Synopsys are all integrating the toolkit into their platforms. Nvidia also launched NemoClaw, an open-source stack that lets users install its Nemotron models and OpenShell runtime in a single command to run secure, always-on AI assistants on everything from RTX laptops to DGX Station supercomputers.
The company separately announced Dynamo 1.0, open-source software it describes as the first "operating system" for AI inference at factory scale.
Dynamo orchestrates GPU and memory resources across clusters and has already been adopted by AWS, Azure, Google Cloud, Oracle, Cursor, Perplexity, PayPal and Pinterest. Nvidia says Dynamo boosted Blackwell inference performance by up to 7x in recent benchmarks.

The Nemotron coalition and Nvidia's play to shape the open-source AI landscape

If Vera Rubin represents Nvidia's hardware ambition, the Nemotron Coalition represents its software ambition. Announced Monday, the coalition is a global collaboration of AI labs that will jointly develop open frontier models trained on Nvidia's DGX Cloud. The inaugural members -- Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam and Thinking Machines Lab, the startup led by former OpenAI executive Mira Murati -- will contribute data, evaluation frameworks and domain expertise. The first model will be co-developed by Mistral AI and Nvidia and will underpin the upcoming Nemotron 4 family.
"Open models are the lifeblood of innovation and the engine of global participation in the AI revolution," Huang said.
Nvidia also expanded its own open model portfolio significantly. Nemotron 3 Ultra delivers what the company calls frontier-level intelligence with 5x throughput efficiency on Blackwell. Nemotron 3 Omni integrates audio, vision and language understanding. Nemotron 3 VoiceChat supports real-time, simultaneous conversations. And the company previewed GR00T N2, a next-generation robot foundation model that it says helps robots succeed at new tasks in new environments more than twice as often as leading alternatives, currently ranking first on the MolmoSpaces and RoboArena benchmarks.
The open-model push serves a dual purpose.
It cultivates the developer ecosystem that drives demand for Nvidia hardware, and it positions Nvidia as a neutral platform provider rather than a competitor to the AI labs building on its chips -- a delicate balancing act that grows more complex as Nvidia's own models grow more capable.

From operating rooms to orbit: how Vera Rubin's reach extends far beyond the data center

The vertical breadth of Monday's announcements was almost disorienting. Roche revealed it is deploying more than 3,500 Blackwell GPUs across hybrid cloud and on-premises environments in the U.S. and Europe -- the largest announced GPU footprint in the pharmaceutical industry. The company is using the infrastructure for biological foundation models, drug discovery and digital twins of manufacturing facilities, including its new GLP-1 facility in North Carolina. Nearly 90 percent of Genentech's eligible small-molecule programs now integrate AI, Roche said, with one oncology molecule designed 25 percent faster and a backup candidate delivered in seven months instead of more than two years.
In autonomous vehicles, BYD, Geely, Isuzu and Nissan are building Level 4-ready vehicles on Nvidia's Drive Hyperion platform. Nvidia and Uber expanded their partnership to launch autonomous vehicles across 28 cities on four continents by 2028, starting with Los Angeles and San Francisco in the first half of 2027. The company introduced Alpamayo 1.5, a reasoning model for autonomous driving already downloaded by more than 100,000 automotive developers, and Nvidia Halos OS, a safety architecture built on ASIL D-certified foundations for production-grade autonomy.
Nvidia also released the first domain-specific physical AI platform for healthcare robotics, anchored by Open-H -- the world's largest healthcare robotics dataset, with over 700 hours of surgical video. CMR Surgical, Johnson & Johnson MedTech and Medtronic are among the adopters.
And then there was space.
The Vera Rubin Space Module delivers up to 25x more AI compute for orbital inferencing compared with the H100 GPU. Aetherflux, Axiom Space, Kepler Communications, Planet Labs and Starcloud are building on it. "Space computing, the final frontier, has arrived," Huang said, deploying the kind of line that, from another executive, might draw eye-rolls -- but from the CEO of a company whose chips already power the majority of the world's AI workloads, lands differently.

The deskside supercomputer and Nvidia's quiet push into enterprise hardware

Amid the spectacle of trillion-parameter models and orbital data centers, Nvidia made a quieter but potentially consequential move: it launched the DGX Station, a deskside system powered by the GB300 Grace Blackwell Ultra Desktop Superchip that delivers 748 gigabytes of coherent memory and up to 20 petaflops of AI compute performance. The system can run open models of up to one trillion parameters from a desk. Snowflake, Microsoft Research, Cornell, EPRI and Sungkyunkwan University are among the early users.
DGX Station supports air-gapped configurations for regulated industries, and applications built on it move seamlessly to Nvidia's data center systems without rearchitecting -- a design choice that creates a natural on-ramp from local experimentation to large-scale deployment. Nvidia also updated DGX Spark, its more compact system, with support for clustering up to four units into a "desktop data center" with linear performance scaling. Both systems ship preconfigured with NemoClaw and the Nvidia AI software stack, and support models including Nemotron 3, Google Gemma 3, Qwen3, DeepSeek V3.2, Mistral Large 3 and others.
Adobe and Nvidia separately announced a strategic partnership to develop the next generation of Firefly models using Nvidia's computing technology and libraries.
Adobe will also build a cloud-native 3D digital twin solution for marketing on Nvidia Omniverse and integrate Nemotron capabilities into Adobe Acrobat. The partnership spans creative tools including Photoshop, Premiere Pro, Frame.io and Adobe Experience Platform.

Building the factories that build intelligence: Nvidia's AI infrastructure blueprint

Perhaps the most telling indicator of where Nvidia sees the industry heading is the Vera Rubin DSX AI Factory reference design -- essentially a blueprint for constructing entire buildings optimized to produce AI. The reference design outlines how to integrate compute, networking, storage, power and cooling into a system that maximizes what Nvidia calls "tokens per watt," along with an Omniverse DSX Blueprint for creating digital twins of these facilities before they are built.
The software stack includes DSX Max-Q for dynamic power provisioning -- which Nvidia says enables 30 percent more AI infrastructure within a fixed-power data center -- and DSX Flex, which connects AI factories to power-grid services to unlock what the company estimates is 100 gigawatts of stranded grid capacity.
Energy leaders Emerald AI, GE Vernova, Hitachi and Siemens Energy are using the architecture. Nscale and Caterpillar are building one of the world's largest AI factories in West Virginia using the Vera Rubin reference design. Industry partners Cadence, Dassault Systèmes, Eaton, Jacobs, Schneider Electric, Siemens, PTC, Switch, Trane Technologies and Vertiv are contributing simulation-ready assets and integrating their platforms. CoreWeave is using Nvidia's DSX Air to run operational rehearsals of AI factories in the cloud before physical delivery.
"In the age of AI, intelligence tokens are the new currency, and AI factories are the infrastructure that generates them," Huang said. It is the kind of formulation -- tokens as currency, factories as mints -- that reveals how Nvidia thinks about its place in the emerging economic order.
What Nvidia's grand vision gets right -- and what remains unproven

The scale and coherence of Monday's announcements are genuinely impressive. No other company in the semiconductor industry -- and arguably no other technology company, period -- can present an integrated stack spanning custom silicon, systems architecture, networking, storage, inference software, open models, agent frameworks, safety runtimes, simulation platforms, digital twin infrastructure and vertical applications from drug discovery to autonomous driving to orbital computing.
But scale and coherence are not the same as inevitability. The performance claims for Vera Rubin, while dramatic, remain largely unverified by independent benchmarks. The agentic AI thesis that underpins the entire platform -- the idea that autonomous, long-running AI agents will become the dominant computing workload -- is a bet on a future that has not yet fully materialized. And Nvidia's expanding role as a provider of models, software, and reference architectures raises questions about how long its hardware customers will remain comfortable depending so heavily on a single supplier for so many layers of their stack.
Competitors are not standing still. AMD continues to close the gap on data center GPU performance. Google's TPUs power some of the world's largest AI training runs. Amazon's Trainium chips are gaining traction inside AWS. And a growing cohort of startups is attacking various pieces of the AI infrastructure puzzle.
Yet none of them showed up at GTC on Monday with endorsements from the CEOs of Anthropic and OpenAI. None of them announced seven new chips in full production simultaneously. And none of them presented a vision this comprehensive for what comes next.
There is a scene that repeats at every GTC: Huang, in his trademark leather jacket, holds up a chip the way a jeweler holds up a diamond, rotating it slowly under the stage lights. It is part showmanship, part sermon.
But the congregation keeps growing, the chips keep getting faster, and the checks keep getting larger. Whether Nvidia is building the greatest infrastructure in history or simply the most profitable one may, in the end, be a distinction without a difference.

[10]

Nvidia debuts the Groq 3 language processing unit, a dedicated inference chip for multiagent workloads - SiliconANGLE