Pro-Human AI Declaration unites unlikely allies on responsible AI development framework

3 Sources

[1]

A roadmap for AI, if anyone will listen

While Washington's breakup with Anthropic exposed the complete lack of any coherent rules governing artificial intelligence, a bipartisan coalition of thinkers has assembled something the government has so far declined to produce: a framework for what responsible AI development should actually look like. The Pro-Human Declaration was finalized before last week's Pentagon-Anthropic standoff, but the collision of the two events wasn't lost on anyone involved. "There's something quite remarkable that has happened in America just in the last four months," said Max Tegmark, the MIT physicist and AI researcher who helped organize the effort, in conversation with this editor. "Polling suddenly [is showing] that 95% of all Americans oppose an unregulated race to superintelligence." The newly published document, signed by hundreds of experts, former officials, and public figures, opens with the no-nonsense observation that humanity is at a fork in the road. One path, which the declaration calls "the race to replace," leads to humans being supplanted first as workers, then as decision-makers, as power accrues to unaccountable institutions and their machines. The other leads to AI that massively expands human potential. The latter scenario depends on five key pillars: keeping humans in charge, avoiding the concentration of power, protecting the human experience, preserving individual liberty, and holding AI companies legally accountable. Among its more muscular provisions is an outright prohibition on superintelligence development until there's scientific consensus it can be done safely and genuine democratic buy-in; mandatory off-switches on powerful systems; and a ban on architectures that are capable of self-replication, autonomous self-improvement, or resistance to shutdown. The declaration's release coincides with a period that makes its urgency far easier to appreciate. 
On the last Friday in February, Defense Secretary Pete Hegseth designated Anthropic -- whose AI already runs on classified military platforms -- a "supply chain risk" after the company refused to grant the Pentagon unlimited use of its technology, a label ordinarily reserved for firms with ties to China. Hours later, OpenAI cut its own deal with the Defense Department, one that legal experts say will be difficult to enforce in any meaningful way. What it all laid bare is how costly Congressional inaction on AI has become. As Dean Ball, a senior fellow at the Foundation for American Innovation, told The New York Times afterward, "This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems." Tegmark reached for an analogy that most people can understand when we spoke. "You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe," he said, "because the FDA won't allow them to release anything until it's safe enough." Washington turf wars rarely generate the kind of public pressure that changes laws. Instead, Tegmark sees child safety as the pressure point most likely to crack the current impasse. Indeed, the declaration calls for mandatory pre-deployment testing of AI products -- particularly chatbots and companion apps aimed at younger users -- covering risks including increased suicidal ideation, exacerbation of mental health conditions, and emotional manipulation. "If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that," Tegmark said. "We already have laws. It's illegal. So why is it different if a machine does it?" He believes that once the principle of pre-release testing is established for children's products, the scope will widen almost inevitably. 
"People will come along and be like -- let's add a few other requirements. Maybe we should also test that this can't help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn't have the ability to overthrow the U.S. government." It is no small thing that former Trump advisor Steve Bannon and Susan Rice, President Obama's National Security Advisor, signed the same document -- along with former Joint Chiefs Chairman Mike Mullen and progressive faith leaders. "What they agree on, of course, is that they're all human," says Tegmark. "If it's going to come down to whether we want a future for humans or a future for machines, of course they're going to be on the same side."

[2]

The AI political resistance has arrived

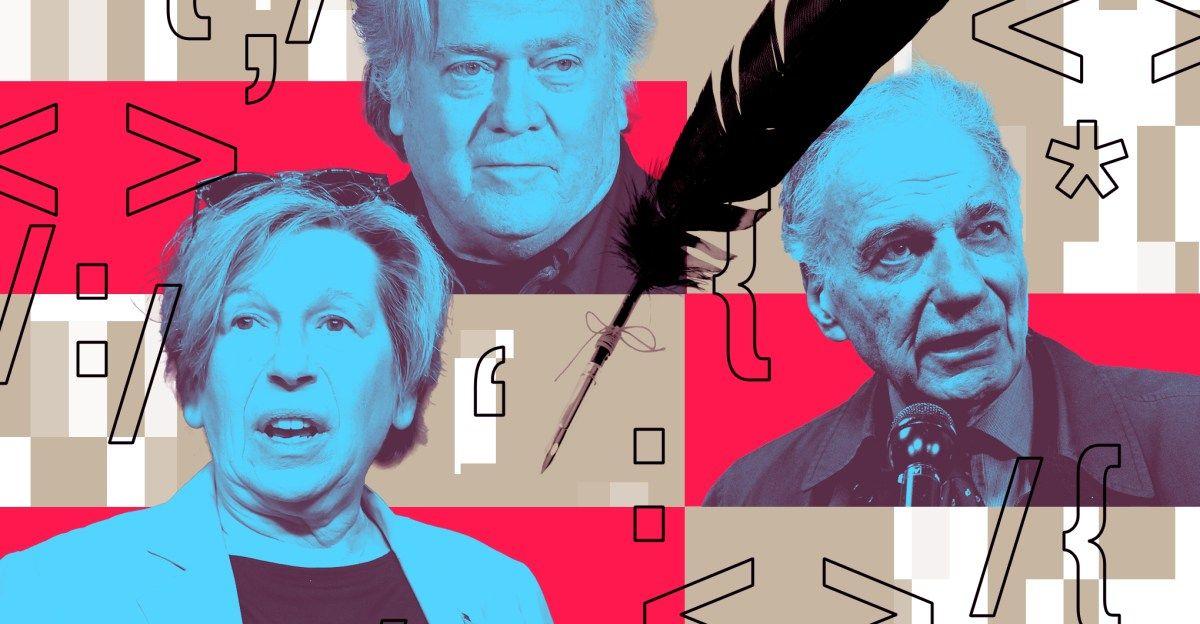

In early January, a group of 90 or so political, community and thought leaders gathered in a New Orleans Marriott for a secret conference on artificial intelligence -- so secret, in fact, that no one knew who else had been invited until they walked into the room. Church leaders and conservative academics were sitting next to labor union representatives. Progressive power brokers who'd drafted Bernie Sanders to run for president suddenly found themselves breathing the same air as MAGA talking heads. And the AI thought leaders who'd invited them to New Orleans were hoping that none of them would kill each other. On Wednesday, the Future of Life Institute, one of the most authoritative voices in the world of AI safety, released the results of that meeting: the Pro-Human AI Declaration, a concise document with five guidelines on how AI development must be centered on humanity first, with a pointed focus on avoiding the concentration of power in the hands of the powerful; preserving the well-being of children, families and communities; and preserving human agency and liberty. It has the broadest range of signatories that I personally have ever seen on a single political document. Powerful civic organizations well outside the tech world have signed onto the Declaration: major unions like the AFL-CIO, the American Federation of Teachers, and the Screen Writers Guild; religious organizations like the G20 Interfaith Forum Association and the Congress of Christian Leaders; the Progressive Democrats of America, the group that drafted Bernie Sanders to run as a Democrat in 2016; think tanks like the conservative Institute for Family Studies and advocacy groups like Parents RISE!. 
The individual signatories range even further: Democratic presidential candidate Ralph Nader, AFT president Randi Weingarten, Signal Foundation president Meredith Whittaker, The Blaze's Glenn Beck, War Room's Steve Bannon, Virgin Group founder Sir Richard Branson, former National Security Advisor Susan Rice, SAG-AFTRA members, and leaders of major evangelical organizations. More are expected to sign on in the next several days. The meeting was under Chatham House Rules and the list of attendees remains private. But the participants who agreed to speak to The Verge about the experience said that they'd been invited by Max Tegmark, the co-founder of FLI and an MIT professor who had been named to the TIME 100 AI list. "We spent a lot of time talking to him over the course of the last few months," Weingarten, a powerful teachers' union advocate, told The Verge in a phone interview. Though she was unable to make it to New Orleans, she was involved in drafting the document, and she'd found remarkable similarities between FLI's worldview and AFT's own "common sense guardrails" for using AI in schools. "We've been on parallel tracks for quite a while without knowing it." Joe Allen, the cofounder of Humans First and a former correspondent for Bannon's show War Room, told The Verge that Tegmark had also invited him to New Orleans, as well as to an earlier proof-of-concept meeting in Manhattan. Though the wide range of attendees was jarring and the political tensions weren't completely gone, Allen was surprised by how quickly they all agreed on similar topics: autonomous lethal weapons should not be solely AI-powered. AI companies should not leverage children's emotional attachment for profit. AI should not be granted legal personhood. (The least popular position in the Declaration still got approved by 94% of attendees.) 
"I think about it like, if there's knowledge that there's poison in the water supply, or that drugs are flooding schools -- anything like that, in general -- most people are going to be against it and it isn't partisan," he said. AI was slightly trickier in that people's general opinion about specific AI models divided along party lines -- Grok was the "based" AI and Anthropic was the "woke" AI -- but to Allen, the distinction was meaningless. "Like, what does 'based' and 'woke' even mean at this point?" Nearly a decade ago, FLI had laid out a more optimistic set of principles for AI research -- 23 principles, to be exact, written during the 2017 Asilomar Conference for Beneficial AI, which drew over 100 tech luminaries of the day. Signatories and endorsers of the Asilomar AI Principles included AI leaders like Sam Altman, Elon Musk, and Demis Hassabis; luminaries like Stephen Hawking and Ray Kurzweil; and representatives from major companies like Google, Intel and Apple. But this time, no one from the industry was invited, to say nothing of people on the level of Altman and Musk. "That was actually a very deliberate design choice," Emilia Javorsky, the director of the Futures Program at FLI, told The Verge. Whenever she'd attended conferences and events about AI's impact across society, she noticed that corporate interests would eventually become the dominant perspective in the room, "just by nature of their size and weight and funding capabilities." Instead, the invitees were from civil society organizations, all of whom were experiencing mass disruption due to artificial intelligence, and all of whom were fed up with Big Tech shrugging off their concerns. 
Anthony Aguirre, another co-founder of FLI and a prominent cosmology professor at UC Santa Cruz, emphasized that this declaration was not their attempt to redo the Asilomar Principles, but a somber acknowledgement of a dark new reality -- one where their former colleagues were now the heads of major corporations, trying to achieve artificial general intelligence before their rivals did and satisfy shareholders before addressing safety. The power to steer AI's development was increasingly concentrated in the hands of the few, and the Trump administration's aggressive deregulation had further empowered them. "Other than the overall mass of humanity, there was one entity that would have put meaningful control on what they could do, and that was the US government," he told The Verge. "Now that it's backing them and wants to keep them unrestrained, the only thing that's a real threat are other companies." In the absence of Big Tech and public scrutiny, said Javorsky, there was something unique about how quickly this group coalesced around the same issues and came to the same conclusions. Over the course of the next few days, Javorsky kept hearing the same refrain: "'We will not have the luxury of debating all of those other issues if we don't get this thing right. So let's get this thing right.'" In Weingarten's view, the Declaration served as the mission statement of what she called a "key demanding coalition" -- a strategic alliance of political opponents -- and a way to keep all their efforts coordinated against a government that elevated enterprise over society. "What is really important is that there are other people who have said, let's try to create a bigger coalition to say that we need humanity to be at the center of AI," she noted. On its own, AFT could have perhaps pushed the issue of child safety, but there was only so much pressure they could exert on lawmakers. 
But if they joined forces with several other trade unions, plus religious organizations, plus some allies on the other side of the aisle? Now those lawmakers would be nervous. "If the government won't do it, then the people have to force the government to do it. And you start with a statement of principles." "If there's one statement I would make about the whole thing, which is what I said to the group when I had their attention, is that no one is going to engineer a pro-human movement. The only thing you can do is inspire it," said Allen. "I do think that statements like this should inspire a pro-human movement. Like a fundamental document that's setting the tone...There's no amount of social engineering, or money, or media, or any of that, that's really gonna do it." Exactly what that looks like, however, remains unclear -- or at least, not easily translated into elections. FLI is running an ad campaign called "Protect What's Human," but as a 501(c)3, cannot endorse or campaign for candidates or ballot initiatives during the midterms. They did, however, conduct a poll with Tavern Research in February, testing the popularity of the Declaration's principles among voters. Though respondents were split neatly down partisan lines in whom they voted for and which party they belonged to, they overwhelmingly supported the statements that appeared in the Declaration, by a wide margin. The worst-performing principles -- AI must not create monopolies or concentrate control in a few hands -- still garnered 69% support from respondents. The best-performing principle -- humans needed to stay in charge of AI and prevent it from harming children, families and communities -- won 80% support. To Javorsky, the poll results validated the conference's points. "It's one thing to have a whole bunch of civil society actors in a room together and think something's representative. But you have to actually validate those with real people. This is actually resonating with them." 
When we spoke on Thursday, Anthropic, which had recently floated the possibility that its AI had gained consciousness, was in the middle of a fight with the Pentagon over whether the military could use its AI for autonomous lethal weapons without human oversight. By Friday evening, OpenAI threw Anthropic under the bus to score its own Pentagon contract. Days later, after that fight had resolved, the United States had used Anthropic-powered tools to assassinate the Ayatollah of Iran, several more reports of looming AI layoffs had emerged, and the scale of the Pentagon's asks on mass surveillance had become more evident, Alan Minsky, the CEO of the Progressive Democrats of America and a meeting attendee, told The Verge that he could not foresee any political opposition towards the declaration, either from the left or the right. "Altman and Musk, certainly, have taken a flippant manner towards what are serious threats to communities: the psychological deterioration of a population that lives increasingly online, the impact of continual economic maldistribution of wealth, and, of course, contempt for the idea that basic protection must come before profits," he said. "The risk of an existential threat to humanity is no longer something they even blink at. As the public realizes that this is their attitude, that they have utter contempt for the average person's welfare -- yes, we think the public will be on our side."

[3]

Pro-human AI declaration brings together unlikely group calling for trustworthy tech

An unlikely band of prominent business, religious, government and academic leaders have set aside their political differences and signed onto a new declaration of human rights for the AI age. The Pro-Human AI Declaration, released Wednesday and backed by more than 40 organizations, asserts the importance of humans and human values as AI becomes increasingly powerful and, in some regards, humanlike. Signatories include former Trump administration adviser Steve Bannon, conservative firebrand Glenn Beck and billionaire mogul Richard Branson, as well as consumer advocate Ralph Nader, Biden administration national security adviser Susan Rice and Nobel Prize-winning economist Daron Acemoglu. "As companies race to develop and deploy AI systems, humanity faces a fork in the road," the statement's preamble declares. "One path is a race to replace: humans replaced as creators, counselors, caregivers and companions, then in most jobs and decision-making roles, concentrating ever more power in unaccountable institutions and their machines." "There is a better path," the statement continues, "where trustworthy and controllable AI tools amplify rather than diminish human potential, empower people, enhance human dignity, protect individual liberty, strengthen families and communities, preserve self-governance and help create unprecedented health and prosperity." The declaration was drafted by a coalition of organizations from across the political spectrum, including the Congress of Christian Leaders, the American Federation of Teachers and the Progressive Democrats of America. The Future of Life Institute, a nonprofit advocacy group whose mission is to guide advanced technology toward beneficial purposes and avert large-scale risks to humanity, convened the participants and facilitated the drafting process. The declaration was drafted in multiple in-person gatherings and finalized after a wider ratification meeting in New Orleans in January. 
The declaration, also signed by AI pioneer Yoshua Bengio, covers five main topics with titles such as "Keeping Humans in Charge" and "Responsibility and Accountability for AI Companies." Within each topic area, a list of finer-grained statements details the signers' pro-human ideology. "No AI Monopolies," "Democratic Authority Over Major Transitions" and "Shared Prosperity" are several of the statements comprising the second major topic area, entitled "Avoiding Concentration of Power." Joe Allen, senior fellow at Humans First, a nonpartisan social advocacy organization campaigning to raise awareness about the future of AI, and the former technology editor at Steve Bannon's War Room podcast, told NBC News the declaration was "the product of painstaking consensus among intellectuals and activists who have been thinking about the dangers and downsides of artificial intelligence for many years." According to Allen, the signers spanned a wide "axis, with reasonable techno-optimists at the top and a few of us quasi-Luddites below." "As with free speech, and freedom in general, the ideal position is that every human being -- even one's ideological opponents -- has some say over a fundamentally anti-human technology," Allen shared in written comments. AI systems have become dramatically more capable over the past few years and even months, reshaping or eliminating software development jobs and outperforming scientists' ability to create new tests to measure their performance in areas like mathematics. "Big Tech is racing to create AI smarter than humans," said Brendan Steinhauser, director of the Alliance for Secure AI, a Washington, D.C.-based advocacy organization and a former Republican campaign strategist. "The Alliance for Secure AI remains steadfast in its mission to keep humanity in control of AI, not the other way around." 
"If we want AI to benefit humanity and not just Silicon Valley CEOs," Steinhauser told NBC News, "then we must come together to protect our future."

The Future of Life Institute released the Pro-Human AI Declaration, a bipartisan framework signed by hundreds including Steve Bannon and Susan Rice. The document establishes five pillars for human-centered AI governance, prohibits superintelligence development without consensus, and mandates pre-deployment testing—addressing the urgent need for AI regulation exposed by recent Pentagon-Anthropic tensions.

Bipartisan Consensus Emerges on AI Development

The Future of Life Institute has released the Pro-Human AI Declaration, a framework for responsible AI development that achieves something Washington has failed to produce: bipartisan consensus on artificial intelligence regulation [1]. The document, signed by hundreds of experts and public figures including former Trump advisor Steve Bannon and Obama's National Security Advisor Susan Rice, establishes guidelines to keep AI development focused on human values rather than corporate interests [2][3].

Source: TechCrunch

Max Tegmark, the MIT physicist who helped organize the effort, notes that recent polling shows 95% of Americans oppose an unregulated race to superintelligence [1]. The declaration's release follows the Pentagon-Anthropic standoff, in which Defense Secretary Pete Hegseth designated Anthropic a "supply chain risk" after the company refused unlimited military access to its technology, exposing how costly Congressional inaction has become [1].

Five Pillars for Human-Centric AI

The Pro-Human AI Declaration opens with a stark observation: humanity faces a fork in the road between "a race to replace" humans as workers and decision-makers, and a path where trustworthy and controllable AI tools amplify human potential [3]. The framework rests on five pillars: keeping humans in charge, avoiding concentration of power, protecting the human experience, preserving individual liberty, and holding AI companies legally accountable [1].

Among its provisions, the declaration prohibits superintelligence development until there is scientific consensus that it can be done safely and genuine democratic buy-in. It mandates off-switches on powerful systems and bans architectures capable of self-replication, autonomous self-improvement, or resistance to shutdown [1]. The document also addresses AI monopolies and calls for "Democratic Authority Over Major Transitions" and "Shared Prosperity" [3].

Secret Meeting Produces Unlikely Coalition

In January, approximately 90 political, community, and thought leaders gathered at a New Orleans Marriott under Chatham House Rules for a secret conference on artificial intelligence [2]. Church leaders sat beside labor union representatives, while progressive power brokers who drafted Bernie Sanders found themselves alongside MAGA talking heads. No one knew who else had been invited until they entered the room [2].

Source: The Verge

The bipartisan framework attracted support from major unions like the AFL-CIO, American Federation of Teachers, and Screen Writers Guild, alongside religious organizations and advocacy groups [2]. Individual signatories include Ralph Nader, Signal Foundation president Meredith Whittaker, Glenn Beck, Richard Branson, former Joint Chiefs Chairman Mike Mullen, and Nobel Prize-winning economist Daron Acemoglu [3]. The least popular position still received approval from 94% of attendees [2].

Child Safety as Pressure Point

Tegmark sees child safety as the issue most likely to break the current regulatory impasse. The declaration calls for mandatory pre-deployment testing of AI products, particularly chatbots and companion apps aimed at younger users, covering risks including increased suicidal ideation, exacerbation of mental health conditions, and emotional manipulation [1].

"If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that," Tegmark explained. "We already have laws. It's illegal. So why is it different if a machine does it?" [1] He believes once pre-deployment testing is established for children's products, the scope will expand to include testing for bioweapon assistance and threats to government stability.

Industry Voices Notably Absent

Unlike the 2017 Asilomar AI Principles, which drew signatures from Sam Altman, Elon Musk, Demis Hassabis, and representatives from Google, Intel, and Apple, no one from the AI industry was invited to this effort [2]. Emilia Javorsky, director of the Futures Program at Future of Life Institute, called this "a very deliberate design choice," noting that corporate interests typically dominate such conversations [2].

Joe Allen of Humans First, a former correspondent for Bannon's War Room, told NBC News the declaration represents "painstaking consensus among intellectuals and activists who have been thinking about the dangers and downsides of artificial intelligence for many years" [3]. The bipartisan coalition demonstrates that when it comes to protecting human agency against increasingly capable AI systems, political divisions take a back seat to shared humanity.

Summarized by Navi
