Samsung and SK Hynix win exclusive HBM4 supply for Nvidia's Vera Rubin AI accelerator

3 Sources

[1]

Samsung and SK Hynix to dominate Nvidia Vera Rubin HBM4 supply

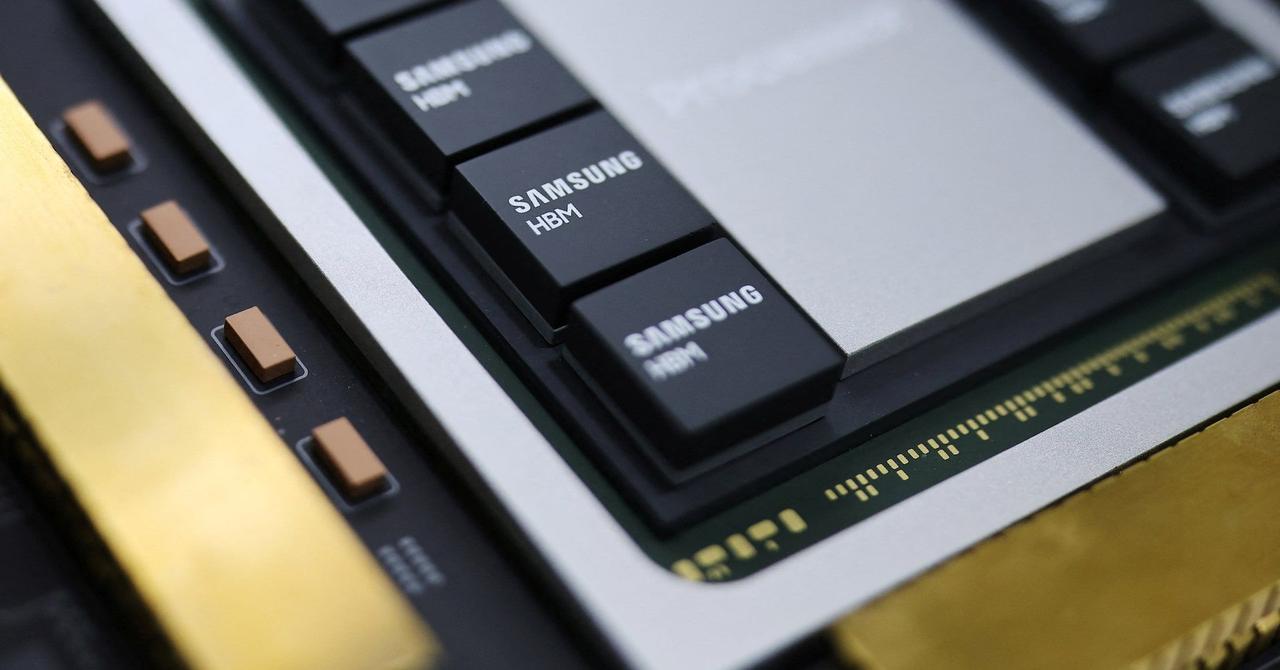

Samsung Electronics and SK Hynix have been confirmed as the sole HBM4 suppliers for Nvidia's Vera Rubin AI accelerator, excluding Micron Technology from the flagship platform. The Korea Economic Daily reported on March 8, citing industry sources, that the two South Korean chipmakers are on Nvidia's vendor list for the advanced memory. The selection secures the most lucrative segment of the AI memory market for Samsung and SK Hynix. The allocation marks a turnaround for Samsung after previous yield struggles and reinforces SK Hynix's position as Nvidia's primary memory partner.

The supplier split favors SK Hynix with roughly 70 percent of Nvidia's HBM4 allocation. Samsung holds approximately 30 percent of the allocation, according to the report. "Micron isn't even being discussed as a Vera Rubin HBM4 supplier," an industry source told the Korea Economic Daily.

Samsung passed Nvidia qualification tests for both 10 Gbps and 11 Gbps HBM4 variants and began limited shipments in February. SK Hynix is optimizing its product for the 11 Gbps test and expects full-scale production this month. Nvidia required memory speeds above the 8 Gbps JEDEC industry standard for Vera Rubin. Industry analysts attributed Micron's exclusion to difficulties meeting speed, base die design, and thermal performance requirements. Micron currently supplies HBM3E for Nvidia's existing platforms but does not appear on the Vera Rubin vendor list.

Vera Rubin will incorporate 8 HBM4 stacks per GPU totaling 288 GB per GPU. The full Vera Rubin Superchip combines two GPUs for 576 GB of total memory. The platform is slated for release in the second half of 2026. Nvidia is expected to formally unveil Vera Rubin at the GTC developer conference on March 16.

Micron is expected to supply HBM4 for mid-tier Rubin accelerators, such as the Rubin CPX, but not for the top-tier Vera Rubin. TrendForce noted Nvidia may eventually add all three suppliers to its HBM4 ecosystem. The confirmed vendor list indicates Samsung and SK Hynix will dominate the initial and most profitable ramp.

[2]

Why Nvidia Snubbed Micron For Samsung, SK Hynix - Micron Technology (NASDAQ:MU), NVIDIA (NASDAQ:NVDA)

Rising demand for artificial intelligence hardware is reshaping the high-bandwidth memory (HBM) market, giving South Korea's leading chipmakers an edge in supplying critical components for next-generation AI processors.

Samsung, SK Hynix Win HBM4 Supply For Nvidia AI Chips

AI Boom Strengthens Pricing Power In HBM Market

AI-driven demand is reshaping the HBM market, with major chipmakers competing to supply next-generation HBM for advanced AI processors. Samsung has begun mass production of its HBM4 AI memory chips and is reportedly negotiating prices of about $700 per unit, roughly 20%-30% higher than the previous generation, reflecting strong demand and tight supply in the AI memory market. The company is trying to regain momentum after initially trailing SK Hynix, while both firms compete to supply Nvidia for its next-generation "Vera Rubin" AI platform.

Nvidia Supply Chain Highlights Competitive Divide

Industry reports indicate Nvidia plans to source most of its HBM4 supply from SK Hynix and Samsung, with SK Hynix expected to provide about 70% and Samsung roughly 30%, while Micron has struggled to meet the stricter technical requirements.

MU Price Action: Micron Technology shares were down 0.67% at $367.83 during premarket trading on Monday, according to Benzinga Pro data, after closing 6.74% lower on Friday.

[3]

Nvidia taps Samsung, SK Hynix as exclusive HBM4 suppliers for Vera Rubin - report By Investing.com

Investing.com-- Samsung Electronics (KS:005930) and SK Hynix (KS:000660) have been selected as the sole suppliers of sixth-generation high-bandwidth memory (HBM4) for Nvidia's (NASDAQ:NVDA) next flagship AI accelerator, Vera Rubin, the Korea Economic Daily reported on Monday, citing industry officials. The decision gives South Korea's two memory chipmakers a fresh advantage in the race to supply premium AI components, as demand for high-bandwidth memory continues to surge alongside advanced AI processors. According to the report, the move sidelines U.S. memory rival Micron Technology (NASDAQ:MU) from the upcoming product cycle.

Samsung Electronics and SK Hynix have been confirmed as the sole HBM4 suppliers for Nvidia's Vera Rubin AI accelerator, excluding Micron Technology from the flagship platform. SK Hynix will provide roughly 70 percent of the allocation while Samsung holds approximately 30 percent, marking a significant shift in the AI memory market as demand for high-bandwidth memory intensifies.

Samsung and SK Hynix Secure Exclusive HBM4 Supply Deal

Samsung Electronics and SK Hynix have been confirmed as the exclusive HBM4 suppliers for Nvidia's Vera Rubin AI accelerator, effectively sidelining Micron Technology from the flagship platform [1]. The Korea Economic Daily reported on March 8, citing industry sources, that the two South Korean chipmakers have secured the most lucrative segment of the AI memory market. The supplier split heavily favors SK Hynix with roughly 70 percent of Nvidia's HBM4 allocation, while Samsung holds approximately 30 percent [1]. An industry source told the Korea Economic Daily that "Micron isn't even being discussed as a Vera Rubin HBM4 supplier," highlighting the competitive divide in the supply chain [1].

Source: Benzinga

Technical Requirements Drive Supplier Selection

The selection of Samsung and SK Hynix as exclusive HBM4 suppliers reflects their ability to meet Nvidia's stringent technical requirements for the next-generation AI platform. Samsung passed Nvidia qualification tests for both 10 Gbps and 11 Gbps HBM4 variants and began limited shipments in February [1]. SK Hynix is currently optimizing its product for the 11 Gbps test and expects full-scale production this month. Nvidia required memory speeds above the 8 Gbps JEDEC industry standard for Vera Rubin, setting a high bar for potential suppliers [1]. Industry analysts attributed Micron Technology's exclusion to difficulties meeting speed, base die design, and thermal performance requirements, despite the company currently supplying HBM3E for Nvidia's existing platforms [1].

Vera Rubin Platform Specifications and Timeline

The Vera Rubin AI accelerator will incorporate 8 HBM4 stacks per GPU totaling 288 GB per GPU, representing a substantial leap in memory capacity for advanced AI processors [1]. The full Vera Rubin Superchip combines two GPUs for 576 GB of total memory, designed to handle increasingly complex AI workloads. The platform is slated for release in the second half of 2026, with Nvidia expected to formally unveil Vera Rubin at the GTC developer conference on March 16 [1]. While Micron is expected to supply HBM4 for mid-tier Rubin accelerators such as the Rubin CPX, it will not participate in the top-tier Vera Rubin supply chain [1].
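The capacity figures reported for Vera Rubin can be cross-checked with simple arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the per-stack figure is derived here on the assumption that all 8 stacks have equal capacity, which the report does not state.

```python
# Back-of-the-envelope check of the reported Vera Rubin memory figures.
# The three constants come from the report; the per-stack capacity is
# derived, assuming (not reported) that every stack has equal capacity.

STACKS_PER_GPU = 8       # HBM4 stacks per GPU, as reported
GB_PER_GPU = 288         # total HBM4 capacity per GPU, as reported
GPUS_PER_SUPERCHIP = 2   # GPUs in one Vera Rubin Superchip, as reported


def gb_per_stack(gb_per_gpu: float, stacks: int) -> float:
    """Capacity of one stack if capacity is split evenly across stacks."""
    return gb_per_gpu / stacks


def superchip_gb(gb_per_gpu: float, gpus: int) -> float:
    """Total HBM4 capacity across all GPUs in a Superchip."""
    return gb_per_gpu * gpus


if __name__ == "__main__":
    print(gb_per_stack(GB_PER_GPU, STACKS_PER_GPU))      # 36.0
    print(superchip_gb(GB_PER_GPU, GPUS_PER_SUPERCHIP))  # 576
```

Under that assumption each stack would hold 36 GB, and the two-GPU total matches the 576 GB quoted for the Superchip.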

Pricing Power Reflects AI Hardware Demand

The allocation marks a significant turnaround for Samsung after previous yield struggles and reinforces SK Hynix's position as Nvidia's primary memory partner [1]. Samsung has begun mass production of its HBM4 AI memory chips and is reportedly negotiating prices of about $700 per unit, roughly 20 percent to 30 percent higher than the previous generation [2]. This pricing power reflects strong demand and tight supply in the AI memory market as AI-driven demand continues to reshape the high-bandwidth memory landscape [2]. The decision gives South Korea's two memory chipmakers a fresh advantage in the race to supply premium AI components [3]. TrendForce noted Nvidia may eventually add all three suppliers to its HBM4 ecosystem, though the confirmed vendor list indicates Samsung and SK Hynix will dominate the initial and most profitable ramp [1].

Summarized by Navi
