TikTok scales back AI Overviews after feature mistakes celebrity for blueberries

3 Sources

[1]

TikTok's AI Overviews Probably Thinks This Story Is a Blueberry

TikTok's AI Overviews is singing the blues. The feature, which was in testing, was supposed to generate text summaries beneath video posts on the app. But due to numerous egregious inaccuracies in the AI-generated descriptions -- including one mistaking a celebrity content creator for blueberries -- TikTok has scaled back the test.

A TikTok spokesperson told Business Insider that the updated feature will now only identify products shown in a video, not offer the sometimes-bizarre summaries. A TikTok representative didn't immediately respond to a request for comment.

TikTok's AI Overviews feature was similar to the AI summaries Google uses in its search algorithm. It was supposed to explain what's happening in video posts and provide additional context. It succeeded only some of the time.

The Business Insider reporter documented firsthand experience with TikTok's AI Overviews, citing a collection of incorrect summaries. The feature described a video of TikTok personality Charli D'Amelio talking to the camera as "a collection of various blueberries with different toppings." And it called a video from the singer Shakira "a repetitive sequence of several distinct blue shapes appearing and moving across the screen."

Many TikTok users on Reddit were dissatisfied with the feature. One Reddit post shows a screenshot of a video featuring two ballroom dancers. The AI caption identified the visual as "a person repeatedly striking their head with a rubber chicken."

AI-generated overviews have come a long way since they first began appearing online. Just a few years ago, Google faced a similar set of accuracy problems when its algorithm suggested eating one rock per day and using glue to keep cheese on pizza.

TikTok isn't backing away from AI. The company recently rolled out a tool that converts an image to a video, and another that lets you control how much AI content appears on your For You Page. It has also addressed safety issues that come with implementing AI on its platform by releasing moderation tools.

[2]

TikTok rows back on AI video overviews in US after absurd errors

TikTok has rowed back on an AI feature which incorrectly summarised some videos on the platform, including claiming a celebrity was fruit. The company's 'AI overviews' recently began appearing beneath content on the platform to describe what a video was showing, or provide more context. While only rolled out to some users in the US and the Philippines, the feature's incorrect and bizarre AI-generated summaries of TikTok content - seen beneath videos of celebrities like platform star Charli D'Amelio - have been shared widely.

According to TikTok, its experimental summaries have been tweaked to only suggest products similar to those shown in videos. The changes were first reported by news outlet Business Insider.

Much like the AI Overviews at the top of most Google search results, TikTok's AI-generated overviews would attempt to sum up the contents of videos for some users when they clicked to see more of a video's caption. Some examples screenshotted by users and seen by the BBC showed videos on the platform being accurately described, but Business Insider also identified a number of "wildly inaccurate" AI overviews. This included one which saw a video of dancer Charli D'Amelio described as a "collection of various blueberries with different toppings," the publication said. It saw similarly vague, inaccurate and strange AI-generated summaries on other TikTok videos of celebrities and artists, including Shakira and Olivia Rodrigo. The feature will now only be used to surface information about items in videos, according to TikTok.

It comes as tech firms look to deploy more AI products on their platforms to boost user engagement. However, some such efforts have been met with user backlash, or mockery, when these tools go awry.

Posts reacting to TikTok's testing of AI overviews on its videos first began appearing in January. But it appears the summaries were made more widely available, with several users and creators highlighting AI-generated descriptions containing absurd mistakes in late April. A recent example shared on Reddit saw a performance by ballroom dancers Reagan and Juli To described in an AI overview on TikTok as "a person repeatedly striking their head with a rubber chicken". Other examples shared by TikTok users contained similarly strange descriptions. For instance, AI overviews for two separate videos, neither of which featured violence or tools, said they featured "a person repeatedly striking their head with a hammer".

According to TikTok, users were able to report and provide feedback about AI overviews. But this did not stop some from speculating as to whether the platform was "trolling" its users. "The new AI Overview is so bad it feels like it has to be a joke," wrote TikTok user and creator Brett Vanderbrook alongside his video. He showed a range of examples where TikTok's AI feature conjured up bizarre descriptions for what was happening in videos - such as a comedy skit described as someone "demonstrating a new, clever technique for cutting through water".

TikTok says it has identified the cause of AI overview errors and inconsistencies, without detailing what this was. But generative AI tools often make things up when responding to users, summarising or generating information, and errors can range from being hilarious to potentially harmful in nature.

Google was widely mocked in 2024 after its AI Overviews results told users to eat rocks and "glue pizza". Apple later faced criticism after an AI tool designed to summarise notifications created false headlines for the BBC News and the New York Times apps. The tech giant suspended the feature, saying it would improve and update it.

Since then AI development has continued, with firms claiming the tech has vastly improved in ability and accuracy, but so-called "hallucinations" persist. However, ChatGPT-maker OpenAI recently said it identified "goblin" and "gremlin" creeping into its systems' responses - a quirk it believes arose after a tool it trained to have a nerdy persona incentivised mentioning the creatures. False case law or citations appearing in court filings have meanwhile prompted warnings about AI use in legal settings, with AI errors also reportedly causing issues for some governments.

[3]

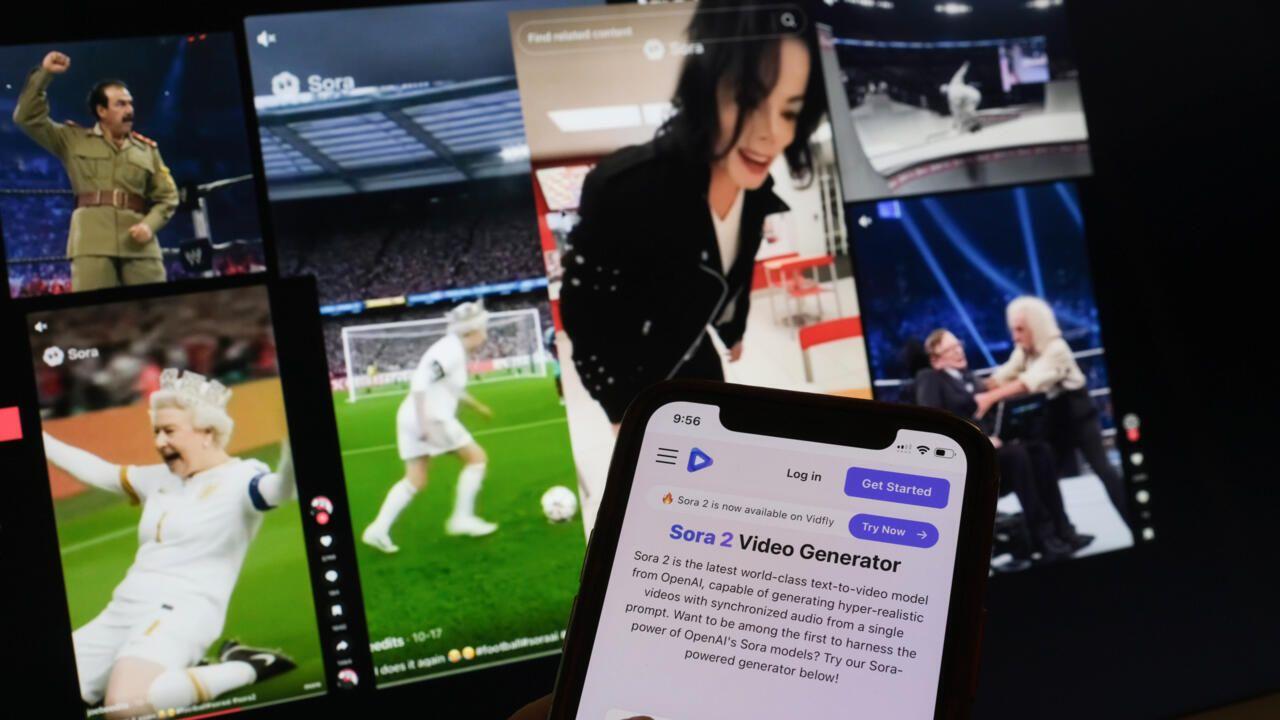

'Completely off the rails': TikTok is scaling back its AI summaries feature after it creates bizarre and inaccurate captions -- as if TikTok wasn't bad enough for misinformation already

* TikTok has been testing AI summaries for its videos
* The feature is throwing up wildly inaccurate text captions
* TikTok says it will now pull back on the technology

In the age of AI deepfakes, it's a good idea to treat everything you see on social media with a certain degree of skepticism, but the misinformation problem on TikTok has been made worse with some wildly inaccurate AI captions -- and it's bad enough that the video platform is now scaling back this captioning technology.

As reported by Business Insider, TikTok had been testing AI-powered text summaries for videos with a limited number of users. However, after numerous mistakes and hallucinations, the technology is going to be limited to identifying products in videos, rather than fully describing the video's contents.

Those mistakes and hallucinations included describing a video of celebrity Charli D'Amelio talking to the camera as showing a "collection of various blueberries with different toppings", and labeling a dog-training video as "a captivating display of intricate origami art, meticulously folded from a single sheet". You don't have to look far on social media to find further examples: there's what seems to be an image of two cats with the caption "a person demonstrating an impressive new robot arm with multiple dexterous fingers", for example.

'Garbage that has nothing to do with the video'

It's not clear exactly what's been going wrong for TikTok's AI summaries to get the wrong idea so regularly (though presumably this did work, some of the time). Recognizing the contents of images and videos is usually something AI can do pretty reliably.

That clearly hasn't been the experience of many TikTok users, however. One Redditor described the captions as "completely off the rails", while another said they were seeing "garbage that has nothing to do with the video" -- with the AI summary also serving to distract from the actual caption on the video.

Other examples online show a Kentucky Derby horse race video described as "showcasing an intricate piece of calligraphy", and a cookery video with an overhead shot of a gray pan getting the label "a single ball bouncing and rolling on a green surface" -- although these screenshots could also be faked, of course.

Even as AI is pushed into more and more of our apps and devices, hallucinations and errors remain a significant problem, which AI companies don't like admitting to. Whether it's a TikTok video or a legal document, if you're getting AI to summarize something, you'd be wise to run additional checks.

TikTok has pulled back its AI Overviews feature after the experimental tool generated wildly inaccurate video summaries. The AI-powered system mistook celebrity Charli D'Amelio for a collection of blueberries and described ballroom dancers as someone striking their head with a rubber chicken. The feature will now only identify products in videos rather than provide full text summaries.

TikTok AI Feature Produces Bizarre Errors

TikTok has significantly scaled back its experimental AI Overviews feature after the tool generated numerous inaccurate AI captions that left users baffled and concerned about misinformation. The AI-powered video summary feature, which was being tested with limited users in the US and Philippines, was designed to generate text summaries beneath video posts to explain content and provide additional context [1]. Instead, the TikTok AI produced descriptions that ranged from bizarre to completely disconnected from reality.

The most notorious example involved TikTok personality Charli D'Amelio, whose video of her talking to the camera was described by AI summaries as "a collection of various blueberries with different toppings" [2]. Singer Shakira's video was labeled as "a repetitive sequence of several distinct blue shapes appearing and moving across the screen," while a performance by ballroom dancers Reagan and Juli To was described as "a person repeatedly striking their head with a rubber chicken" [2]. Other examples shared across social media platforms included a dog-training video misidentified as "a captivating display of intricate origami art, meticulously folded from a single sheet" [3].

TikTok Scales Back AI Feature to Product Identification

Following widespread user complaints and mockery on Reddit and other social media platforms, TikTok confirmed to Business Insider that the feature has been modified. The AI Overviews will now only identify products shown in videos rather than attempting to provide comprehensive summaries of video content [1]. While TikTok says it has identified the cause of the errors and inconsistencies, the company has not detailed what specifically went wrong with the generative AI system [2].

The pivot to product recommendations represents a significant retreat from TikTok's original ambitions for the feature, which was meant to function similarly to Google's AI Overviews that appear at the top of search results. Users were able to report and provide feedback about the inaccurate captions, but the volume of errors prompted the platform to act quickly. One Reddit user described the captions as "completely off the rails," while another reported seeing "garbage that has nothing to do with the video" [3].

AI Hallucinations Persist Across Tech Industry

The TikTok incident highlights ongoing AI accuracy issues that continue to plague major tech companies despite claims of improved capabilities. AI hallucinations, in which systems generate false or nonsensical information, remain a persistent problem across the industry. Google faced similar embarrassment in 2024 when its AI Overviews suggested users eat rocks and use glue to keep cheese on pizza [1]. Apple also suspended an AI notification summary feature after it created false headlines for BBC News and the New York Times apps [2].

Recognizing content in images and videos is typically something AI can handle reliably, making TikTok's failures particularly notable [3]. The incident raises questions about whether tech firms are rushing to deploy AI products on their platforms to boost user engagement without adequate testing. For TikTok specifically, the AI accuracy issues compound existing concerns about misinformation on the platform, which already struggles with the spread of false content and AI deepfakes.

What This Means for AI Integration on Social Media

Despite this setback, TikTok isn't abandoning AI entirely. The company recently launched tools that convert images to videos and allow users to control how much AI content appears on their For You Page [1]. The platform has also released moderation tools to address safety concerns around AI implementation. However, the blueberries incident serves as a reminder that users should approach AI-generated content with skepticism, especially on social media platforms where misinformation can spread rapidly. As AI development continues, the challenge for companies will be balancing innovation with accuracy, ensuring that features designed to enhance user experience don't inadvertently create confusion or spread false information.

Summarized by Navi
