UK government reverses AI copyright stance after artists and creative industries push back

6 Sources

[1]

UK reverses course on AI copyright position after backlash

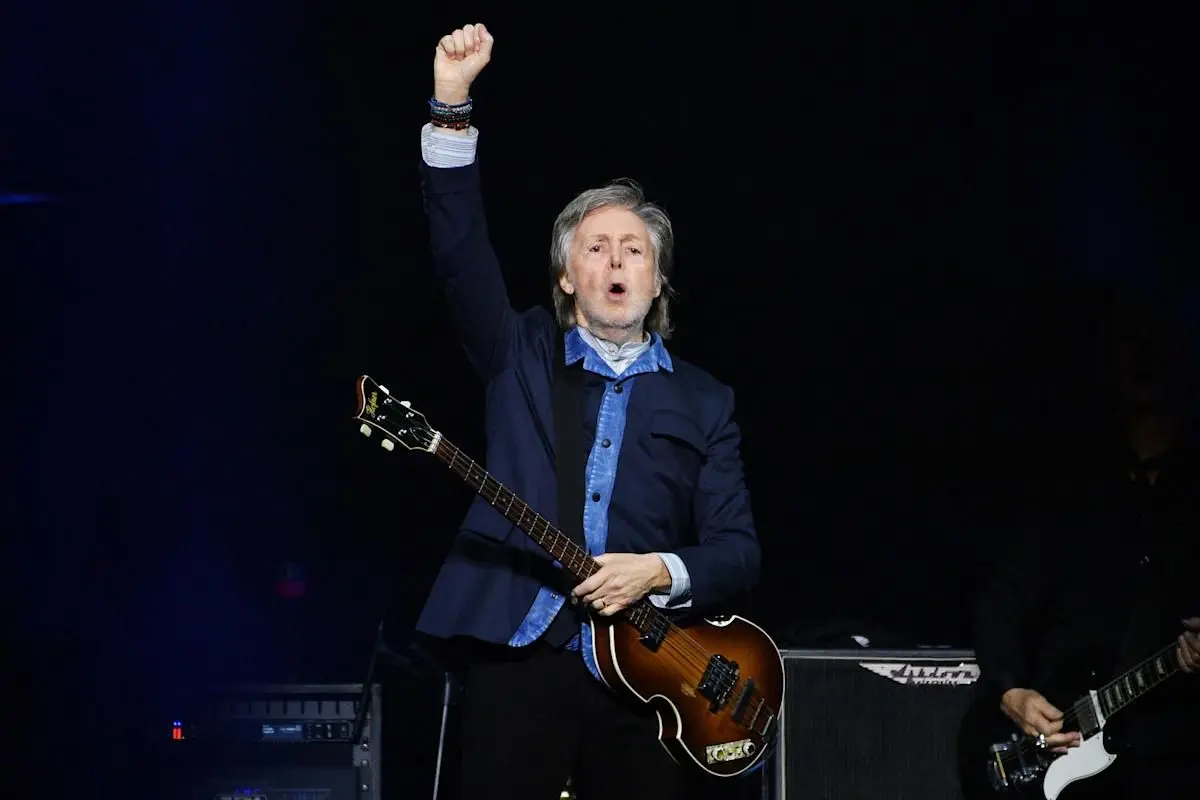

Chalk up a win for creative artists against AI companies. On Wednesday, the UK government abandoned its previous position on copyrighted works. It's currently working on a data bill that, if unaltered, would have allowed AI companies like Google and OpenAI to train models on copyrighted materials without consent. Artists and other copyright holders would only have been offered a mere opt-out clause. After significant backlash, the UK backed off from that position. "We have listened," Technology Secretary Liz Kendall said on Wednesday.

However, the government's new stance is, well, not a stance at all. It currently "no longer has a preferred option" about how to handle the issue. Still, backpedaling from its previous position is viewed as a win for artists. UK Music CEO Tom Kiehl described the decision as "a major victory," while promising to work with the government on the next steps.

Last year, some of Britain's highest-profile artists objected to the government's position. Sir Elton John and Dua Lipa were among those speaking out. Even Sir Paul McCartney weighed in, warning that the AI industry could "rip off" artists and lead to a "loss of creativity." "You get young guys, girls, coming up, and they write a beautiful song, and they don't own it, and they don't have anything to do with it," McCartney told the BBC in 2025. "And anyone who wants can just rip it off. The truth is, the money's going somewhere... somebody's getting paid."

The government will now weigh its options, taking "the time needed" to balance the wishes of artists and the tech industry. "We will not introduce reforms to copyright law until we are confident that they will meet our objectives for the economy and UK citizens," it wrote in a report. "This means protecting the UK's position as a creative powerhouse, while unlocking the extraordinary potential of AI to grow the economy and improve lives."
"Any reform must ensure that right holders can be fairly rewarded for the economic value their work creates, and that they are protected against unlawful and unfair use of their work. It must also ensure that AI developers can access high-quality content."

[2]

Government backtracks on AI and copyright after outcry

The UK government has backtracked on its position on copyright and AI, stating it must take time to "get this right".

Its original position - allowing AI companies to use copyrighted works to train their models with an opt-out option - received major backlash from the likes of Sir Elton John and Dua Lipa.

"We have listened," Technology Secretary Liz Kendall said on Wednesday, saying the government no longer favours that approach. However, the government's position is now unclear, saying it "no longer has a preferred option" for what to do next.

Kendall said the government had "engaged extensively" with people in the creative and AI industries. It is attempting to balance the interests of the two sectors by letting creatives "control how their work is used", while recognising AI models need to be trained on work such as writing, music and video.

In a report published on Wednesday, the government said there was "no consensus on how these objectives should be achieved". In a separate impact assessment, it recognised the contributions both the creative sector and the AI industry make to the UK economy. The assessment said UK culture is a "world-leading national asset", while the AI industry is growing "23 times faster than the rest of the economy".

The technology secretary's announcement followed a consultation on the issue, which found the government's initial plan was overwhelmingly rejected by the creative sector. But there was no firm conclusion on what happens next, with the government saying it would not reform copyright laws "until we are confident that they will meet our objectives for the economy and UK citizens."

Mandy Hill, president of the Publishers Association, said the backtrack was a victory "over the self-interest of a handful of large corporations". However, Hill said the government has not entirely ruled out allowing tech companies to use copyrighted content to train AI models without a licence. "The existing law is clear," she added. "Copyright material cannot be used for AI development and training without permission."

Antony Walker, deputy chief executive of techUK, said getting the balance right is critical. "The UK has set its sights on leading the G7 in AI adoption, but that requires a clear and enabling framework for AI innovation," he said. "With international competitors moving ahead, the UK cannot afford for this to remain unresolved."

The issue of AI and copyright continues to be controversial. Last year, some of the highest-profile British artists - along with peers in the House of Lords - wanted an amendment to the government's Data (Use and Access) Bill. It would have forced tech companies to declare their use of copyright material when training AI tools. Without it, it was argued, tech firms would be given free rein to help themselves to UK content and train their AI products to mimic it - putting human artists out of work.

Sir Elton, in an interview with the BBC, compared it to "committing theft, thievery on a high scale". However, in June last year, the government refused the amendment and the wide-ranging bill was passed.

[3]

UK Creatives Score Victory Against AI Companies in Training Dispute

The U.K. government has stepped back from proposed copyright reforms that would have allowed AI companies to use copyrighted material without permission, marking a shift after strong opposition from the creative sector. Ministers confirmed that the opt-out model "is no longer the government's preferred way forward".

The previous approach -- under which rights holders would have needed to actively exclude their work -- had drawn criticism from photography organizations and prominent U.K. artists, including Sir Elton John and Sir Paul McCartney, who described it as "legalizing theft".

"We have listened. We have engaged extensively with creatives, AI firms, industry bodies, unions, academics and AI adopters, and that engagement has shaped our approach. This is why we can confirm today that the government no longer has a preferred option," says Technology Secretary Liz Kendall.

The announcement follows a consultation in which creative organizations widely rejected the original proposal. Industry groups described the shift as a significant moment.

While the decision this week is seen as good news for creators, there is still a possible "science and research exemption", which some fear could permit AI developers to train systems on protected works before negotiating licenses at a later stage. Critics argue this would weaken the position of creators in any negotiations. Owen Meredith of the News Media Association tells The Times of London the government must dismiss such options, particularly those linked to research or commercial use.

"The government's dropping of its hugely unpopular 'preferred option' is certainly welcome. But it is still explicitly considering weakening copyright law to benefit AI companies," Ed Newton-Rex, chief executive of Fairly Trained, tells The Times. "Virtually everything is still on the table, including the opt-out ... It's just kicking the can down the road."

The government says it is trying to balance competing priorities.
It recognizes the creative sector as a "world-leading national asset", while noting that the AI industry is expanding rapidly. Kendall says reforms will not proceed "until we are confident that they will meet our objectives for the economy and UK citizens". Next steps include work on labeling AI-generated content, improving licensing systems, and addressing risks such as deepfakes. For now, the broader framework for how AI companies can use copyrighted material remains undecided.

[4]

Actors, musicians and writers welcome UK U-turn on AI copyright

Technology secretary says government no longer prefers plan to allow tech firms to take copyrighted work

Actors, musicians and writers have welcomed the UK government's decision to backtrack on plans to let AI firms use copyright-protected work without permission.

Technology secretary Liz Kendall said it no longer had a "preferred option" on copyright reform, having previously supported a proposal allowing tech companies to take copyrighted work - unless rights holders opted out of the process.

"We have listened," said Kendall on Wednesday, "we have engaged extensively with creatives, AI firms, industry bodies, unions, academics and AI adopters, and that engagement has shaped our approach. This is why we can confirm today that the government no longer has a preferred option."

The proposal had triggered a backlash from Sir Elton John, who called the government "absolute losers" over the plans. Dua Lipa, Abba's Björn Ulvaeus, the actor Julianne Moore and the Radiohead singer Thom Yorke are among thousands of artists who have voiced their concerns over the potential legal overhaul.

Creative industry organisations welcomed the new government stance. Equity, the actors' trade union, said the move was "recognition that selling out the UK's creative industries to benefit US tech companies would've been an act of national self-sabotage". UK Music, a trade body representing the UK music industry including the Musicians' Union, said it was "delighted" but urged the government to rule out the proposal altogether. The Society of Authors said the announcement was a "hard-won" moment for writers and creators, while the News Media Association - whose members include the Guardian - said giving away the UK's "goldmine" of creative content was not the way to drive economic growth.
Intellectual property has become a key battleground in the development of AI because the technology requires vast amounts of data, including copyright-protected work taken from the open web, to develop tools such as chatbots and image generators.

Ed Newton-Rex, a composer and campaigner for protecting artists' copyright, said the creative industries should not celebrate too soon. "Virtually everything is still on the table, including the opt-out," he said. "It's just kicking the can down the road."

Kendall's announcement came as the government published an update on its proposals, including an economic impact assessment - although it did not provide a "monetised" economic cost for each of its four copyright proposals. Along with the former preferred option, a government consultation is considering: leaving the situation unchanged; forcing AI companies to seek licences for using copyrighted work; or allowing AI firms to use copyrighted work with no opt-out for creative companies and individuals.

Beeban Kidron, a cross-bench peer who led opposition to the proposals in the House of Lords, said there was "nothing but political will" standing in the way of letting artists see how and where their work is being used by AI firms - which would pave the way for them to be compensated.

Kendall also announced: the establishment of a taskforce to examine proposals to label AI content; a consultation on protecting someone's likeness from being used in deepfakes; a working group to support smaller creative organisations in licensing their content; and a review of how creators can monitor use of their work by AI firms.

Antony Walker, deputy chief executive at techUK, a tech industry trade body, said the announcement must be used as an opportunity to "reset and find a way forward" on copyright.
The economic impact assessments said leaving copyright law unchanged could benefit creative firms by growing the market for licensing content to AI firms, but could prevent the development of cutting-edge AI models in the UK. Requiring licensing could result in some AI tools being withdrawn from the UK, the government added, although it could be a "net positive" for the creative sector. Waiving copyright for AI firms could put at risk a "globally competitive" creative sector but would lower costs for tech firms, it said, while the controversial opt-out option could affect both sides if it is not implemented efficiently.

[5]

AI and copyright - UK government finally backs off 'free for all', but sits on its hands and offers no solutions

After months of controversy, the British Government has backed away from its intent to change copyright law and opt creatives into AIs being trained on their work by default. The about-turn has been welcomed as a win by Britain's $165 billion creative sectors - and it is, but with an implied intention to still reform copyright rules in the future, a policy to which the government has previously committed.

The decision came in the wake of the Government's 2025 public consultation, in which only three percent of over 11,500 respondents supported the proposal, while 95% expressed a preference to either maintain and enforce copyright protections, or strengthen them, forcing AI vendors to license all data. Those findings were sneaked out by Whitehall just before Christmas, in an apparent damage-limitation exercise.

In a detailed report published, as promised, yesterday (18 March), the UK Government says:

In light of the strong views from the consultation, the gaps in evidence, and the rapidly evolving AI sector and international context, a broad copyright exception with opt-out is no longer the government's preferred way forward. We propose to gather further evidence on how copyright laws are impacting the development and deployment of AI across the economy. We will consider and engage stakeholders on other potential policy approaches. We will also continue to monitor developments in technology, litigation, international approaches, and the licensing market.

However, the better part of wisdom is what is left unsaid, and the UK Government has not stated that it will heed advice, from the House of Lords and others, to enforce existing copyright laws, under which some AI vendors' actions are illegal.
In its place, a new evidence-based, listening approach is declared, which is qualified good news, as it will force vendors to be specific about where their inability to scrape copyrighted data without consent, credit, or payment to the creator is inhibiting their businesses. The report explains:

We have limited and uncertain evidence on the impact of copyright on the development and deployment of AI in the UK. We must continue to build this evidence base. The economic impact assessment on copyright and AI considers the available evidence in more detail. There is uncertainty about the extent to which reforms to copyright would secure significant investment in AI development in the UK, and further uncertainty about the benefits to the security and prosperity of the UK economy from that greater investment. It is also unclear how copyright impacts the deployment of AI, particularly as the use of autonomous agents becomes more widespread. Finally, there is some ongoing uncertainty around how a broad exception would impact licensing markets, given most training is likely to occur in jurisdictions with the most permissive regimes, and such regimes are being litigated.

In short, 'We don't know what to do': the policy equivalent of typing 'All work and no play makes Jack a dull boy' hundreds of times.

However, the report misrepresents one specific aspect of the UK situation. It says:

The majority of responses reflected views of right holders and the creative industries. These included a significant number of responses from individuals, including individual creators and performers. Many of these responses either repeated or built upon a set of template letters, or template survey responses, that were created and distributed by interested organisations or individuals. A significant, but smaller, number of responses reflected views of the technology sector, including AI developers.
By implying that the protest was, in some way, entirely a coordinated campaign that pitched creatives against technologists and the AI sector - an attitude reflected, outrageously, by the Intellectual Property Office earlier this year - the Government is acting in bad faith.

Trade organization UKAI, which represents the industry the British Government claimed to be supporting with its proposal to opt creators into AI training, also rejected the plan. Its coruscating Spring 2025 report on the strategy (see diginomica, passim) called the government's preferred option "misguided", "damaging", and "divisive". So, not only did UK artists, creatives, and media organizations not want it, Britain's AI industry didn't support it either.

But for whatever reason, the Government ignored that report and, despite being asked about it on three occasions by diginomica last year, the Department for Science, Innovation, and Technology has always refused to comment on it.

So, what solutions does the 125-page document offer? Almost none, beyond commitments to gather evidence, consider impacts on both the creative sectors and the burgeoning AI industry, seek consultation, and work towards viable long-term answers. It also points to significant gaps in areas such as transparency, licensing, and enforcement. In themselves, those are positive developments, but after years of debate, consultation, multiple inquiries, research programs, working groups, and reports, they constitute slim pickings as a policy response. The government should regulate, not step back. But the report says:

We must take the time needed to get this right. We will not introduce reforms to copyright law until we are confident that they will meet our objectives for the economy and UK citizens. This means protecting the UK's position as a creative powerhouse, while unlocking the extraordinary potential of AI to grow the economy and improve lives.

In the meantime, protests have been growing.
Earlier this week, a paperback called 'Don't Steal This Book' was released, which contains only the names of over 10,000 authors whose livelihoods depend on copyright. And in February 2025, 'Is This What We Want?', an album of recordings of empty recording studios, was backed by over 1,000 artists, including Sir Paul McCartney, Damon Albarn, and Kate Bush.

In response, then, the Government has also offered a blank. However, the report does note the rising number of lawsuits worldwide for copyright theft and the harvesting of pirated content - especially in America, where copyright rules are less stringent.

In the US, artists have also been protesting AI vendors' approach, so any claim that the UK's copyright regime is out of step with a more permissive and accepting America is clearly not true. For example, last year in the US, the Human Artistry Campaign saw over seven hundred artists back a project called Stealing Isn't Innovation. "Big Tech is trying to change the law so they can keep stealing American artistry to build their AI businesses - without authorisation and without paying the people who did the work," said the campaign. "That is wrong; it's un-American, and it is theft on a grand scale."

That said, the new decision to walk back a deeply unpopular proposal has been welcomed by the House of Lords' Communications and Digital Committee, whose own Inquiry into AI and copyright reported earlier this month. Chair Baroness Keeley said:

The Government's publications today are a welcome step towards a more evidence-based approach on AI and copyright. We are encouraged that the government recognizes the need to ensure creators are paid fairly, and to support individuals and smaller organizations as they navigate new licensing opportunities. We also welcome the commitments on technical standards, the labelling of AI-generated content and addressing the harms of unauthorised digital replicas.
We understand that this report does not represent the government's final position on AI and copyright, but it is vital that we move quickly towards a stable framework that makes clear that strong copyright protection, meaningful transparency obligations and licensing are the way forward for the UK. The government's update makes clear how much work remains to be done to achieve this.

She added:

Our recent report on AI, copyright and the creative industries was clear that the government should rule out any weakening of copyright law that would remove incentives to license copyrighted works for AI training, and that a commercial research exception was neither necessary nor desirable. We also called for mandatory transparency obligations to underpin the emerging licensing market. We support the government's proposals for future work on transparency and other areas. The coming months will be crucial in helping us to reach final decisions that give confidence to rightsholders and responsible AI developers alike. We will expect ongoing dialogue with ministers to ensure continued progress towards this goal.

The government has yet to publish its detailed response to the House of Lords report, which it is obliged to do. At this stage, it is unclear whether it will merely repeat its 'listening, evidence, consultation' approach, or offer more detailed point-by-point disclosure of its plans.

Others have welcomed the new report too, albeit cautiously. Composer, technologist and artist rights campaigner Ed Newton-Rex, founder and CEO of ethical sourcing venture Fairly Trained, said:

[The Government proposed] a change to copyright law that would have been extremely harmful, and has now backed away from that proposal. That's certainly worth celebrating. But there is lots that remains uncertain. The government has said it plans to reform copyright law, and nothing in the report suggests that has changed.
In fact, it has explicitly left weakening copyright law on the table - so people on the side of creatives should not assume we are out of the woods just yet.

Meanwhile Benjamin Woollams, Founder and CEO of another organisation, TrueRights, said:

What this report really shows is that we're past the point of debating the principle. Most people now accept that creative work shouldn't be used to train AI systems without permission or payment. The issue is that still isn't how things are working in practice. There's a lot of focus on licensing as the solution, but you can't have a functioning market without visibility. At the moment, creators don't know where their work is being used, which makes control or compensation almost impossible.

And Tom West, CEO of Publishers' Licensing Services (PLS), said: "We welcome that the Government has listened to the strong response it received from across the UK's creative industries to its consultation and has stepped back from its preferred option of a copyright exception with an opt out and is to review the transparency of AI inputs - which would further boost licensing. While we await further clarity from the government on the long-term direction of its copyright policy, PLS will continue to serve our publishers and work with our partners on market-based, industry-backed AI licensing solutions."

At the annual London Book Fair last week, PLS launched the first stage of a collective licensing solution designed to support the use of published content in AI.

Earlier this month in an interview with the BBC's Newsnight current affairs program, American musician David Byrne said:

AI is basically sucking up all human knowledge and throwing it back at us - for a price.

Asked if he believed, as others have suggested, that this constitutes "the biggest theft in history", his answer was simple: "Yes." But in some ways, I would argue it is worse than that.
AI companies understand that more and more AI-enabled Web searches are 'zero click': information is consumed solely within the AI, and visitors do not click out to the original sources. In time, therefore, more and more of those sources - websites, archives, libraries, blogs, channels, research platforms, image banks, and more - will wither and die on the vine, meaning that all their scraped data, in many cases obtained without consent, will only exist in the AIs' weights. Eventually, AI vendors will not only have encircled the world's creative endeavours as though they were commons, therefore, but they will also be the sole remaining stewards. They are doubtless aware of that, and yet have still not licensed much of that data - despite eight vendors being in the top ten most valuable companies on Earth. To quote the late David Bowie, "sit on your hands on the bus of survivors": the UK Government is doing just that. As Newton-Rex - who worked for many years within the AI sector - noted at the Lords Inquiry, there is no shortage of licensing solutions on the table, merely an absence of will among vendors to engage with them.

[6]

Plans to let AI firms use music without permission abandoned by government

The decision was welcomed as a "first step" which avoided the "worst outcome".

The UK government has scrapped plans to introduce copyright law exceptions which would allow AI firms to use the work of songwriters without their permission.

The government had been in favour of allowing AI developers to train their machines on copyrighted works, with rightsholders having an opt-out option. However, that approach was "overwhelmingly rejected by the vast majority of the creative industries", Liz Kendall, the minister for innovation and technology, said on Wednesday. Due to this, she announced, "the government no longer has a preferred option".

The decision was welcomed as a "first step" which avoided the "worst outcome" by The Ivors Academy, which represents songwriters and composers. The government was urged to go further by UK Music and other creative bodies.

"We urge them to go further and rule out resurrecting this plan throughout their period in office," UK Music boss Tom Kiehl said. "The 220,000 people in our sector, which generates £8bn for the UK economy, should be entitled to work and earn a living without the constant fear that the fruits of their labour could effectively be taken by AI firms without payment or permission."

The government now plans to launch a consultation in the summer on how to address the harm caused by unpermitted "digital replicas", while protecting legitimate innovation.

The plans had caused widespread outrage within the creative industries. Producer Giles Martin described the now-abandoned plans to allow AI firms to use artists' work without permission, unless creators opt out, as like allowing criminals to burgle houses unless specifically told not to.

Sir Elton John and Simon Cowell were among the celebrities who had backed a campaign opposing the government's previous proposals.
More than 1,000 artists and musicians, including Kate Bush, Damon Albarn, Sam Fender and Annie Lennox, previously recorded a silent album in protest at proposed changes. Concerns about unfair mining of material by AI firms have grown across different sectors in recent years. In February, Sky News announced it had formed the Standards for Publisher Usage Rights coalition (SPUR) with other major news organisations to develop industry standards for AI's fair use of their material. The alliance said while the rise of AI brings opportunities for publishers and their audiences, it "also raises urgent questions about fairness, consent, attribution, transparency and trust". "The lack of transparency about how AI answers are created risks eroding public trust in both the news and the technologies used to access it," the group added.

The UK government has abandoned its controversial plan to allow AI companies to train models on copyrighted works without permission. After facing massive backlash from artists including Sir Elton John and Dua Lipa, Technology Secretary Liz Kendall confirmed the opt-out model is no longer preferred. However, the government now has no clear position on how to balance creative sector rights with AI innovation needs.

UK Government Policy Reversal on AI Copyright

The UK government has stepped back from its controversial position on AI copyright, abandoning plans that would have allowed AI companies to train models on copyrighted material without obtaining consent from rights holders [1]. Technology Secretary Liz Kendall announced on Wednesday that the government "no longer has a preferred option" on how to handle the contentious issue, marking a significant policy reversal after overwhelming backlash from the creative industries [2].

The original proposal would have permitted companies like Google and OpenAI to use copyrighted material without permission, offering creators only an opt-out to protect their work. It drew fierce opposition in the government's 2025 consultation: of more than 11,500 respondents, 95% preferred either to maintain existing copyright protections or to strengthen them by forcing AI vendors to license all data, while only three percent supported the government's initial proposal [5].

Creative Sector Celebrates Major Victory

The decision represents a significant win for the creative industries, with UK Music CEO Tom Kiehl describing it as "a major victory" [1]. High-profile British artists had vocally opposed the original plans, with Sir Elton John calling the government "absolute losers" [4] and comparing the proposal to "committing theft, thievery on a high scale" [2]. Sir Paul McCartney, Dua Lipa, Thom Yorke, and Julianne Moore were among thousands who voiced concerns about the potential legal overhaul [1][4].

Equity, the actors' trade union, stated the move was "recognition that selling out the UK's creative industries to benefit US tech companies would've been an act of national self-sabotage" [4]. Mandy Hill, president of the Publishers Association, called the backtrack a victory "over the self-interest of a handful of large corporations," while emphasizing that under existing law, copyright material cannot be used for AI development and training without permission [2].

Balancing AI Innovation and Intellectual Property Rights

The UK government now faces the complex challenge of balancing AI innovation with protecting intellectual property rights. In a separate impact assessment published alongside its report, the government acknowledged that UK culture is a "world-leading national asset," while the AI industry is growing "23 times faster than the rest of the economy" [2]. The government stated it will not introduce reforms to copyright law "until we are confident that they will meet our objectives for the economy and UK citizens" [1].

However, critics warn that the battle isn't over. Ed Newton-Rex, chief executive of Fairly Trained, cautioned that "virtually everything is still on the table, including the opt-out," describing the announcement as "just kicking the can down the road" [3]. Concerns remain about a possible "science and research exemption" that could permit AI developers to train systems on protected works before negotiating licenses later, potentially weakening creators' negotiating positions [3].

What Happens Next for Training Data and Transparency

Alongside the former preferred opt-out, the government consultation is still considering multiple options: leaving the situation unchanged, forcing AI companies to seek licenses for using copyrighted work, or allowing AI firms to use copyrighted work with no opt-out [4]. Kendall announced several next steps, including establishing a taskforce to examine proposals for labeling AI-generated content, a consultation on protecting individuals' likeness from being used in deepfakes, a working group to support smaller creative organizations in licensing their content, and a review of how creators can monitor use of their work by AI firms [4].

Antony Walker, deputy chief executive of techUK, emphasized that getting the balance right is critical, noting that "the UK has set its sights on leading the G7 in AI adoption, but that requires a clear and enabling framework for AI innovation" [2]. Cross-bench peer Beeban Kidron, who led opposition to the proposals in the House of Lords, stated there is "nothing but political will" standing in the way of letting artists see how and where their work is being used by AI firms, which would pave the way for compensation [4]. The broader framework for how AI companies can access training data remains undecided, with implications for both the UK's $165 billion creative sectors [5] and its rapidly expanding AI industry.

Summarized by Navi
