Cerebras Systems raises $5.5B in blockbuster IPO after nearly burning out with $8M monthly spend

23 Sources

[1]

$60B AI chip darling Cerebras almost died early on, burning $8M a month | TechCrunch

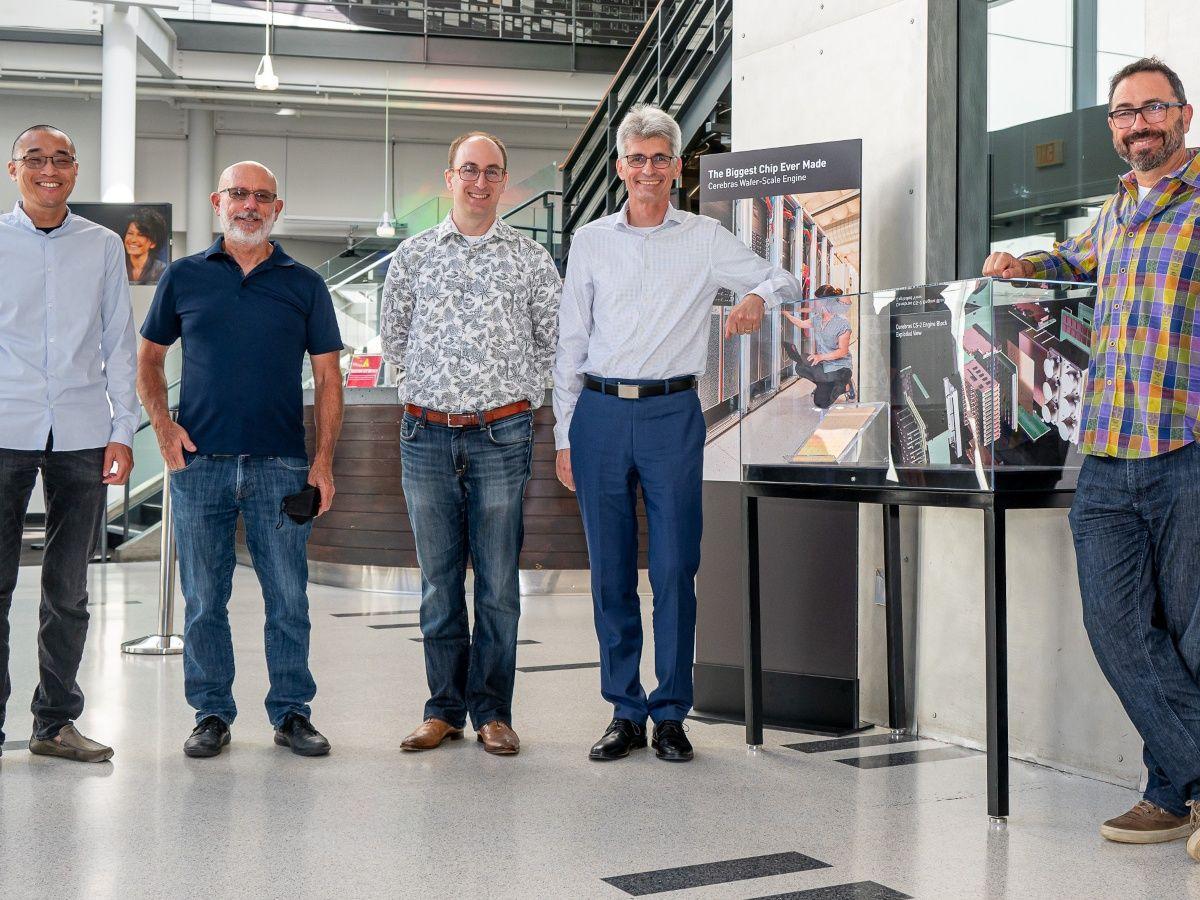

Today, Cerebras Systems is a public company that sells AI chips for inference to giants like OpenAI and AWS. It held a blockbuster IPO on Thursday, with both of its co-founders billionaires, and ended the week worth about $60 billion. But in 2019, when it was three years old, it came dangerously close to failure - incinerating a shocking amount of money. It was trying to solve a technical problem no one in the semiconductor industry thought could be done. "We were spending about $8 million a month," founder CEO Andrew Feldman told TechCrunch of that period. "At this point, we had incinerated nearly $200 million trying to solve one technical problem." Every few weeks, Feldman was forced to make the painful walk of shame to the board meeting to report another failure and more money burned. But he had no choice. Without a solution, Cerebras was dead anyway. It was founded with an idea that was simple on paper. The microprocessor industry had spent its entire 50+ years making CPUs faster and cheaper by cramming more transistors onto a silicon wafer and dicing wafers into ever tinier pieces. But AI required so much compute power, many chips had to be strung together and then forced to communicate with each other. Cerebras' founders believed turning a whole, even bigger wafer into one giant, powerful chip, would work faster. The problem was, no one had ever successfully done this before, for any reason, AI or not. Orchestrating that many microscopic electronic components onto a larger, but still thin, surface introduced compounding engineering problems. Once Cerebras crossed the first threshold of designing the mega chip and then manufacturing it with TSMC, the team hit the real roadblock. They couldn't solve "packaging." This involves everything after manufacturing the silicon itself: adhering it to a motherboard, getting power to it, dealing with heating and cooling as well as the pipes that would deliver and return data, Feldman said. 
Cerebras' chips "were 58 times larger. We were using 40 times as much power as anybody had ever used," he said. There were no premade heat sinks. No vendors. No manufacturing partners. The brightest minds in microprocessor engineering had tried for decades to build such big yet denser chips, and failed. The Cerebras team was left with trial and error in which "we destroyed an enormous number of chips" and an enormous amount of cash. But without functional packaging, the chip was useless. After exhaustive analysis of each failure, the team finally solved enough problems: how to cool it and move data around. In one instance, they had to invent their own machine that could bolt in 40 screws simultaneously to secure the wafer to a board without cracking it. Feldman still remembers the day in July 2019 when it all, miraculously, worked. They installed the packaged chip into a computer, turned it on and the entire founding team (pictured below) "just stood in the lab and stared at it," he said. "Watching a computer run is about as exciting as watching paint dry. But there we were watching lights flashing on the computer, stunned that we'd solved this." "That was one of the greatest moments of my life," he said. That's significant, because this same founding team had previously built and sold a pioneering cloud server startup, SeaMicro, to AMD for $334 million in 2012. The day the chip finally worked was also about two years after OpenAI had talked to Cerebras about acquiring it, which Feldman confirmed to TechCrunch occurred as the publicly revealed emails said it did. Those talks fell through amid growing squabbling among the OpenAI founders, several of whom are angel investors in Cerebras. Today OpenAI is a customer and a partner, having loaned Cerebras $1 billion secured by warrants. Those warrants conditionally grant OpenAI about 33 million shares of Cerebras' stock, the S-1 discloses. 
(33 million shares are worth over $9 billion at Friday's closing price of $279.) Interestingly, Cerebras also agreed to not sell its wares to specific OpenAI competitors as part of that loan deal. Feldman wouldn't confirm the obvious company this involves: Anthropic. He did, however, say that the restriction is temporary. "It's limited in time, and it was designed to make sure that we could get OpenAI the capacity," he said. The truth is, Cerebras hasn't yet grown big enough to handle multiple fast-growing model makers anyway. He likened selling AI compute capacity to an all-you-can-eat buffet. Instead of trying to stuff itself on all potential customers, "We're going to work with part of the buffet only, and we're going to get comfortable with that, before we attack the rest," he said.

[2]

Cerebras raises $5.5B, kicking off 2026's IPO season with a bang | TechCrunch

Cerebras raised $5.5 billion in its IPO on Thursday, pricing shares at $185 Wednesday evening, way higher than its range ($115 to $125, later raised to $150-$160), even as it increased the size of the offering to 30 million shares. And pre-market trading indicates that shares are going to open with a giant pop, as retail investors bid up the price to grab them. (We'll update this story after trading begins.) Even at the IPO price, the company enters its first day of trading at a fully-diluted valuation of $56.4 billion (meaning, accounting for all shares). Co-founder CEO Andrew Feldman's stake at $185/share is worth nearly $1.9 billion, while co-founder CTO Sean Lie's stake weighs in at about $1 billion. A year ago, it looked like this day would never happen for Cerebras. The Nvidia competitor, which designed its giant chip from scratch, purpose-built for AI, had first filed to go public in 2024. But concerns about a large investment from Abu Dhabi-based Group 42 mired the IPO in an endless review from the Committee on Foreign Investment in the United States (CFIUS). Investors were also cool about its financials: Group 42 accounted for almost all of Cerebras's revenues. So those IPO plans were shelved. IPO ambitions reappeared in earnest in April when the company was able to report about double the revenues: $510 million in 2025 (up 76% year-over-year), and from a handful of customers. It also reported a massive swing to a profit -- to $237.8 million in net income -- compared to losing nearly half a billion the year before. Investors began salivating. Cerebras has now come out as a major contender for supplying chips for inference -- the ongoing compute processing required for models to answer prompts -- and now counts OpenAI (in a complicated circular-deal relationship), G42, the UAE's Mohamed bin Zayed University of Artificial Intelligence and Amazon Web Services as customers.
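
As a quick sanity check on the pricing figures above, the short Python sketch below multiplies the quoted share price by the quoted offering size and backs out the share count implied by the fully diluted valuation. The variable names are illustrative, and the implied share count is a derived estimate from the article's rounded numbers, not a figure taken from the S-1.

```python
# Figures as quoted in the article; the derived values are estimates only.
IPO_PRICE_USD = 185.0                 # price per share
SHARES_OFFERED = 30_000_000           # upsized offering
FULLY_DILUTED_VALUATION_USD = 56.4e9  # valuation at the IPO price

# Gross proceeds before fees and any underwriter option.
gross_proceeds = IPO_PRICE_USD * SHARES_OFFERED

# Share count implied by the valuation (assumption: valuation = price * shares).
implied_fd_shares = FULLY_DILUTED_VALUATION_USD / IPO_PRICE_USD

# Portion of the implied fully diluted count that was sold in the offering.
offered_fraction = SHARES_OFFERED / implied_fd_shares

print(f"Gross proceeds: ${gross_proceeds / 1e9:.2f}B")
print(f"Implied fully diluted shares: {implied_fd_shares / 1e6:.1f}M")
print(f"Offered shares vs. fully diluted count: {offered_fraction:.1%}")
```

The gross figure works out to $5.55 billion, which matches the more precise number other outlets report; the "$5.5 billion" in the headline is the same amount rounded.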

[3]

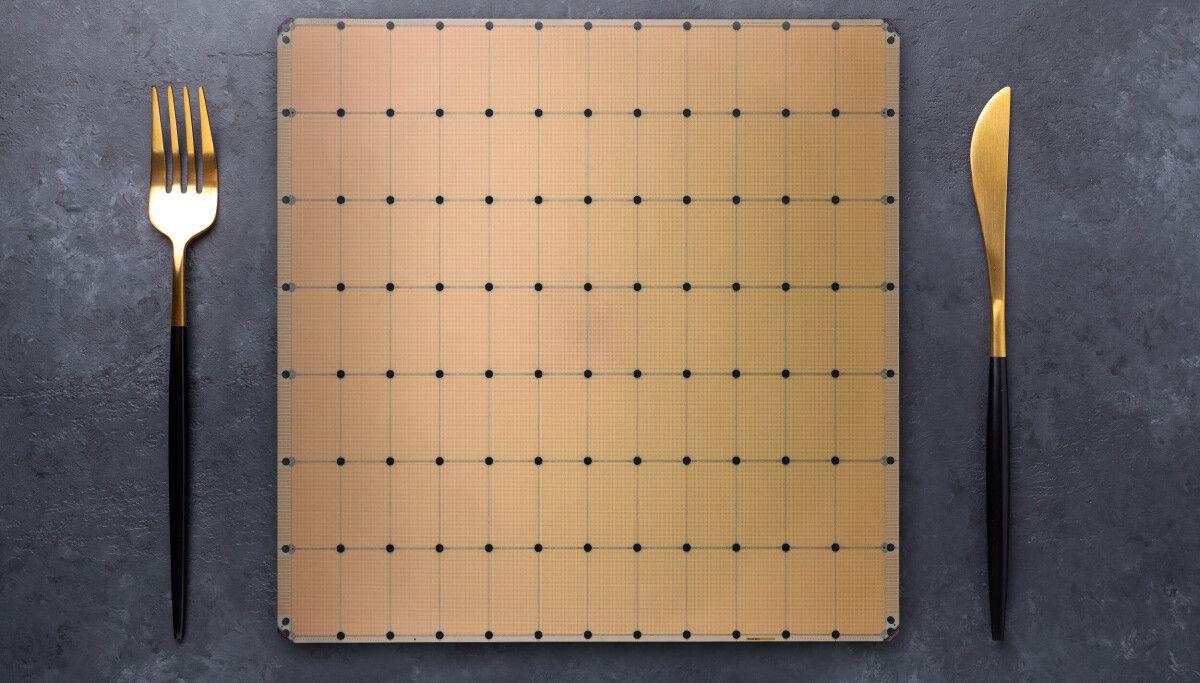

Cerebras risked it all on dinner plate-sized AI accelerators a decade ago. Today it's worth $66 billion

Cerebras Systems has done what many chip startups aspire to but few ever achieve. On Thursday, the company and long-time Nvidia rival raised $5.55 billion in an initial public offering (IPO), making the company worth more than $66 billion on its first day of trading. The milestone didn't happen overnight. It took more than a decade, a radically different approach to chipmaking, and two separate attempts at an IPO to pull off. Founded in 2015 by former SeaMicro head Andrew Feldman, Cerebras Systems' first chips looked nothing like GPUs or AI accelerators of the time. At the time, most high-end GPUs used dies measuring roughly 800 square mm that'd been cut from a larger wafer. Eight or more of these GPUs would typically be stitched together by high-speed interconnects, like NVLink, which allowed them to pool their resources and behave like one big accelerator. Rather than cutting up a wafer into smaller chips just to reconnect them again, Cerebras figured why not etch all that compute into a wafer-sized chip? And so the Wafer-Scale Engine (WSE), a giant chip measuring 46,225 square mm -- about the size of a dinner plate -- was born. Cerebras' first chips weren't just bigger; they were purpose-built for AI training and sported a novel compute engine designed to speed up the highly sparse matrix multiply-accumulate operations common in deep learning. This hardware sparsity took advantage of the fact that large portions of a neural network's parameters ultimately end up being zeros, allowing Cerebras to boost the effective computational output of its first-gen WSE accelerators from 2.65 16-bit petaFLOPS to 26.5. Nvidia added support for sparsity in its Ampere generation a year later, but it only worked for a specific ratio (2:4), limiting its effectiveness to select use cases. To train a model, up to 16 of these chips could be ganged together over a high-speed interconnect. 
This was kind of important too, because unlike GPUs, which stored model weights in HBM or GDDR memory, Cerebras' chips were almost entirely reliant on on-chip SRAM. Although SRAM is insanely fast, which is why it's used for caches in basically every modern processor, it's not particularly space efficient. While Cerebras' first wafer-scale accelerator could theoretically reach 9 petabytes per second of memory bandwidth, it was limited to just 18 GB of capacity at a time when Nvidia was already at 32 GB per GPU and about to make the leap to 40 GB or even 80 GB per chip. Still, the approach was performant enough that for its second-generation wafer-scale accelerator, launched in 2021, Cerebras doubled down on the architecture. While the WSE-2 wasn't physically larger, the move to TSMC's 7nm process tech allowed the company to more than double the transistor count, compute density, SRAM capacity, and bandwidth. The chips also supported larger clusters, scaling up to 192, though in practice these clusters were usually smaller at between 16 and 32 systems per site. It was also around this time that Cerebras caught the attention of United Arab Emirates-based cloud provider G42, which quickly became its largest financier. By mid-2023, the chip startup had secured orders worth $900 million for nine supercomputing sites with 36 exaFLOPS of super sparse AI compute between them. A year later, Cerebras made the jump to TSMC's 5nm process with the WSE-3, and while memory and bandwidth only saw modest gains, compute once again doubled, now topping 125 petaFLOPS of sparse (12.5 petaFLOPS dense) compute at 16-bit precision. Cerebras' CS-3 systems have now seen the largest deployment, and now power the majority of the Condor Galaxy cluster it built for G42, as well as several new sites across North America and Europe. 
Up to mid-2024, Cerebras' primary focus had been on training, but then the company announced a boutique inference-as-a-service offering to rival those from competing chip startups like Groq and SambaNova. It turns out, Cerebras' latest AI accelerators' massive SRAM capacity not only made them potent training accelerators but particularly well suited to high-speed LLM inference. In its third iteration, Cerebras' wafer scale accelerators boasted more memory bandwidth than they could realistically use. At 21 PB/s, the chip's memory is nearly 1000x faster than Nvidia's new Rubin GPUs. This, along with a dash of speculative decoding, allowed Cerebras to generate tokens far faster than any GPU-based system of the time. Even today, Cerebras routinely ranks among the fastest inference providers in the world. According to Artificial Analysis, Cerebras' kit can churn out more than 2,200 tokens a second when running GPT-OSS 120B High, 2.8x faster than the next-closest GPU cloud, Fireworks. Cerebras didn't know it at the time, but its inference platform would be a much bigger business than anyone had expected, and in September 2024, the company submitted its S-1 filing to the SEC to take the company public. Almost exactly a year later, Feldman quietly pulled the S-1, delaying the IPO. His reasons? The company's initial S-1 filing was rather concerning, as it showed G42 was responsible for 87 percent of its revenues. But in the year since launching its inference platform, Cerebras had racked up several high-profile customer wins from big names like Alphasense, AWS, Cognition, Meta, Mistral AI, Notion, and Perplexity. Feldman explained that the initial S-1 didn't yet show the financial results of this growth. The company believed it would have a better story to tell investors later down the road. Cerebras' inference platform has only grown since then. The company has steadily expanded its footprint while announcing deeper relationships with AWS and adding OpenAI as a customer. 
On Thursday, the startup officially joined the NASDAQ under the ticker CBRS, having raised $5.5 billion in the process. Shares skyrocketed nearly 70 percent on the first day of trading, as investors poured their money into a new way to play the AI boom. An IPO is something many startups aspire to but few, especially in the cutthroat world of semiconductors, ever accomplish. From a technical perspective, Cerebras is overdue for a refresh. The WSE-3 accelerators that pushed it over the IPO finish line are getting rather long in the tooth and the architecture lead afforded by its SRAM-heavy design is shrinking. Nvidia's acquihire of Groq gave Feldman's long-time rival an SRAM-packed inference platform of its own, while others are racing to catch up. From here, we can only speculate, but we'll hazard a guess that Cerebras' new shareholders are going to want to see new silicon sooner rather than later. Based on its existing roadmap, we expect WSE-4 will offer a sizable leap in floating point performance, though not necessarily at 16-bit precision. Much of the industry has aligned around lower precision data types like FP8 and FP4. An exaFLOP of ultra-sparse FP4 compute wouldn't shock us in the least. How useful sparsity would actually be for LLM inference is another matter. LLM inference hasn't historically benefited much from sparsity, but that's never stopped chipmakers from advertising sparse FLOPS anyway. We also expect to see Cerebras pack more SRAM into its next wafer scale compute platform, possibly using TSMC's 3D chip stacking tech to do it. The WSE-3's 44GB of SRAM capacity remains a limiting factor for what models it can and can't serve efficiently. A trillion parameter model like Kimi K2 would require somewhere between 12 and 48 of Cerebras' WSE-3 accelerators, depending on how the model weights are stored and how many parameters have been pruned, and so any increase in SRAM capacity would go a long way toward improving the efficiency of its accelerators. 
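
The accelerator count quoted above for a trillion-parameter model can be roughly reproduced from the 44GB SRAM figure alone. The Python sketch below is a back-of-the-envelope estimate under stated assumptions, not Cerebras' own sizing method: it counts only model weights (no KV cache, activations, or runtime overhead), treats 1 GB as 10^9 bytes, and models pruning as a simple kept fraction. The function and parameter names are hypothetical.

```python
import math

WSE3_SRAM_GB = 44  # per-wafer SRAM capacity cited in the article


def wafers_needed(params: float, bits_per_param: int,
                  kept_fraction: float = 1.0) -> int:
    """Minimum number of WSE-3 chips whose combined SRAM holds the weights.

    Assumptions (not from the article): weights only, 1 GB = 1e9 bytes,
    and pruning expressed as the fraction of parameters that remain.
    """
    weight_bytes = params * kept_fraction * bits_per_param / 8
    return math.ceil(weight_bytes / (WSE3_SRAM_GB * 1e9))


# One trillion parameters stored at 16-, 8- and 4-bit precision.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {wafers_needed(1e12, bits)} wafers")
```

Under these assumptions the weights-only estimate runs from 12 wafers at 4-bit to 46 at 16-bit, close to the article's 12-to-48 range; the higher upper bound in the article presumably reflects headroom this sketch does not model.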
Alongside new silicon, we can also expect to see more collaborations akin to Cerebras' tie-up with AWS. Earlier this year, AWS announced it would combine its Trainium3 AI accelerators with Cerebras' WSE-3-based systems to speed up its inference platform in much the same way Nvidia is doing with Groq's accelerators. Cerebras could certainly do something similar with AMD or any other chipmaker. In this sense, Cerebras is in the position to offer its chips as a decode accelerator, which offloads the bandwidth intensive parts of the inference pipeline onto its chips, while other parts handle the compute heavy prompt processing side of the equation. However Cerebras frames its next collab, its shareholders are going to expect growth. And as the saying goes, the enemy of my enemy is my friend.

[4]

Cerebras boosts IPO price to raise $5.5bn

AI chipmaker Cerebras Systems has raised $5.5bn in its initial public offering, valuing the company at about $40bn, a fresh sign of strong investor demand for AI and semiconductor shares. The IPO marked an early test of Wall Street's appetite for new AI listings ahead of the hotly anticipated debuts of OpenAI and Anthropic, which could follow Elon Musk's SpaceX into the public markets as soon as this year. Strong demand for Cerebras stock led bankers to raise the expected offering price for the shares several times, with the company pricing its IPO at $185 per share on Wednesday evening, said a person familiar with the matter. It had targeted a $4.8bn raise earlier this week. The valuation represents a significant jump from a private financing in September that priced the Silicon Valley company at $8.1bn. Its IPO comes almost two years after the chip developer filed paperwork to list and during a soaring chip stock rally as investors flock to the infrastructure behind the AI boom. Nvidia's market capitalisation has risen to a record $5.5tn and Google's crucial AI chip supplier Broadcom hit a $2tn value for the first time in April, while Intel and AMD have both had their stocks rise more than 80 per cent in the past month. Cerebras reported a $146mn operating loss on sales of $510mn in 2025, with revenue up 76 per cent year-on-year. Like other chip providers, Cerebras is benefiting from an industry-wide shortage of the computing power that Big Tech companies and AI start-ups need. That computing crunch has intensified this year with the arrival of AI tools such as Claude Code, which require even more processing power to carry out so-called "inference" involved in responding to queries. Cerebras' technology uses entire sheets of silicon to make a chip the size of a dinner plate, or 58 times larger than the Nvidia GPUs that dominate the AI market. 
The company argues the large size is well suited to inference because it does away with the need to link many smaller chips together, which can slow responses to users' AI queries. Cerebras recently received an endorsement of its technology in a $20bn deal with OpenAI which will entail the start-up eventually deploying 750 megawatts of Cerebras' chips as well as co-designing hardware. The heavily lossmaking creator of ChatGPT also extended Cerebras a $1bn working capital loan, to be repaid in cash or computing capacity. It is one of several chip partnerships for OpenAI as it races to boost its computing power. Cerebras has also struck partnerships with Amazon Web Services, Meta and AI start-ups Mistral, Cognition and Windsurf. Those deals should help to reduce its reliance on two large customers in the United Arab Emirates -- computing group G42 and Mohamed bin Zayed University of Artificial Intelligence -- which together accounted for 86 per cent of revenues last year. G42 holds warrants to acquire 3.5mn shares in the company. Launched a decade ago by AMD executives, Cerebras is among a generation of challengers to Nvidia's dominance of the chip market. These include Intel-backed SambaNova; UK-based Graphcore, which was sold to SoftBank in 2024; and Groq, which struck a roughly $20bn deal in December with Nvidia to turn over its top talent and a licence to its intellectual property. None of these groups has made a significant dent in Nvidia's share of the AI processor market, which stands at about 80 per cent, according to analyst group IDC, followed by Broadcom. Chip start-ups face a range of challenges, including securing manufacturing space at a time when big companies have snapped up much of the capacity from Taiwan Semiconductor Manufacturing Company. Very large chips pose particular challenges in manufacturing because they are more likely to contain flaws that can cause the whole piece of silicon to be thrown out. 
The company says its architecture is able to accommodate these flaws, boosting production yields and therefore its margins.

[5]

What you need to know about Nvidia competitor Cerebras after wild IPO

Cerebras Systems' monster debut on Thursday didn't just place it among tech's biggest-ever IPOs -- it was a crystal clear signal of unstoppable demand for chips to power AI, as tech giants scramble to find alternatives to the costly, sold-out graphics processing units made by Nvidia. Cerebras closed its first day trading on Wall Street with a market cap just below $100 billion, putting it near the few companies to close above that mark, such as Facebook-parent Meta and Alibaba. The stock traded lower on Friday, its first full day of trading. Here's what you need to know about this hot Nvidia competitor. Cerebras makes a different type of chip than the classic Nvidia GPU, and it's the size of a dinner plate. "We build the biggest chips in the semiconductor industry," Cerebras CEO and Co-Founder Andrew Feldman told CNBC on Squawk Box Thursday. "Big chips process more information in less time and deliver results more quickly." Until now, Nvidia has been winning the AI chip race because its GPUs serve as general-purpose workhorses, excelling at the parallel math necessary for training large models. But we've now arrived at the era of agentic AI, where inference is key. While training teaches the AI model to learn from patterns in large amounts of data, inference uses the AI to make decisions based on new information.

[6]

Cerebras raises $5.55bn in the biggest US tech IPO since Snowflake

Priced at $185, above the marketed range, the wafer-scale chip company opens trading on Thursday at a $56.4bn valuation. The OpenAI deal is what got the book covered. The customer concentration footnote is what the next quarter has to answer. Cerebras Systems priced its IPO at $185 per share on Wednesday evening, above the marketed range, raising $5.55bn and resulting in a fully diluted valuation of $56.4bn. It is the largest US tech IPO since Snowflake's $3.8bn debut in 2020, and the largest of 2026 by a wide margin. The shares begin trading on Nasdaq on Thursday under the ticker CBRS. The pricing is a long way from where the company started this run. Cerebras filed confidentially in late February, refiled in April at a $26.6bn valuation targeting $3.5bn, raised the range to $4.8bn last week, and ended up here. The bookbuild, in plain English, did not need a second nudge. Cerebras designs the Wafer Scale Engine, a single piece of silicon the size of a dinner plate that holds more than four trillion transistors. The pitch to investors is inference, not training: the workload where AI models run rather than learn, and where speed and unit cost matter more than raw compute. Where Nvidia owns the training side, Cerebras is selling the proposition that the next bottleneck is the one in front of the user. The financial story under the hood is steeper than the IPO docket usually allows. Revenue went from $24.6m in 2022 to $290m in 2024 to $510m in 2025, with the bulk of 2024 revenue coming from a single customer: G42, the Abu Dhabi AI conglomerate that accounted for 85% of that year's sales. The 2024 prospectus stalled when CFIUS opened a review of G42's minority stake. The 2026 refile cleared after G42's holding was restructured into non-voting shares. 
What changed the IPO's gravity was the January contract with OpenAI: a multi-year agreement for 750 megawatts of inference capacity, expandable to two gigawatts by 2030, with a contracted value over $10bn at signing and disclosed in the S-1 as a binding master relationship agreement worth over $20bn at full expansion. It is the line in the prospectus that lets a public-markets investor look at the G42 footnote and consider it diversified by a second very large name rather than diluted by it. Even that read has limits. G42 and Mohamed bin Zayed University of Artificial Intelligence, treated as a single related-party group, accounted for roughly 86% of 2025 revenue between them. Adding OpenAI on top of that is a structural improvement on paper. It is also another concentration of a different kind, in which three buyers carry the model. Andrew Feldman, who co-founded Cerebras in 2016 after selling his previous company SeaMicro to AMD, did not sell shares in the offering. The float is a smaller percentage of the company than is typical for an IPO of this size. That, combined with the demand that pushed pricing through the top of the range, is a fair part of why the implied valuation moved from $26.6bn in April to $56.4bn at pricing in five weeks. What happens next is the test the company has not yet had to take. Cerebras has been a private company telling a thesis story; from Thursday morning it has to be a public company telling a quarterly one. The OpenAI contract is the answer to the customer-concentration question. The next four quarters are the answer to whether wafer-scale silicon is a category or a product.

[7]

Cerebras prices IPO above expected range, as Wall Street braces for AI tsunami

Cerebras Systems, a maker of artificial intelligence chips, priced its IPO at $185 a share on Wednesday, above the expected range, according to a person with knowledge of the matter. The deal comes as investors gear up for what's expected to be a very busy year for new AI offerings. The IPO reeled in at least $5.55 billion for Cerebras, which is hitting the market during a silicon renaissance. Intel, Advanced Micro Devices and memory maker Micron are each up more than 80% in the past month, and have notched much more dramatic gains over the last year as investors spread their chip bets from Nvidia to the wider universe of semiconductor companies now benefiting from [...] It's also one of the largest tech IPOs in years. Uber raised about $8 billion in 2019, and the biggest since then for a U.S. tech company was Snowflake's offering in 2020, which brought in over $3.8 billion. Expanding to include autos, electric vehicle maker Rivian raised roughly $12 billion in 2021. At the IPO price, Cerebras is now worth $56.4 billion on a fully diluted basis. Andrew Feldman, Cerebras' co-founder and CEO, now holds a stake worth about $1.9 billion. Founded in 2016 and headquartered in Silicon Valley, Cerebras has faced a rocky road getting to the Nasdaq, where it will trade under ticker symbol CBRS. In September 2024 Cerebras filed to go public, but withdrew its submission a little over a year later after its prospectus was heavily scrutinized due largely to the company's heavy reliance on a single customer in the United Arab Emirates, Microsoft-backed G42. Cerebras had started shifting its focus away from selling hardware systems and more toward providing a cloud service based on its chips. 
That means it's going up against cloud providers such as Google and Microsoft, which are both listed as competitors, along with Oracle and CoreWeave. In its refreshed prospectus, Cerebras said that 24% of revenue last year came from G42, down from 85% in 2024. However, last year Mohamed bin Zayed University of Artificial Intelligence in the UAE accounted for 62% of revenue. Cerebras scored a big win in January, when it signed a deal with OpenAI worth over $20 billion for 750 megawatts in Cerebras computing capacity. Cerebras claims its Wafer Scale Engine 3 chips offer speed and price advantages over graphics processing units, such as Nvidia's. On May 4, Cerebras said it was looking to sell 28 million shares at $115 to $125 per share. A week later, Cerebras bumped up the offering to 30 million and raised the expected range to $150 to $160. Earlier on Wednesday, Bloomberg reported, citing unnamed sources, that weeks before Cerebras' IPO, Arm and SoftBank both attempted to acquire it. Cerebras declined to comment. In 2017, OpenAI looked at merging with Cerebras, viewing the chip company as potentially beneficial in the pursuit of artificial general intelligence, or AGI, according to testimony in Elon Musk's trial against OpenAI. "Exclusive access to Cerebras hardware would give OpenAI an overwhelming hardware advantage over Google," Greg Brockman, OpenAI's co-founder and president, wrote in an email. Brockman held about 78,000 shares of Cerebras at the end of 2025, according to a filing in the case, which would be worth $14.4 million at the IPO price. Other Cerebras investors include Fidelity, with a stake valued at about $3.8 billion, and Benchmark, which owns roughly $3.3 billion worth of shares. Foundation Capital's holdings are valued at $2.8 billion, and Eclipse owns a $2.5 billion stake.

[8]

Cerebras Sees Sizzling Demand For IPO Shares

Cerebras Systems priced shares for its upcoming IPO at $185 a piece late Wednesday -- well above the projected range of $150 to $160 -- raising at least $5.55 billion for the company and valuing it at $56.4 billion, according to a CNBC report. Shares of the company, which develops AI computing chips and large-scale AI systems, are slated to begin trading on Nasdaq Thursday under the ticker symbol CBRS. The IPO has been a long time coming for Sunnyvale, California-based Cerebras, which initially filed publicly for an IPO in September, 2024. It withdrew the planned offering a year later, opting to continue raising capital in private markets. Cerebras was a prodigious fundraiser as a private company. It secured $2.85 billion in equity funding and $1.85 billion in debt financing over the years, per Crunchbase data, securing most of that total in the past year. Cerebras' largest venture stakeholders include Fidelity (11.3% of Class B common stock), Benchmark (9.5%), Foundation Capital (8.3%), Eclipse (7.3%) and Alpha Wave (6.5%). Benchmark, Foundation and Eclipse were lead investors in Cerebras' $27 million Series A in 2016, so they appear poised to see the largest percentage gains on their holdings. Investors in the Cerebras IPO, meanwhile, are banking on even more growth and valuation gains ahead. That said, growth of late has been impressive. Revenue increased to $510.0 million in 2025, representing year-over-year growth of 76%, and up more than six-fold over two years. The company has an impressive list of partners and customers as well. Earlier this year, Cerebras signed a deal to partner with OpenAI in integrating its technology into OpenAI's compute systems. Other customers listed on its website include Meta, AWS and IBM. Looking ahead, Cerebras said in its IPO filing that it expects to invest more in research and development as well as sales and marketing. 
As for a marketing strategy, the company seems to have settled on speed, pitching itself as the builder of "the fastest AI infrastructure in the world." In coming quarters, we'll see if it succeeds in delivering on that promise at scale.

[9]

Cerebras soars almost 70% by market close in a true blockbuster IPO | Fortune

In 2024, Cerebras filed to go public. In 2025, the company withdrew its IPO. And yesterday, Cerebras hit the public markets, at long last. The chipmaker, which designs and manufactures AI inference chips, has long billed itself as an Nvidia challenger, and its first-day stock pop certainly reflected Nvidia-mania: Cerebras shares rocketed 70% by market close.

The factors that ruffled prospective investors in 2024 seem, for now, far in the rearview mirror. At the time, there were concerns around customer concentration, Cerebras's ties to UAE-connected G42, and CEO Andrew Feldman's own distant past. (Feldman pled guilty to accounting fraud in the 2000s at a previous company.) Now, though, the company's multibillion-dollar partnership with OpenAI, also a shareholder, seems to be key to the company's momentum and future prospects.

I asked Feldman on Thursday about the key differences in the company between today and 2024. "We're a stronger company," he told Fortune. "I think we have bigger customers. We have more deployments. Our technology's more mature. I think we have a better roadmap... We told the world we'd win a big customer like G42 and we'd learn from it. They were a billion-dollar customer and everyone said 'you have only one.' But we were ready, and in a position to move quickly."

Karl Alomar, managing partner at Cerebras investor M13, says the company's biggest challenge from here is "execution at scale." "Cerebras has proven demand from hyperscalers, but they need to prove they can manufacture at volume, deliver on time, and support multiple customers at the same operational level Nvidia does," he said via email, adding that recent softness in the secondary market for Cerebras is illustrative of this risk. "Investors want to see concrete customer wins and production schedules, not just OpenAI."

Still, Eric Vishria, general partner at early backer Benchmark, says there's absolutely room for many winners in chips. "The demand for compute and inference is not slowing down," said Vishria via email. "So... maintaining hardware execution and speed are their moat... When I look forward, I ask myself: how big is the market for slow anything? Slow internet, slow search, slow streaming -- ultimately everything gets really fast, and fast tends to win."

Feldman is candid: No one knows how big this market really is, he says. One back-of-the-envelope calculation: "There are 47 million software engineers in the world," said Feldman. "If each one uses $100,000 of tokens a year, that's nearly $5 trillion from just a single use case. Do that bottoms-up and you go, 'Holy crap.'"

I suspect that from here, there are only two directions for Cerebras: In a year, it will have ripped, becoming one of the few high-profile venture-backed IPOs to truly rise to the occasion for retail investors. Or it will go in the direction of Figma, down more than 80% since its hype-y first day. Something measured is off the table. Today's numbers, dazzling as they are, aren't the real test. But, my gosh, what a first day.

See you Monday,
Allie Garfinkle
X: @agarfinks

Joey Abrams curated the deals section of today's newsletter.

VENTURE CAPITAL
- Nectar Social, a San Francisco-based AI-powered marketing platform that automates brand campaigns, raised $30 million in Series A funding. Menlo Ventures led the round and was joined by True Ventures, Google Ventures, and Kinship Ventures.
- Iceotope Group, a Sheffield, U.K. and Morrisville, N.C.-based developer of liquid cooling systems for AI data centers, raised $26 million in Series B funding. Two Seas Capital and Barclays Climate Ventures led the round and were joined by Edinv, ABC Impact, Northern Gritstone, and British Business Bank.
- GovWell, a New York City-based AI operating system designed for permitting and licensing processes in local government, raised $25 million in Series A funding. Insight Partners led the round and was joined by existing investors Work-Bench, Bienville Capital, and others.
- Stitch, a Riyadh, Saudi Arabia-based developer of infrastructure and workflow software for banks and financial institutions, raised $25 million in Series A funding. a16z led the round and was joined by existing investors Arbor Ventures, COTU Ventures, Raed Ventures, and SVC.
- Novella, a New York City-based AI-powered wholesale insurance broker, raised $21 million in funding. Brewer Lane Ventures led the round and was joined by Box Group, Crystal Venture Partners, and others.
- Optura, a Nashville, Tenn.-based health care technology company designed to help providers optimize return on AI investments, raised $17.5 million in Series A funding. Salesforce Ventures led the round and was joined by Echo Health Ventures and existing investors Susa Ventures, Matrix Partners, and HC9 Ventures.
- Turnkey, a New York City-based platform designed to help developers integrate crypto wallet infrastructure into their applications, raised $12.5 million in funding from Archetype, Circle Ventures, Sequoia Capital, and others.
- Urologic Health, an Or Yehuda, Israel-based developer of non-invasive diagnostic technology for bladder function assessment, raised $11 million in seed funding from Edge Medical Ventures, SHD Partners, Longevity Venture Partners, and others.
- Flick, a San Francisco-based AI filmmaking tool, raised $6 million in seed funding from True Ventures, Google Ventures, Y Combinator, Lightspeed, and others.
- Greenboard, a New York City-based developer of compliance and operations software for financial services, raised $4.5 million in seed funding. Base 10 Partners led the round and was joined by Y Combinator, General Catalyst, Wayfinder Ventures, and others.
- Ranger, a San Francisco-based agentic operating system designed for the industrial bidding process, raised $8.4 million in seed funding. Bonfire Ventures led the round and was joined by 25madison, Inovia Capital, and Panache Ventures.
- Graphon AI, a San Francisco-based developer of an intelligence layer designed to process and structure data before it reaches AI models, raised $8.3 million in seed funding. Arvind Gupta of Novera Ventures led the round and was joined by Perplexity Fund, Samsung Next, GS Futures, Hitachi Ventures, Gaia Ventures, B37 Ventures, and Aurum Partners.
- Chromie Health, a New York City-based AI-powered scheduling and management platform for hospital operations, raised $2 million in pre-seed funding. AIX Ventures led the round.

PRIVATE EQUITY
- Ambienta acquired a majority stake in Disano, a Milan, Italy-based manufacturer of lighting fixtures. Financial terms were not disclosed.
- Fortify Restoration, a portfolio company of Osceola Capital, acquired Beaches Construction, a Panama City Beach, Fla.-based restoration services provider. Financial terms were not disclosed.

EXITS
- Brookfield agreed to acquire World Freight Company, a Paris, France-based air-cargo capacity company, from PAI Partners and EQT.

IPOS
- Cerebras Systems, a Sunnyvale, Calif.-based AI infrastructure company, raised $5.6 billion in an offering of 30 million shares priced at $185 on the Nasdaq.

[10]

Cerebras bumps up IPO range as it looks to raise up to $4.8 billion

Cerebras CEO Andrew Feldman speaks to the media at the Colovore office in Santa Clara, Calif., on March 12, 2024.

Artificial intelligence chipmaker Cerebras Systems has increased the estimated price range for its initial public offering. It's now looking to sell at $150 to $160 per share, according to a Monday filing, up from the range of $115 to $125 that it disclosed last week. At the high end of the new range, Cerebras would net up to $4.8 billion in proceeds through the IPO. The company could end up being worth up to $48.8 billion on a fully diluted basis. That's up from the $23 billion valuation the company announced in February as part of a funding round.

Among companies that want to train and run generative artificial intelligence models like those that power OpenAI's ChatGPT, Nvidia's graphics processing units, or GPUs, have been the industry standard. Cerebras says its chips perform more quickly than GPUs and cost less money. The high speeds that Cerebras advertises helped the company to secure a $20 billion-plus commitment from OpenAI, which relies on Cerebras for a model that writes code.

Rather than focus chiefly on selling hardware, Cerebras has been filling data centers with its own chips and providing cloud services to clients. That puts it up against cloud infrastructure providers. But in March, top cloud Amazon Web Services announced a deal to bring Cerebras chips into its data centers.

Cerebras has received additional attention in the trial for Elon Musk's lawsuit against OpenAI CEO Sam Altman. Cerebras' planned chips represented "the compute we thought we were going to need," Greg Brockman, OpenAI's co-founder and president, said in a California courtroom last week. OpenAI discussed merging with Cerebras, and Musk was open to a deal, Brockman said.

Nasdaq expects the Cerebras IPO to take place on May 14.

-- CNBC's Ashley Capoot contributed to this report.

[11]

Cerebras Systems IPO set to raise more than $5.5bn

Cerebras founders, from left: Sean Lie, Gary Lauterbach, Michael James, Jean-Philippe Fricker and Andrew Feldman. Image: Rebecca Lewington

The pricing of the 30m class A common stock shares is significantly higher than was expected.

Cerebras Systems, the AI chipmaker aiming to rival Nvidia, is set to raise more than $5.5bn after pricing its US initial public offering (IPO) at $185 per share. The pricing of the 30m class A common stock shares - set to begin trading today (14 May) as 'CBRS' on the Nasdaq Global Select Market - is significantly higher than was expected. In early May, a $3.5bn raise through the sale of 28m shares at between $115 and $125 each was mooted. Last week, that estimate had grown to proceeds of up to $4.8bn at a range of $150-160 per share. Media-reported valuations for the company after the IPO sit at around $50bn.

Morgan Stanley, Citigroup, Barclays and UBS Investment Bank are acting as lead book-running managers for the offering, according to Cerebras. Mizuho and TD Cowen are acting as bookrunners. Needham & Company, Craig-Hallum, Wedbush Securities, Rosenblatt, Academy Securities, Credit Agricole CIB, MUFG and First Citizens Capital Securities are acting as co-managers.

In February, the company was valued at around $23bn after a $1bn Series H raise. Cerebras claims that it builds the "fastest AI infrastructure in the world" and CEO Andrew Feldman has also gone on record to say that his hardware runs AI models multiple times faster than Nvidia's. Cerebras is behind WSE-3, touted by the company as the "largest" AI chip ever built, with 19 times more transistors and 28 times more compute than the Nvidia B200.

Cerebras initially filed for an IPO in September 2024, but drew criticism for a perceived heavy reliance on a single United Arab Emirates-based customer, the Microsoft-backed G42. The following September, it withdrew from a planned IPO without providing an official reason.
According to Bloomberg, the Cerebras IPO is the largest of 2026 so far, and drew orders for more than 20 times the number of shares available. Cerebras said it had granted the IPO underwriters a 30-day option to purchase up to an additional 4,500,000 shares. Last month, Elon Musk's SpaceX was reported to have confidentially filed for a US IPO, with estimates of how much this could raise put at between $50bn and $75bn, while the company's valuation could be up to $1.75trn.
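The proceeds figures quoted above all follow from multiplying shares offered by price per share. As a rough check, a minimal Python sketch is shown below. It computes gross proceeds only, before underwriting fees and expenses. The helper name `gross_proceeds` is illustrative, and the last case assumes (hypothetically) that the underwriters exercise the full 4,500,000-share option at the $185 IPO price.

```python
def gross_proceeds(shares: int, price_per_share: int) -> int:
    """Gross proceeds in dollars, before underwriting fees and expenses."""
    return shares * price_per_share


# Share counts and prices as reported in the article above.
initial_range = (gross_proceeds(28_000_000, 115), gross_proceeds(28_000_000, 125))
raised_range = (gross_proceeds(30_000_000, 150), gross_proceeds(30_000_000, 160))
final = gross_proceeds(30_000_000, 185)

# Assumption for illustration: the full 30-day option is exercised at the IPO price.
with_full_option = gross_proceeds(30_000_000 + 4_500_000, 185)

for label, dollars in [
    ("initial range, low end", initial_range[0]),
    ("initial range, high end", initial_range[1]),
    ("raised range, low end", raised_range[0]),
    ("raised range, high end", raised_range[1]),
    ("priced at $185", final),
    ("priced at $185 with full option", with_full_option),
]:
    print(f"{label}: ${dollars / 1e9:.2f}bn")
```

On these inputs the high end of each range matches the $3.5bn and $4.8bn figures mooted earlier, and 30m shares at $185 gives $5.55bn, consistent with "more than $5.5bn".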

[12]

Cerebras stock almost doubles from its initial offering price after the biggest tech IPO in years raised $5.5B - SiliconANGLE

Cerebras Systems Inc. today opened trading as a public company at $350 a share, 89% above its offering price, as it kicked off what looks like a huge year for initial public offerings. The enthusiastic reception among investors follows on Wednesday's pricing of its stock at $185 per share ahead of the most anticipated IPO of the year so far, well above its expected range of between $150 and $160 per share. It's now valued at $100 billion. The artificial intelligence chipmaker raised at least $5.5 billion from the sale Wednesday, which comes at a red-hot moment for the semiconductor industry. In the last month, chipmakers such as Intel Corp., Advanced Micro Devices Inc. and Micron Technology Inc. have all seen their shares rise more than 80%, while growing by even more looking back at the past 12 months. The chip sector is growing fast because investors are becoming increasingly enthusiastic about the potential for other types of silicon chips besides Nvidia Corp.'s graphics processing units to power the AI boom. Cerebras' public debut makes it one of the largest IPOs to hit the technology sector in years. The last time a company raised more was back in 2019 when Uber Technologies Inc. hit the market, raising around $8 billion. Since then, the biggest IPO was Snowflake Inc.'s 2020 offering, which reeled in around $3.8 billion. Including the automotive sector, Rivian Inc. raised $12 billion via its 2021 IPO. The IPO price suggests that Cerebras has a market capitalization of $56.4 billion on a fully diluted basis. Chief Executive Andrew Feldman holds a stake in the company worth about $1.9 billion. Cerebras, which will trade under the ticker symbol "CBRS," was founded 10 years ago in 2016 and is based in Silicon Valley. But it took time to gain traction, and its route to the public markets was a difficult journey. 
The chipmaker revealed plans to hold an IPO in September 2024, only to withdraw those plans about a year later amid heavy scrutiny over its prospectus, which revealed that it was extremely reliant on a single customer - the United Arab Emirates-based AI company G42, which accounted for 80% of its chip sales that year. The deal was further complicated when the U.S. Committee on Foreign Investment in the United States announced it was conducting a security review over Cerebras' partnership with G42, although the companies were later cleared of any wrongdoing. Since then, Cerebras has looked to diversify its business, and has shifted its focus from selling only hardware systems in favor of cloud-hosted services. It launched a public cloud service based on its chips, which are ideally suited for AI inference workloads - or running trained AI models in production. That means Cerebras is competing not only with Nvidia, but also heavy hitters such as Google Cloud, Amazon Web Services Inc., Microsoft, Oracle Corp. and CoreWeave Inc. In its refreshed prospectus, Cerebras revealed that G42 accounted for just 24% of its revenue in 2025, though most of its new sales went to another UAE-based customer - the Mohamed bin Zayed University of Artificial Intelligence, which accounted for 62% of its revenue that year. Still, investors are betting that the company's revenue mix is going to change. In January, Cerebras announced it had struck a $20 billion deal with OpenAI Group PBC to provide it with 750 megawatts of compute capacity over the next three years. Then in March, the company revealed that AWS is set to offer customers access to its chips via the cloud-based AWS Bedrock service. The company's flagship product is a dinner plate-sized chip called the WSE-3, which is several times larger than Nvidia's Blackwell B200 GPU. One of its main selling points is its 44-gigabyte pool of SRAM. 
SRAM is a memory variety that uses significantly more transistors to store each bit than standard server DRAM. As a result, it's considerably faster and several orders of magnitude more expensive. The processor also integrates a quartz crystal that moves millions of times per second in response to an electric current. Each movement corresponds to a clock cycle, the basic unit of time in chips. Cerebras says WSE-3's 900,000 cores can access the onboard SRAM pool with latency of one clock cycle, which is significantly faster than what a standard graphics card offers. The result is much faster inference than GPUs. It was only a matter of time before AI fueled a return to record IPO numbers, and Cerebras's strong debut is a promising sign for the broader industry, said Holger Mueller of Constellation Research. "Cerebras provides a viable alternative to Nvidia for inference, and the market is keen to help fund it," the analyst said. "Strong competitors will help to reduce the cost of AI for enterprises, and so this will help to keep the flywheel spinning in terms of innovation." Elon Musk's SpaceX, OpenAI and Anthropic PBC are all expected to go public later this year in even bigger potential IPOs. The signs are good. The IPO price is significantly higher than first advertised. When Cerebras officially filed its paperwork on May 4, it said it was looking to sell 28 million shares at between $115 and $125 per share. A week later, it boosted the offering to 30 million shares at a price range of $150 to $160. Cerebras' biggest shareholders include the asset manager Fidelity, which owns a stake valued at about $3.8 billion, followed by Benchmark, which owns about $3.3 billion worth of stock. OpenAI President Greg Brockman is reported to hold shares valued at $14.4 million, while the ChatGPT maker's CEO Sam Altman holds stock that would now be worth about $16.5 million.

[13]

Cerebras prices its shares at $185 ahead of biggest tech IPO in years - SiliconANGLE

Cerebras Systems Inc. has officially priced its stock at $185 per share ahead of the most anticipated initial public offering of the year today, well above its expected range of between $150 and $160 per share. It means the artificial intelligence chipmaker has raised at least $5.5 billion from the sale, which comes at a red-hot moment for the semiconductor industry. In the last month, chipmakers such as Intel Corp., Advanced Micro Devices Inc. and Micron Technology Inc. have all seen their shares rise more than 80%, while growing by even more if we look back at the past 12 months. The chip sector is growing fast because investors are becoming increasingly enthusiastic over the potential for other types of silicon chips besides Nvidia Corp.'s graphics processing units to power the AI boom. Cerebras' public debut makes it one of the largest IPOs to hit the technology sector in years. The last time a company raised more was back in 2019 when Uber Technologies Inc. hit the market, raising around $8 billion. Since then, the biggest IPO was Snowflake Inc.'s 2020 offering, which reeled in around $3.8 billion. If we include the automotive sector, Rivian Inc. raised $12 billion via its 2021 IPO. The IPO price suggests that Cerebras has a market capitalization of $56.4 billion on a fully diluted basis. The chipmaker's Chief Executive Andrew Feldman holds a stake in the company worth around $1.9 billion. Cerebras, which will trade under the ticker symbol "CBRS," was founded ten years ago in 2016 and is based in Silicon Valley. But it took time to gain traction, and its route to the public markets was a difficult journey. 
The chipmaker first revealed plans to hold an IPO in September 2024, only to withdraw those plans about a year later amid heavy scrutiny over its prospectus, which revealed that it was extremely reliant on a single customer - the United Arab Emirates-based AI company G42, which accounted for 80% of all its chip sales that year. The deal was further complicated when the U.S. Committee on Foreign Investment in the United States announced it was conducting a security review over Cerebras's partnership with G42, although the companies were later cleared of any wrongdoing. Since then, Cerebras has looked to diversify its business, and has shifted its focus from selling only hardware systems in favor of cloud-hosted services. It launched a public cloud service based on its chips, which are ideally suited for AI inference workloads - or running trained AI models in production. That means Cerebras is competing not only with Nvidia, but also heavy-hitters like Google Cloud, Amazon Web Services Inc., Microsoft, Oracle Corp. and CoreWeave Inc. In its refreshed prospectus, Cerebras revealed that G42 accounted for just 24% of its revenue in 2025, though most of its new sales went to another UAE-based customer - the Mohamed bin Zayed University of Artificial Intelligence, which accounted for 62% of its revenue that year. Still, investors are betting that the company's revenue mix is going to change. In January, Cerebras announced it had struck a $20 billion deal with OpenAI Group PBC to provide it with 750 megawatts of compute capacity over the next three years. Then in March, the company revealed that AWS is set to offer customers access to its chips via the cloud-based AWS Bedrock service. The company's flagship product is a dinner plate-sized chip called the WSE-3, which is several times larger than Nvidia's Blackwell B200 GPU. One of its main selling points is its 44-gigabyte pool of SRAM. 
SRAM is a memory variety that uses significantly more transistors to store each bit than standard server DRAM. As a result, it's considerably faster and several orders of magnitude more expensive. The processor also integrates a quartz crystal that moves millions of times per second in response to an electric current. Each movement corresponds to a clock cycle, the basic unit of time in chips. Cerebras says WSE-3's 900,000 cores can access the onboard SRAM pool with latency of one clock cycle, which is significantly faster than what a standard graphics card offers. The result is much faster inference when compared to GPUs. The IPO price is significantly higher than first advertised. When Cerebras officially filed its paperwork on May 4, it said it was looking to sell 28 million shares at between $115 and $125 per share. A week later, it boosted the offering to 30 million shares at a price range of $150 to $160. Cerebras's biggest shareholders include the asset manager Fidelity, which owns a stake valued at around $3.8 billion, followed by Benchmark, which owns around $3.3 billion worth of stock. OpenAI President Greg Brockman is reported to hold shares valued at $14.4 million, while the ChatGPT maker's CEO Sam Altman holds stock that would now be worth around $16.5 million.

[14]

Cerebras IPO Creates Billions for CEO Andrew Feldman and Early A.I. Chip Backers

Andrew Feldman, Benchmark, Foundation Capital and other early Cerebras backers are among the biggest winners from the A.I. chip startup's blockbuster public debut. Cerebras Systems didn't just go public this week; it minted billionaires and delivered one of the biggest venture paydays in years. The A.I. chip startup priced its IPO at $185 a share on Wednesday (May 13), above a range it had already raised twice. Shares opened at $350 on Thursday and closed at $311.07, valuing the company at roughly $95 billion. The $5.55 billion offering is the largest U.S. tech IPO since Uber in 2019 and the biggest semiconductor debut on record, serving as an early test of investor appetite for a wave of A.I. listings, including OpenAI and Anthropic later this year. The momentum cooled slightly today, with shares falling about 5 percent intraday to around $293. Still, the IPO instantly created two billionaires and generated massive returns for a tight circle of early backers. CEO Andrew Feldman, 56, co-founded Cerebras in 2016 with four former colleagues from SeaMicro, a server company he founded and sold to AMD for $334 million. Feldman owns roughly 5.5 percent of Cerebras, a stake now worth about $3.2 billion, according to Bloomberg. Co-founder and CTO Sean Lie holds a stake valued at approximately $1.6 billion. Feldman is a serial entrepreneur with deep roots in Silicon Valley. He grew up on Stanford's campus, where both his parents were professors, and later earned both a BA and an MBA from the university. 
Over the past two decades, he has helped take Riverstone Networks public and led marketing at Force10 Networks before its $800 million sale to Dell. Cerebras is his fourth company in semiconductors and data infrastructure and by far his biggest win. Earlier this year, the company's board approved what it described as a "moonshot" compensation package for Feldman, awarding additional stock tied to market-cap milestones. The timing underscores Cerebras' rapid ascent: the company reported $510 million in revenue for 2025, up 76 percent year over year, and swung to $238 million in net income after a $481 million loss in 2024. The biggest winners from the IPO also include the venture firms that backed Cerebras from the start. Benchmark, Foundation Capital, and Eclipse Ventures co-led the company's $27 million Series A in 2016. At Benchmark, Eric Vishria led the deal and remains on the board. The firm's 8.1 percent stake was worth roughly $6 billion at Thursday's close. Benchmark's conviction in the company remained strong even late in the game. In February, it raised two new funds, both called "Benchmark Infrastructure," to invest another $225 million into Cerebras ahead of the IPO. Foundation Capital, where Steve Vassallo led the investment and hosted Feldman in its offices during the company's earliest days, now holds a stake valued at about $5.3 billion. Eclipse Ventures, led by founder Lior Susan, sits on roughly $5.2 billion. Later investors also saw significant gains. Fidelity, which led a $1.1 billion Series G round in September 2025 alongside Atreides Management, remains the largest shareholder at 11.3 percent. That stake is worth about $3.8 billion at the IPO price. Tiger Global followed with a $1 billion Series H in February at a $23 billion valuation. A group of high-profile angel investors also stands to benefit, including Sam Altman, Greg Brockman, Ilya Sutskever, Adam D'Angelo, Andy Bechtolsheim and Intel CEO Lip-Bu Tan. 
Most of their stakes are too small to require full SEC disclosure, but some surfaced as part of the Musk v. Altman lawsuit. Filings in that case show Altman held roughly 89,000 shares as of December 31, now worth about $27.8 million at post-IPO prices near $311. Brockman held around 78,000 shares, valued at roughly $24.2 million. Those same filings reveal that OpenAI once explored acquiring Cerebras. In 2017, Brockman wrote that "exclusive access to Cerebras hardware would give OpenAI an overwhelming hardware advantage over Google." That relationship evolved years later into a major commercial partnership. In January, OpenAI signed a multi-year compute agreement with Cerebras worth more than $20 billion for 750 megawatts of capacity through 2028. A separate $1 billion loan from OpenAI in December 2025 came with warrants for more than 33 million shares, enough for roughly a 10 percent stake. Additional warrants could be worth about $5 billion at the IPO midpoint, according to Financial Times estimates. Cerebras' blockbuster debut lands as the IPO market reawakens. U.S. IPO proceeds have more than doubled year-to-date in 2026, with Cerebras accounting for roughly a quarter of that total. Companies like SpaceX, OpenAI, and Anthropic are expected to follow, with SpaceX and OpenAI alone projected to raise as much as $135 billion combined.

[15]

Cerebras shares skyrocket in debut as AI mania grips markets

Cerebras Systems, a chip designer, saw its shares surge 89% on its Nasdaq debut, raising $5.55 billion. The company's innovative wafer-scale engine, designed for AI processing, attracted significant investor interest, with major clients like Amazon and OpenAI. This IPO highlights the booming market for AI-focused technology. Shares of chip designer Cerebras Systems soared 89% above the initial public offering price on their Nasdaq debut, extending the market's unrelenting frenzy for companies seen as the biggest beneficiaries of the artificial intelligence boom. Its shares opened at $350 on Thursday, well above the IPO price of $185 per share, where it raised $5.55 billion. The jump gives Cerebras a valuation of $106.75 billion on a fully diluted basis. The Sunnyvale, California-based firm's IPO is the largest so far this year and comes as AI-linked stocks push broader markets to record highs despite challenges to global growth stemming from the Middle East conflict. Founded in 2015, Cerebras sought to challenge conventional AI computing with its wafer-scale engine, designing chips roughly the size of a dinner plate to speed up processing. Unlike traditional GPU-based systems that rely on clusters of interconnected chips, it packs hundreds of thousands of compute cores onto a single processor. "In Silicon Valley we understand just how big AI will be, and what that means," Cerebras CEO Andrew Feldman told Reuters in an interview. "We make AI with training, and we use it with inference. As these models get smarter, the amount we use them will explode." The listing marks its second attempt to go public, after it dropped its plans to list its stock last year. Its partnership with G42, a UAE-based AI company that provided more than 85% of its revenue in 2024, had drawn a national security review by the Committee on Foreign Investment in the United States. The committee eventually cleared the deal. 
Since then, Cerebras has secured Amazon and OpenAI, two of the biggest builders of AI infrastructure in the world, as customers.

AI SPENDING SURGES

As the race to develop faster and smarter AI models heats up, tech giants are pouring hundreds of billions of dollars into the ecosystem. The outsized demand has prompted a gold rush-like frenzy among investors, with AI-linked stocks seeing eye-popping returns in hopes that the revolutionary technology will upend traditional workflows. The Dow Jones U.S. Semiconductors Index, which tracks chip heavyweights such as Nvidia, Qualcomm, and Intel, has returned more than 107% over the past year, compared with the S&P 500's about 26% rise. Cerebras raised the size and price range of its IPO earlier this week to manage surging interest in its shares. Sources told Reuters that the offering had drawn orders for more than 20 times the number of shares available.
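The valuation figures in this report can be cross-checked with simple arithmetic. The Python sketch below backs out an implied fully diluted share count from the reported $106.75 billion valuation at the $350 open, then revalues the company at other prices. The implied share count is a derived estimate rather than a reported figure. The $311.07 Thursday close is taken from source [14], and the variable and function names are illustrative.

```python
REPORTED_VALUATION_AT_OPEN = 106_750_000_000  # dollars, fully diluted, as reported
OPEN_PRICE = 350.00   # dollars per share at the Nasdaq open
IPO_PRICE = 185.00    # dollars per share
CLOSE_PRICE = 311.07  # Thursday close, as reported in source [14]

# Derived estimate (not a reported figure): valuation divided by price.
implied_shares = REPORTED_VALUATION_AT_OPEN / OPEN_PRICE


def valuation(price: float) -> float:
    """Fully diluted valuation in dollars at a given share price."""
    return implied_shares * price


print(f"implied fully diluted shares: {implied_shares / 1e6:.0f} million")
print(f"valuation at IPO price: ${valuation(IPO_PRICE) / 1e9:.1f} billion")
print(f"valuation at close:     ${valuation(CLOSE_PRICE) / 1e9:.1f} billion")
```

Under these assumptions the results line up with the other figures cited in this roundup: about $56.4 billion at the IPO price and roughly $95 billion at Thursday's close.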

[16]

Report: AI chipmaker Cerebras to increase IPO price target amid surging investor demand - SiliconANGLE

Artificial intelligence chipmaker Cerebras Systems Inc. is expected to increase the size and price of its initial public offering later today as investor demand for access to its shares continues to rise. Reuters reported Sunday that the chipmaker is now considering raising its IPO price range to between $150 and $160 per share, up from an initial target of between $115 and $125 per share. The company is also considering increasing the number of marketed shares from 28 million to 30 million, anonymous sources told the news agency. The new price range means that Cerebras can expect to raise around $4.8 billion from the sale, up from an earlier target of $3.5 billion under the original terms of the IPO, which were detailed last week. Cerebras has timed its IPO to take advantage of the surging demand for high-performance AI chips, and has reportedly received orders for more than 20 times the number of shares it made available for the sale, according to the sources. The company is set to officially price its IPO on May 13. The Sunnyvale, California-based company sells wafer-sized AI chips called the WSE-3 that are several times larger than Nvidia Corp.'s Blackwell B200 graphics processing units. One of the main selling points of its chips is their 44-gigabyte pool of SRAM. SRAM is a memory variety that uses significantly more transistors to store each bit than standard server DRAM. As a result, it's considerably faster and several orders of magnitude more expensive. Processors include a quartz crystal that moves millions of times per second in response to an electric current. Each movement corresponds to a clock cycle, the basic unit of time in chips. Cerebras says WSE-3's 900,000 cores can access the onboard SRAM pool with latency of one clock cycle, which is significantly faster than what a standard graphics card offers. 
The result is faster performance on inference, the process of providing responses to queries. Cerebras ships the WSE-3 as part of a 1.8-ton appliance called the CS-3. Customers can link together multiple CS-3 systems into clusters with the help of auxiliary devices it also sells. When it filed its IPO paperwork last week, Cerebras revealed that its revenue had jumped by 76% in 2025 to $290.3 million. It's already profitable, recording net income of $87.9 million that year after losing $485 million the year prior. The company has benefited from increased demand for its processors as AI labs shift from training AI models to deploying them in production. That suits Cerebras, whose chips are better suited than Nvidia's general-purpose GPUs to inference workloads, the computations that enable AI models to respond to user queries. Next week's IPO is the chipmaker's second attempt to go public. It originally attempted to do so back in 2024, only to cancel the plan last year due to controversy over its partnership with the United Arab Emirates-based firm G42, which accounted for more than 80% of its revenue that year. The U.S. Committee on Foreign Investment in the United States subjected that partnership to a national security review, but ultimately cleared both firms of any wrongdoing. While that process was ongoing, Cerebras took care to continue building its business, adding OpenAI Group PBC and Amazon Web Services Inc., two of the world's biggest builders of AI infrastructure, as customers. Cerebras's IPO will be the biggest listing so far this year, according to Dealogic. Morgan Stanley, Citigroup, Barclays and UBS Group AG are all helping the company with its offering. Once the listing concludes, Cerebras's stock will be traded on the Nasdaq Global Select Market under the ticker symbol "CBRS."

[17]

Cerebras Isn't Just Challenging Nvidia -- It's Challenging How AI Computing Works - Cerebras Systems (NASD

Beyond GPU Clusters

Unlike Nvidia's model of connecting thousands of GPUs through increasingly complex networking infrastructure, Cerebras has built its pitch around wafer-scale computing -- giant chips designed to reduce latency and avoid some of the bottlenecks associated with massive GPU clusters. That matters because the economics of AI are changing fast. Training frontier models still requires enormous compute, but the next phase of the AI race may revolve around inference -- the process of actually serving AI responses to millions of users in real time. Inference workloads increasingly reward speed, efficiency and lower latency, areas where Cerebras believes its architecture has an edge. That potentially shifts the debate from "Who has the most GPUs?" to "Does the future of AI computing even need GPU-heavy clusters in their current form?"

A Bigger Infrastructure Debate Emerges

Cerebras' rally also comes at a time when investors are starting to question whether the current AI infrastructure stack is becoming too concentrated around a handful of players. The AI boom has largely funneled capital toward Nvidia, hyperscalers and networking suppliers. But Cerebras' strong market debut suggests Wall Street may now be willing to place bets on alternative AI computing architectures as well. If that trend continues, the implications could stretch far beyond chipmakers. A serious push toward alternative AI compute models could eventually reshape spending across cloud infrastructure, networking hardware and AI serving economics. It could also intensify the race to build faster and cheaper inference systems as AI applications scale globally. For now, Nvidia still dominates the AI landscape. Even so, Cerebras' arrival as a public company suggests the next big AI debate may no longer be about who wins the GPU race -- but whether the GPU-centric model itself remains the endgame.
Photo courtesy: Samuel Boivin on Shutterstock.com. Market news and data brought to you by Benzinga APIs.

[18]

Nvidia Competitor Cerebras Sees Massive Stock Surge In Public Debut - Cerebras Systems (NASDAQ:CBRS)

Cerebras Systems shares are climbing with conviction. What's driving CBRS stock higher?

Strong First-Day Demand Drives Shares

Cerebras priced its offering at $185 per share, but demand was strong enough that the stock opened at $350 when trading began, setting the tone for a powerful debut on Thursday. Cerebras sold 30 million shares at $185, with underwriters receiving a 30-day option to buy another 4.5 million shares at the same price. The stock's immediate move higher reflects heavy interest in the name as it hits the Nasdaq. Shares traded up to $385 on Thursday before pulling back. Cerebras develops advanced computing systems built for large-scale artificial intelligence workloads. The company is known for designing hardware that accelerates training and inference for complex AI models, a theme that has drawn significant investor attention. The offering was backed by a large underwriting group, with Morgan Stanley, Citigroup, Barclays and UBS leading the deal, joined by Mizuho and TD Cowen as bookrunners and a broad slate of co-managers including Needham, Craig-Hallum, Wedbush, Rosenblatt, Academy Securities, Credit Agricole CIB, MUFG and First Citizens Capital Securities.

Leadership Frames IPO As A Launchpad For Long-Term AI Ambitions

Cerebras CEO Andrew Feldman described the company's public debut as a pivotal moment in its long-term AI strategy, noting that the move to the public markets comes at a time when the business is scaling quickly in high-performance computing. He told CNBC the IPO gives Cerebras the resources it needs for its next stage of growth and argued that the broader AI landscape is still in the early stages of a much larger transformation. Feldman also cast Cerebras as a serious competitor to Nvidia, pointing to the company's massive, high-throughput chips that are designed to train and run large AI models more efficiently.
He noted that Cerebras has relied on Taiwan Semiconductor for advanced manufacturing since the company's earliest days and emphasized that its custom silicon is built to handle enormous workloads while using less power.

CBRS Shares Are Soaring

CBRS Price Action: Cerebras shares were up 69.14% at $313.07 at the time of publication on Thursday, according to Benzinga Pro.

[19]

2026's Biggest IPO So Far: AI Chipmaker Cerebras Targets $4.8 Billion Raise, But What Do Prediction Marke

Cerebras Systems is set to be the largest IPO of 2026 so far, with the AI chipmaker on track to raise up to $4.8 billion at a $48.8 billion valuation after its order book closed roughly 20 times oversubscribed. The Sunnyvale company lifted its price range to $150 to $160 per share from $115 to $125 and bumped the offering to 30 million shares. At the high end, the deal would be the largest US listing in nearly five years. Cerebras designs specialized AI chips built around its Wafer Scale Engine, a processor the size of a dinner plate that packs more than 4 trillion transistors onto a single piece of silicon.

OpenAI's Circular Playbook

A March Amazon Web Services partnership and OpenAI's $20 billion-plus compute deal largely solved the customer concentration problem that derailed Cerebras' 2024 listing attempt. But access to Sam Altman's inner circle did not come cheap. Cerebras is handing OpenAI warrants worth up to 10% of the company, around $5 billion at the IPO midpoint, or roughly half the gross profit it stands to make on the deal, according to Financial Times calculations. It is the same playbook OpenAI ran with Advanced Micro Devices (NASDAQ:AMD), whose shares have tripled since the two companies announced their own circular arrangement last October.

What Polymarket Traders Are Pricing In

A Polymarket contract on Cerebras' closing market cap on day one has the $50 billion to $60 billion bucket as the favorite at 33%, followed by $60 billion to $70 billion at 25% and $70 billion to $80 billion at 17%. The "under $50 billion" outcome sits at just 6%. The Hiive secondary market backs that read, with Cerebras shares reportedly changing hands at $187.53 on Monday, about 17% above the top of the offering range. The crown may be short-lived. SpaceX, OpenAI and Anthropic are all reportedly preparing 2026 listings, with SpaceX and OpenAI alone expected to raise a combined $135 billion.
Polymarket currently has SpaceX at 87% to finish 2026 as the largest IPO by market cap. Cerebras begins trading on the Nasdaq under the ticker CBRS on Thursday.

[20]

Why the hottest tech IPO of the year matters for the AI race with China and in the Middle East

Chris Buskirk, co-founder and chief investment officer of 1789 Capital, a key Cerebras investor, says the company's IPO is geopolitically significant. On Thursday, shares of Sunnyvale, California-based AI chipmaker Cerebras went public and nearly doubled from their $185-per-share offering price in the moments after they opened on the Nasdaq. They closed out the day at $311 per share, marking a nearly 70% increase in the price of the stock, giving the company a $95 billion market cap and making it the largest IPO of the year so far. For investors desperate to get more AI exposure -- the IPO was 20 times oversubscribed -- this is one of the biggest new plays in the public markets, alongside chipmaker Nvidia. "This thing is flying ... partly because there are so few ways for public investors, whether retail or institutional, to invest in this," Chris Buskirk, co-founder and chief investment officer of 1789 Capital, an early investor in the company, told me. Cerebras makes specialized high-power chips, most notably the Wafer Scale Engine, the largest chip ever produced, with a processor the size of an entire silicon wafer and 19 times more computing power than Nvidia's flagship chip. The IPO arrived at a pivotal moment, with AI becoming a key point of leverage in discussions with China amid President Trump's visit to Xi Jinping in Beijing this week. "American companies are excellent but the Chinese companies are very good -- well capitalized with very talented people," Buskirk said. "It's going to be the American AI tech stack or the Chinese AI tech stack that will proliferate not just in our own countries, but around the world and that's something we absolutely have to win." In that context, Cerebras' listing may be even more significant geopolitically than financially. The IPO serves as a timely reminder of how America is building both the hardware and software foundation to maintain its AI edge and how eager China is to get American chips.
But investors say the company doesn't just underscore America's commanding AI hardware advantage -- its deep ties across the Middle East are actively expanding US technology influence at a time China is aggressively trying to dominate the region. "This is the existential geopolitical race of our time," Buskirk adds. That's on full display in the Gulf. In 2025, Cerebras relied on two UAE customers for 86% of its $510 million in revenue. Those ties have drawn scrutiny and even led to a national security review from CFIUS that delayed the chip maker's listing (UAE-based G42 has an investment portfolio that included Chinese tech companies). But investors argue the relationship ultimately strengthens influence in areas China wants to control. "During the Biden administration, the US government did everything they could to push our allies in the Middle East away from us into China's arms... It was the Biden administration that was pushing them into China's arms. The Trump administration is bringing them back into alignment with the United States." -- Buskirk told me. "Everybody needs to adopt AI technology -- every company, every country needs to do it. And there's only two choices. Do you align with the United States AI stack or align with the Chinese tech stack?" And speed, at this moment, is everything -- because computing power has become the defining constraint on AI's growth. "Compute is the new bottleneck," said Buskirk. "Software can scale infinitely -- that's why people loved to invest in [Software as a Service] companies. But the amount of compute that's necessary for AI is many orders of magnitude larger." Cerebras has also secured a major strategic investment and compute partnership from OpenAI, which some critics dismiss as a circular deal that only inflates the AI bubble. 
Buskirk rejected that view, arguing the arrangement is a practical necessity: "They can't deploy their models at scale unless there are leading-edge chip companies that have enough scale to deliver chips to them." The company has also drawn scrutiny for its ties to the Pentagon -- which Buskirk says is actually a good thing. "The US government should be buying the absolute best leading-edge technology from American companies at all times," he told me. "And Cerebras is one of those companies."

[21]

Cerebras Systems prices IPO at $185 per share on Nasdaq By Investing.com

Cerebras Systems Inc. announced the pricing of its initial public offering at $185.00 per share for 30 million shares of Class A common stock. The AI infrastructure company expects to raise $5.55 billion from the offering. The company granted underwriters a 30-day option to purchase up to 4.5 million additional shares at the offering price, less underwriting discounts and commissions. This could increase total proceeds to approximately $6.38 billion if the option is exercised in full. Shares are expected to begin trading on the Nasdaq Global Select Market on May 14, 2026, under the ticker symbol NASDAQ: CBRS. The offering is scheduled to close on May 15, 2026, subject to customary closing conditions. Morgan Stanley, Citigroup, Barclays, and UBS Investment Bank serve as lead book-running managers for the offering. Mizuho and TD Cowen are acting as bookrunners, while Needham & Company, Craig-Hallum, Wedbush Securities, Rosenblatt, Academy Securities, Credit Agricole CIB, MUFG, and First Citizens Capital Securities serve as co-managers. Cerebras develops AI processing technology, including the Wafer-Scale Engine 3 processor. The company states its processor is 58 times larger than leading GPU chips and delivers inference up to 15 times faster than GPU-based solutions on open-source models. The Securities and Exchange Commission declared the registration statement for the securities effective.
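The proceeds figures above follow from simple multiplication of share count by offering price. A minimal sketch of that arithmetic is shown here; the function names `gross_proceeds` and `in_billions` are illustrative, not from the filing. These are gross amounts, so the underwriting discounts and commissions mentioned above, which the article does not quantify, are not deducted.

```python
from decimal import Decimal

def gross_proceeds(shares: int, price_per_share: str) -> Decimal:
    """Gross proceeds in dollars: share count times offering price.

    Decimal avoids binary floating-point rounding on currency values.
    """
    return Decimal(shares) * Decimal(price_per_share)

def in_billions(amount: Decimal) -> str:
    """Format a dollar amount as billions with two decimal places."""
    return f"{amount / Decimal(10**9):.2f}"

OFFER_PRICE = "185.00"        # offering price per share, from the article
BASE_SHARES = 30_000_000      # Class A shares sold in the offering
OPTION_SHARES = 4_500_000     # underwriters' 30-day option

# Base offering only.
base = gross_proceeds(BASE_SHARES, OFFER_PRICE)

# Base offering plus the underwriters' option exercised in full.
with_option = gross_proceeds(BASE_SHARES + OPTION_SHARES, OFFER_PRICE)

print(f"Base offering: ${in_billions(base)} billion")
print(f"With full option: ${in_billions(with_option)} billion")
```

Run as written, this reproduces the $5.55 billion base figure and the roughly $6.38 billion figure with the option exercised in full.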

[22]

Nvidia, AMD, Broadcom And Now Cerebras: AI Chip Trade Gets A New Name - Broadcom (NASDAQ:AVGO), NVIDIA (N