AMD partners with GlobalFoundries for Co-Packaged Optics in Instinct MI500 AI accelerators

2 Sources

[1]

AMD Eyes GlobalFoundries Co-Packaged Optics for Instinct MI500 Accelerators

AMD is reportedly preparing to expand its AI hardware strategy with co-packaged optics (CPO) for the Instinct MI500 generation, a move that could bring GlobalFoundries back into the picture in a much more visible way. The reported plan centers on photonic integrated circuits (PICs) manufactured by GlobalFoundries, with ASE managing packaging and assembly. Rather than focusing only on raw accelerator performance, the goal appears to be improving how future Instinct platforms move data at scale.

That shift makes sense. AI infrastructure is increasingly constrained by interconnect bandwidth, power efficiency, and latency rather than by compute alone. Traditional copper links remain practical, but they become harder to scale as system bandwidth requirements keep rising: signal integrity, power draw, thermal load, and physical routing all become tougher to manage in dense clusters. Co-packaged optics aims to address those issues by moving optical communication much closer to the compute package. This shortens the electrical paths that create bottlenecks and makes large multi-node systems easier to scale.

For AMD, that could be an important step for the Instinct MI500 family, which is expected in 2027. The company's nearer-term roadmap remains centered on the MI400 series, but the follow-up generation gives AMD room to introduce broader platform-level changes. CPO could help increase usable bandwidth between compute nodes while lowering the power cost of data movement across racks and larger AI deployments. In hyperscale environments, that matters just as much as adding more matrix throughput or memory capacity.

The GlobalFoundries angle is also notable for historical reasons. The company originated from AMD's former manufacturing operations before becoming independent, and AMD fully divested its stake years ago. Since then, the relationship has continued in a more limited form, mainly around mature-node products. A return through silicon photonics would not signal a broader foundry shift away from advanced logic suppliers, but it would highlight how AMD is selecting specialists for specific functions in the AI supply chain. GlobalFoundries has been investing in silicon photonics and specialty process technologies, which makes it relevant for optical interconnect work even though it does not compete at cutting-edge GPU process nodes.

ASE's role on the packaging side is equally important. Advanced AI products are no longer defined by a single die or even a single manufacturing source. Packaging, chip-to-chip connectivity, and interconnect integration now play a major role in system capability. Pairing photonics production from one supplier with packaging expertise from another reflects how modern accelerator design is evolving into a multi-company engineering effort.

AMD is not alone in moving this way. NVIDIA is also pursuing optical interconnect technology for future AI platforms, including work tied to Rubin Ultra. That puts AMD's reported MI500 plans into a wider industry context. The next phase of AI hardware competition will depend not only on faster silicon, but also on how efficiently vendors can connect large numbers of accelerators in real deployments. If this partnership takes shape as reported, Instinct MI500 may become one of AMD's most important platform transitions yet, with optical interconnects helping the company address the scaling limits that now define the AI data center race.

[2]

AMD Taps GlobalFoundries for MI500's Co-Packaged Optics as the Silicon Photonics Race With NVIDIA Heats Up

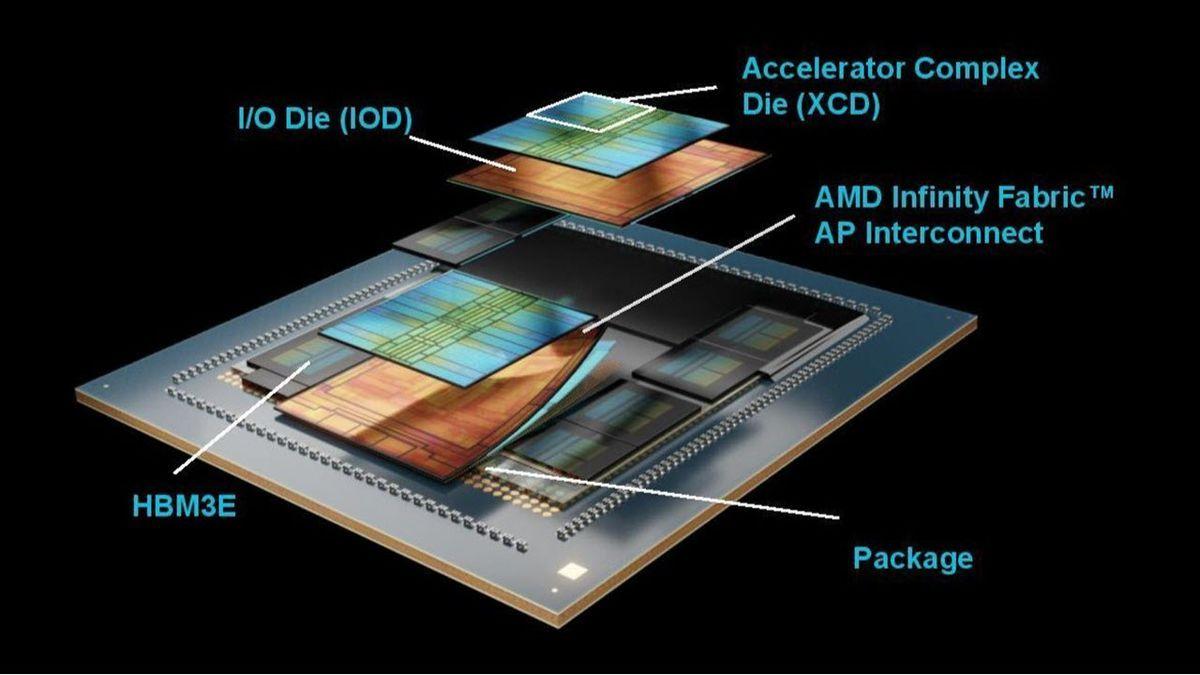

AMD will be leveraging GlobalFoundries to develop its micro-ring modulator (MRM) based Co-Packaged Optics solution for the next-gen Instinct MI500 AI accelerators. Co-Packaged Optics (CPO), a form of silicon photonics, reduces reliance on copper by using light to transfer signals. CPO engines are packaged alongside hardware accelerators such as GPUs and are set to be a key technology for next-gen AI factories, improving interconnect latency and creating high-bandwidth connections between CPUs and GPUs. Both AMD and NVIDIA will be leveraging these technologies for their next-gen AI GPU accelerators.

Based on the latest information, the Photonic Integrated Circuits (PICs) for AMD's MRM-based CPO solution will be manufactured by GlobalFoundries, while ASE will be responsible for the packaging. Last year, AMD acquired photonics specialist Enosemi to accelerate its Co-Packaged Optics work.

Similarly, NVIDIA is said to be working on its own CPO PIC for Vera Rubin accelerators, with TSMC producing the PIC and SPIL handling packaging. The chips themselves will be assembled at Foxconn Industrial Internet (also known as Industrial Fulian), a Foxconn subsidiary. For Rubin Ultra, NVIDIA will prioritize CPO over NPO (Near-Package Optics), and with its Feynman generation of AI accelerators it plans to adopt CPO fully, removing the need for NPO.

As for AMD, the company has already confirmed that its MI500 series will be fabricated on an advanced 2nm process technology. The upcoming MI400 series also uses a 2nm process, but the node used for MI500 is more advanced and offers various enhancements. The chips will be produced at TSMC.

The MI500 accelerators will use the new CDNA 6 architecture (versus CDNA 5 for the MI400 series) and HBM4E memory, which will offer even higher speeds and more memory bandwidth than the 19.6 TB/s of the HBM4-powered MI400 accelerators. Also, contrary to previous reports, AMD does not appear to be switching to the UDNA architecture name for Instinct GPUs. AMD has promised a big jump in AI performance with the Instinct MI500 series: the company is on track to deliver over 1000x higher AI performance in just four years. This is crucial to meet growing AI demand and to keep pace with the competition, which is also accelerating rapidly. MI500 launches in 2027.

AMD is preparing to integrate Co-Packaged Optics into its Instinct MI500 AI accelerators, partnering with GlobalFoundries for photonic integrated circuits and ASE for packaging. The 2027 launch aims to address interconnect bandwidth bottlenecks in AI infrastructure as the silicon photonics race with NVIDIA intensifies.

AMD Instinct MI500 Adopts Co-Packaged Optics for 2027 Launch

AMD is expanding its AI hardware strategy with Co-Packaged Optics for the Instinct MI500 generation, marking a significant shift in how AI accelerators handle data movement at scale [1]. The company will reportedly partner with GlobalFoundries to manufacture Photonic Integrated Circuits (PICs), while ASE handles packaging and assembly [2]. This approach reduces reliance on traditional copper connections by using light to transfer signals, addressing the interconnect bandwidth and power constraints that increasingly limit modern AI deployments.

Source: Wccftech

Silicon Photonics Race Intensifies Between AMD and NVIDIA

The move places AMD in direct competition with NVIDIA in the silicon photonics race. NVIDIA is developing its own CPO solution for Vera Rubin accelerators, using TSMC for PIC manufacturing and SPIL for packaging, with final assembly handled by Foxconn Industrial Internet [2]. For its Rubin Ultra generation, NVIDIA will prioritize Co-Packaged Optics over Near-Package Optics (NPO), eventually eliminating NPO entirely with its Feynman generation [2]. This parallel development signals that optical interconnect technology has become essential for next-generation AI factories, where interconnect bandwidth and latency matter as much as raw compute power.

Addressing Bandwidth Bottlenecks and Power Efficiency

AI infrastructure is increasingly constrained by how efficiently systems move data rather than by processing capability alone. Traditional copper links struggle with signal integrity, power draw, thermal load, and physical routing in dense clusters as bandwidth requirements climb [1]. Co-Packaged Optics addresses these bandwidth bottlenecks by positioning optical communication much closer to the compute package, reducing electrical constraints and improving data movement efficiency across racks and larger deployments [1]. In hyperscale data center environments, lowering the power cost of inter-node communication becomes as critical as adding matrix throughput or memory capacity.

GlobalFoundries Returns Through Specialty Manufacturing

The photonic integrated circuit partnership marks a notable reconnection between AMD and GlobalFoundries. GlobalFoundries originated from AMD's former manufacturing operations before becoming independent, and AMD fully divested its stake years ago [1]. The relationship has continued in limited form around mature-node products, but this photonics work represents a more visible role. GlobalFoundries has invested in silicon photonics and specialty process technologies, making it relevant for optical interconnect work without competing at cutting-edge GPU process nodes [1]. This highlights how AMD is selecting specialists for specific functions in the AI supply chain.

Advanced Process Technology and Architecture Improvements

The MI500 series will be fabricated at TSMC on an advanced 2nm process that is more advanced than the 2nm node used for the nearer-term MI400 series [2]. The AI accelerators will use the new CDNA 6 architecture, compared to CDNA 5 for MI400, and feature HBM4E memory offering higher speeds and bandwidth than the 19.6 TB/s available on HBM4-powered MI400 accelerators [2]. AMD has set a goal of delivering over 1000x higher AI performance in just four years, with the MI500 launch in 2027 representing a crucial milestone in meeting growing AI demand [2].

Source: Guru3D

Multi-Company Engineering Defines Modern Accelerator Design

Advanced AI products now require coordination across multiple specialists rather than relying on a single manufacturing source. Packaging, chip-to-chip connectivity, and interconnect integration play major roles in system capability [1]. Pairing photonics production from GlobalFoundries with packaging expertise from ASE reflects how modern accelerator design has evolved into a multi-company engineering effort. Last year, AMD acquired photonics specialist Enosemi to accelerate its Co-Packaged Optics work [2], further strengthening its capabilities in this critical technology area. If this partnership delivers as planned, Instinct MI500 may become one of AMD's most important platform transitions yet, with optical interconnects helping address the scaling limits that now define competition in the AI data center race.

Summarized by Navi
