Datadog launches GPU Monitoring as AI costs soar and GPU instances hit 14% of cloud compute

2 Sources

[1]

Datadog digs down into GPU efficiency as AI costs soar

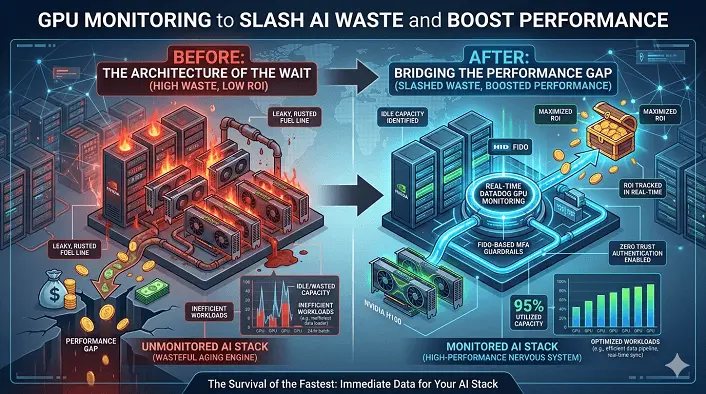

Datadog has added GPU monitoring to its observability stack, giving AI-hungry organizations more insight into exactly what's happening on their most expensive silicon.

The observability vendor says GPU instances now make up 14 percent of cloud compute costs as companies clamber onto the AI bandwagon, and that GPU spend will take up an even bigger proportion of cloud compute spend in the future.

Earlier this month, IDC said: "Worldwide spending on artificial intelligence (AI) infrastructure reached $89.9 billion in Q4 2025," up 62 percent on the year. And accelerated compute - mainly GPUs - is the "structural backbone" of this.

But there's plenty of debate over what value - if any - companies are deriving from their massive AI investments. Datadog is not getting into that bearpit.

But as chief product officer Yanbing Li puts it, "While these companies can see their costs climbing, they can't chargeback GPU spend across business units, see workload context or identify clear next steps for improvement."

To address that, Datadog claims, its latest tool offers unified visibility across the AI stack, "giving customers a single view linking GPU fleet health, cost, and performance directly to the teams relying on them for faster troubleshooting of slow workloads and cost savings."

A longer explainer says the tooling works across both cloud and neocloud instances as well as on-prem GPU fleets - handy if sovereignty concerns are making you wary of AI in the cloud. "It's easy to see how much of your fleet is sitting completely idle or being ineffectively consumed by a workload that doesn't require GPUs at all," it says. "You can drill into the Fleet Explorer to hold each team accountable for their GPU utilization and spend."

As well as identifying stalled or zombie processes soaking up GPU time, it will spot workloads that were never configured for GPUs in the first place, effectively burning cash.

"Internally at Datadog, GPU Monitoring helped us save tens of thousands in monthly expenses by identifying and removing a serving pod that had been stuck in the initialization phase," the explainer said. "Rising costs are often driven by operational inefficiency rather than hardware alone. By linking cost to utilization and workload behavior, teams can reduce waste while maintaining performance."

Datadog is certainly not alone in extending observability further down the AI stack. This week also saw Grafana launch observability tools for AI that provide insights into agent behavior, while its Grafana Cloud platform offers GPU observability tools covering hardware utilization and resource allocation, as well as cost optimization. Earlier this month, Nutanix unveiled a multi-tenancy framework to allow organizations to run more workloads on their existing GPUs, and provide more insight into how AI systems are chewing through tokens.

So, it's getting easier to work out how much individual AI workloads are costing you, and what processes and software misconfigurations could be making bills higher than necessary. This means enterprises can ensure their AI infrastructure and their associated apps and agents are running as efficiently as possible. Whether this means enterprises can actually start working out whether they're getting value from AI investments may be quite another question. ®

[2]

Datadog Launches GPU Monitoring to Slash AI Waste and Boost Performance

The launch of Datadog's GPU Monitoring helps teams plan capacity, troubleshoot issues quickly, prevent costly failures and avoid wasted spend

Datadog, Inc. today announced that GPU Monitoring is available to customers everywhere. The new product addresses one of the most prevalent issues facing organizations today as they look for a scalable and effective way to manage expanding AI costs.

"GPU instances account for 14 percent of compute costs -- which is a huge issue as companies are struggling to build AI-first technology in scalable and smart ways. While these companies can see their costs climbing, they can't chargeback GPU spend across business units, see workload context or identify clear next steps for improvement. As a result, it is very challenging to budget and plan in thoughtful ways," said Yanbing Li, Chief Product Officer at Datadog.

The launch of GPU Monitoring marks one of the first times a single solution provides unified visibility across the AI stack -- giving customers a single view linking GPU fleet health, cost, and performance directly to the teams relying on them for faster troubleshooting of slow workloads and cost savings.

"Smartly managing AI spend becomes a board-level conversation when capacity is misallocated, training and inference workloads stall, and costs escalate. We all know managing GPU costs is a huge problem we need to solve, but most companies are experimenting with solutions and it is still very difficult to get a single view of what is happening across the stack. GPU Monitoring fixes that with efficiency and reliability that we haven't seen before," said Li.

Today, most GPU tools provide high-level device health metrics, but they don't surface cross-functional resource contention issues, explain why training and inference workloads fail, or provide visibility into which devices are idle or ineffectively used. This lack of visibility slows down investigations and means that teams overprovision as the safest default -- leading to wasted spend.

GPU Monitoring streamlines this work by linking fleet telemetry directly to the workloads consuming those resources, and gives platform engineering and machine learning teams a shared view to investigate together, enabling them to:

* Scale AI without overspending: With visibility and forecasting based on the usage patterns of fleets and direct guidance on whether to buy new GPUs or free up existing ones, platform teams avoid expensive purchases and long procurement cycles, machine learning teams get capacity faster, and leadership gets better ROI with predictable spend.
* Accelerate AI delivery: Stalled workloads are correlated directly to the underlying GPUs, pods and processes running them so that teams can troubleshoot performance bottlenecks in minutes instead of hours, allowing engineers to focus on shipping AI projects.
* Avoid costly disruptions: Unhealthy GPUs are proactively identified before failures cascade across a cluster and cause training and inference delays.
* Maximize ROI on GPU spend: Teams are empowered and accountable for their GPU utilization and costs, and can easily pinpoint where they are overserving or underutilizing their GPUs. This allows teams to reclaim and reallocate resources in order to reduce wasted spend.

"Datadog GPU Monitoring has made it easy for us to stay on top of our multi-tenant GPU infrastructure. We get per-instance, per-device visibility into core utilization, memory, power and thermals right out of the box with no extra setup. The dashboards are rich out of the gate and simple to customize, and standing up isolated views per customer takes minutes," said Kai Huang, Head of Product at Hyperbolic. "Layering on LLM Observability ties it all together. We can go from a model latency spike straight to the underlying GPU metrics without switching tools. Full stack AI observability in one platform means both our team and our customers can move faster with confidence."
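
To make the "idle or ineffectively used" idea concrete, here is a minimal sketch of how a fleet might be bucketed by average utilization. It is purely illustrative and is not Datadog's implementation: the function name `classify_gpu`, the device names, the sample values and both thresholds are hypothetical assumptions, and a real tool would draw on far richer telemetry.

```python
from statistics import mean

def classify_gpu(samples, idle_below=5.0, underused_below=30.0):
    """Bucket one GPU by its average utilization (percent).

    The thresholds are hypothetical defaults chosen for illustration only.
    """
    avg = mean(samples)
    if avg < idle_below:
        return "idle"
    if avg < underused_below:
        return "underused"
    return "active"

# Hypothetical utilization samples (percent) per device.
fleet = {
    "gpu-0": [0.0, 1.0, 0.0],
    "gpu-1": [20.0, 25.0, 15.0],
    "gpu-2": [90.0, 85.0, 95.0],
}

report = {name: classify_gpu(samples) for name, samples in fleet.items()}
print(report)
```

In this toy fleet, `gpu-0` lands in the idle bucket and `gpu-1` in the underused one, which is the kind of device a team might reclaim or reallocate.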

Datadog introduces GPU Monitoring to help organizations manage escalating AI costs as GPU instances now account for 14% of cloud compute spending. The observability platform provides unified visibility across AI infrastructure, linking GPU fleet health, cost, and performance to help teams identify inefficiencies, troubleshoot workloads faster, and reduce wasted spending on expensive silicon.

Datadog Addresses Escalating AI Costs with New GPU Monitoring Tool

Datadog has launched GPU Monitoring for its observability platform, targeting one of the most pressing challenges facing AI-driven organizations: managing rapidly escalating AI costs [1]. The timing is critical, as GPU instances now represent 14 percent of cloud compute costs, a figure expected to grow substantially as companies expand their AI investments [2]. According to IDC, worldwide spending on AI infrastructure reached $89.9 billion in Q4 2025, up 62 percent year-over-year, with accelerated compute, primarily GPUs, forming the structural backbone of this growth [1].

Unified Visibility Across the AI Stack Enables Cost Optimization

The challenge for most organizations isn't just rising costs but the inability to understand where money is being wasted. "While these companies can see their costs climbing, they can't chargeback GPU spend across business units, see workload context or identify clear next steps for improvement," explained Yanbing Li, Chief Product Officer at Datadog [2]. GPU Monitoring addresses this gap by providing unified visibility that links GPU fleet health, cost, and performance directly to the teams using them [1]. The tool works across cloud, neocloud instances, and on-premises GPU fleets, making it valuable for organizations with sovereignty concerns about AI in the cloud [1].
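
As a rough illustration of what charging GPU spend back to business units involves, the sketch below attributes cost to teams in proportion to the GPU-hours they consumed. It is an assumption-laden toy, not a description of how Datadog computes chargeback: the `chargeback` function, the team names, the usage figures and the flat hourly rate are all hypothetical.

```python
from collections import defaultdict

def chargeback(usage_records, hourly_rate_usd):
    """Sum GPU cost per team from (team, gpu_hours) records.

    Assumes a single flat hourly rate, which is a simplification;
    real fleets mix instance types and pricing models.
    """
    totals = defaultdict(float)
    for team, gpu_hours in usage_records:
        totals[team] += gpu_hours * hourly_rate_usd
    return dict(totals)

# Hypothetical usage: (team, GPU-hours consumed).
records = [("search", 120.0), ("ads", 40.0), ("search", 40.0)]
costs = chargeback(records, hourly_rate_usd=2.5)
print(costs)
```

The hard part in practice is producing the usage records in the first place, that is, tying each GPU-hour to a workload and a team, which is the gap the product is pitched at.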

Identifying AI Workloads That Waste Resources and Money

The platform excels at spotting GPU efficiency problems that silently drain budgets. Teams can identify portions of their fleet sitting completely idle or being consumed by AI workloads that don't require GPUs at all [1]. It detects zombie processes soaking up GPU time and workloads never configured for GPUs in the first place, effectively burning cash [1]. Datadog's own internal use case demonstrates the impact: GPU Monitoring helped the company save tens of thousands in monthly expenses by identifying and removing a serving pod stuck in the initialization phase [1].

Faster Troubleshooting and Performance Bottleneck Resolution

Most existing GPU tools provide only high-level device health metrics without surfacing resource contention issues or explaining why training and inference workloads fail [2]. This lack of visibility forces teams to overprovision as the safest default, leading to wasted spending [2]. GPU Monitoring streamlines troubleshooting by correlating stalled workloads directly to underlying GPUs, pods, and processes, allowing engineers to resolve performance bottlenecks in minutes instead of hours [2].

AI Infrastructure Efficiency Becomes a Competitive Necessity

"Smartly managing AI spend becomes a board-level conversation when capacity is misallocated, training and inference workloads stall, and costs escalate," Li noted [2]. The tool enables platform engineering and machine learning teams to share a single view for investigation, helping them maximize return on investment with predictable spending patterns [2]. Kai Huang, Head of Product at Hyperbolic, highlighted the practical benefits: "We get per-instance, per-device visibility into core utilization, memory, power and thermals right out of the box with no extra setup," adding that integrating LLM Observability allows teams to trace model latency spikes straight to underlying GPU metrics [2].

Growing Market for AI Observability Solutions

Datadog isn't alone in extending observability deeper into the AI stack. Grafana recently launched observability tools for AI with insights into agent behavior, while Grafana Cloud offers GPU observability covering hardware utilization and resource allocation for cost optimization [1]. Nutanix unveiled a multi-tenancy framework to run more workloads on existing GPUs with better insight into token consumption [1]. As organizations struggle to determine whether they're getting value from massive AI investments, tools that reduce wasted spending while maintaining performance are becoming essential for managing the economics of AI at scale [1].

Summarized by Navi
