Gemini's Personal Intelligence now creates personalized images using your Google Photos library

29 Sources

[1]

Gemini can now create personalized AI images by digging around in Google Photos

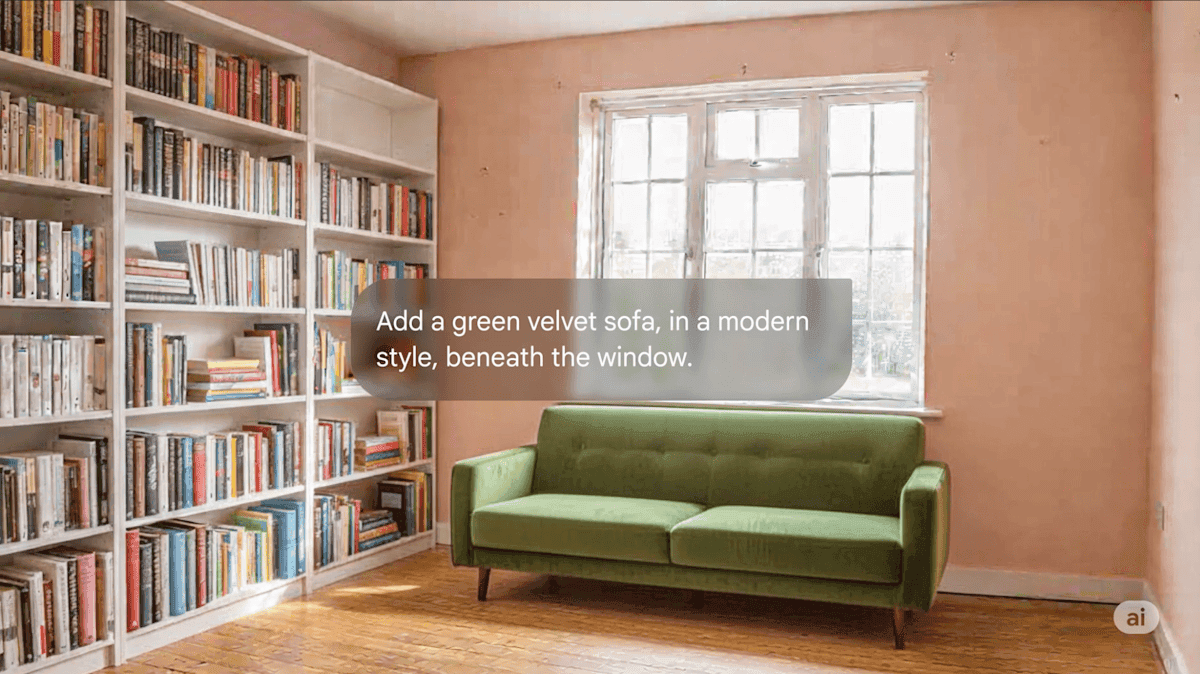

Google began rolling out "personal intelligence" in Gemini early this year, giving AI subscribers the option of a more customized experience when using the company's chatbot. Today, it's using personal intelligence to tie its image-generation model to Google Photos. If you opt in, generated images will have access to your photos and associated labels to simplify prompts and produce more accurate AI images.

This change essentially streamlines an existing workflow. Google's Nano Banana 2 is among the best AI image generators available, and it was already possible to feed it images of yourself or others to use as context for creating new AI content. Adding personal intelligence to the mix makes that process smoother by turning the image bot loose on the content of your photos, if indeed that's something you want to do.

It is generally true that adding more personal data to an AI prompt results in a better output. Google offers a few examples of how connecting Nano Banana to Photos can help in this way. You won't have to pack as much context into your prompts -- you can just refer to "my family" or "my dog" to let the robot find useful images in your Photos library. Perhaps you want a quirky family photo, so you type "create a claymation image of me and my family enjoying our favorite activity." In this prompt, Gemini will rely on labels you've added to Google Photos to identify "family," and the content of the images can inform its determination of a "favorite activity." You could, of course, get a similar result by explicitly telling Gemini to include certain people doing a particular thing, but personal intelligence saves you from that extra typing. It reduces friction, and it could get people to use AI tools more often, which is Google's ultimate goal.

Perfectly imperfect

Google notes that the new feature is still evolving, so it might not always choose the right images. If that happens, you may want to check the sources list to see what went wrong.
It will list the images referenced in the prompt, and you can also ask Gemini in a follow-up prompt about the images it chose. Manually selecting photos with the plus button in Gemini can help address these shortcomings. While Nano Banana 2 can now peruse your Google Photos library when generating an image, Google stresses that it's not retaining that data for training. The distinction between using your data in a prompt and training AI with it can be confusing, but Google says it doesn't use any images from your library in training. However, it does use the inputs (what you type) and outputs (what the model does as a result) to improve AI products. That can still include personal data about you, but it's not the same as pouring all your photos into Nano Banana's training data. Regardless, the entire endeavor may still give people the creeps. The good news is that you don't have to let Nano Banana into your photo library, even if you use it to generate images. Personal intelligence is off by default, and it's currently only available to those on paid Google AI plans (the Nano Banana tie-in is available even for those on the budget Plus plan). However, as we've seen in the past, AI features often start on the paid tiers before expanding to everyone with a Google account. Gemini is aggressive about asking users to enable personal intelligence, so you'll probably see these popups in the future, even if you're not paying for AI. Personal intelligence also connects Gemini to Gmail, YouTube, and other Google services, but you can decide which ones are allowed when you set it up.

[2]

Google adds Nano Banana-powered image generation to Gemini's Personal Intelligence | TechCrunch

Google on Thursday announced that Gemini's Personal Intelligence feature will get Nano Banana-powered image generation to create images with personalized context. That means its AI images can be created using Gemini's understanding of your likes and interests, without those having to be explicitly noted in the prompt. This works because Gemini already has context of your data through Google account connections, such as Gmail and Google Photos. So, instead of typing "Generate an image of my dream home, my interests are tennis and music," you can now just say, "Design my dream home." What's more, the Nano Banana-powered connection can also use the labels in your Google Photos, so that it understands names and words that describe a group, like "Family". For instance, you can create an image by saying, "Generate an image of my family and me doing our favorite activity." The company said the "sources" button will show how Gemini derived the context for image generation. Google said that just like other connections, Gemini might get the context wrong, and you can provide feedback. Plus, you can also provide reference photos for image generation by clicking the "+" icon. The image generation feature will be available to Plus, Pro, and Ultra subscribers in the U.S. within the coming days. Google plans to bring the feature to Gemini in Chrome desktop and to other users soon. Google first launched Personal Intelligence earlier this year and made it available to all U.S. users in March. Earlier this week, the company expanded the feature to more users in countries like India and Japan.

[3]

Google brings its Gemini Personal Intelligence feature to India | TechCrunch

Google announced on Tuesday that it's bringing Gemini's Personal Intelligence feature to users in India. The feature lets users connect their Google accounts, such as Gmail and Google Photos, then ask Gemini questions to get personalized answers. After connecting their services, users could ask something like, "What are my travel plans for Jaipur?" to get information from their emails or photos. The feature can also refer to recent YouTube videos that users have watched to get ideas. The company said that Gemini will identify sources for its answers so you can verify details, if needed. At launch, the Personal Intelligence feature will be limited to AI Pro and AI Ultra users in India. However, Google said that it aims to expand it to free users in the coming weeks. The expansion to India brings Gemini's capabilities to another sizable market. Google first debuted Personal Intelligence in beta in the U.S. in January, for some of the paid tiers. The company made it available to all users in the U.S. in March and has also launched the feature in Japan. Google cautioned that Gemini doesn't always get the context in your data right and might make connections between completely unrelated topics. "Gemini may also struggle with timing or nuance, particularly regarding relationship changes, like divorces, or your various interests. For instance, seeing hundreds of photos of you at a golf course might lead it to assume you love golf. But it misses the nuance: you don't love golf, but you love your son, and that's why you're there. If Gemini gets this wrong, you can just tell it ("I don't like golf")," the company explained in a blog post. The company is seeding advanced AI features to users in India, one of its biggest markets, at a quick pace. In March, the company launched Gemini in Chrome for users in the country. Last week, it also enabled an agentic flow of booking restaurants through AI mode in India by partnering with platforms like Zomato, Swiggy, and EazyDiner.

[4]

Gemini can now pull from Google Photos to generate personalized images

Google's Personal Intelligence feature, which lets Gemini pull data from apps like Google Photos to offer responses tailored to you, can now use that data and its Nano Banana 2 image model to create images based on your personal context. With the feature, you can use prompts like "Design my dream house" or "Create a picture of my desert island essentials" and the photos Gemini creates will "automatically reflect your specific tastes and lifestyle, gleaned from the Google apps you've connected to," Google says in a blog post. Under the hood, the integration uses your labels in Google Photos to identify people like you, your friends, and your family, and then Nano Banana 2 creates the image, spokesperson Elijah Lawal tells The Verge. Google notes that if you do opt in to Personal Intelligence, the company will not "directly train" its AI models on your private Google Photos library. However, it does train on "limited info" such as "specific prompts in Gemini and the model's responses." Google says the feature will be rolling out "over the next few days" to eligible AI Plus, Pro, and Ultra subscribers in the US. The feature is set to come to Gemini on Chrome desktops and "more users" soon.

[5]

Gemini can now draw on your Google data to personalize the images it generates

Your Google Photos library could soon influence the kind of images you can generate with Gemini. After letting users personalize the AI assistant's responses with data from Gmail, Search and YouTube, Google says it's bringing that same "Personal Intelligence" to Nano Banana 2 to make it easier for users to create personalized images with the AI model. The goal is to have the data affiliated with your Google account -- your YouTube history, emails, Google Photos, etc. -- provide context to Nano Banana 2 so you don't have to. Rather than prompting Gemini's image generation model with information about you or photos of your belongings, a direction to "create a picture of my desert island essentials" should produce an image that includes the things you care about without any extra context. Similarly, if you use labels in Google Photos to identify people or pets, you can tell Gemini to "create a hand-drawn illustration of mom," and it should be able to use Google Photos' labels to find the right reference photo and create an image of the right person. If Gemini creates images that don't look right, you can still send a follow-up prompt to refine the result, or select a new source image from Google Photos with the "+" button. Google says you can also click the "Sources" button to view what images the AI referenced in the first place, or ask it directly for the attribution and sources used for a specific image. Personalized user data is one of the unique advantages Google has over companies offering competing AI assistants, so expanding Personal Intelligence to an already popular feature like image generation is a natural way to build on that lead. For now, this more personalized version of Nano Banana 2 is available in the Gemini app for eligible AI Pro and AI Ultra subscribers. Google says the feature will come to Gemini in Chrome and other users "soon."

[6]

Google will let users connect their photos to the Gemini chatbot and Nano Banana

Google said Thursday it will allow users to connect their Personal Intelligence, an AI feature that connects Google apps for personalized answers, with its Gemini chatbot. Once a user opts in, Nano Banana can create personal images based on that user's private Google Photos library, rather than requiring images to be manually uploaded to the chatbot. Users can ask Gemini to "create a claymation image of me and my family enjoying our favorite activity" and Gemini can generate that specific image for them automatically, the company said in its blog post announcement. Nano Banana was a hit when it launched last year, as people began uploading personal photos to create digital miniature figurines of themselves. It was so popular that it overloaded the company's infrastructure, forcing Google to place temporary limits on usage to ease the burden on its custom-designed chips called tensor processing units. It also pushed the Gemini app to the number one spot on Apple's App Store, de-throning OpenAI's ChatGPT. Despite the popularity, the ability to directly connect to a user's photo library represents a bigger step in the AI chatbot link to private information.

[7]

Google adds Nano Banana image generation to Gemini's Personal Intelligence feature

In short: Google has added Nano Banana-powered image generation to Gemini's Personal Intelligence feature, letting the AI create images informed by a user's Gmail, Photos, Calendar, Drive, and other Google app data. The feature rolls out to Plus, Pro, and Ultra subscribers in the US first, with Europe excluded from the initial global launch. Nano Banana is Google's native image generation family for Gemini, now spanning three model versions. Google has added Nano Banana-powered image generation to Gemini's Personal Intelligence feature, letting the AI create images that draw on a user's personal context across Gmail, Photos, Calendar, Drive, and other Google apps. The update means Gemini can now generate images informed by who you are and what you do, not just what you type into the prompt. The feature is rolling out to Plus, Pro, and Ultra subscribers in the United States in the coming days, with free users expected to gain access over the next few weeks. Google says it plans to expand to Gemini in Chrome on desktop and to additional markets, though Europe is notably excluded from the initial global rollout of Personal Intelligence. Nano Banana is Google's native image generation capability for the Gemini model family, distinct from Imagen, Google's dedicated text-to-image line. Where Imagen is built for users who prioritise quality, iteration speed, and professional workflows, Nano Banana is designed for conversational image generation within the Gemini interface, accepting text, images, or both as inputs. The family now includes three versions. The original Nano Banana, built on Gemini 2.5 Flash, handles basic conversational image generation. Nano Banana 2, launched in February 2026 on Gemini 3.1 Flash, combines the advanced features of the Pro version with faster iteration speeds. 
Nano Banana Pro, built on Gemini 3 Pro, incorporates the model's full reasoning and real-world knowledge into image generation, producing outputs that reflect deeper understanding of prompts rather than surface-level pattern matching. The technical advantage Google claims is that Nano Banana uses the Gemini model's language understanding to capture prompt nuance in ways that standalone image generators cannot. Because the image generation is native to Gemini rather than bolted on as a separate system, the model can reason about what you are asking for before generating the image, drawing on context from the conversation and, now, from your personal data. Personal Intelligence is Google's framework for connecting Gemini to a user's Google account data. Launched in January 2026, it lets Gemini access text, photos, and videos from Gmail, Calendar, Drive, Google Photos, YouTube, Search, Maps, and other first-party apps. The feature is opt-in, with users controlling which apps Gemini can access, and Google says the AI does not train on personal data. Until now, Personal Intelligence has primarily powered text-based personalisation: answering questions about your travel plans by reading your Gmail confirmations and calendar entries, or making shopping suggestions based on purchase history. Adding Nano Banana image generation extends the personalisation to visual outputs. Google's example use cases include generating images that incorporate your personal photos, creating visuals informed by your preferences and context, and producing outputs that reflect an understanding of your life rather than generic stock imagery. A "sources" button shows how Gemini derived the context for each personalised image, giving users visibility into which personal data informed the output. This is a meaningful transparency feature in a product category where the provenance of AI-generated content is increasingly contentious. 
Google is not the first to combine personal context with AI image generation, but it has a structural advantage that no competitor can easily replicate: it already has more personal data than any other consumer technology company. Gmail, Google Photos, Drive, Calendar, Maps, Search, and YouTube collectively represent a more comprehensive picture of a user's life than any single app or platform can offer. Connecting that data to a capable image generator creates a personalisation moat that is difficult for OpenAI, Apple, or Meta to match without equivalent data breadth. The timing also matters. ChatGPT's image generation capabilities have driven significant user engagement for OpenAI, and Apple Intelligence has been integrating on-device AI features across the iPhone ecosystem. Google's response is to lean into what it does best: cross-product integration powered by its unmatched data infrastructure. On-device image generation with Gemini Nano is also coming to Pixel phones and Android devices, which would enable instant, private generation without cloud dependency. That combination, cloud-powered personalised generation for complex requests and on-device generation for speed and privacy, positions Google to cover both ends of the use case spectrum. The obvious concern is that giving an AI image generator access to your personal photos, emails, and browsing history creates risks that Google's opt-in controls may not fully address. Google says it does not train on personal data, but the feature necessarily involves processing that data to generate contextually relevant images. The distinction between "training on" and "using for inference" is technically meaningful but may be lost on users who simply see an AI that knows what their house, their children, or their holiday looked like. Europe's exclusion from the rollout suggests that Google's own assessment is that the feature may face regulatory friction under GDPR and the AI Act. 
The company has form here: Gemini's initial launch was also delayed in Europe, and Google has repeatedly had to navigate the gap between the personalisation its products depend on and the data protection frameworks that European regulators enforce. For users who opt in, the value proposition is clear: an AI assistant that can generate images reflecting your actual life, preferences, and context rather than producing generic outputs from a prompt alone. For users who are wary of handing that much personal context to an AI system, the "sources" button offers some transparency, but the fundamental trade-off, personalisation in exchange for data access, is one that Google has been asking its users to make for two decades. This is simply the latest, and most visually expressive, version of that bargain. Google has not disclosed pricing changes or additional costs for Nano Banana-powered Personal Intelligence image generation. The feature appears to be included in existing subscription tiers, which range from the free plan through Plus, Pro, and Ultra, with the pace of rollout tied to subscription level.

[8]

Google Makes Image Generation a Little Creepier With Personal Intelligence

Earlier this year, Google introduced Personal Intelligence, a feature that goes beyond just letting its Gemini AI remember conversations you have with it directly and gives it access to your internet history. On Thursday, the company announced that it will expand the reach of Personal Intelligence to its image generation model Nano Banana, allowing it to pull details from your life to create more personalized visual outputs -- you know, in case you were feeling like your AI-generated images were feeling a little impersonal, for whatever reason. Here's the problem Google says it is solving: "One of the biggest hurdles in AI image generation is finding the right prompt. Previously, to get a result that felt truly personal, you had to write long, detailed descriptions and manually upload a reference photo just to give Gemini the right context." It's addressing that "issue" by extending Personal Intelligence to Nano Banana 2, the search giant's flagship image generation model, to "automatically fill in the blanks, grounding every creation in the things you care most about." The company claims that the feature will get rid of the "heavy lifting" of image generation. "Instead of writing out the intricate details of your life, you can use simple prompts like 'Design my dream house' or 'Create a picture of my desert island essentials,' and the results will automatically reflect your specific tastes and lifestyle, gleaned from the Google apps you've connected to," the company explained. When Google first introduced Personal Intelligence, it provided users the option to give Gemini access to their Google Photos library. If they have done so, or if they decide to now, Gemini will pull images directly from their photo library in the image generation process. The model will use the labels users have applied to their images to identify people, places, and things to pull the most relevant material.
"With those labels in place, you can simply ask Gemini to 'create a claymation image of me and my family enjoying our favorite activity' and Gemini can generate that specific image for you automatically," Google said. That task would have previously required uploading a reference image. Now it gives Gemini the ability to use any of a user's existing photos as the reference. As with the launch of Personal Intelligence, Google is offering assurances that by opting in to allow it to use your images for image generation, it won't turn your content into training data for the model. The company said, "The Gemini app does not directly train its models on your private Google Photos library. We train on limited info, like specific prompts in Gemini and the model's responses, to improve functionality over time." It's probably worth flagging the words "direct" and "limited" there, as they appear to be doing some heavy lifting in trying to maintain user trust. According to Google, Personal Intelligence for image generation will roll out in the coming days in the Gemini app and will be available for all paid subscribers on either a Google AI Plus, Pro, or Ultra plan. The feature will eventually make its way to Gemini in Chrome and other platforms, as well. So if you've decided that the level of cognitive offloading that you want is deferring to AI to tell you what your own dream house would be, give it a shot.

[9]

Gemini's most personal feature is making its biggest expansion yet

The company also teases that Personal Intelligence for free Gemini users globally will be here "in the next few weeks." Our modern AI era has made plenty of big promises, but one with the most potential to be really impactful has been the availability of AI agents that really get to know us and our needs on a holistic level. Back at the start of the year, Google announced Personal Intelligence for Gemini, designed to help solve problems by leaning on broad contextual knowledge of our digital lives. And now Google shares that Personal Intelligence is making a big, global expansion.

[10]

Gemini's Personal Intelligence hits Nano Banana for truly personal image generation

By Karandeep Singh Oberoi

Earlier this year, Google transformed Gemini into a true assistant by giving it personal knowledge. The knowledge I'm talking about comes via the 'Personal Intelligence' feature, which allows Gemini to tailor its responses by connecting itself to your Google ecosystem. This includes integration with apps like Gmail, Photos, Search, and even your YouTube History.

Now, in an attempt to supercharge the tool, Google is finally bringing a Nano Banana 2 integration to Personal Intelligence, allowing you to use your interests and preferences for image generation. Up until now, you've had to rely on relevant prompts to be able to generate personalized images. The latest Personal Intelligence integration means you can put those lengthy prompts behind you. The integration also leverages your Google Photos library, essentially turning your memories into a blueprint for relevant image generation.

A lot of your most significant moments live in your Google Photos library. By connecting your Google Photos library to Personal Intelligence, Gemini goes a step further than just understanding your interests. It can use actual images of you and your loved ones to guide the image generation process. Gemini uses labels found in your Google Photos gallery, and that's how it can generate relevant images when you ask it to generate an image of "Dad and I."

It's worth noting that this isn't a forced experience. Connecting your Google apps, including Photos, to Gemini is an opt-in experience, and you can disconnect the integration at any time in your settings. Additionally, considering that this is a new experience, Gemini might not always get things a hundred percent correct. In those situations, users will be able to tap the '+' icon and "select a different reference photo from your Google Photos library to try a new perspective."

The new experience is rolling out now to Google AI Plus, Pro, and Ultra subscribers in the US. It should be widely visible over the next few days.

[11]

Gemini app using Personal Intelligence, Photos for tailored Nano Banana generation

With the international expansion underway, Gemini is now bringing together Personal Intelligence, Google Photos, and Nano Banana 2. Image generation in the Gemini app will now "feel deeply personal." This is meant to replace how you currently have to "write long, detailed descriptions and manually upload a reference photo just to give Gemini the right context." Personal Intelligence is leveraged with Nano Banana to "automatically reflect your specific tastes and lifestyle" as gleaned from existing Gemini chats. By integrating this context directly with Nano Banana 2, Gemini can automatically fill in the blanks, grounding every creation in the things you care about most. If you have manually connected Google Photos to Personal Intelligence, Gemini will "use actual images of you and your loved ones to guide the image generation process." Google is specifically leveraging the labels of people and pets in your Photos library: "you can simply ask Gemini to 'create a claymation image of me and my family enjoying our favorite activity' and Gemini can generate that specific image for you automatically." Same prompt from different accounts: "Create a claymation image of me and my family enjoying our favorite activity. We want to be standing in the center of the image, staring straight forward." Google cautions that "Gemini might not always pick the exact photo or detail you had in mind on the first try." Tap the Sources button to see "which image was auto-selected to guide the creation." On the privacy front, the Gemini app "does not directly train its models on your private Google Photos library." Google adds: "We train on limited info, like specific prompts in Gemini and the model's responses, to improve functionality over time." Personalized image creation in Gemini is rolling out to Google AI Plus, Pro, and Ultra subscribers in the US over the next few days. It will come to Gemini in Chrome and more users "soon."

[12]

I just tried Gemini's new 'Personal Intelligence' -- it's the end of AI image prompting as we know it

Generating the perfect AI image sounds easy enough until you realize the prompt and the actual image don't seem to match. Luckily, the days of long, complex descriptions are gone. Today, Google is changing the game by introducing Personal Intelligence with Nano Banana image generation. This new integration allows Gemini to use your interests, preferences and even your private Google Photos library to "fill in the blanks" of your imagination automatically. Here's how it works.

Skip the prompting

Even as a prompt engineer, I can honestly say the biggest hurdle in AI has always been the prompt. But with Personal Intelligence, Gemini now has an inherent understanding of your life from your Google Docs, Photos or even Gmail. Instead of writing a paragraph to describe your aesthetic, you can now use simple commands:

* "Design my dream house" -- Gemini will automatically reflect your specific tastes and lifestyle gleaned from your connected Google apps.
* "Create a picture of my desert island essentials" -- The AI draws on your established interests to populate the image.

Photos starring you and your loved ones

The most impressive update is the deep integration with Google Photos. Gemini can now use actual images of you, your family and even your pets to guide the generation process. Since you can already label people and pets in your library, Gemini uses those labels to make your inner circle the stars of the show. You can ask for a "claymation image of me and my family enjoying our favorite activity," and Gemini handles the rest. Beyond claymation, you can experiment with:

* Watercolors
* Charcoal sketches
* Oil paintings
* Cartoons/comic

The privacy factor

Personal Intelligence is something users can enable or toggle off at any time. But even still, giving an AI access to your photo library sounds like a privacy nightmare. However, Google is leaning heavily into an "opt-in" model. Here's what that looks like:

* No direct training: Google states the Gemini app does not directly train its models on your private Google Photos library.
* Total transparency: A new "Sources" button allows you to see exactly which photo was auto-selected to guide a creation.
* Manual control: If Gemini picks the wrong reference, you can click a '+' icon to select a different photo or simply tell the AI what it got wrong.

How to get it

This feature is powered by the new Nano Banana 2 model and is rolling out now to Google AI Plus, Pro, and Ultra subscribers in the U.S. While it's starting on mobile, Google plans to bring these personalized tools to Chrome on desktop soon. Give it a try and let me know in the comments what you think. And feel free to share some of your photos with me. I'd love to see what you create.

[13]

Gemini can now see your Google Photos -- and generate AI images of 'you' from them

Gemini can now browse a vast library's worth of images about you and produce its own AI creations that are based on them. The AI chatbot's Personal Intelligence features, designed to help Gemini adapt to your preferences and personality, are now, with your permission, able to browse your Google Photos library. The Nano Banana 2 image-making model can then use that information to shape its visuals without lots of explanation on your part. Instead of carefully writing prompts that explain your appearance, your environment, or your general vibe, you can simply say "me" and let the system handle the rest.

Once you give Gemini permission to access your photos, you type a prompt as usual. The difference is that Nano Banana 2 now fills in the blanks using what it already knows. The output is not just based on your words, but on a version of you assembled from your own images.

Cinematic life

To see how well Gemini now knew me, I gave it a fairly open-ended prompt. "Create a cinematic scene from my life as if it were a movie." The result leaned heavily into an atmosphere of anticipation. A very good AI likeness of me stares pensively out at the rain in some sort of old hotel. I am apparently planning a big adventure, judging from the notebook, compass, and small plane outside, but I look as if I haven't made a decision. It's essentially a more dramatic version of many photos of me on trips or in hotel lounges with guidebooks. A cinematic, more narratively interesting version of many of my photos.

Fantastic me

Next, I wanted to see if Gemini would still be able to recognize me if it put me in a less realistic setting. So I asked it to "turn me into a character in a fantasy adventure." This time, realism disappeared in favor of scale and spectacle. I was placed on a winding stone path that stretched toward a towering castle carved into a mountainous landscape. My clothes had transformed into layered armor and leather, complete with a satchel marked by glowing symbols.
A staff replaced the compass, emitting a faint light, while strange creatures hovered nearby as if they were part of the same ecosystem. Despite the setting, the continuity was impressive. The face was clearly mine, not a generic placeholder but a direct translation from my photos. The expression, slightly serious, slightly focused, carried over without exaggeration. Even the posture felt familiar. That consistency made the image more effective than it might have been otherwise. It did not feel like a fantasy character loosely inspired by me. It felt like me inserted into a fantasy world, which is a very different experience. The system was not inventing a new identity. It was adapting an existing one. Hobby extreme As Gemini had proven it knew what I looked like and what a movie version of me might be like, I asked for a more holistic, if over-the-top interpretation. I asked Gemini to "create an image of me doing my favorite hobbies in an extreme or exaggerated way." The result was far less restrained. I appeared in a vividly stylized scene that blended multiple interests into a single, energetic composition. Books floated midair, pages turning as if caught in a controlled storm. A small plane cut across the background, while futuristic cityscapes and distant planets filled the horizon. I was mid-motion, holding objects that hinted at different pursuits, reading, exploring, creating, all dialed up beyond anything that would happen in real life. The tone was exaggerated but not random. Each element seemed to connect back to something the system had inferred from my photos and context. Except for the wings. I don't know what they were about, and Gemini seemed at a loss to explain the reason behind it. The illustrative style was bold, but even so, the underlying likeness remained consistent. The feature sets up a much more powerful way to teach Gemini about yourself. Traditional AI image generation depends heavily on how well you describe what you want. 
Here, description becomes secondary. The system is already working from a base layer of information. Prompts become shorter and easier to write, and results feel more specific without requiring extra effort. How comfortable you are in sharing that information with Google is another question, of course. The company is good at encouraging people to upload their life story, but in this case, if you already use Google Photos, you're not adding to the database Google already hosts for you. And the frictionless way you get from a brief idea to a personalized artwork is undeniably appealing. Even if it makes you part bird for no particular reason.

[14]

Nano Banana can now make AI images based on your Photos library

Google announced today that the Gemini Personal Intelligence feature is now available in Nano Banana 2, the company's popular AI image model. Now, instead of uploading a photo, users can give Nano Banana access to their Google Photos library, which will allow Nano Banana to generate personalized images for users. "One of the biggest hurdles in AI image generation is finding the right prompt," reads a Google blog post. "Previously, to get a result that felt truly personal, you had to write long, detailed descriptions and manually upload a reference photo just to give Gemini the right context. Now, Personal Intelligence gives Gemini an inherent understanding of your preferences from the start." Nano Banana is one of the web's leading AI image generators, and it's particularly good at editing photos. With Personal Intelligence, Nano Banana can reference your images and Labels to make photos based on you, your pets, or anything else in your library. Google gives several examples of how this could be useful. For instance, instead of uploading an image of your family and writing a detailed prompt, you can simply tell Gemini to "Make a claymation image of my family." Google also suggests prompts such as "Design my dream house" and "Create a picture of my desert island essentials." Users will need to organize and label their photos for the feature to work as intended, however. Of course, before granting an AI tool like Gemini or Nano Banana access to your entire photo library, it's important to understand how your images will be used. Google says that Gemini will not "directly" train its models on your photos; however, it will be able to train its models with the photos, prompts, and AI-generated images that appear in the Gemini app. "The Gemini app does not directly train its models on your private Google Photos library," the blog post states. 
"We train on limited info, like specific prompts in Gemini and the model's responses, to improve functionality over time. And connecting your Google apps to Gemini remains an opt-in experience that you can adjust in your settings at any time." As ever, it's important to check the fine print before using a new feature like this. You can read more about training and privacy at the Google Gemini Privacy Hub.

[15]

Gemini's smartest feature expands globally, but not everywhere

Rajesh started following the latest happenings in the world of Android around the release of the Nexus One and Samsung Galaxy S. After flashing custom ROMs and kernels on his beloved Galaxy S, he started writing about Android for a living. He uses the latest Samsung or Pixel flagship as his daily driver. And yes, he carries an iPhone as a secondary device. Rajesh has been writing for Android Police since 2021, covering news, how-tos, and features. Based in India, he has previously written for Neowin, AndroidBeat, Times of India, iPhoneHacks, MySmartPrice, and MakeUseOf. When not working, you will find him mindlessly scrolling through X, playing with new AI models, or going on long road trips. You can reach out to him on Twitter or drop a mail at [email protected]. In January this year, Google announced Personal Intelligence for Gemini. It turned the AI assistant into a personal assistant by letting it pull data and contextual information from your Google account, Gmail, Photos, and even YouTube's search history. However, the feature was only available in the US, with Google expanding it to free users a couple of months later. Now, it's making the feature available globally, with a few exceptions. Gemini's Personal Intelligence feature is now available to users in almost all major countries worldwide, except for the UK, Nigeria, Korea, Switzerland, and the European Economic Area. For now, users on Google's paid AI plans -- Plus, Pro, and Ultra -- get first dibs on the feature. However, the company promises to roll out Personal Intelligence to free users as well in the coming weeks. When you access Gemini through the web, a card to enable Personal Intelligence should appear. From there, you can link various Google services to get more personalized responses. Alternatively, head over to the Connect apps section in Gemini's settings and enable the toggle for all Google services you want to link to the AI assistant. 
For privacy reasons, the feature will remain off by default. Google also makes it clear that it will not train Gemini on your Gmail, Google Photos, or other personal data. Since Google is rolling out the feature in phases, it may not appear immediately for you. You can take control of Gemini's memory in just one tap It's possible to turn off Personal Intelligence for a specific conversation in Gemini. For this, tap the Tools icon in the Gemini chat box and turn off the Personal Intelligence toggle. This change will only apply to the current conversation. For future chats, Gemini will again pull context and data from your linked Google services. You can also tell Gemini to regenerate a response without pulling contextual information from your Google account. Google notes in its announcement that while it has worked on ensuring Personal Intelligence does not make mistakes, you may still get incorrect replies or answers linking two unrelated topics. In such cases, Google urges you to press the thumbs-down button and provide feedback on the generated response.

[16]

Google Gemini now generates AI images from personal data

Google's Gemini app is rolling out a personalized image generation feature that draws on users' connected Google apps and Google Photos libraries to produce AI images without requiring detailed prompts. The capability is available to Google AI Plus, Pro, and Ultra subscribers in the U.S. and will expand to Gemini in Chrome desktop and additional users in the coming weeks, the company said. The feature works through Gemini's Personal Intelligence system and its Nano Banana 2 model. The underlying mechanism relies on account-level context Gemini has already built up -- through connections to services like Gmail -- so a short, unadorned prompt like "Design my dream house" can return results shaped by a user's tastes without requiring any additional personal detail. Linking Google Photos to Personal Intelligence unlocks an additional layer of specificity: Gemini can read the labels and named groupings -- such as "Family" -- that a user has applied within the library, enabling prompts that call up recognizable people without requiring a user to manually upload or identify any photos. Style options include watercolors, charcoal sketches, and oil paintings. For situations where the output misses the mark, TechCrunch reported that Google has built in several corrective options: direct feedback to the model, a "+" icon for swapping in a different reference photo, and a Sources button that surfaces the contextual basis Gemini used to generate the image. On privacy, Google said the Gemini app does not train its models on users' private Google Photos libraries. The company said it trains on limited information, such as specific prompts and model responses, to improve functionality. Connecting Google apps to Gemini remains an opt-in setting that users can change at any time. The feature lands as Gemini's image tools have drawn competitive attention. 
OpenAI upgraded ChatGPT Images with GPT Image 1.5 -- framed as a response to the attention Google's Nano Banana models attracted -- with the company emphasizing faster performance, lower API pricing, and improved consistency across iterative edits. Google's own developer documentation maps Nano Banana to Gemini 2.5 Flash Image and Nano Banana Pro to Gemini 3 Pro Preview. Personal Intelligence debuted earlier this year, reaching all U.S. users in March before Google extended access internationally to markets that now include India and Japan. The new image generation capability has also drawn scrutiny in the context of broader privacy concerns around Gemini: a proposed class-action lawsuit filed in federal court in San Jose, California accused Google of activating Gemini by default across Gmail, Google Chat, and Meet without user consent, alleging potential violations of California's Invasion of Privacy Act.

[17]

Gemini now makes personalized images by understanding your taste from Photos library

Up until now, using Google Gemini meant being very specific. If you wanted an image, you'd spell it all out, the mood, the lighting, the tiny details, just to get something close to what you had in mind. That's still how most AI tools operate. But this is where things start to shift. With the integration of Nano Banana 2 and Google Photos, Gemini feels much more familiar. It leans on your preferences, what you like, what you usually capture, and the kind of visuals you gravitate towards, and uses that context to shape what it creates for you. So instead of over-explaining every prompt, you're nudging it in a direction, and it fills in the rest in a way that feels personal. The goal here is simple: spend less time describing and more time seeing your ideas come to life, almost the way you imagined them, without having to say everything out loud.

Reality is no longer imagined

Do you remember those Instagram reels that made you comment just to get a prompt? The slightly annoying kind. Because deep down, they knew if they didn't hand you the "right" words, your result probably wouldn't match what you had in mind. That whole process feels a bit outdated now. With Nano Banana 2, you don't really have to chase the perfect prompt anymore or sit there overthinking every word. You just bring your context, and Gemini sort of fills in the gaps on its own. It picks up on what you mean. And the best part is, there's nothing extra you need to set up. If your Google apps are already connected to Gemini, your context is already there. It's ready when you are, without you having to piece everything together first.

When your past starts painting your present

So here's what Google is really nudging you to do: link Google Photos with Gemini. And honestly, it makes sense. For most people, Photos is where life stacks up. Your people, your moments, your personality, all sitting there without you having to explain a thing. Once that connection is in place, Gemini has real context. You can say something like, "Create an oil painting image of me and my dog enjoying our playtime," and it doesn't start from scratch. It pulls from what it already knows. Your faces, your moments, the little patterns in your life. The result feels a lot more you than something vaguely personalized.

That said, it's not perfect on the first go. Google has already pointed out that Gemini might miss the exact photo or detail you had in mind initially. So you nudge it, refine it, tweak it a bit. The usual dance. Also, this isn't instant magic. There's a bit of patience involved. Gemini is essentially learning you as it goes, and that kind of understanding doesn't happen in a snap. But once it starts clicking, the process feels more like shaping a memory into something new.

What I really think about this

Google is very clear about one thing. Privacy, it says, is a top priority. And that sounds really reassuring. So far, most of our digital lives have already been living in the cloud. Emails, documents, app activity, all tied neatly to an ID we use almost everywhere. It's familiar at this point, almost invisible. But Photos feel different. They're not just data points. They're people, places, moments you didn't stage for an algorithm. And that's where this shift starts to feel a bit more personal. Linking Google Photos to Gemini means giving it access to those moments. Not just to organize them, but to interpret them, learn from them, and use them to create something new. That's powerful, no doubt. But it also feels like crossing a line that's been sitting there.

Google has tried to address this in its blog posts. It explains how your data is handled, how controls are in place, and how you're still in charge. And to be fair, those safeguards matter. They do. But trust isn't just built on explanations. It's also about comfort. And this is where it becomes less about what's possible and more about what feels right.
For me, handing over that level of personal context just to get slightly better, more tailored images doesn't quite balance out. The trade-off feels a bit too steep. I'd rather take the extra minute to describe what I want, even if it's not perfect, than open up parts of my life that were never meant to be part of that process. Because at the end of the day, convenience is great. But not when it starts asking for pieces of you that you're not ready to give.

[18]

New ways to create personalized images in the Gemini app

Personal Intelligence makes the Gemini app feel tailored to you, not just a generic tool that works the same for everyone. Today, we're introducing new ways for Gemini to use your interests and preferences with Nano Banana 2 and Google Photos to make image generation -- one of your favorite ways to use Gemini -- feel deeply personal. This lets you create unique images more easily, so you can spend more time creating and less time explaining. One of the biggest hurdles in AI image generation is finding the right prompt. Previously, to get a result that felt truly personal, you had to write long, detailed descriptions and manually upload a reference photo just to give Gemini the right context. Now, Personal Intelligence gives Gemini an inherent understanding of your preferences from the start. By integrating this context directly with Nano Banana 2, Gemini can automatically fill in the blanks, grounding every creation in the things you care about most. And since this is built into how you normally use the Gemini app there's no extra setup. If you've already linked your Google apps, that personal context is ready and waiting the moment you start creating images. This removes the heavy lifting. Instead of writing out the intricate details of your life, you can use simple prompts like "Design my dream house" or "Create a picture of my desert island essentials" and the results will automatically reflect your specific tastes and lifestyle, gleaned from the Google apps you've connected to.

[19]

Gemini Can Now Create AI Images Using Your Own Photos and Videos

You'll need a Google AI subscription to try out the new feature. Gemini has long been able to connect to other Google apps, but earlier this year those integrations were made tighter and more seamless with a feature called Personal Intelligence. Now, Personal Intelligence is expanding into Google Photos and picking up AI image creation capabilities, courtesy of the Nano Banana 2 model. The idea is that you don't have to manually select a picture in Google Photos and tell the AI to do something with it. Instead you just type a prompt such as "create a cartoon showing my family enjoying our favorite activities," and Gemini will do the rest -- mining your Google Photos library for the relevant information and people. Another example prompt Google gives is "create a watercolor image of my dream house nestled in my favorite setting." You can see how the new integrations save you time -- you don't have to explain what your dream house or your favorite setting look like, as long as Gemini can work it out from your photos. "Since this is built into how you normally use the Gemini app there's no extra setup," says Google. "If you've already linked your Google apps, that personal context is ready and waiting the moment you start creating images... the results will automatically reflect your specific tastes and lifestyle, gleaned from the Google apps you've connected to." The upgraded Personal Intelligence experience is rolling out now inside the Gemini app for users in the U.S., but you need to be a paying customer to access it, on either the AI Plus, AI Pro, or AI Ultra plans. Google says access for more users and support for Gemini inside Chrome is coming soon. This is being pushed out now to Google AI subscribers in the U.S., so if that includes you then you shouldn't have to do anything special to get the new feature in Gemini on the web or on mobile. You may well see a pop-up message inside the app announcing that you've got the upgrade, which is what Google often does. 
With the Create image option selected, you can simply type out what you want to see, and Gemini takes care of the rest. Something like "create a sketch of my family on vacation at the beach" or "make a photo collage of my desert island essentials" should work, if there's enough information to go on in Google Photos. Google says Gemini will look at the labels you've applied in Google Photos, such as the names of people and pets, to try and work out what you're asking for. There's clearly quite a bit of educated guesswork going on with the AI here, and "Gemini might not always pick the exact photo or detail you had in mind on the first try," according to Google. You can always click on the Sources button underneath a generated AI image to see the photos that Gemini has picked as reference points, and ask Gemini to make edits to what's been created using follow-up prompts. You can also click the + (plus) button on the prompt box if you want to point Gemini toward a different reference photo. There is something a little creepy about prompting Gemini using these intimate details about your life, but it's only really the integration between apps that's new: If you use Google Photos, then it's constantly using AI to recognize what's in your pictures so you can better sort through them and organize them, including family members and pets. Google says Gemini doesn't "directly" train its AI models on your photos, but instead uses "limited" information from them to improve the user experience. Connecting Google Photos to Gemini remains an opt-in choice, and one you can reverse at any time: Inside the Gemini app, click the cog icon (on the web) or tap your profile picture (on mobile), then choose Connected apps to make changes.

[20]

Google is expanding Personal Intelligence to Gemini users globally and it's a huge shift

Free users, your turn with Personal Intelligence is coming very soon. If you have been waiting for Gemini to actually feel like it knows you, your wait is almost over. Google's Personal Intelligence, which launched earlier this year for paid US subscribers, is now rolling out globally. What is Gemini Personal Intelligence and what can it do? Personal Intelligence connects Gemini to your Google apps. Think Gmail, Google Photos, YouTube, Search, Maps, Calendar, Drive, and more. It uses your existing data to give smarter, more tailored responses without requiring you to explain everything each time. The use cases are genuinely impressive. Ask Gemini for shopping recommendations, and it will factor in your recent purchases and style preferences. Stuck troubleshooting a device you do not remember buying? It can pull the exact model from your purchase receipts in Gmail. If you are planning a trip with a tight layover, Gemini can use Personal Intelligence to check your gates, walking time, and meal preferences all at once. It can even suggest a new hobby based on patterns it notices across your activity. Google says this is an opt-in feature, so you choose which apps to connect. Importantly, Gemini does not train directly on your Gmail or Photos data. It references them to answer your questions, but keeps the underlying personal content separate from model training. Who can use Gemini's Personal Intelligence feature? Personal Intelligence works across desktop, Android, and iOS with languages supported by Gemini. The global rollout is now live for Google AI Plus, Pro, and Ultra subscribers everywhere except the European Economic Area, Switzerland, and the UK. Free Gemini users globally will get access within the next few weeks. Why does this matter? Personal Intelligence is probably the most significant thing Google has done with Gemini so far. Gemini is slowly becoming the kind of AI assistant that actually understands your life, not just the internet. 
With access to Gmail, Photos, Maps, and more, Gemini will no longer feel like a generic chatbot and will instead behave like a genuine personal assistant. No other AI assistant comes close to having this kind of data advantage baked in from the start. Apple Intelligence is still finding its feet and Microsoft's Copilot lives mostly inside productivity tools. Meanwhile, OpenAI's ChatGPT has no first-party data ecosystem of its own. Google, on the other hand, already has your entire digital life across billions of users. In an AI race where rival companies are all building toward personalization, Google, with its unmatched ecosystem, is uniquely positioned to win it.

[21]

Google integrates Nano Banana 2 into Personal Intelligence

Google announced an enhancement to Gemini's Personal Intelligence feature that will include Nano Banana-powered image generation, allowing for personalized images without explicit prompts. The AI utilizes existing user data from Google account connections, such as Gmail and Google Photos, to generate images based on users' preferences. Users can simply say, "Design my dream home," rather than outlining specific interests. The Nano Banana-powered feature can interpret labels in Google Photos, enabling context-aware image requests such as, "Generate an image of my family and me doing our favorite activity." A "sources" button will display how Gemini derived the context for the image generation. Users will have the ability to provide feedback if the context is misunderstood, and they can also submit reference photos by clicking the "+" icon. This image-generation feature will be available to Plus, Pro, and Ultra subscribers in the U.S. in the coming days. Google plans to introduce the image generation feature to Gemini on Chrome desktop and to other users soon. Personal Intelligence was initially launched earlier this year, becoming available to all U.S. users in March. Recently, the feature was expanded to users in countries such as India and Japan.

[22]

Gemini's Personal Intelligence Rolls Out to More Regions Worldwide - Phandroid

A while back, Google announced that it was working on bringing "Personal Intelligence" features to the Gemini app, which would give users a more personalized experience by tapping into different apps such as Gmail, YouTube, and Photos. Google is now rolling out the feature worldwide, albeit with some exceptions. Google says that the feature will first arrive for Google AI Plus, Pro, and Ultra subscribers across all supported languages, with a broader rollout to free users coming in the next few weeks. It should be noted, however, that some countries will not be part of the rollout -- more specifically, Google specifies that the European Economic Area (EEA), Switzerland, and the United Kingdom will not receive the update, at least for the time being. Announced back in January for users in the United States, Personal Intelligence integrates with every model available within Gemini, and links up with different Google apps to provide more tailored answers to queries, with details and information relevant to each particular user. It's an optional feature, of course, and can be disabled if not needed.

[23]

Gemini Personal Intelligence Rolls Out in India With These Features

The assistant retrieves and combines information from multiple sources

Google on Monday announced the rollout of Personal Intelligence for Gemini in India. The feature expands the AI assistant's capabilities with contextual responses and improved app integration. First introduced in the US earlier this year, Personal Intelligence allows Gemini to connect with select Google apps and utilise that data to provide more accurate and detailed responses to queries. The Mountain View-based tech giant says its rollout is part of ongoing efforts to expand Gemini's functionality across services such as Search, Gmail, and Chrome.

Gemini Personal Intelligence Expands to India

With Personal Intelligence, users can connect Gemini with apps such as Gmail, Google Photos, YouTube, and Search. Once enabled, the AI assistant will retrieve and combine information from the aforementioned sources under Google's umbrella to respond to queries that require context across multiple apps. The feature is optional and turned off by default, with users given control over which apps to link, the company explained in a blog post.

Google provided an example of planning a trip to Jaipur to illustrate how Personal Intelligence works. If a user asks Gemini about their travel plans, the assistant can pull booking confirmations from Gmail, retrieve relevant images such as saved maps or notes from Photos, and even suggest places based on recently watched YouTube videos. All of this, more importantly, happens within a single response.

As per the tech giant, Personal Intelligence is designed to reason across multiple sources and retrieve specific details. It combines both of these capabilities to generate responses. The company also notes that Gemini can cite the source of the information, and users can ask follow-up questions for further clarification. The company stated that the connected data is used only to respond to queries and is not directly used to train AI models.
Users can disable the feature at any time or use temporary chats without the personalisation aspect. Google, however, acknowledged that Personal Intelligence is still evolving and may return inaccurate or overly personalised responses at times. There may also be instances where Gemini could misinterpret context, such as inferring interests incorrectly. Users can correct it directly during conversations and provide feedback using the thumbs-down option. Personal Intelligence is being rolled out to Google AI Plus, Pro, and Ultra subscribers in India, with wider availability expected in the coming weeks. To enable the feature, users can navigate to the Gemini app, head over to Settings > Personal Intelligence, and choose the apps they want to connect.

[24]

Google expands Personal Intelligence globally to all Gemini languages

Google has announced the global expansion of its Personal Intelligence feature for the Gemini application, although availability will not extend to European markets such as the European Economic Area, Switzerland, and the UK. Initially launched at the beginning of the year, Personal Intelligence integrates data from Gmail, Photos, YouTube, and Search to enhance user experience by understanding individual contexts and needs. The rollout now includes all supported Gemini languages and offers access to users with the more affordable AI Plus plan. Prior to this expansion, Personal Intelligence was limited to paid subscribers (AI Pro and AI Ultra) in the United States. Google plans to extend access to free Gemini users worldwide, with an anticipated rollout scheduled for the next few weeks. The feature aggregates user data to provide tailored responses. For example, it can answer vehicle-related queries by sourcing information from Google Photos and other Google services. Users can expect an improved experience with Personal Intelligence as it becomes more widely available. Google stated, "Personal Intelligence is making a big, global expansion," signaling the company's intent to enhance the tool's relevance and application in daily digital interactions. The upcoming rollout aims to make Personal Intelligence functionality accessible to a broader audience, enhancing user engagement across varying subscription tiers.

[25]

Google brings Personal Intelligence to Gemini users in India, four months after beta launch in US - The Economic Times

Google rolled out the Personal Intelligence feature to Gemini users in India on Tuesday, four months after its beta launch in the US. With Personal Intelligence, Google aims to make its artificial intelligence (AI) assistant Gemini more personalised for users by using their information across apps like Gmail, Google Photos, YouTube, and Search. Last month, Google rolled out the Personal Intelligence experience to all users in the US across AI Mode in Search, Gemini on Chrome, and the Gemini app. Eligible users will either get an invite on the Gemini home screen, or they may enable the feature manually in the AI assistant app's Settings. Users will simply have to enable the Personal Intelligence feature and choose Connected Apps (such as Gmail and Photos). Once enabled, it works across web, Android, and iOS, and is available with all models in the Gemini model picker. Personal Intelligence enables Gemini to reason better across multiple sources and synthesise information from emails, photos, and online activity to deliver more tailored and context-aware responses. This reduces the need to manually search across platforms and enables a more seamless, integrated experience. The experience is currently being rolled out to personal Google accounts of eligible Google AI Plus, Pro, and Ultra subscribers. Access for free users will be extended later.

Does it not raise privacy concerns?

While this may raise privacy concerns among users, the tech giant lets them choose which apps to link to power Personal Intelligence. By default, app connections are turned off, and users can enable or disable these connections at any time. Google claims that Gemini only accesses data to respond to specific queries, and users can see or request explanations for how their information was used. "Gemini doesn't train directly on your Gmail inbox or Google Photos library. 
We train on limited info, like specific prompts in Gemini and the model's responses, to improve functionality over time," Google India wrote in a blog post. Users will also get controls to manage personalisation, to regenerate responses without personal context, or to use temporary chats for non-personalised interactions. Yet, the tech giant has warned that users may encounter occasional inaccuracies or 'over-personalisation,' where unrelated data is incorrectly linked. Users can flag and give feedback on such responses.

[26]

Google Gemini adds personalized image creation with Google Photos integration

Google is rolling out a new Personal Intelligence feature in the Gemini app that makes AI image generation more context-aware and personalized. The update combines Nano Banana 2 with Google Photos integration so users can create images based on their lifestyle, preferences, and real-world memories without needing long prompts or manual uploads. Gemini is shifting from prompt-heavy generation to context-driven creation. Once users connect their Google apps, the system automatically uses available context to understand intent from simple prompts like "Design my dream house." Instead of requiring detailed instructions or reference images, Gemini fills in missing details using signals from connected services. This makes image generation faster and more aligned with the user's personal style, interests, and past activity, while reducing the effort needed to describe ideas. With Google Photos integration, Gemini can recognize and use labeled people and pets to include real individuals in generated images. This allows the system to build scenes featuring the user's personal circle in both realistic and stylized formats. Users can turn real-life moments into creative outputs or reimagine them in different artistic styles without uploading any files or manually selecting reference images, as the system already understands relationships through existing photo labels. Users can refine generated results if they are not accurate or aligned with expectations. They can provide feedback and regenerate images with improved instructions. A built-in "+" option allows switching reference images from Google Photos to influence different outcomes. The Sources view shows which photo was selected for generation and explains how it contributed to the final result, giving users visibility into the process. Google states that private Google Photos libraries are not used directly to train Gemini models. 
Instead, model improvements rely on limited interaction data such as prompts and generated responses. The feature is fully opt-in, and users can manage or disconnect connected Google apps anytime through settings, ensuring full control over personal data usage. The feature is rolling out over the coming days to Google AI Plus, Pro, and Ultra subscribers in the United States. Google also plans to expand it to Gemini on Chrome desktop and more users in future updates.

[27]

Google Gemini Personal Intelligence launched in India

Earlier this year, Google introduced the Personal Intelligence feature for Gemini in the United States. The company has now officially expanded this personalized AI experience to users in India. The feature is designed to securely integrate information across a user's Google applications -- such as Gmail, Google Photos, YouTube, and Google Search -- to provide tailored responses and streamline digital tasks based on individual context. Personal Intelligence operates on two primary capabilities: reasoning across varied, complex information sources and retrieving specific details from linked applications. By synthesizing text, photos, and video data, the AI attempts to deliver highly contextualized answers. Practical Application: If a user asks, "What are my travel plans for Jaipur?", the system can bypass a standard search and instead: This integration is designed to reduce the need for users to manually search through multiple apps or their browsing history. Google emphasizes that Personal Intelligence was built with a central focus on user privacy and data control. Google clarifies that Gemini does not train directly on a user's personal Gmail inbox or Google Photos library content. Instead, the model is trained on limited data, such as specific user prompts and the AI's subsequent responses, only after personal information has been filtered or obfuscated. The system is trained to understand how to locate relevant details when requested, rather than "learning" the underlying personal data itself. As the feature is currently in a beta phase, Google notes that users may encounter inaccuracies. One noted issue is "over-personalization," where the model draws incorrect connections between unrelated topics. The AI may also struggle with context and nuance, particularly regarding changes in relationships or varied interests. 
For example, the system might incorrectly assume a user enjoys golf based on numerous photos taken at a golf course, missing the nuanced context that the user was actually there to spend time with a family member. The Personal Intelligence feature with Connected Apps is currently rolling out to personal Google accounts in India for eligible Google AI Plus, Pro, and Ultra subscribers. Google plans to expand access to free tier users in the coming weeks. Once enabled, the feature is accessible across Web, Android, and iOS platforms and functions across all models available in the Gemini model picker. Users retain full control to adjust settings, disconnect apps, or delete their chat history at any time. How to Enable Personal Intelligence: If an invitation prompt does not appear automatically on the Gemini home screen, users can enable the feature manually by following these steps:

[28]

Google Gemini can now generate personalised images using Google Photos: Here is how

The feature is powered by Google's Personal Intelligence system and its Nano Banana 2 image generation model. Google has announced a new update for the Gemini app that makes AI image generation more personal. The company says Gemini can now create images based on a user's interests, preferences and photos stored in their Google Photos library. The goal is to make image creation easier and reduce the need for long and complicated prompts. Until now, users often had to write detailed prompts and upload reference photos to generate images that felt personal. With the new update, Gemini can understand more about the user from connected Google apps and automatically use that information while creating images. Personal Intelligence and Nano Banana 2 together allow Gemini to use context from Google apps that a user has connected to their account. For example, instead of writing a long description, users can simply type prompts like 'Design my dream house' or 'Create a picture of my desert island essentials.' Gemini will then generate images that reflect the user's preferences using information gathered from connected apps. If users link their Google Photos library, Gemini can use labelled photos of people and pets to guide the image creation process. This allows the AI to include real people from the user's life in the generated images. For instance, users can ask Gemini to 'create a claymation image of me and my family enjoying our favorite activity,' and the AI can generate an image using photos from the library as references. Users can also try different art styles such as watercolour paintings, charcoal sketches and oil paintings. If the generated image is not accurate, users can give additional instructions to refine it. Users can also manually choose a different reference photo from their Google Photos library. Google explains that Gemini does not directly train its models using a user's private Google Photos library. The new personalized image creation experience in the Gemini app is rolling out over the next few days to eligible Google AI Plus, Pro and Ultra subscribers in the US. Google plans to bring the feature to Gemini in Chrome on desktop and to more users soon.

[29]

Google launches Gemini Personal Intelligence in India: What is it and how to use it

Once available, the feature works across the web, Android and iOS and with all of the models in the Gemini model picker. Google has officially launched its Gemini Personal Intelligence feature in India. The feature was first introduced in the US earlier this year and is designed to make the AI assistant more useful. With Personal Intelligence, Gemini can connect to apps across your Google account to better understand your context. Once enabled, it can pull information from services like Gmail, Google Photos, YouTube and Search to answer questions more accurately and help with everyday tasks. Google says the feature is built with privacy in mind. The connections are turned off by default, and users can choose exactly which apps they want Gemini to access. The company also says the AI does not directly train on data from Gmail or Photos. Personal Intelligence works by connecting information from multiple Google apps so Gemini can understand your activities and preferences. According to Google, it has two main strengths: reasoning across different sources and retrieving specific details from connected apps to answer your question. For example, if you ask Gemini, 'What are my travel plans for Jaipur?', the AI can check your booking confirmations in Gmail and organise them into a timeline. It can even retrieve details like screenshots or saved photos related to your trip from Google Photos. Also, if you recently watched a video on YouTube about local food trends, it could suggest a restaurant based on that. Gemini will also try to show where information came from so users can verify it. If an answer seems wrong, you can correct it, for example by saying, 'Remember, I prefer window seats.'
Google says Personal Intelligence is rolling out to eligible personal Google accounts in India for Google AI Plus, Pro and Ultra subscribers. The company also plans to expand it to free users in the coming weeks.

Google expands Gemini's Personal Intelligence feature to include Nano Banana-powered image generation. AI subscribers can now create personalized AI images by letting the chatbot access their Google Photos library, which simplifies prompts and produces more contextually accurate results. The feature remains optional and is currently available to Plus, Pro, and Ultra subscribers in the US.

Gemini Brings Personal Intelligence to Image Generation

Google has expanded its Personal Intelligence feature in Gemini to include Nano Banana-powered image generation, allowing AI subscribers to create personalized AI images that draw on their Google data without extensive prompting [1]. The new capability, announced Thursday, enables users who connect their Google accounts to generate personalized images based on context gleaned from their Google Photos library, Gmail, YouTube history, and other connected Google apps [2].

Instead of typing detailed image prompts like "Generate an image of my dream home, my interests are tennis and music," users can now simply say "Design my dream home" and the image generation model will automatically reflect their specific tastes and lifestyle [2]. The system leverages labels users have added to Google Photos to identify people, pets, and groups, meaning a prompt like "create a hand-drawn illustration of mom" or "create a claymation image of me and my family enjoying our favorite activity" can produce accurate results without manually uploading reference photos [5].

How Personal Intelligence Streamlines the Workflow

This update essentially streamlines an existing workflow that previously required users to manually feed images into Nano Banana 2, one of the best AI image generators available [1]. By turning the image bot loose on the content of a user's photo library, Personal Intelligence reduces friction and saves users from extra typing when crafting image prompts [1].

Users can verify how Gemini derived context by clicking the "sources" button, which displays the images referenced in generating personalized answers to queries [2]. Google acknowledges the feature is still evolving and might not always choose the right images from a user's photo library [1]