Iran War Disinformation Crisis: AI-Generated Content Floods X With Fake Videos and Images

14 Sources

[1]

Fake AI Content About the Iran War Is All Over X

When disinformation expert Tal Hagin asked Grok to verify a post on X about Iranian missiles that had supposedly struck Tel Aviv, Elon Musk's AI-powered chatbot failed miserably. Grok repeatedly misidentified the location and date for the video, which was originally shared on X by an Iranian state-owned media outlet on Sunday. Then the chatbot tried to prove its point by sharing an AI-generated image. "Now Grok is replying with AI slop of destruction," Hagin wrote in response. "Cooked I tell you." The interaction neatly sums up just how unhinged from reality X has become since the US and Israel began their attack on Iran on February 28. As WIRED reported at the time, the social media platform was quickly flooded with disinformation by accounts sharing fake and repurposed videos. As the conflict has continued, the flood has only gotten worse. In recent days, it's been supercharged by AI images and videos, while Grok has repeatedly given false information when asked to verify claims made on the platform. AI images are being shared by paid accounts bearing blue checkmarks and by Iranian officials seeking to portray exaggerated damage. The proliferation of easy-to-access AI image and video generation tools has led to increasingly sophisticated fake content. On March 2, for example, Iranian officials and state media shared AI-generated videos of a high-rise building in Bahrain on fire. The videos and images appear realistic enough to fool many: One image of a US B-2 bomber being shot down by Iran with US troops detained was viewed over a million times before it was deleted, while images of members of Delta Force being captured by Iranian authorities were viewed over 5 million times before they were deleted. Some of the AI content promoted on X is less realistic. One video, for example, purports to show Iranian forces manufacturing missiles deep inside a cave. However, the video has still been shared by multiple accounts and viewed over a million times. 
AI is also being used by the Iranian government to push overtly antisemitic narratives, with accounts in a pro-regime propaganda network on X sharing AI-generated posts depicting Orthodox Jews leading American soldiers to war or celebrating American deaths, according to researchers from the Institute for Strategic Dialogue (ISD), who shared their analysis with WIRED. A number of accounts in this pro-regime network also shared a fake video that supposedly showed a line of young girls, wearing only underwear, walking past President Donald Trump. The post was viewed over 6.8 million times, according to ISD, before being taken down, though it continues to be shared by other accounts on X. "What is particularly unique about this war is the dramatic uptick in AI-generated content I find myself debunking," Hagin tells WIRED. "This is likely due to AI being advanced enough to fool journalists, and the ease with which users can create this AI slop with zero consequences. The longer we go without regulations against AI abuse, the more harm will be caused. I see the proliferation of AI-based fake news pushing us over the edge of a fact-based world unless we enact change now." When the flood of AI-generated fakes began taking over the platform last week, X announced it would temporarily demonetize blue checkmark accounts if they post AI-generated videos of armed conflict without a label. X did not respond to a request for comment about how many accounts it had demonetized since introducing the measure. Until recently, a number of Iranian officials appeared to be paying X for its premium service, which provided their accounts with blue checkmarks, boosted engagement, and created the potential to earn money for their posts.

[2]

State actors are behind much of the visual misinformation about the Iran war

As attacks spread after the bombing of Iran by U.S. and Israeli forces, a video circulated widely of crowds peering up at fire, smoke and debris coming from the top of a high-rise building said to be in Bahrain. Social media users claimed an Iranian attack had hit the skyscraper. But while buildings in Bahrain have been struck by Iranian missiles during the Iran war, this video wasn't real. It was generated with artificial intelligence and shared by accounts associated with the Iranian government as part of an effort to amplify its successes. There are multiple clues that the video was not authentic, including two cars on the left side of the clip that appear stuck together and a man in the bottom-right corner whose elbow seems to move straight through a backpack. A deluge of misrepresented or fabricated videos has spread widely online since the Iran war began last weekend, fueled in part by state-linked propaganda and influence campaigns -- particularly around who is winning the war and how many casualties there have been. "The content that's coming from state actors tends to be a little better targeted," said Melanie Smith, senior director of policy and research on information operations at the Institute for Strategic Dialogue. "They have a very clear kind of narrative structure and the videos are just used to support some kind of statement they want to make about the conflict and about the kind of geopolitical situation writ large." Pro-Iran social media accounts have adopted a narrative that exaggerates the destruction and death tolls wrought by the country's military -- a position supported by what is being reported in Iranian state media. This has led to a large number of AI-generated videos of supposed air strikes, such as the one of the Bahraini high-rise on fire. 
An ongoing Russia-aligned influence operation called Operation Overload, also referred to as Matryoshka or Storm-1679, has been posting videos designed to impersonate intelligence agencies and news outlets, undermining people's sense of safety in an effort to sway their behavior -- a tactic the network has previously used during election cycles. For example, it shared a warning falsely attributed to Israeli intelligence telling Israelis in Germany and the U.S. to be cautious when in public or to not go outside at all. Misrepresented and fabricated videos have been a key feature of other recent conflicts, such as the Russia-Ukraine and Israel-Hamas wars, but experts say a major difference now is the lack of information from the Iranian public due to internet shutdowns and general censorship -- a loss of perspectives that could have worked both for and against the Iranian government. "In Ukraine, that message was so full-throated it really changed the entire dynamic of the conflict because the world really aligned with the perspective of Ukrainians facing the attacks and showing resilience in light of the attacks, but we're sort of missing that story from Iran," said Todd Helmus, a senior behavioral scientist at RAND who studies irregular warfare, terrorism and information operations. In search of clicks, opportunistic social media users not affiliated with state actors have also contributed heavily to the misinformation that has spread during the first days of the Iran war, presenting old footage from other conflicts as recent, sharing video game clips as real and posting their own AI-generated content. AI, in particular, has helped fuel misinformation in ways that weren't possible during past conflicts, even just a few years ago. Coupled with state-linked disinformation and censorship, this creates an even wider vacuum in which the truth can get lost. 
"The volume of AI content is starting to just pollute the information environment in these kinds of crisis settings to a really terrifying degree," Smith said. "The inability to get access to verified and credible information in times like this -- it's getting harder and harder to do that." Nikita Bier, X's head of product, wrote in a Tuesday post that the platform will suspend users from its revenue-sharing program if they post AI-generated content from an armed conflict without a proper disclosure. The suspensions are 90 days for a first offense and permanent after that. Emerson Brooking, director of strategy and resident senior fellow at the Atlantic Council's Digital Forensic Research Lab, warns that social media platforms are now frontlines in war, and that users should be aware of their potential to be used by state actors, even if they are located thousands of miles away from on-the-ground action. "If you're in these spaces, just understand that this is an extension of the physical battle space," he said. "That there are actors on all sides of the conflict that are actively trying to spread propaganda and disinformation to convince you that certain things are true that aren't. That your eyeballs and your attention are an asset."

[3]

AI-generated Iran war videos surge as creators use new tech to cash in

An unprecedented wave of AI-generated misinformation about the US-Israel war with Iran is being monetised by online creators with growing access to generative AI technology, experts have told BBC Verify. Our analysis has found numerous examples of AI-generated videos and fabricated satellite imagery being used to make false and misleading claims about the conflict which have collectively amassed hundreds of millions of views online. "The scale is truly alarming and this war has made it impossible to ignore now," says Timothy Graham, a digital media expert at the Queensland University of Technology. "What used to require professional video production can now be done in minutes with AI tools. The barrier to creating convincing synthetic conflict footage has essentially collapsed," he says. The US and Israel began launching strikes on Iran on 28 February. In response, Iran has launched drone and missile attacks on Israel, as well as multiple Gulf nations and US military assets in the region. Many have turned to social media to search for and share the latest information and to help make sense of a fast-moving week of conflict. The platform X announced this week it will temporarily suspend creators from its monetisation programme if they post AI-generated videos of armed conflict without a label. The scheme rewards eligible users whose posts create large numbers of views, likes, shares and comments with payments from the platform. "It's a notable signal that they've noticed that this is a big problem," says Mahsa Alimardani, a researcher specialising in Iran at the Oxford Internet Institute. We asked TikTok and Meta, the parent company of Facebook and Instagram, if they intend to take similar action, but they did not respond to our requests for comment. A typical example of an AI-generated video that BBC Verify has tracked appears to show missiles striking the city of Tel Aviv in Israel as the sound of explosions rings out in the background. 
This video has been featured in more than 300 posts which have then been shared tens of thousands of times across social media platforms. Some X users turned to the platform's AI chatbot Grok to confirm the video's veracity. But in many cases seen by BBC Verify, Grok wrongly insisted that the AI-generated video was real. Another fake video, viewed tens of millions of times, claims to show Dubai's Burj Khalifa skyscraper in flames, while a crowd of people seem to be running towards the building. This AI-generated footage spread widely online at a time of considerable concern from residents and tourists about the drone and missile strikes on the city. "Fake videos like these have a detrimental impact on people's trust in the verified information they see online and make it much harder to document real evidence," says Alimardani. A new feature of this conflict analysed by BBC Verify is the emergence of AI-generated satellite imagery. We verified multiple real videos showing Iranian drone and missile strikes on the US Navy's Fifth Fleet headquarters in Bahrain on the first day of the conflict. A fabricated photo, shared on X by the state-linked newspaper The Tehran Times, began to spread the following day and claimed to show extensive damage to the base. The fake appears to be based on real satellite imagery of a US naval base in Bahrain taken in February 2025, which is publicly available online. According to Google's SynthID watermark detector, the fake image was generated or edited with a Google AI tool. Three vehicles parked outside are also in exactly the same spots in both the genuine satellite imagery and the AI picture, despite the photos allegedly having been taken a year apart. Google's AI tools, including its video generator Veo, are among a growing list of popular AI platforms, alongside OpenAI's Sora model, the Chinese AI app Seedance, and Grok, which is built into X. 
"The number of different tools that are now available to create a wide range of highly realistic AI manipulations is unprecedented," says Henry Ajder, a generative AI expert. "We have never seen these tools so available, so easy and so cheap to use," he says. This has led to a surge of AI-generated content online "because the pipeline onto social media can now be almost fully automated," says Victoire Rio, executive director of the technology policy non-profit What To Fix. X's head of product said on Tuesday that "99%" of the accounts spreading AI-generated videos like these were trying to "game monetization" by posting content that will generate large amounts of engagement in return for payment through the app's Creator Revenue Sharing programme. The platform does not publish how many accounts are part of the programme, or how much money they can make. But Graham estimates that X could pay about "eight to 12 dollars per million verified user impressions". "Creators have to hit five million organic impressions in three months, plus hold an X premium subscription, to be eligible," he added. "Once you're in, viral AI-generated content is basically a money printer," he says. "They've built the ultimate misinformation enterprise." X did not respond to our request for comment or our questions about the Creator Revenue Sharing programme. Experts have told BBC Verify that while many social media companies say they are trying to change their moderation and detection systems to address the scale and speed at which AI-generated content spreads, there is no simple solution to the problem. "The deeper issue is that engagement-driven monetisation and accurate information are fundamentally in tension, and no platform has fully resolved that tension or perhaps ever will," says Graham.

[4]

Iranian TV and Social Media Project Defiant and Distorted View of the War

On Iran's official television networks and through a network of affiliated or sympathetic social media accounts, the country is striving to present a resolute image despite thousands of strikes from Israel and the United States that have hammered its cities, military bases and political leadership. It is waging an information war parallel to the real-world fighting, blending fact and fiction, often using unproven claims and fake videos generated using artificial intelligence. In Tehran's telling, Iranian missiles have ravaged Tel Aviv and other Israeli cities, its jets have decimated an American aircraft carrier, and hundreds of Americans have been killed at bases and embassies around the region. The messages convey resilience, presenting the country as not only fighting back but winning. In reality, while Iran has retaliated on multiple fronts, damaging Israeli cities and nearby American bases, the country's counteroffensive has resulted in fewer deaths and less damage than its state media has described. "It's flooding the zone with content that projects strength in the wake of attacks on Iran -- and it's similarly distorting the picture of what is actually happening inside the country," said Moustafa Ayad, a researcher at the Institute for Strategic Dialogue, a group in London that studies disinformation. Propaganda has long been a feature of warfare, but the sweep of social media and the persuasiveness of artificial intelligence have made influence campaigns farther reaching and often more effective. Russia has made such campaigns a central tactic in its war in Ukraine, and Iran, a Russian ally, has adopted similar methods, including the increasing use of A.I. To Iran's adversaries, the danger of its media apparatus is clear. The United States has made an effort to debunk some of the claims. 
Among Israel's bombing targets, along with military and government infrastructure, was the hub of Islamic Republic of Iran Broadcasting, the state television and radio broadcaster. The United States and Israel have also tried to shape coverage of the conflict, including by providing limited information about some of the damage they have sustained. With the internet inside Iran once again shut down and largely inaccessible, Iran's propaganda appears focused more on swaying international audiences, friends and foes alike. Much of Iran's official communication strategy -- issued in Farsi, Arabic and English -- has sought to inflate the success of Tehran's counteroffensive in effusive terms, with one senior official saying in a statement aired on state television that its "extensive and successful operation" against Israel and other countries had "left all military experts in awe." State-linked outlets are also amplifying unverified claims and half-truths, according to Alethea, a digital risk analysis company. An unverified claim that the Iranian military completely destroyed an American radar installation in Qatar, for example, was shared in an online article by the Tehran Times newspaper and in an X post accompanied by an A.I.-manipulated image from the Tasnim news agency, according to Alethea. Tasnim crowed in one article that Iranian forces shot down an American fighter jet near Kuwait "in a brilliant move." The U.S. military described "an apparently friendly-fire incident," with three American jets downed by Kuwaiti air defenses. Addressing the friendly-fire claims, a state television channel said that Iran still deserved credit, having earlier attacked Kuwait's radar systems. "The fact that Iran has struck it is obvious," one television host said on Islamic Republic of Iran News Network, a state news channel. "But even if it was friendly fire, it was as a result of Iran's strike." 
State outlets are also circulating images of the destruction caused by airstrikes in hopes of signaling that a peaceful transition of power, one of President Trump's stated war aims, is impossible, said Omid Memarian, a senior analyst at DAWN, a nonprofit in Washington. "They want to send the message to the Americans or Israelis that this is not a Disney-like scenario, where you attack us and we give up power on a silver platter," he said. "This is going to be bloody and costly." A social media account linked to the Iranian military claimed that 560 Americans had been killed or wounded so far in the fighting, far higher than the six deaths reported by the Pentagon. From there, TASS, a Russian state news agency, circulated the claim, followed by RT, another Kremlin-backed outlet. The claim was eventually picked up by a variety of social media accounts and channels. Mr. Ayad of the Institute for Strategic Dialogue said that many of those accounts appeared to be coordinating or copying the messaging. Some were until recently focused on entertainment content, but appeared to have been purchased or taken over for influence operations. Iranian state media has also criticized foreign news sources and social media for presenting what it called a warped vision of the war effort. "It is broadcasting fake, disheartening news, and in no way does it cover our successes at all," a media analyst complained during an interview on Islamic Republic of Iran News Network. Advances in artificial intelligence tools have allowed countries at war to supercharge their propaganda campaigns, creating and spreading seemingly realistic videos and images as fast as their adversaries can debunk them. A result is to compound confusion over what is really happening on the battlefield, which in this case has spread throughout the region. 
PressTV, one of Iran's state broadcasters that airs in English and French, posted a video on X that appeared to be generated by A.I., showing a high-rise building in Bahrain aflame after Iranian airstrikes. (The post was later removed.) Another image that purported to show the United States Embassy in Saudi Arabia on fire spread widely on social media, including X and Telegram. Neither was consistent with credible accounts and footage from the ground. "It's been stunning how much the Iranian cyber apparatus is producing A.I.-related content to boost the image of the Iranian military online," Mr. Memarian said. Social media accounts controlled by or sympathetic to Iran also played a role in the propaganda efforts. They, too, have exaggerated military successes and made unfounded claims that senior American and Israeli officials had been killed, including Prime Minister Benjamin Netanyahu. Some accounts disseminated debunked rumors via copypasta, a type of internet message that is repeatedly copied and pasted and shared. Alethea researchers discovered what they called "a recurrent propaganda format designed to visually communicate psychological defeat": a series of A.I.-generated videos claiming to show U.S. soldiers emotional or crying after missile strikes, all accompanied by the same caption. Similar ones have shown Israeli soldiers. NewsGuard, a company that tracks false narratives online, found accounts that shared a video of a large explosion and cloud of smoke and attributed it to an Iranian attack against a nuclear facility in southern Israel; the footage is actually from a fire at an ammunition depot in Ukraine in 2017. Other accounts circulated a video purportedly of a C.I.A. building in Dubai ablaze after being struck by an Iranian missile; the clip is actually from a fire at a residential tower in a different city in 2015, it said. The United States has taken steps to respond to some of the narratives. 
On the second day of the war, an anchor on Iranian state television read a statement from the military claiming that the Abraham Lincoln, one of the American aircraft carriers involved in the initial strikes, had been "attacked by four ballistic missiles." It was not. The claim, which also mentioned the "powerful blows of the armed forces," soon spread across pro-Iranian accounts on social media, often accompanied by images from a video game or generated by A.I. Another series used an old video of the intentional scuttling of a decommissioned ship to create a reef, according to NewsGuard. The United States Central Command, which oversees American forces in the region, used its account on X to fact-check false claims. "LIE," it wrote in response to the posts about the Abraham Lincoln, which had been seen by millions of users. "The Lincoln was not hit. The missiles launched didn't even come close." Farnaz Fassihi and Artemis Moshtaghian contributed reporting.

[5]

As the U.S. wages war with Iran, social media users face worsening disinformation

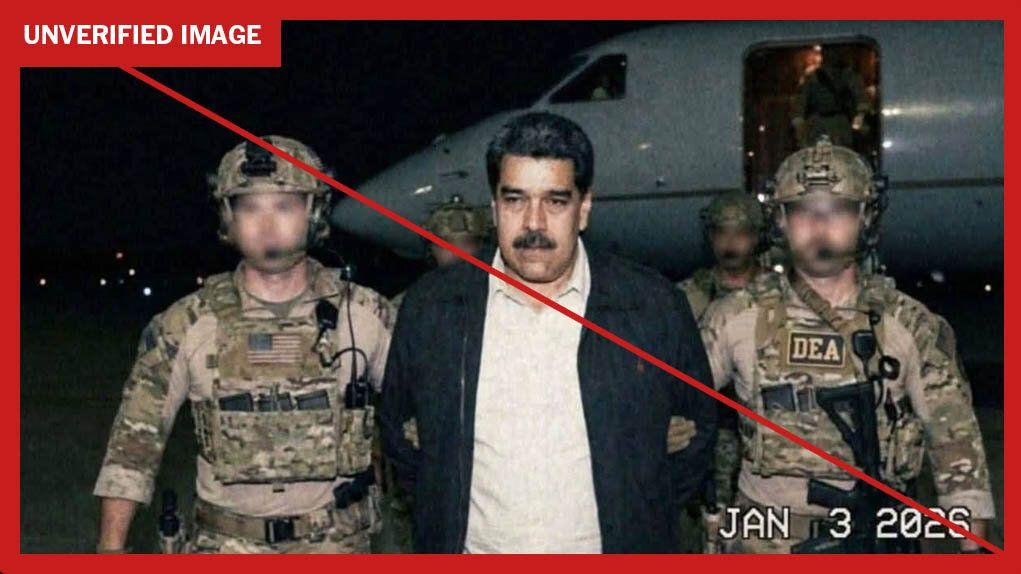

Disinformation engagement farming is getting worse with the help of AI. Credit: Jason Armond / Contributor / Los Angeles Times via Getty Images Before the dust had settled on the ruins of the Shajareh Tayyebeh school -- a casualty of the recent U.S.-Israel military strikes against Iran, and one which resulted in the deaths of up to 168 adults and children -- people were already engagement-farming online. Clips of digital flight simulators were passed off as real-time ops footage, while out-of-context images of battleships and old videos of aerial missile attacks were repurposed to sell users a tale of Iranian dominance. AI-edited content proliferated. According to experts, the posts had accumulated hundreds of millions of views in just a handful of days. The growing number of viral posts -- and the potential for even more to pop up as users earned cash for the viral falsehoods -- was alarming enough to prompt X to edit its policies on misinformation. As of yesterday, X says it will suspend users from its Creator Revenue Sharing program if they post AI-generated content depicting armed conflict without labeling it as such. And not even Google searches are safe from misinformation these days. The proliferation of digital misinformation is the product of a web of bots and engagement farming accounts, all with the shared goal of being the loudest, most clicked-on account in the room. Some hope to win political and social influence, others just want the money. Meanwhile, users, prone to confirmation bias and a reliance on digital news sources, repeatedly fall victim to their racket. Engagement farming, no longer just exchanging the currency of memes and clickbait, has become a dangerous, politically fraught game. Recent posts engaging in active disinformation about the conflict in Iran primarily involve exaggerating the scale and success of Iranian counterattacks, experts explain. 
A recent investigation by Wired documented hundreds of posts across Elon Musk's X that included misleading footage and photos -- including AI-manipulated content -- or promoted false claims about the scale of the attacks, many of which were posted in the immediate aftermath of missile strikes. A post with more than 4 million views claimed to show ballistic missiles sailing over Dubai, but actually depicted an Iranian attack on Tel Aviv in Oct. 2024. Another with more than 375,000 impressions shows a fictitious before-and-after image of the shelled compound of assassinated Iranian leader Ali Hosseini Khamenei. According to Wired, nearly all of the posts were shared by premium subscriber accounts with blue checkmarks, including state-funded media outlets in Iran. As in previous military conflicts, accounts have also attempted to pass off video game footage as verified news clips, including AI-manipulated images of downed F-35 fighter jets ripped from flight simulator games. The images have been shared across TikTok, some with links to Russian influence operations, the BBC reported. In addition to out-of-context footage and misleading content, the BBC also documented a handful of completely AI-generated videos that had amassed nearly 100 million total views, shared by what the outlet calls notorious "super-spreaders" of disinformation. A report from misinformation watchdog NewsGuard also chronicled a cadre of users sharing viral posts circulating false claims of targeted military strikes against U.S. and Israeli strongholds, predominantly using repurposed video footage and out-of-context or completely recontextualized images of destruction. "[These videos] are posted by anonymous accounts that tend to report on geopolitical conflicts. These are accounts that are known to NewsGuard for spreading exaggerated claims, usually from a pro-Iran perspective," said Sofia Rubinson, senior editor of NewsGuard's Reality Check newsletter and co-author of the report. 
From there, Rubinson explains, other accounts with larger followings pick up and spread the false claims. For example, hours after initial reports of the U.S.'s military strikes in Iran, users on X began reposting an image of a sinking naval aircraft carrier. Users claimed that it showed a recent attack on the aircraft carrier USS Abraham Lincoln in the Arabian Sea. The U.S. military's Central Command issued a statement refuting the claim that same day. NewsGuard confirmed the image actually showed the intentional sinking of the USS Oriskany that took place nearly 20 years ago. The claim was shared by unverified "news" accounts and even Kenyan parliamentary member Peter Salasya. Salasya's post has been viewed more than 6 million times. Multiple accounts, including Salasya's, shared another video allegedly showing Israel's Dimona nuclear facility under siege by air. The video racked up hundreds of thousands of impressions across anti-Israel and pro-Iran pages -- an X Community Note now appears below the video on Salasya's page, clarifying the images are of a March 2017 attack in Balaklia, Ukraine. NewsGuard found that such posts have already garnered at least 21.9 million views across X. Posts inducing fear of domestic retaliatory attacks have also circulated online, including an unverified list of U.S. cities alleged to be top targets for Iranian sleeper cells -- the list appears to have been written in Apple's Notes app. The acceleration of advanced generative AI and relaxed moderation policies across social media platforms have exacerbated an online misinformation crisis, experts have warned. Particularly over recent months, including during the U.S.-led capture of Venezuelan leader Nicolas Maduro, NewsGuard researchers have noticed a pattern in online disinformation emerging over periods of breaking news. "People now have a shorter window for the lapse between an event occurring and authentic visuals coming out of the media," explained Rubinson. 
To put it more bluntly: Users are losing their patience, used to an online environment where information is usually right at their fingertips. These brief periods, or voids, between breaking news reports and confirmed video or photos become fertile ground for disinformation bots and engagement farmers, Rubinson says. They also threaten to reinforce conspiratorial thinking -- that mainstream news outlets are keeping information from the public, for example -- and lend themselves to a user's own confirmation bias. Political conflict is particularly ripe for the spreading of such misinformation, which is in turn strengthened by active disinformation campaigns from both sides of armed conflict. Researchers have found that a lack of proximity to events makes it easier to believe out-of-context or exaggerated information. "It's an attempt to fill this fog of war," said Rubinson. "It can be very overwhelming for people. They want to make sense of it, and visuals are a good way for us to process what is going on in war when we can't comprehend the scale of these conflicts." This becomes a greater problem as individuals increasingly use social media platforms as sole sources for news and as previously reliable fact-checking tools, including straightforward Google searches, become more unreliable. AI chatbots and search have become embedded into the very fiber of real-world crisis events, as users turn to them as real-time fact-checkers. Rubinson said that nearly every X post NewsGuard analyzed included the same reply: "@Grok is this true?" But AI assistants and platform chatbots, including X's Grok, are notoriously unreliable at disseminating and verifying breaking news. They are also inconsistent at applying their own platforms' moderation policies. The BBC found that Grok erroneously verified recent AI-generated images depicting Iranian military movements, for example. 
According to a second report by NewsGuard published March 3, Google AI-powered Search Summaries have repeated misleading claims about the U.S.-Iran conflict when prompted with reverse image searches. For example, NewsGuard researchers uploaded a frame from a video shared online claiming to show the destruction of a CIA outpost in Dubai. Google's AI summary verified the story, writing: "The image shows a fire at a high-rise residential building in Dubai, UAE, reportedly occurring on March 1, 2026, following regional tensions. ... Conflicting reports emerged regarding the cause, with some sources mentioning a drone strike and others referring to the building as a specific intelligence facility." The video actually depicts a 2015 residential fire in the city of Sharjah. Security experts have sounded alarm bells over such "AI information threats," including AI tools used to generate and amplify misleading content. A report by the UK Centre for Emerging Technology and Security suggests the worsening information environment may pose existential threats to public safety, national security, and democracy without direct intervention. Meanwhile, civilians and journalists on the ground in Iran are fighting back against a near total internet blackout, following a massive push by the Trump administration and its ally Elon Musk to get Starlink internet connections to those on the ground. Bad actors, on the other hand, are still finding their way through the block and back onto sites like X.

[6]

AI-enhanced images of real events distort view of Mideast war

Athens (AFP) - The Middle East war has unleashed a torrent of AI-driven disinformation. Beyond entirely fabricated visuals, another kind of content is spreading: authentic images "enhanced" in ways that experts say are subtly distorting perceptions of what's happening on the ground. In one striking photo, a kneeling US pilot is confronted by a Kuwaiti local, moments after parachuting from his jet. The high-quality image was widely shared online and even published by media outlets. Yet the pilot appears to have only four fingers on each hand. AFP fact-checkers ran the photo through AI detection tools and found it contained a SynthID, an invisible watermark meant to identify images made with Google AI. But that's not the whole story. The situation itself appears to be genuine. A video showing the same scene began circulating on social media on March 2, and satellite imagery verified the location. It also corresponded with reports that day that Kuwait had mistakenly shot down three US warplanes. AFP was also able to locate an earlier version of the photo on Telegram that matched the high-resolution photo exactly, except that it was blurry. AI verification tools determined this image, which had none of the same detail in the pilot's face, was real. This suggests the blurry version may have served as the starting point for the enhanced image that carried the Google AI watermark. "AI-enhancement may subtly alter textures, faces, lighting, or background details, creating an image that looks more 'real' than the original," said Evangelos Kanoulas, a professor in AI at the University of Amsterdam. This can "strengthen a particular narrative about an event -- for example, making a protest appear more violent, making a crowd appear larger, making facial expressions more intense." In another case, social media users shared a dramatic image of a huge blaze near Erbil airport in Iraq, after the area was targeted by Iranian strikes on March 1.
Although SynthID detection recognised the use of Google AI in the picture, it was not a total fabrication. The original version of the image shows the same scene but with a far smaller fire and smoke column, and less vivid colours.

'Very different story'

Experts warned that the line between enhancement and content generation was a thin one, and could be crossed accidentally or intentionally. "Even little changes can end up telling a very different story," said James O'Brien, a professor of computer science at the University of California, Berkeley, and "could change the perception of events". Generative artificial intelligence is also still prone to error and may "hallucinate" elements that were not in the original image, Kanoulas added. This happened following the shooting of Alex Pretti by federal immigration agents in the US city of Minneapolis in January, when an AI-enhanced image of the incident went viral. The image was based on a frame taken from a genuine video of the shooting, showing Pretti falling to his knees with officers beside him, one of them holding a gun to his head. In the grainy, low-quality frame, Pretti holds an object that in reality was a phone. In the AI-treated image, some social media users wrongly saw a weapon in his hand. As the war triggered by the US-Israeli attacks on Iran rages on, experts said that without proper labelling, AI-enhanced images further eroded the public's trust. This kind of content was already having "a huge impact on people and their ability to trust the truth," said O'Brien. "People start doubting authentic images as well," Kanoulas agreed.

[7]

Did you spot these fake videos about the Iran war?

The escalation between Iran, Israel and the US has prompted a wave of viral war footage online. But many of the videos gaining millions of views are misleading, wrongly captioned, lifted from video games or entirely AI-generated. As the crisis in the Middle East escalates, social media has been flooded with an unprecedented amount of misleading videos and images claiming to show strikes and military action in both Israel and Iran. But many of the clips online don't show the war at all. Some are footage taken out of context from other countries, while others, viewed by millions, come from video games or are generated entirely with AI. Here are three viral videos that have gained hundreds of thousands of views but do not show what they claim. One widely shared video on X claims to show Iranian missiles striking the centre of Tel Aviv. It has been viewed by more than 4 million people, but it does not depict Tel Aviv. In fact, it's a video from Algeria that has been debunked multiple times in the past. The video actually shows football fans celebrating the victory of an Algerian club called CR Belouizdad, not missiles hitting Israel. We geolocated this footage to Al Mokrani Square in Algiers. The club has celebrated its victories in the past with similar fireworks. The Cube, Euronews' fact-checking team, debunked the same video in 2023, when it claimed to show an Israeli attack on Gaza. Another clip circulating purports to show a US military strike on Iran. One of the original versions of this clip has been viewed more than 5 million times, with a Chinese caption reading "the US has unleashed its powerful F-15 fighter jets in the largest airstrike in modern history". But these are actually simulations of a Russian Air Force Su-57 in Arma 3, a military simulation video game that uses a photorealistic style. Nevertheless, millions have seen the clip alongside other video game footage masquerading as legitimate videos from the war.
One clip seen by The Cube on X racked up more than 7 million views, claiming to show "an Iranian plane VS a US ship". It was shared and then deleted by Texas Governor Greg Abbott. It ultimately turned out to be a clip from the simulation video game War Thunder. One clip has been shared widely across X, TikTok, Instagram, YouTube and Douyin, China's version of TikTok. It claims to show the centre of Tel Aviv being pummeled by Iranian ballistic missiles, destroying residential buildings. The video is, however, AI-generated. The rooftops of some of the buildings are duplicated, the smoke featured in the clip is an unnatural shade of orange, and there are no sirens heard in the background. Part of the reason so many false and misleading videos have circulated widely, with many believing their content to be true, is AI chatbots. Many users turned to xAI's chatbot, Grok, to verify the alleged Tel Aviv video as it circulated on X. However, Grok failed to tell users that it had been AI-generated, and often denied that it was, despite repeated evidence from experts and fact-checkers online that this was the case. In one instance, Grok responded to a user that, "No, this isn't AI, it's a real photo from today's Iranian ballistic missile strikes on central Israel", before incorrectly citing Reuters, CNN and Euronews as sources. X's head of product, Nikita Bier, announced that the platform would crack down on AI-generated videos as the war continues, by suspending users from the platform's creator revenue sharing if they did not label AI-generated images as synthetic. Creator revenue sharing allows users to earn money from X and is available to accounts with a large reach. He added that X would identify AI-generated content about the war through its Community Notes tool, although verification experts have questioned the effectiveness of Community Notes given the scale of the content.
Bier said the platform had identified an account posing as a Gaza journalist posting fake videos of airstrikes pummelling Tel Aviv, as well as a user in Pakistan using a network of accounts to spread AI-generated videos of the war. The user hacked into 31 accounts before changing the usernames to spread the fake footage, according to Bier. Users can be motivated to spread fake and misleading imagery by financial incentives. In an active conflict such as this one, fake images can also be used to claim one side is gaining a competitive advantage and distort the information space. Media rating website NewsGuard found that videos and images claiming to show Iran gaining an advantage in the war over Israel had garnered more than 21.9 million views. Many of these posts were circulated by pro-Iranian social media users and exaggerated the country's military might.

[8]

Fake AI satellite imagery spurs US-Iran war disinformation

Washington (United States) (AFP) - The satellite image posted by an Iranian news outlet looked real: a devastated US base in Qatar. But it was an AI-generated fake, underscoring the accelerating threat of tech-enabled disinformation during wartime. The rise of generative AI has turbocharged the ability of state actors and propagandists to fabricate convincing satellite imagery during major conflicts, a trend that researchers warn carries real-world security implications. As the US-Israeli war against Iran rages, Tehran Times, a state-aligned English daily, posted on X a "before vs. after" image it claimed showed "completely destroyed" US radar equipment at a base in Qatar. In fact it was an AI-manipulated version of a Google Earth image from last year of a US base in Bahrain, researchers said. The subtle visual giveaways included a row of cars parked in identical positions in both the authentic satellite photo and the manipulated image. Yet the manipulated photo garnered millions of views as it spread across social media in multiple languages, illustrating how users are increasingly failing to distinguish reality from fiction on platforms saturated with AI-generated visuals. Brady Africk, an open-source intelligence researcher, noted an "increase in manipulated satellite imagery" appearing on social media in the wake of major events including the Middle East war. "Many of these manipulated images have the hallmarks of imperfect AI-generation: odd angles, blurred details, and hallucinated features that don't align with reality," Africk told AFP. "Others appear to be an image manipulated manually, often by superimposing indicators of damage or another change on a satellite image that had no such details to begin with," he said. 
'Fog of war'

Information warfare analyst Tal Hagin flagged another AI-generated satellite image purporting to show that Israeli-US jets had targeted the painted silhouette of an aircraft on the ground in Iran, while Tehran seemingly moved real planes elsewhere. The telltale clues included gibberish coordinates embedded in the fake image, which spread across sites including Instagram, Threads and X. AFP detected a SynthID, an invisible watermark meant to identify images created using Google AI. The fabricated satellite images follow the emergence of imposter OSINT -- or open-source intelligence -- accounts on social media that appear to undermine the work of credible digital investigators. "Due to the fog of war, it can be very difficult to determine the success of an adversary's strikes. OSINT came as a solution, using public satellite imagery to circumvent the censorship" inside countries like Iran, Hagin said. "But it's now being preyed upon by disinformation agents," he added. Reports of fake satellite imagery created or edited using AI also followed the Russia-Ukraine conflict and the four-day war between India and Pakistan last year.

'Critical awareness'

"Manipulated satellite imagery, like other forms of misinformation, can have real-world impacts when people act on the information they come across without verifying its authenticity," Africk said. "This can have effects that range from influencing public opinion on a major issue, like whether or not a country should engage in conflict, to impacting financial markets." In the age of AI, authentic high-resolution satellite imagery collected in real time can give decision-makers vital clues to assess security threats and debunk falsehoods from unverified sources. During a recent militant attack on Niamey airport in Niger, satellite intelligence company Vantor said it detected images circulating online purporting to show the main civilian terminal on fire.
The company's own satellite imagery helped confirm that the photos were fake, almost certainly generated using AI, Vantor's Tomi Maxted told AFP. "When a satellite image is presented as visual evidence in the context of war, it can easily influence how people interpret events," Bo Zhao, from the University of Washington, told AFP. As AI-generated imagery grows increasingly convincing, it is "important for the public to approach such visual content with caution and critical awareness," Zhao said.

[9]

Iran's state media ramps up disinformation campaign, report

Disinformation is spreading on Iran's state media outlets, a new report warns. Iran's state media outlets have significantly increased disinformation efforts, including claims of battlefield victories supported by old or manipulated images, according to the report. Since the United States and Israel launched attacks on Iran on 28 February, 18 war-related claims by Iran were found to be false, according to the news rating organisation NewsGuard. In contrast, five other false claims published by Iranian sources were identified in the two weeks before the US-Israel attack on Iran. NewsGuard also found that Iranian outlets are increasingly turning to AI-doctored images to spread false claims. In many cases, these images are created outside Iran. Iranian state-controlled news service Tehran Times published a satellite image purporting to show the destruction of a US radar at Qatar's Al-Udeid Air Base in a post on social media platform X on 28 February. The picture showed the site before and after the supposed attack. "An American radar in Qatar was completely destroyed today in an Iranian drone strike," the post read. However, information warfare analyst Tal Hagin later dispelled these claims by highlighting that this was originally a Google Earth image from 2 February 2025, which was manipulated by AI. "One way to tell is that all the cars stayed in the exact same location," Hagin noted in his X post. Iranian state-linked sources also shared a video which claimed to be of a fighter jet being shot down over Tehran on March 4. This initially led to Telegram channels linked to the Islamic Revolutionary Guard Corps (IRGC) celebrating the video as proof of Iran shooting down a US F-15 fighter jet. However, the Israeli Air Force later said that the video showed an F-35 shooting down an Iranian Yak-130 over Tehran.
Mehr, a semi-official Iranian outlet, also reported that four Iranian ballistic missiles hit the USS Abraham Lincoln, attributing the claim to a statement by the IRGC. However, the US Central Command clarified on 1 March that not only was the Lincoln not hit, but the missiles did not even come close. Similarly, an IRGC spokesperson claimed that 650 US troops were killed or wounded in the first two days of the conflict, in a statement published by Tasnim, a military-aligned Iranian outlet. However, CENTCOM rebutted these claims, noting that six US service members had been killed in the war with Iran. In some cases, war images were also extracted from video games like Arma 3, according to Factnameh, a Persian news fact-checking website. Meanwhile, a recent Wired investigation found hundreds of posts on Elon Musk's X platform spreading misleading or false content about the conflict, including AI-manipulated images and exaggerated claims about the scale of the attack, with many appearing within minutes of missile strikes. One post, viewed more than 4 million times, purported to show ballistic missiles over Dubai but actually showed footage of an Iranian attack on Tel Aviv in October 2024. Another, with over 375,000 impressions, featured a fabricated before-and-after image of the compound of the late Iranian Supreme Leader Ali Hosseini Khamenei. One of the main reasons Iranian state media outlets and linked sources can spread disinformation is the Iranian government's almost complete blocking of citizens from using the internet. This was described as a "near-complete shutdown" by web infrastructure company Cloudflare on 28 February, as traffic plunged 98 percent compared to the previous week. With very limited access to foreign media, Iranians are forced to get news from state-run radio and television outlets. Other options include the National Information Network, which is the state-controlled domestic internet network, or a state-backed messaging app, Bale.
However, according to NewsGuard, these sources continue to spread a vast number of false claims about the Iranian military's alleged wins. NewsGuard also reported that Russia has been using Iran's fake claims to undermine Ukraine and its allies by claiming that Iranian missiles have destroyed Ukrainian military bases in Dubai.

[10]

State Actors Are Behind Much of the Visual Misinformation About the Iran War

As attacks spread after the bombing of Iran by U.S. and Israeli forces, a video circulated widely of crowds peering up at fire, smoke and debris coming from the top of a high-rise building said to be in Bahrain. Social media users claimed an Iranian attack had hit the skyscraper. But while buildings in Bahrain have been struck by Iranian missiles during the Iran war, this video wasn't real. It was generated with artificial intelligence and shared by accounts associated with the Iranian government as part of an effort to amplify its successes. There are multiple clues that the video was not authentic, including two cars on the left side of the clip that appear stuck together and a man in the bottom-right corner whose elbow seems to move straight through a backpack. A deluge of misrepresented or fabricated videos has spread widely online since the Iran war began last weekend, fueled in part by state-linked propaganda and influence campaigns -- particularly around who is winning the war and how many casualties there have been. "The content that's coming from state actors tends to be a little better targeted," said Melanie Smith, senior director of policy and research on information operations at the Institute for Strategic Dialogue. "They have a very clear kind of narrative structure and the videos are just used to support some kind of statement they want to make about the conflict and about the kind of geopolitical situation writ large." Pro-Iran social media accounts have adopted a narrative that exaggerates the destruction and death tolls wrought by the country's military -- a position supported by what is being reported in Iranian state media. This has led to a large number of AI-generated videos of supposed air strikes, such as the one of the Bahraini high-rise on fire. 
An ongoing Russia-aligned influence operation called Operation Overload, also referred to as Matryoshka or Storm-1679, has been posting videos designed to impersonate intelligence agencies and news outlets, undermining people's sense of safety in an effort to sway their behavior -- a tactic the network has previously used during election cycles. For example, it shared a warning falsely attributed to Israeli intelligence telling Israelis in Germany and the U.S. to be cautious when in public or to not go outside at all.

Iranian censorship confuses matters further

Misrepresented and fabricated videos have been a key feature of other recent conflicts, such as the Russia-Ukraine and Israel-Hamas wars, but experts say a major difference now is the lack of information from the Iranian public due to internet shutdowns and general censorship -- a loss of perspectives that could have worked both for and against the Iranian government. "In Ukraine, that message was so full-throated it really changed the entire dynamic of the conflict because the world really aligned with the perspective of Ukrainians facing the attacks and showing resilience in light of the attacks, but we're sort of missing that story from Iran," said Todd Helmus, a senior behavioral scientist at RAND who studies irregular warfare, terrorism and information operations. In search of clicks, opportunistic social media users not affiliated with state actors have also contributed heavily to the misinformation that has spread during the first days of the Iran war, presenting old footage from other conflicts as recent, sharing video game clips as real and posting their own AI-generated content. AI, in particular, has helped fuel misinformation in ways that weren't possible during past conflicts, even just a few years ago. Coupled with state-linked disinformation and censorship, this creates an even wider vacuum in which the truth can get lost.
"The volume of AI content is starting to just pollute the information environment in these kinds of crisis settings to a really terrifying degree," Smith said. "The inability to get access to verified and credible information in times like this -- it's getting harder and harder to do that." Nikita Bier, X's head of product, wrote in a Tuesday post that the platform will suspend users from its revenue-sharing program if they post AI-generated content from an armed conflict without a proper disclosure. The suspensions are 90 days for a first offense and permanent after that. Emerson Brooking, director of strategy and resident senior fellow at the Atlantic Council's Digital Forensic Research Lab, warns that social media platforms are now frontlines in war, and that users should be aware of their potential to be used by state actors, even if they are located thousands of miles away from on-the-ground action. "If you're in these spaces, just understand that this is an extension of the physical battle space," he said. "That there are actors on all sides of the conflict that are actively trying to spread propaganda and disinformation to convince you that certain things are true that aren't. That your eyeballs and your attention are an asset."

[11]

'Narrative war': disinformation surges as conflict roils Middle East

Washington (United States) (AFP) - Recycled images, video game footage passed off as missile strikes, and AI-generated combat visuals: the US-Israeli assault on Iran has unleashed a torrent of online disinformation that analysts are calling a war of narratives. Since US and Israeli strikes over the weekend ignited a regional conflict, a parallel information war has erupted, with supporters on both sides flooding social media with falsehoods that often spread faster than the facts on the ground. AFP's fact-checkers have debunked a series of claims by pro-Iranian accounts posting old videos to exaggerate the damage from Tehran's missile strikes on Israel and Gulf states including the UAE and Saudi Arabia. "There is definitely a narrative war unfolding online," Moustafa Ayad, from the Institute for Strategic Dialogue (ISD), told AFP. "Whether it was to rationalize the strikes across the Gulf, or to trumpet Iranian military might in the face of the Israeli and US strikes, the goals seem to be [to] wear down 'enemies.'" On the other end of the divide, Iranian opposition outlets have pushed false narratives on X and Telegram blaming a missile strike on an Iranian girls' school on the Iranian government itself, researchers said. ISD also cautioned that fake social media accounts have sprung up impersonating senior Iranian leadership. Meanwhile, video game clips repurposed as Iranian missile strikes and AI-generated images of US warships being sunk, including the USS Abraham Lincoln, have garnered millions of views across major platforms. Similar disinformation tactics have also been reported in other global conflicts including Ukraine and Gaza. "It is really the speed and scale of these representations that is astounding, driving much of the online confusion of what has been targeted, or casualty counts for instance," said Ayad.
Such fabricated visuals -- portraying Iran as more menacing than evidence from the ground suggests -- have collectively garnered more than 21.9 million views on the Elon Musk-owned X alone, according to the disinformation watchdog NewsGuard.

'Fog of war'

X on Tuesday announced it would suspend creators from its revenue sharing program for 90 days if they post AI-generated videos of armed conflicts without disclosing they were artificially made. The policy change targets what the company described as a threat to information authenticity amid the ongoing war against Iran. "During times of war, it is critical that people have access to authentic information on the ground," X's head of product Nikita Bier said, adding that current AI technologies make it "trivial to create content that can mislead people." The new AI disclosure policy represents a notable pivot for a platform whose approach to content moderation has been heavily criticized since Musk completed his $44 billion acquisition of the site in October 2022. "The fog of war is quickly becoming the slop of war as AI synthetic content creates infinite noise in information ecosystems," said Ari Abelson, co-founder of OpenOrigins, a media authenticity company that fights deepfakes. "As we witness yet another immensely impactful global conflict unfolding in Iran, it's important for us to all understand how our media ecosystem is shifting." In what could further stoke online chaos, a NewsGuard study showed that Google's reverse-image tool has produced inaccurate AI-generated summaries of fabricated and misleading visuals tied to the Middle East conflict. This exposes a "significant weakness in a widely used system for verifying the authenticity of images," the watchdog said. There was no immediate comment from Google.
The United States and Israel launched the attack on Saturday and quickly killed Iran's supreme leader, Ayatollah Ali Khamenei, two days after US envoys had been speaking to Iran in Geneva on a nuclear accord. Since then, Iran has expanded its retaliatory missile and drone barrage across the Middle East, hitting on Tuesday a US consulate and base as the United States and Israel said they had pummeled key sites inside Tehran.

[12]

AI-enhanced images of real events distort view of Mideast war

Athens: The Middle East war has unleashed a torrent of AI-driven disinformation. Beyond entirely fabricated visuals, another kind of content is spreading: authentic images "enhanced" in ways that experts say are subtly distorting perceptions of what's happening on the ground. In one striking photo, a kneeling US pilot is confronted by a Kuwaiti local, moments after parachuting from his jet. The high-quality image was widely shared online and even published by media outlets. Yet the pilot appears to have only four fingers on each hand. AFP fact-checkers ran the photo through AI detection tools and found it contained a SynthID, an invisible watermark meant to identify images made with Google AI. But that's not the whole story. The situation itself appears to be genuine. A video showing the same scene began circulating on social media on March 2, and satellite imagery verified the location. It also corresponded with reports that day that Kuwait had mistakenly shot down three US warplanes. AFP was also able to locate an earlier version of the photo on Telegram that matched the high-resolution photo exactly, except that it was blurry. AI verification tools determined this image, which had none of the same detail in the pilot's face, was real. This suggests the blurry version may have served as the starting point for the enhanced image that carried the Google AI watermark. "AI-enhancement may subtly alter textures, faces, lighting, or background details, creating an image that looks more 'real' than the original," said Evangelos Kanoulas, a professor in AI at the University of Amsterdam. This can "strengthen a particular narrative about an event -- for example, making a protest appear more violent, making a crowd appear larger, making facial expressions more intense."
In another case, social media users shared a dramatic image of a huge blaze near Erbil airport in Iraq, after the area was targeted by Iranian strikes on March 1. Although SynthID detection recognised the use of Google AI in the picture, it was not a total fabrication. The original version of the image shows the same scene but with a far smaller fire and smoke column, and less vivid colours.

Very different story

Experts warned that the line between enhancement and content generation was a thin one, and could be crossed accidentally or intentionally. "Even little changes can end up telling a very different story," said James O'Brien, a professor of computer science at the University of California, Berkeley, and "could change the perception of events". Generative artificial intelligence is also still prone to error and may "hallucinate" elements that were not in the original image, Kanoulas added. This happened following the shooting of Alex Pretti by federal immigration agents in the US city of Minneapolis in January, when an AI-enhanced image of the incident went viral. The image was based on a frame taken from a genuine video of the shooting, showing Pretti falling to his knees with officers beside him, one of them holding a gun to his head. In the grainy, low-quality frame, Pretti holds an object that in reality was a phone. In the AI-treated image, some social media users wrongly saw a weapon in his hand. As the war triggered by the US-Israeli attacks on Iran rages on, experts said that without proper labelling, AI-enhanced images further eroded the public's trust. This kind of content was already having "a huge impact on people and their ability to trust the truth," said O'Brien. "People start doubting authentic images as well," Kanoulas agreed.

[13]

The Latest Weapon in the Iran War Is AI-Generated Misinformation

A photograph of a massive explosion at an Iraqi airport; satellite images depicting damage to a U.S. base in Qatar; video of Iranian ballistic missiles striking the center of Tel Aviv. These are all images that have circulated in the past week since the Trump administration attacked Iran. And none of them are real. These images -- along with many more -- were created or manipulated by AI, spreading misinformation about what is actually happening in and around Iran, and they are increasingly becoming a problem for those trying to distinguish truth and reality from lies and propaganda. The spread of misinformation has always been a part of warfare, as conflicting sides battle for the public's support while launching their bombs. But now, generative AI has made the ability to fake images and videos easier than ever before. Gone are the days when one would need Photoshop skills to create a false narrative. And with social media, these manipulated images can travel across countries in seconds. While bad actors might be intentionally attempting to sow discord, there are far more people who are unknowingly sharing their content. This, combined with a White House intent on spreading propaganda, makes for an information ecosystem that can feel overwhelming and confusing. "We have reached a level of realism in video, audio, and image deepfakes that for most people, it is not discernible from fact," says Rumman Chowdhury, a prominent AI researcher and former head of ethics at X (when it was still known as Twitter). "While AI companies have agreed to watermarking and other methods of verification, they are not built with the consideration of how users interact with social media." "This is particularly dangerous when considering situations like the war in Iran," adds Chowdhury. "Most Americans are likely entering with low information and probably biased and prejudiced information.
Fake media will only confuse and compound these biases." On Feb. 28, Iran's state-aligned newspaper, the Tehran Times, shared satellite images that supposedly showed the destruction left behind after an Iranian drone struck American radar equipment at a U.S. naval base in Qatar. BBC Verify, a team of journalists dedicated to fact-checking images, has been tracking and mapping attacks related to the U.S. and Israel's war on Iran, and labeled this satellite imagery an AI-generated fake. The team explained that the altered image was based on real satellite imagery of a U.S. base but had been edited with Google AI to falsely depict damage. These days, it's not as simple as people being fed deepfakes and fooled. Political scientist Steven Feldstein says that as people have become savvier about AI, disinformation creators have also become more sophisticated in how they present things, producing "shallowfakes," which are more subtle manipulations. "Rather than present something that would look completely false, [they] present shades of the truth, manipulate what's there," says Feldstein. In other words, content creators provide enough detail and nuance to get past people's bullshit detectors while still misconstruing reality to represent a specific viewpoint. This can happen through slight manipulation of an image: a real photo of an Iraqi airport showing smoke over a U.S. military base in Iraq was altered with AI on March 1 to show a giant fireball explosion. Or it could mean sharing an image out of context, like presenting an old photo as if it had just been taken. "You're seeing that happen in increasing levels," says Feldstein, author of The Rise of Digital Repression: How Technology is Reshaping Power, Politics, and Resistance. "It's become very sophisticated and also a critical part of geopolitics." Feldstein says that the 12-Day War, when Israel and the U.S. 
attacked Iran in June 2025, showed how quickly AI-generated content can spread. BBC Verify's Shayan Sardarizadeh told the Global Investigative Journalism Network that the conflict was the "first example of a major global conflict where we were seeing more misinformation being produced using AI than in traditional ways." It marked a "new era in the way AI-generated content is being used in the wake of a major breaking news story," said Sardarizadeh, whose team saw several AI-generated videos and images misleading people with "millions and millions of views." Chowdhury, a former U.S. science envoy for AI, says we are already seeing "agents of disinformation all over social media pushing particular agendas." She points to the location feature X released this past November, which uses IP addresses to show where accounts are based. "It turned out a lot of American right-wing influencers are in Africa, Bangladesh, Russia, and Ukraine." Both Chowdhury and Feldstein say that the blurring of fiction and reality can lead people to dismiss a real video as fake. If you can't trust your own eyes, it becomes harder to challenge your own strongly held beliefs. "It's now to a point where nothing that comes in beyond your own pre-existing narrative is accepted as something that is truthful," Feldstein says, "and that's just as harmful, as well." Misinformation does not always rely on AI. Sometimes, for example, a screenshot from a video game is circulated as if it were a real photograph of destruction. And then there's outright propaganda, which has grown exponentially under Trump. During the ICE raids in Minnesota, the Trump administration relied on cruel memes and AI-generated images to try to sway public opinion. It even produced its own version of a shallowfake, digitally altering a photograph of a woman being arrested to make it appear as if she was crying when she was not. Then came Iran. 
On March 4, the White House released a video on its official X account merging real clips of Iran missile strikes with footage from the Call of Duty video game. Halfway through, a choppy voiceover says, "We're winning this fight." On March 5, the White House released another video, this time celebrating "justice the American way" with clips from movies and TV series like Braveheart, Breaking Bad, Iron Man, and Gladiator. The war has already left more than 1,000 people dead, including more than 100 Iranian schoolgirls, according to Iranian state media, and at least six American service members. "War isn't a video game," tweeted military veteran and Barstool Sports podcaster Connor Crehan. "The consequences of war are final. I wish we didn't treat it with such a cavalier approach." Feldstein says that he's increasingly seeing information and images being used to mobilize action, and that social media allows rhetoric to spread extremely quickly, before anyone can stop and figure out the original source of the content. If you don't know who is making these claims, or whether they come from a credible news source, it's hard to tell whether the narrative being pushed is one-sided and from a particular viewpoint that could be contested. He adds that the president of the United States and Israel's prime minister have used images and motifs to try to call on Iranian citizens to rise up against their government. "The U.S. is not [currently putting] troops on the ground, but it is relying on information transmission as a means to mobilize change on the ground in terms of Iran's government," says Feldstein. "You can see how high the stakes are when it comes to how quickly that information is digested and it [spurring] action." And of course, he points out, there's an enormous humanitarian risk to bad information, beyond political manipulation. People living in areas of armed conflict need to know where it's safe to seek shelter, where drones are attacking, and whether they need to evacuate. 
It's important to have information you can trust.

[14]

State actors are behind much of the visual misinformation about the Iran war

Fake videos are spreading online about the Iran war. AI and government propaganda are creating confusion about who is winning and about casualty numbers. This misinformation campaign makes it hard to find real news. Social media platforms are now battlegrounds for these information wars. Users must be aware that their attention is a valuable asset. As attacks spread after the bombing of Iran by U.S. and Israeli forces, a video circulated widely of crowds peering up at fire, smoke and debris coming from the top of a high-rise building said to be in Bahrain. Social media users claimed an Iranian attack had hit the skyscraper. But while buildings in Bahrain have been struck by Iranian missiles during the Iran war, this video wasn't real. It was generated with artificial intelligence and shared by accounts associated with the Iranian government as part of an effort to amplify its successes. There are multiple clues that the video was not authentic, including two cars on the left side of the clip that appear stuck together and a man in the bottom-right corner whose elbow seems to move straight through a backpack. A deluge of misrepresented or fabricated videos has spread widely online since the Iran war began last weekend, fueled in part by state-linked propaganda and influence campaigns - particularly around who is winning the war and how many casualties there have been. "The content that's coming from state actors tends to be a little better targeted," said Melanie Smith, senior director of policy and research on information operations at the Institute for Strategic Dialogue. "They have a very clear kind of narrative structure and the videos are just used to support some kind of statement they want to make about the conflict and about the kind of geopolitical situation writ large." 
Pro-Iran social media accounts have adopted a narrative that exaggerates the destruction and death tolls wrought by the country's military - a position supported by what is being reported in Iranian state media. This has led to a large number of AI-generated videos of supposed air strikes, such as the one of the Bahraini high-rise on fire. An ongoing Russia-aligned influence operation called Operation Overload, also referred to as Matryoshka or Storm-1679, has been posting videos designed to impersonate intelligence agencies and news outlets, undermining people's sense of safety in an effort to sway their behavior - a tactic the network has previously used during election cycles. For example, it shared a warning falsely attributed to Israeli intelligence telling Israelis in Germany and the U.S. to be cautious when in public or to not go outside at all.

Iranian censorship confuses matters further

Misrepresented and fabricated videos have been a key feature of other recent conflicts, such as the Russia-Ukraine and Israel-Hamas wars, but experts say a major difference now is the lack of information from the Iranian public due to internet shutdowns and general censorship - a loss of perspectives that could have worked both for and against the Iranian government. "In Ukraine, that message was so full-throated it really changed the entire dynamic of the conflict because the world really aligned with the perspective of Ukrainians facing the attacks and showing resilience in light of the attacks, but we're sort of missing that story from Iran," said Todd Helmus, a senior behavioral scientist at RAND who studies irregular warfare, terrorism and information operations. 
In search of clicks, opportunistic social media users not affiliated with state actors have also contributed heavily to the misinformation that has spread during the first days of the Iran war, presenting old footage from other conflicts as recent, sharing video game clips as real and posting their own AI-generated content. AI, in particular, has helped fuel misinformation in ways that weren't possible during past conflicts, even just a few years ago. Coupled with state-linked disinformation and censorship, this creates an even wider vacuum in which the truth can get lost. "The volume of AI content is starting to just pollute the information environment in these kinds of crisis settings to a really terrifying degree," Smith said. "The inability to get access to verified and credible information in times like this - it's getting harder and harder to do that." Nikita Bier, X's head of product, wrote in a Tuesday post that the platform will suspend users from its revenue-sharing program if they post AI-generated content from an armed conflict without a proper disclosure. The suspensions are 90 days for a first offense and permanent after that. Emerson Brooking, director of strategy and resident senior fellow at the Atlantic Council's Digital Forensic Research Lab, warns that social media platforms are now frontlines in war, and that users should be aware of their potential to be used by state actors, even if they are located thousands of miles away from on-the-ground action. "If you're in these spaces, just understand that this is an extension of the physical battle space," he said. "That there are actors on all sides of the conflict that are actively trying to spread propaganda and disinformation to convince you that certain things are true that aren't. That your eyeballs and your attention are an asset."

Social media platforms, particularly X, are drowning in AI-generated fake videos and images about the Iran war. State actors and engagement farmers are spreading visual misinformation that's been viewed hundreds of millions of times, while Elon Musk's Grok chatbot repeatedly fails to verify false claims, instead sharing AI-generated images as proof.

State Actors Lead Information War With Synthetic Conflict Footage

The Iran war has unleashed an unprecedented wave of AI-generated content across social media platforms, with state actors and engagement farmers flooding X with fake videos and fabricated satellite imagery that have collectively amassed hundreds of millions of views [1][3]. Since the US and Israel began strikes on Iran on February 28, the information environment has deteriorated rapidly, with disinformation expert Tal Hagin noting that Elon Musk's Grok chatbot has repeatedly failed to verify false claims, instead sharing AI-generated images as supposed proof [1].

Iranian officials and state media have been at the forefront of this information war, sharing AI-generated videos of fabricated attacks and exaggerated damage. On March 2, Iranian state media circulated AI-generated videos showing a high-rise building in Bahrain on fire, while an AI-generated image of a US B-2 bomber supposedly shot down by Iran was viewed over a million times before it was deleted [1]. Pro-Iran social media accounts have adopted narratives that inflate destruction and death tolls, in line with what Iranian state media is reporting [2].

Visual Misinformation Reaches Alarming Scale Across Platforms

The scale of fake AI content has experts deeply concerned about the collapse of fact-based information during conflicts. "What is particularly unique about this war is the dramatic uptick in AI-generated content I find myself debunking," Hagin told WIRED, adding that "the longer we go without regulations against AI abuse, the more harm will be caused" [1]. Timothy Graham, a digital media expert at Queensland University of Technology, described the situation bluntly: "What used to require professional video production can now be done in minutes with AI tools. The barrier to creating convincing synthetic conflict footage has essentially collapsed" [3].

A new feature of this conflict is the emergence of fabricated satellite imagery. The Tehran Times, a state-linked newspaper, shared an AI-generated photo claiming to show extensive damage to the US Navy's Fifth Fleet headquarters in Bahrain [3]. According to Google's SynthID watermark detector, the fake image was created using a Google AI tool, with the fabrication based on real satellite imagery from February 2025 [3]. Meanwhile, a fake video showing Dubai's Burj Khalifa in flames was viewed tens of millions of times during a period of genuine concern about drone and missile strikes on the city [3].

Engagement Farming and Monetization Drive Social Media Disinformation

The proliferation of AI-generated Iran war videos is being fueled by creators seeking to monetize viral content through X's Creator Revenue Sharing program. X's head of product stated that "99%" of accounts spreading these videos are attempting to "game monetization" by posting content designed to generate massive engagement in return for payment [3]. Graham estimates that X pays approximately "eight to 12 dollars per million verified user impressions," with creators needing to reach five million organic impressions within three months and hold an X Premium subscription to qualify [3].
