Microsoft Copilot now uses GPT and Claude AI models together to fact-check research outputs

12 Sources

[1]

Microsoft unveils AI upgrades, rolls out Copilot Cowork to early-access customers

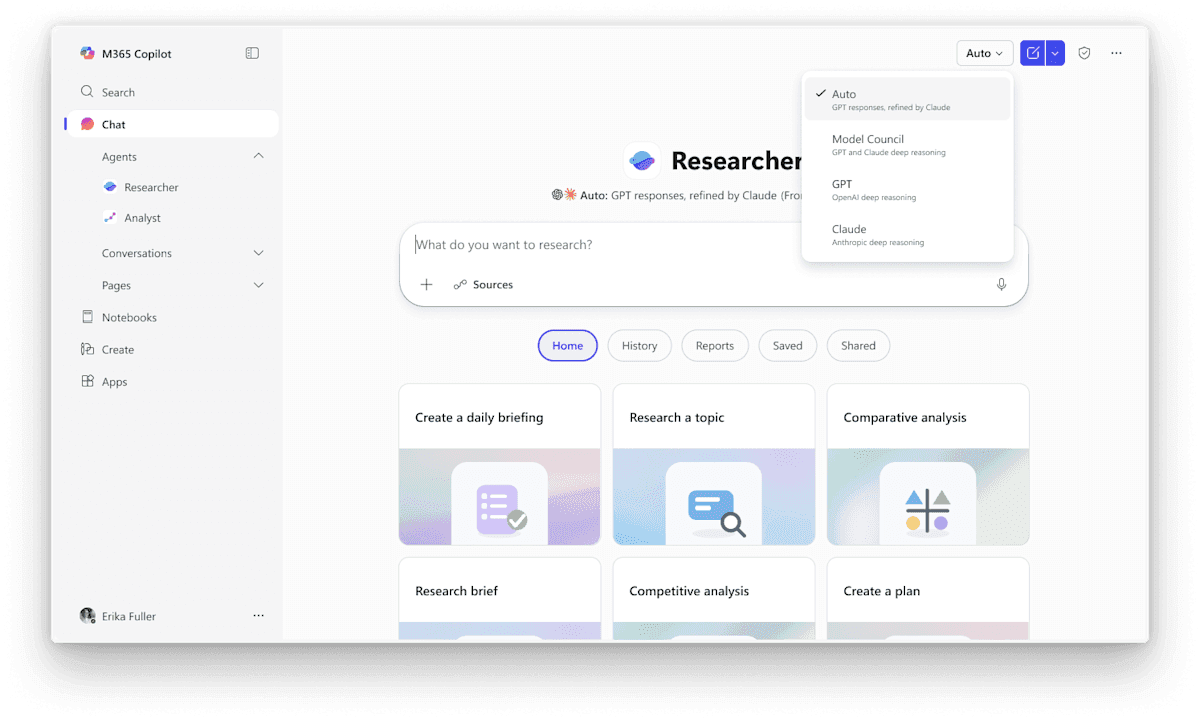

March 30 (Reuters) - Microsoft (MSFT.O) on Monday unveiled new features in its Copilot research assistant that would allow users to utilize multiple AI models simultaneously within the same workflow, the latest move by the tech giant to improve its AI offering and boost adoption. In a new feature called "Critique", Copilot's Researcher agent will now be able to pull outputs from both OpenAI's GPT and Anthropic's Claude models for every response, rather than relying on a single model. While GPT generates the response, Claude will review the output for accuracy and quality before presenting it to the user, Microsoft said. The company expects to make that workflow bi-directional in the future, allowing GPT to review Claude's drafts as well. "Having various different models from different vendors in Copilot is highly attractive - but we're taking this to the next level, where customers actually get the benefits of the models working together," Nicole Herskowitz, corporate vice president of Microsoft 365 and Copilot, said in an interview with Reuters. The multi-model approach will help speed up user workflow, keep in check AI hallucinations - where systems generate false information - and produce more reliable outputs, boosting productivity and quality for customers, Herskowitz added. Microsoft is also launching 'Model Council', a feature that will allow users to compare responses from different AI models side-by-side. The upgrades come as Microsoft makes its new Copilot Cowork agentic AI tool more widely available to members in its 'Frontier' program, which provides customers with early access to some of its latest AI features. Microsoft unveiled Copilot Cowork - a tool based on Anthropic's viral Claude Cowork product - in testing mode earlier this month, capitalizing on the growing demand for autonomous AI agents. 
The Windows maker has been racing to improve its Copilot assistant to drive better adoption amid intense competition from rivals including Google's (GOOGL.O) Gemini and autonomous agents such as Claude Cowork. (Reporting by Deborah Sophia in Bengaluru; Editing by Shailesh Kuber)

[2]

Microsoft 365 Copilot's new agent uses Claude to fact-check GPT's work

Simon is a Computer Science BSc graduate who has been writing about technology since 2014, and using Windows machines since 3.1. After working for an indie game studio and acting as the family's go-to technician for all computer issues, he found his passion for writing and decided to use his skill set to write about all things tech. Since beginning his writing career, he has written for many different publications such as WorldStart, Listverse, and MakeTechEasier. However, after finding his home at MakeUseOf in February 2019, he would eventually move on to its sister site, XDA, to bring the latest and greatest in Windows, Linux, and DIY electronics.

Summary

* Microsoft 365 Copilot mixes GPT drafting with Claude fact-checking for stronger research outputs.
* Researcher's Critique scores 13.8% higher on DRACO by combining drafting and citation checks.
* Council shows multiple model answers and disagreements, letting you assemble the best workflow.

Things are getting interesting in the world of AI. First, companies used each other's AI models. Then, we had a moment where everyone battened down the hatches and began focusing purely on making their model the best one. Now, we're entering an era where each AI model does something the others can't, so the only way to provide a truly stellar service is to mix different LLMs together. Such is the case with Microsoft 365 Copilot's newest agent, which mixes together the power of GPT and Claude when performing research. While GPT will be the one handling all the drafting, Claude will act as the strict editor who will fact-check the result and ensure everything is up to par. And the best part is, it works. 
Microsoft 365 Copilot Researcher's new feature delivers the best of two worlds

Two experts are better than one

In a press release, Microsoft revealed that Copilot Cowork is moving into the Frontier preview program. Copilot Cowork combines Claude's capabilities with Microsoft's own to create an agent you can delegate work to. It goes beyond the simple 'chatbot style' of LLMs and becomes a digital assistant of sorts. One of the more exciting additions is a new tool for Researcher that combines two LLMs so that each one does what it does best. As Microsoft describes it:

Researcher's new Critique feature takes this even further, putting GPT and Claude to work together on every response: GPT drafts, Claude reviews for accuracy, completeness, and citation integrity before it's delivered. [...] The results are measurable -- Researcher now scores 13.8% higher on the Deep Research Accuracy, Completeness, and Objectivity, or DRACO benchmark, the industry standard for deep research quality.

Researcher will also come with a tool called 'Council' that hands your prompt to several models and lets you see what each one says and where they agree or disagree. As such, Microsoft's plan seems to rely less on Copilot doing all the heavy lifting and more on tapping into the might of different AI companies to create a service that can handle every step of the workflow.

[3]

Microsoft's research assistant can now use multiple AI models simultaneously

Microsoft's Copilot is getting even better at research thanks to a new feature that combines the power of both OpenAI's GPT and Anthropic's Claude. In a blog post announcing Copilot Cowork's availability, Microsoft debuted the Critique feature that will be used in Microsoft 365 Copilot's Researcher tool. Unlike the standard Copilot, Researcher is designed to tackle more complex tasks with multiple steps. Now, Researcher is getting even better at that with the Critique feature, which takes responses generated by GPT and has Claude refine them. In the blog post, Microsoft said that "this architecture creates a powerful feedback loop that delivers higher-quality results across factual accuracy, analytical breadth, and presentation," adding that Researcher's process is similar to what you see in "academic and professional research settings." Microsoft claims the upgrade scores higher than even the most recent Perplexity Deep Research models on the Deep Research Accuracy, Completeness, and Objectivity (DRACO) benchmark. On its own, Anthropic has a Research feature that can use multiple Claude agents to provide a comprehensive response to more complex requests. If you prefer doing research with a little more autonomy, Microsoft also added the Model Council feature as an alternative option for Researcher. With Model Council, you'll get side-by-side responses from both Anthropic and OpenAI models, along with a report that shows where the models agree and disagree. Both features are currently available in Microsoft 365 Copilot's Frontier program, which acts as an early-access space for the company's AI innovations.

[4]

GPT drafts, Claude critiques: Microsoft blends rival AI models in new Copilot upgrade

A new feature from Microsoft uses Anthropic's Claude to assess and correct the work of OpenAI's GPT, blending the tech giant's new and old AI partnerships in the latest attempt to boost adoption of its Microsoft 365 Copilot tools for businesses. The company announced the new capability Monday morning as part of updates to Microsoft 365 Copilot's Researcher agent. A new feature called Critique puts the two models to work in sequence: GPT drafts a response to a research query, and Claude reviews it for accuracy, completeness and citation quality before the response is given to the user. It's part of a larger trend of using multiple AI models to improve results. Microsoft says it expects the process to eventually run in both directions, with Claude drafting and GPT critiquing. The multi-model approach has led to a 13.8% improvement on the DRACO benchmark, an industry measure of deep research quality, putting it ahead of standalone deep-research tools from OpenAI, Google, Perplexity and Anthropic, according to the Redmond company. The announcement comes as Microsoft tries to accelerate Copilot adoption among its existing base of business customers. In January, the company reported 15 million paid Copilot seats, still only a low-single-digit share (roughly 3.3%) of its 450 million commercial Microsoft 365 users. It comes amid intensifying competition in enterprise AI. Google has been expanding Gemini across its Workspace apps, Anthropic's Claude has seen growing adoption among businesses, and OpenAI has been pushing ChatGPT Enterprise and its own deep-research tools. Microsoft also said Copilot Cowork, its new tool for delegating long-running, multi-step tasks inside Microsoft 365, is now available through its Frontier early access program. The product, first announced earlier this month, is built on technology from Anthropic's Claude Cowork.
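The 3.3% adoption figure is simply the ratio of the two numbers GeekWire reports. A quick Python check (the constant and function names are illustrative, not from any source):

```python
# Figures as reported by GeekWire (January): paid seats vs. commercial users.
PAID_COPILOT_SEATS = 15_000_000
COMMERCIAL_M365_USERS = 450_000_000


def adoption_share(seats: int, users: int) -> float:
    """Return paid seats as a percentage of the commercial user base."""
    if users <= 0:
        raise ValueError("user base must be positive")
    return 100 * seats / users


share = adoption_share(PAID_COPILOT_SEATS, COMMERCIAL_M365_USERS)
print(f"Copilot seat share: {share:.1f}%")  # Copilot seat share: 3.3%
```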

[5]

Microsoft research tool uses Anthropic and OpenAI models

Why it matters: AI companies are increasingly pairing models together -- having them cross-check, evaluate or specialize -- in a bid to boost accuracy and reduce errors that any one model might miss.

Driving the news: The software giant is taking advantage of multiple models within its Microsoft 365 Copilot Researcher.

* A new "Critique" layer uses Anthropic's Claude to review answers generated by OpenAI's model to improve accuracy before a user sees the response.
* The company says that approach enabled the research agent to score 13.8% higher on the DRACO benchmark, an industry standard for deep research quality.
* Another new option, called Model Council, allows users to see a side-by-side comparison of responses from different models.

What they're saying: "It's becoming very clear to us that there will be many models," Microsoft executive VP Charles Lamanna told Axios. "Come summertime there will be many more models than just these two inside of Copilot."

The big picture: AI companies are experimenting with several different ways to use multiple models to complete tasks.

* When you prompt ChatGPT, Copilot or other models, they will often use a smaller classifier model to route you to the model most appropriate for the task.
* Perplexity has long allowed its users to choose from multiple models and see responses side-by-side.
* Anthropic uses a self-critique step mid-generation to catch errors before surfacing a final response from Claude.

Between the lines: The multi-model system has an added benefit for Microsoft, which is looking to show it isn't overly reliant on OpenAI.

* With the leading frontier labs frequently leapfrogging one another, Lamanna said businesses are interested in AI tools that can easily change which models are running under the hood.

Yes, but: Using multiple models on a single query can lead to increased costs and slower response times.

* Microsoft's Model Council, for example, costs roughly 2.5 times as much as using a single model, while the Critique approach costs about 20 percent more.
* That cost isn't directly passed on, given Copilot is a subscription service, but it does inform where Microsoft decides to use multiple models versus relying on a single algorithm.

What we're watching: Microsoft is also building more homegrown models and Lamanna said those models might show up first working in conjunction with outside models rather than as a full replacement.
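Axios mentions that assistants often use a smaller classifier model to route each prompt to the most suitable model. The sketch below shows only the shape of that routing step, under loud assumptions: keyword matching stands in for a trained classifier, and every model name is a hypothetical placeholder rather than anything Microsoft or OpenAI has described.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Route:
    model: str   # hypothetical model identifier, not a real product name
    reason: str


# Hypothetical routing table. A production router would call a small trained
# classifier model; keyword matching only stands in for that step here.
ROUTES: Dict[str, Route] = {
    "code": Route("code-model", "programming request"),
    "research": Route("deep-research-model", "multi-step research request"),
}
DEFAULT_ROUTE = Route("general-chat-model", "no specialized route matched")

KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "code": ("python", "function", "bug", "compile"),
    "research": ("compare", "sources", "report", "analyze"),
}


def classify(prompt: str) -> Optional[str]:
    """Toy classifier: return the first task label whose keywords appear."""
    text = prompt.lower()
    for label, words in KEYWORDS.items():
        if any(word in text for word in words):
            return label
    return None


def route(prompt: str) -> Route:
    """Pick the model for a prompt, falling back to a general model."""
    label = classify(prompt)
    return ROUTES[label] if label is not None else DEFAULT_ROUTE
```

For example, `route("Fix this Python bug").model` returns `"code-model"`, while a prompt matching no keywords falls through to the general model.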

[6]

'When intelligence and trust move together, AI stops being an experiment and starts becoming how work gets done': Microsoft and OpenAI are making AI research tools smarter to help answer even your trickiest questions

* Multi-model agents will check each other before sharing research with you to ensure maximum quality
* Researcher mode with Critique enabled scores highly on the DRACO benchmark
* Copilot Cowork is here for Frontier program customers

Microsoft has announced plans to upgrade its M365 Copilot Researcher agent with a clear focus on using multiple models across AI workflows to combine the power of various systems. Under this shift away from single-model systems, multiple AI agents will collaborate and hand off different parts of the task to each other. To begin with, the Researcher agent will use GPT models to generate the initial response, with Claude stepping in to review it for accuracy, completeness and quality.

M365 Copilot's Researcher agent will pass responses through other agents

Microsoft AI at Work Chief Marketing Officer Jared Spataro explained that the update follows the success of Anthropic's Claude Cowork, which has since been integrated into M365 Copilot. The aptly named Copilot Cowork, which has now been made available in the Frontier program ahead of a broader rollout, allows humans to delegate work to AI. Spataro explained how Copilot Cowork moves AI's usefulness from single, basic prompts to end-to-end task execution, ideal for long-running and multi-step workflows. As for Researcher mode with the new Claude-based Critique function, it has already outperformed single-model systems in early testing, with the second model acting as a reviewer to ensure best-quality output. It scores 13.8% higher on the DRACO benchmark (Deep Research Accuracy, Completeness and Objectivity), deemed the industry standard. Achieving 57.4% thanks to the multi-model setup, it scores more than twice as high as Deep Research with OpenAI's o4-mini model. It also beats o3-based Deep Research, Gemini Deep Research, Claude Opus 4.6, and Perplexity's Deep Research running on Opus 4.5 and 4.6. However, Microsoft didn't compare it with newer flagship models, such as GPT-5.4, running on their own. 
"When intelligence and trust move together, AI stops being an experiment and starts becoming how work gets done," Spataro wrote, speaking about Microsoft's progress towards Wave 3 of M365 Copilot - an intelligence it defines as "understand[ing] the context of work." Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button! And of course you can also follow TechRadar on TikTok for news, reviews, unboxings in video form, and get regular updates from us on WhatsApp too.

[7]

Microsoft's Copilot Cowork arrives with smarter AI research tools to spot gaps in your work

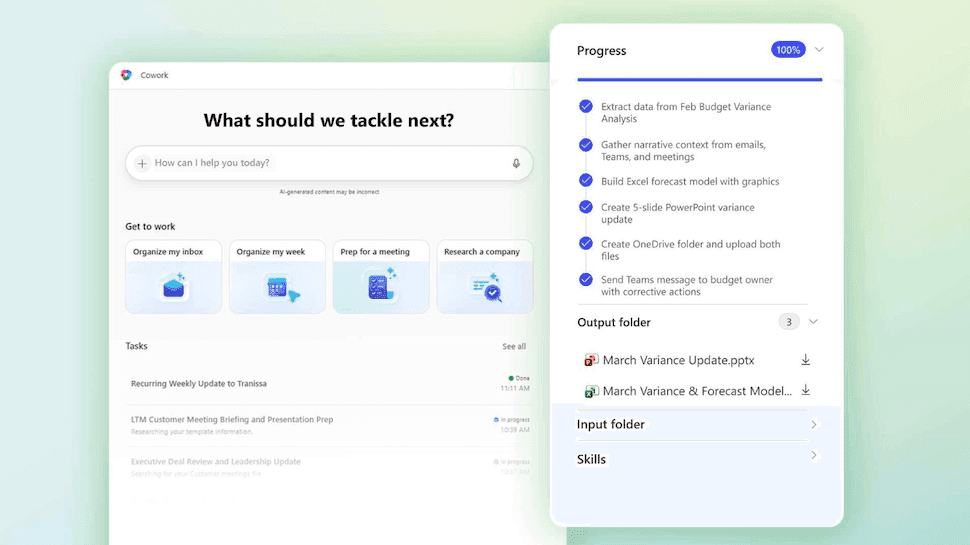

Your work can now get a second opinion with Microsoft's new Researcher features

Earlier this month, Microsoft unveiled Copilot Cowork, which is based on Anthropic's Claude Cowork. Now, the company has rolled out Copilot Cowork in early access through its Frontier program, alongside new upgrades to its Researcher tool that will help you plan, analyze, and make decisions at work.

So what can Microsoft's Copilot Cowork do for you?

Copilot Cowork is an agentic AI tool built for handling long, multi-step tasks inside Microsoft 365. It can help you think through tasks, break down goals, and work alongside you like a colleague across documents and workflows. You describe the outcome you want, and it creates a plan and completes the task while showing you its progress. You can also step in and redirect it at any point. It can handle everything from one-off requests to repeating workflows like monthly budget reviews.

New AI features in Copilot's Researcher tool

Microsoft is also upgrading Researcher, its deep research feature inside Copilot, with two key additions. The first is Critique, a new setup where two AI models work together on the same task. OpenAI's GPT generates the initial response, and Anthropic's Claude reviews it for accuracy and quality before it reaches you. According to Reuters, Microsoft plans to make this interaction bi-directional in the future, meaning Claude's drafts could eventually be reviewed by GPT, too. According to Microsoft, this feature improved the Researcher tool's score by 13.8% on the DRACO benchmark, the industry standard for measuring accuracy and quality of deep research.

The second addition is a new Model Council, which lets you pull responses from different AI models and compare them side by side. You can instantly see where they agree, where they differ, and what each brings uniquely to your question. 
Microsoft says all of this is part of Wave 3 of Microsoft 365 Copilot, a push to move AI from a tool you experiment with to one that actively does your work for you.
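Model Council, as Digital Trends and Decrypt describe it, runs several models on the same prompt side by side and then reports where they agree and differ. The sketch below mirrors that shape under stated assumptions: each "model" is a hypothetical callable returning a set of claims, and plain set arithmetic stands in for the third "judge" model Decrypt mentions. It is not Microsoft's implementation.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Set

# Hypothetical: each "model" is any callable that turns a prompt into a set
# of claims. Real Council calls would return full research reports.
Model = Callable[[str], Set[str]]


def council(prompt: str, models: Dict[str, Model]) -> dict:
    """Run every model on the same prompt in parallel, then compare outputs."""
    if not models:
        raise ValueError("need at least one model")
    # Side-by-side phase: all models answer the same prompt concurrently.
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        reports = {name: fut.result() for name, fut in futures.items()}

    # Stand-in "judge": plain set arithmetic instead of a third model.
    agreed = set.intersection(*reports.values())
    unique = {
        name: claims - set().union(*(c for n, c in reports.items() if n != name))
        for name, claims in reports.items()
    }
    return {"reports": reports, "agreed": agreed, "unique": unique}
```

With two toy models returning `{"claim-1", "claim-2", "claim-3"}` and `{"claim-1", "claim-2", "claim-4"}`, the result's `agreed` set is `{"claim-1", "claim-2"}`, and each model's `unique` entry holds the one claim the other missed. By contrast, Critique chains models in sequence rather than running them in parallel.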

[8]

Microsoft Made GPT and Claude Work Together -- And the Result Beats Every AI Research Tool Out There - Decrypt

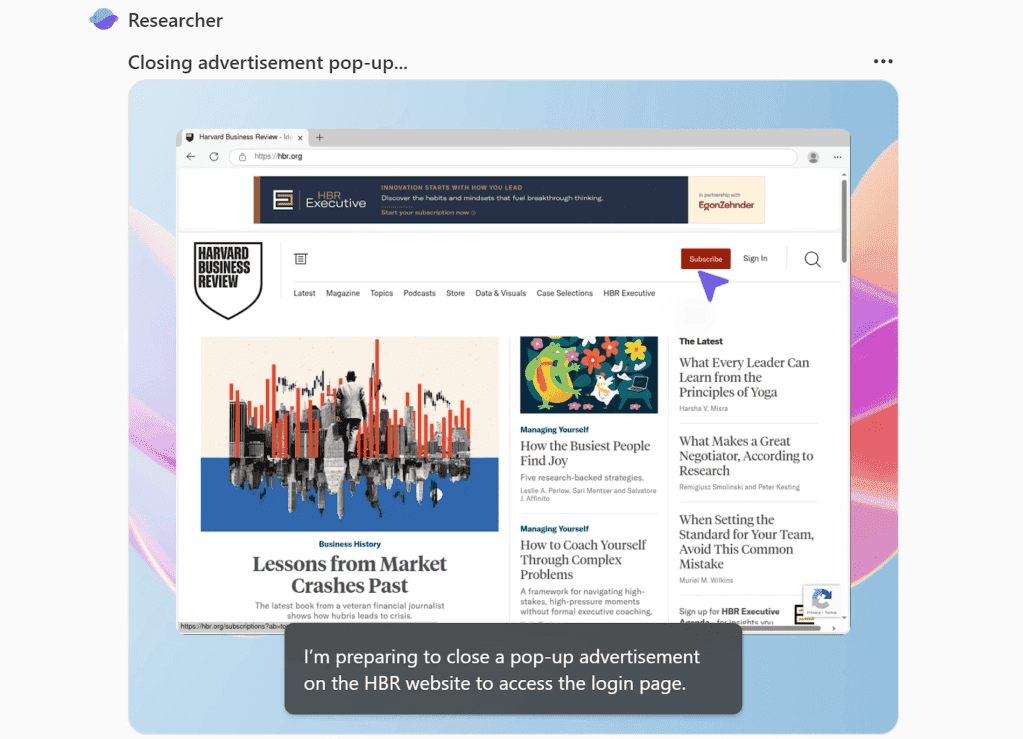

This two-model workflow fixes hallucinations, weak citations, and other problems associated with mono-model AI research. Deep research AI has been one of the hottest arms races in tech this year. Google announced its research agent for Gemini in December 2024, OpenAI released its own research agent in February 2025, xAI followed suit, Perplexity doubled down, and Anthropic's Claude built a loyal following among professionals who need detailed, cited answers, introducing its agent in April of last year. Every company has been trying to convince you that their single AI model is the smartest researcher in the room. Microsoft just said: Why pick one? The company announced two new features on Monday for Copilot's Researcher tool -- called Critique and Council -- that put OpenAI's GPT and Anthropic's Claude to work on the same research task in sequence. The result, according to Microsoft's testing against an industry benchmark, scores higher than every system included in that test, including models from the top AI companies. "Critique is a new multi model deep research system designed for complex research tasks. It separates generation from evaluation and utilizes a combination of models from Frontier labs, including Anthropic and OpenAI," Microsoft explains. "One model leads the generation phase, planning the task, iterating through retrieval, and producing an initial draft, while a second model focuses on review and refinement, acting as an expert reviewer before the final report is produced." Here's the basic problem Critique is designed to fix: Every AI research tool today works the same way. You ask a question, one model plans a search, scours sources, writes a report, and hands it back to you. That single model is doing everything with no one checking its work. As a result, hallucinations, citation errors, and fabricated or inaccurate claims can slip through. Critique breaks that workflow in two. 
GPT handles the first phase -- it plans the research, pulls sources, and writes an initial draft. Then Claude steps in as a strict editor, reviewing the report for factual accuracy, citation quality, and whether the answer actually addressed what was asked. Only after that review does the final report reach the user. Microsoft says the roles can eventually run in the opposite direction too, with Claude drafting and GPT critiquing, though for now GPT goes first. On the DRACO benchmark -- a standardized test covering 100 complex research tasks across 10 domains including medicine, law, and technology -- Copilot with Critique scored 57.4 points, while Anthropic's Claude Opus 4.6 on its own hit 42.7. Microsoft's combined system beats the next best result by nearly 14%. The biggest gains showed up in breadth of analysis and presentation quality, with factual accuracy also posting a significant improvement. The second feature, Council, takes a different approach to the same problem. Instead of having one model review the other's work, Council runs GPT and Claude simultaneously and puts their full reports side by side. A third "judge" model then reads both and writes a summary explaining where the two AIs agreed, where they diverged, and what unique angles each one caught that the other missed. Until now, users have had to make this kind of comparison between AI research tools manually. In Critique, the models essentially collaborate with each other, while in Council the models compete against each other. Critique is the default experience in Researcher, whereas Council requires you to select "Model Council" from the picker to activate the side-by-side mode. Both features are currently available to users enrolled in Microsoft's Frontier program, the early-access channel for Copilot's newest capabilities. A Microsoft 365 Copilot license ($30/user/month) is required in addition to Frontier enrollment. 
OpenAI and Microsoft have a multibillion-dollar partnership, but Microsoft's bet is that no single model stays on top for long, and that the real value is in the orchestration layer that routes tasks to whichever combination works best.
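The sources quote both raw DRACO scores and a "13.8% higher" figure, but none spells out the baseline for that percentage (Researcher's earlier score, or the next-best system). Using only the two scores Decrypt reports, a short Python check shows that the gap to Claude Opus 4.6 alone is neither 13.8 points nor 13.8%. The "implied baseline" line is a hypothetical what-if, not a reported number.

```python
# Scores as reported by Decrypt on the DRACO benchmark.
CRITIQUE_SCORE = 57.4    # Copilot Researcher with Critique
OPUS_ALONE_SCORE = 42.7  # Claude Opus 4.6 on its own

point_gap = CRITIQUE_SCORE - OPUS_ALONE_SCORE          # absolute gap
relative_gain = CRITIQUE_SCORE / OPUS_ALONE_SCORE - 1  # relative gap

# Hypothetical: if "13.8% higher" is a relative gain, the baseline it
# implies is score / 1.138. This does not identify which system that is.
implied_baseline = CRITIQUE_SCORE / 1.138

print(f"gap vs Opus 4.6 alone: {point_gap:.1f} points ({relative_gain:.1%})")
print(f"baseline implied by a 13.8% relative gain: {implied_baseline:.1f}")
```

This prints a 14.7-point (34.4%) gap to Opus 4.6 alone and an implied baseline of about 50.4. That is consistent with Decrypt's "next best result" being some other system, though the sources do not confirm this.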

[9]

Microsoft accelerates agentic automation with Copilot Cowork for complex workflows - SiliconANGLE

Microsoft Corp. is moving closer to delivering on its vision of autonomous artificial intelligence agents that can do more than just chat. Today it announced the launch of Copilot Cowork, a new capability within the Microsoft 365 platform that can handle "long-running, multistep tasks" that could previously be done only with constant human oversight. Copilot Cowork was announced in a blog post by Jared Spataro, Microsoft's chief marketing officer for AI at Work. He said the new capability is being made available through the company's Frontier program, which lets enterprises test cutting-edge AI features before they're released more broadly. Microsoft's Copilot tool has been around for a couple of years already, but until now it has mostly been focused on generative tasks, such as summarizing emails or drafting the text of an email or blog post. Copilot Cowork, on the other hand, is built for delegation, so instead of having humans perform every step in a complex workflow, someone can now describe their desired outcome and let AI complete all of those tasks autonomously. Spataro said users simply tell Copilot Cowork what they're trying to accomplish, and it will then go ahead and create a plan before immediately carrying out the necessary tasks to achieve that goal, reasoning across various Microsoft 365 applications and files. Human oversight is still present, though. As it's working, humans will be able to monitor the agent's progress, and step in to "steer" it in the right direction should it go off track, Spataro said. The system is grounded in the Work IQ framework that's designed to teach Copilot about the specific context of an organization's data while ensuring its security and governance protocols are followed. Spataro said Copilot Cowork is all about making work more efficient, eliminating the need for humans to keep jumping from one application to another. 
Even a relatively simple task such as completing a monthly budget review will require a human to constantly switch between platforms such as Excel, Outlook, Teams and SharePoint. It's necessary to gather the required data and coordinate with colleagues, before compiling everything into a report. Copilot Cowork eliminates all of this hassle. It acts as an "orchestrator," performing tasks such as daily briefings and calendar management without needing to be prompted to complete each individual step. Barton Warner, senior vice president of enterprise technology at Capital Group Companies Inc., an early adopter, said Copilot Cowork is about taking real action, rather than generating content and answers. "It's connecting steps, coordinating tasks and following through across everyday workflows," he explained. One of Copilot Cowork's biggest strengths is its multi-model approach, integrating with both OpenAI Group PBC's GPT models and Anthropic PBC's Claude. This can be seen in the company's newly enhanced "Researcher" agent, which now taps into both AI models via a new "critique" layer. The way it works is that OpenAI's GPT model will draft a response, which is then reviewed by Claude for accuracy and to ensure its citations are correct. Spataro said this combination has improved the Researcher agent's score on the DRACO benchmark by 13.8%. In addition, Microsoft says the roles can eventually be reversed, so that Claude drafts the response and GPT does the fact-checking. Then, with the new "model council" feature, users can compare the results of each model to see where they agree, where they diverge and where they come up with unique outputs. It's much like having multiple researchers working on the same project. By allowing different models to perform distinct roles, with one for drafting a response and one for critiquing, Microsoft is trying to build a more resilient system that reduces the "hallucinations" that have plagued early AI systems. 
By allowing humans to cross-reference the work of different AIs, enterprises can potentially scale up AI automation with greater trust.
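SiliconANGLE and Digital Trends describe Copilot Cowork's loop as: state an outcome, let the agent draft a plan, execute it step by step, and let a human "steer" at any point. The sketch below is a hypothetical illustration of that plan-execute-steer pattern only. `make_plan`, the `Steer` hook, and the logged "done" steps are invented stand-ins for model planning and real tool calls, not Microsoft's API.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical steering hook: given (step index, planned step), a human may
# return a replacement step, or None to let the agent proceed as planned.
Steer = Callable[[int, str], Optional[str]]


@dataclass
class Task:
    goal: str
    plan: List[str] = field(default_factory=list)
    log: List[str] = field(default_factory=list)


def make_plan(goal: str) -> List[str]:
    """Toy planner; a real agent would ask a model to break the goal down."""
    return [f"gather data for {goal}", f"draft {goal}", f"share {goal} with team"]


def run(task: Task, steer: Optional[Steer] = None) -> Task:
    """Plan first, then execute step by step, checking for human redirects."""
    task.plan = make_plan(task.goal)
    for i, step in enumerate(task.plan):
        if steer is not None:
            override = steer(i, step)
            if override:
                task.plan[i] = override  # record the human's change in the plan
                step = override
        task.log.append(f"done: {step}")  # stand-in for real tool calls
    return task
```

For a monthly budget review, a human could redirect only the first step, for example `run(Task("monthly budget review"), steer=lambda i, s: "pull figures from Excel" if i == 0 else None)`, and the agent would carry on with the rest of its plan unchanged.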

[10]

Microsoft Copilot Is Using a Surprising AI Trick to Create More Accurate Research Reports

In a March 30 blog post, Microsoft said that it added new features to Copilot Researcher, the company's version of the report-generating deep research agents offered by OpenAI and Anthropic. These agents typically work by developing a plan for how to conduct research into a given topic, then creating multiple sub-agents to check hundreds of sources simultaneously. Copilot's updated Researcher agent takes a slightly different approach. Microsoft said that a new "Critique" mode directs the Researcher agent to use two separate models: one to generate the research report, and another to act as an expert reviewer. "By giving evaluation as much emphasis as generation," Microsoft wrote, "this architecture creates a powerful feedback loop that delivers higher-quality results across factual accuracy, analytical breadth, and presentation." Critique mode will be automatically enabled when using Copilot's Researcher agent, but users can also choose to have a single OpenAI or Anthropic model handle the entire research process.

[11]

Microsoft unveils AI upgrades, rolls out Copilot Cowork to early-access customers

Microsoft has launched new features for its Copilot research assistant. Users can now employ multiple AI models together for improved accuracy and speed. This multi-model approach aims to reduce AI errors and boost productivity. Microsoft is also making its Copilot Cowork agentic AI tool more widely available. These upgrades come amid strong competition in the AI market. Microsoft on Monday unveiled new features in its Copilot research assistant that would allow users to utilise multiple AI models simultaneously within the same workflow, the latest move by the tech giant to improve its AI offering and boost adoption. In a new feature called "Critique", Copilot's Researcher agent will now be able to pull outputs from both OpenAI's GPT and Anthropic's Claude models for every response, rather than relying on a single model. While GPT generates the response, Claude will review the output for accuracy and quality before presenting it to the user, Microsoft said. The company expects to make that workflow bi-directional in the future, allowing GPT to review Claude's drafts as well. "Having various different models from different vendors in Copilot is highly attractive - but we're taking this to the next level, where customers actually get the benefits of the models working together," Nicole Herskowitz, corporate vice president of Microsoft 365 and Copilot, said in an interview with Reuters. The multi-model approach will help speed up user workflow, keep in check AI hallucinations - where systems generate false information - and produce more reliable outputs, boosting productivity and quality for customers, Herskowitz added. Microsoft is also launching 'Model Council', a feature that will allow users to compare responses from different AI models side-by-side. The upgrades come as Microsoft makes its new Copilot Cowork agentic AI tool more widely available to members in its 'Frontier' program, which provides customers with early access to some of its latest AI features. 
Microsoft unveiled Copilot Cowork - a tool based on Anthropic's viral Claude Cowork product - in testing mode earlier this month, capitalizing on the growing demand for autonomous AI agents. The Windows maker has been racing to improve its Copilot assistant to drive better adoption amid intense competition from rivals including Google's Gemini and autonomous agents such as Claude Cowork.

[12]

Microsoft unveils AI upgrades, rolls out Copilot Cowork to early-access customers

March 30 (Reuters) - Microsoft on Monday unveiled new features in its Copilot research assistant that would allow users to utilize multiple AI models simultaneously within the same workflow, the latest move by the tech giant to improve its AI offering and boost adoption. In a new feature called "Critique", Copilot's Researcher agent will now be able to pull outputs from both OpenAI's GPT and Anthropic's Claude models for every response, rather than relying on a single model. While GPT generates the response, Claude will review the output for accuracy and quality before presenting it to the user, Microsoft said. The company expects to make that workflow bi-directional in the future, allowing GPT to review Claude's drafts as well. "Having various different models from different vendors in Copilot is highly attractive - but we're taking this to the next level, where customers actually get the benefits of the models working together," Nicole Herskowitz, corporate vice president of Microsoft 365 and Copilot, said in an interview with Reuters. The multi-model approach will help speed up user workflow, keep in check AI hallucinations - where systems generate false information - and produce more reliable outputs, boosting productivity and quality for customers, Herskowitz added. Microsoft is also launching 'Model Council', a feature that will allow users to compare responses from different AI models side-by-side. The upgrades come as Microsoft makes its new Copilot Cowork agentic AI tool more widely available to members in its 'Frontier' program, which provides customers with early access to some of its latest AI features. Microsoft unveiled Copilot Cowork - a tool based on Anthropic's viral Claude Cowork product - in testing mode earlier this month, capitalizing on the growing demand for autonomous AI agents. 
The Windows maker has been racing to improve its Copilot assistant to drive better adoption amid intense competition from rivals including Google's Gemini and autonomous agents such as Claude Cowork. (Reporting by Deborah Sophia in Bengaluru; Editing by Shailesh Kuber)


Microsoft unveiled a multi-model approach for its Copilot Researcher tool that uses both OpenAI's GPT and Anthropic's Claude within the same workflow. The new Critique feature has GPT draft responses while Claude reviews them for accuracy, which Microsoft says yields a 13.8% improvement on the DRACO deep-research benchmark. The company also rolled out Copilot Cowork to early-access customers as competition intensifies in enterprise AI.

Microsoft Copilot Combines Multiple AI Models in New Research Tool

Microsoft unveiled significant upgrades to Microsoft 365 Copilot on Monday, introducing a multi-model approach that allows users to utilize multiple AI models simultaneously within the same workflow[1]. The centerpiece of this update is the Critique feature, which pairs OpenAI's GPT with Anthropic's Claude to deliver more accurate research outputs through the Copilot Researcher tool[3].


In this workflow, GPT generates the initial response, and Claude reviews it for accuracy, completeness, and citation integrity before the result is presented to the user [4]. Microsoft expects to make the process bidirectional in the future, allowing GPT to review Claude's drafts as well [1]. "Having various different models from different vendors in Copilot is highly attractive - but we're taking this to the next level, where customers actually get the benefits of the models working together," said Nicole Herskowitz, corporate vice president of Microsoft 365 and Copilot, in an interview with Reuters [1].


Measurable Improvements in Research Accuracy

The multi-model approach has produced measurable gains. Microsoft reports that Critique enabled Researcher to score 13.8% higher on the DRACO benchmark, an industry standard for deep-research quality that measures accuracy, completeness, and objectivity [2][5]. This result places Researcher ahead of standalone deep-research tools from OpenAI, Google, Perplexity, and Anthropic [4].

The strategy targets a critical challenge in AI: hallucinations, where systems generate false information. By having Claude fact-check GPT's work, Microsoft creates a feedback loop similar to the review process in academic and professional research [3]. According to Herskowitz, the approach helps keep hallucinations in check while speeding up user workflows and producing more reliable outputs [1].

Model Council Offers Side-by-Side Comparisons

Alongside Critique, Microsoft introduced Model Council, a feature that lets users compare responses from different AI models side by side [1]. The tool shows where the models agree and disagree, giving users more autonomy in assembling the best workflow for their needs [2]. Running several models has a price, however: Model Council costs roughly 2.5 times as much as using a single model, while Critique costs about 20% more [5]. Because Copilot is sold on a subscription basis, these costs aren't passed directly to users, but they inform where Microsoft deploys multiple models rather than a single one [5].

Copilot Cowork Expands to Early Access Program

Microsoft also made Copilot Cowork more widely available to members of its Frontier program, which gives customers early access to its latest AI features [1]. Built on technology from Anthropic's viral Claude Cowork product, this agentic AI tool goes beyond simple chatbot interactions, acting as a digital assistant that handles long-running, multi-step tasks inside Microsoft 365 [2][4].


Strategic Positioning Amid Intense Competition

These updates arrive as Microsoft races to improve research accuracy and drive adoption amid intense competition from rivals including Google's Gemini and autonomous agents such as Claude Cowork [1]. The company reported 15 million paid Copilot seats in January, roughly 3.3% of its 450 million commercial Microsoft 365 users [4]. The multi-model system also helps Microsoft show that it isn't overly reliant on OpenAI, a strategic consideration because leading frontier labs frequently leapfrog one another [5].

"It's becoming very clear to us that there will be many models," Microsoft executive vice president Charles Lamanna told Axios. "Come summertime there will be many more models than just these two inside of Copilot" [5]. Lamanna noted that businesses want AI tools that can easily switch which models run under the hood. He added that Microsoft is building more homegrown models, which might first appear alongside outside models rather than as full replacements [5]. Both Critique and Model Council are currently available through Microsoft 365 Copilot's Frontier program [3].

Summarized by Navi

[2]
