Nvidia ships first Vera Rubin AI chips to customers, promising 10x efficiency gains over Blackwell

4 Sources

4 Sources

[1]

Nvidia delivers first Vera Rubin AI GPU samples to customers -- 88-core Vera CPU paired with Rubin GPUs with 288 GB of HBM4 memory apiece

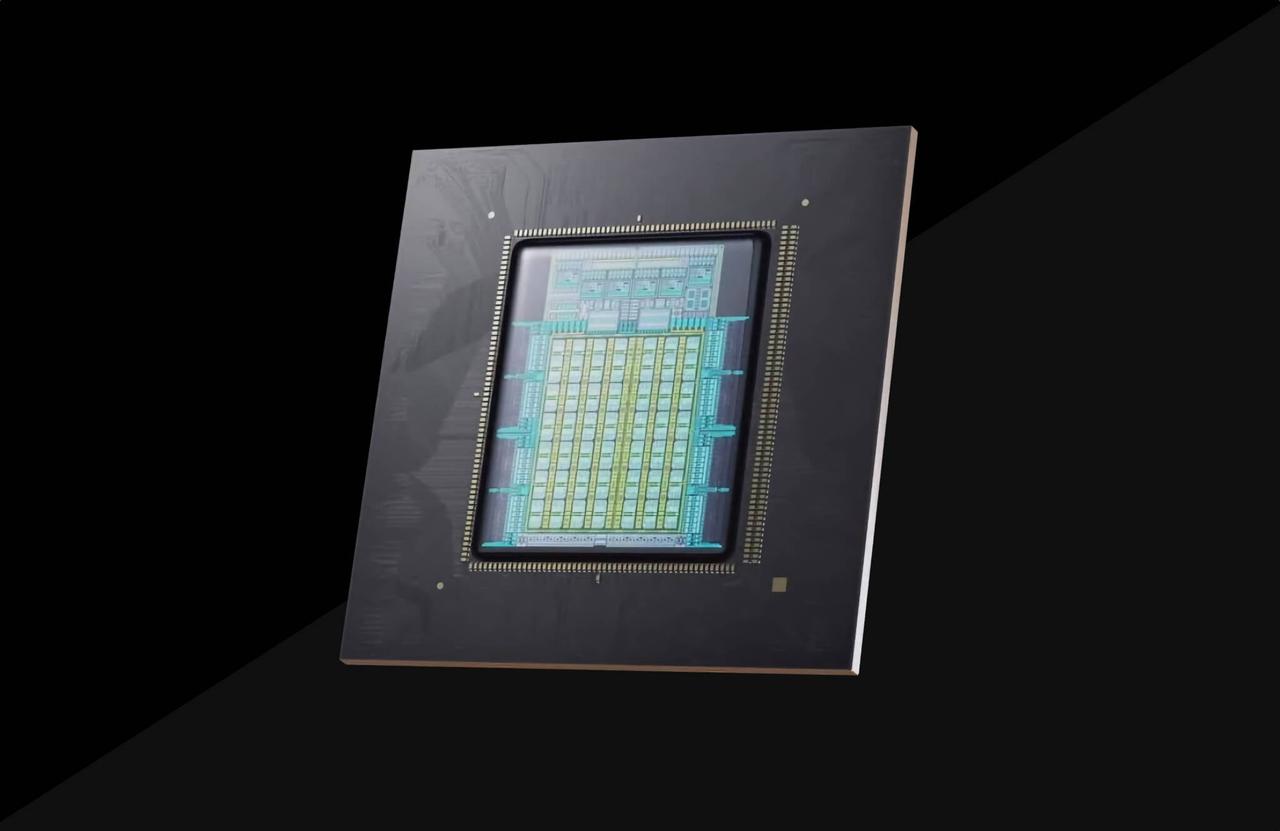

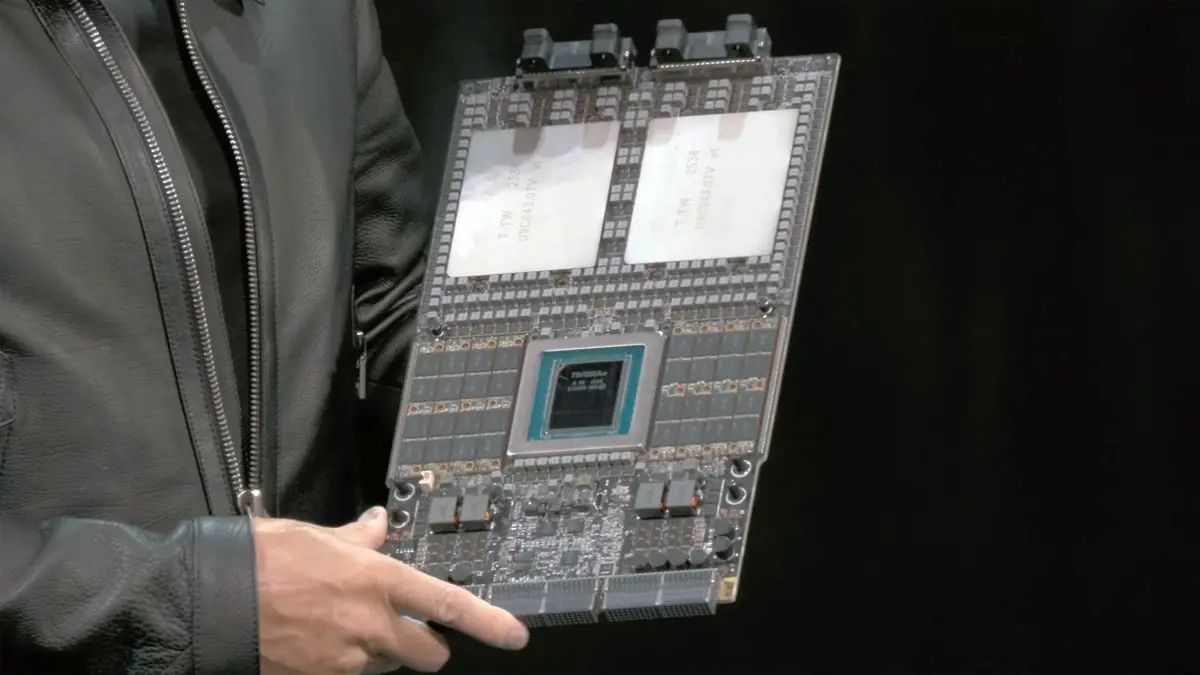

Nvidia has started delivering samples of its Vera Rubin platform for next-generation AI data centers to select customers, the company announced at its earnings call on Wednesday. As soon as the company's partners qualify and validate the new platform, they may start preparations for its deployment, which is expected in the second half of 2026 or in early 2027. " We shipped our first Vera Rubin samples to customers earlier this week, and we remain on track to commence production shipments in the second half of the year," said Colette Kress, chief financial officer of Nvidia, during the company's earnings call with analysts and investors. The fact that Nvidia began sampling of Vera Rubin with customers almost certainly means that it has frozen performance and power specifications of the parts by now, though it remains to be seen whether the company ended up upgrading performance of its GPUs to strengthen its leadership. Nvidia's Vera Rubin platform is the company's next-generation architecture for AI data centers that includes an 88-core Vera CPU, Rubin GPU with 288 GB HBM4 memory, Rubin CPX GPU with 128 GB of GDDR7, NVLink 6.0 switch ASIC for scale-up rack-scale connectivity, BlueField-4 DPU with integrated SSD to store key-value cache, Spectrum-6 Photonics Ethernet, and Quantum-CX9 1.6 Tb/s Photonics InfiniBand NICs, as well as Spectrum-X Photonics Ethernet and Quantum-CX9 Photonics InfiniBand switching silicon for scale-out connectivity. In a bid to get ready for the arrival of the Vera Rubin platform, the company's partners have to prepare their software and hardware stacks for it, so different partners will get different parts of the platform, whereas some will get actual NVL72 VR200 racks packing all of the aforementioned components. Also, samples of actual silicon will also go to hardware partners, such as Foxconn, Quanta, Supermicro, Wistron, and other well-known makers of AI servers. According to market rumors, Nvidia intends to ship its partners fully assembled Level-10 (L10) VR200 compute trays with Vera CPU and Rubin GPUs, cooling systems, and interfaces pre-installed, leaving very little design and integration work freedom to its ODMs. "Based on its modular cable-free tray design, Rubin will deliver improved resiliency and serviceability relative to Blackwell," Kress added. "We expect every cloud model builder to deploy Vera Rubin." Follow Tom's Hardware on Google News, or add us as a preferred source, to get our latest news, analysis, & reviews in your feeds.

[2]

Nvidia's new AI system Vera Rubin is 10 times more efficient than its predecessor -- here's a first look

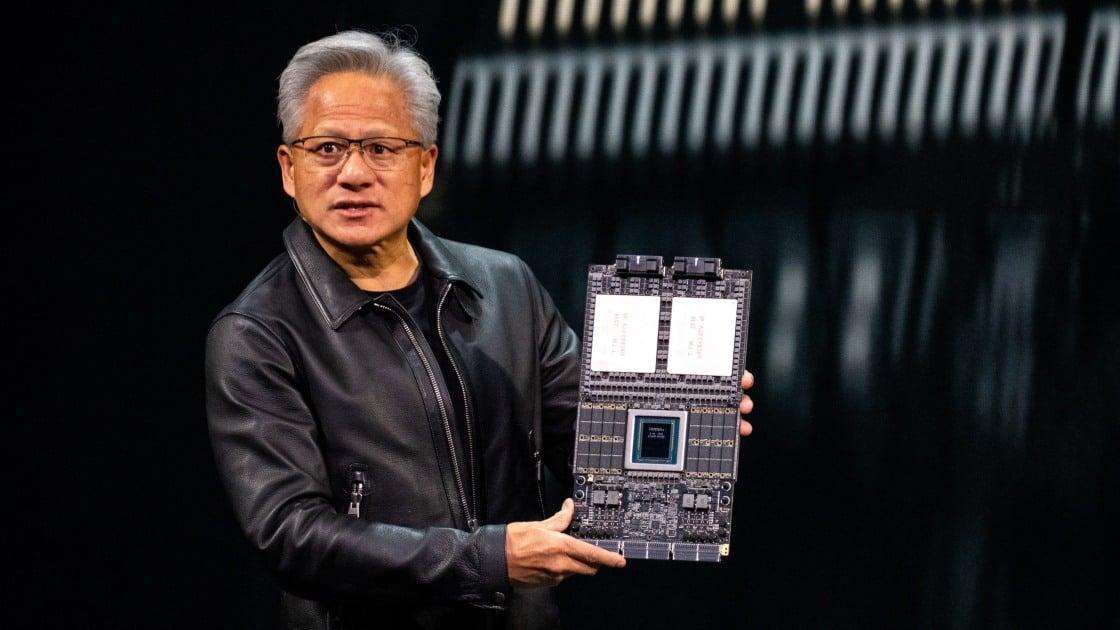

First look at Vera Rubin, Nvidia's next AI system that's 10 times more efficient Nvidia's earnings on Wednesday are expected to show booming sales of the company's current rack-scale system. But all eyes are on its next AI system, Vera Rubin, which is scheduled to roll out later this year. Vera Rubin, which is made up of 1.3 million components, will deliver 10 times more performance per watt than its predecessor, Grace Blackwell, the company claims. That's a significant development when energy consumption is one of the most critical issues facing the artificial intelligence build-out. CNBC got an exclusive first look at Vera Rubin at Nvidia's headquarters in Santa Clara, California. Nvidia says the new AI system is a complex web of parts sourced from around the world. Its core chips include 72 Rubin graphics processing units, or GPUs, and 36 Vera central processing units, or CPUs, primarily made by Taiwan Semiconductor Manufacturing Co. The other parts, from liquid cooling elements to power systems and compute trays, come from more than 80 suppliers in at least 20 countries, including China, Vietnam, Thailand, Mexico, Israel and the U.S. One big challenge the company faces is the soaring costs of memory due to a global shortage from all the AI-driven demand. Dion Harris, Nvidia's AI infrastructure head, said in an interview that the company has been giving suppliers "very detailed forecasts." "We're aligning to make sure that everything we're shipping will be met by our supply chain," he said. "We're in good shape." It's a critical moment for Nvidia, which dominates the market for AI processors but faces intensifying competition from Advanced Micro Devices as well as custom silicon from Broadcom and Google's homegrown tensor processing units. Nvidia has plans to manufacture up to $500 billion of AI infrastructure in the U.S. through 2029, including making Blackwell GPUs at TSMC's new Arizona fabs. Grace Blackwell went into production in 2024, and changed the game on how much compute was possible with a single system. Vera Rubin, which is expected to ship in the second half of 2026, takes the company to another level. Nvidia CEO Jensen Huang announced in January that the system was in full production.

[3]

Nvidia's latest AI chips bring unprecedented compute density

Nvidia has started shipping Vera Rubin AI chips with integrated CPU and GPU * Nvidia delivers Vera Rubin chips to customers enabling high-performance AI workloads immediately * The platform combines CPU, GPU, memory, and networking for unified performance * Early access allows partners to optimize AI software across large data centers Nvidia has confirmed it has begun distributing its Vera Rubin AI chips, offering early access to select customers and marking a notable step in AI infrastructure development. The chips combine advanced CPU and GPU architectures, designed specifically to manage the immense computational demands of modern AI workloads. Vera Rubin integrates high-memory GPUs, specialized CPUs, and fast interconnects, aiming to reduce bottlenecks during training and inference and supporting large generative AI and neural network models. Early access and deployment The Vera Rubin platform comes as fully assembled NVL72 VR200 compute trays, which include CPUs, GPUs, memory, and networking components in a rack-ready system. This simplifies integration and allows partners such as Foxconn, Quanta, and Supermicro to start testing data-intensive AI workloads immediately. The architecture of the Vera Rubin platform is built for efficiency in high-performance AI environments, as it incorporates NVLink 6.0 switch ASICs, BlueField-4 DPUs with integrated SSDs, and photonics-based interconnects to accelerate large-scale computations. Networking is supported through Spectrum-6 Photonics Ethernet and Quantum-CX9 InfiniBand NICs, as well as switching silicon designed for scalable connectivity across data center racks. This combination of CPU, GPU, storage, and networking components creates a unified system intended to handle both training and inference tasks, while offering real-time analytics capabilities in demanding data center setups. " We shipped our first Vera Rubin samples to customers earlier this week, and we remain on track to commence production shipments in the second half of the year," said Colette Kress, chief financial officer of Nvidia, speaking during the company's recent financial results. "Based on its modular cable-free tray design, Rubin will deliver improved resiliency and serviceability relative to Blackwell. We expect every cloud model builder to deploy Vera Rubin." The company is also extending its influence into practical applications, including AI integration in autonomous vehicles through its Alpamayo platform and potential robotaxi services in partnership with industry players. These initiatives leverage the processing density and memory bandwidth of the Vera Rubin chips -- focusing on linking high-performance computation to real-world AI deployment. Customers can begin optimizing their software stacks to take advantage of the new platform, preparing for faster, more efficient AI-driven research and commercial applications. Despite the technical advancements, adoption remains uncertain. Analysts note that the scale of AI uptake could be overestimated due to complex financial arrangements and circular investments. Geopolitical tensions also add complexity, with US regulations affecting the sale of advanced AI chips to China and leaving questions about the global impact. Data centers that rely on Nvidia's chips, which already support major AI applications for companies like OpenAI and Meta, will serve as the proving ground for the Vera Rubin platform. The effectiveness of these chips will ultimately depend on how well customers integrate CPU, GPU, and networking resources to accelerate AI workloads at scale. Follow TechRadar on Google News and add us as a preferred source to get our expert news, reviews, and opinion in your feeds. Make sure to click the Follow button! And of course you can also follow TechRadar on TikTok for news, reviews, unboxings in video form, and get regular updates from us on WhatsApp too.

[4]

Here's a Look at One of the World's Most Complex AI Systems, the NVIDIA Vera Rubin, Integrating a Million Components

NVIDIA's next-gen Vera Rubin is currently under full production, and the company has provided us with an extensive overview of the rack architecture, diving into individual components. When we talk about rack generations, NVIDIA is set to feature major upgrades with Vera Rubin, which we'll discuss in depth, but based on a recent video by CNBC diving into the Vera Rubin architecture, we saw an extensive look at multiple components, ranging from the main compute node to networking and cooling elements. More importantly, NVIDIA's Senior Director of Infrastructure, Dion Harris, calls Vera Rubin one of the "world's most complex AI systems, arguing that what NVIDIA does is unique and difficult to execute. Given that Rubin is expected to see customer commitments soon, it is important that we dive into what an NVL72 rack actually looks like. And, one of the most essential elements of the rack out there is, of course, the Vera Rubin SuperChip itself. We have already talked about how the Rubin GPU and Vera CPU configuration looks from a technical perspective, but one important point to note is that major performance improvements come from NVIDIA integrating HBM4 with the GPU, along with dedicated SOCAMM modules. Altogether, memory bandwidth reaches a whopping 1.2 TB/s. NVIDIA's major upgrade with Vera Rubin also comes within the cooling department, since Team Green plans to integrate modular liquid cooling designs, covering SuperChip elements such as Rubin GPU and Vera CPU, through dedicated cold plates. NVIDIA's executives argue that Rubin deployment will indeed convince hyperscalers to switch to upgraded liquid-cooling systems, and, interestingly, the current implementation reduces water use, another benefit touted by NVIDIA. NVLink is an important aspect of Vera Rubin NVL72, and with the 6th-generation interconnection fabric, often called the "NVLink Spine", NVIDIA plans to deliver a total aggregate bandwidth of 260 TB/s per rack. Harris says that with the latest NVLink generation, the company has taken modularity to a whole new level, which is why it claims the NVLink 6 spine supports zero-downtime maintenance and rack-level RAS services. While estimates suggest that Vera Rubin will debut with a decent price hike, NVIDIA says that the architecture brings in a 10x reduction in inference token cost and 4x reduction in the number of GPUs to train MoE models vs Blackwell GB200, which means that the "most you buy, the more you save" rule by NVIDIA's CEO is still intact.

Share

Share

Copy Link

Nvidia has begun delivering samples of its Vera Rubin platform to select customers, marking a pivotal moment in AI infrastructure evolution. The system combines 72 Rubin GPUs with 288 GB HBM4 memory apiece and 36 Vera CPUs, promising 10 times better performance per watt than its Blackwell predecessor. Production shipments are expected in the second half of 2026.

Nvidia Begins Shipping Vera Rubin Samples to Select Partners

Nvidia has started delivering samples of its Vera Rubin platform to select customers, the company announced during its earnings call on Wednesday. CFO Colette Kress confirmed that "we shipped our first Vera Rubin samples to customers earlier this week, and we remain on track to commence production shipments in the second half of the year."

1

This early access allows partners including Foxconn, Quanta, and Supermicro to begin qualifying and validating the new platform ahead of full deployment expected in the second half of 2026 or early 2027. The fact that Nvidia began sampling almost certainly means it has frozen performance and power specifications, though questions remain about potential last-minute performance upgrades to strengthen its market leadership.

Source: Tom's Hardware

Inside the Vera Rubin Platform Architecture

The Vera Rubin platform represents Nvidia's next-generation architecture for AI data centers, integrating an 88-core Vera CPU paired with Rubin GPUs equipped with 288 GB of HBM4 memory apiece.

1

The complete system includes Rubin CPX GPU with 128 GB of GDDR7, NVLink 6.0 switch ASIC for scale-up rack-scale connectivity, BlueField-4 DPU with integrated SSD to store key-value cache, and Spectrum-6 Photonics Ethernet alongside Quantum-CX9 1.6 Tb/s Photonics InfiniBand NICs. This AI system comprises 1.3 million components sourced from more than 80 suppliers across at least 20 countries, including China, Vietnam, Thailand, Mexico, Israel, and the U.S.2

The Vera Rubin SuperChip achieves memory bandwidth of 1.2 TB/s, while the NVLink 6 spine delivers a total aggregate bandwidth of 260 TB/s per rack.4

Source: Wccftech

Ten Times More Efficient Than Blackwell

Vera Rubin will deliver 10 times more performance per watt than its predecessor, Grace Blackwell, according to Nvidia.

2

This energy-efficient design addresses one of the most critical issues facing the AI infrastructure build-out: power consumption. Beyond raw efficiency gains, Nvidia claims the architecture brings a 10x reduction in inference token cost and a 4x reduction in the number of GPUs needed to train mixture-of-experts models versus Blackwell GB200.4

The platform's modular cable-free tray design delivers improved resiliency and serviceability relative to Blackwell, making it easier to maintain and repair in demanding data center environments. Kress emphasized that "we expect every cloud model builder to deploy Vera Rubin," signaling Nvidia's confidence in widespread adoption.1

Liquid Cooling and Modular Design Innovation

Nvidia plans to integrate modular liquid cooling designs with Vera Rubin, covering SuperChip elements such as Rubin GPUs and Vera CPU through dedicated cold plates.

4

Executives argue that Rubin deployment will convince hyperscalers to switch to upgraded liquid-cooling systems, with the current implementation reducing water use compared to previous generations. According to market rumors, Nvidia intends to ship its partners fully assembled Level-10 VR200 compute trays with Vera CPU and Rubin GPUs, cooling systems, and interfaces pre-installed, leaving minimal design and integration work to ODMs.1

With the latest NVLink generation, the company has elevated modularity significantly, supporting zero-downtime maintenance and rack-level reliability, availability, and serviceability features.4

Related Stories

Supply Chain Challenges and Market Competition

One major challenge Nvidia faces is soaring memory costs due to a global shortage driven by AI demand. Dion Harris, Nvidia's AI infrastructure head, said the company has been giving suppliers "very detailed forecasts" to ensure alignment. "We're aligning to make sure that everything we're shipping will be met by our supply chain. We're in good shape," he stated.

2

This comes at a critical moment for Nvidia, which dominates the market for AI processors but faces intensifying competition from Advanced Micro Devices as well as custom silicon from Broadcom and Google's homegrown tensor processing units. The company has plans to manufacture up to $500 billion of AI infrastructure in the U.S. through 2029, including making Blackwell GPUs at TSMC's new Arizona fabs.2

Real-World Deployment and Future Outlook

The Vera Rubin platform arrives as fully assembled NVL72 VR200 compute trays, simplifying integration for partners to start testing data-intensive AI workloads immediately.

3

This unified system handles both AI training and inference tasks while offering real-time analytics capabilities in demanding setups. Data centers that already support major AI applications for companies like OpenAI and Meta will serve as the proving ground for the platform. However, analysts note that adoption remains uncertain, with concerns that the scale of AI uptake could be overestimated due to complex financial arrangements and circular investments.3

Geopolitical tensions add further complexity, with U.S. regulations affecting the sale of advanced AI chips to China. The effectiveness of these AI chips will ultimately depend on how well customers integrate CPU, GPU, and networking resources to accelerate AI workloads at scale, with early customer feedback likely shaping the trajectory of this ambitious platform.References

Summarized by

Navi

[1]

Related Stories

Nvidia Unveils Vera Rubin Superchip: Six-Trillion Transistor AI Platform Set for 2026 Production

29 Oct 2025•Technology

Nvidia Vera Rubin architecture slashes AI costs by 10x with advanced networking at its core

06 Jan 2026•Technology

NVIDIA Unveils Next-Gen AI Powerhouses: Rubin and Rubin Ultra GPUs with Vera CPUs

19 Mar 2025•Technology