Nvidia unveils Vera Rubin Space Module for orbital AI data centers as space computing race heats up

18 Sources

[1]

Nvidia Is Building a Computer for AI Data Centers in Space

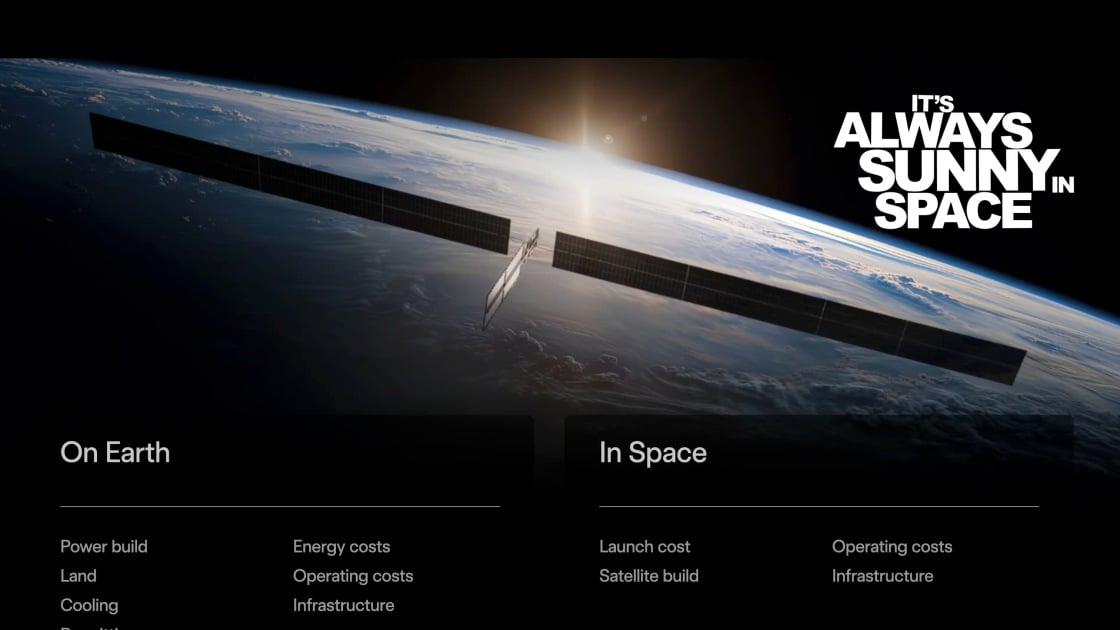

Space may be the next frontier for the AI infrastructure boom, but it will take some work to make that happen, Nvidia CEO Jensen Huang said during his keynote address Monday at the company's GTC conference in San Jose, California. While the company already has chips in satellites, creating a data center in space is an entirely different beast, Huang said. "Obviously, very complicated to do so." Nvidia isn't the only one eyeing orbit for AI factories. Elon Musk has talked often of putting data centers in space, which makes sense considering he recently merged the AI company he owns with the rocket company he owns.

Space has some distinct advantages for data centers. For one, there are no zoning boards or neighbors to worry about annoying. You could likely power an orbital data center with solar power. There's also a ton of room, although the number of satellites is making orbit crowded. But there's a big challenge that Nvidia is facing as it designs its Space-1 Vera Rubin module computer. How do you keep chips cool in a vacuum? "In space, there's no conduction, there's no convection, it's just radiation," Huang said. "So we have to figure out how to cool these systems out in space."
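Huang's point that only radiation is available can be made concrete with the Stefan-Boltzmann law, under which a surface radiates power P = εσA(T⁴ − T_sink⁴). The sketch below is a rough illustration and not Nvidia's thermal design: the 100 kW load, the emissivity of 0.9, the two-sided panel, and the function name `radiator_area_m2` are all assumptions, and the estimate ignores sunlight and Earth's infrared falling on the radiator.

```python
# Rough radiator sizing from the Stefan-Boltzmann law.
# All inputs are illustrative assumptions, not figures from Nvidia.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=3.0, sides=2):
    """Return the panel area needed to radiate `heat_w` watts to deep space.

    Ignores absorbed sunlight and Earth's infrared, so a real radiator
    would need to be larger than this estimate.
    """
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    # Net radiated power per square metre of one radiating face.
    flux = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k ** 4 - sink_temp_k ** 4
    )
    return heat_w / (flux * sides)


if __name__ == "__main__":
    for temp_k in (300.0, 350.0):
        area = radiator_area_m2(100_000.0, temp_k)
        print(f"{temp_k:.0f} K radiator: about {area:.0f} m^2 for 100 kW")
```

Because radiated power scales with the fourth power of temperature, letting the radiator run hotter shrinks the required area sharply, which is why the allowable operating temperature of the electronics matters so much in a vacuum.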

[2]

Nvidia is the latest to promise AI data centers in space.

The NVIDIA Space-1 Vera Rubin Module is the ticket. CEO Jensen Huang: We're working with our partners on a new computer called Vera Rubin Space 1, and it's going to go out to space and start data centers out in space. Now, of course, in space there's no conduction, there's no convection, there's just radiation, and so we have to figure out how to cool these systems out in space. But we've got lots of great engineers working on it. Also see: every other tech billionaire eyeing the sun's limitless power and glossing over potential problems. Space!

[3]

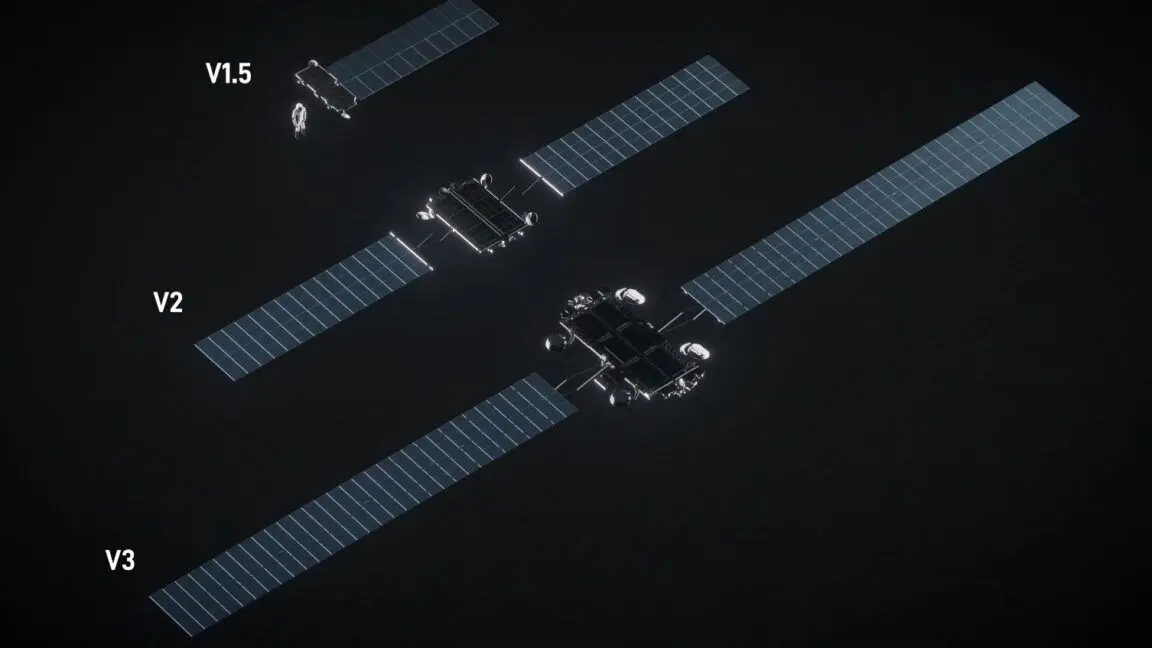

Musk Offers Sneak Peek at Orbiting Data Centers. They're Bigger Than the ISS

Elon Musk offered a first look at his plans for orbiting data centers this weekend, and they will be longer than the International Space Station (ISS). The satellites stand out for their exceptionally large solar arrays. The SpaceX CEO's presentation didn't give an exact length, but each one is significantly longer than the Starship V3 rocket, which stands at 124.4 meters (408 feet). The satellites also dwarf the length of the ISS, which spans 109 meters (357 feet) and is visible in the night sky.

Musk indicated the satellites can capture plenty of solar energy to power the high-density AI processing inside. The rendering shows "the solar panels and radiator to scale," he said. However, Musk also noted that the image depicts only a "rough approximation" of the "mini" version of the upcoming AI satellite. So future models could be significantly larger. The current design promises 100 kilowatts of AI computing, whereas future versions will offer a "megawatt-range" of computing power. (For perspective, 1 megawatt can power between 200 and 700 homes, depending on usage.)

On the Earth's surface, companies, including Musk's xAI, are already planning terrestrial AI data centers that'll reach 1,000 megawatts (1 gigawatt) of computing capacity. It's why SpaceX is proposing to launch up to 1 million satellites for its orbital data centers, paving the way for the company to offer thousands of gigawatts of AI compute. "We're confident this is feasible," Musk said of launching the satellites using the Starship rocket. "Like no new physics, or impossible things are required to get there."

'I Would Expect Quite a Few Collisions'

But space experts and astronomers have concerns about SpaceX's proposal, which would drastically increase the number of satellites in Earth's orbit. They currently number around 15,000 -- about 10,000 of which belong to SpaceX's Starlink network. SpaceX was previously vague about the satellite design for its orbiting data centers. The new rendering confirms worries that the satellites will be longer than current V2 Starlink models.

Hugh Lewis, a space debris expert and professor of astronautics at the UK's University of Birmingham, noted that the orbiting data centers will need to constantly maneuver to avoid hitting space junk and other satellites. He estimates there are roughly "40,000 maneuvers per day across the entire constellation to 'avoid' objects 10 cm and larger." "That's about 14.5 million maneuvers per year. The upper end of the maneuver rate could be 100,000 per day, which is 36.5 million per year," he tells PCMag. "If the residual collision probability after an 'avoidance' maneuver is not zero, I would expect quite a few collisions amongst the active satellites in the constellation, despite all those efforts to avoid them."

Astronomers and environmentalists have also been raising alarms about light pollution from the proposed orbiting data centers. "We thought the size we assumed was ridiculous, but this graphic shows that we actually underestimated what SpaceX is planning to do," said University of Regina astronomy professor Samantha Lawler, who co-published an article that argues the orbiting data centers threaten to overrun the night sky.

The concerns have triggered a surge in public opposition to SpaceX's proposal to deploy 1 million satellites, which the Federal Communications Commission is currently reviewing for potential approval. The International Astronomical Union fears they will radiate so much heat that they'll interfere with radio astronomy observations. Another worry is that SpaceX plans to retire at least some of the satellites by letting them burn up during atmospheric re-entry, which might release ozone-depleting chemicals, though the topic needs further study.

In response, SpaceX tells the FCC that it plans to start small with the constellation to study potential impacts on the Earth's atmosphere before scaling up. "Brightness mitigation is a core design criterion for the Orbital Data Center system to mitigate risks to optical astronomy," it says. The goal is to make the satellites too faint for the human eye and telescopes to see.

In his presentation, Musk also pushed back on critics who questioned how the company would cool the orbiting data centers, since space has no air. "It's safe to say SpaceX knows how to do heat rejection in space, with 10,000 [Starlink] satellites in orbit," he said, later adding: "The radiator is quite small relative to the solar panels."

Musk Eyes 'Terafab' Chip Factory

Still, Musk said his company is currently missing the large-scale capacity to manufacture the AI chips needed for the satellites. Hence, his presentation was mainly focused on building a new factory, dubbed the "Terafab," to produce cutting-edge processors, including GPUs and memory chips, for SpaceX and his EV company, Tesla, which is also developing humanoid robots. "It's a hostile environment in space, so you want to design the chip, optimize it, for space," Musk said.

Although the SpaceX CEO said he was grateful to the company's current chip suppliers, Samsung and Micron, he added, "We either build the Terafab, or we don't have the chips." The goal is to construct the new factory in Austin, Texas. The problem is that it usually takes three to five years and tens of billions in investment to build chip factories in the US, at a time when the industry is also facing a historic shortage.

Nevertheless, Musk remains bullish on orbiting data centers, pointing to the plentiful solar energy the satellites can harness. "I actually think the cost of deploying AI into space will drop below the cost of terrestrial AI much sooner than most people expect," Musk said. "I think it may be only two or three years before it is lower-cost to send AI chips into space than it is on the ground."
Blue Origin and the startup Starcloud are also preparing to launch their own orbiting data center constellations through tens of thousands of new satellites.
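The scale figures quoted in this article are easy to sanity-check. The sketch below reproduces the arithmetic with two hypothetical helper names, `per_year` and `fleet_power_gw`, and assumes a 365-day year and that every satellite runs at the stated per-satellite power; the 1 MW case is an assumed reading of "megawatt-range," not a published specification.

```python
# Back-of-the-envelope checks on figures quoted in the article.
# Helper names and the 365-day year are assumptions for illustration.

DAYS_PER_YEAR = 365


def per_year(per_day):
    """Convert a daily count into an annual count."""
    return per_day * DAYS_PER_YEAR


def fleet_power_gw(satellites, kw_per_satellite):
    """Total fleet power in gigawatts (1 GW = 1,000,000 kW)."""
    return satellites * kw_per_satellite / 1_000_000


if __name__ == "__main__":
    # Lewis's estimated avoidance maneuvers across the constellation.
    print(f"{per_year(40_000):,} maneuvers/year at 40,000 per day")
    print(f"{per_year(100_000):,} maneuvers/year at 100,000 per day")

    # 1 million satellites at 100 kW each, then at an assumed 1 MW each.
    print(f"{fleet_power_gw(1_000_000, 100):,.0f} GW at 100 kW each")
    print(f"{fleet_power_gw(1_000_000, 1_000):,.0f} GW at 1 MW each")
```

The 40,000-per-day rate works out to 14.6 million a year, in line with the quoted "about 14.5 million," and the upper figure matches 36.5 million exactly. On the power side, 1 million satellites at 100 kW would total 100 GW, so reaching "thousands of gigawatts" would require the larger, megawatt-class designs.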

[4]

Nvidia announces Vera Rubin Space Module -- up to 25x the AI compute of H100 for orbital data centers

Nvidia CEO Jensen Huang has announced the Vera Rubin Space Module at the company's ongoing GTC 2026 event, claiming up to 25 times more AI compute than the H100 for orbital inference workloads. Six commercial space companies are understood to have already deployed the platform. According to the official Nvidia press release, the Vera Rubin Space Module is designed for orbital data centers running LLMs and advanced foundation models directly in space, with a tightly integrated CPU-GPU architecture and high-bandwidth interconnect built to handle large data streams from space-based instruments in real time. Below that sits the Nvidia IGX Thor, targeting mission-critical edge environments with support for real-time AI processing, functional safety, secure boot, and autonomous operation. The Nvidia Jetson Orin, meanwhile, handles the smallest form factor, targeting SWaP-constrained satellites for onboard vision, navigation, and sensor data processing. Back on planet Earth, Nvidia has positioned the RTX PRO 6000 Blackwell Series Server Edition GPU for geospatial intelligence workloads, claiming up to a 100 times performance uplift versus legacy CPU-based batch processing systems when analyzing large image archives. Nvidia says that six companies are currently using its platforms across orbital and ground environments: Aetherflux, Axiom Space, Kepler Communications, Planet Labs PBC, Sophia Space, and Starcloud, with Kepler deploying Jetson Orin across its satellite constellation for AI-driven data management. "Nvidia Jetson Orin brings advanced AI directly to our satellites, allowing us to intelligently manage and route data across our constellation," said Mina Mitry, the company's CEO, in Nvidia's official press release. Last October, Amazon and Blue Origin founder Jeff Bezos predicted that gigawatt-scale data centers in orbit were 10 to 20 years away, citing continuous solar power and the simplified cooling environment of space as the primary advantages. 
Starcloud, one of Nvidia's six partners, is already building what it describes as purpose-designed orbital data centers aimed at running training and inference workloads in orbit. "Space computing, the final frontier, has arrived," said Jensen Huang, adding that "AI processing across space and ground systems enables real-time sensing, decision-making and autonomy, transforming orbital data centers into instruments of discovery and spacecraft into self-navigating systems." The IGX Thor, Jetson Orin, and RTX PRO 6000 Blackwell Server Edition are available now. The Vera Rubin Space Module has no release date; Nvidia says it'll be available "at a later date."

[5]

Nvidia Debuts an AI Chip For Space-Based Data Centers

Orbiting data centers may be a moonshot, but Nvidia isn't waiting for the concept to potentially take off. The company announced it's working on a chip designed to survive the rigors of space. At Nvidia's GTC event, the company revealed the "Vera Rubin Space Module," which can run AI models, but from orbit. The module contains a GPU using Nvidia's latest Rubin architecture, and promises up to a 25-fold performance leap over the H100 GPU, which arrived back in 2022. But the chip seems engineered for specialized workloads on satellites or space stations rather than one day processing your ChatGPT prompt from orbit. Nvidia noted the module can process data streams from "space-based instruments in real time," creating a way to unlock "on-orbit analytics, autonomous scientific discovery and rapid insight generation." The company also didn't announce a specific launch date for the chip. Nor did it mention SpaceX, the major player that's been talking up orbital data centers. SpaceX CEO Elon Musk is so bullish on the idea that he expects space-based data centers to beat terrestrial data centers in both cost and efficiency in the near future. In addition, SpaceX has filed a regulatory request to operate up to 1 million satellites to support the orbiting data center project. In contrast, Nvidia's CEO Jensen Huang has been more cautious on space-based data centers, and recently indicated the concept needs more time to mature. "Well, the economics are poor today, but it is going to improve over time," he said in an earnings call last month. Huang then mentioned GPUs in space could excel at certain tasks, such as high-resolution satellite imaging: rather than rely on servers on Earth to help process the imagery, a GPU on board a satellite could do so at a much faster rate. 
So it's possible the Vera Rubin Space Module is all about laying the groundwork for a bigger business, but the current scope appears to be limited. At GTC, Huang pointed out the company needs to overcome the challenge of finding ways to cool AI chips in orbit when space has no air. In the meantime, Nvidia noted it's working on the Vera Rubin Space Module with partners including space-based solar power developer Aetherflux, space station maker Axiom Space, satellite imagery provider Planet Labs and a startup called Starcloud, which has been developing orbiting data centers. Last November, Starcloud launched an Nvidia enterprise GPU, the H100, into space on a test satellite. Starcloud was then able to connect to the GPU and use it to train and run AI models. The company has since filed a request to launch up to 88,000 satellites. Nvidia announced the Vera Rubin Space Module days after the company also began recruiting for an "Orbital Datacenter System Architect."

[6]

Nvidia announces Vera Rubin Space-1 chip system for orbital AI data centers

"Space computing, the final frontier, has arrived," said CEO Jensen Huang. "As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated." In a press release, the company said that its Vera Rubin Space-1 Module, which includes the IGX Thor and Jetson Orin, will be used on space missions led by multiple companies. The chips are specifically "engineered for size-, weight- and power-constrained environments." Partners include Axiom Space, Starcloud and Planet. Huang said Nvidia is working with partners on a new computer for orbital data centers, but there are still engineering hurdles to overcome. "In space, there's no convection, there's just radiation," Huang said during his GTC keynote, "and so we have to figure out how to cool these systems out in space, but we've got lots of great engineers working on it." The data center buildout that powers AI demand has been blamed for soaring electricity costs. Sending orbital data centers into space has been viewed as one solution, but high costs and low availability of rocket launches remain a barrier. Still, AI companies are racing to make use of space's virtually unlimited solar power. In November, Google announced its 'Project Suncatcher' initiative, exploring the concept of compute in space. Elon Musk's xAI was acquired by SpaceX last month in a $1.25 trillion deal with an eye toward building out data centers in space. The company is one of Nvidia's largest customers. SpaceX asked the Federal Communications Commission for approval to launch 1 million satellites for AI centers in January, a plan that has been opposed by scientists for environmental threats, including light pollution and orbital debris.

[7]

'Space computing, the final frontier, has arrived': Nvidia wants to power the next generation of data centers in space

* Nvidia reveals hardware for use in orbital data centers
* Space-1 Vera Rubin Module will offer huge increases in power and efficiency, with RTX PRO 6000 Blackwell Server Edition GPU back on Earth to process the data
* Six space companies have already signed up to work with Nvidia

Nvidia has laid out its plans to help launch the next generation of "space innovation" - namely through boosting data centers in space with the latest AI capabilities. At Nvidia GTC 2026, the company revealed how its hardware is helping partners and "space operators" become more effective and powerful, particularly for operations such as disaster response, climate and weather predictions and more. This includes Space-1 Vera Rubin Module, Nvidia's latest tool for orbital data centers (ODCs) running LLMs and advanced foundation models, which includes a Rubin GPU delivering up to 25 times more AI compute than its H100, and high-bandwidth interconnect to process massive data streams from space-based instruments in real time.

Looking ahead, Nvidia notes such power increases will allow for space-based inferencing, with its IGX Thor and Jetson Orin platforms offering energy-efficient, high-performance AI inference, image sensing and accelerated data processing to enable true edge computing on orbit in a compact module. It will also help AI applications operate seamlessly, "from ground to space, and space to space," while supporting increasingly complex missions as ODCs become more widespread. Elsewhere, Nvidia's data center platforms back on planet Earth, including the RTX PRO 6000 Blackwell Server Edition GPU, will provide high-throughput, on-demand processing for geospatial intelligence, delivering up to 100 times faster performance versus legacy CPU-based batch systems when analyzing massive imagery archives such as weather data. 
All of this should help unlock processes such as on-orbit analytics, autonomous scientific discovery and rapid insight generation, pushing space technology even further, with six commercial space companies understood to have already deployed the Space-1 Vera Rubin Module. "Space computing, the final frontier, has arrived. As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated," said Jensen Huang, founder and CEO of Nvidia. "AI processing across space and ground systems enables real-time sensing, decision-making and autonomy, transforming orbital data centers into instruments of discovery and spacecraft into self-navigating systems. With our partners, we're extending Nvidia beyond our planet -- boldly taking intelligence where it's never gone before."

[8]

Nvidia making AI module for outer space

San José (United States) (AFP) - Nvidia chief Jensen Huang on Monday said the leading artificial intelligence chip maker is heading for space with a goal of powering orbiting data centers. An Nvidia graphics processing unit (GPU) was launched into space late last year by startup Starcloud in what was touted as an off-planet debut for the technology, but now Nvidia is creating a module intended as a building block for data centers there. "We're working with our partners on a new computer called Vera Rubin Space One," Huang said as he kicked off the GPU-maker's annual developers conference in Silicon Valley. "It's going to go out to space and start data centers." Partners in the project include Starcloud, which is planning a November satellite launch that will mark the "cosmic debut" of the new Nvidia module. A Starcloud-1 satellite, about the size of a small refrigerator, is expected to be packed with 100 times more computing power than any previous space-based operation. "In 10 years, nearly all new data centers will be being built in outer space," predicted Starcloud co-founder and chief Philip Johnston. The startup explained that it plans to power Google AI with the Nvidia GPUs to show that large language models can run in outer space. Nvidia described the Vera Rubin module as being optimized for AI, enabling real-time sensing, decision making, and autonomous functioning. "Space computing, the final frontier, has arrived," Huang said. "With our partners, we're extending Nvidia beyond our planet -- boldly taking intelligence where it's never gone before." Tech firms are floating the idea of building data centers in space and tapping into the sun's energy to meet out-of-this-world power demands in a fierce artificial intelligence race. More than a dozen startups, aerospace leaders, and major tech firms are involved in the development, testing, or planning of space-based data centers. 
The big draw of space for data centers is power supply, with the option of synchronizing satellites to the sun's orbit to ensure constant light beaming onto solar panels. Building in space also avoids the challenges of acquiring land and meeting local regulations or community resistance to projects. Critical technical aspects of such operations need to be resolved, however, particularly damage to the orbiting data centers from high levels of radiation and extreme temperatures, and the danger of them being hit by space junk.

[9]

Nvidia previews Vera Rubin Space-1 Module for orbital data centers - SiliconANGLE

Nvidia Corp. has previewed a computing device called the Vera Rubin Space-1 Module that is designed to power satellites and orbital data centers. Chief Executive Jensen Huang announced the product today in his GTC keynote. Nvidia has shared only a limited amount of information about its new space hardware. As the name suggests, the Vera Rubin Space-1 Module is based on the company's Vera Rubin chip. The chip combines two Rubin graphics processing units with a single Vera central processing unit. Nvidia first announced Vera last year, but didn't release a detailed technical overview until today. The chip's 88 cores each feature a neural branch predictor, a module that can complete some calculations before their results are needed. That reduces the need to wait for calculation results and thereby speeds up processing. Vera's 88 cores are supported by a memory subsystem based on LPDDR5X, a RAM variety most commonly found in consumer devices. The Rubin graphics card, the other component of the Vera Rubin, features 336 billion transistors made using a 3-nanometer node. It can provide 50 petaflops of performance when processing NVFP4 data. Its predecessor managed 10 petaflops. The press release announcing Vera Rubin Space-1 Module didn't specify how many processors the device contains. However, a visualization that appeared behind Huang during his GTC keynote appeared to depict a pair of Vera Rubin chips. If the device does indeed ship with two chips, it may support a reliability feature called lockstep processing that is often implemented in spacecraft. The radiation found in space can interfere with chips and cause computing mistakes. Lockstep processing mitigates such errors by carrying out calculations with two chips instead of one. Each calculation is performed twice, or once in each chip. 
The processors compare their results to find discrepancies, which usually indicate the presence of an error, and then apply a fix. Radiation can cause errors in not only processors but also the attached memory. Nvidia ships many of its chips with a feature called ECC, or Error Correction Code, that can automatically fix some RAM-related technical issues. The technology is widely used in both data centers and satellites. During his keynote, Huang stated that the Vera Rubin Space-1 Module's cooling mechanism is still a work in progress. "In space there's no conduction, there's no convection," Huang said, referring to the two physical phenomena that data centers use to dissipate server heat. "There's just radiation. And so we have to figure out how to cool these systems out in space. We've got lots of great engineers working on it." Satellites remove heat from their internal components by radiating it into space as electromagnetic waves. Those waves usually take the form of infrared light. Satellites also transmit thermal energy internally between different components, for example from the onboard processor to parts that can withstand higher temperatures. That task is carried out with specialized heat transfer devices made of materials such as copper. According to Huang, Nvidia envisions customers using Vera Rubin Space-1 Module to power not only satellites but also orbital data centers. Several companies have expressed interest in building orbital AI infrastructure. Nvidia stated today that one of them, Sophia Space Inc., already uses its silicon. The chipmaker's customer base also includes space-based solar farm startup Aetherflux Inc., Planet Labs PBC and several other market players.
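The lockstep idea described above can be sketched in software. This is a simplified illustration and not Nvidia's implementation, which the company has not detailed: the names `run_lockstep`, `LockstepMismatch` and `make_flaky` are hypothetical, the "fix" is assumed to be a simple re-execution, and a radiation upset is simulated as a single flipped bit.

```python
# Minimal software sketch of lockstep (dual-redundant) execution.
# Hypothetical names; the retry-on-mismatch policy is an assumption.
from typing import Callable, TypeVar

T = TypeVar("T")


class LockstepMismatch(Exception):
    """Raised when the two units keep disagreeing after all retries."""


def run_lockstep(primary: Callable[[], T], shadow: Callable[[], T],
                 max_retries: int = 2) -> T:
    """Run the same calculation on two units and accept only matching results."""
    for _ in range(max_retries + 1):
        first = primary()
        second = shadow()
        if first == second:
            return first
        # A mismatch shows that an error occurred, but not which unit
        # was wrong, so the assumed recovery is to run both again.
    raise LockstepMismatch("results still differ after retries")


def make_flaky(value: int, flip_bit: int, faults: int) -> Callable[[], int]:
    """Simulate a unit whose first `faults` results have one bit flipped."""
    remaining = [faults]

    def compute() -> int:
        if remaining[0] > 0:
            remaining[0] -= 1
            return value ^ (1 << flip_bit)  # simulated radiation upset
        return value

    return compute


if __name__ == "__main__":
    healthy = lambda: 42
    glitchy = make_flaky(42, flip_bit=3, faults=1)
    # The first comparison fails, the retry succeeds.
    print(run_lockstep(healthy, glitchy))
```

With only two units, a disagreement reveals that something went wrong but not which result is correct, so this sketch recovers by recomputing; designs that need to pick a winner without rerunning typically add a third unit and vote.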

[10]

Nvidia unveils Vera Rubin Space-1 for orbital data centers

Nvidia has launched computing platforms for orbital data centers, unveiling the Vera Rubin Space-1 Module at its GTC 2026 conference on Monday. The move positions the chipmaker at the center of a growing push to move AI infrastructure beyond Earth, as terrestrial data centers strain power grids and tech companies seek alternatives. The announcement comes amid rising electricity costs linked to AI demand and intensifying competition to harness solar energy in space. The Vera Rubin Space-1 Module includes the IGX Thor and Jetson Orin chips, which Nvidia said are engineered for size-, weight-, and power-constrained environments. Partners for the initiative include Axiom Space, Starcloud, and Planet. CEO Jensen Huang framed the launch as a strategic necessity. "Space computing, the final frontier, has arrived," he said. "As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated." Huang acknowledged significant engineering obstacles remain. "In space, there's no convection, there's just radiation," he said during his keynote. "And so we have to figure out how to cool these systems out in space, but we've got lots of great engineers working on it." The orbital data center concept has gained traction across the industry. Google announced its 'Project Suncatcher' initiative in November to explore computing in space. SpaceX acquired Elon Musk's xAI last month in a $1.25 trillion deal aimed at expanding data centers in space; xAI is one of Nvidia's largest customers. SpaceX sought Federal Communications Commission approval in January to launch 1 million satellites for AI centers, a plan opposed by scientists over concerns about light pollution and orbital debris, according to CNBC. Nvidia designs graphics processing units and AI accelerators. The company trades on the Nasdaq under the ticker NVDA.

[12]

Exclusive: Expert Weighs In On Why Orbital Datacenters Could Likely Work In Tandem With Ground-Based AI Infrastructure

Space-based AI compute has been positioned by the tech industry as a solution to several challenges facing current Earth-based datacenter infrastructure, including energy supply, cooling, and water. However, is the technology as feasible and attainable as it's touted to be? We asked Sean McDevitt, a partner at consulting firm Arthur D. Little, for his insights on what the technology's future could look like in the short and long term.

A Targeted, Modular Deployment

Asked whether orbital datacenters are feasible given current economics and technology, McDevitt said they were feasible in "a limited sense." Current capabilities can support "specialized workloads," he said, but are not yet at "hyperscale or at a cost" that would let a satellite cluster replace terrestrial datacenters. "Today, the strongest case is for targeted, modular deployments rather than giant clouds in space," he said.

McDevitt said the promise of orbital datacenters is attractive because Earth-based AI infrastructure is running into bottlenecks like "long power interconnection queues, cooling and water constraints, permitting delays, etc." However, he said the enthusiasm could be partly driven by "hype around one variable [energy], while underestimating all the others."

Challenges Along The Way

The debris that orbital datacenters could generate is also a cause for concern, McDevitt said. "If the industry starts talking about thousands of orbital compute assets, debris management moves from a side issue to a core design and regulatory requirement," he said. He added that the satellites could increase collision risk unless there was "strong debris mitigation."

Cooling is another challenge McDevitt sees for orbital datacenters. "In orbit, you do not cool by convection or evaporation," he said. That eliminates the need for water, but it also means thermal design takes center stage. "Heat spreading, radiators, conservative power density, and workload scheduling" were all important aspects, he said.

Potential For Physical AI And Autonomy?

As physical AI and autonomy efforts ramp up, McDevitt said he saw potential for orbital datacenters to work as an extension of ground-based systems that operate autonomous technology and physical AI. "The best use case is likely a distributed architecture," he said, adding that orbital AI compute "will most likely be a complement to Earth-based infrastructure." In such a system, orbital compute would support "global sensing, preprocessing, model updates, and resilience for autonomous systems on Earth, in the air, at sea, and in space."

The best applications are in "Earth observation processing, RF signal analysis, spacecraft telemetry, in-orbit edge inference," etc., because these processes are "space-native," he said. However, he cautioned that "early [orbital datacenter] systems would have a shorter useful economic life," of somewhere between 5 and 7 years, adding that commercial systems were still several years away.

Concerns With AI

Raising alarm over privacy, McDevitt said that "there is also the possibility that some actors may see orbital infrastructure as a way to sidestep national legal and data rules." In the near term, however, orbital AI datacenters do not warrant massive investments such as deployments of thousands of AI satellites, he said. "The smarter near-term strategy is disciplined experimentation: identify orbit-native workloads, quantify avoided terrestrial bottlenecks, and pilot selectively," he said.

Space Exploration?

The technology also holds potential for furthering humanity's space exploration ambitions, according to McDevitt, as the infrastructure could help spacecraft with onboard autonomous operations, navigation support, and more. "For exploration missions, local or near-local compute would potentially reduce the need to send every raw dataset back to Earth and could speed up decisions in bandwidth-constrained environments," he said. He added that the technology could initially provide more value for space operations before it sees commercial applications for "mainstream cloud workloads."

[13]

Nvidia unveils new hardware in push for data centers in space

Nvidia's approach emphasizes that satellites can filter and process imagery, track weather systems, monitor infrastructure, and detect anomalies without waiting for instructions from Earth.

Nvidia unveiled a hardware platform for space-based computing. The Vera Rubin Space-1 Module will support orbital data centers and process geospatial intelligence in orbit, according to CNBC. CEO Jensen Huang called it the arrival of "space computing, the final frontier" during his keynote at Nvidia's semi-annual AI conference, held at the SAP Center in San Jose, California.

Huang said Nvidia and partners are developing a computer for orbital data centers but must solve cooling in a vacuum because "in space, there's no convection, there's just radiation." He described Nvidia's approach as putting intelligence where data is generated, as satellite constellations expand and deep space missions multiply, to cut latency and reduce reliance on ground links.

The Space Race

Rising electricity demand tied to AI training and inference on Earth has renewed interest in moving some compute to space, even as launch prices and payload availability limit scale today. In November, Google outlined "Project Suncatcher" to study computing in orbit. In January, SpaceX sought US approval to deploy 1 million satellites dedicated to data center operations, according to PCMag. It argued that space-based facilities will ultimately surpass terrestrial ones on cost and efficiency.

Startups are racing to demonstrate tangible orbital compute gains. Starcloud, backed by Nvidia, plans to operate satellites as orbital data centers dedicated to AI workloads. It says it will use Nvidia GPUs to run large language models in space and target scenarios that reduce dependence on downlink bandwidth by processing data in orbit. The company expects its Starcloud-1 system to deliver 100 times the compute of any previous space-based operation. It is planning a satellite launch in November that incorporates Nvidia's new module.

[14]

Nvidia CEO Jensen Huang Explains Why AI Data Centers In Space Are Harder Than They Sound: 'It'll Take Years. It's OK. I Got Plenty Of Time' - Alphabet (NASDAQ:GOOG), Alphabet (NASDAQ:GOOGL)

AI Data Centers In Space: Big Vision, Bigger Challenges

"We should definitely work on the ground first because we're already here," Huang said, while noting that preparing for space-based infrastructure is still important. The idea has gained traction as AI workloads surge, pushing tech companies to explore alternatives beyond traditional, power-hungry data centers.

Cooling In Space Remains A Major Technical Barrier

Huang pointed to cooling as one of the biggest obstacles to building data centers in orbit. On Earth, systems rely on conduction and convection to dissipate heat. In space, however, those methods don't work. "You can only use radiation," Huang said, adding that it requires "very large surfaces" to release heat, which makes systems complex and expensive. While not impossible, he suggested the challenge will take years to solve.

While space offers abundant solar energy and virtually unlimited room, the cost of launching hardware and building infrastructure remains a major barrier.

Nvidia Already Running AI Workloads In Space

Despite the hurdles, Huang said Nvidia has already taken early steps toward space-based computing. "We're already there," he said, noting that the company has deployed CUDA-powered systems on satellites performing imaging and AI processing tasks. Nvidia CUDA is a platform that lets developers use Nvidia graphics cards for more than graphics: the cards can also handle complex computing tasks much faster than regular processors.

He added that processing data directly in space, instead of sending it back to Earth, is a logical next step. "That kind of stuff ought to be done in space," Huang said. "It'll take years. It's OK. I got plenty of time."

Economics Still A Key Constraint

During Nvidia's fourth-quarter earnings call, Huang offered additional perspective on space-based data centers. "The economics are poor today, but it is going to improve over time," he said. While cautious in the near term, Huang noted that space offers an "abundance of energy" and "plenty of space" for solar-powered AI satellites.

Nvidia Pushes Space AI And Orbital Data Centers

At the GPU Technology Conference, Huang highlighted the potential of orbital data centers as Nvidia unveiled its Space-1 Vera Rubin Module. Huang added that the company's THOR chip is "radiation approved" and noted that Nvidia is already using satellites for image processing.

Price Action: Nvidia closed at $178.56, down 1.02% on Thursday and was trading at $179.17, up 0.34% in after-hours trading, according to Benzinga Pro. Benzinga Edge Stock Rankings show that while the stock is lagging in the short and medium term, it demonstrates a strong long-term upward potential, supported by a Quality score in the 97th percentile.

Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.
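Huang's remark that radiative cooling needs "very large surfaces" can be made concrete with the Stefan-Boltzmann law, under which an ideal radiator sheds heat in proportion to its area and the fourth power of its temperature. The sketch below is a rough illustration only: the 100 kW heat load, the emissivity of 0.9, the one-sided panel, and the decision to ignore absorbed sunlight and Earth's infrared are all assumptions made here, not figures from Nvidia.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4).
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9, sink_temp_k=0.0):
    """Area of an idealized one-sided radiator that rejects heat_w watts.

    Assumptions (illustrative, not from the article): uniform radiator
    temperature, grey-body emissivity, and no absorbed sunlight or Earth
    infrared beyond an optional effective sink temperature.
    """
    if heat_w < 0:
        raise ValueError("heat_w must be non-negative")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    # Net radiated power per square metre of panel.
    net_flux = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    if net_flux <= 0:
        raise ValueError("radiator must be hotter than its surroundings")
    return heat_w / net_flux


if __name__ == "__main__":
    # Hypothetical 100 kW heat load at a few radiator temperatures.
    for temp_k in (280.0, 300.0, 350.0):
        area = radiator_area_m2(100_000.0, temp_k)
        print(f"{temp_k:.0f} K radiator: {area:.0f} m^2")
```

Under these assumptions a 300 K radiator needs roughly 240 square metres to reject 100 kW, and running the radiator hotter shrinks the area quickly because of the fourth-power dependence. Real designs would also have to account for heat absorbed from the Sun and Earth, which this sketch leaves out.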

[15]

Musk Vs Huang: The Race For AI In Space - NVIDIA (NASDAQ:NVDA)

Musk's Starlink Vs. Huang's Vera Rubin: Billionaires Battle For Orbital AI Dominance

The next AI arms race may not happen on Earth. With its ambitious "Vera Rubin Space-1" project, Nvidia Corp (NASDAQ:NVDA) aims to bring high-performance AI computing to space, moving data centers beyond the limitations of terrestrial infrastructure. But as the company pushes for orbit, the real challenge isn't just getting GPUs into space. It's keeping them running in the hostile conditions of space, where cooling systems and power efficiencies that work on Earth won't apply.

As Nvidia rethinks data center architecture for space, Elon Musk's SpaceX and Starlink provide a unique advantage: an existing satellite network that could become the backbone of orbital AI.

The Physics Problem No One Has Solved

As Nvidia CEO Jensen Huang knows, in space there's no conduction or convection, only radiation. That makes cooling high-density GPU clusters, already one of the hardest problems in AI infrastructure, even more complex. On Earth, data centers rely heavily on liquid cooling and airflow. In orbit, those options disappear.

This forces a complete rethink of data center architecture, from thermal design to power efficiency to chip-level optimization. In other words, orbital AI isn't just an extension of today's infrastructure. It's a redesign from first principles.

Musk's Advantage

While Nvidia is designing for orbit, Musk is already there. And Musk isn't starting from scratch. Through SpaceX and Starlink, and with Tesla, Inc.'s (NASDAQ:TSLA) investment in xAI now tied into that ecosystem, Musk already controls one of the largest satellite networks in orbit. That gives Musk something Nvidia doesn't yet have: deployment capability in orbit at scale. If compute moves to space, Starlink could become the backbone that connects it.

Why Space Changes The Economics Of AI

The push into orbit isn't just about ambition. It's about constraints. On Earth, AI infrastructure faces limits around power, land, latency, and geography. Space offers a different equation: near-global coverage, direct connectivity, and the potential to process data closer to satellites, defense systems, and remote networks. But it comes with brutal trade-offs: launch costs, maintenance challenges, and the unsolved physics of cooling.

The Next AI Battlefield Is Above Us

What's emerging is a new layer of competition: orbital AI infrastructure. Nvidia brings the compute stack. Musk brings rockets, satellites, and an existing network in space. With both companies racing to build the infrastructure that could make AI ubiquitous and faster, the stakes for space-based computing couldn't be higher.

[16]

Nvidia Announces New Chip For Space-Based AI Compute In Boost For Orbital Datacenters - NVIDIA (NASDAQ:NVDA)

Space-Based Datacenters

At the event, Huang revealed that Nvidia was working toward its goal of orbital datacenters. "We're going to space," Huang said, adding that the company's THOR chip was "radiation approved" and that Nvidia was already using satellites for image processing. "In the future, we'll also build datacenters in space," Huang said, but he also highlighted some challenges with the goal. "Of course, in space there's no conduction, no convection, there's just radiation," he said, adding that the company still had to figure out how to cool the systems in orbit.

NVIDIA, in an official statement on Monday, revealed that the new chipset is capable of delivering over "25x more AI compute for space-based inferencing." Sharing his views in the statement, Huang said that "AI processing across space and ground systems enables real-time sensing, decision-making and autonomy."

Benzinga Edge Stock Rankings show that Nvidia scores well on the Growth, Momentum, and Quality metrics.

Price Action: NVDA surged 1.65% to $183.22 at market close on Monday, but declined 0.24% to $182.78 during the after-hours trading session.

[17]

Nvidia unveils space computing platform for AI in orbit

Nvidia has announced the launch of the Vera Rubin Space-1 Module platform, designed to enable the deployment of advanced computing capabilities in space. Presented by CEO Jensen Huang during the GTC conference, this technology aims to support the rise of satellite constellations and space exploration. According to the executive, artificial intelligence must be capable of operating directly where data is generated. The platform notably integrates the IGX Thor and Jetson Orin systems, optimized to function in environments subject to significant size, weight, and power consumption constraints. Several space companies, including Axiom Space, Starcloud, and Planet Labs, plan to use these technologies in their missions.

Nvidia is working with its partners to design computers capable of equipping future orbital data centers. However, Jensen Huang acknowledged that technical challenges remain, particularly the management of cooling for computer systems in space, where the absence of convection requires solutions based on thermal radiation.

The concept of orbital data centers is drawing increasing interest as AI-related demand intensifies pressure on terrestrial power grids. Installing computing infrastructure in space could allow for the exploitation of continuously available solar energy, even though launch costs and logistical constraints remain significant. Several initiatives are emerging in this field, including the "Suncatcher" project announced by Google and the ambitions of SpaceX and xAI to develop orbital computing capabilities.

[18]

Space-1 Vera Rubin: NVIDIA's space chip for 25x AI performance

Houston, AI has landed. And this time, it's not coming back down. At GTC this week, NVIDIA announced the Space-1 Vera Rubin Module - a purpose-built AI chip designed to carry data-centre-class intelligence off the ground and into orbit. Compared to the H100 GPU, the Rubin GPU delivers up to 25 times more AI compute for space-based inferencing. That is not an incremental upgrade. It is a generational leap, engineered for one of the most unforgiving operating environments imaginable.

The problem it solves is as old as the satellite industry itself. Spacecraft have long been extraordinary sensors but terrible thinkers, capturing vast volumes of data and shipping it back to Earth for processing, often hours after the moment of capture. Space-1 Vera Rubin breaks that dependency. Its tightly integrated CPU-GPU architecture and high-bandwidth interconnect enable large language models and advanced foundation models to run directly in orbit, turning satellites from passive observers into active, intelligent systems.

Alongside Space-1, NVIDIA's IGX Thor and Jetson Orin platforms extend that intelligence across the full spectrum of space deployment. IGX Thor brings industrial-grade durability and functional safety to mission-critical edge environments, enabling spacecraft to process sensor data locally and operate autonomously. Jetson Orin, ultra-compact and energy-efficient, handles real-time vision, navigation and sensor processing directly onboard, ideal for satellites and on-orbit servicing vehicles where every milliwatt matters.

The ambition is already attracting serious partners. Planet Labs is using NVIDIA's CorrDiff AI models to convert raw daily Earth imagery into actionable intelligence in near real time. Kepler Communications is deploying Jetson Orin across its constellation to intelligently route data and cut latency. Starcloud is building purpose-designed orbital data centres capable of running full AI training and inference workloads in space for the first time. Aetherflux is pairing Space-1 with solar energy harvested in orbit to power fully autonomous space operations.

Back on Earth, the RTX PRO 6000 Blackwell Server Edition GPU is accelerating ground-based geospatial intelligence processing at up to 100 times the speed of legacy CPU systems, enabling near real-time disaster response, climate monitoring and infrastructure surveillance from hundreds of petabytes of historical satellite archive.

Jensen Huang put the vision plainly, "Intelligence must live wherever data is generated." Space-1 Vera Rubin is the hardware that makes that possible. IGX Thor and Jetson Orin are available now. Space-1 follows at a later date - but the countdown has already started.


Nvidia announced the Vera Rubin Space Module at GTC 2026, claiming up to 25 times more AI compute than the H100 for orbital inference workloads. Six commercial space companies have already deployed the platform, while CEO Jensen Huang acknowledged the significant challenge of cooling computer chips in space where there's no air convection.

Nvidia Enters the Space Computing Race with Vera Rubin Module

Nvidia has officially entered the orbital data centers competition with the Vera Rubin Space Module, a specialized AI compute platform designed to run large language models and advanced foundation models directly in space [4]. CEO Jensen Huang unveiled the technology at the company's GTC conference in San Jose, California, claiming the module delivers up to 25 times more AI compute performance than the H100 GPU for orbital inference workloads [4]. Six commercial space companies (Aetherflux, Axiom Space, Kepler Communications, Planet Labs PBC, Sophia Space, and Starcloud) are already deploying the platform across orbital and ground environments [4].


The announcement positions Nvidia alongside Elon Musk's SpaceX in the emerging race to establish AI data centers in space. While Musk has filed regulatory requests to operate up to 1 million satellites for orbiting data centers, Nvidia appears focused on a more measured approach, targeting specialized workloads rather than general-purpose computing [5].


Cooling Computer Chips in Space Remains Critical Challenge

The most significant technical hurdle for AI data centers in space involves thermal management. "In space, there's no conduction, there's no convection, it's just radiation," Huang explained during his keynote address [1]. Traditional cooling systems that rely on air circulation simply won't work in the vacuum of space, forcing engineers to develop entirely new approaches to dissipate heat from high-density AI processing chips [1].

The Vera Rubin Space Module features a tightly integrated CPU-GPU architecture with high-bandwidth interconnect built to handle large data streams from space-based instruments in real time [4]. This design enables on-orbit analytics, autonomous scientific discovery, and rapid insight generation without relying on ground-based servers [5]. Musk pushed back on cooling concerns, noting that SpaceX already manages heat rejection across 10,000 Starlink satellites in orbit [3].

SpaceX Plans Massive Satellite Constellation Despite Growing Concerns

Elon Musk revealed renderings of SpaceX's proposed orbiting data centers over the weekend, showing satellites longer than the International Space Station, which spans 109 meters [3]. The satellites feature exceptionally large solar arrays to capture solar power for AI processing, with current designs promising 100 kilowatts of AI computing and future versions reaching megawatt-range capacity [3].


Space debris expert Hugh Lewis estimates the constellation would require 40,000 to 100,000 collision avoidance maneuvers per day, translating to roughly 14.6 to 36.5 million maneuvers annually [3]. "I would expect quite a few collisions amongst the active satellites in the constellation, despite all those efforts to avoid them," Lewis told PCMag [3]. Astronomers also warn about light pollution from the proposed space-based data centers, with the International Astronomical Union concerned that heat radiation could interfere with radio astronomy observations [3].
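The annual maneuver figures follow from simple multiplication of Lewis's daily estimate. A minimal sketch, assuming a 365-day year and a constant daily rate (both simplifications made here, not stated by Lewis):

```python
# Assumed for illustration: a 365-day year and a constant daily rate.
DAYS_PER_YEAR = 365


def annual_maneuvers(per_day, days_per_year=DAYS_PER_YEAR):
    """Scale a daily collision-avoidance maneuver count to a yearly total."""
    return per_day * days_per_year


if __name__ == "__main__":
    low = annual_maneuvers(40_000)    # low end of the daily estimate
    high = annual_maneuvers(100_000)  # high end of the daily estimate
    print(f"{low / 1e6:.1f} to {high / 1e6:.1f} million maneuvers per year")
    # prints "14.6 to 36.5 million maneuvers per year"
```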

Commercial Deployment Already Underway

Despite technical and regulatory challenges, commercial deployment of AI models in space is already happening. Starcloud launched an Nvidia H100 GPU into space last November on a test satellite and successfully connected to it to train and run AI models on the GPU [5]. The company has since filed a request to launch up to 88,000 satellites [5]. Kepler Communications is deploying Nvidia Jetson Orin across its satellite constellation for AI-driven data management, with CEO Mina Mitry stating the technology "allows us to intelligently manage and route data across our constellation" [4].

Jensen Huang acknowledged that while "the economics are poor today," they will improve over time [5]. He pointed to specific use cases like high-resolution satellite imaging, where AI chips in space could process imagery much faster than Earth-based servers that must first receive the data [5]. Space offers distinct advantages for AI data centers, including abundant solar power, no zoning restrictions, and ample room for expansion [1]. Jeff Bezos predicted gigawatt-scale data centers in orbit are 10 to 20 years away, citing continuous solar power and simplified cooling environments as primary advantages [4]. Nvidia is actively recruiting for an "Orbital Datacenter System Architect" position, signaling long-term commitment to space computing [5].

Summarized by Navi
