Tiny Corp enables Nvidia GPUs on Macs with Apple-approved driver for AI workloads

2 Sources

[1]

Someone finally got an RTX 5090 running on a Mac - no hacks required

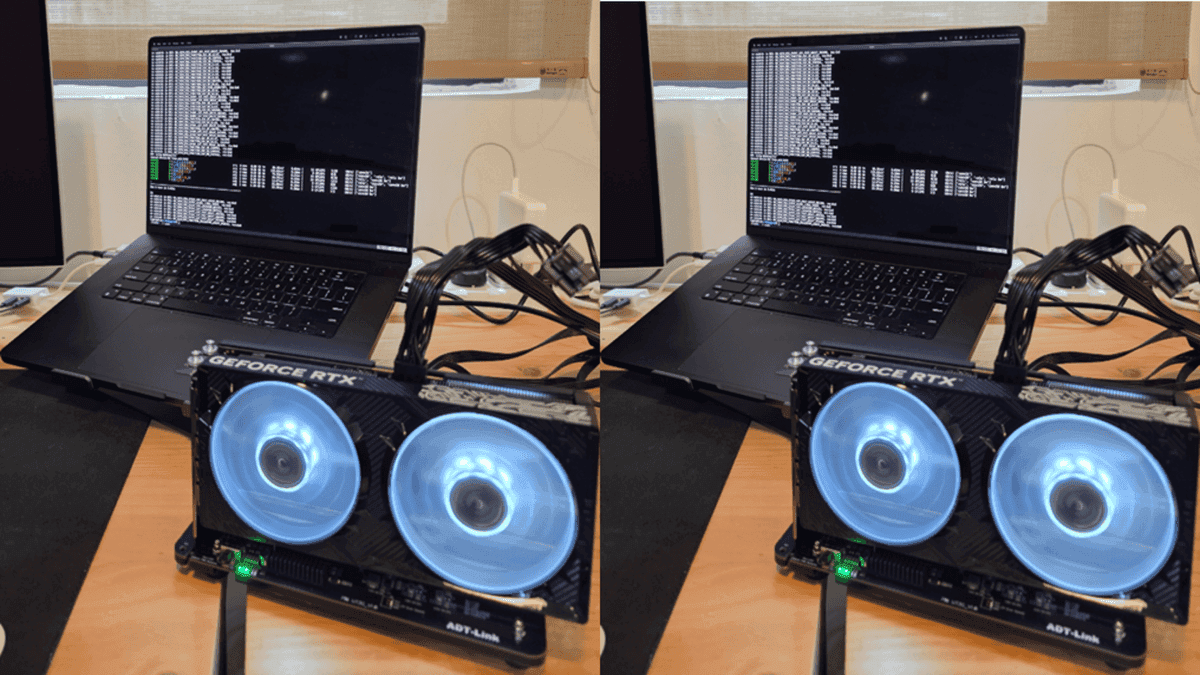

In context: Back in 2018, Apple yanked Nvidia support from macOS entirely, and that was pretty much it for CUDA on the platform. Developers who wanted GPU compute from Team Green on their Macs were out of luck for years. But that's now changing.

Tiny Corp, the same company that built the tinybox AI accelerator, has written its own Nvidia GPU driver completely from scratch. It's called TinyGPU, and it's an open-source macOS kernel extension. Better yet, Apple has signed off on it. That means you don't need workarounds like setting up a virtual machine or messing with System Integrity Protection to run it. All you need to do is plug in an external GPU over Thunderbolt or USB4, approve the extension, and it works.

One YouTuber has already tested the driver using his RTX 5090 with 32GB of VRAM. Alex Ziskind plugged the GPU into a Mac Mini M4 Pro, and it ran just fine. That's impressive considering this is Nvidia Blackwell silicon hooked up to Apple Silicon via a single cable.

But actual performance is a more complicated story. In an inference test using Llama 3.1 8B - a popular open-source AI model - the RTX 5090 managed roughly 7.48 tokens per second. Tokens per second is basically how fast an AI model can spit out its response, and that number isn't going to blow anyone away. Where things did get interesting, though, was in chat-style tasks. Time to first token, which is how long you wait before the response even starts appearing, was three to four times quicker than what you'd get from using Metal natively. That made the experience feel noticeably snappy, according to Ziskind.

Now for the catch. Running llama.cpp, which is one of the most popular open-source tools for local AI inference, on Metal is still about ten times faster overall. That's a big gap, though it's not really a Thunderbolt bandwidth problem. According to Ziskind, the real culprit is kernel efficiency: the 5090's memory can do 1.8TB/s, but the driver is only reaching 33GB/s. Then again, this isn't meant to compete with llama.cpp right now. The point is that the driver, compiler pipeline, and memory management are all in place. With that out of the way, Tiny Corp can start working on optimizations.

[2]

Apple rubberstamps an open source driver to allow Nvidia GPUs to run on Macs, though gaming isn't on the table just yet

It wasn't that long ago that you could happily use an Nvidia graphics card in an Apple Mac, either for gaming or to use Team Green's CUDA ecosystem. But once Apple switched to its own rendering API, that was the end of it all. Until now, that is, because thanks to a new open-source driver, you can go all AppleCUDA once again.

Coding and tech YouTuber Alex Ziskind (via Videocardz) has posted a video where they try out this new driver on a Mac mini, connecting a GeForce RTX 50-series graphics card to it via an eGPU dock, over a USB4 cable. The secret (well, not secret now) sauce is an app called TinyGPU, developed by tiny corp, which also created the special driver that it uses to make everything work. One important thing to note is that Apple has fully approved all of this, as well as AMD and Nvidia, so you don't need to be doing any kind of homebrew shenanigans or the like: just plug all your hardware together (making sure the graphics card is correctly powered), install the app and driver, set up your compiler, and you should be good to go.

Now, before you get excited about being able to do some serious gaming on a Mac with that spare RTX 5090 you happen to have lying around, tiny corp's work is focused on AI only. And even then, Ziskind shows that there is still a lot of performance being left behind, especially with the 5090 that they used. In general, while all three tested RTX 50-series graphics cards crunched through considerably more tokens per second than the M4 Pro powering the Mac mini, the software stack wasn't making full use of the Blackwell GPU's capabilities.

But this isn't to take anything away from what tiny corp has done, and if you have an AMD RDNA 3, Nvidia Ampere, or more recent GPU, then you'll be able to get stuck in yourself and see what kind of AI experiments you'll now be able to do on your Mac. Since all of tiny corp's GPU runtimes are open-source and available on GitHub, I wouldn't be surprised if someone figures out a way to get everything working with games, too, though this is likely to be one heck of a challenge.


Tiny Corp has developed TinyGPU, an open-source driver that brings Nvidia GPU support back to macOS after Apple dropped it in 2018. The Apple-approved driver lets external GPUs such as the RTX 5090 connect over Thunderbolt or USB4 without hacks. Performance still lags behind native Metal, but the driver opens new possibilities for AI experiments on the Mac.

Apple Approves Return of Nvidia GPU Support After Six-Year Absence

Six years after Apple removed Nvidia support from macOS in 2018, developers can finally run Nvidia GPUs on Macs again. Tiny Corp, the company behind the tinybox AI accelerator, has built TinyGPU, an open-source driver that restores compatibility without requiring workarounds [1]. The kernel extension has received Apple's official approval, meaning users don't need to disable System Integrity Protection or set up virtual machines. Installation is straightforward: connect external GPUs via Thunderbolt or USB4, approve the extension, and start working [2].

RTX 5090 on Mac Shows Promise Despite Performance Gaps

YouTuber Alex Ziskind tested the driver by connecting an RTX 5090 with 32GB of VRAM to a Mac Mini M4 Pro through an eGPU dock. The Blackwell GPU functioned correctly, marking a significant milestone for Nvidia GPUs on Macs [1]. During inference tests using Llama 3.1 8B, the RTX 5090 achieved roughly 7.48 tokens per second. While this number doesn't match native performance expectations, the time to first token was three to four times faster than Apple Metal, creating a noticeably snappier user experience [1].

However, running llama.cpp on Metal remains approximately ten times faster overall. The bottleneck isn't Thunderbolt bandwidth but kernel efficiency: the 5090's memory can handle 1.8TB/s, yet the driver currently reaches only 33GB/s [1]. This performance gap highlights the early stage of development rather than fundamental limitations.
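To put the two bandwidth figures above in proportion, here is a minimal Python sketch of the arithmetic. It uses only the 1.8TB/s and 33GB/s numbers quoted from Ziskind's test and treats 1.8TB/s as 1,800GB/s (decimal units, an assumption). The function names are illustrative and are not part of TinyGPU or any Tiny Corp tooling.

```python
# Figures quoted in the article; decimal units are assumed (1 TB/s = 1000 GB/s).
PEAK_BANDWIDTH_GBPS = 1800.0     # RTX 5090 memory bandwidth, 1.8 TB/s
ACHIEVED_BANDWIDTH_GBPS = 33.0   # bandwidth the driver reportedly reaches


def bandwidth_utilization(achieved_gbps: float, peak_gbps: float) -> float:
    """Return the fraction of peak memory bandwidth actually used."""
    return achieved_gbps / peak_gbps


def bandwidth_headroom(achieved_gbps: float, peak_gbps: float) -> float:
    """Return how many times higher the peak is than the achieved rate."""
    return peak_gbps / achieved_gbps


if __name__ == "__main__":
    util = bandwidth_utilization(ACHIEVED_BANDWIDTH_GBPS, PEAK_BANDWIDTH_GBPS)
    room = bandwidth_headroom(ACHIEVED_BANDWIDTH_GBPS, PEAK_BANDWIDTH_GBPS)
    print(f"utilization: {util:.1%}")  # utilization: 1.8%
    print(f"headroom: {room:.1f}x")    # headroom: 54.5x
```

On these numbers the driver uses under 2% of the card's memory bandwidth. The roughly 54x headroom describes bandwidth only. It is not a prediction of tokens per second, which also depends on factors the article does not report.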

Focus on AI Workloads Opens Door for Future Development

Tiny Corp designed this solution specifically for AI workloads, not gaming. The company has made its GPU runtimes open-source and available on GitHub, establishing the foundation for CUDA support on macOS [2]. The driver supports AMD RDNA 3 and Nvidia Ampere or newer architectures, giving developers access to AI experiments on Mac that were previously impossible [2].

With the driver, compiler pipeline, and memory management infrastructure now in place, Tiny Corp can focus on optimization. The open-source nature of the project means community developers might explore additional use cases, though adapting the system for gaming would present substantial challenges [2]. For now, the significance lies in restoring a capability that disappeared when Apple shifted to its own rendering API, giving Mac users access to Nvidia's GPU compute capabilities for AI development once again.

Summarized by Navi
