Adobe Firefly AI Assistant orchestrates tasks across Photoshop, Premiere, and Creative Cloud apps

19 Sources

[1]

Adobe takes Creative Cloud into Claude Code-esque territory

Adobe has been putting task-specific AI tools and features into its creative productivity applications like Photoshop, Illustrator, and Premiere at a breakneck pace, but the latest product from the company -- a chat-based interface that can handle complex, multi-modal projects across several applications -- marks a significant shift in how users can think about its suite of tools. You could imprecisely but defensibly call it a sort of "Claude Code for creative apps." On one hand, it's meant to provide experienced creatives with an efficient way to offload mundane tasks across multiple apps. On the other, it's meant to reduce the "barrier to entry" for inexperienced or casual users, in the wake of tool complexity that the company says has previously "widened the gap between idea and output." Adobe has offered chat-based prompts within individual apps before and in other Firefly interfaces. It has also offered access to generative models under the Firefly brand before. What's different here is that Firefly AI Assistant (as they call this new interface) promises to work across numerous Adobe Creative Cloud apps and to actually orchestrate workflows across them, checking in regularly with the user for suggestions and questions. As with similar tools we've already seen for programming and the like, users can interject mid-task with clarifications or additional information. While it's primarily a chat interface, it dynamically surfaces contextually relevant controls, such as sliders, based on the task at hand. Adobe also says it can learn from users over time about their favorite tools or even stylistic preferences. That could be useful, but as with the memory features for LLMs, it could become frustrating if it pigeonholes a user. Here's hoping you can customize that or disable that feature as needed. 
In addition, there will be "skills," which work pretty much like the skills you may have seen in similar tools for other disciplines, like OpenAI's Codex or Anthropic's Claude Code. Skills are essentially prepackaged integrations and workflows tailored to specific tasks. Users can tap a library of these, or they can build or configure their own. Firefly AI Assistant was actually first previewed last October, when it had the moniker "Project Moonlight" -- this is just the actual public release of that concept. This development marks a notable shift in Adobe's AI strategy. Think of it like this: Adobe's approach up to this point has been somewhat similar to Apple's, with an emphasis on leveraging models for very specific features and capabilities built into existing apps and workflows. By contrast, this is an entirely different paradigm, where users may work significantly less within specialized applications, and the technology is used to facilitate a new approach to working rather than just giving new functionality to people who already know how to use the apps. Adobe says Firefly AI Assistant will enter a public beta within a few weeks, but it hasn't yet offered specifics about pricing, limits, or which users or plans it will be available to.

[2]

Adobe's new Firefly AI assistant can use Creative Cloud apps to complete tasks | TechCrunch

Last October, Adobe previewed a new assistant under the "Project Moonlight" moniker that could do tasks for you by tapping different Adobe apps like Acrobat, Photoshop and Express. That product is now being launched as Firefly AI Assistant. The Firefly AI Assistant will be available in public beta in the coming weeks. The company didn't specify if the AI assistant will be priced differently from Firefly's credit-based subscription tiers. Like other creative tools, the Firefly AI Assistant lets you describe what you want it to create, and it will handle the rest. Adobe says the assistant can work across apps like Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator and its other apps to do tasks for you. Users can control the outputs from the AI assistant using text prompts as well as buttons and sliders. The assistant can suggest actions, orchestrate between actions and apps, and execute workflows, but leaves room for users to interject at any time. The agent will also show controls based on the project you are working on. For instance, if you're editing a product photo set in a forest, the assistant might give you a simple slider to increase or reduce the amount of trees and foliage. Adobe says the assistant will learn more about your creative preferences over time and suggest actions accordingly. Adobe is also releasing skills, which consist of multiple steps, for the assistant. The "social media assets" skill, for instance, can help you adapt images to different platforms by cropping or expanding, optimizing file sizes, and storing the outputs. Adobe has been working steadily to launch AI-powered assistants for Photoshop, Express and Acrobat. The company said on Wednesday that it is exploring having these assistants work better with third-party large language models. Competitors like Canva and Figma are also working on agentic workflows, though Adobe says its strength is in unifying its existing and popular tools.
"We have the opportunity with the Firefly AI assistant and with agentic experiences to remove some of the friction in learning this large catalog of tools we have and bring all of that value to our customers at their fingertips. And that's the opportunity we have," Alexandru Costin, vice president of AI and innovation, creativity and productivity business at Adobe, told TechCrunch. Adobe is adding new features to the Firefly tool, too. The AI video editor is getting options to reduce noise in speech and to adjust reverb and music, along with a color adjustment tool, and it now integrates with Adobe's stock library. The company is also adding the Kling 3.0 and Kling 3.0 Omni models to Firefly's library of third-party AI models.

[3]

Adobe Is Working With Anthropic to Bring a Creative AI Agent to Claude

Adobe is diving deeper into agentic AI and expanding its partnership roster in a new deal with the AI developer Anthropic. Adobe on Wednesday introduced its latest conversational, agentic creative assistant, which is the technological foundation for its work with Anthropic. Firefly is the hub for all things Adobe AI, with integrations across other popular Creative Cloud apps such as Photoshop, Acrobat and Premiere Pro. The new Firefly AI assistant is agentic, which means that it can perform tasks with minimal human oversight. You can upload a batch of photos and have the AI edit them for you, automatically adjusting the lighting and cropping, for example. One way to think about it is as a new-school AI tool that you can use to do old-school, or non-generative, editing. Adobe has been building assistants into its creative software for a while now. It introduced AI assistants in Adobe Express and Photoshop back in October. Agentic AI tools like the kind Adobe is building are rapidly becoming popular across the entire AI industry, with tools like Claude Code and OpenClaw shaking up legacy tech companies. This new partnership brings Adobe's creative agent to Claude. This is Claude's first major creative AI tool, expanding the popular app's capabilities beyond the coding and enterprise prowess it's known for. Adobe wrote in its blog post that it is "enabling creators to access the best of Adobe directly across the surfaces where they work every day," by bringing its tools to third-party models like Claude. Anthropic declined to comment. More details about the Adobe connector to Claude should be released in the coming weeks, including the exact date it will become available. The Firefly assistant will be released as a public beta later this month. There are a few other Firefly updates that are available now. Firefly's video editor is getting better audio, advanced coloring options and more integrations with Adobe Stock.
Firefly's image editing suite is also getting a few upgrades. New Kling models, Kling 3.0 and 3.0 Omni, will also be added to the 30-plus outside AI models that creators can use through Firefly.

[4]

Adobe embraces conversational AI editing, marking a 'fundamental shift' in creative work

Adobe is fully embracing AI tools that enable creators to edit their work using descriptive prompts, instead of manually using specific Creative Cloud apps. The software giant's new Firefly AI Assistant allows users to describe what they want to change by typing their own words into a conversational interface. Adobe says this marks a "fundamental shift in how creative work is done" by removing skill barriers and laborious tasks, while still giving creatives full control over their work. It'll be "available soon" on the Firefly AI studio platform according to Adobe, though no specific launch date was provided in the announcement. The unified AI interface, which builds on the Project Moonlight experiment that Adobe introduced at its Max conference last year, automatically performs "complex, multi-step workflows" to edit projects, utilizing specific tools and apps (including Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator, and more) on the user's behalf. Users of the Firefly AI Assistant can instruct the chatbot to "retouch this image" or "resize this for social media," for example, with Adobe's AI agent then providing a selection of edits to choose from alongside surfacing specific tools or sliders that allow creators to fine-tune the results. For more detailed adjustments, creatives can also open the edited results in Creative Cloud apps to finish the project. The Firefly AI assistant will learn the user's preferences over time, such as preferred tools, workflows, and aesthetic choices, to help make the results feel more personalized and consistent. Adobe's AI chief Alexandru Costin told The Verge that creatives will be able to choose whether to enable this feature, and can select specific projects for the AI assistant to learn from. Creatives can also create "Creative Skills" -- tools that provide specific and consistent presets -- that the AI assistant can execute, or select from a library of pre-made skills at launch. 
This is Adobe's latest push into the world of AI agents, having already launched specific AI assistants for apps like Adobe Acrobat, Express, and Photoshop. Adobe says it will also bring these agentic features to third-party AI apps like Anthropic's Claude, allowing those users to access Adobe tools outside of its own Firefly and Creative Cloud platforms. This announcement comes alongside some new image, video, and audio editing capabilities for Adobe's Firefly platform, which are rolling out starting today. The Firefly Video Editor is now integrated with Adobe Stock for easy access to B-roll footage, and allows users to access new features for improving color adjustments and the clarity of spoken dialogue. New editing features are also available in the Firefly image editing tool -- Precision Flow, which enables creators to make and compare a wider range of generated images without adjusting their prompts, and a new AI Markup tool that lets users control where edits should be made using brush and rectangle tools or reference images.

[5]

Adobe's Firefly AI Assistant works across Photoshop, Premiere and other apps

Few creative software companies have embraced AI like Adobe, with the company embedding image, video, audio and vector generation tools into nearly all its apps. Now, Adobe is taking on AI apps like Gemini's Nano Banana with its new prompt-based Firefly AI Assistant. You simply describe the outcome you want and it will execute "complex multi-step workflows" across Photoshop, Premiere, Lightroom, Illustrator and other apps to achieve that result, Adobe says. The complexity of apps like Photoshop creates a "barrier to entry" for users who may have a vision but lack skill, according to Adobe. That's where the Firefly AI Assistant comes in. It works much like ChatGPT and other prompt-based AI assistants, but it has Adobe's suite of powerful apps behind it to execute the required steps. "You no longer have to map the process. You can start from the outcome," the company says. Adobe emphasizes that while the Firefly AI Assistant is doing the grunt work, you remain in control. "You stay in the loop as the assistant executes, stepping in at any point to guide direction, adjust outputs and create something that's distinctly yours." It also maintains Adobe's native file formats, so the final output remains fully editable. You'll be able to launch complex workflows with Creative Skills that let you run multi-step workflows from a single prompt, then customize them to your working style. For instance, you can start with the "social media assets" skill then direct the assistant to crop or use Generative Extend to make it fit the format of Instagram, Facebook and other platforms. It can also handle context-aware creative decisions. In one example, Adobe describes a product photo set in a forest. "The assistant might give you a simple slider to increase or reduce the surrounding trees and foliage -- making it easy to adjust the scene without complex edits," the company explains.
Finally, to gather and act on feedback, the Assistant can organize and share work among team members via Adobe's Frame.io. Adobe emphasizes that Firefly AI Assistant is grounded in the company's pro-grade creative tools to deliver "precise, context aware results" in a way that other AI agents can't, and says it will learn your style over time. That's an argument the company no doubt hopes will counter a narrative that generative AI apps like Nano Banana are "eating software" like Adobe Photoshop. Adobe's Firefly AI Assistant will arrive in public beta in the coming weeks. Should you wish to use other AI generators within Adobe apps, the company has added Kling 3.0 and Kling 3.0 Omni, "all-purpose video models optimized for fast, high-quality production with smart storyboarding and audio-visual sync." That's on top of other models already offered, including Google's Nano Banana 2 and Veo 3.1, Runway Gen-4.5, Luma AI's Ray3.14, ElevenLabs' Multilingual v2, Topaz Labs' Topaz Astra and others.

[6]

Adobe launches Firefly AI Assistant to orchestrate tasks across Creative Cloud

Adobe launched the Firefly AI Assistant, a conversational agent that orchestrates tasks across Photoshop, Premiere, Lightroom, Illustrator, Express, and Frame.io using natural language. Previously codenamed Project Moonlight, it enters public beta in the coming weeks, integrates with third-party models including Anthropic's Claude, and maintains context across sessions. Adobe also announced Firefly Image Model 5, Custom Models, and the node-based Project Graph workflow system. The launch comes as CEO Narayen prepares to step down and Adobe faces competition from Canva (260M MAUs) and Figma.

Adobe has launched the Firefly AI Assistant, a conversational agent that can operate across Photoshop, Premiere Pro, Lightroom, Illustrator, Express, and the Firefly web app to complete creative tasks described in plain language. Rather than switching between applications and navigating menus, a designer can tell the assistant what they need (resize a set of images for social media, colour-grade footage to match a brand palette, generate vector variations of a logo), and the system orchestrates the work across whichever Adobe tools the task requires. The assistant, which enters public beta in the coming weeks, was first previewed under the codename "Project Moonlight" at Adobe MAX in October 2025. It maintains context across sessions, meaning it can remember a project's parameters, brand guidelines, and previous decisions rather than starting from zero each time. It also integrates with Frame.io, Adobe's collaborative video review platform, so that feedback and approval workflows feed directly into the assistant's task pipeline. Adobe confirmed that the assistant will work with third-party AI models, including Anthropic's Claude, alongside Adobe's own Firefly models and partner models from Google, OpenAI, Runway, Luma AI, ElevenLabs, and others. The new video and image editing capabilities announced alongside the assistant are available immediately for customers on Firefly plans.
The Firefly AI Assistant represents Adobe's entry into the agentic era of software: the shift from tools that respond to individual commands toward systems that understand intent and execute multi-step workflows autonomously. It is a significant architectural change for a company whose business has been built on selling individual applications, each with its own interface, learning curve, and subscription tier. The competitive pressure behind the move is visible in Adobe's financials. The company reported $23.77 billion in revenue for fiscal year 2025, with digital media annual recurring revenue of $19.20 billion, representing 11.5 per cent year-on-year growth. It is targeting $26 billion in revenue for FY2026. Those are large numbers, but the growth rate has slowed, and the stock has declined roughly 43 per cent as investors question whether Adobe's traditional per-application model can survive a market where every software company is embedding AI and where competitors offer increasingly capable creative tools at a fraction of the price. Canva now has more than 260 million monthly active users, many of them the small businesses and marketing teams that Adobe's Express product targets. Figma commands an estimated 80 to 90 per cent market share in UI and UX design, and is the company Adobe tried to acquire for $20 billion before the deal was abandoned in the face of regulatory opposition. Both companies are building their own AI-driven creative assistants, and neither carries the legacy of a product suite designed before large language models existed. In practical terms, the Firefly AI Assistant works as an orchestration layer sitting above Adobe's individual applications. A user might ask it to take a raw photograph from Lightroom, apply a specific editing style, generate three variations with different aspect ratios in Photoshop, create a matching set of social media graphics in Express, and prepare the assets for review in Frame.io, all in a single conversational thread.
The assistant determines which application handles each step and executes accordingly. This matters because multi-application workflows are where creative professionals lose the most time. A video editor working in Premiere Pro who needs a title card designed in Illustrator, colour-corrected footage from Lightroom, and audio processing from Adobe's tools currently manages those hand-offs manually. The assistant aims to collapse that friction into a single interface where the apps become invisible and only the outcome matters. Adobe is also launching Firefly Image Model 5 in public beta, its latest image generation model, and expanding Firefly Custom Models, which let creators train a model on their own image library to capture a specific visual style, character design, or photographic look. Custom models are private by default and reusable across projects, a feature aimed at enterprise teams and brand-conscious studios that need consistent visual output without exposing their assets to third-party training sets. Alongside the assistant, Adobe is developing Project Graph, a node-based visual system that lets creators design, connect, and automate AI-powered workflows across Creative Cloud. Where the Firefly AI Assistant uses natural language, Project Graph uses a visual editor in which users wire together AI models, Adobe tools, and effects into reusable "capsules", portable workflow templates that can be shared across teams and dropped into individual applications. Project Graph can access tools from across the Creative Cloud suite, including Photoshop, Illustrator, and Premiere Pro, as well as the 30-plus third-party AI models Adobe has integrated through partnerships. It is still in development but represents Adobe's longer-term bet: that the value of its platform lies not in any single application but in the connective infrastructure between them. The AI push arrives as Adobe navigates a CEO transition. 
Shantanu Narayen, who has led the company for 18 years and drove its transformation from packaged software to cloud subscriptions, announced in March 2026 that he will step down once a successor is appointed. He will remain as board chair. The successor search, led by a special committee under lead independent director Frank Calderoni, is considering both internal and external candidates. Narayen's departure creates an unusual situation: the executive who built Adobe's subscription empire is leaving just as that model faces its most serious structural challenge. His successor will inherit a company with nearly $24 billion in annual revenue, dominant market share in professional creative tools, and a strategic partnership with NVIDIA on next-generation Firefly models -- but also a stock price that suggests the market is not yet convinced the transition to AI-native creative tools will protect the margins that made Adobe one of the most profitable software companies in the world. The Firefly AI Assistant is the clearest signal yet of the direction. Adobe is betting that the future of creative software is not a suite of applications you learn individually but a conversational partner that knows what you want and which tools to use. Whether creative professionals embrace that vision, or see it as the automation of their craft, will define the next chapter for a company that has shaped how the world makes things for four decades.

[7]

Adobe Firefly AI Assistant will handle multi-step tasks across Creative Cloud apps - 9to5Mac

Available in public beta soon, Adobe Firefly AI Assistant will take a user's prompt and then orchestrate multi-step workflows across multiple Creative Cloud apps. Here are the details. Today, Adobe is announcing Firefly AI Assistant, a smart agent that will soon build on existing AI assistants across several Creative Cloud apps, and orchestrate multi-step actions across them from a single, unified interface, maintaining context across sessions. In practice, this means users won't need to know the nuts and bolts of platforms such as Photoshop, Premiere, Express, Lightroom, Illustrator, and more. Instead, they'll prompt Firefly AI Assistant, which will orchestrate actions across these apps to deliver the result. Available in public beta in the coming weeks, Firefly AI Assistant will feature a growing library of pre-built Creative Skills (such as retouching portrait photos with consistent presets or generating content across social channels), but will also let users create their own skills to streamline their workflow. In fact, Adobe says Firefly AI Assistant will learn the user's preferences over time, including aesthetic choices and preferred tools and workflows, "to deliver more consistent, tailored results." Importantly, Firefly AI Assistant will also present suggestions and even ask contextual questions depending on the user's request, and will let users "step in at any point to guide, refine or adjust outputs." One ambitious aspect of Firefly AI Assistant is its context-aware capabilities. Here's Adobe on the feature: For example, if you're editing a product photo set in a forest, the assistant might give you a simple slider to increase or reduce the surrounding trees and foliage -- making it easy to adjust the scene without complex edits. 
Adobe adds that its shared workflow platform Frame.io will also be part of the experience, letting users ask the assistant to package and organize materials for a presentation, share them with collaborators, collect feedback, and even apply requested changes automatically. Finally, the company worked with Anthropic to bring Firefly AI Assistant compatibility to Claude, "enabling creators to access the best of Adobe directly within the surfaces where they work every day," with additional third-party integrations underway. Right now, there is no launch date set for Firefly AI Assistant. The company says the platform "will be available in public beta in the coming weeks," with more information and demos planned for Adobe Summit, which is set to take place from April 19-22 in Las Vegas. Today's announcement also includes more actionable news that creators can leverage immediately. First, Firefly Video Editor is adding new capabilities. Adobe Firefly is also adding Kling 3.0 and Kling 3.0 Omni to its list of more than 30 third-party models, which also includes Google's Nano Banana 2 and Veo 3.1, Runway Gen-4.5, Luma AI's Ray3.14, Black Forest Labs' FLUX.2 [pro], ElevenLabs' Multilingual v2, Topaz Labs' Topaz Astra and Adobe's own "commercially safe" Firefly models. Finally, Firefly's image editing toolset is also getting new capabilities.

[8]

Adobe's new Firefly AI Assistant wants to run Photoshop, Premiere, Illustrator and more from one prompt

Adobe today launched its most ambitious AI offensive to date, unveiling the Firefly AI Assistant -- a new agentic creative tool that can orchestrate complex, multi-step workflows across the company's entire Creative Cloud suite from a single conversational interface -- alongside a raft of new video, image, and collaboration features designed to position the company at the center of the rapidly evolving AI-powered content creation landscape. The announcements, which also include a new Color Mode for Premiere Pro, the addition of Kling 3.0 video models to Firefly's growing roster of third-party AI engines, and Frame.io Drive -- a virtual filesystem that lets distributed teams work with cloud-stored media as though it lived on their local machines -- represent Adobe's clearest signal yet that it views agentic AI not as a feature upgrade but as a fundamental reshaping of how creative work gets done. "We want creators to tell us the destination and let the Firefly assistant -- with its deep understanding of all the Adobe professional tools and generative tools -- bring the tools to you right in the conversation," Alexandru Costin, Vice President of AI & Innovation at Adobe, told VentureBeat in an exclusive interview ahead of the launch. The stakes could hardly be higher. Adobe is fighting to convince Wall Street, creative professionals, and a wave of well-funded AI-native competitors that its decades-old software empire can not only survive the generative AI revolution but lead it.

How Adobe turned a research prototype into a 100-tool creative agent

The centerpiece of today's announcement is the Firefly AI Assistant, which Adobe describes as a fundamentally new way to interact with its creative tools. Rather than requiring users to manually navigate between Photoshop, Premiere, Illustrator, Lightroom, Express, and other apps -- selecting the right tool for each step of a complex project -- the assistant lets creators describe an outcome in natural language.
The agent then figures out which tools to invoke, in what order, and executes the workflow. The assistant is the productized version of Project Moonlight, a research prototype Adobe first previewed at its annual MAX conference in the fall of 2025 and subsequently refined through a private beta. "This is basically [Project] Moonlight," Costin confirmed to VentureBeat. "We started with all the learnings from Moonlight, and we engaged with customers. We looked internally. We evolved that architecture to make it more ambitious." Under the hood, Adobe says it has assembled roughly 100 tools and skills that the assistant can call upon, spanning generative image and video creation, precision photo editing, layout adaptation, and even stakeholder review through Frame.io. The system is built around a single conversational interface inside the Firefly web app where users describe what they want and the assistant maintains context across sessions. Pre-built Creative Skills -- purpose-built, multi-step workflow templates such as portrait retouching or social media asset generation -- can be run from a single prompt and customized to match a creator's own style. The assistant also learns a creator's preferred tools, workflows, and aesthetic choices over time, and understands the content type being worked on -- image, video, vector, brand assets -- to make context-aware decisions. Crucially, outputs use native Adobe file formats -- PSD, AI, PRPROJ -- meaning users can take any result into the corresponding flagship app for manual, pixel-level refinement at any point. "We always imagine this continuum where you can have complete conversational edits and pixel-perfect edits, and you can decide, as a creative, where you want to land," Costin said. The Firefly AI Assistant will enter public beta in the coming weeks, though Adobe did not specify an exact date. 
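
To make the idea of a skill concrete, here is a minimal sketch of how a multi-step workflow might be routed across apps while leaving room for the user to step in. It is an illustration only, not Adobe's implementation: `Step`, `Skill`, `run_skill`, `fake_tool`, the `review` callback, and the particular app-to-step assignments are all hypothetical names and choices invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical illustration only: none of these names come from Adobe.

@dataclass
class Step:
    app: str     # which application handles the step, e.g. "Photoshop"
    action: str  # what the step does, e.g. "crop"

@dataclass
class Skill:
    name: str
    steps: List[Step]

def run_skill(
    skill: Skill,
    tools: Dict[str, Callable[[str, str], str]],
    asset: str,
    review: Callable[[Step, str], bool] = lambda step, asset: True,
) -> List[str]:
    """Run each step in order, routing it to the tool registered for its app.

    `review` stands in for the user stepping in: returning False stops the run.
    Returns a log of what happened.
    """
    log = []
    for step in skill.steps:
        if not review(step, asset):
            log.append(f"stopped before {step.app}:{step.action}")
            break
        asset = tools[step.app](step.action, asset)
        log.append(f"{step.app}:{step.action} -> {asset}")
    return log

def fake_tool(action: str, asset: str) -> str:
    # Stand-in for a real tool call; it only records the action in the name.
    return f"{asset}+{action}"

# An assumed breakdown of a "social media assets" style workflow.
social_assets = Skill(
    name="social media assets",
    steps=[
        Step("Photoshop", "crop"),
        Step("Express", "layout"),
        Step("Frame.io", "share"),
    ],
)
tools = {"Photoshop": fake_tool, "Express": fake_tool, "Frame.io": fake_tool}

if __name__ == "__main__":
    for line in run_skill(social_assets, tools, "photo.psd"):
        print(line)
```

Passing a different `review` function, for example one that returns False when the step's app is Express, halts the run at that point, which loosely mirrors the "step in at any point" behavior Adobe describes.
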

Why Wall Street is watching Adobe's AI pricing model so closely

For a company whose AI monetization story has faced persistent skepticism from investors, the pricing structure of the Firefly AI Assistant will be closely watched. Costin told VentureBeat that, at launch, using the assistant will require an active Adobe subscription that includes the relevant apps -- meaning users who want the agent to invoke Photoshop cloud capabilities, for instance, will need an entitlement that includes the Photoshop SKU. Generative actions will consume the user's existing pool of generative credits, consistent with how Firefly credits work across the rest of Adobe's platform. "To use some of these cloud capabilities from Photoshop and other apps, you need to have a subscription that includes access to the Photoshop SKU," Costin explained. "You'll be consuming your credits when you use generative features." He acknowledged, however, that the model could evolve: "As we better understand the value of this -- and the costs of operating the brain, the conversation engine -- things might change." The question of whether Adobe can convert AI enthusiasm into meaningful revenue growth is anything but theoretical. When Adobe reported its most recent quarterly results in March, it touted 10% year-over-year revenue growth to $6.4 billion and disclosed that annual recurring revenue from AI standalone and add-on products had reached $125 million -- a figure CEO Shantanu Narayen projected would double within nine months.

Adobe adds Chinese AI video models to Firefly, raising commercial safety questions

Alongside the assistant, Adobe is expanding Firefly's roster of third-party AI models to include Kling 3.0 and Kling 3.0 Omni, two video generation models developed by Kuaishou, the Chinese technology company.
Kling 3.0 focuses on fast, high-quality production with smart storyboarding and audio-visual sync, while the Omni variant adds professional controls for shot duration, camera angle, and character movement across multi-shot sequences. The additions bring Firefly's model count to more than 30, joining Google's Nano Banana 2 and Veo 3.1, Runway's Gen-4.5, Luma AI's Ray3.14, Black Forest Labs' FLUX.2[pro], ElevenLabs' Multilingual v2, and others. When asked whether Adobe had concerns about integrating a model from a Chinese tech company given the current geopolitical climate, Costin was direct: "We think choice is what we want to offer our customers." He explained that Adobe's strategy distinguishes between its own commercially safe, first-party Firefly models -- trained on licensed Adobe Stock imagery and public domain content -- and third-party partner models, which carry different commercial safety profiles. "For some use cases, like ideation, non-production use cases, we got requests from customers to support some external models," Costin said. "If I'm in ideation, I might be more flexible with commercial safety. When I go into production, I'd want to have a model that gives you more confidence." This raises an important nuance for the agentic era. When the Firefly AI Assistant autonomously selects which model to use for a given task, the commercial safety guarantees may vary depending on which engine it invokes. Costin pointed to Adobe's Content Credentials system -- the metadata-and-fingerprinting framework developed through the Content Authenticity Initiative -- as the mechanism for maintaining transparency. "The agentic power -- and the fact that the assistant has access to all of those models -- means it could decide to use a model that carries different content credentials," he acknowledged. 
"But with the transparency of content credentials, the user will know how a particular piece of content was created and can decide whether that's commercially safe or not." Adobe offers commercial indemnity for its first-party Firefly models but applies different indemnity levels for third-party models -- a distinction that enterprise buyers, in particular, will need to carefully evaluate.

Inside Adobe's active collaboration with Nvidia on long-running AI agent infrastructure

Adobe's agentic ambitions also intersect with its strategic partnership with Nvidia, announced earlier this year at Nvidia's GTC conference. When asked whether the Firefly AI Assistant's agentic capabilities are built on Nvidia's agent toolkit and NeMo infrastructure, Costin revealed that the collaboration is active but has not yet made it into a shipping product. "We're in active discussions -- investigating not only Nemotron," Costin said. "They have this technology called Open Shell and Nemo Claw, which give us the ability to efficiently run long-running agentic workflows in a sandboxed environment." He said the technology would become increasingly important as Adobe pushes the assistant to handle longer, more autonomous creative tasks -- but cautioned that "it's not shipping yet. It's being actively explored." For Nvidia, which is building an ecosystem of enterprise AI agent platforms with partners like Adobe, Salesforce, and SAP, the partnership could eventually serve as a high-profile proof point for its agent infrastructure stack in the creative vertical. For Adobe, the ability to run complex, long-duration agentic workflows efficiently and securely in sandboxed environments could be the technical foundation that separates the Firefly AI Assistant from lighter-weight chatbot integrations offered by competitors.
The partnership also signals Adobe's recognition that the computational demands of agentic AI -- where a single user request may trigger dozens of model calls and tool invocations -- require infrastructure partnerships that go well beyond what a software company can build alone.

Premiere Pro's new color grading mode and the tools Adobe is shipping today

Beyond the headline AI assistant announcement, Adobe's broader set of updates reflects a company trying to strengthen its position across every phase of the content creation pipeline. Color Mode in Premiere Pro may be the most significant near-term upgrade for working editors. Entering public beta today, Color Mode is described as a first-of-its-kind color grading experience built specifically for the way editors -- rather than dedicated colorists -- think and work. Adobe notes that it was developed through an extensive private beta with hundreds of working editors, and that participants reported they "actually enjoy color grading" -- a sentiment suggesting Adobe may have found a way to democratize one of post-production's most intimidating disciplines. General availability is expected later in 2026. The Firefly Video Editor gains audio upgrades including the Enhance Speech feature migrated from Premiere and Adobe Podcast, direct Adobe Stock integration with access to more than 800 million licensed assets, and simple color adjustment controls with intuitive sliders and one-click looks. On the image editing front, Adobe introduced Precision Flow, which generates a range of semantic variations from a single prompt and lets users browse them via an interactive slider -- a novel approach that Costin described as "the best slider-based control mixed with the best semantic understanding of not only the existing scene, but what the scene could be." AI Markup complements this by letting users draw directly on images to specify where and how edits should be applied.
After Effects 26.2 adds an AI-powered Object Matte tool that dramatically accelerates rotoscoping and masking -- create accurate mattes of moving subjects with a hover and click, refine with a Quick Selection brush, and perfect edges with a Refine Edge tool.

Frame.io Drive wants to kill the shipped hard drive and make cloud media feel local

Rounding out the announcements, Frame.io Drive addresses one of the most persistent pain points in distributed video production: getting media from point A to point B without losing hours -- or days -- to downloads, syncing, and shipped hard drives. Frame.io Drive is a desktop application that mounts Frame.io projects to a user's computer so media appears in Finder or Explorer and behaves like local files. The underlying technology, called Frame.io Mounted Storage, streams media on demand as applications request it, while local caching ensures smooth playback. The product builds on streaming technology provided by Suite Studios, and the real-time file access capability is included with every Frame.io account. Adobe emphasized that all content lives solely within Frame.io and is never shared with third parties. The move positions Frame.io not just as a review-and-approval tool at the end of the production pipeline but as the central media layer from the very beginning of a project -- from first capture through final delivery. If successful, the strategy could significantly deepen Adobe's lock-in with professional video teams by making Frame.io the single source of truth for distributed productions. Frame.io Drive and Mounted Storage will roll out in phases, with Enterprise customers gaining access starting today and accounts on other plans following shortly. Others can join a waitlist.
Adobe's biggest challenge isn't building the AI -- it's convincing creators to trust it

Taken together, today's announcements paint a picture of a company executing aggressively across multiple fronts -- but also one that is navigating a complex moment. Adobe first introduced Firefly in March 2023 as a family of generative AI models focused on image and text effects, with a strong emphasis on commercial safety through training on licensed Adobe Stock content. In the three years since, the company has rapidly expanded into video generation, multi-model access, and now agentic workflows -- a trajectory that mirrors the broader industry's shift from standalone AI features to AI-native systems. But the competitive field has grown dramatically. Runway, Pika, and a host of AI-native video generation startups have captured mindshare among creators. Canva has aggressively integrated AI into its design platform. And the emergence of powerful foundation models from OpenAI, Google, and Anthropic -- the latter of which Adobe says it will integrate with Firefly AI Assistant capabilities -- means the barrier to building creative AI tools has never been lower. Adobe is also navigating these product ambitions against a complex corporate backdrop: the impending departure of CEO Shantanu Narayen, an actively exploited zero-day vulnerability in Acrobat Reader (CVE-2026-34621) that had been used by hackers for months before being patched this week, a U.K. antitrust investigation over cancellation fees, and a recent $75 million lawsuit settlement. Adobe's response, articulated clearly through today's launches, is to lean into what it believes is its deepest moat: the integration of AI into a set of professional-grade, category-leading applications that no startup can replicate overnight.
Costin framed the agentic transition as empowering rather than threatening to creative professionals, comparing Creative Skills to a next-generation version of Photoshop Actions -- the macro-recording feature that has long allowed power users to automate repetitive tasks. "We want to help our customers become -- from the ones doing all the work -- to be creative directors, doing some of the work, but most importantly, guiding the assistant in executing some of those creative visions," he said. It is a compelling pitch -- and, in its own way, a revealing one. For three decades, Adobe made its fortune by selling the tools that turned creative vision into finished pixels. Now it is asking its customers to let an AI agent handle more of that translation, trusting that the human role will shift from operating the tools to directing the outcome. Whether creators embrace that bargain -- and whether Wall Street rewards it -- will determine not just Adobe's trajectory but the shape of an entire industry learning to create alongside machines.

[9]

AppleInsider.com

Adobe is doubling down on artificial intelligence with a new tool designed to help users create and edit projects across multiple Adobe products. Adobe's Firefly AI Assistant is agentic, meaning it can perform complex tasks and make decisions on its own. Instead of requiring continual guidance, agentic AI works independently to achieve a goal set by the user. So, essentially, you'll tell the assistant what you want, and the assistant will take the steps to make that happen. According to Adobe, it will be able to execute "complex, multi-step workflows across Adobe's Creative Cloud apps including Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator and more." "Adobe is leading the shift into a new era of agentic creativity, where you direct how your work takes shape and your perspective, voice and taste become the most powerful creative instruments of all," said David Wadhwani, President, Creativity & Productivity Business, Adobe. "Adobe Firefly is a category of one, with the best models, the most powerful tools and now, a fundamentally new way of creating that gives you the combined power and precision of all our creative apps in one place." According to the company, Firefly AI Assistant will bring creation into a single conversational interface in the Firefly app. Using natural language, users describe the outcome they want, and the assistant will orchestrate workflows, maintain context, and results will carry into individual apps. Adobe says that Firefly AI assistant is a creator-led tool, and that the AI merely plays a supporting role. It will ask users contextual questions and offer suggestions, and users are encouraged to step in at any point to guide, refine, or adjust outputs. It also comes with pre-built creative skills. For example, users can retouch portrait photos with consistent presets, executable from a single prompt. Allegedly, the assistant will also learn a user's preferences over time. 
This should, theoretically, help users create more consistent pieces across projects. Adobe has also included integrated review and iteration with Frame.io. Users can ask Firefly AI assistant to organize and share information with stakeholders, who can then provide actionable feedback to the assistant. Firefly AI assistant isn't out yet. Beta testers will have access to it in an upcoming public beta in the coming weeks.

[10]

Adobe Firefly can now run your entire creative workflow from a single chat

Adobe's new AI Assistant can now run your entire creative workflow. Yes, all of it. Adobe has quietly been building something big inside Firefly, its all-in-one creative AI studio. And today, the company is ready to show it off. Meet Firefly AI Assistant, a conversational tool that lets you describe what you want to create and then handles the execution across Adobe's entire app ecosystem, including Photoshop, Premiere, Lightroom, Express, and Illustrator. You no longer need to bounce between the apps and can handle all the edits with simple text prompts. The idea is simple. You bring your vision and creative judgment, give Firefly a prompt, and the assistant will figure out the rest.

So what can it actually do for you?

The assistant works through something Adobe calls Creative Skills, which are pre-built workflows for common tasks like editing portrait photos with consistent presets or generating content for multiple social platforms at once. You can use the ones Adobe provides or build your own AI skills. It also remembers your preferences over time: your favorite tools, your aesthetic choices, and the kind of outputs you like. The more you use it, the more it learns your style. One of the major pain points of AI creation tools is that they don't deliver consistent results across a big project. Adobe is promising it has cracked this issue, and if it's as good as what the company says, it will be a game-changer for new-age creators.

What else is new in Firefly?

Beyond the assistant, Adobe packed in a lot more. The Firefly Video Editor now supports automatic dialogue cleanup, color adjustment tools, and access to over 800 million licensed Adobe Stock assets from inside the editor. Two new image editing tools are also coming. Precision Flow lets you browse a range of variations from a single prompt using a slider. AI Markup lets you draw directly on an image to place objects, adjust lighting, or add elements with a brush.
Firefly also added Kling 3.0 and Kling 3.0 Omni to its growing roster of AI video models, joining over 30 other models already available on the platform. Adobe is promising a lot with this new update, but whether the company can deliver on its promises is a whole other thing. I have learned not to trust AI features that companies announce before trying them.

[11]

Adobe's new Firefly AI Assistant could forever change the way you use its apps

If the final product works like the demo, the new Firefly AI Assistant will change the fundamental way people interact with design software, giving the keys of the walled professional creative castle to anyone willing to pay the money, write in plain English, and move sliders that appear contextually to finely tune aspects of their creations whenever it is needed. Instead of forcing newcomers to memorize a labyrinth of menus, nested palettes, and pop-up windows, the assistant lets them achieve complex results just by asking. At the same time, the new assistant is the first stepping stone into a new type of automation for professionals. It gives veterans a fast track to bypass the tedious grunt work they already know how to do. "We have the full spectrum covered from people coming new to our franchise and they don't know the full power of Photoshop and they want to achieve some amazing edits they can also tap into it and just talk to the assistant," Adobe vice president of AI and innovation Alexandru Costin told me in an interview. "On the other side of the spectrum, the creative professionals that fully understand our tools can actually take those assets and continue editing them in our tools." This tool evolved from Project Moonlight, which Adobe teased at last year's MAX conference and tested in a private beta. The core idea came directly from working professionals who were looking for a modern upgrade to Photoshop Actions, a decades-old feature that allows users to record and replay mouse clicks. Actions only works for fixed, repeatable chores, like adjusting a thousand images' hue and saturation using fixed values. But users wanted a smarter type of automation agent that could adapt to what the agent sees in each image, video, or illustration. 
Adobe decided to create something that goes beyond basic editing, changing things on media according to the context and content of the image or the video itself, even creating new images, mockups and final candidates for art.

[12]

Adobe launches Firefly AI Assistant across Creative Cloud apps

Adobe has introduced a chat-based interface, the Firefly AI Assistant, designed to handle complex, multi-modal projects across its creative applications like Photoshop, Illustrator, and Premiere. This development marks a significant shift in user interaction, aiming to streamline workflows and enhance productivity for experienced creatives while also reducing barriers for inexperienced users. The Firefly AI Assistant allows users to offload mundane tasks and integrates prompts from previous applications, addressing tool complexity that has historically hindered creativity. Unlike earlier chat-based functions, this interface operates across multiple Adobe Creative Cloud applications. It orchestrates workflows by regularly checking in with users for suggestions or questions, enabling them to provide clarifications mid-task. The interface primarily operates through a chat format but dynamically presents contextually relevant controls, such as sliders, to adapt to user needs during their projects. Adobe's ongoing integration of task-specific AI tools showcases its commitment to enhancing user experience and innovation in creative software.

[13]

Adobe's Firefly AI Assistant Can Perform Complex Design Tasks on Your Behalf

A demo of the agentic chatbot will be showcased at the Adobe Summit 2026

Adobe introduced the Firefly AI Assistant on Wednesday, an agentic conversational chatbot that can perform complex design tasks. Available inside the Firefly platform and connected to the Creative Cloud apps, the assistant can both analyse and act on natural language prompts. The company said the tool will help creators automate creative workflows while maintaining context. It is scheduled to be released in beta soon, and will be showcased by the company at its upcoming Adobe Summit 2026.

Adobe Unveils Firefly AI Assistant

In a blog post, the software giant announced the new agentic AI chatbot, Firefly AI Assistant. So far, the company has used its Firefly platform as a suite of AI capabilities, where users can pick and choose the feature and the underlying model based on their preference. Now, the new assistant adds an agentic conversational layer, where users can just command the chatbot, and it will find the best tools to complete the actions. The Firefly AI Assistant's biggest advantage is its connectivity across the Creative Cloud apps, including Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator, and more. Since the tool is capable of handling multi-step workflows, users can potentially ask it to build the entire project, and then make changes manually as per their needs. It also asks contextual questions to the user, surfaces decisions, and presents suggestions to help them in their workflow. Adobe highlights that users can interrupt the assistant at any point to guide, refine, or adjust outputs. Adobe has also added a library of Creative Skills, which are pre-built workflows that the user can apply with a single command. Some of these allow users to retouch portrait photos or generate content across social channels. Adobe has also created a mechanism to let the Firefly AI Assistant receive feedback on its creations.
The company says the assistant can share work in Frame.io, and collaborators can review and provide feedback. The agentic chatbot will then automatically apply the changes. The Firefly AI Assistant will be available in public beta in Firefly in the coming weeks.

[14]

Adobe releases AI assistant for creative tools, says it will work with Anthropic's Claude

Adobe said on Wednesday it was releasing a new artificial intelligence assistant designed to help users carry out tasks across its suite of software for editing photos, videos and other digital content. The Firefly AI assistant is designed to take orders from human creative professionals about what results they want for a piece of content and then autonomously tap into Adobe's software tools, such as Photoshop, Illustrator and Premiere Pro, to get that outcome. The new capabilities will also be available to users of Anthropic's Claude AI model through a connector to Adobe, though Adobe did not disclose the financial arrangements between the firms. "There are parts of projects, or individual sections of an image, where you really care about getting into the individual pixels, and we want to continue to support customers in doing that, but there are places where you would be happy to just hand this stuff off to an agent or an assistant," said Ely Greenfield, chief technology officer at Adobe's creativity and productivity business unit. The Firefly AI assistant is the latest in a series of Adobe investments since 2023 in proprietary AI tools that it says are financially guaranteed as safe for use in corporate settings. This is one of the ways Adobe is trying to differentiate itself from lower-cost rivals as AI lowers the barrier to entry for creating images and videos. Adobe's longtime CEO said last month that he will step down after a successor is named, amid investor scepticism about when the company's AI investments will pay off. Adobe did not disclose how much the new assistant will cost users, but said it expects the assistant to increase their consumption of what it calls AI credits, the main way the company currently charges for AI products.

[15]

Adobe rolls out Firefly AI Assistant with creative agent for multi-step workflows

Adobe has announced an update to its Firefly platform with the introduction of Firefly AI Assistant, powered by a creative agent. The update also brings new video and image editing features and expands support for third-party AI models. Firefly is positioned as an all-in-one creative AI studio, where users can create content across Adobe's ecosystem using a unified interface. The assistant allows users to describe the outcome they want, while it orchestrates and executes complex, multi-step workflows across applications. Firefly AI Assistant introduces a conversational interface within the Firefly app that enables users to describe desired outcomes in natural language. The assistant then orchestrates and executes multi-step workflows across Adobe apps including Photoshop, Premiere Pro, Illustrator, Lightroom, Express, and Firefly. Adobe describes this as a shift to agentic creativity, where users provide the vision, judgment, and creative direction, while the assistant handles execution. It supports both quick task initiation for general users and more complex workflows for professional use cases, while reducing manual steps involved in multi-app processes. Adobe has introduced updates to its multi-track Firefly Video Editor and image editing tools. Adobe also continues to offer its commercially safe Firefly models. Speaking on the launch, David Wadhwani, President, Creativity & Productivity Business, Adobe, said:

[16]

Adobe is making bold claims about its new Firefly AI Assistant

Tech companies often (read: always) make bold claims when unveiling their latest innovations. But even in this context, Adobe's positing that its new Firefly AI Agent will mark a "fundamental shift in how creative work is done" is big talk. The brand is heralding a move towards "agentic creativity", where creators can describe the desired outcome in their own words, and the assistant can dip into the entire Creative Cloud suite to create it. Firefly AI Assistant brings the entire Creative Cloud suite together in a single conversational interface that can orchestrate workflows across Photoshop, Premiere, Lightroom, Express, Illustrator and more. Back at Adobe Max last October, we asked if 'conversational editing' was the future of design, and with this update, it's clear Adobe thinks so. Users can guide Firefly AI Assistant's output through text prompts, along with interactive controls like buttons and sliders. The assistant is able to recommend actions, coordinate tasks across apps and carry out workflows. It also adapts its controls to the project at hand. Perhaps anticipating a potential backlash, Adobe's David Wadhwani has written a blog post arguing that what sets the company apart from competitors is its belief that AI agents must "keep humans in the loop". "Our belief is that agentic AI should serve human creativity, making creation more accessible, expressive and powerful than ever before. We're developing a new Adobe creative agent, a new way of working that empowers you to become the creative director of your own story. You set the vision, apply your taste and make the calls that only you can make. The creative agent will help you carry forward your vision by orchestrating various models, tools and production processes, reaching across applications to complete all sorts of tasks that previously took vastly more time and effort." 
Firefly is also getting new video and image editing capabilities, and expanded partner models, with Kling 3.0 and Kling 3.0 Omni joining the likes of Google's Nano Banana 2 and Veo 3.1, Runway Gen-4.5, Luma AI's Ray3.14 and more. Adobe's focus on AI has become increasingly dogged in recent years, but the company does seem intent on exploring ways in which the tech can work alongside the human hand. The recently announced Firefly Custom Models are designed to ensure output consistency adheres to your own aesthetic style, while Firefly AI Agent lets creators step in at any point to guide, refine or adjust outputs. Firefly AI Assistant will be available in public beta in Firefly in the coming weeks.

[17]

Adobe to Integrate New AI Assistant With Anthropic's Claude

Adobe released a new artificial intelligence assistant that will connect to the Claude chatbot and will allow people to complete design projects with simple commands. The software company behind tools like Photoshop and Premiere on Wednesday said its new Firefly AI Assistant will take conversational directions from users and execute multi-step workflows throughout its Creative Cloud apps. Adobe is partnering with Anthropic and other AI companies to allow users to access Firefly and Adobe tools through Claude and other "leading third-party AI models," the company said. Firefly will be personalized to each user and can be used for AI video and image editing, with improvements in sound and color, the company said. "Adobe is leading the shift into a new era of agentic creativity, where you direct how your work takes shape and your perspective, voice and taste become the most powerful creative instruments of all," said David Wadhwani, Adobe's president of creativity and productivity business. Adobe's announcement comes as companies race to deploy more capable AI agents, which are AI models that not only chat with users but also autonomously complete tasks.

[18]

Adobe releases AI assistant for creative tools, says it will work with Anthropic's Claude

SAN FRANCISCO, April 15 (Reuters) - Adobe said on Wednesday it was releasing a new artificial intelligence assistant designed to help users carry out tasks across its suite of software for editing photos, videos and other digital content.

* The Firefly AI assistant is designed to take orders from human creative professionals about what results they want for a piece of content and then autonomously tap into Adobe's software tools, such as Photoshop, Illustrator and Premiere Pro, to get that outcome.
* The new capabilities will also be available to users of Anthropic's Claude AI model through a connector to Adobe, though Adobe did not disclose the financial arrangements between the firms.
* "There are parts of projects, or individual sections of an image, where you really care about getting into the individual pixels, and we want to continue to support customers in doing that, but there are places where you would be happy to just hand this stuff off to an agent or an assistant," said Ely Greenfield, chief technology officer at Adobe's creativity and productivity business unit.
* The Firefly AI assistant is the latest in a series of Adobe investments since 2023 in proprietary AI tools that it says are financially guaranteed as safe for use in corporate settings. This is one of the ways Adobe is trying to differentiate itself from lower-cost rivals as AI lowers the barrier to entry for creating images and videos.
* Adobe's longtime CEO said last month that he will step down after a successor is named, amid investor skepticism about when the company's AI investments will pay off.
* Adobe did not disclose how much the new assistant will cost users, but said it expects the assistant to increase their consumption of what it calls AI credits, the main way the company currently charges for AI products.

(Reporting by Stephen Nellis in San Francisco; Editing by Jamie Freed)

[19]

Adobe partners with Anthropic to build AI creative agent for Claude

It will also work with Claude to help users turn ideas into finished content quickly.

Adobe has partnered with Anthropic and introduced a new generation of its Firefly creative assistant. The latest iteration brings more advanced automation features into Adobe's creative tools, and the company is also expanding beyond its own platforms by connecting its services across apps and third-party ecosystems. Firefly is at the centre of this push. Adobe says that by combining automation with familiar tools, it is trying to make content creation faster, simpler, and more accessible for everyday users. Unlike earlier tools, the new Firefly assistant is designed to act more independently. It can complete tasks such as batch editing a group of photos, adjusting lighting, and cropping or reframing images without step-by-step input. Rather than focusing only on generating new visuals, the assistant also supports routine editing work, which remains a key part of most creative projects. Adobe Firefly continues to integrate with widely used apps like Photoshop, Acrobat, and Premiere Pro, so users can access AI features within tools they already know. Adobe has also been steadily adding AI capabilities across its software, and this assistant builds on earlier updates introduced in Adobe Express and Photoshop.
The partnership with Anthropic plays a major role in this development: Adobe is bringing its Firefly capabilities to Claude, allowing users to move from idea generation to execution within the AI tool itself. The move is also expected to expand Claude's use beyond coding and professional tasks into creative workflows. Adobe is also improving Firefly's video and image tools with better audio, enhanced colour controls, and access to more AI models, offering users greater flexibility. Adobe has said more details about the Firefly and Claude integration, including its exact launch date, will be shared soon. For now, the Firefly AI assistant is set to roll out as a public beta later this month.

Adobe launches Firefly AI Assistant, a conversational AI editing tool that executes multi-step workflows across Creative Cloud applications like Photoshop, Premiere, and Illustrator. Previously known as Project Moonlight, the assistant enters public beta soon and marks Adobe's shift toward agentic AI that can automate complex tasks while keeping creators in control.

Adobe Shifts Strategy with Conversational AI Editing Tool

Adobe is launching Firefly AI Assistant, a chat-based interface that orchestrates complex, multi-step workflows across its Creative Cloud applications [1]. The tool, previewed as Project Moonlight last October, represents what the company calls a "fundamental shift in how creative work is done" by enabling users to describe desired outcomes using natural language prompts rather than manually navigating specialized software [4]. Unlike Adobe's previous approach of embedding task-specific AI features into individual apps, this assistant can work across Photoshop, Premiere, Lightroom, Illustrator, Express, and other Creative Cloud applications to execute tasks on behalf of users [2].

How Firefly AI Assistant Streamlines Creative Workflows

The Firefly AI Assistant functions as an agentic AI tool that can perform tasks with minimal human oversight while keeping creators in the loop [3]. Users can issue commands like "retouch this image" or "resize this for social media," and the assistant provides a selection of edits alongside contextually relevant controls such as sliders based on the task at hand [1][4]. For instance, when editing a product photo set in a forest, the assistant might surface a simple slider to increase or reduce surrounding trees and foliage without requiring complex manual edits [5]. The tool learns user preferences over time, including favorite tools, workflows, and aesthetic choices, though Alexandru Costin, Adobe's vice president of AI and innovation, confirmed that creatives can choose whether to enable this feature and select specific projects for the AI to learn from [4].

Source: Fast Company

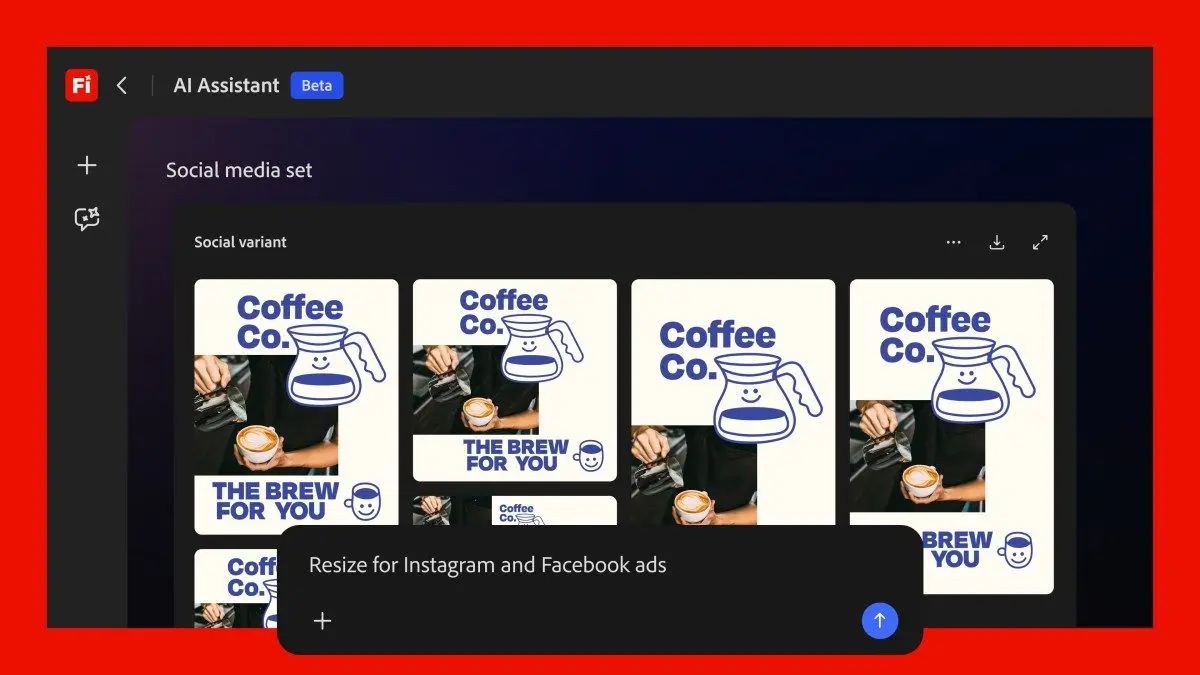

Creative Skills and Automation Capabilities

Adobe is introducing Creative Skills, which function as prepackaged integrations and workflows tailored to specific tasks across apps [1]. Users can tap into a library of these skills or build and configure their own [4]. The "social media assets" skill, for example, can adapt images to different platforms by cropping or using Generative Extend, optimizing file sizes, and storing outputs [2]. This approach aims to lower skill barriers for inexperienced users while providing experienced creatives with efficient ways to offload mundane tasks [1]. Users can interject at any point during execution with clarifications or additional information, and the assistant maintains Adobe's native file formats so final outputs remain fully editable [5].


Integration with Anthropic and Third-Party AI Models

Adobe is expanding its partnership with Anthropic to bring the Firefly AI Assistant to Claude, marking Claude's first major creative AI tool beyond its coding and enterprise capabilities [3]. The company stated it is "enabling creators to access the best of Adobe directly across the surfaces where they work every day" by bringing its tools to third-party AI models [3]. Additionally, Adobe is adding Kling 3.0 and Kling 3.0 Omni models to Firefly's library of over 30 third-party AI models, joining existing options like Google's Nano Banana 2 and Veo 3.1, Runway Gen-4.5, and Luma AI's Ray 3.14 [5].

Availability and Strategic Implications

The Firefly AI Assistant will enter public beta in the coming weeks, though Adobe has not yet specified pricing details, limits, or which subscription tiers will have access [1][2]. Alexandru Costin emphasized that Adobe's strength lies in unifying its existing and popular tools, stating: "We have the opportunity with the Firefly AI assistant and with agentic experiences to remove some of the friction in learning this large catalog of tools we have and bring all of that value to our customers at their fingertips" [2]. This release positions Adobe to compete with emerging generative AI applications while leveraging its established professional-grade creative tools to deliver what the company describes as "precise, context aware results" that standalone AI agents cannot match [5].

References

Summarized by

Navi

[1]

[2]

[4]
