Amazon S3 Files gives AI agents native file system access, ending two decades of storage friction

3 Sources

[1]

AWS turns its S3 storage service into a file system for AI agents

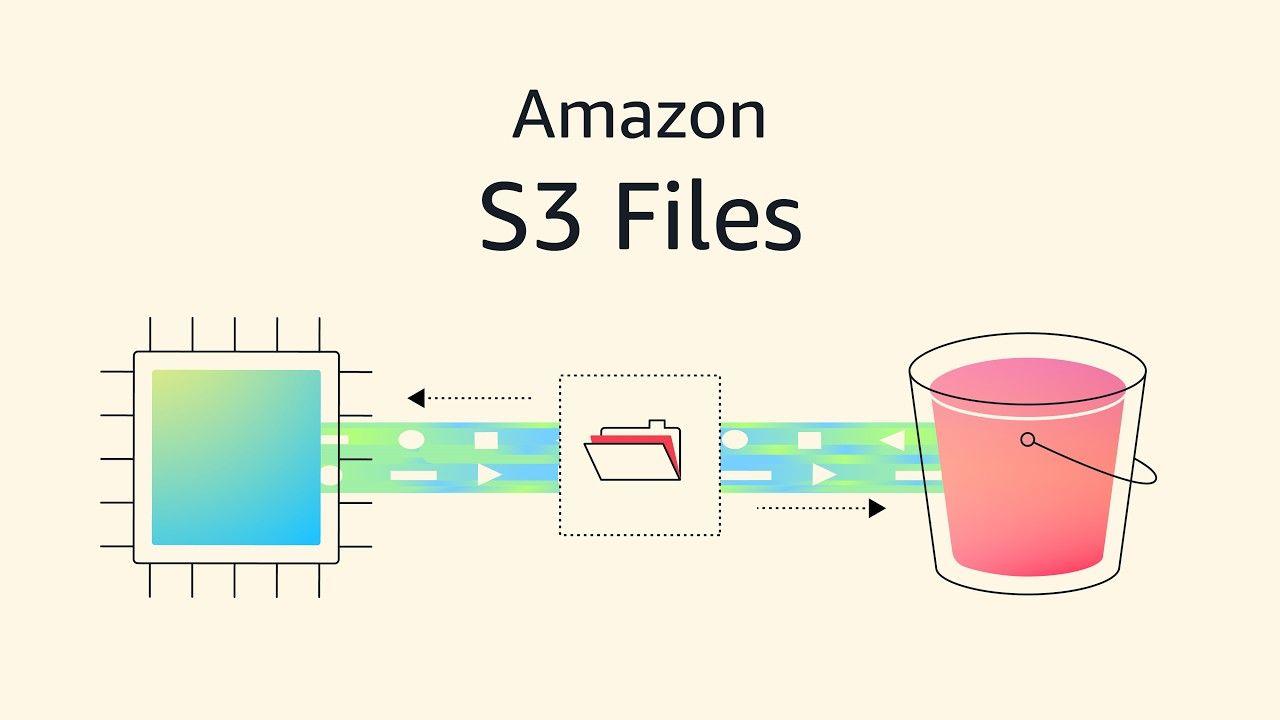

S3 Files, a native file system interface on top of Amazon Simple Storage Service, offers developers simplicity and CIOs a more unified, cost-efficient data architecture, analysts say.

Amazon Web Services is making its S3 object storage service easier for AI agents to access with the introduction of a native file system interface. The new interface, S3 Files, will eliminate a longstanding tradeoff between the low cost of S3 and the interactivity of a traditional file system or of Amazon's Elastic File System (EFS). "The file system presents S3 objects as files and directories, supporting all Network File System (NFS) v4.1+ operations like creating, reading, updating, and deleting files," AWS principal developer advocate Sébastien Stormacq wrote in a blog post. The file system can be accessed directly from any AWS compute instance, container, or function, spanning use cases from production applications to machine learning training and agentic AI systems, Stormacq said.

[2]

Amazon revamps S3 cloud storage for the AI era, removing a key barrier for apps and agents

Amazon Web Services is making it possible to access data stored in its S3 cloud storage service as a traditional file system, bridging a divide between two types of storage that has frustrated developers and data scientists for nearly two decades.

The new capability, called Amazon S3 Files, lets applications running on AWS access an S3 storage bucket as if it were a local file system, reading and writing data using standard file operations rather than specialized cloud storage commands. In practice, this means a machine learning team can run a training job directly against data in S3 without first copying it to a separate file system. Or, perhaps more importantly these days, an AI agent can read and write files in S3 using the same basic tools it would use on a local hard drive.

S3, launched 20 years ago, holds a huge amount of the world's cloud data. S3 Files promises to open the door for a much broader range of apps and AI systems to work directly with that data.

The backstory: In an unusually candid essay coinciding with the news, Andy Warfield, a vice president and distinguished engineer who leads S3 engineering at AWS, described the technical and philosophical challenges of making the feature work, and why the first approach failed.

The core issue, Warfield wrote, is that files and objects are fundamentally different. Files can be edited in place and shared across applications in real time, working the way most software has always expected. Objects in S3 work differently: they are designed to be stored and retrieved as complete units, and millions of applications are built around that assumption.

So they "did the only sensible thing you can do when you are faced with a really difficult technical design problem: we locked a bunch of our most senior engineers in a room and not let them out till they had a plan that they all liked," Warfield wrote. "Passionate and contentious discussions ensued," he said. "And then finally we gave up."

But ultimately, the team found its answer by no longer trying to hide the boundary between files and objects and instead making it a deliberate part of the design.

The approach: S3 Files uses a "stage and commit" model, borrowing the concept from version control systems like Git: changes accumulate on the file system side and are pushed back to S3 as whole objects, preserving the guarantees that existing S3 applications depend on.

Google and Microsoft offer their own tools for accessing cloud object storage through file system interfaces, but AWS is positioning S3 Files as a deeper integration, backed by a fully managed file system rather than a simple adapter.

S3 Files is available today in AWS regions worldwide, built on Amazon's Elastic File System. The company says it has been in customer testing for about nine months.

[3]

Amazon S3 Files gives AI agents a native file system workspace, ending the object-file split that breaks multi-agent pipelines

AI agents run on file systems using standard tools to navigate directories and read file paths. The challenge, however, is that there is a lot of enterprise data in object storage systems, notably Amazon S3. Object stores serve data through API calls, not file paths. Bridging that gap has required a separate file system layer alongside S3, duplicated data and sync pipelines to keep both aligned.

The rise of agentic AI makes that challenge even harder, and it was affecting Amazon's own ability to get things done. Engineering teams at AWS using tools like Kiro and Claude Code kept running into the same problem: Agents defaulted to local file tools, but the data was in S3. Downloading it locally worked until the agent's context window compacted and the session state was lost.

Amazon's answer is S3 Files, which mounts any S3 bucket directly into an agent's local environment with a single command. The data stays in S3, with no migration required. Under the hood, AWS connects its Elastic File System (EFS) technology to S3 to deliver full file system semantics, not a workaround. S3 Files is available now in most AWS Regions.

"By making data in S3 immediately available, as if it's part of the local file system, we found that we had a really big acceleration with the ability of things like Kiro and Claude Code to be able to work with that data," Andy Warfield, VP and distinguished engineer at AWS, told VentureBeat.

The difference between file and object storage and why it matters

S3 was built for durability, scale and API-based access at the object level. Those properties made it the default storage layer for enterprise data. But they also created a fundamental incompatibility with the file-based tools that developers and agents depend on.

"S3 is not a file system, and it doesn't have file semantics on a whole bunch of fronts," Warfield said. "You can't do a move, an atomic move of an object, and there aren't actually directories in S3."

Previous attempts to bridge that gap relied on FUSE (Filesystem in Userspace), a software layer that lets developers mount a custom file system in user space without changing the underlying storage. Tools like AWS's own Mountpoint, Google's gcsfuse and Microsoft's blobfuse2 all used FUSE-based drivers to make their respective object stores look like a file system. Warfield noted that the problem is that those object stores still weren't file systems. Those drivers either faked file behavior by stuffing extra metadata into buckets, which broke the object API view, or they refused file operations that the object store couldn't support.

S3 Files uses a different architecture entirely. AWS is connecting its EFS (Elastic File System) technology directly to S3, presenting a full native file system layer while keeping S3 as the system of record. Both the file system API and the S3 object API remain accessible simultaneously against the same data.

How S3 Files accelerates agentic AI

Before S3 Files, an agent working with object data had to be explicitly instructed to download files before using tools. That created a session state problem. As agents compacted their context windows, the record of what had been downloaded locally was often lost.

"I would find myself having to remind the agent that the data was available locally," Warfield said.

Warfield walked through the before-and-after for a common agent task involving log analysis. He explained that when a developer uses Kiro or Claude Code to work with log data in the object-only case, they need to tell the agent where the log files are located and to go and download them. If the logs are immediately mountable on the local file system, by contrast, the developer can simply identify that the logs are at a specific path, and the agent immediately has access to go through them.

For multi-agent pipelines, multiple agents can access the same mounted bucket simultaneously. AWS says thousands of compute resources can connect to a single S3 file system at the same time, with aggregate read throughput reaching multiple terabytes per second, figures VentureBeat was not able to independently verify.

Shared state across agents works through standard file system conventions: subdirectories, notes files and shared project directories that any agent in the pipeline can read and write. Warfield described AWS engineering teams using this pattern internally, with agents logging investigation notes and task summaries into shared project directories.

For teams building RAG pipelines on top of shared agent content, S3 Vectors, launched at AWS re:Invent in December 2025, layers on top for similarity search and retrieval-augmented generation against that same data.

What analysts say: this is not just a better FUSE

AWS is positioning S3 Files against FUSE-based file access from Azure Blob NFS and Google Cloud Storage FUSE. For AI workloads, the meaningful distinction is not primarily performance.

"S3 Files eliminates the data shuffle between object and file storage, turning S3 into a shared, low-latency working space without copying data," Jeff Vogel, analyst at Gartner, told VentureBeat. "The file system becomes a view, not another dataset."

With FUSE-based approaches, each agent maintains its own local view of the data. When multiple agents work simultaneously, those views can potentially fall out of sync.

"It eliminates an entire class of failure modes including unexplained training/inference failures caused by stale metadata, which are notoriously difficult to debug," Vogel said. "FUSE-based solutions externalize complexity and issues to the user."

The agent-level implications go further still. The architectural argument matters less than what it unlocks in practice.

"For agentic AI, which thinks in terms of files, paths, and local scripts, this is the missing link," Dave McCarthy, analyst at IDC, told VentureBeat. "It allows an AI agent to treat an exabyte-scale bucket as its own local hard drive, enabling a level of autonomous operational speed that was previously bottled up by API overhead associated with approaches like FUSE."

Beyond the agent workflow, McCarthy sees S3 Files as a broader inflection point for how enterprises use their data. "The launch of S3 Files isn't just S3 with a new interface; it's the removal of the final friction point between massive data lakes and autonomous AI," he said. "By converging file and object access with S3, they are opening the door to more use cases with less reworking."

What this means for enterprises

For enterprise teams that have been maintaining a separate file system alongside S3 to support file-based applications or agent workloads, that architecture is now unnecessary. For enterprise teams consolidating AI infrastructure on S3, the practical shift is concrete: S3 stops being the destination for agent output and becomes the environment where agent work happens.

"All of these API changes that you're seeing out of the storage teams come from firsthand work and customer experience using agents to work with data," Warfield said. "We're really singularly focused on removing any friction and making those interactions go as well as they can."

Amazon Web Services launched S3 Files, a native file system interface that lets AI agents access S3 object storage directly using standard file operations. The new capability eliminates the need for duplicate storage layers and data synchronization pipelines that have plagued developers for nearly 20 years, particularly as agentic AI workflows demand seamless access to enterprise data.

Amazon S3 Files Bridges the Object-File Divide

Amazon Web Services (AWS) has introduced Amazon S3 Files, a native file system interface that allows AI agents and applications to access its S3 object storage service as if it were a traditional file system [1]. The new capability addresses a fundamental incompatibility that has frustrated developers and data scientists for nearly two decades: the divide between object stores that serve data through API calls and file systems that use standard file operations [2].

The file system presents S3 objects as files and directories, supporting all Network File System (NFS) v4.1+ operations like creating, reading, updating, and deleting files [1]. This means machine learning training jobs can run directly against data in S3 without first copying it to a separate file system, and AI agents can read and write files using the same basic tools they would use on a local hard drive [2].
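
To make "standard file operations" concrete, here is a minimal Python sketch of the create, read, update, and delete cycle the sources describe. It is an illustration only: the function name `crud_roundtrip` and the `reports/summary.txt` path are hypothetical, and a temporary directory stands in for a mounted bucket so the code runs anywhere. Nothing in it is specific to S3 Files, which is the point of the feature as reported.

```python
import tempfile
from pathlib import Path


def crud_roundtrip(mount_root: Path) -> list[str]:
    """Create, read, update, and delete one file using only standard file calls.

    mount_root is assumed to be any directory; in the scenario the article
    describes it would be the path where a bucket is mounted (hypothetical).
    """
    events = []
    report = mount_root / "reports" / "summary.txt"

    # Create: ordinary directory and file creation.
    report.parent.mkdir(parents=True, exist_ok=True)
    report.write_text("run 1: ok\n", encoding="utf-8")
    events.append("created")

    # Read: the same call used for any local file.
    first = report.read_text(encoding="utf-8")
    events.append(f"read {len(first.splitlines())} line(s)")

    # Update: append in place instead of re-uploading a whole object.
    with report.open("a", encoding="utf-8") as fh:
        fh.write("run 2: ok\n")
    line_count = len(report.read_text(encoding="utf-8").splitlines())
    events.append(f"updated to {line_count} line(s)")

    # Delete: a plain unlink.
    report.unlink()
    events.append("deleted" if not report.exists() else "still present")
    return events


if __name__ == "__main__":
    # A temporary directory stands in for the mount point.
    with tempfile.TemporaryDirectory() as stand_in_mount:
        for event in crud_roundtrip(Path(stand_in_mount)):
            print(event)
```

An agent or script written this way needs no object-storage client library; only the directory it is pointed at changes.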

Why the Object-File Split Matters for AI-Driven Systems

The rise of agentic AI workflows has made the storage challenge more acute. AI agents run on file systems using standard tools to navigate directories and read file paths, but enterprise data predominantly resides in object stores like S3 [3]. Bridging that gap previously required a separate file system layer alongside S3, along with duplicated data and sync pipelines to keep both aligned [3].

Andy Warfield, VP and distinguished engineer at AWS, explained that engineering teams using tools like Kiro and Claude Code kept running into the same problem: agents defaulted to local file tools, but the data was in S3 [3]. Downloading data locally worked until the agent's context window compacted and the session state was lost. "By making data in S3 immediately available, as if it's part of the local file system, we found that we had a really big acceleration with the ability of things like Kiro and Claude Code to be able to work with that data," Warfield told VentureBeat [3].

A Different Architecture Than FUSE-Based Solutions

Previous attempts to solve this problem relied on FUSE (Filesystem in Userspace), a software layer that lets developers mount a custom file system in user space without changing the underlying storage [3]. Tools like AWS's own Mountpoint, Google's gcsfuse, and Microsoft's blobfuse2 all used FUSE-based drivers to make object stores look like file systems, but these drivers either faked file behavior by stuffing extra metadata into buckets, which broke the object API view, or refused file operations that the object store couldn't support [3].

S3 Files takes a different approach entirely. AWS connects its Elastic File System (EFS) technology directly to S3, presenting a full native file system access layer while keeping S3 as the system of record [3]. Both the file system API and the S3 object API remain accessible simultaneously against the same data [3].
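
Warfield's remark that "there aren't actually directories in S3" can be illustrated with a short Python sketch. Object keys are flat strings in which `/` is only a naming convention, so a directory view has to be derived from them. The function `keys_to_tree` and the sample keys below are hypothetical, and the code shows only the general idea of presenting keys as files and directories, not how S3 Files does it internally.

```python
def keys_to_tree(keys):
    """Group flat object keys into a nested dict that mirrors a directory listing.

    Directories map to nested dicts and files map to None. The sketch assumes
    no key is both a file and a prefix of another key.
    """
    tree = {}
    for key in keys:
        parts = key.split("/")
        node = tree
        # Every component except the last is treated as a directory.
        for directory in parts[:-1]:
            node = node.setdefault(directory, {})
        node[parts[-1]] = None
    return tree


if __name__ == "__main__":
    # Hypothetical keys as an object API would list them: a flat namespace.
    flat_keys = [
        "logs/2024/app.log",
        "logs/2024/db.log",
        "models/v1/weights.bin",
        "README.md",
    ]
    print(keys_to_tree(flat_keys))
```

The same data can therefore be addressed either way: by full key through the object API, or by walking the derived tree through a file interface.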

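In an unusually candid essay, Warfield described how the team initially struggled with the technical challenge. They "locked a bunch of our most senior engineers in a room and not let them out till they had a plan that they all liked," he wrote. "Passionate and contentious discussions ensued," he added. "And then finally we gave up" [2].

The breakthrough came when the team stopped trying to hide the boundary between files and objects and instead made it a deliberate part of the design. The resulting "stage and commit" model, a concept borrowed from version control systems like Git, lets changes accumulate on the file system side before they are pushed back to S3 as whole objects, preserving the guarantees that existing S3 applications depend on [2].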
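
Source [2] describes the design as a "stage and commit" model in which changes accumulate on the file system side and are pushed back to S3 as whole objects. The toy Python model below sketches that idea under loud assumptions: the class `StagedFileView`, its methods, and the use of a plain dict as the "bucket" are all hypothetical, and the sources do not say when or how S3 Files actually commits. It is not AWS's implementation.

```python
class StagedFileView:
    """Toy stage-and-commit model (hypothetical, for illustration only).

    Edits accumulate in a staging area; commit() publishes each changed file
    to the object store as one complete object.
    """

    def __init__(self, object_store: dict):
        self.object_store = object_store  # a dict stands in for the bucket
        self.staged = {}                  # key -> bytearray of pending content

    def read(self, key: str) -> bytes:
        # The file-side view sees staged edits before they are committed.
        if key in self.staged:
            return bytes(self.staged[key])
        return self.object_store[key]

    def append(self, key: str, data: bytes) -> None:
        # The first edit copies the current object into the staging area.
        if key not in self.staged:
            self.staged[key] = bytearray(self.object_store.get(key, b""))
        self.staged[key] += data

    def commit(self) -> list:
        # Object-side readers only ever see whole objects, never partial edits.
        committed = sorted(self.staged)
        for key in committed:
            self.object_store[key] = bytes(self.staged[key])
        self.staged.clear()
        return committed


if __name__ == "__main__":
    bucket = {"notes.txt": b"a\n"}
    view = StagedFileView(bucket)
    view.append("notes.txt", b"b\n")
    view.append("notes.txt", b"c\n")
    print(bucket["notes.txt"])     # object side still holds the old object
    print(view.read("notes.txt"))  # file side sees the staged edits
    print(view.commit())
    print(bucket["notes.txt"])     # one whole-object replacement
```

The design choice this sketch highlights is the explicit boundary: two small appends on the file side become a single whole-object write on the object side.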

Accelerating Multi-Agent Pipelines and Log Analysis

For multi-agent pipelines, the capability allows multiple agents to access the same mounted bucket simultaneously. AWS says thousands of compute resources can connect to a single S3 file system at the same time, with aggregate read throughput reaching multiple terabytes per second [3]. Shared state across agents works through standard file system conventions: subdirectories, notes files, and shared project directories that any agent in the pipeline can read and write [3].

Warfield walked through a practical example involving log analysis. Previously, a developer using an AI agent to work with log data would need to tell the agent where the log files were located and instruct it to download them. With S3 Files, the logs can be mounted on the local file system, so the developer simply points the agent at a path and the agent has immediate access [3].

The file system can be accessed directly from any AWS compute instance, container, or function, spanning use cases from production applications to machine learning training and agentic AI systems [1]. For teams building Retrieval-Augmented Generation (RAG) pipelines on top of shared agent content, S3 Vectors, launched at AWS re:Invent in December 2025, layers on top for similarity search against that same data [3].

S3 Files is available now in most AWS Regions and has been in customer testing for about nine months [2]. The service is built on Amazon's Elastic File System and, according to analysts, offers developers simplicity and CIOs a more unified, cost-efficient data architecture [1].
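
The log-analysis and shared-notes patterns described in this section can be sketched in a few lines of Python. Everything named here is hypothetical: the helpers `count_errors` and `append_note`, the agent names, and the `logs/` and `project/notes.md` paths. A temporary directory stands in for the mounted bucket, and the sketch does not address ordering between concurrent writers.

```python
import tempfile
from pathlib import Path


def count_errors(log_dir: Path) -> dict:
    """Count lines containing 'ERROR' in each *.log file directly under log_dir."""
    counts = {}
    for log_file in sorted(log_dir.glob("*.log")):
        with log_file.open(encoding="utf-8") as fh:
            counts[log_file.name] = sum(1 for line in fh if "ERROR" in line)
    return counts


def append_note(notes_file: Path, agent: str, text: str) -> None:
    """Append one attributed line to a shared notes file, creating it if needed."""
    notes_file.parent.mkdir(parents=True, exist_ok=True)
    with notes_file.open("a", encoding="utf-8") as fh:
        fh.write(f"[{agent}] {text}\n")


if __name__ == "__main__":
    # A temporary directory stands in for the mounted bucket.
    with tempfile.TemporaryDirectory() as stand_in_mount:
        root = Path(stand_in_mount)
        logs = root / "logs"
        logs.mkdir()
        (logs / "app.log").write_text(
            "INFO start\nERROR disk full\nERROR retry failed\n", encoding="utf-8"
        )
        (logs / "db.log").write_text("INFO ok\n", encoding="utf-8")

        # One agent scans the logs in place; no download step is involved.
        notes = root / "project" / "notes.md"
        append_note(notes, "log-agent", f"error counts: {count_errors(logs)}")
        # A second agent records its own status in the same shared file.
        append_note(notes, "triage-agent", "investigating app.log")
        print(notes.read_text(encoding="utf-8"), end="")
```

Because the notes live at a path rather than in an agent's context window, a later session or a different agent can pick up where the previous one left off by reading the file.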

Summarized by Navi
